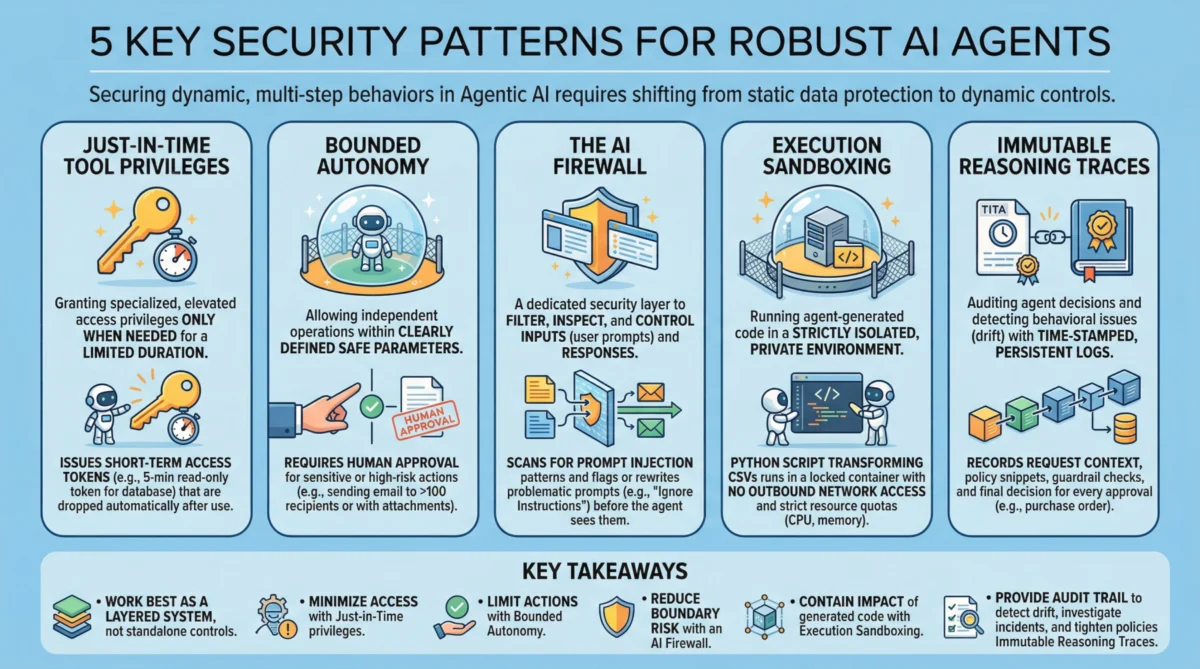

The rapid evolution of artificial intelligence, particularly the rise of agentic AI systems (autonomous software entities designed to perform complex, multi-step tasks), has opened a new era of technological capability. These agents sit at the center of many of the most visible developments in generative AI and large language models, and they promise substantial gains in efficiency and innovation across industries. That potential, however, comes with a pressing and evolving need for robust security frameworks. Unlike traditional software, agentic AI systems operate dynamically, make independent decisions, and interact with various tools and environments, which exposes vulnerabilities that conventional controls were not designed to address. Securing these systems therefore goes beyond data protection: it means safeguarding their dynamic behavior, their decision-making processes, and their interactions within a complex operational ecosystem. This article examines five security patterns that are central to fortifying agentic AI and explains how each contributes to reliable, trustworthy, and ethical deployment.

The Evolving Landscape of AI Security: A New Imperative

AI security has progressed from protecting static machine learning models against data poisoning and adversarial attacks to the more intricate challenge of securing autonomous, goal-oriented agents. Agentic AI, with its ability to plan, execute, and adapt independently, represents a significant leap from earlier AI applications. Major technology firms and research institutions have invested heavily in this area, and adoption of AI-driven automation tools is growing quickly. That adoption is outpacing the development and standardization of corresponding security protocols, which opens new vectors for exploitation. Many security practitioners argue that the autonomous nature of agentic AI calls for a fundamental re-evaluation of security paradigms, shifting the emphasis from perimeter defense to trust and control mechanisms built into the agent's operation. The history of software security, which has repeatedly lagged behind innovation, offers a clear warning: integrating security proactively is essential, not merely advisable, for the responsible deployment of agentic AI.

1. Just-in-Time Tool Privileges: Minimizing Exposure

Just-in-Time (JIT) Tool Privileges apply the principle of least privilege dynamically and are a cornerstone of modern access control. In the context of agentic AI, JIT means an agent is granted specialized or elevated permissions only at the moment it needs them, and only for as long as the specific task requires. This contrasts sharply with traditional, static privilege models, in which permissions, once granted, often persist indefinitely unless someone revokes them manually. The core benefit of JIT in agentic systems is a dramatic reduction in the "blast radius" if an agent is compromised. If an agent's credentials or operational integrity are breached, the attacker's window of opportunity and the scope of potential damage are limited to the brief period and the specific resources covered by the temporary grant.

For instance, consider an agent tasked with generating quarterly financial reports. Instead of holding standing read/write access to all financial databases, it would request a narrowly scoped, read-only token for the specific tables that contain the relevant data. That token might be valid for only five minutes, and it would be revoked as soon as the data extraction completes or simply expire if revocation never happens. This granular control mitigates risks associated with credential theft, insider threats, and supply chain vulnerabilities, in which a compromised tool could otherwise abuse broad, standing permissions. Implementing JIT typically relies on identity and access management (IAM) systems, API gateways, and policy engines that issue and revoke temporary tokens dynamically based on contextual information and predefined security policies. Because standing permissions are what allow one stolen credential to turn into a broad breach, eliminating them is widely regarded as an effective way to limit the impact of successful attacks, particularly in highly dynamic environments like those in which agentic AI operates.
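To make the mechanics concrete, here is a minimal in-memory sketch of a token broker that issues narrowly scoped, short-lived tokens and lets the caller revoke them early. The class and method names (`JitTokenBroker`, `issue`, `authorize`, `revoke`) and the scope-string format are hypothetical illustrations, not the API of any particular IAM product; a real deployment would delegate issuance to an IAM system or policy engine and use signed tokens rather than an in-process dictionary.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, Iterable


@dataclass(frozen=True)
class ScopedToken:
    """A short-lived credential bound to one agent and an explicit set of scopes."""
    value: str
    agent_id: str
    scopes: FrozenSet[str]   # e.g. {"read:finance.q3_revenue"} (hypothetical scope naming)
    expires_at: float        # absolute deadline on the broker's clock, in seconds


class JitTokenBroker:
    """Hypothetical in-memory broker that issues, checks, and revokes JIT tokens."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock                      # injectable so expiry can be tested
        self._active: Dict[str, ScopedToken] = {}

    def issue(self, agent_id: str, scopes: Iterable[str], ttl_seconds: float = 300.0) -> ScopedToken:
        # A real deployment would consult a policy engine here before granting anything.
        token = ScopedToken(
            value=secrets.token_urlsafe(32),
            agent_id=agent_id,
            scopes=frozenset(scopes),
            expires_at=self._clock() + ttl_seconds,
        )
        self._active[token.value] = token
        return token

    def authorize(self, token_value: str, required_scope: str) -> bool:
        token = self._active.get(token_value)
        if token is None:
            return False                         # unknown or already revoked
        if self._clock() >= token.expires_at:
            del self._active[token_value]        # expired: forget it immediately
            return False
        return required_scope in token.scopes    # only the narrowly granted scopes pass

    def revoke(self, token_value: str) -> None:
        # Called the moment the task completes, even if the TTL has not yet elapsed.
        self._active.pop(token_value, None)
```

In the financial-report example, the agent would call `issue("report-agent", {"read:finance.q3_revenue"}, ttl_seconds=300)`, present the token for each query, and call `revoke` once extraction finishes. Any later `authorize` call with a scope that was never granted, an expired token, or a revoked token returns `False`. The injectable clock exists only so that expiry can be exercised without waiting.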

2. Bounded Autonomy: Strategic Human Oversight

Bounded Autonomy is a critical security principle that strikes a delicate balance between the efficiency of independent AI operation and the imperative for human control, particularly in high-stakes scenarios. It defines clear, safe parameters within which an AI agent can operate independently, while mandating human approval or intervention for actions that carry significant risk, ethical implications, or exceed predefined thresholds. This approach is vital for mitigating catastrophic errors that could arise from unchecked AI autonomy, creating an essential "control plane" that supports both risk reduction and compliance requirements.

The implementation of bounded autonomy varies with the agent's function and the potential impact of its actions. An agent managing marketing campaigns, for example, might draft and schedule social media posts autonomously, while any message targeting more than 10,000 recipients or containing sensitive product launch information is routed to a human marketing manager for final approval. In more critical applications, such as an AI agent assisting with medical diagnostics, the agent might propose diagnoses and treatment plans, but a human physician would always retain the authority to review, modify, and approve both the diagnosis and the prescribed course of action. This principle directly addresses concerns around algorithmic bias, unintended consequences, and accountability in autonomous decision-making. The European Union's AI Act requires human oversight for high-risk AI systems, which makes bounded autonomy a compliance necessity as well as a practical safeguard. Guidance from the National Institute of Standards and Technology (NIST), including its AI Risk Management Framework, likewise emphasizes human oversight, reflecting the view that while AI can augment human capabilities, ultimate responsibility and control in sensitive domains should remain with people.
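The marketing example can be expressed as a small policy gate. The sketch below assumes a hypothetical `CampaignAction` record and models the human reviewer as a callback (`approver`); in a real system that callback would open a ticket or approval screen and block until someone responds. The 10,000-recipient threshold comes from the example above, and everything else is illustrative.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, List, Tuple


class Decision(Enum):
    AUTO_APPROVE = "auto_approve"
    NEEDS_HUMAN = "needs_human"


@dataclass(frozen=True)
class CampaignAction:
    """A marketing action proposed by the agent (hypothetical schema)."""
    description: str
    recipient_count: int
    mentions_unreleased_product: bool


# Threshold taken from the example in the text; in practice it would live in policy config.
MAX_AUTONOMOUS_RECIPIENTS = 10_000


def review_gate(action: CampaignAction) -> Tuple[Decision, List[str]]:
    """Decide whether the agent may act alone, and record why it may not."""
    reasons: List[str] = []
    if action.recipient_count > MAX_AUTONOMOUS_RECIPIENTS:
        reasons.append(f"audience of {action.recipient_count} exceeds {MAX_AUTONOMOUS_RECIPIENTS}")
    if action.mentions_unreleased_product:
        reasons.append("contains sensitive product-launch information")
    return (Decision.NEEDS_HUMAN if reasons else Decision.AUTO_APPROVE), reasons


def execute(action: CampaignAction,
            approver: Callable[[CampaignAction, List[str]], bool]) -> str:
    """Run the action, pausing for a human decision whenever the gate requires one."""
    decision, reasons = review_gate(action)
    if decision is Decision.NEEDS_HUMAN and not approver(action, reasons):
        return "rejected by reviewer"
    # Stand-in for the real scheduling call.
    return f"scheduled: {action.description}"
```

Returning the reasons alongside the decision means the reviewer sees exactly why escalation was triggered, and the same reasons can be written to the agent's audit log.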

3. The AI Firewall: Protecting the Interaction Boundary

The AI Firewall is a specialized security layer designed to filter, inspect, and control both inputs (user prompts) and outputs (agent responses) to protect AI systems from malicious interactions. This dedicated defense is crucial against a range of threats specific to conversational and agentic AI, including prompt injection, data exfiltration, and the generation of toxic or policy-violating content. Unlike traditional network firewalls, which inspect data packets, an AI firewall operates at a semantic and contextual level, assessing the intent and content of AI interactions.

Prompt injection, one of the most prevalent threats, involves crafting malicious inputs that manipulate an agent into ignoring its original instructions, revealing sensitive information, or performing unintended actions. An AI firewall combines techniques such as natural language processing (NLP), machine learning classifiers, and rule-based engines to detect patterns indicative of such attacks. For example, incoming prompts are scanned for phrases asking the agent to "ignore previous instructions," "reveal internal protocols," or "act as someone else." Flagged prompts can then be blocked, sanitized, or rewritten into a safer form before the agent processes them. On the outbound side, the firewall inspects responses to prevent data exfiltration, where an agent might inadvertently leak confidential information, and to ensure adherence to ethical guidelines by blocking responses that are discriminatory, hateful, or promote illegal activities. Because adversarial prompt engineering keeps growing more sophisticated, AI firewall capabilities must evolve continuously, often adding semantic similarity detection and anomaly detection to catch novel attack vectors that fixed rules would miss. The emergence of vendors specializing in AI security reflects growing recognition of the AI firewall as an essential layer of defense.
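As a rough illustration of the rule-based layer only, the following sketch screens inbound prompts against the injection phrasings mentioned above and redacts sensitive-looking strings from outbound responses. The patterns, including the `sk-` key format and the `[REDACTED:...]` marker, are assumptions chosen for the example. Regular expressions like these are easily evaded by paraphrasing, which is why production AI firewalls pair them with ML classifiers and semantic checks; this sketch also only blocks flagged prompts rather than sanitizing or rewriting them.

```python
import re
from dataclasses import dataclass, field
from typing import List

# Heuristic signatures for the injection phrasings named in the text.
# Regexes alone are easy to evade; real firewalls add classifiers on top.
INJECTION_PATTERNS = [
    re.compile(r"\bignore\s+(?:all\s+)?(?:previous|prior)\s+instructions\b", re.IGNORECASE),
    re.compile(r"\breveal\s+(?:your\s+)?(?:internal|system)\s+(?:protocols?|prompt)\b", re.IGNORECASE),
    re.compile(r"\b(?:act|pretend)\s+(?:as|to\s+be)\s+(?:someone|somebody)\s+else\b", re.IGNORECASE),
]

# Hypothetical formats for data that must never leave the system.
SENSITIVE_OUTPUT_PATTERNS = {
    "api_key": re.compile(r"\bsk-[A-Za-z0-9]{20,}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


@dataclass
class Verdict:
    allowed: bool
    text: str
    findings: List[str] = field(default_factory=list)


def screen_prompt(prompt: str) -> Verdict:
    """Inbound check: block any prompt matching a known injection phrasing."""
    hits = [p.pattern for p in INJECTION_PATTERNS if p.search(prompt)]
    return Verdict(allowed=not hits, text="" if hits else prompt, findings=hits)


def screen_response(response: str) -> Verdict:
    """Outbound check: redact sensitive-looking data before the response is returned."""
    findings: List[str] = []
    for label, pattern in SENSITIVE_OUTPUT_PATTERNS.items():
        response, count = pattern.subn(f"[REDACTED:{label}]", response)
        if count:
            findings.append(f"{label} x{count}")
    return Verdict(allowed=True, text=response, findings=findings)
```

Keeping the inbound and outbound checks as separate functions mirrors the two boundaries described above, so each can be tuned, logged, and upgraded (for example, swapped for a classifier) independently.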

4. Execution Sandboxing: Containing Untrusted Code

Execution Sandboxing involves running any agent-generated code or untrusted processes within a strictly isolated and controlled environment. This isolated perimeter, whether a container, virtual machine, or secure enclave, is designed to prevent unauthorized access to host resources, mitigate resource exhaustion attacks, and contain the impact of unpredictable or malicious code execution. Agentic AI systems often possess the capability to generate and execute code dynamically, whether to perform data transformations, interact with APIs, or automate complex workflows. While powerful, this capability introduces significant security risks if the generated code is flawed, malicious, or exploited.

Consider an agent designed to analyze and transform large CSV files. If this agent writes a Python script to perform the transformation, that script would be executed inside a sandboxed environment. The sandbox would have no outbound network access, so the script cannot exfiltrate data; strict CPU and memory quotas, so it cannot cause denial of service through resource exhaustion; and only a read-only mount of the input data with no access to the host filesystem, so it cannot modify critical system files. Modern sandboxing technologies rely on kernel-level isolation features such as Linux namespaces and cgroups to create tightly isolated, resource-controlled environments. This pattern is particularly critical for agents that interact with external tools, execute user-provided code, or operate in environments where code generation is a core function. Without robust sandboxing, a single vulnerability in an agent's code generation or execution module could lead to system-wide compromise, data breaches, or the hijacking of computing resources. Leading cloud providers and security vendors offer containerization and serverless execution environments that support these sandboxing principles, forming a foundational security layer for agentic AI deployments.
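The sketch below shows only the resource-quota portion of sandboxing: it runs agent-generated Python in a separate process with CPU-time, memory, and file-size limits set through the POSIX `resource` module, a wall-clock timeout, an empty environment, and a throwaway working directory. It assumes a Linux host, and the function name `run_untrusted` and its default limits are illustrative. It does not remove network access or hide the host filesystem; those guarantees require namespaces, containers, or virtual machines as described above, so treat this as one layer rather than a complete sandbox.

```python
import resource
import subprocess
import sys
import tempfile
from dataclasses import dataclass
from typing import Optional


@dataclass
class SandboxResult:
    returncode: Optional[int]   # None when the wall-clock timeout fired
    stdout: str
    stderr: str
    timed_out: bool


def _lower_limit(which: int, value: int) -> None:
    """Set soft and hard limits to `value`, never above an existing hard cap."""
    _soft, hard = resource.getrlimit(which)
    if hard != resource.RLIM_INFINITY:
        value = min(value, hard)
    resource.setrlimit(which, (value, value))


def run_untrusted(code: str, cpu_seconds: int = 2,
                  memory_bytes: int = 512 * 1024 * 1024,
                  wall_timeout: float = 5.0) -> SandboxResult:
    """Run agent-generated Python in a child process with hard resource quotas (POSIX only)."""

    def apply_limits() -> None:
        # Executed in the child after fork() and before exec().
        _lower_limit(resource.RLIMIT_CPU, cpu_seconds)          # CPU-time quota
        _lower_limit(resource.RLIMIT_AS, memory_bytes)          # address-space (memory) quota
        _lower_limit(resource.RLIMIT_FSIZE, 10 * 1024 * 1024)   # largest file it may write

    with tempfile.TemporaryDirectory() as scratch:
        try:
            proc = subprocess.run(
                [sys.executable, "-I", "-c", code],  # -I: ignore PYTHON* env vars and user site-packages
                cwd=scratch,                         # throwaway working directory
                env={},                              # do not inherit secrets such as API keys
                capture_output=True,
                text=True,
                timeout=wall_timeout,                # wall-clock backstop for code that blocks
                preexec_fn=apply_limits,
            )
        except subprocess.TimeoutExpired:
            return SandboxResult(None, "", "", timed_out=True)
    return SandboxResult(proc.returncode, proc.stdout, proc.stderr, timed_out=False)
```

A call such as `run_untrusted("while True: pass", cpu_seconds=1)` is terminated once it exhausts its CPU quota instead of tying up the host, while the wall-clock timeout catches code that blocks without consuming CPU.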

5. Immutable Reasoning Traces: Ensuring Transparency and Accountability

Immutable Reasoning Traces provide a critical mechanism for achieving transparency, accountability, and auditability in autonomous agent decision-making. This practice involves building time-stamped, tamper-evident, and persistent logs that meticulously capture an agent’s inputs, its internal reasoning processes (key intermediate artifacts used for decision-making), the policy checks it applied, and its final actions or decisions. This comprehensive logging is paramount for understanding why an agent acted in a particular way, detecting behavioral drift, investigating incidents, and demonstrating compliance with regulatory requirements.

In high-stakes domains such as finance, healthcare, or procurement, where algorithmic decisions can have significant legal or financial consequences, immutable reasoning traces are indispensable. For example, an agent responsible for approving purchase orders would record not only the final approval but also the original request context, the specific policy snippets retrieved and applied (e.g., budget limits, vendor pre-approvals), the guardrail checks performed (e.g., fraud detection algorithms), and the timestamp of each step. These logs are designed to be write-once, cryptographically verifiable, and resistant to post-hoc alteration, which preserves their integrity for independent audits. They are also vital for detecting gradual shifts in agent behavior over time (behavioral drift, which may stem from model drift in the underlying model), since such shifts could indicate emerging biases, security vulnerabilities, or deviations from intended operational parameters. Emerging AI regulations increasingly expect organizations to be able to reconstruct how a system reached its decisions; the EU AI Act, for example, requires high-risk AI systems to support automatic logging of events and to be transparent enough for deployers to interpret their output. Such traces also let organizations continuously refine policies, improve agent performance, and build trust in their autonomous systems.
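One common way to make such a log tamper-evident is a hash chain, in which every entry stores the SHA-256 hash of its predecessor, so editing any earlier record breaks every link after it. The sketch below assumes that approach (the text does not prescribe one); `ReasoningTrace` and the step names are hypothetical. A chain held only in memory can still be rewritten wholesale, so in practice the latest hash would be anchored somewhere the agent cannot write, such as write-once storage or an external timestamping service.

```python
import hashlib
import json
import time
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(record: Dict[str, Any]) -> str:
    # Canonical JSON (sorted keys, no whitespace) so identical records always hash identically.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class ReasoningTrace:
    """Append-only, hash-chained log: each entry commits to the one before it."""

    def __init__(self) -> None:
        self._entries: List[Dict[str, Any]] = []

    def append(self, step: str, data: Dict[str, Any]) -> str:
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS
        record = {
            "seq": len(self._entries),
            "ts": time.time(),          # timestamp of this step
            "step": step,               # e.g. "request", "policy_check", "decision"
            "data": data,               # must be JSON-serializable
            "prev_hash": prev_hash,
        }
        record["hash"] = _digest(record)  # hash covers every field above, including prev_hash
        self._entries.append(record)
        return record["hash"]

    def verify(self) -> bool:
        """Recompute every hash and link; any edit to an earlier entry makes this False."""
        prev_hash = GENESIS
        for i, entry in enumerate(self._entries):
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["seq"] != i or entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

For the purchase-order example, the agent would append one entry for the request context, one per policy snippet and guardrail check, and one for the final decision; an auditor later re-runs `verify()` and compares the final hash with the externally anchored copy.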

A Layered Defense for the Autonomous Future

These five security patterns are most effective when deployed not as isolated controls, but as a cohesive, layered defense system. Just-in-Time Tool Privileges rigorously limit an agent’s potential access at any given moment, significantly reducing the surface area for attack. Bounded Autonomy ensures that critical or sensitive actions are always subject to human oversight, preventing unchecked autonomous operations from leading to severe consequences. The AI Firewall acts as the frontline defense, scrutinizing and sanitizing interactions at the system boundary to thwart malicious prompts and prevent undesirable outputs. Execution Sandboxing provides a vital containment mechanism, isolating any dynamically generated or untrusted code to prevent system compromise. Finally, Immutable Reasoning Traces offer the essential audit trail, providing the transparency and accountability necessary to detect anomalies, investigate incidents, and continuously refine security policies and operational parameters.
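The ordering of these layers can be sketched as a single request handler. In the skeleton below, each pattern is injected as a plain callable so that the control flow is visible; the `Layers` container and every field name are hypothetical, and the plain list used for `trace` stands in for a tamper-evident log.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List

Action = Dict[str, Any]


@dataclass
class Layers:
    """Each field stands in for one security pattern (all names hypothetical)."""
    screen_prompt: Callable[[str], bool]          # AI firewall, inbound
    plan: Callable[[str], Action]                 # the agent proposes an action
    needs_human: Callable[[Action], bool]         # bounded autonomy policy
    approve: Callable[[Action], bool]             # human reviewer
    grant_scope: Callable[[Action], str]          # JIT: returns a short-lived token
    revoke: Callable[[str], None]                 # JIT: revoke when the task ends
    run_sandboxed: Callable[[Action, str], str]   # execution sandbox
    screen_response: Callable[[str], str]         # AI firewall, outbound
    trace: List[Dict[str, Any]] = field(default_factory=list)  # stand-in for the reasoning trace


def handle(request: str, layers: Layers) -> str:
    layers.trace.append({"step": "input", "request": request})
    if not layers.screen_prompt(request):
        layers.trace.append({"step": "blocked_by_firewall"})
        return "request blocked"
    action = layers.plan(request)
    layers.trace.append({"step": "plan", "action": action})
    if layers.needs_human(action) and not layers.approve(action):
        layers.trace.append({"step": "rejected_by_human"})
        return "action rejected"
    token = layers.grant_scope(action)
    try:
        raw = layers.run_sandboxed(action, token)
    finally:
        layers.revoke(token)          # privileges end with the task, even if it fails
    result = layers.screen_response(raw)
    layers.trace.append({"step": "result", "result": result})
    return result
```

The `try`/`finally` around sandboxed execution reflects the JIT principle: the temporary grant is revoked even when the tool call raises, and no token is issued at all for requests stopped by the firewall or a human reviewer.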

Together, these layered controls reduce the likelihood that a single security failure cascades into a systemic breach, without sacrificing the operational benefits and efficiencies that make agentic AI appealing. Integrating them is not merely a technical exercise but a strategic one: it builds trust, supports compliance, and fosters the responsible development and deployment of autonomous AI systems. As agentic AI continues to evolve and spread across sectors, these security patterns will need continuous adaptation to keep pace with the interplay between advanced automation and the need for robust cybersecurity. With regulators such as the EU already setting requirements for high-risk AI systems, regulatory attention to AI security is likely to grow over the next few years, driving further adoption and refinement of these patterns across the global technology landscape.