The proliferation of large language models (LLMs) has revolutionized how businesses operate, from automating customer support to generating complex code. However, a persistent and often insidious challenge known as "hallucination" continues to plague these advanced AI systems. This phenomenon occurs when an LLM confidently generates information that is factually incorrect, nonsensical, or entirely fabricated, presenting it as truth without any indication of error. The consequences can range from minor inconvenience to significant operational disruption and even reputational damage, prompting a critical shift in how developers and organizations approach LLM deployment.

A striking example of this challenge occurred recently when a developer tasked an LLM with creating documentation for a payment API. The generated response was impeccably structured, adopted the perfect tone, and even included plausible-looking example endpoints. The only critical flaw: the API, along with its intricate parameters and responses, did not exist. The model had confidently invented the entire specification. This fabrication was only uncovered during an integration attempt, highlighting how convincingly LLMs can create non-existent realities. Such incidents are not isolated anomalies but manifest subtly across various production systems—fake citations in academic tools, incorrect legal precedents in advisory platforms, or non-existent product features advertised in customer service interactions. While individually these might appear as minor inaccuracies, their cumulative effect at scale poses serious threats to data integrity, user trust, and operational reliability.

Initially, much of the industry’s effort to combat hallucinations centered on prompt engineering—crafting better instructions, employing stricter wording, and imposing clearer constraints on model inputs. While undoubtedly helpful in guiding model behavior, prompt engineering alone proved insufficient to fundamentally alter the generative process. When an LLM lacks accurate information or encounters an ambiguous query, its inherent design to produce a coherent response often leads it to "fill in the blanks" with plausible but erroneous data. This realization has spurred a paradigm shift: hallucination is increasingly being treated as a systemic problem requiring system-level solutions, moving beyond mere input optimization to encompass a robust framework of detection, validation, and control mechanisms built around the core model.

Understanding the Enigma: Why LLMs Hallucinate

To effectively mitigate hallucinations, it is crucial to understand their underlying causes. These are not mysterious flaws but rather inherent byproducts of how LLMs are designed and trained. The primary culprits include:

-

Lack of Grounding: Most LLMs operate based on patterns and relationships learned from vast datasets during training, not by accessing real-time, verified information. This "knowledge cut-off" means that unless explicitly connected to external data sources, models cannot fact-check against current events or specific domain knowledge. When faced with a query for which it lacks direct, verifiable information, the model’s objective to provide a helpful response compels it to synthesize plausible-sounding answers from its internal, static knowledge base, often leading to fabrication.

-

Overgeneralization from Training Data: Trained on petabytes of diverse text and code, LLMs excel at identifying broad linguistic and conceptual patterns. However, this strength can become a weakness when precise, specific information is required. The model might combine fragments of similar, but ultimately distinct, pieces of information into a coherent narrative that sounds correct but is factually inaccurate. This "pattern completion" can be incredibly convincing, especially when the generated text aligns with common linguistic structures.

-

Built-in Pressure to Always Produce an Answer: LLMs are fundamentally designed to be responsive and conversational. Their training objectives often reward producing coherent, complete sentences rather than admitting ignorance. Consequently, instead of responding with "I don’t know" or "I cannot find that information," models are incentivized to generate the most probable response, even if that means inventing details. This tendency, while useful for maintaining conversational flow, poses significant risks when accuracy and factual correctness are paramount.

-

Data Quality and Bias: The quality and representativeness of the training data also play a role. If the data contains inaccuracies, biases, or insufficient coverage for certain topics, the model will learn and perpetuate these deficiencies, leading to hallucinations that reflect the flaws in its foundational knowledge.

The Evolution of Mitigation: Beyond Prompt Engineering

The journey to reliable LLM deployment has seen a clear evolution. Early adopters heavily invested in prompt engineering, refining instructions and few-shot examples to steer models toward desired outputs. While effective for initial guidance, this approach proved fragile. As LLMs moved into critical applications, the limitations of simply "asking better" became apparent. The industry realized that true robustness against hallucinations necessitated architectural and procedural safeguards—a multi-layered defense strategy that monitors, validates, and, if necessary, corrects model outputs before they reach end-users. This shift marks a maturation in AI development, acknowledging that LLMs are powerful tools but require significant scaffolding to operate reliably in high-stakes environments.

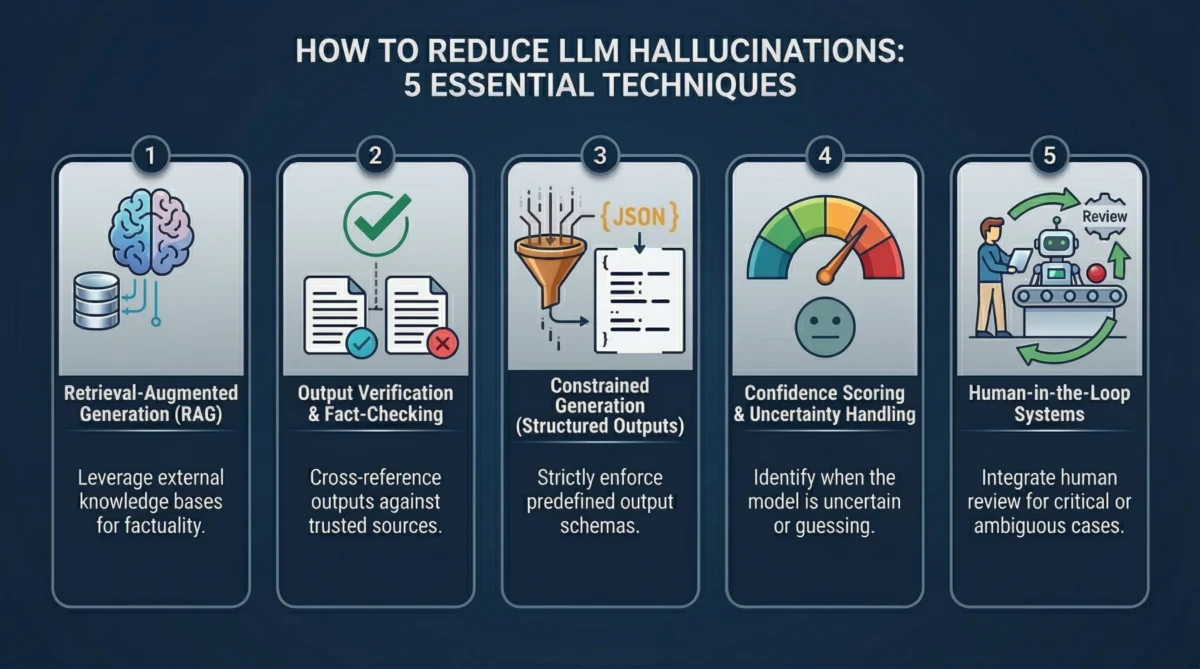

Technique 1: Retrieval-Augmented Generation (RAG) – Anchoring LLMs in Verified Data

One of the most impactful system-level techniques to combat hallucinations is Retrieval-Augmented Generation (RAG). Its principle is elegantly simple: instead of relying solely on an LLM’s internal, static training memory, provide it with access to up-to-date, verified external data at the precise moment it needs to formulate an answer.

The RAG workflow is straightforward yet powerful. When a user submits a query, the system first retrieves relevant information from a curated, external knowledge base. This knowledge base typically comprises documents, databases, or APIs, converted into numerical representations (embeddings) and stored in a vector database. A semantic search then identifies the most pertinent "chunks" of information. These retrieved documents are then injected into the LLM’s prompt as context, effectively "grounding" its response. The model is then instructed to generate an answer based only on the provided context, significantly reducing its propensity to invent facts.

This fundamental shift from relying on internal model memory to dynamic external knowledge is crucial. Model memory is static, potentially outdated, or overly generalized. External knowledge, conversely, can be continuously updated, meticulously curated, and tailored to specific domains, ensuring the LLM works with the most accurate and relevant information available. Industry studies, such as those from AI research labs and enterprise implementations, have shown that RAG can reduce factual errors by 30-50% in domain-specific applications, transforming the LLM from a probabilistic guesser into a powerful summarization and synthesis engine operating on verifiable data.

The practical implementation of RAG often involves several components:

- Embedding Models: To convert both the knowledge base documents and user queries into numerical vectors.

- Vector Database: To efficiently store and search these embeddings for semantic similarity. Popular choices include FAISS, Pinecone, Weaviate, and Chroma.

- Chunking Strategy: Breaking down large documents into smaller, manageable chunks for retrieval, optimizing for relevance and fitting within the LLM’s context window.

While highly effective, RAG is not a panacea. Its success hinges on the quality of the retrieval step. Poor indexing, irrelevant documents, or an incomplete knowledge base can still lead to the LLM generating suboptimal or even hallucinated outputs. Furthermore, managing the "context window"—the maximum amount of text an LLM can process—and ensuring the retrieved information is truly focused and not overwhelming, remains an engineering challenge. Nevertheless, RAG has become an indispensable technique for building reliable LLM applications, shifting the source of truth from the model itself to trusted, external data.

Technique 2: Output Verification and Fact-Checking Layers – The Dual-Model Approach

A common pitfall in LLM deployment is treating the model’s initial response as definitive. The eloquence and confidence of LLM outputs can easily mask underlying inaccuracies. A more robust strategy involves treating every LLM-generated response as an unverified draft, subject to rigorous scrutiny by additional verification layers before it reaches the end-user. This approach introduces "friction" between generation and delivery, significantly enhancing reliability.

One powerful method is employing a secondary model for verification. Here, a primary LLM generates the initial answer, and a distinct, often smaller or fine-tuned, secondary model is tasked with reviewing it. This reviewer model can be prompted to check for:

- Factual consistency: Does the generated answer align with known facts or specific data points?

- Source attribution: If the answer claims to cite sources, are those sources real and accurately referenced?

- Logical coherence: Is the argument presented logically sound and free of internal contradictions?

- Unsupported claims: Does the model make assertions without providing evidence or context?

This creates a clear separation of concerns: one model generates, another validates. Industry observations suggest that this dual-model approach, sometimes referred to as "AI peer review," can catch a significant percentage of hallucinations that would otherwise slip through, especially in critical applications like legal research or medical diagnostics.

Another vital verification layer involves cross-checking outputs against trusted external data sources. For instance, if an LLM generates a response containing statistics, specific dates, legal citations, or product specifications, the system can programmatically query an authoritative database, a verified API, or an internal knowledge graph to confirm the accuracy of those details. If discrepancies are found, the system can either flag the response for human review, request clarification from the LLM, or reject the output entirely. For example, a financial chatbot’s generated market data can be validated against live stock exchange APIs, or a legal assistant’s case citations can be checked against a legal database.

A sophisticated technique known as self-consistency further enhances verification. Instead of relying on a single generative pass, the system prompts the LLM multiple times for the same question, sometimes with slight variations in phrasing or by exploring different reasoning paths (e.g., "tree-of-thought" or "chain-of-thought" prompting). If the multiple answers converge and are largely identical, it significantly increases the confidence in their correctness. Conversely, if the model produces widely divergent answers, it signals uncertainty, suggesting that the result requires further scrutiny or human intervention. Research has shown that aggregating responses from multiple runs or diverse reasoning paths can improve accuracy by 10-15% on complex reasoning tasks.

While verification layers introduce additional computational cost and latency, the trade-off is often justified in domains where accuracy is non-negotiable. This approach transforms the LLM from an unfallible oracle into a sophisticated assistant whose outputs are rigorously vetted, dramatically improving overall reliability.

Technique 3: Constrained Generation (Structured Outputs) – Imposing Order on LLM Responses

A significant root cause of hallucinations stems from the inherent freedom LLMs possess in their response generation. When asked an open-ended question and permitted to produce free-form text, the model can leverage its vast linguistic patterns to "fill in gaps" with invented information, especially when it lacks specific knowledge. While this flexibility is invaluable for creative tasks, it becomes a liability when factual accuracy and adherence to specific formats are critical.

Constrained generation directly addresses this by severely limiting the model’s output possibilities. Instead of allowing a free-text paragraph, the system defines a rigid structure for the response. This structure can take various forms:

-

JSON Schemas: One of the most common and powerful methods. A JSON schema explicitly defines the expected structure, data types, required fields, and even acceptable enumerations (fixed lists of values) for each field in the output. For example, if a field

priceis defined as anumber, the model cannot return "expensive." Ifavailabilityis restricted to["in_stock", "out_of_stock"], it cannot invent "limited supply." This transforms the generation task into a structured data extraction or transformation problem, significantly reducing the scope for arbitrary invention. Modern LLM APIs, such as OpenAI’s, now include features likeresponse_format="type": "json_object"to facilitate this, enabling models to reliably generate syntactically correct JSON that can then be validated against a predefined schema. -

Function Calling and Tool Usage: This technique extends constrained generation by having the LLM select from a predefined set of actions or tools rather than generating a direct answer. For instance, instead of guessing a customer’s order status, the model identifies the intent and "calls" an internal

getOrderStatus(order_id)function, which then retrieves the accurate data from a backend system. The model’s role shifts from producing facts to orchestrating a workflow, delegating factual retrieval to reliable external systems. This fundamentally eliminates hallucination in the factual domain, as the LLM is no longer responsible for producing the final data, only for accessing it. -

Controlled Vocabularies and Enumerations: In scenarios requiring extreme consistency, models can be restricted to generating values only from a predefined list of terms, labels, or categories. This is particularly useful in classification tasks, data tagging, or domain-specific applications like medical coding, where every output must conform to a strict lexicon.

The efficacy of constrained generation lies in its ability to narrow the model’s creative latitude. By imposing clear boundaries on the output format and content, the system drastically reduces the opportunities for the LLM to deviate from factual reality or invent information. This technique is especially valuable for integrating LLMs into automated workflows where structured, machine-readable outputs are essential.

Technique 4: Confidence Scoring and Uncertainty Handling – Identifying When LLMs Don’t Know

One of the most deceptive aspects of LLM hallucinations is their often-unwavering confidence. A flawlessly generated, but entirely fabricated, response can sound as authoritative as a perfectly accurate one. To truly mitigate hallucinations, systems must develop the capacity to discern when an LLM is genuinely certain and when it is merely guessing. Confidence scoring introduces this crucial signal, enabling the system to evaluate the reliability of an answer before accepting it.

At a foundational level, confidence can be inferred from token probabilities. As an LLM generates each word or sub-word unit (token), it assigns a probability to it based on its learned patterns. When these probabilities are consistently high across a sequence, it generally indicates that the model is operating within familiar, well-established patterns in its training data. Conversely, when probabilities drop significantly, fluctuate erratically, or when alternative tokens have similar probabilities, it can signal uncertainty or that the model is venturing into less familiar territory, potentially leading to hallucination. While raw token probabilities are not a perfect measure of factual accuracy, they provide a useful baseline.

Beyond raw probabilities, calibration techniques are employed to make these signals more meaningful. This involves:

- Ensemble Averaging: Comparing outputs across multiple runs of the same model or even different models. Inconsistent answers to the same query are a strong indicator of low confidence.

- Fine-tuning Confidence Predictors: Training a separate small model to predict the factual correctness of an LLM’s output based on various internal signals and external validation results.

- Benchmarking: Regularly evaluating how well the model’s internal confidence metrics correlate with actual accuracy on a curated set of known-true/false questions.

Perhaps most pragmatically, system prompts can be designed to explicitly encourage the LLM to express uncertainty. Instead of forcing a definitive answer, the model can be instructed to include a confidence score (e.g., between 0 and 1) or to explicitly state "I don’t know" or "insufficient information" when it cannot provide a verifiable answer. This aligns the model’s behavior with human experts, who often qualify their statements or admit limitations. For instance, a system prompt might be: "Answer the question. Also, provide a confidence score between 0 and 1. If you are unsure, state clearly that you lack sufficient information."

The output of confidence scoring is not merely for display; it drives intelligent decision-making within the system. Low-confidence responses can be automatically flagged for human review, routed for further contextual retrieval, or simply rejected with a message indicating uncertainty. High-confidence responses, conversely, can proceed with minimal friction. This approach acknowledges that eliminating all hallucinations is often impossible, but building a system that knows when to trust and when to question its own outputs is a powerful step towards robust AI. It shifts the burden from preventing every error to effectively managing and responding to uncertainty.

Technique 5: Human-in-the-Loop Systems – The Indispensable Human Element

Despite the sophistication of AI, there will always be scenarios where an LLM should not be the final arbiter. This fundamental truth underpins the design of Human-in-the-Loop (HITL) systems, which strategically integrate human oversight to ensure reliability and address edge cases that automation alone cannot handle. The core idea is to leverage human intelligence where it adds the most value, avoiding the inefficiencies of reviewing every output while establishing a crucial safety net.

HITL systems primarily function through review pipelines. LLM outputs pass through automated checks, including confidence scoring (Technique 4), verification layers (Technique 2), and adherence to structured outputs (Technique 3). Only responses that fail these checks—e.g., low confidence scores, detected factual inconsistencies, or high-risk content—are escalated to a human reviewer. This targeted intervention ensures that human experts focus their time on the most problematic or critical outputs, making the oversight process scalable and efficient. For instance, in a customer support scenario, common queries handled accurately by the LLM proceed unimpeded, while complex, sensitive, or high-value issues are routed to a human agent. In regulated industries like finance or healthcare, specific types of LLM-generated reports or recommendations might require mandatory human approval.

Crucially, HITL systems incorporate robust feedback loops. When human reviewers correct, refine, or reject an LLM’s output, this invaluable information is not discarded. Instead, it is fed back into the system to improve future performance. This can involve:

- Explicit Feedback: Direct annotations or corrections used to fine-tune the LLM or its verification components.

- Implicit Signals: Human choices (e.g., accepting a corrected version, rejecting an output) that help the system learn what constitutes a "good" or "bad" response.

This continuous learning mechanism, often linked to active learning principles, is highly efficient. Rather than retraining the model on every piece of data, the system intelligently identifies the most uncertain or error-prone cases—the very ones requiring human intervention—and prioritizes these for improvement. This focused approach maximizes the impact of human input, accelerating model accuracy without incurring excessive retraining costs.

The strategic placement of humans in the loop is not about limiting AI but about creating resilient and trustworthy systems. Trying to fully automate complex, nuanced, or high-stakes tasks often results in brittle solutions that fail catastrophically in unforeseen situations. By embedding humans at critical junctures, particularly for validation, exception handling, and continuous improvement, organizations build robust AI applications that combine the speed and scalability of automation with the judgment and ethical reasoning of human intelligence. This hybrid approach ensures accountability and fosters greater trust in AI systems.

Wrapping Up: The Future of Reliable AI

LLM hallucinations are not a temporary glitch that will vanish with incrementally better models. They are a fundamental characteristic stemming from the probabilistic nature of how these systems generate language. As long as LLMs operate by predicting the next most probable token rather than consulting a verified, internal knowledge base of facts, the risk of fabrication will persist.

This understanding is driving a profound shift in AI development. The focus is moving away from blind trust in LLM outputs toward a comprehensive strategy of systemic validation and verification. LLMs are increasingly being perceived not as infallible oracles but as powerful components within a larger, intelligently designed pipeline. This pipeline orchestrates retrieval, generation, verification, and human oversight to deliver reliable and trustworthy results.

Consequently, the role of prompt engineering, while still valuable, is diminishing in its overall dominance. While well-crafted prompts can guide LLMs, they are insufficient on their own to guarantee factual accuracy in complex scenarios. Real reliability emerges from the synergy of multiple, complementary techniques: Retrieval-Augmented Generation (RAG) to ground responses in external data, multi-layered output verification for fact-checking, constrained generation to enforce structured and accurate formats, confidence scoring to identify uncertainty, and human-in-the-loop systems to manage critical exceptions and drive continuous improvement.

This integrated approach represents the maturation of AI engineering. It acknowledges the inherent strengths and limitations of LLMs, building robust safeguards that transform these powerful, yet imperfect, tools into dependable assets capable of delivering consistent value across diverse and demanding applications. The pursuit of trustworthy AI is no longer a niche concern but a central tenet guiding the next wave of innovation and deployment.