The landscape of artificial intelligence interaction is undergoing a significant transformation, moving beyond the familiar cloud-based chatbots like Google’s Gemini, OpenAI’s ChatGPT, and Anthropic’s Claude. While these platforms have accustomed users to fixed interfaces, internet dependency, and often tiered access, a paradigm shift is emerging: the deployment of powerful, fully functional large language models (LLMs) directly on mobile devices. This innovation, spearheaded by Google, promises to redefine how users engage with AI, offering unparalleled privacy, offline capabilities, and cost-free access through its pioneering AI Edge Gallery application.

The Evolution of AI Interaction: From Cloud to Edge

For years, the vast majority of AI interactions, particularly with sophisticated language models, have relied on robust cloud infrastructure. Users submit queries to remote servers, which process the information using immense computational power and then send responses back. This model has enabled the rapid development and deployment of incredibly capable AI tools, making them accessible to a global audience. However, it also introduced inherent limitations: a constant requirement for internet connectivity, potential latency issues depending on network conditions, and most critically, privacy concerns regarding data transmission and storage on third-party servers. Users often grapple with questions about how their personal data, conversations, and uploaded content are handled, processed, and potentially used to train future models. Furthermore, many advanced cloud-based AI services operate on a freemium model, limiting free usage or gating premium features behind subscriptions.

Recognizing these challenges and the immense potential of localized processing, Google has been at the forefront of developing "edge AI" solutions. Edge AI refers to the processing of AI algorithms and data on the device itself (the "edge" of the network) rather than sending it to a central cloud server. This architectural shift is not merely a technicality; it represents a fundamental change in the user-AI relationship, emphasizing control, efficiency, and data sovereignty.

Introducing AI Edge Gallery: Your Personal AI Hub

Google’s AI Edge Gallery application, available on the Play Store, is the linchpin of this new on-device AI ecosystem. It serves as a streamlined platform designed to facilitate the effortless installation and management of large language models directly onto compatible Android smartphones. The application democratizes access to advanced AI by removing the common barriers associated with cloud-based services. The process is remarkably straightforward: users simply install the AI Edge Gallery app, browse and select their desired open-source LLM, download it to their device, and immediately gain access to a powerful AI assistant that operates entirely locally.

The implications of this local execution are profound. Once a model is downloaded, its functionality is no longer tethered to an internet connection. Users can converse with their AI, generate text, analyze data, or perform other tasks in airplane mode, in remote areas without coverage, or simply when they want to conserve mobile data. Crucially, all models offered through AI Edge Gallery are open source. This commitment to open-source development not only makes the AI free to use without recurring costs, but also fosters transparency and allows the wider developer community to inspect, contribute to, and build upon these models, accelerating innovation and supporting long-term viability.

Gemma 4: Google’s Powerful Open-Source Offering

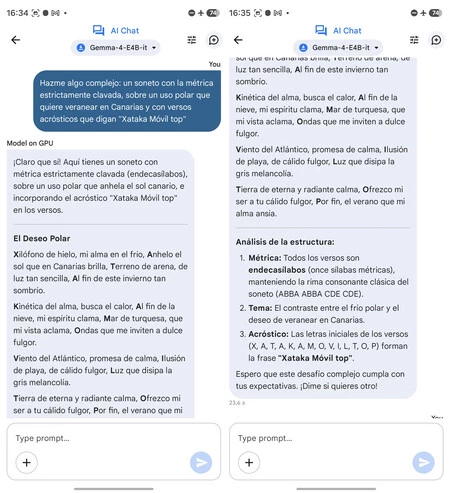

A cornerstone of the AI Edge Gallery experience is the availability of Google’s recently released Gemma models, particularly Gemma 4. Gemma is a family of lightweight, state-of-the-art open models built from the same research and technology used to create Google’s proprietary Gemini models. Released with a strong emphasis on responsible AI development, Gemma models are designed to be highly capable, offering robust performance in various natural language processing tasks, including creative writing, code generation, summarization, question answering, and conversational dialogue.

The Gemma family comprises several versions, optimized for different computational environments. For mobile devices, Google has released distilled, highly efficient variants known as E2B and E4B (the "E" denotes effective parameters, roughly 2 billion and 4 billion respectively). These smaller models are engineered to run efficiently on mobile chipsets with limited memory and processing power while still delivering impressive performance. Larger versions, such as 26B and 31B (26 billion and 31 billion parameters), are available for more powerful desktop or server environments, offering greater contextual understanding and capability. The inclusion of Gemma 4 within AI Edge Gallery signifies Google’s commitment to making its cutting-edge AI research accessible to a broader audience, fostering an ecosystem of innovation that extends beyond its own commercial products.

Initial testing of Gemma E4B on high-end mobile devices, such as the Samsung Galaxy S26 Ultra, Vivo X300 Pro, and Google Pixel 8 Pro, has yielded striking results. The models generated responses quickly and precisely, with a conversational naturalness that belies their on-device operation. Beyond basic text interaction, these models offer "agent skills" that let them analyze photos, generate QR codes, and act as a versatile personal assistant, further expanding the utility of local AI. The speed and quality of these on-device models signal a significant leap in mobile AI capabilities.

Accessing On-Device AI: The Installation Process

Installing Gemma 4, or any other compatible LLM, via the AI Edge Gallery app is designed to be user-friendly, catering to a wide range of Android devices. However, optimal performance is directly tied to the underlying hardware capabilities of the smartphone.

Here’s a general guide to getting the latest Google AI running on your phone:

- Download AI Edge Gallery: Open the Google Play Store on your Android device and search for "AI Edge Gallery". Download and install the application.

- Open the App and Grant Permissions: Upon first launch, the app may request necessary permissions, such as storage access to download and store the AI models. Grant these permissions to proceed.

- Browse and Select a Model: Within the AI Edge Gallery interface, you will find a list of available open-source LLMs. Look for the Gemma models, specifically the mobile-optimized versions like Gemma E2B or Gemma E4B.

- Consider Your Device’s Power:

  - If your mobile device is an older model or has limited processing power and RAM, it is advisable to start with a smaller model like Gemma E2B. This variant requires less computational overhead and will offer a smoother experience on less powerful hardware.

  - For recent high-end smartphones equipped with powerful chipsets (e.g., Qualcomm Snapdragon 8 Gen series, Google Tensor series, MediaTek Dimensity flagship chips) and ample RAM (8GB or more), the Gemma E4B model is recommended. This larger model offers a richer contextual understanding and more nuanced responses, noticeably enhancing the practical AI experience.

- Download the Model: Once you’ve selected your preferred Gemma variant, initiate the download. Be aware that these models can be several gigabytes in size, so ensure you have sufficient internal storage space and a stable Wi-Fi connection for the download.

- Launch and Interact: After the download is complete, the model will be ready for immediate use. You can typically launch a chat interface directly from the AI Edge Gallery app.

- Activate Agent Skills (Optional): For enhanced functionality beyond conversational AI, explore the "Agent skills" option within the main screen of the application. Activating this feature can unlock capabilities such as photo analysis, QR code generation, and other utility-focused tasks, transforming your local AI into a more versatile digital assistant.
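
The device guidance in the steps above can be written down as a small rule of thumb. The sketch below is only an illustration: the function name and its inputs are hypothetical, and the 8 GB threshold is taken from this guide's recommendation, not from an official requirement.

```python
def recommend_variant(ram_gb: float, high_end_chipset: bool) -> str:
    """Suggest a Gemma variant using this guide's rule of thumb.

    Assumption: 8 GB of RAM plus a recent flagship chipset is treated as
    the cutoff for E4B; anything else falls back to the smaller E2B.
    """
    if high_end_chipset and ram_gb >= 8:
        return "Gemma E4B"
    return "Gemma E2B"


if __name__ == "__main__":
    # Hypothetical devices, described only by RAM and chipset class.
    print(recommend_variant(ram_gb=12, high_end_chipset=True))
    print(recommend_variant(ram_gb=6, high_end_chipset=False))
```

If the larger model feels sluggish in practice, dropping back to E2B is the simpler fix than tuning anything else.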

This localized chatbot is adept at general conversation, offering quick and insightful responses. Its ability to process information without external servers makes it an ideal companion for a variety of personal and professional tasks.

Advantages and Disadvantages of On-Device AI

While the shift to on-device AI brings numerous benefits, it also presents certain trade-offs that users should consider.

Pros of On-Device AI:

- Enhanced Privacy and Data Security: Perhaps the most compelling advantage is privacy. All interactions and data processing occur directly on your device. This means your conversations, prompts, and any personal information you share with the AI never leave your phone, eliminating concerns about data being transmitted to external servers, stored in the cloud, or used for model training without explicit consent.

- Offline Functionality: The ability to operate completely without an internet connection is revolutionary. This makes local AI invaluable for travelers, individuals in areas with unreliable connectivity, or anyone looking to minimize mobile data usage. From translating phrases in a foreign country to summarizing documents on a long flight, the AI remains accessible.

- Cost-Effectiveness: Since the models are open-source and run locally, there are no subscription fees or per-query charges. Once downloaded, the AI is free to use indefinitely, democratizing access to powerful computational tools.

- Reduced Latency and Faster Responses: By eliminating the round trip to cloud servers, on-device AI removes network latency from every request. On capable hardware, responses begin quickly and do not slow down when the connection does, leading to a smoother and more fluid conversational experience.

- Increased Reliability: Dependence on external network infrastructure is removed, making the AI’s availability and performance more consistent, unaffected by server outages or internet slowdowns.

- Customization and Control: For developers and advanced users, the open-source nature of these models allows for greater customization, fine-tuning, and integration into specialized applications or workflows.
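
To make the latency point concrete, here is a minimal back-of-the-envelope model of response time. Every number in it is a placeholder chosen for illustration, not a measurement of Gemma or of any cloud service, and the function name is hypothetical.

```python
def response_time_s(first_token_s: float, tokens: int, tokens_per_s: float,
                    network_round_trip_s: float = 0.0) -> float:
    """Rough response time: network delay + time to first token + generation."""
    return network_round_trip_s + first_token_s + tokens / tokens_per_s


if __name__ == "__main__":
    # Placeholder figures; generation speed is held equal on purpose so that
    # only the network round trip differs between the two cases.
    cloud = response_time_s(0.5, tokens=100, tokens_per_s=20,
                            network_round_trip_s=0.3)
    local = response_time_s(0.5, tokens=100, tokens_per_s=20)
    print(f"cloud: {cloud:.1f} s, local: {local:.1f} s, "
          f"saved: {cloud - local:.1f} s")
```

In this simplified model the saving equals the round trip itself. Real generation speed on a phone depends on its hardware, which is the trade-off covered under the cons below.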

Cons of On-Device AI:

- Hardware Dependency: The primary limitation is the requirement for powerful mobile hardware. Running complex LLMs locally demands significant processing power, ample RAM, and efficient neural processing units (NPUs). Older or lower-end smartphones may struggle to run these models efficiently, if at all, leading to slow response times or instability.

- Model Size and Capabilities: While Gemma models are highly capable, even the E4B version (4 billion parameters) might not match the sheer breadth of knowledge, the depth of reasoning, or the cutting-edge capabilities of the largest cloud-based models (which can have hundreds of billions or even trillions of parameters). There’s a trade-off between local efficiency and ultimate generative power.

- Storage Requirements: AI models, even optimized ones, consume a substantial amount of storage space on the device. Users with limited internal storage might find it challenging to accommodate multiple models or larger variants.

- Update Mechanism: Keeping local models updated with the latest knowledge or improvements requires users to manually download new versions, unlike cloud models that are continuously updated on the server side.

- Energy Consumption: Running computationally intensive AI tasks locally can consume more battery power than simply sending a query to the cloud.

- Limited Integration (Currently): While "Agent skills" are emerging, deep integration with other phone functions (like accessing real-time web data, interacting with other apps, or controlling smart home devices) might be more complex for local models compared to cloud-connected AI assistants.
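
The storage requirement can be estimated with simple arithmetic: parameter count times bits per weight, divided by eight to get bytes. The sketch below assumes a plain 4-billion-parameter model and a few common weight precisions. It ignores file-format overhead and runtime memory, and the precisions shown are assumptions, not the formats AI Edge Gallery actually ships.

```python
def estimated_size_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    # Assumed precisions: 16-bit floats and 8-bit / 4-bit quantized weights.
    for bits in (16, 8, 4):
        size = estimated_size_gb(4, bits)
        print(f"4B parameters at {bits}-bit: about {size:.1f} GB")
```

Even at the lowest precision shown, a model of this size occupies gigabytes, which is consistent with the download-size warning in the installation steps.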

Broader Impact and Future Implications

The emergence of platforms like AI Edge Gallery and powerful on-device models like Gemma 4 marks a pivotal moment in the evolution of artificial intelligence. This development has several significant implications for the tech industry and everyday users:

- Democratization of Advanced AI: By making sophisticated AI models freely available and runnable on personal devices, Google is significantly lowering the barrier to entry for developers and users alike. This fosters innovation, allowing smaller teams and individuals to experiment with and build upon powerful AI without needing access to expensive cloud resources.

- Shift in Mobile Computing Paradigms: This move signals a strategic shift where smartphones are no longer just terminals for cloud services but powerful, self-sufficient computing hubs. Manufacturers will increasingly focus on integrating specialized AI hardware (NPUs, dedicated AI accelerators) into their mobile chipsets to support these local capabilities.

- Enhanced Personalization and Accessibility: On-device AI can be tailored more specifically to individual user habits and preferences without privacy concerns. It can also enhance accessibility features, providing real-time assistance, translation, and transcription capabilities to a wider audience, including those with disabilities or in underserved areas.

- New Use Cases for Edge AI: Beyond conversational chatbots, the ability to run AI locally unlocks new possibilities for applications in augmented reality, real-time object recognition, advanced photography and video processing, localized predictive analytics, and enhanced device security, all without relying on external servers.

- Competitive Landscape: Google’s move intensifies competition in the AI space, pushing other tech giants like Apple, Samsung, and Qualcomm to further invest in and promote their own on-device AI capabilities and platforms. We can expect a race to optimize hardware and software for seamless local AI integration.

- Hybrid AI Architectures: The future of AI interaction will likely involve a hybrid model. Local AI will handle personal, latency-sensitive, and privacy-critical tasks, while cloud AI will continue to excel in tasks requiring vast real-time data, immense computational power for complex training, or collective intelligence. The synergy between these two approaches will offer the best of both worlds.
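
A hybrid setup ultimately comes down to a routing decision per request. The following is a minimal sketch of one possible policy, assuming three hypothetical task attributes. It does not describe how Google or any existing assistant routes requests.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """Hypothetical description of a request; the fields are illustrative."""
    contains_personal_data: bool
    needs_live_web_data: bool
    device_online: bool


def route(task: Task) -> str:
    """Return "local" or "cloud" under the assumed policy."""
    if task.contains_personal_data:
        return "local"   # privacy-critical work stays on the device
    if not task.device_online:
        return "local"   # no connection, so local is the only option
    if task.needs_live_web_data:
        return "cloud"   # real-time data requires a connected service
    return "local"       # default to the private, offline-capable path


if __name__ == "__main__":
    print(route(Task(contains_personal_data=True,
                     needs_live_web_data=True, device_online=True)))
    print(route(Task(contains_personal_data=False,
                     needs_live_web_data=True, device_online=True)))
```

The order of the checks encodes the priority: privacy first, then availability, then capability. A different product could reasonably order them differently.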

While Google has not released specific user adoption figures for AI Edge Gallery, the strategic importance of its open-source Gemma models and their integration into this accessible platform is evident. The move fits Google’s broader strategy to lead in the age of AI, not just through its proprietary offerings but also by empowering the open-source community and expanding the reach of AI to the "edge."

Conclusion

The convenience of cloud-based AI chatbots has become a staple for many, but the advent of on-device AI via Google’s AI Edge Gallery and the powerful Gemma 4 models presents a compelling alternative. This shift offers a future where advanced AI is not just intelligent but also profoundly personal, private, and perpetually available, independent of internet connectivity. The ability to carry a sophisticated, free, and secure AI companion directly on one’s phone is a testament to the rapid advancements in mobile computing and AI optimization. For those with compatible hardware, embracing Gemma 4 on their mobile device is more than just trying a new app; it’s experiencing a tangible glimpse into the future of ubiquitous, responsible, and empowering artificial intelligence. The primary limitation remains the phone’s hardware, but as mobile chipsets continue to evolve, the capabilities of personal, on-device AI will only continue to expand.