AWS has announced the general availability of cross-account safeguards in Amazon Bedrock Guardrails, a significant enhancement designed to empower organizations with centralized enforcement and management of safety controls across multiple AWS accounts within their enterprise. This new capability marks a crucial step in enabling robust and consistent responsible AI practices, addressing the escalating complexity of managing generative AI deployments at scale. The introduction of this feature underscores a growing industry focus on governance and compliance as AI technologies become increasingly integrated into core business operations.

The Evolution of AI Safety on AWS

The journey towards comprehensive AI safety on AWS has seen a consistent progression, driven by the rapid adoption of generative AI models and the inherent challenges they present. Amazon Bedrock, initially made generally available in September 2023, established itself as a fully managed service that offers access to foundation models (FMs) from Amazon and leading AI companies through a single API. This platform democratized access to powerful generative AI capabilities, allowing developers to build and scale applications with FMs while maintaining data privacy and security. However, as organizations expanded their use of generative AI across various departments and projects, the need for robust content moderation and safety controls became paramount.

Recognizing this imperative, AWS introduced Amazon Bedrock Guardrails in preview at re:Invent 2023 and made it generally available in April 2024. Guardrails provide a suite of tools for implementing responsible AI policies, enabling customers to define custom safeguards tailored to their specific use cases and organizational standards. These safeguards help filter out unwanted content, detect and block prompt injection attempts, and keep AI interactions within defined ethical and operational boundaries. Initially, guardrails were managed primarily at the account or application level, requiring individual configuration and oversight. Today’s announcement of cross-account safeguards represents the logical next step in this evolution, moving from localized control to a comprehensive, organization-wide governance model. This chronology reflects a responsive development cycle, adapting to customer needs as their AI adoption matured from experimentation to enterprise-wide integration.

Addressing the Enterprise Challenge of Decentralized AI

In large enterprises, managing IT infrastructure often involves a multi-account strategy within AWS Organizations. This approach offers benefits such as improved billing, security isolation, and easier resource management. However, it also introduces complexities, particularly when new technologies like generative AI are deployed. Without centralized controls, each team or business unit might configure its AI applications and associated safety measures independently. This decentralized approach can lead to inconsistencies in content moderation, potential compliance gaps, and a significant administrative burden for security and governance teams tasked with ensuring uniform adherence to corporate policies and regulatory requirements.

The lack of a unified enforcement mechanism posed a substantial challenge. Security teams previously had to meticulously oversee and verify the configurations and compliance status of each individual account and application using Bedrock. This manual, account-by-account validation process was not only time-consuming and resource-intensive but also susceptible to human error, increasing the risk of policy violations or exposure to inappropriate or harmful AI outputs. A recent industry report on AI governance highlighted that over 60% of enterprises struggle with inconsistent AI policy enforcement across different organizational units, underscoring the critical need for solutions that offer centralized control without stifling innovation.

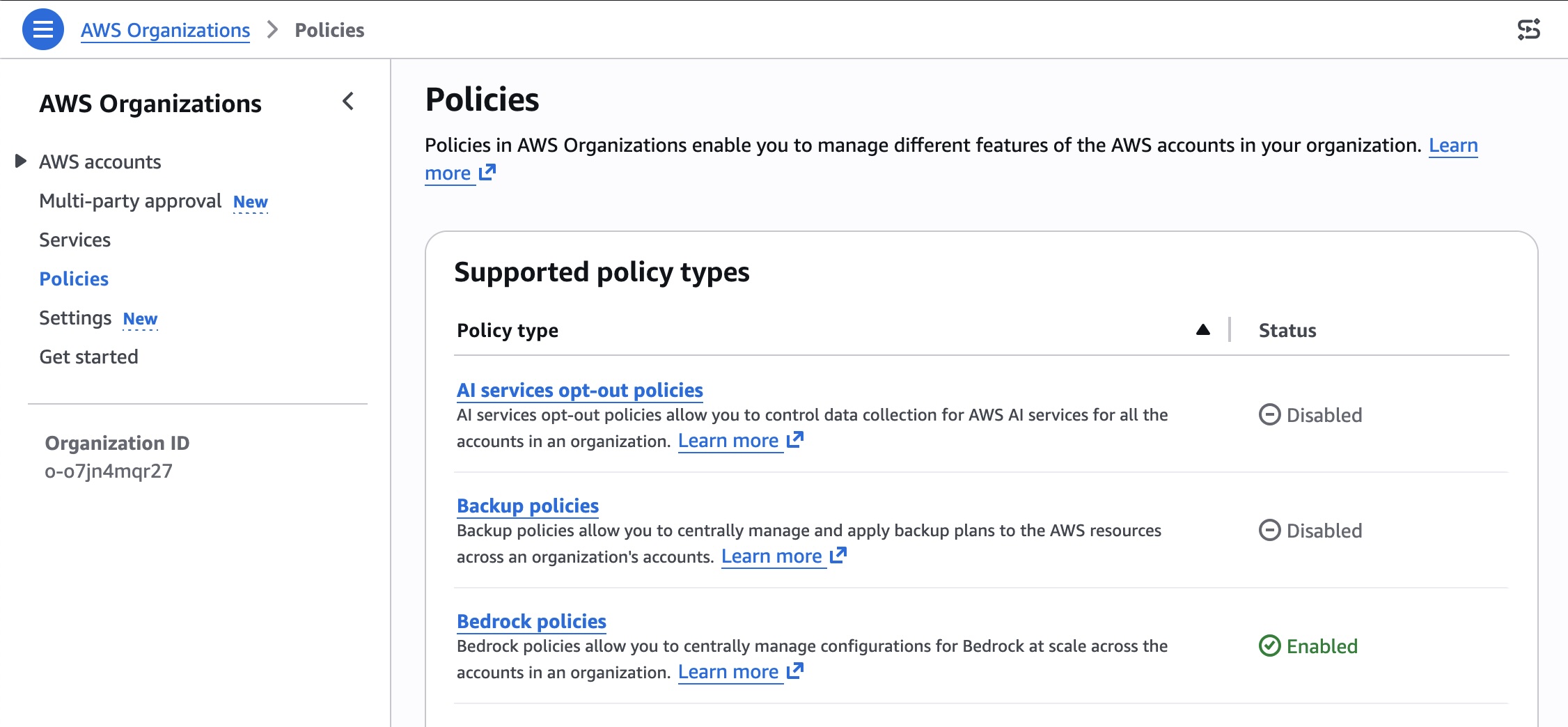

Centralized Enforcement Through Amazon Bedrock Policies

The newly introduced cross-account safeguards address these challenges by building on AWS Organizations. From the management account, an organization can now reference a guardrail in a new Amazon Bedrock policy. Once attached, this policy automatically enforces the configured safeguards across the targeted member entities, whether individual accounts, organizational units (OUs), or the entire organization, for every model invocation with Amazon Bedrock. This mechanism ensures uniform protection across the covered accounts and generative AI applications, providing a single, unified approach to AI governance.
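To make the scoping concrete, the following is a minimal Python sketch of how a policy attached at the root, an OU, or an account might reach a given member account. It is a simplified illustration and not the actual AWS Organizations inheritance logic: the `Node` class, the `policies_in_scope` function, and the policy names are all hypothetical, and the model simply collects every policy attached on the path from the root down to the account.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Node:
    """A root, OU, or account in a toy organization tree (hypothetical model)."""
    name: str
    parent: Optional["Node"] = None
    attached_policies: List[str] = field(default_factory=list)


def policies_in_scope(account: Node) -> List[str]:
    """Collect policies attached to the account and its ancestors, root first."""
    chain = []
    node = account
    while node is not None:
        chain.append(node)
        node = node.parent
    collected = []
    for entry in reversed(chain):  # walk from the root down to the account
        collected.extend(entry.attached_policies)
    return collected


# Hypothetical organization: one baseline policy at the root, one at an OU.
root = Node("Root", attached_policies=["baseline-guardrail-policy"])
finance_ou = Node("Finance", parent=root,
                  attached_policies=["finance-guardrail-policy"])
finance_app = Node("finance-app-account", parent=finance_ou)
sandbox = Node("sandbox-account", parent=root)

print(policies_in_scope(finance_app))
print(policies_in_scope(sandbox))
```

In this toy model the finance account picks up both the root policy and its OU's policy, while the sandbox account only sees the root baseline.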

This organization-wide implementation supports consistent adherence to corporate responsible AI requirements, which are becoming increasingly stringent globally. Regulatory bodies are beginning to mandate clear guidelines for AI ethics and safety, making it imperative for companies to demonstrate robust governance frameworks. By centralizing guardrail enforcement, organizations can significantly reduce the administrative burden associated with monitoring individual accounts and applications. Security teams are no longer required to independently oversee and verify configurations or compliance for each account, freeing up valuable resources and allowing them to focus on higher-level strategic security initiatives. This shift towards policy-driven, automated enforcement represents a paradigm change in how AI safety is managed in enterprise environments.

Flexible Configuration and Granular Control

While offering centralized control, the new capability also provides essential flexibility to cater to diverse use case requirements. Organizations can apply account-level and application-specific controls in addition to the overarching organizational safeguards. This layered approach allows for a baseline of enterprise-wide protection while enabling individual teams or applications to implement more specific or stringent controls where necessary, without contradicting the broader organizational policy.

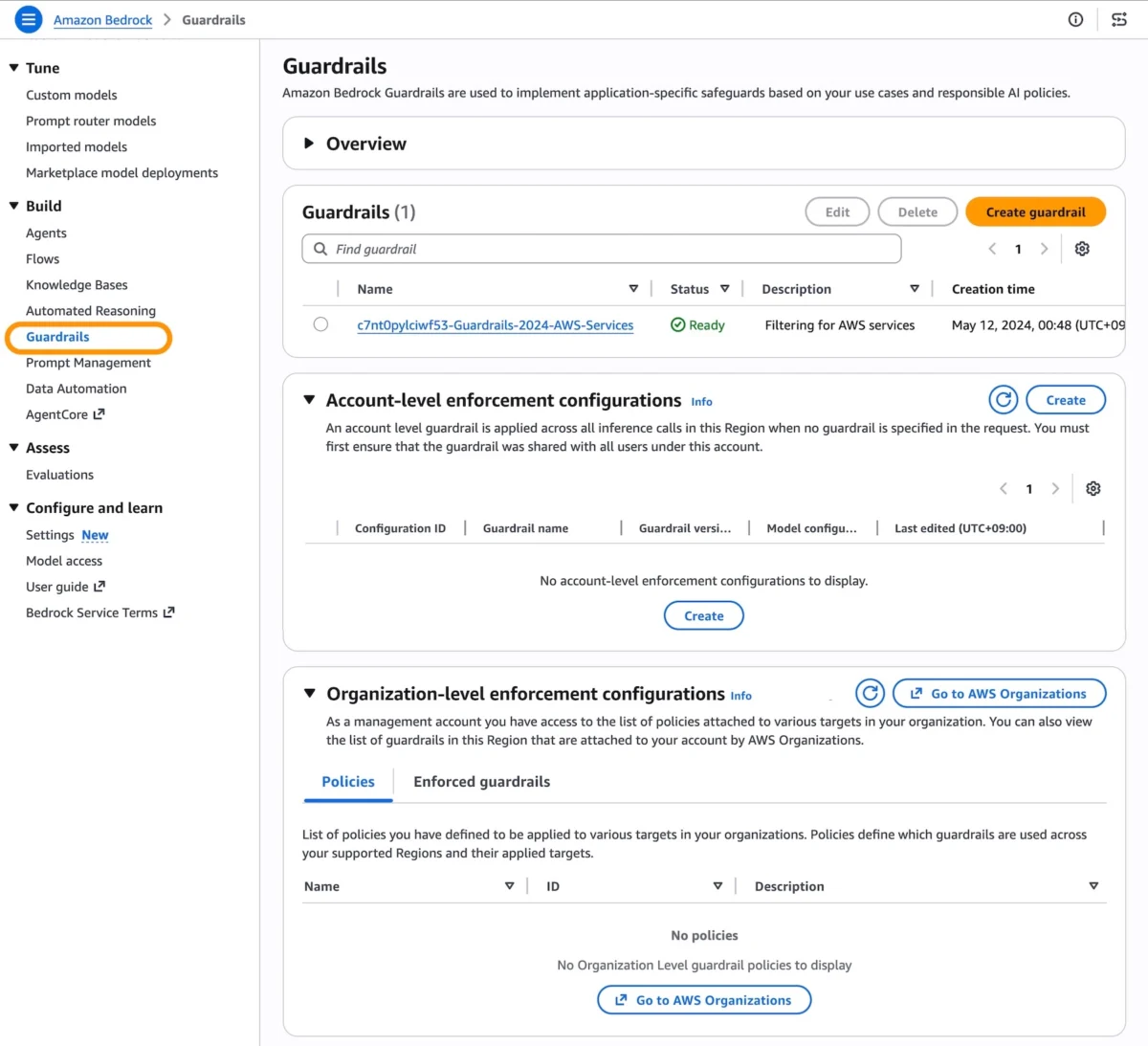

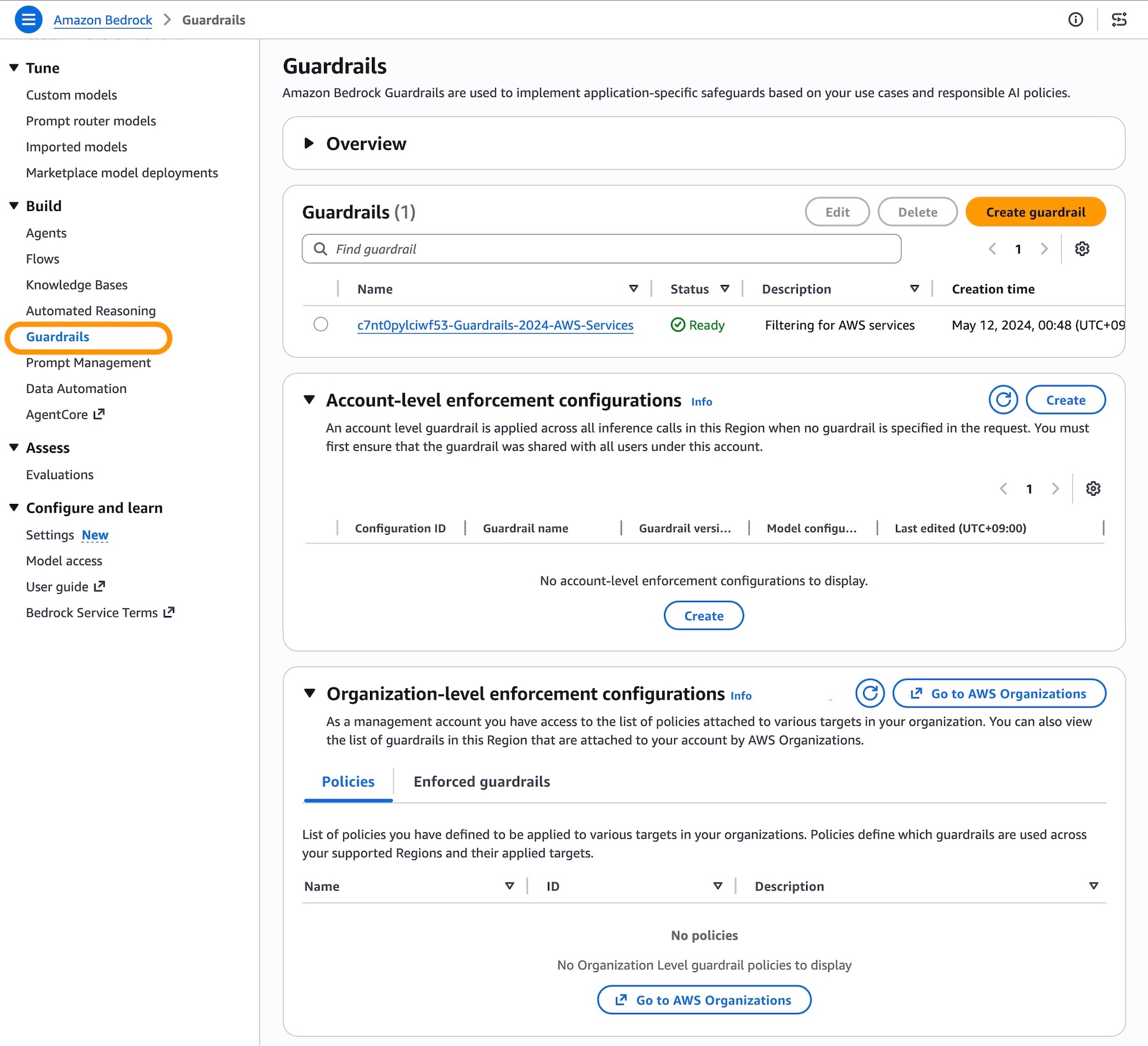

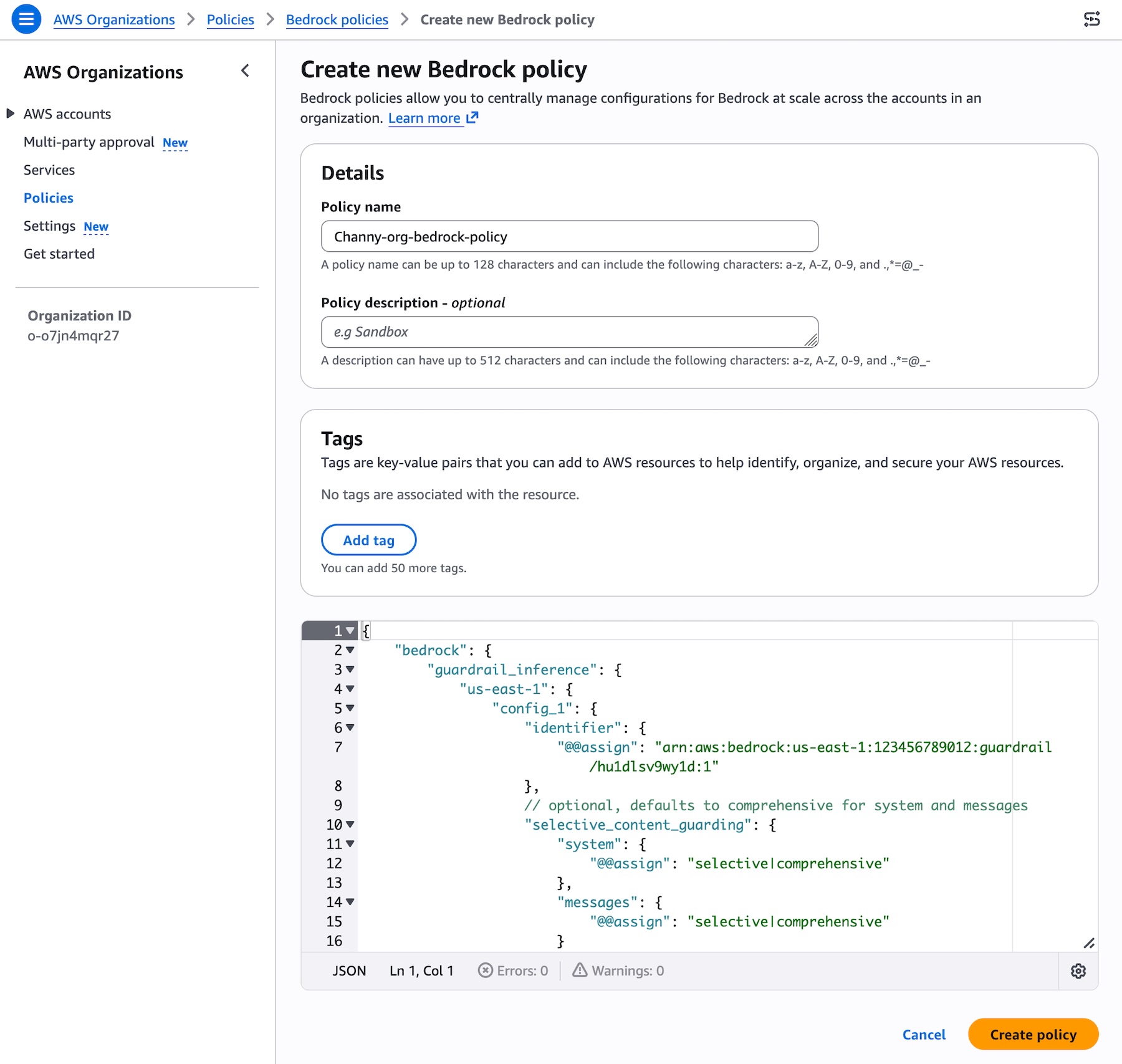

Getting started with this centralized enforcement involves configuring guardrails in the Amazon Bedrock Guardrails console. A crucial prerequisite is creating a specific version of the guardrail to enforce. Pinning enforcement to a version keeps the enforced configuration immutable and prevents member accounts from modifying it, so the organizational policy remains consistent and the integrity of the enterprise-wide safety standards is maintained. Resource-based policies for guardrails also need to be in place to facilitate proper access and enforcement.
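The versioning prerequisite can also be handled programmatically. The sketch below assumes an existing guardrail and uses the `create_guardrail_version` operation of the boto3 `bedrock` client. The helper name `publish_guardrail_version` and its arguments are illustrative, and the client is passed in so the function can be exercised without AWS credentials. It does not cover the resource-based policy step.

```python
def publish_guardrail_version(bedrock_client, guardrail_id: str, description: str) -> str:
    """Create a numbered version of an existing guardrail and return it.

    bedrock_client is expected to be a boto3 "bedrock" client, for example
    boto3.client("bedrock"); guardrail_id is the guardrail's ID or ARN.
    """
    response = bedrock_client.create_guardrail_version(
        guardrailIdentifier=guardrail_id,
        description=description,
    )
    # The response carries the newly created version number as a string.
    return response["version"]


# Example usage (requires boto3 and AWS credentials; the ID is a placeholder):
#   import boto3
#   client = boto3.client("bedrock")
#   version = publish_guardrail_version(client, "your-guardrail-id", "Org baseline")
```

The returned version string is the value that an enforcement configuration or Bedrock policy would then reference alongside the guardrail ARN.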

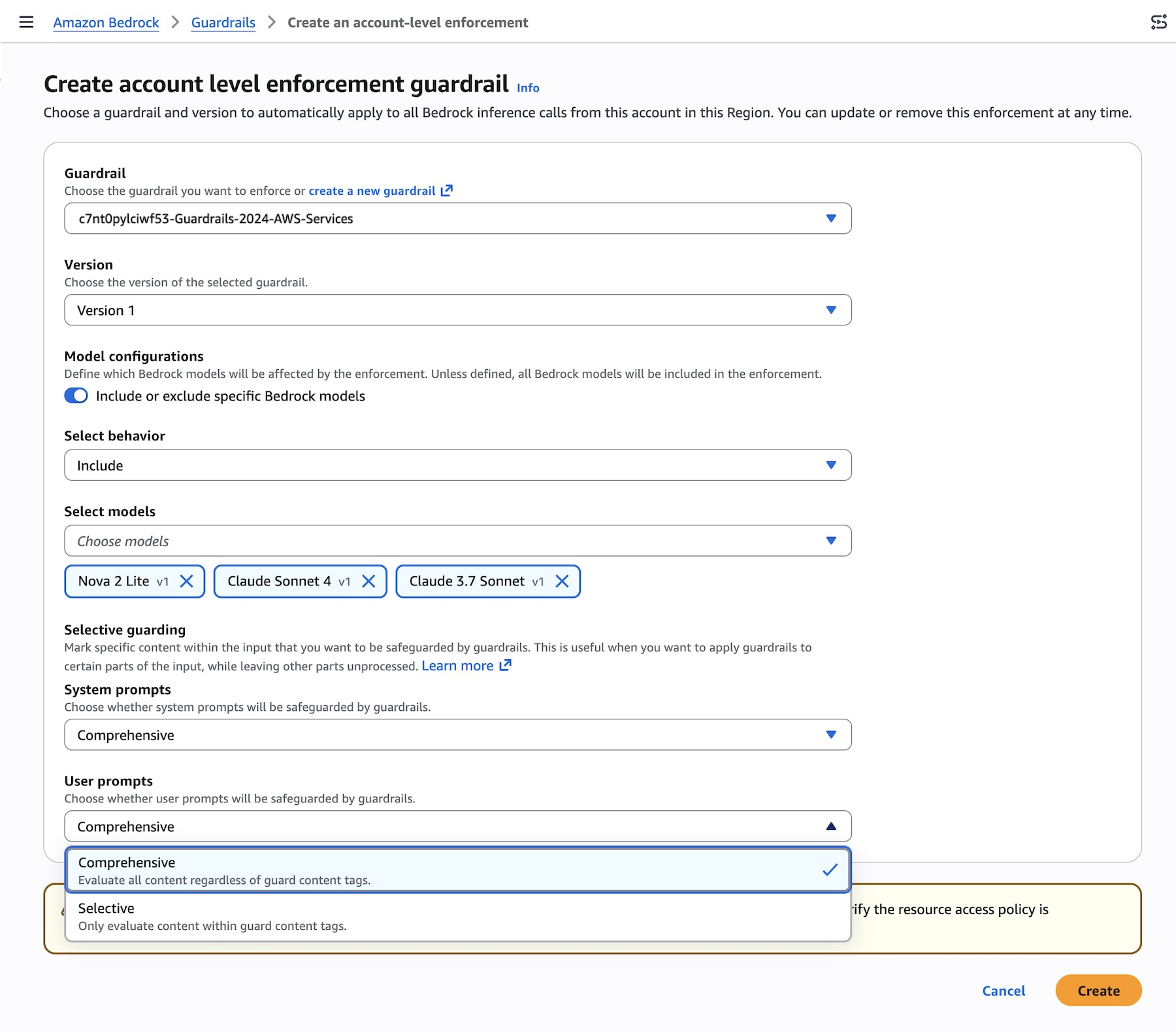

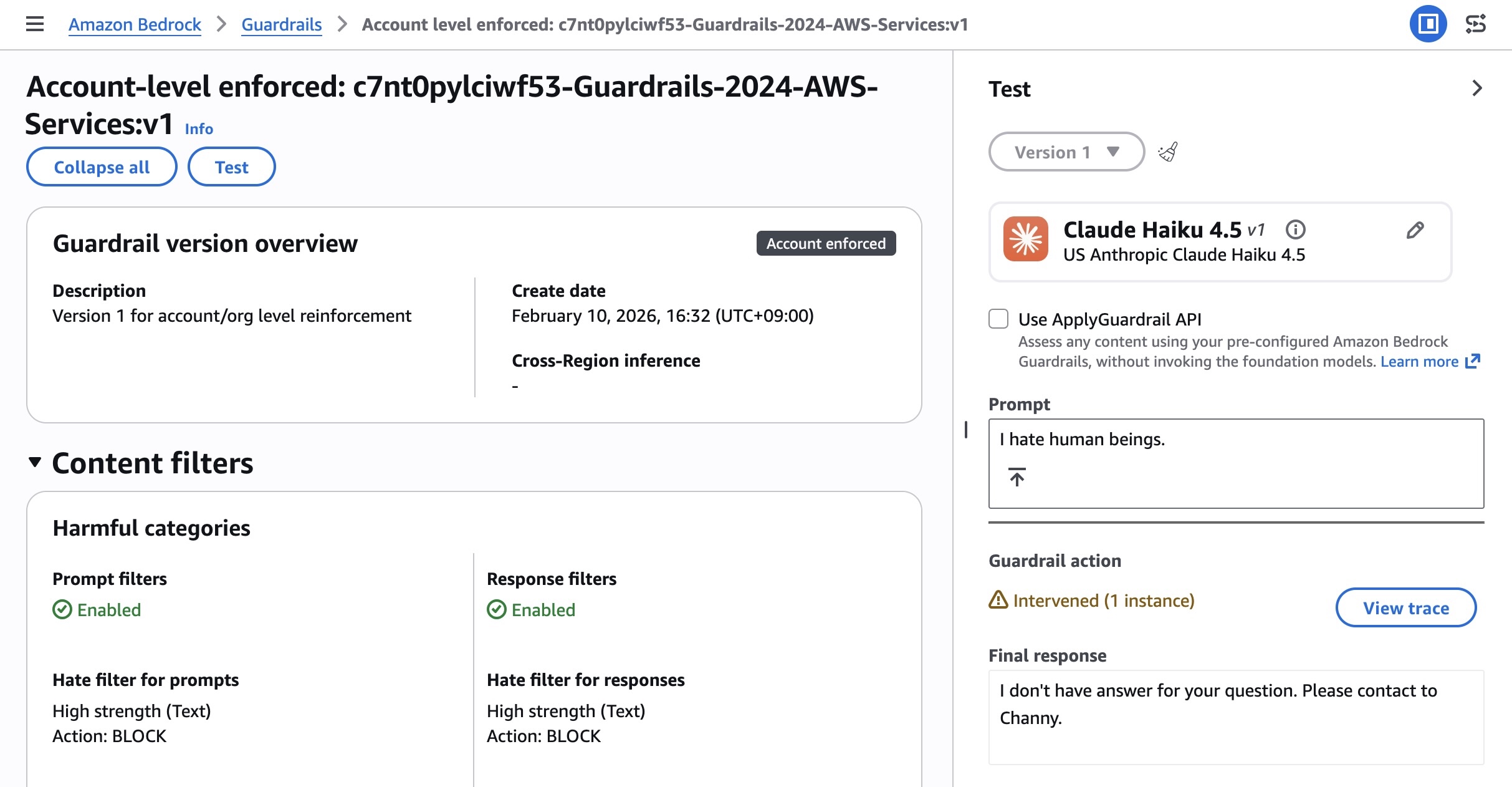

For account-level enforcement, users can create configurations that specify which guardrail and version should automatically apply to all Bedrock inference calls from that particular account in a given AWS Region. A key feature introduced with general availability is the ability to define which models are affected by the enforcement, using either an "Include" or "Exclude" behavior. This granular control means that a guardrail can be enforced only for certain models or, conversely, that certain models can be exempted from it, depending on their intended use and risk profile. Furthermore, organizations can configure content guarding controls for system prompts and user prompts with either "Comprehensive" or "Selective" options, enabling fine-tuned control over which prompt content is evaluated.
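The "Include" and "Exclude" behaviors can be read as a simple membership rule. The sketch below illustrates that reading in Python. It is not Bedrock code: the function `guardrail_applies`, its parameters, and the model IDs are hypothetical, and the actual service may treat edge cases differently.

```python
def guardrail_applies(model_id: str, behavior: str, listed_models: set) -> bool:
    """Return True if the enforced guardrail should cover this model.

    behavior is "Include" (only listed models are covered) or
    "Exclude" (every model except the listed ones is covered).
    """
    if behavior == "Include":
        return model_id in listed_models
    if behavior == "Exclude":
        return model_id not in listed_models
    raise ValueError(f"unknown behavior: {behavior!r}")


# Placeholder model IDs, not real Bedrock identifiers.
listed = {"example.chat-model-v1", "example.summarizer-v1"}

print(guardrail_applies("example.chat-model-v1", "Include", listed))  # True
print(guardrail_applies("example.embedding-v1", "Include", listed))   # False
print(guardrail_applies("example.embedding-v1", "Exclude", listed))   # True
```

An "Include" list suits a guardrail aimed at a few high-risk models, while an "Exclude" list suits a broad baseline with a small number of exemptions.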

Organization-level enforcement is managed through the AWS Organizations console, where users can enable Bedrock policies. Users create a Bedrock policy that specifies the guardrail ARN and version, then attach it to target accounts, OUs, or the root of the organization. This allows for hierarchical application of policies, enabling different OUs to have distinct guardrail policies if their business functions and risk profiles necessitate it. Once attached, the underlying safeguards within the specified guardrail are automatically enforced for every model inference request across all member entities. Enforcement can be verified by making Bedrock inference calls using APIs such as InvokeModel, InvokeModelWithResponseStream, Converse, or ConverseStream, and checking the response for guardrail assessment information, which will include details about the enforced guardrail.
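As a rough illustration of the verification step, the Python sketch below inspects a Converse-style response for guardrail assessment information. It assumes the assessment for an enforced guardrail appears in the same place as for a request-level guardrail, namely under `trace.guardrail` with entries keyed by guardrail ID, and that an intervention is signaled by a `stopReason` of `guardrail_intervened`. The helper names and the sample response are hypothetical and hand-written, not captured output.

```python
def enforced_guardrail_ids(response: dict) -> set:
    """Return the guardrail IDs that appear in the response's guardrail trace."""
    guardrail_trace = response.get("trace", {}).get("guardrail", {})
    ids = set(guardrail_trace.get("inputAssessment", {}))
    ids.update(guardrail_trace.get("outputAssessments", {}))
    return ids


def guardrail_intervened(response: dict) -> bool:
    """Return True if the response was stopped by a guardrail."""
    return response.get("stopReason") == "guardrail_intervened"


# Hand-written sample shaped like a Converse response (placeholder values).
sample_response = {
    "stopReason": "guardrail_intervened",
    "trace": {"guardrail": {"inputAssessment": {"gr-example-id": {}}}},
}

print(enforced_guardrail_ids(sample_response))  # {'gr-example-id'}
print(guardrail_intervened(sample_response))    # True
```

With a real call, `response` would come from the `converse` operation of a boto3 `bedrock-runtime` client issued from a member account, and the check would compare the returned IDs against the guardrail referenced in the organization's policy.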

Implications for Responsible AI and Compliance

The general availability of cross-account safeguards in Amazon Bedrock Guardrails carries significant implications for the broader landscape of responsible AI and corporate compliance. As generative AI moves from experimental stages to mission-critical applications, the potential for misuse, generation of harmful content, or hallucination becomes a paramount concern. Enterprises, especially those in highly regulated sectors such as finance, healthcare, and government, face intense scrutiny regarding their AI deployments. This new capability provides a robust framework for addressing these concerns proactively.

By offering a unified approach to AI safety, AWS is directly enabling its customers to meet evolving regulatory demands and internal governance mandates. This streamlines the path to demonstrating compliance with responsible AI principles, which typically include fairness, transparency, accountability, and safety. The ability to centrally manage and enforce these principles across an entire AWS organization reduces the risk of non-compliance and enhances the overall trust in AI systems. This is not merely a technical upgrade but a strategic tool for enterprise risk management, allowing organizations to confidently scale their generative AI initiatives while maintaining stringent ethical and safety standards. The reduced administrative burden further allows security and compliance teams to focus on continuous improvement and adaptation to new threats, rather than repetitive manual checks.

Availability and Economic Considerations

Cross-account safeguards in Amazon Bedrock Guardrails are now generally available in all AWS commercial and GovCloud Regions where Bedrock Guardrails is currently offered. This broad availability ensures that a wide range of AWS customers, including those with sensitive workloads requiring government cloud environments, can immediately benefit from these enhanced safety controls.

Regarding pricing, charges apply to each enforced guardrail according to its configured safeguards. AWS maintains a transparent pricing model, and detailed information on individual safeguards and their associated costs can be found on the Amazon Bedrock Pricing page. This cost structure encourages organizations to optimize their guardrail configurations to align with their specific needs and budget while ensuring comprehensive protection.

This latest development from AWS is a clear indication of the industry’s progression towards more mature and governed AI deployments. It reflects a deep understanding of the enterprise challenges associated with generative AI at scale and provides a tangible solution for achieving consistent AI safety and compliance. As AI continues to proliferate, such centralized governance capabilities will become indispensable for organizations aiming to harness the power of artificial intelligence responsibly and securely. Customers are encouraged to explore this new capability in the Amazon Bedrock console and provide feedback through AWS re:Post for Amazon Bedrock Guardrails or their usual AWS Support contacts, contributing to the ongoing refinement of these critical AI governance tools.