On Wednesday, security researcher Chaofan Shou discovered that Anthropic had inadvertently shipped version 2.1.88 of its Claude Code npm package with a 59.8MB source map file attached. This source map, intended to aid developers in debugging production code by linking it back to its original source files, instead exposed an un-obfuscated TypeScript codebase totaling over 512,000 lines across 1,900 files. The incident, though swiftly addressed by Anthropic, provided an unprecedented, albeit accidental, glimpse into the internal workings of a highly sophisticated AI agent system.

Anthropic acted quickly, pulling the affected package from the npm registry within hours of the discovery. The company confirmed the leak was the result of a packaging error, attributing it to human oversight. By then, however, mirrors of the code had already proliferated. The source code was forked extensively on GitHub, with one repository alone seeing over 41,500 forks before the original uploader replaced it with a Python port. While initial media coverage and developer reactions focused on the more whimsical aspects of the leak, such as an internal Tamagotchi-like pet system and animal-themed codenames, a deeper analysis of the exposed code reveals a far more significant story about the architectural convergence of advanced AI agent development.

Unveiling an Agent Operating System

Independent analyses of the leaked codebase by prominent tech publications such as VentureBeat and The Register, alongside contributions from numerous developers, have moved beyond the surface-level curiosities. These investigations highlight a production agent system that exhibits remarkable architectural parallels with cutting-edge patterns emerging within the open-source ecosystem. This convergence suggests that the development of robust, production-ready AI agents is coalescing around a set of core principles, irrespective of whether the systems are developed in-house or within open-source communities.

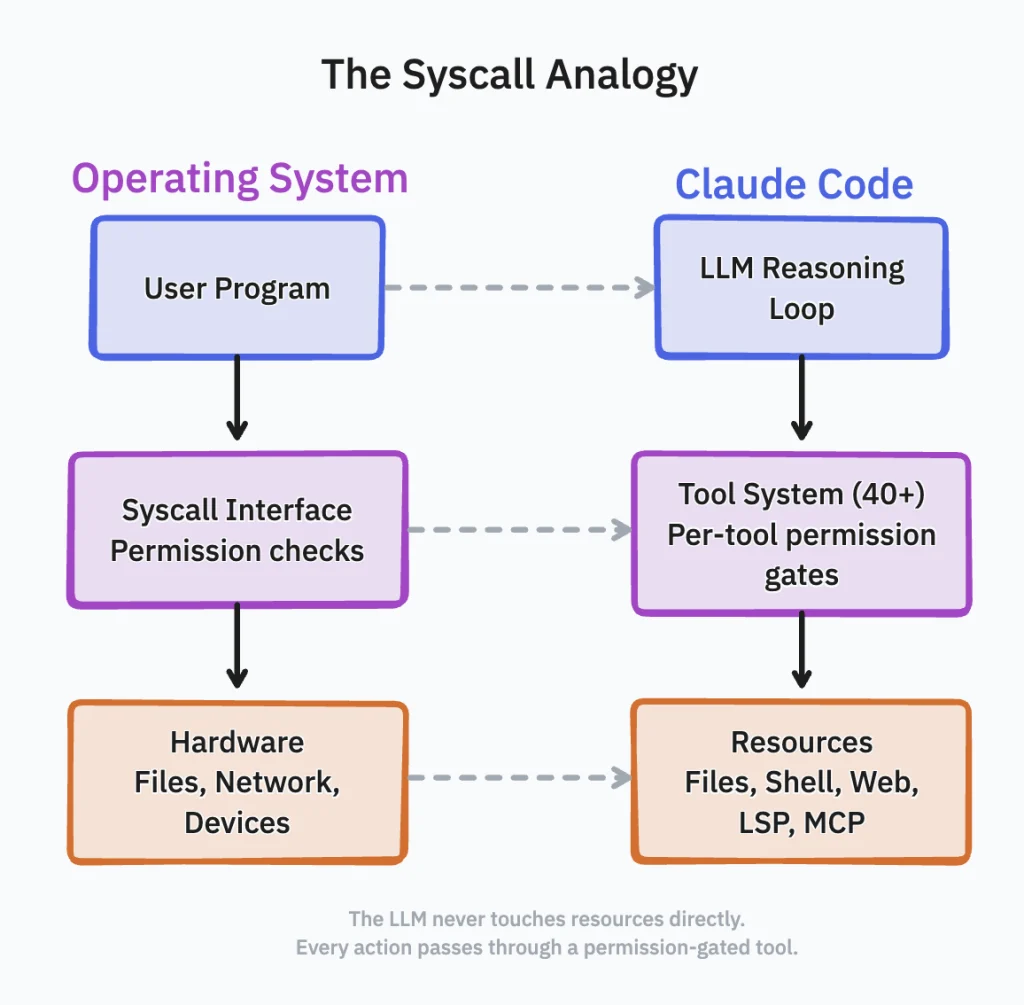

For professionals familiar with the intricacies of operating system internals, the patterns revealed in Claude Code’s architecture will feel strikingly familiar. The codebase details the implementation of syscall-like interfaces with rigorous permission gates, a process forking mechanism employing capability-based isolation, background daemons dedicated to memory management, and compile-time feature flags used for strategic software deployment. This structure indicates that Claude Code is not merely a chat wrapper or a simple interface for large language models, but rather a fully-fledged agent operating system. Its architecture directly maps onto design patterns being actively developed and evaluated for production deployments in popular open-source projects like CrewAI, Google ADK (Agent Development Kit), LangGraph, and AWS Strands.

A Permission-Gated Tooling Layer: The Syscall Equivalent for AI

A core component of the leaked architecture is Claude Code’s intricate tool system, comprising over 40 distinct capabilities. Each capability is implemented as a separate module, governed by its own permission gate. Functionalities such as file reading, bash execution, web fetching, Language Server Protocol (LSP) integration, and Model Context Protocol (MCP) connections are all encapsulated as individual tools. These tools are invoked by the agent through a unified interface, effectively functioning as the AI’s system call layer. The base tool definition alone spans 29,000 lines of TypeScript.

This design meticulously separates the AI’s reasoning loop (the user-space program) from direct interaction with underlying system resources (the file system, terminal, or network). Every interaction is channeled through a permission-checked gateway. This architecture mirrors the security model of modern operating systems, where user applications do not have direct hardware access.

The implications of this design are significant for security and control. It addresses a problem analogous to Kubernetes’ Role-Based Access Control (RBAC) for pod-level API access. An agent configured to read files but denied permission to execute bash commands operates under a fundamentally different security profile than one granted full shell access. Claude Code enforces this granular control at the tool level, gating each capability independently. This allows for the dynamic assignment of different permission sets based on the context in which the agent is operating.

For instance, if Claude Code is deployed within an unfamiliar code repository, its tool system can be configured to restrict shell execution while permitting file reads and searches. This prevents the agent from inadvertently executing arbitrary code in an untrusted environment, without disabling its ability to analyze and understand the codebase. This fine-grained capability security, enforced through an architectural pattern that has been a cornerstone of POSIX systems for decades, represents a mature approach to agent security.
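The gating idea described above can be illustrated with a minimal sketch. Every name in it (`ToolGateway`, `Permission`, `read_file`, and so on) is hypothetical and chosen for illustration; it is not taken from the leaked code. It only shows the general shape of a single, permission-checked entry point for tool calls.

```typescript
// Hypothetical sketch of a permission-gated tool layer. Not Anthropic's code.
type Permission = "fs.read" | "fs.search" | "shell.exec" | "net.fetch";

interface Tool<I, O> {
  name: string;
  requires: Permission; // the gate this tool sits behind
  run(input: I): O;
}

class PermissionDenied extends Error {
  constructor(tool: string, permission: Permission) {
    super(`tool "${tool}" requires "${permission}"`);
    this.name = "PermissionDenied";
  }
}

class ToolGateway {
  private tools = new Map<string, Tool<any, any>>();
  private readonly granted: ReadonlySet<Permission>;

  constructor(granted: ReadonlySet<Permission>) {
    this.granted = granted;
  }

  register(tool: Tool<any, any>): void {
    this.tools.set(tool.name, tool);
  }

  // Single entry point: the reasoning loop never touches resources directly.
  invoke(name: string, input: unknown): unknown {
    const tool = this.tools.get(name);
    if (!tool) throw new Error(`unknown tool "${name}"`);
    if (!this.granted.has(tool.requires)) {
      throw new PermissionDenied(name, tool.requires);
    }
    return tool.run(input);
  }
}

// An "untrusted repository" profile: reads and searches allowed, shell denied.
const untrusted = new ToolGateway(new Set<Permission>(["fs.read", "fs.search"]));
untrusted.register({
  name: "read_file",
  requires: "fs.read",
  run: (path: string) => `contents of ${path}`, // stand-in for a real file read
});
untrusted.register({
  name: "bash",
  requires: "shell.exec",
  run: (cmd: string) => `ran ${cmd}`, // stand-in for a real shell call
});
```

In this sketch, `untrusted.invoke("read_file", "README.md")` succeeds, while `untrusted.invoke("bash", "ls")` throws `PermissionDenied`, because the gateway was constructed without `shell.exec`.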

Underpinning this tool system is a robust Query Engine, spanning 46,000 lines of code. This engine manages all Large Language Model (LLM) API calls, handles response streaming, implements caching mechanisms, and orchestrates the overall workflow. As the largest single module in the codebase, it functions as the AI’s kernel scheduler. It determines which tool calls can be batched, which responses are suitable for caching, and how to manage the context window effectively across extended, long-running sessions.
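How the Query Engine actually decides what to batch is not described in the reporting. As a rough illustration of one plausible policy, the sketch below groups consecutive read-only tool calls into a batch that could run concurrently and runs any mutating call on its own. The `ToolCall` shape and the `readOnly` flag are assumptions made for this example.

```typescript
// Hypothetical batching policy; the real engine's rules are not public.
interface ToolCall {
  tool: string;
  readOnly: boolean; // assumed flag: true if the call cannot change state
}

// Consecutive read-only calls share a batch; anything that may mutate
// state gets a batch of its own so ordering is preserved around it.
function planBatches(calls: ToolCall[]): ToolCall[][] {
  const batches: ToolCall[][] = [];
  for (const call of calls) {
    const last = batches[batches.length - 1];
    if (call.readOnly && last && last.every((c) => c.readOnly)) {
      last.push(call);
    } else {
      batches.push([call]);
    }
  }
  return batches;
}

const plan = planBatches([
  { tool: "read_file", readOnly: true },
  { tool: "grep", readOnly: true },
  { tool: "edit_file", readOnly: false },
  { tool: "read_file", readOnly: true },
]);
```

Here `plan` contains three batches: the two initial reads together, then the edit alone, then the final read.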

Multi-Agent Swarms and Process Orchestration: A New Paradigm

The leaked source code further illuminates Claude Code’s capability to spawn sub-agents, each equipped with restricted toolsets and operating within isolated contexts. Anthropic refers to this system as "swarms," a feature gated behind the tengu_amber_flint feature flag. This architecture supports two primary modes of operation for these sub-agents: in-process teammates that leverage AsyncLocalStorage for context isolation, and process-based teammates that run in separate tmux or iTerm2 panes, distinguished by color-coded output for enhanced visual clarity. Team memory synchronization ensures these agents remain coordinated and aware of shared objectives.
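For readers unfamiliar with it, `AsyncLocalStorage` is a Node.js API (from `node:async_hooks`) that carries a per-call-chain store across `await` boundaries. The sketch below shows how two in-process "teammates" could keep separate contexts while interleaving on the same event loop. The `AgentContext` shape and the `runTeammate` and `note` helpers are invented for illustration and are not drawn from the leaked code.

```typescript
import { AsyncLocalStorage } from "node:async_hooks";

// Hypothetical per-agent context; the fields are illustrative only.
interface AgentContext {
  agentId: string;
  notes: string[];
}

const agentContext = new AsyncLocalStorage<AgentContext>();

// Any code running inside a teammate can look up "its" context
// without having it passed down as an argument.
function note(message: string): void {
  const ctx = agentContext.getStore();
  if (!ctx) throw new Error("note() called outside an agent context");
  ctx.notes.push(`${ctx.agentId}: ${message}`);
}

async function runTeammate(agentId: string, task: string): Promise<string[]> {
  const ctx: AgentContext = { agentId, notes: [] };
  return agentContext.run(ctx, async () => {
    note(`started ${task}`);
    // Yield to the event loop so concurrent teammates interleave.
    await new Promise((resolve) => setTimeout(resolve, 5));
    note(`finished ${task}`);
    return ctx.notes;
  });
}
```

Running `Promise.all([runTeammate("alpha", "lint"), runTeammate("beta", "tests")])` yields two note lists that never mix, even though both teammates share one process.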

This multi-agent functionality can be conceptualized as process forking augmented with capability-based security. A parent agent identifies a task that can be parallelized, spawns child agents with a subset of its own permissions, and then collects their results. Crucially, these child agents cannot escalate their own access privileges; they operate strictly within the boundaries defined by the parent. This model draws a direct parallel to container orchestration. In Kubernetes, a controller spawns pods with specific resource limits and RBAC bindings. Similarly, within Claude Code, a coordinator agent spawns sub-agents with precisely defined tool permissions and context boundaries.
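The "no privilege escalation" property can be sketched as capability attenuation: a child receives the intersection of what it requests and what its parent holds. The `Agent` type, the `spawnChild` function, and the capability names below are hypothetical and serve only to illustrate the idea.

```typescript
// Hypothetical capability-attenuation sketch. Not Anthropic's code.
type Capability = string;

interface Agent {
  name: string;
  capabilities: ReadonlySet<Capability>;
}

// A child gets only those requested capabilities that the parent
// already holds, so access can narrow down the tree but never widen.
function spawnChild(parent: Agent, name: string, requested: Capability[]): Agent {
  const granted = new Set<Capability>();
  for (const cap of requested) {
    if (parent.capabilities.has(cap)) granted.add(cap);
  }
  return { name, capabilities: granted };
}

const coordinator: Agent = {
  name: "coordinator",
  capabilities: new Set(["fs.read", "fs.search", "shell.exec"]),
};

// The child asks for network access the coordinator never had.
const researcher = spawnChild(coordinator, "researcher", ["fs.read", "net.fetch"]);

// A grandchild cannot regain shell access that its parent lacks.
const helper = spawnChild(researcher, "helper", ["fs.read", "shell.exec"]);
```

In this example `researcher` ends up with only `fs.read`, and `helper` likewise receives only `fs.read`, even though the coordinator at the top of the tree holds `shell.exec`.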

This approach to agent coordination is not unique to Anthropic. Open-source frameworks like CrewAI achieve a similar outcome through their "crew" abstraction, where agents with defined roles collaborate on shared tasks. Google ADK utilizes hierarchical agent trees, enabling root agents to delegate responsibilities to sub-agents. LangGraph models these workflows as nodes within a directed graph, with explicit state transitions governing the flow of information. All these systems, despite their differing metaphors and implementation details, address the same fundamental challenge: complex tasks often require multiple reasoning threads that can execute in parallel, share context selectively, and merge their outputs effectively. Anthropic’s implementation of this paradigm is embedded within a closed-source CLI, while the open-source ecosystem has independently developed equivalent solutions. The convergence on these architectural patterns underscores their significance for building scalable and effective agent systems.

KAIROS and the Dream System: Persistent AI Services

Perhaps the most revealing aspect of the leak is the presence of KAIROS, an autonomous daemon mode referenced over 150 times in the source code. KAIROS transforms Claude Code from a conventional request-response tool into a persistent background process. It maintains append-only daily log files and receives periodic <tick> prompts, enabling it to autonomously decide whether to take proactive action or remain quiescent. A crucial constraint is the enforcement of a 15-second blocking budget, ensuring that any proactive actions undertaken by KAIROS do not disrupt a developer’s workflow for longer than a brief pause.
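A tick handler with a blocking budget might look something like the following sketch. The 15-second figure comes from the description above; everything else (`onTick`, `withBudget`, the `TickOutcome` shape, the in-memory log) is an assumption made for illustration. Note that this version only stops waiting when the budget expires; it does not cancel the underlying work.

```typescript
// Hypothetical tick handler with a blocking budget. Not Anthropic's code.
const BLOCKING_BUDGET_MS = 15000; // the 15-second budget described above
const TIMED_OUT = Symbol("timed_out");

type TickOutcome =
  | { kind: "acted"; summary: string }
  | { kind: "idle" }
  | { kind: "timed_out" };

// Resolves with the work's result, or with TIMED_OUT once the budget elapses.
function withBudget<T>(work: Promise<T>, budgetMs: number): Promise<T | typeof TIMED_OUT> {
  return new Promise<T | typeof TIMED_OUT>((resolve, reject) => {
    const timer = setTimeout(() => resolve(TIMED_OUT), budgetMs);
    work.then(
      (value) => { clearTimeout(timer); resolve(value); },
      (error) => { clearTimeout(timer); reject(error); },
    );
  });
}

// `decide` stands in for the agent choosing to act (returning a summary)
// or to stay quiet (returning null).
async function onTick(
  decide: () => Promise<string | null>,
  log: string[],
  budgetMs: number = BLOCKING_BUDGET_MS,
): Promise<TickOutcome> {
  const result = await withBudget(decide(), budgetMs);
  let outcome: TickOutcome;
  if (result === TIMED_OUT) outcome = { kind: "timed_out" };
  else if (result === null) outcome = { kind: "idle" };
  else outcome = { kind: "acted", summary: result };
  log.push(JSON.stringify(outcome)); // append-only: earlier entries are never rewritten
  return outcome;
}
```

A caller would invoke `onTick` on each periodic prompt and append the outcome to that day's log; a production version would also need a way to cancel work that overruns the budget, for example via `AbortController`.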

KAIROS can be understood as a systemd service for an AI agent. It initiates, persists, monitors, and acts according to its own schedule. When active, it employs a specialized output mode termed "Brief," designed to deliver concise responses suitable for a persistent assistant that should not overwhelm the developer’s terminal with excessive information.

Complementing KAIROS is the "autoDream" feature, which operates as a forked sub-agent within the services/autoDream/ directory. During idle periods, autoDream performs memory consolidation. This process involves merging observations from across various sessions, resolving logical contradictions, and transforming tentative notes into confirmed facts. In essence, it acts as garbage collection for the agent’s accumulated knowledge. Just as operating systems run background processes to compact memory, rotate logs, and clean caches, autoDream performs similar functions for the knowledge base an AI agent accumulates over days and weeks of continuous operation.
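The consolidation steps described above (merge, resolve contradictions, promote tentative notes) can be sketched in a few lines. The rules used here are assumptions chosen for illustration: the most recent observation wins a contradiction, and a value is promoted to "confirmed" once at least two distinct sessions agree on it. The `Observation` and `Fact` types are likewise hypothetical.

```typescript
// Hypothetical memory-consolidation pass. Not Anthropic's code.
interface Observation {
  key: string;        // what the note is about, e.g. "test-runner"
  value: string;      // what was observed
  session: string;    // which session produced it
  observedAt: number; // timestamp used for ordering
}

interface Fact {
  key: string;
  value: string;
  status: "tentative" | "confirmed";
}

function consolidate(observations: Observation[], confirmAfter = 2): Fact[] {
  // Merge: group observations from all sessions by subject.
  const byKey = new Map<string, Observation[]>();
  for (const obs of observations) {
    const group = byKey.get(obs.key) ?? [];
    group.push(obs);
    byKey.set(obs.key, group);
  }

  const facts: Fact[] = [];
  byKey.forEach((group, key) => {
    // Resolve contradictions by preferring the most recent observation.
    const latest = group.reduce((a, b) => (b.observedAt > a.observedAt ? b : a));
    // Promote only if enough distinct sessions agree with the surviving value.
    const agreeing = new Set(
      group.filter((o) => o.value === latest.value).map((o) => o.session),
    );
    facts.push({
      key,
      value: latest.value,
      status: agreeing.size >= confirmAfter ? "confirmed" : "tentative",
    });
  });
  return facts;
}

const facts = consolidate([
  { key: "test-runner", value: "jest", session: "s1", observedAt: 1 },
  { key: "test-runner", value: "vitest", session: "s2", observedAt: 2 },
  { key: "test-runner", value: "vitest", session: "s3", observedAt: 3 },
  { key: "pkg-manager", value: "pnpm", session: "s1", observedAt: 1 },
]);
```

In this example the stale "jest" note is superseded, "vitest" is confirmed because two sessions agree, and "pnpm" stays tentative because only one session has seen it.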

Critically, none of the major open-source agent frameworks discussed – CrewAI, LangGraph, Google ADK, or AWS Strands – have yet shipped comparable background autonomy features. These frameworks typically operate in request-response modes or rely on workflow triggers. The closest equivalent observed is the Hermes Agent from Nous Research, which has recently introduced persistent multi-agent profiles with session memory. However, it lacks the proactive observation and consolidation loop inherent in KAIROS. The gap between Anthropic’s internally developed capabilities and the current offerings in the open-source ecosystem is most pronounced in this area of persistent, autonomous background operation.

Compile-Time Feature Gating: A Mature Shipping Strategy

The leaked source code also disclosed the presence of 44 compile-time feature flags. A significant portion of these flags, at least 20, gate capabilities that are fully developed and tested but have not been included in external releases. Features such as KAIROS, coordinator mode, a full voice mode with push-to-talk functionality, ULTRAPLAN (a remote planning mode that offloads complex tasks to a cloud container running Opus 4.6 for up to 30 minutes), and Buddy (the aforementioned Tamagotchi-style companion pet with 18 species and rarity tiers) are all behind flags that compile to "false" in the externally distributed build.

This practice of compile-time feature gating is a hallmark of mature platform engineering teams. Major software projects, including Google Chrome, Android, and large-scale infrastructure initiatives at Google and Meta, widely employ this strategy to decouple feature development from feature release. Engineers can continuously merge completed code into the main branch, while product managers selectively enable features via flags for specific build targets, user segments, or deployment rings. The user-facing release cadence, such as the bi-weekly feature updates for Claude Code, does not necessarily reflect the much faster internal development cadence.
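As a generic illustration of the technique (not a reconstruction of Anthropic's build), compile-time flags are typically constants that the build tool replaces with literal values, so that a minifier can then delete disabled branches from the shipped bundle. The flag names and functions below are hypothetical.

```typescript
// Hypothetical flag table. In a real build these values would usually be
// injected by the bundler's constant-replacement step, not written by hand.
const FEATURES = {
  VOICE_MODE: false,
  BACKGROUND_DAEMON: false,
  CORE_TOOLS: true,
} as const;

type FeatureName = keyof typeof FEATURES;

function enabledFeatures(): FeatureName[] {
  return (Object.keys(FEATURES) as FeatureName[]).filter((name) => FEATURES[name]);
}

function registerCommands(): string[] {
  const commands = ["read", "search"];
  if (FEATURES.VOICE_MODE) {
    // When the flag is a literal `false`, dead-code elimination can drop
    // this branch, so the feature's code never reaches external users.
    commands.push("voice");
  }
  if (FEATURES.BACKGROUND_DAEMON) {
    commands.push("daemon");
  }
  return commands;
}
```

With the flags above, `registerCommands()` returns only `["read", "search"]`. The merged code for the gated features still lives on the main branch; it is simply absent from this build target.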

Furthermore, the codebase distinguishes between "Ant-only" tools, accessible exclusively to Anthropic employees, and external tools available to all users. Internal tools include telemetry dashboards, model-switching overrides, and an "Undercover Mode" system. This system is specifically designed to prevent Claude from inadvertently revealing Anthropic’s internal codenames when contributing to public open-source repositories.

The irony is hard to miss: Anthropic developed an entire subsystem to prevent its AI from leaking internal details in Git commits, only for that very subsystem to be exposed by a packaging oversight that shipped a source map.

Internal Codename Revelations and Model Benchmarks

The leaked source also inadvertently exposed internal model codenames that the "Undercover Mode" was intended to conceal. Analyses of the leaked migrations directory indicate that "Capybara" refers to a Claude 4.6 variant, a model previously reported as "Mythos." "Fennec" maps to Opus 4.6, and an unreleased model designated "Numbat" is still undergoing testing. Internal benchmarks reveal that the latest iteration of Capybara exhibits a false-claim rate of 29-30%, representing a regression from an earlier version’s 16.7%. This iteration also includes an "assertiveness counterweight" designed to mitigate excessively aggressive code rewriting by the model.

The Road Ahead: Architectural Benchmarks and Security Implications

The accidental publication of Claude Code’s source code provides a rare and valuable architectural benchmark for the field of AI agents. Permission-gated tool systems, multi-agent swarms, background daemons for memory management, and compile-time feature gating are not isolated innovations unique to Anthropic. The fact that open-source projects like CrewAI, Google ADK, and LangGraph have independently arrived at similar solutions for capability isolation and agent coordination strongly suggests that these patterns are becoming the de facto standard architecture for production-ready agent systems.

Where Claude Code appears to maintain a significant lead is in the areas of background autonomy and cross-session memory consolidation. KAIROS and autoDream represent advanced capabilities that current agent frameworks have not yet matched. However, the availability of a concrete reference implementation for thousands of developers to study will undoubtedly accelerate the closure of this gap.

The architectural details exposed by the leak have profound security implications. The source code precisely outlines Claude Code’s permission-enforcement logic, its hook-orchestration pathways, and the trust boundaries it utilizes to govern code execution in unfamiliar repositories. This detailed blueprint presents potential attackers with a roadmap for crafting repositories specifically designed to exploit these mechanisms.

The timing of the leak further exacerbated security concerns. Within hours of the Claude Code incident, a separate supply-chain attack targeted the widely used Axios npm package, injecting a remote-access trojan into versions 1.14.1 and 0.30.4. Reports indicated that any user who installed or updated Claude Code via npm on March 31, between 00:21 and 03:29 UTC, may have inadvertently downloaded the compromised dependency.

In response, Anthropic has designated its native installer as the recommended installation method, effectively bypassing the npm dependency chain. The specific mechanism by which the 59.8MB source map file was included in the npm package is still under investigation, though Bun’s bundler, which generates source maps by default unless explicitly disabled, is a likely candidate.
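Whatever the root cause turns out to be, a pre-publish guard is one inexpensive way to catch this class of mistake. The sketch below checks a list of files that would be published (such a list can be obtained from `npm pack --dry-run --json`) and flags source maps and unexpectedly large files. The `PackedFile` shape, the `findLeakyFiles` name, and the 5 MB threshold are assumptions for illustration.

```typescript
// Hypothetical pre-publish guard; names and threshold are illustrative.
interface PackedFile {
  path: string;
  size: number; // bytes
}

function findLeakyFiles(files: PackedFile[], maxBytes = 5 * 1024 * 1024): string[] {
  const problems: string[] = [];
  for (const file of files) {
    if (/\.map$/.test(file.path)) {
      problems.push(`${file.path}: source map in publish set`);
    } else if (file.size > maxBytes) {
      problems.push(`${file.path}: unexpectedly large (${file.size} bytes)`);
    }
  }
  return problems;
}

const problems = findLeakyFiles([
  { path: "cli.js", size: 1000000 },
  { path: "cli.js.map", size: 59800000 },
  { path: "README.md", size: 4000 },
]);
```

A CI step could fail the release whenever `problems` is non-empty, which in this example it would be because of `cli.js.map`.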

The overarching lesson for all teams developing and shipping AI agents via package managers is stark and unambiguous: build pipelines are now an integral part of the attack surface. A single misconfigured ignore file or an oversight in packaging can transform a sophisticated product into an open blueprint for potential exploitation. The incident serves as a critical reminder of the heightened security diligence required in the rapidly evolving landscape of AI development and deployment.