In a move likely to reshape the artificial intelligence landscape, Amazon and OpenAI have announced a multi-year strategic partnership valued at up to $50 billion. The partnership aims to accelerate the development and deployment of advanced AI for enterprises, startups, and consumers worldwide. Under the agreement, announced in early 2026, Amazon will make an initial $15 billion investment in OpenAI. A further $35 billion is contingent on specific strategic conditions being met in the coming months. The deal goes beyond financial backing. It integrates OpenAI’s models with Amazon Web Services (AWS) infrastructure and services, positioning AWS as a key platform for the next generation of AI-driven applications.

The announcement comes amid rapid innovation and adoption across the AI sector. That trend is visible in the AI-Driven Development Lifecycle (AI-DLC) workshops that AWS experts have run for organizations worldwide throughout 2026. The workshops give organizations a structured framework for identifying, prioritizing, and implementing AI use cases that deliver measurable business value. The AI-DLC methodology is meant to move projects from experimentation to production by aligning technical capabilities with business outcomes. This groundwork in enterprise AI readiness is useful context for the scale of the Amazon-OpenAI partnership, and it reflects growing demand across industries for robust, scalable, integrated AI capabilities.

A Deep Dive into the Strategic Partnership’s Pillars

The alliance rests on several components. Each draws on the distinct strengths of Amazon and OpenAI, with the broader aim of widening access to advanced AI and extending what it can do.

A cornerstone initiative is a jointly developed Stateful Runtime Environment, powered by OpenAI models and offered through Amazon Bedrock. Bedrock is AWS’s fully managed service that provides access to high-performing foundation models from leading AI companies through a single API. A stateful runtime lets AI applications maintain context across interactions, remember prior work, operate across software tools and data sources, and access compute resources as needed. This addresses a long-standing limitation in AI development. Many applications treat each request in isolation, so developers must rebuild context on every call. Persisting that state should make sophisticated multi-turn interactions and autonomous agents considerably easier to build.
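The stateless/stateful distinction can be made concrete with a small sketch. The Python below is illustrative only and rests on stated assumptions. It does not use Amazon Bedrock or any OpenAI API. The names `echo_model`, `stateless_call`, and `StatefulSession` are hypothetical, and the stand-in "model" simply reports how many messages it can see. What matters is where context lives. A stateless call sees only the current prompt. The session keeps history and notes on the runtime side, so each turn builds on the ones before it.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical sketch: NOT the Amazon Bedrock or OpenAI API.
# A "model" here is any function that takes the visible messages and returns text.
Message = Dict[str, str]
Model = Callable[[List[Message]], str]


def echo_model(messages: List[Message]) -> str:
    """Stand-in model that reports how much context it was given."""
    return f"seen {len(messages)} message(s)"


def stateless_call(model: Model, prompt: str) -> str:
    """Stateless style: every call starts from an empty context."""
    return model([{"role": "user", "content": prompt}])


@dataclass
class StatefulSession:
    """Stateful style: the runtime keeps history and notes between turns."""
    model: Model
    history: List[Message] = field(default_factory=list)
    notes: Dict[str, str] = field(default_factory=dict)  # e.g. results of earlier tool calls

    def send(self, prompt: str) -> str:
        # Append the new turn, let the model see the full history, then store the reply.
        self.history.append({"role": "user", "content": prompt})
        reply = self.model(self.history)
        self.history.append({"role": "assistant", "content": reply})
        return reply

    def remember(self, key: str, value: str) -> None:
        """Persist a piece of prior work for later turns."""
        self.notes[key] = value

    def recall(self, key: str) -> str:
        """Retrieve persisted work; empty string if nothing was stored."""
        return self.notes.get(key, "")


if __name__ == "__main__":
    print(stateless_call(echo_model, "hello"))   # seen 1 message(s)
    session = StatefulSession(echo_model)
    print(session.send("hello"))                 # seen 1 message(s)
    print(session.send("and again"))             # seen 3 message(s)
```

In the stateless version, the caller must re-send all relevant context on every request. In the stateful version, the session accumulates it. That is the burden a managed stateful runtime would take off the developer.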

AWS has also been named the exclusive third-party cloud distribution provider for OpenAI Frontier, OpenAI’s platform for building, deploying, and managing teams of AI agents. Enterprises that want Frontier’s capabilities outside OpenAI’s own offerings will therefore access them through AWS infrastructure. The arrangement reinforces AWS’s bid to be the cloud of choice for leading AI developers. It also makes AWS a key gateway for businesses putting agent systems into operation, from automated customer service to data analysis and content generation.

The investment is matched by a large compute commitment. The partnership expands an existing $38 billion multi-year agreement between OpenAI and AWS by a further $100 billion over eight years. Under the expanded commitment, OpenAI has pledged to consume approximately 2 gigawatts of AWS Trainium capacity, spanning current Trainium3 chips and next-generation Trainium4 chips. Trainium is Amazon’s custom machine learning accelerator, designed for high-performance deep learning training. A commitment of this size reflects the demand for specialized compute needed to train ever larger and more complex models. For scale, 2 gigawatts is roughly the electrical output of two large nuclear reactors. The deal secures the infrastructure OpenAI needs to keep advancing its research and development. It also strengthens AWS’s position as a leading provider of AI/ML compute.
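The figures above can be checked with simple arithmetic. The sketch below is a back-of-envelope calculation, not data from either company. It assumes three things: the 2 GW draws full power year-round (real utilization would be lower), a large nuclear reactor produces about 1 GW of electricity, and the added $100 billion is spread evenly over eight years. The helper function names are hypothetical.

```python
# Back-of-envelope check of the compute-commitment figures (illustrative only).
# Assumptions, not from the announcement:
#   - the 2 GW of Trainium capacity draws full power all year (utilization = 1.0)
#   - one large nuclear reactor produces roughly 1 GW of electricity
#   - the added $100B is spread evenly over its eight years

HOURS_PER_YEAR = 24 * 365  # 8,760 hours; leap years ignored


def combined_commitment_bn(existing_bn: float, added_bn: float) -> float:
    """Combined contracted value after the expansion, in billions of USD."""
    return existing_bn + added_bn


def average_annual_spend_bn(added_bn: float, years: int) -> float:
    """Average yearly value of the addition, assuming an even spread."""
    return added_bn / years


def annual_energy_twh(power_gw: float, utilization: float = 1.0) -> float:
    """Energy over one year in TWh: GW x hours x utilization, divided by 1,000."""
    return power_gw * HOURS_PER_YEAR * utilization / 1000.0


def reactor_equivalents(power_gw: float, reactor_gw: float = 1.0) -> float:
    """How many ~1 GW reactors would be needed to supply this power."""
    return power_gw / reactor_gw


if __name__ == "__main__":
    print(f"Combined commitment: ${combined_commitment_bn(38, 100):.0f}B")  # $138B
    print(f"Added value per year: ${average_annual_spend_bn(100, 8):.1f}B")  # $12.5B
    print(f"Energy at full load: {annual_energy_twh(2.0):.2f} TWh/year")  # 17.52
    print(f"Reactor equivalents: {reactor_equivalents(2.0):.0f}")  # 2
```

The energy figure scales linearly with utilization. At an assumed 70 percent, for example, it drops to roughly 12.3 TWh per year.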

Chronology and Context of an Expanding Partnership

The $50 billion investment is a significant escalation, but the relationship between OpenAI and AWS is not new. An existing $38 billion multi-year agreement is now being expanded by a further $100 billion. That points to a substantial prior collaboration that has reached a new level of strategic and financial depth. The earlier work most likely involved OpenAI using AWS cloud infrastructure for research, development, and deployment.

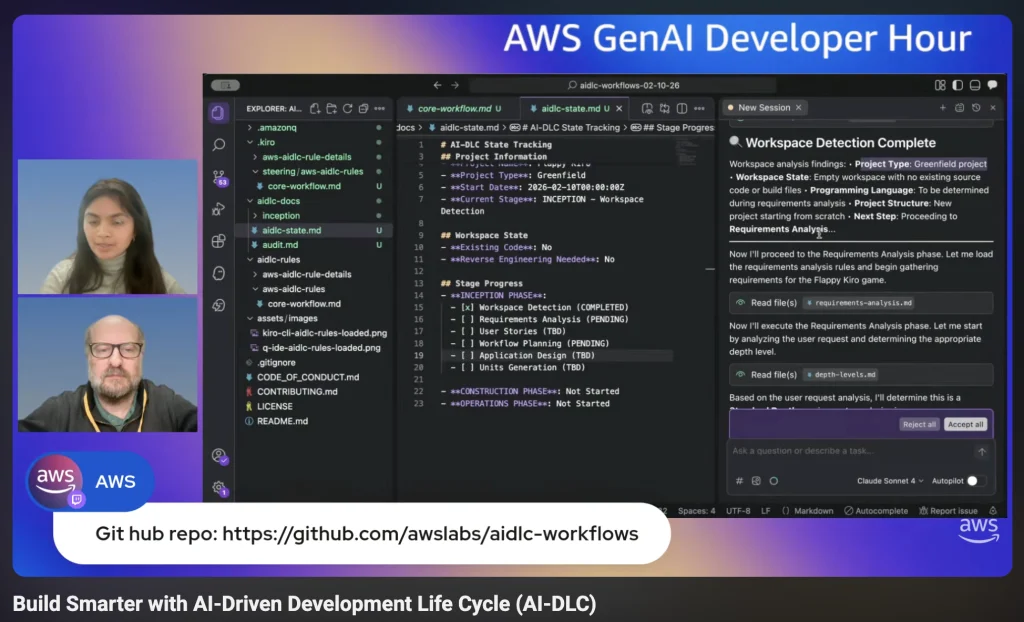

The 2026 timing is notable. AI integration has accelerated across sectors, driven by advances in foundation models and a growing understanding of AI’s potential. AWS’s AI-DLC workshops, active throughout 2026, show how urgently enterprises need to systematize their AI adoption. The partnership responds to that demand by giving enterprises direct, streamlined access to OpenAI’s models and agent capabilities through a cloud provider they already trust. The screenshot dated March 1, 2026, for a "GenAI Developer Hour" session further places the announcement amid ongoing developer engagement and education around generative AI.

Statements and Inferred Reactions from Leadership

Neither company’s leadership was quoted directly in the material available. However, the nature and scale of the partnership suggest how each is likely to frame it.

Amazon CEO Andy Jassy would likely stress the alliance’s importance to AWS’s leadership in cloud and AI. He would probably highlight how it reinforces AWS’s aim of offering customers the broadest and deepest set of AI services, from infrastructure to foundation models. He might argue that integrating OpenAI’s models into the AWS ecosystem lets customers build AI applications with greater ease, scalability, and security. He would also likely point to the co-developed Stateful Runtime Environment and the Trainium capacity commitment as contributions to the frontier of AI research.

OpenAI CEO Sam Altman would likely frame the partnership as advancing OpenAI’s mission to ensure that artificial general intelligence benefits all of humanity. He would probably emphasize the need for vast, reliable compute to train the next generation of models, which AWS’s Trainium chips and global cloud footprint help supply. He might also highlight AWS’s role as a distribution channel that brings OpenAI Frontier’s agent capabilities to more enterprises and developers, widening the ecosystem around OpenAI’s technologies. He would likely describe the partnership as essential for scaling OpenAI’s research and products to meet global demand while keeping a focus on responsible AI development.

Broader Impact and Implications for the AI Ecosystem

This landmark partnership carries significant implications for the competitive landscape of the AI industry, the trajectory of enterprise AI adoption, and the future of AI development itself.

Competitive Dynamics: The alliance intensifies competition among major cloud providers for AI leadership. Microsoft has a deep, long-standing partnership with OpenAI, but this agreement makes Amazon a major strategic partner in a different role. It signals that OpenAI is pursuing a multi-cloud strategy for distribution and compute. That strategy diversifies its infrastructure dependencies, reduces vendor lock-in, and expands its market reach. For AWS, the deal is a direct challenge to Microsoft Azure and Google Cloud, demonstrating that it can attract and support leading AI developers and deploy sophisticated AI services. The scale of the Trainium commitment could also spur further innovation in custom AI hardware across the industry, as companies race to offer the most efficient training chips.

Enterprise AI Adoption: For businesses, the partnership should simplify and accelerate the adoption of advanced AI. OpenAI’s models and capabilities, including OpenAI Frontier for AI agents, will be available through AWS. That gives enterprises a familiar and secure path to bringing them into their operations. The Stateful Runtime Environment on Bedrock matters most here. It lowers the technical barrier to building persistent, context-aware applications that go beyond single-turn interactions toward autonomous systems. This is likely to drive a new wave of enterprise AI projects, from personalized customer experiences to optimized operational workflows and new lines of innovation.

Future of AI Development: The emphasis on stateful runtime environments and on AI agents through OpenAI Frontier points toward AI systems that act as active participants in complex processes rather than purely predictive tools. The partnership is likely to fuel research into more capable, autonomous, and integrated systems. The Trainium commitment gives OpenAI the compute to train next-generation models that are expected to be substantially larger and more complex than current ones. That could lead to advances in reasoning, planning, and multimodal understanding, and bring artificial general intelligence closer to reality.

Beyond the Headlines: AWS’s Broader Ecosystem

While the Amazon-OpenAI partnership dominated the headlines, AWS kept up its regular cadence of launches and community engagement. The past week brought service launches and updates across compute, storage, networking, databases, analytics, security, and machine learning. Each update is often incremental on its own, but together they keep the platform evolving for the millions of customers who run diverse workloads on it.

The AWS community remains active. Developers, architects, and data scientists share insights, best practices, and solutions through blogs, open-source projects, and technical forums. AWS also maintains a full calendar of in-person conferences, virtual summits, workshops, and developer sessions. These events are used to announce new services, educate users, support networking, and gather feedback, so the platform evolves with customer needs and industry trends.

The strategic partnership between Amazon and OpenAI marks a pivotal moment in the evolution of artificial intelligence. It combines Amazon’s investment of up to $50 billion with AWS’s central role in distribution and compute. With those pieces in place, the alliance is positioned to accelerate AI innovation, deepen enterprise adoption, and shape the direction of AI development for years to come. As 2026 unfolds, its effects are likely to be felt across the technology landscape, enabling a new generation of AI-driven solutions and reinforcing both companies’ positions at the forefront of the field.