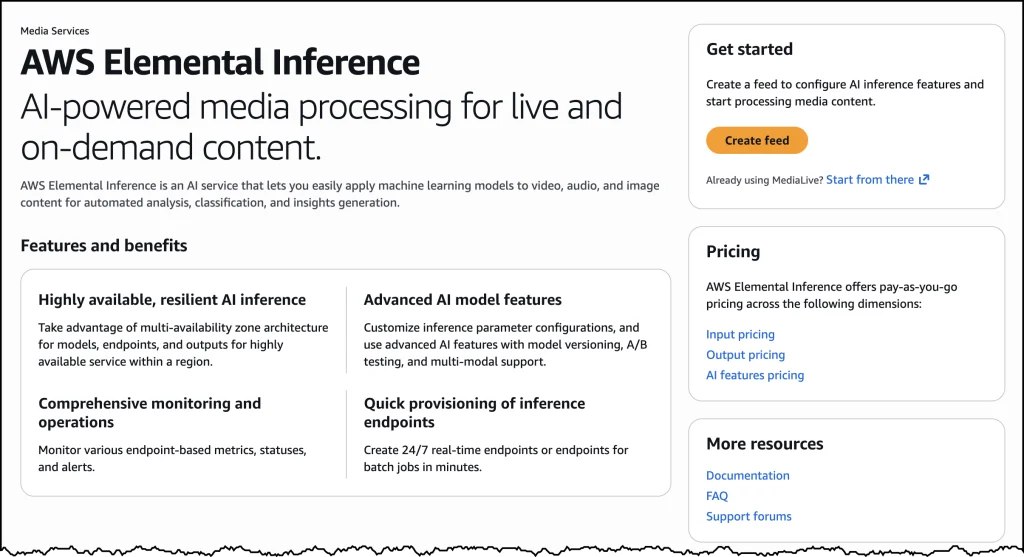

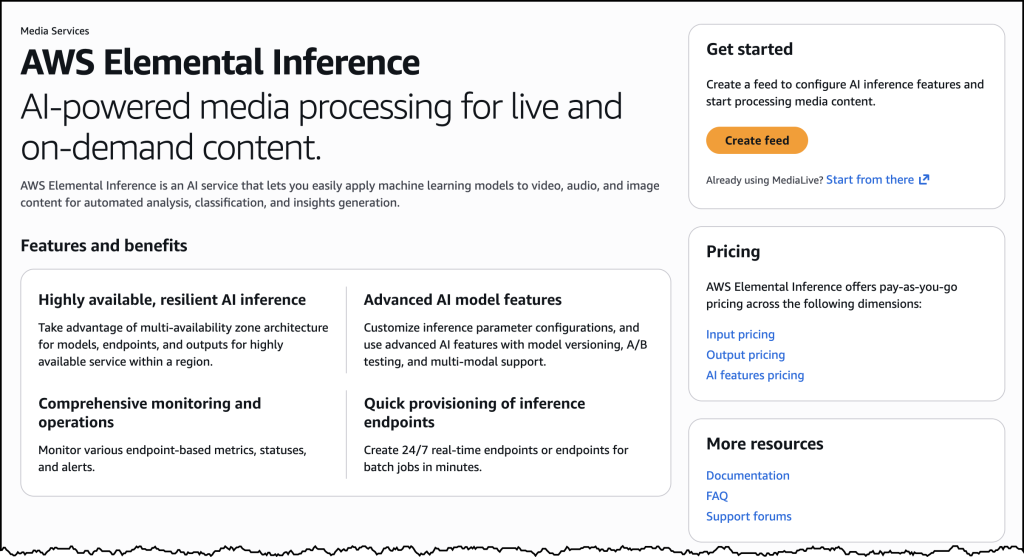

AWS has unveiled AWS Elemental Inference, a fully managed artificial intelligence service that automatically transforms and optimizes live and on-demand video broadcasts to increase audience engagement at scale. The launch responds to changing media consumption habits, particularly the growing demand for vertical video optimized for mobile and social platforms. At launch, AWS Elemental Inference lets broadcasters and streamers adapt their video into vertical formats suited to platforms like TikTok, Instagram Reels, and YouTube Shorts in real time, without manual post-production work or specialized AI expertise.

The Shifting Tides of Video Consumption and Industry Challenges

The media landscape has changed profoundly over the past decade, driven largely by the ubiquity of smartphones and the rise of social media platforms. Viewers increasingly consume content on mobile devices and often prefer short-form, vertically oriented video. Market research generally indicates that mobile devices account for a large share of online video viewing, with TikTok reporting over a billion monthly active users and Instagram Reels and YouTube Shorts reporting billions of daily views. This shift is a challenge for traditional broadcasters and streamers, who typically produce content in a landscape (16:9) format optimized for larger screens such as televisions and computer monitors.

Converting traditionally produced broadcasts into vertical formats for mobile-first platforms has historically been labor-intensive and slow. It often requires extensive manual post-production, with specialized editors reframing, cropping, and adapting footage while preserving narrative integrity and visual appeal. This work incurs significant operational costs in staff and software, and it introduces substantial delays. Those delays frequently cause content creators to miss "viral moments" (fleeting opportunities for widespread sharing and engagement) and to lose audience reach to competitors who adapt more quickly. When content cannot be delivered rapidly in the preferred vertical format, audiences fragment as viewers migrate to platforms that better match their viewing habits. AWS Elemental Inference targets these inefficiencies with an automated workflow that removes the need for manual intervention or specialized AI expertise, making this kind of content adaptation accessible to more organizations.

AWS’s Enduring Commitment to Media & Entertainment Innovation

AWS has long been a foundational technology provider for the media and entertainment industry, offering a comprehensive suite of cloud services that power everything from content creation and production to distribution and archiving. AWS Elemental services, in particular, have been instrumental in enabling broadcasters, content owners, and pay-TV operators to process, deliver, and monetize video efficiently at scale. These services, including AWS Elemental MediaLive for live video encoding, MediaConvert for file-based transcoding, MediaPackage for just-in-time packaging, and MediaConnect for secure and reliable transport of live video, form the backbone of many modern media workflows globally.

The introduction of AWS Elemental Inference represents a natural evolution within this robust ecosystem, leveraging AWS’s deep expertise in cloud infrastructure and its burgeoning capabilities in artificial intelligence and machine learning. It integrates seamlessly into existing AWS Elemental workflows, extending their functionality with intelligent automation. This strategic move underscores AWS’s unwavering commitment to empowering media companies to innovate, reduce operational complexities, and stay competitive in a rapidly changing digital environment. By embedding AI directly into the video processing pipeline, AWS is not just offering a new tool but redefining how content can be prepared and delivered to diverse audiences across myriad platforms, ensuring maximum impact and reach.

Unpacking the Technology: How AWS Elemental Inference Works

At its core, AWS Elemental Inference operates as an agentic AI application: it analyzes video content in real time and applies transformations autonomously, without human intervention. The system combines machine learning and computer vision techniques to detect key subjects, action, and focal points within a video frame. The AI is trained to understand visual hierarchies and dynamic movement, so its cropping decisions preserve the most relevant parts of the scene for a vertical orientation. Based on this real-time analysis, it automatically applies the necessary optimizations, such as dynamic vertical cropping to reframe landscape content or clip generation to extract highlight reels.
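To make the reframing step concrete, here is a minimal sketch of the geometry behind a subject-centered vertical crop. It is not AWS's implementation: the function name `vertical_crop`, the `CropWindow` type, and the idea that a detector supplies a single horizontal subject position are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CropWindow:
    x: int       # left edge of the crop, in pixels
    y: int       # top edge of the crop, in pixels
    width: int
    height: int


def vertical_crop(frame_w: int, frame_h: int, subject_cx: float,
                  aspect_w: int = 9, aspect_h: int = 16) -> CropWindow:
    """Return a full-height aspect_w:aspect_h crop centered on subject_cx.

    subject_cx is the horizontal center of the detected subject in pixels.
    In a real system it would come from a vision model; here it is an input.
    """
    crop_h = frame_h
    crop_w = frame_h * aspect_w // aspect_h
    crop_w -= crop_w % 2  # keep the width even, as many video encoders expect
    if crop_w > frame_w:
        raise ValueError("frame is too narrow for a full-height crop")
    # Center on the subject, then clamp so the window stays inside the frame.
    left = int(round(subject_cx - crop_w / 2))
    left = max(0, min(left, frame_w - crop_w))
    return CropWindow(left, 0, crop_w, crop_h)
```

For a 1920x1080 frame with the subject at the horizontal center (`subject_cx=960`), this yields a 606x1080 window starting at x=657. A production system would also smooth the window position over time to avoid jitter, which this sketch omits.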

A key differentiator of this service is its reliance on fully managed foundation models (FMs). These FMs are large, pre-trained AI models that have learned from vast amounts of diverse video data, enabling them to understand and process complex video content effectively. The "fully managed" aspect means that AWS handles all the underlying infrastructure, model updates, and continuous optimizations, freeing customers from the significant burden of managing complex AI pipelines, procuring specialized hardware, or hiring dedicated AI engineering teams. This significantly lowers the barrier to entry for broadcasters and streamers who may lack in-house AI expertise but desperately need to leverage its power to remain relevant in the digital age.

The service’s architecture allows for parallel processing of video streams, which is critical for demanding live broadcasting environments. While manual post-processing might take minutes or even hours to reformat content, AWS Elemental Inference has a stated latency of just 6–10 seconds. This near-real-time capability means content can be adapted and delivered to social platforms almost immediately, allowing broadcasters to capture viral moments, capitalize on trending topics, and maintain audience engagement. The service also follows a "process once, optimize everywhere" approach: multiple AI features can run simultaneously on the same video stream, so content does not need to be reprocessed for each capability or output format, which saves both time and computational resources.
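The "process once, optimize everywhere" idea can be sketched as a single analysis pass whose result is shared by every enabled feature. The names below (`run_features`, `analyze`, the feature callables) are hypothetical and only illustrate the pattern; they do not describe the service's internals.

```python
from typing import Callable, Dict, Iterable, List

# One analysis result per frame; in this sketch it is just a dict of scores.
Analysis = Dict[str, float]


def run_features(frames: Iterable[bytes],
                 analyze: Callable[[bytes], Analysis],
                 features: Dict[str, Callable[[Analysis], object]]
                 ) -> Dict[str, List[object]]:
    """Analyze each frame once and hand the same result to every feature."""
    outputs: Dict[str, List[object]] = {name: [] for name in features}
    for frame in frames:
        analysis = analyze(frame)  # the expensive step runs once per frame
        for name, feature in features.items():
            outputs[name].append(feature(analysis))
    return outputs
```

Adding another feature here adds one cheap call per frame rather than another full analysis pass, which is the efficiency the paragraph above describes.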

Flexible Deployment Options for Diverse Workflows

Recognizing the varied operational setups within the media industry, AWS Elemental Inference offers flexible deployment options designed to integrate smoothly into existing workflows or facilitate new ones. This dual approach caters to a wide spectrum of users, from those building new content pipelines to those enhancing established broadcast operations.

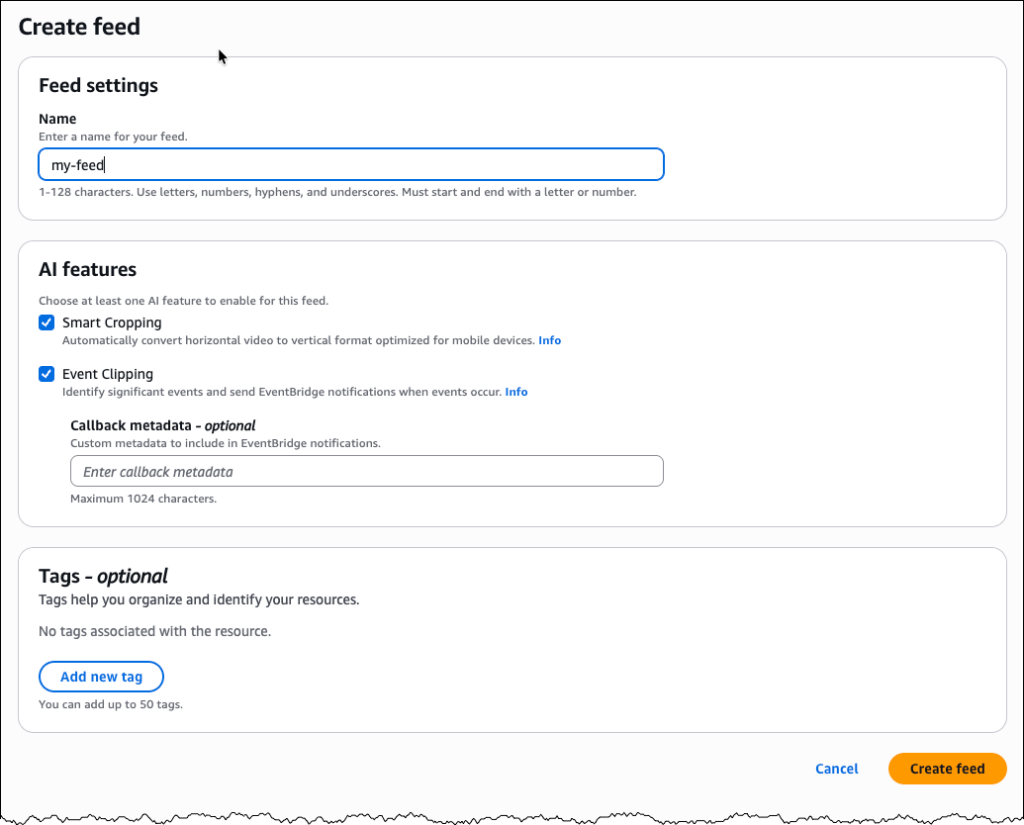

For users seeking a dedicated interface for AI-powered video processing, the service provides a standalone console. In the AWS Management Console, users navigate to "AWS Elemental Inference" and choose "Create feed." The feed is the top-level resource for configuring AI transformations and encapsulates the feature configurations. Once a feed transitions from the "CREATING" state to the "AVAILABLE" state, users can configure specific outputs, such as "vertical video cropping" or "clip generation." For vertical cropping, the service sets cropping parameters automatically based on the incoming video specifications and adapts dynamically to the content. For clip generation, users define an output, provide a descriptive name (e.g., "highlight-clips"), select "Clipping" as the output type, and set its status to enabled. This interface makes AI-based content adaptation accessible without deep integration knowledge, even for users without extensive cloud engineering backgrounds.
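When automating this flow rather than using the console, a script would typically wait for the feed to leave "CREATING" before configuring outputs. The sketch below shows a generic polling loop under that assumption. It calls no real AWS API: `get_state` is a caller-supplied, hypothetical function that returns the feed's current state string, and only the "CREATING" and "AVAILABLE" values come from the description above.

```python
import time
from typing import Callable


def wait_until_available(get_state: Callable[[], str],
                         timeout_s: float = 300.0,
                         poll_interval_s: float = 5.0,
                         sleep: Callable[[float], None] = time.sleep) -> None:
    """Poll get_state() until it returns "AVAILABLE".

    get_state is hypothetical: it stands in for whatever call reports the
    feed's state. Any state other than CREATING or AVAILABLE is an error.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        state = get_state()
        if state == "AVAILABLE":
            return
        if state != "CREATING":
            raise RuntimeError(f"unexpected feed state: {state}")
        if time.monotonic() >= deadline:
            raise TimeoutError("feed did not become AVAILABLE in time")
        sleep(poll_interval_s)
```

Passing `sleep` as a parameter keeps the loop testable without real delays; the timeout and interval defaults are arbitrary placeholders.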

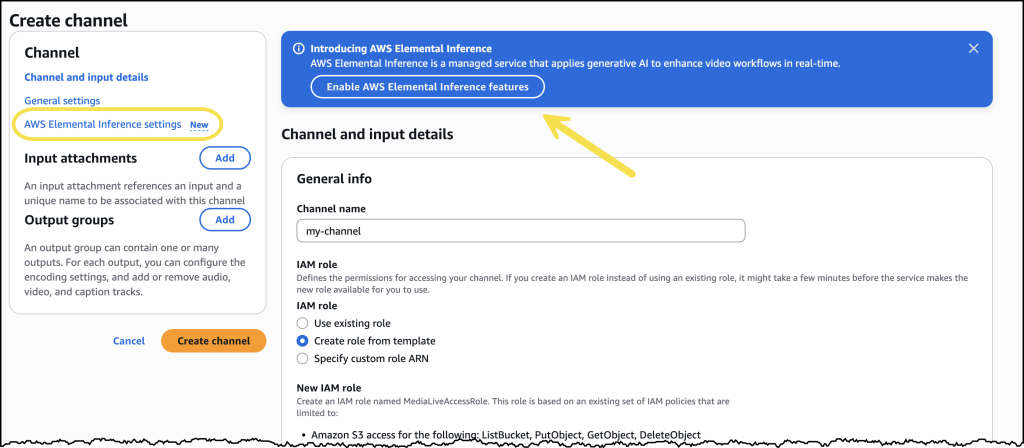

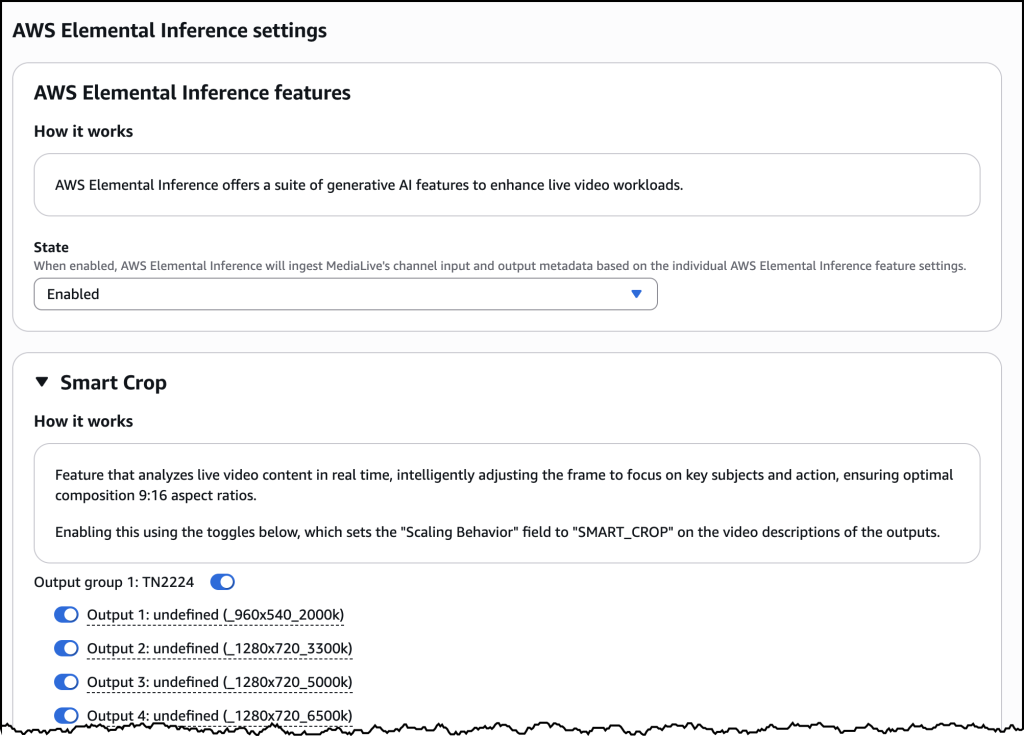

Alternatively, for broadcasters and streamers already utilizing AWS Elemental MediaLive for their live video workflows, AWS Elemental Inference can be seamlessly enabled directly within their existing channel configurations. This integrated approach allows customers to infuse AI capabilities into their current live video pipelines without necessitating architectural modifications, thus avoiding disruptive changes to mission-critical operations. By enabling desired features as part of the MediaLive channel setup, AWS Elemental Inference operates in parallel with video encoding processes. The "Smart Crop" feature, for instance, can be configured within an "Output group" to define different resolution specifications for vertical outputs, ensuring compatibility with various target platforms. A dedicated AWS Elemental Inference tab on the MediaLive channel details page provides a centralized view of the AI-powered video transformation configuration, displaying crucial information like the service’s Amazon Resource Name (ARN), data endpoints, and feed output details, including enabled features and their operational status. This deep integration ensures that the power of AI is readily accessible where it’s most needed, enhancing live production capabilities without disrupting established processes.

Key Features at Launch and a Glimpse into the Future

At launch, AWS Elemental Inference provides several features designed to help broadcasters and streamers reach and engage broader audiences:

- Real-time Vertical Video Cropping: Automatically transforms landscape video into optimized vertical formats, ensuring content is perfectly framed and compelling for mobile viewing on platforms like TikTok, Instagram Reels, and YouTube Shorts.

- Automated Clip Generation: Intelligently identifies and extracts key highlights or moments from live or on-demand content, facilitating rapid sharing and maximizing engagement on social media by delivering snackable, impactful segments.

- Agentic AI Application: Leverages advanced AI to perform autonomous video analysis and optimization without manual prompting, reducing human effort and potential errors.

- Fully Managed Foundation Models: Utilizes pre-trained, continuously updated AI models, eliminating the need for specialized AI expertise or infrastructure management, thus democratizing access to cutting-edge technology.

- Low-Latency Processing: Achieves near real-time transformation with latencies of 6–10 seconds, which is crucial for capturing and capitalizing on live viral moments and maintaining topical relevance.

- Seamless Integration: Offers flexible deployment via a standalone console for new users or direct integration with AWS Elemental MediaLive for existing broadcast workflows, ensuring adaptability.

- "Process Once, Optimize Everywhere" Efficiency: Runs multiple AI features simultaneously on the same video stream, reducing reprocessing time and costs, and maximizing the utility of each video asset.

AWS has also signaled a robust roadmap for AWS Elemental Inference, with plans to introduce further enhancements and capabilities throughout the year. These future developments are expected to include even tighter integration with other core AWS Elemental services, expanding the scope of AI-powered automation across the entire media workflow, potentially encompassing services like AWS Elemental MediaConvert for more advanced file-based transformations or AWS Elemental MediaPackage for sophisticated content packaging. Furthermore, AWS aims to roll out features specifically designed to help customers monetize their video content more effectively. This could encompass advanced functionalities like AI-driven dynamic ad insertion optimization based on audience segments and content context, personalized content recommendation engines to boost viewer retention, or intelligent metadata generation to improve content discoverability and audience targeting across platforms. Such advancements would further solidify AWS’s position as a comprehensive solution provider for the evolving demands of the digital media economy.

Availability and Economic Model: Scaling with Demand

AWS Elemental Inference is available now in four AWS Regions: US East (N. Virginia), US West (Oregon), Europe (Ireland), and Asia Pacific (Mumbai). These Regions serve major global media hubs, so a significant portion of AWS’s customer base can begin using the service without delay, and their infrastructure is intended to support the low latency and high availability that demanding media workflows require. Customers can access the service through the AWS Elemental MediaLive console or integrate it into custom workflows using the AWS Elemental MediaLive APIs, which allow programmatic control and automation.

Adhering to AWS’s standard cloud philosophy, AWS Elemental Inference operates on a consumption-based pricing model. This "pay-as-you-go" approach means that customers are billed only for the specific features they utilize and the volume of video they process, with no upfront costs, minimum commitments, or long-term contracts. This economic model offers unparalleled flexibility, allowing broadcasters and streamers to scale their AI-powered video processing capabilities dynamically. During peak events or periods of high content output, such as major sports tournaments or breaking news, they can seamlessly scale up resources to meet surging demand. Conversely, during quieter periods, they can optimize costs by scaling down, paying only for what they consume. This cost-efficiency is particularly beneficial for smaller content creators, independent streamers, and startups, democratizing access to advanced AI tools that were once the exclusive domain of large enterprises with substantial capital investments.
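The arithmetic of a consumption-based model can be illustrated with a small estimator. Everything numeric here is a placeholder: the per-minute billing unit, the feature names, and the rates are assumptions for illustration only and are not AWS pricing.

```python
from typing import Dict


def estimate_cost(minutes_by_feature: Dict[str, float],
                  rate_per_minute: Dict[str, float]) -> float:
    """Sum minutes * rate over the features that were actually used.

    Both the per-minute billing unit and the rates passed in are
    placeholders chosen for illustration, not published AWS pricing.
    """
    total = 0.0
    for feature, minutes in minutes_by_feature.items():
        if minutes < 0:
            raise ValueError(f"negative usage for {feature}")
        total += minutes * rate_per_minute[feature]
    return total


# Placeholder rates in arbitrary currency units per processed minute.
EXAMPLE_RATES = {"vertical_crop": 0.5, "clipping": 2.0}

# A quiet week uses one feature lightly; an event week uses both heavily.
quiet_week = estimate_cost({"vertical_crop": 60}, EXAMPLE_RATES)
event_week = estimate_cost({"vertical_crop": 600, "clipping": 120}, EXAMPLE_RATES)
```

With these placeholder inputs the quiet week comes to 30.0 units and the event week to 540.0, showing how the bill tracks usage with no fixed component.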

Industry Implications and Broader Impact

The launch of AWS Elemental Inference is poised to have a significant and transformative impact across the media and entertainment industry. For broadcasters, it represents a powerful tool to bridge the gap between traditional linear television and the burgeoning world of digital, mobile-first platforms. By automating the laborious process of content adaptation, it frees up valuable creative and technical resources, allowing teams to focus on producing high-quality, compelling content rather than engaging in tedious manual reformatting tasks. This operational efficiency translates directly into significant cost savings and increased content velocity, enabling organizations to be more agile and responsive to market trends.

Content creators, ranging from independent streamers to large media houses, will gain the unprecedented ability to effortlessly expand their reach and engage new demographics on platforms where vertical video reigns supreme. This will not only foster greater audience loyalty by delivering content in preferred formats but also unlock new monetization opportunities through increased viewership and advertising potential. Industry analysts anticipate that services like AWS Elemental Inference will accelerate the convergence of traditional and digital media, enabling a more unified, cross-platform content strategy. It democratizes access to advanced AI capabilities, making sophisticated video optimization accessible to a broader range of organizations, irrespective of their size or technical expertise, thereby leveling the playing field.

The initial announcement did not quote an AWS spokesperson directly, but a spokesperson would likely emphasize the company’s commitment to innovation that solves real-world customer problems and highlight how Elemental Inference helps customers adapt to rapid market changes, maximize content value, and deepen audience connections in an increasingly fragmented media landscape. The service reflects the ongoing evolution of cloud-native media processing, in which AI moves from a specialized, niche tool to an integrated component of the content supply chain.

As the industry continues to grapple with audience fragmentation and the demand for personalized content experiences, AWS Elemental Inference arrives as a timely option. It is not merely about cropping videos; it is about understanding content and delivering it in the most effective format to the right audience at the right time, so that content remains relevant and engaging across every screen. Updates on February 24th (fixing a broken link in documentation) and March 2nd (an image replacement) reflect continued refinement following the launch. The full documentation and product page offer further technical implementation details for those looking to integrate the service into their operations. The future of video broadcasting is increasingly intelligent, and AWS Elemental Inference is positioned to help shape how content is consumed globally.