The pursuit of advanced artificial intelligence often conjures images of vast data centers, powerful graphics processing units (GPUs), and sprawling teams of specialized engineers. However, a significant and increasingly important frontier in machine learning (ML) development lies in settings that have none of these resources. This article covers practical, field-tested strategies for building useful machine learning solutions with limited computational power, imperfect data, and minimal engineering support. It addresses the need for adaptable methods that work where resource scarcity is the norm, not the exception.

The global landscape of data science is bifurcated. While tech giants push the boundaries of large language models and complex neural networks, a substantial portion of real-world problems exist in settings where the foundational infrastructure for such endeavors is simply unavailable. This includes small businesses in rural areas, non-governmental organizations operating in developing regions, community projects with tight budgets, and individuals just starting their journey in data science with basic tools. These environments demand an approach to machine learning that prioritizes efficiency, interpretability, and robustness over sheer computational scale. The insights discussed herein draw from lessons learned in diverse, resource-constrained projects, highlighting smart patterns observed across various platforms and applications.

Defining the Low-Resource Landscape: Beyond the Hype

Working within a low-resource setting fundamentally redefines the parameters of machine learning development. It typically combines three constraints. Computationally, it means relying on standard laptops, shared cloud instances with limited specifications, or even edge devices with minimal processing power and memory; access to high-performance computing (HPC) clusters or dedicated GPU farms is often nonexistent. Data-wise, the reality is far removed from the meticulously curated datasets prevalent in academic benchmarks or Kaggle competitions. Instead, developers grapple with incomplete, noisy, inconsistently formatted, or sparsely populated datasets, often collected manually or through rudimentary systems. Finally, the absence of a dedicated engineering team means solutions must be simple to deploy, maintain, and integrate by individuals with varied technical backgrounds, without specialized MLOps infrastructure. Together, limited compute, imperfect data, and minimal engineering support call for a rethinking of conventional ML practice. According to a 2022 report by the World Bank, over 3 billion people still lack reliable internet access, and even where it exists, the quality of infrastructure can be highly variable, directly impacting data collection and model deployment capabilities. This digital divide underscores both the potential of and the need for localized, lightweight ML solutions.

The Strategic Advantage of Lightweight Machine Learning Models

In an era dominated by the allure of deep learning, it is easy to overlook the enduring power of classic, lightweight machine learning algorithms. In low-resource environments, however, these models are not merely alternatives; they represent a strategic advantage. Algorithms such as logistic regression, decision trees, random forests, and support vector machines (SVMs) with linear kernels may be considered "old-school," but in practical, constrained settings they are often hard to beat.

These models are inherently fast, requiring significantly less computational overhead for both training and inference compared to their deep learning counterparts. This translates directly to faster development cycles, lower energy consumption, and the ability to run on basic hardware, even microcontrollers or older generation processors. Furthermore, their interpretability is a profound benefit. When building tools for end-users like farmers, small business owners, or community health workers, trust and understanding are paramount. A simple decision tree, for instance, can be easily visualized and explained, allowing users to grasp why a particular prediction was made. This clarity fosters greater adoption and confidence, contrasting sharply with the "black box" nature of many complex deep neural networks. Successful applications include predictive maintenance for agricultural machinery using decision trees, inventory optimization for local shops via logistic regression, and identifying patterns in public health data using random forests. These classic models offer significant gains with minimal resource expenditure, proving that complexity does not always equate to superiority in real-world problem-solving. A study published in Nature Communications in 2020 highlighted that simpler models often outperform complex ones when data is scarce or noisy, reinforcing their utility in low-resource contexts.
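
To make the interpretability point concrete, here is a minimal sketch of a shallow decision tree whose learned rules can be printed as plain text. It assumes scikit-learn is installed; the feature names, data, and labels are hypothetical and exist only for illustration.

```python
from sklearn.tree import DecisionTreeClassifier, export_text

# Hypothetical toy data: [machine_age_years, hours_since_service]
X = [[1, 50], [2, 400], [8, 100], [9, 600],
     [3, 550], [10, 80], [1, 700], [7, 650]]
# Hypothetical labels: 1 = needs maintenance, 0 = fine
y = [0, 0, 0, 1, 1, 0, 1, 1]

# A shallow tree keeps the rule set small enough to read aloud.
tree = DecisionTreeClassifier(max_depth=2, random_state=0)
tree.fit(X, y)

# export_text renders the fitted tree as indented if/else rules.
rules = export_text(
    tree, feature_names=["machine_age_years", "hours_since_service"]
)
print(rules)
```

The printed rules can be shared with a non-technical user as they are, which is much harder to do with the weights of a neural network. Training on a dataset this small takes a fraction of a second on a basic laptop.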

Transforming Chaotic Data into Actionable Insights: The Art of Feature Engineering

One of the most formidable challenges in low-resource settings is the pervasive presence of messy, inconsistent data. Data often arrives from broken sensors, handwritten logs, incomplete surveys, or disparate legacy systems. This is where advanced feature engineering becomes less of a refinement and more of a foundational necessity. It is the process of extracting meaningful, predictive features from raw data, effectively turning noise into signal.

- Temporal Features: Even when timestamps are inconsistent, they hold valuable information. Breaking down time data into components like "hour of day," "day of week," "month," "season," or "is_weekend" can reveal cyclical patterns crucial for prediction, such as peak electricity demand times in a village or seasonal planting windows in agriculture.

- Categorical Grouping: Datasets frequently contain categorical variables with an excessive number of unique values, which can overwhelm simple models. Grouping less frequent categories into broader classifications (e.g., combining dozens of specific product names into "perishables," "snacks," "tools") reduces dimensionality and enhances model robustness.

- Domain-Based Ratios: Ratios often convey more information than raw numbers by normalizing data or expressing relationships. Examples include "sales per customer," "yield per acre," "cost per unit," or "humidity-to-temperature ratio." These engineered features can encapsulate complex domain knowledge in a single, powerful variable.

- Robust Aggregations: When dealing with noisy data, traditional statistical measures like means can be heavily skewed by outliers. Employing robust aggregations, such as medians instead of means, or interquartile ranges, provides a more stable representation of central tendency and spread, mitigating the impact of erroneous sensor readings or data entry typos.

- Flag Variables: Binary flag variables are powerful tools for indicating the presence or absence of specific conditions or events. Examples include "is_holiday," "is_sensor_faulty," "has_missing_value," or "is_promotion_active." These flags provide crucial context to the model, allowing it to differentiate between normal operation and exceptional circumstances.

The cost of imperfect data is not unique to low-resource settings. A widely cited IBM estimate from 2016 put the annual cost of poor-quality data to the U.S. economy at roughly $3.1 trillion, underscoring how universal the challenge is and how much value robust feature engineering can add.
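
The feature types listed above can be sketched in a few lines of pandas. This is an illustrative example only: the DataFrame, its column names, and the category mapping are all hypothetical, and a real project would choose them with domain experts.

```python
import pandas as pd

# Hypothetical sales log; column names and values are illustrative only.
df = pd.DataFrame({
    "timestamp": ["2023-01-07 09:30", "2023-01-09 18:05",
                  "not recorded", "2023-01-14 11:45"],
    "product": ["milk", "hammer", "milk", "chips"],
    "sales": [120.0, 40.0, 95.0, 5000.0],  # last value is a likely typo
    "customers": [12, 4, 10, 9],
})

# Temporal features: unparseable timestamps become NaT instead of raising.
ts = pd.to_datetime(df["timestamp"], errors="coerce")
df["hour_of_day"] = ts.dt.hour
df["day_of_week"] = ts.dt.dayofweek        # Monday=0 ... Sunday=6
df["is_weekend"] = ts.dt.dayofweek >= 5    # rows without a valid timestamp come out False

# Flag variable: keep track of rows whose timestamp could not be parsed.
df["has_missing_timestamp"] = ts.isna()

# Categorical grouping: map specific products to broader groups.
category_map = {"milk": "perishables", "chips": "snacks", "hammer": "tools"}
df["product_group"] = df["product"].map(category_map).fillna("other")

# Domain-based ratio.
df["sales_per_customer"] = df["sales"] / df["customers"]

# Robust aggregation: the median is far less affected by the outlier.
mean_sales = df["sales"].mean()      # 1313.75, dominated by the 5000.0 entry
median_sales = df["sales"].median()  # 107.5
```

Note how the single suspicious sales value pulls the mean above 1,300 while the median stays near the typical transaction size.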

Navigating Missing Data: A Signal in Disguise

Missing data is an almost universal problem, yet in low-resource environments, it often carries a deeper significance. Rather than merely an inconvenience, missingness can be an informative signal in itself, provided it is handled thoughtfully and transparently.

- Treat Missingness as a Signal: Sometimes, the fact that a data point is missing reveals crucial information. For instance, if farmers consistently skip certain entries in a weather log, it might indicate a lack of resources to measure that variable, a low perceived importance, or an adverse event (e.g., during extreme weather). Creating a binary "is_missing" flag for such variables can allow the model to learn from this absence.

- Simple Imputation Methods: While more sophisticated model-based and multiple-imputation techniques exist, they are often computationally intensive and, on small or noisy datasets, can introduce errors of their own. Simpler, yet effective, methods include imputing with the median (robust to outliers), the mode (for categorical data), or forward-fill/backward-fill (for time-series data). The key is simplicity and a clear rationale for each rule.

- Leveraging Domain Knowledge: Field experts possess invaluable insights into why data might be missing or what reasonable substitute values could be. Collaborating with local stakeholders can reveal smart rules for imputation, such as using historical averages for rainfall during known dry seasons or typical sales figures for certain holidays.

- Avoiding Complex Imputation Chains: Resist the temptation to impute missing values using other imputed values in a convoluted chain. This can lead to error propagation and make the model’s behavior unpredictable. Define a few solid imputation rules based on domain knowledge and stick to them. The principle of parsimony applies here: simpler imputation is often more reliable when data quality is inherently low.
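
A minimal pandas sketch of the approach described above: record missingness first, then apply one simple, documented rule per column. The column names and values are hypothetical.

```python
import numpy as np
import pandas as pd

# Hypothetical weather log with gaps; values are illustrative only.
log = pd.DataFrame({
    "rainfall_mm": [12.0, np.nan, 3.0, np.nan, 250.0],
    "soil_type": ["clay", None, "clay", "sandy", "clay"],
    "tank_level": [0.8, np.nan, np.nan, 0.5, 0.4],
})

# 1. Record missingness BEFORE imputing, so the model can learn from absence.
for col in ["rainfall_mm", "soil_type", "tank_level"]:
    log[f"{col}_is_missing"] = log[col].isna()

# 2. Apply one simple rule per column, each computed from observed values only
#    (no imputed value feeds another imputation).
log["rainfall_mm"] = log["rainfall_mm"].fillna(log["rainfall_mm"].median())
log["soil_type"] = log["soil_type"].fillna(log["soil_type"].mode().iloc[0])
log["tank_level"] = log["tank_level"].ffill()  # time-ordered readings
```

Forward-fill assumes the rows are in time order and that the last known reading is a reasonable stand-in; whether that holds is exactly the kind of question to put to a field expert.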

Small Data, Big Impact: The Power of Transfer Learning

Even with limited local datasets, the benefits of larger, pre-trained models are not out of reach. Simple forms of transfer learning can significantly boost model performance without requiring extensive computational resources for training.

- Text Embeddings for Unstructured Data: For unstructured text data like inspection notes, customer feedback, or incident reports, small pre-trained text embeddings (e.g., word2vec, GloVe, or lightweight transformer models such as BERT-Mini or compact Sentence-BERT variants) can convert qualitative information into numerical vectors. These embeddings capture semantic meaning and can be used as input features for simpler models, often yielding substantial gains at modest computational cost because only inference, not training, runs locally.

- Global-to-Local Model Adaptation: Take a broad, globally trained model (e.g., a general weather-yield model) and fine-tune its output or adjust its parameters using a small set of local samples. Even linear adjustments or recalibration can dramatically improve relevance and accuracy for specific local conditions. This leverages the general knowledge encoded in the global model while adapting it to local nuances.

- Feature Selection Guided by Benchmarks: Public datasets often contain features that are highly predictive for specific tasks. Even if direct transfer of a model isn’t feasible, insights from benchmarks can guide the selection of features for a local model, especially when local data is noisy or sparse. This helps prioritize data collection efforts and feature engineering focus.

- Time Series Pattern Borrowing: For time series forecasting, seasonal patterns, trend components, or lag structures observed in large, global datasets can be borrowed and customized for local needs. For instance, understanding typical daily energy consumption curves or seasonal agricultural cycles from broader data can inform the structure of a local forecasting model. This approach reduces the need to learn complex temporal dynamics from scratch with limited local data.
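
As one illustration of global-to-local adaptation, the sketch below recalibrates the output of a broad model with a linear correction fitted on a handful of local samples. The `global_yield_model` function is a hypothetical stand-in for a pre-trained model, and all numbers are invented for the example.

```python
import numpy as np

def global_yield_model(rainfall_mm):
    """Hypothetical stand-in for a broad, pre-trained weather-yield model."""
    return 1.0 + 0.004 * rainfall_mm  # tonnes per hectare, illustrative only

# A small set of local observations (hypothetical values).
local_rainfall = np.array([300.0, 450.0, 600.0, 750.0, 900.0])
local_yield = np.array([1.9, 2.4, 3.1, 3.5, 4.2])

# Fit local_yield ~ a * global_prediction + b with ordinary least squares.
global_pred = global_yield_model(local_rainfall)
a, b = np.polyfit(global_pred, local_yield, deg=1)

def local_yield_model(rainfall_mm):
    """Global model output with the local linear correction applied."""
    return a * global_yield_model(rainfall_mm) + b

# Mean absolute error on the local samples, before and after recalibration.
err_before = np.mean(np.abs(global_pred - local_yield))
err_after = np.mean(np.abs(local_yield_model(local_rainfall) - local_yield))
print(f"MAE before: {err_before:.3f}, after: {err_after:.3f}")
```

Only two parameters are learned locally, so even five samples are enough to fit them. The errors here are measured on the same samples used for fitting, so in practice a held-out set or leave-one-out check would give a more honest estimate.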

A Real-World Paradigm: Smarter Crop Choices in Low-Resource Farming

A compelling illustration of lightweight machine learning in action comes from a StrataScratch project focused on agricultural data from India. The objective was to recommend suitable crops based on actual environmental conditions, acknowledging the inherent messiness of real-world agricultural data, including fluctuating weather patterns and imperfect soil measurements.

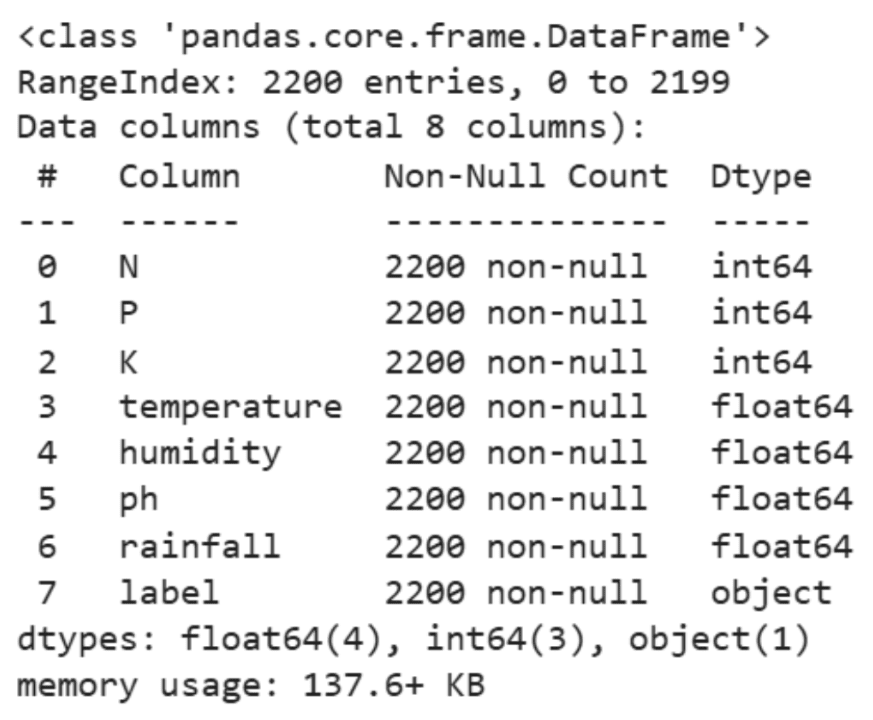

The dataset, modest by modern standards at approximately 2,200 rows, contained vital information such as soil nutrients (nitrogen, phosphorus, potassium), pH levels, and basic climate variables (temperature, humidity, rainfall). Instead of deploying complex deep learning architectures, the analysis adopted an intentionally simple, yet powerful, approach.

The workflow began with descriptive statistics to understand the basic distributions and ranges of the numerical features. This initial exploratory data analysis (EDA) step is crucial in low-resource settings, providing immediate insights into data quality, potential outliers, and the overall landscape of the variables. Following this, visual exploration using tools like Seaborn generated histograms for temperature, humidity, and rainfall. These visualizations immediately conveyed the distribution of key environmental factors, highlighting any skewness, multi-modality, or unusual patterns that might influence crop suitability. For instance, a bimodal distribution for rainfall might indicate distinct wet and dry seasons, a critical factor for crop selection.

The core analytical step involved running one-way ANOVA (Analysis of Variance) tests to determine whether humidity, rainfall, and temperature differed significantly across crop types. The F-statistic is the ratio of between-crop variance to within-crop variance, so a large value means the crops differ far more from one another than individual samples of the same crop do. A small p-value (typically < 0.05) indicates that the mean of at least one crop differs from the others for that factor; it does not by itself say which crops differ or in which direction. For that, the per-crop means (or a post-hoc comparison) have to be inspected alongside the test. For instance, a high F-statistic and low p-value for humidity would strongly suggest that humidity is a useful differentiator between crops in this dataset.
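
The ANOVA step can be sketched with pandas and SciPy as follows. The data below is made up for illustration and is not the project's dataset; the column names are assumptions as well.

```python
import pandas as pd
from scipy.stats import f_oneway

# Hypothetical humidity readings (%) per crop; NOT the project's data.
df = pd.DataFrame({
    "crop": ["rice"] * 5 + ["millet"] * 5 + ["maize"] * 5,
    "humidity": [82, 85, 80, 84, 83,
                 40, 45, 42, 38, 44,
                 65, 60, 63, 66, 62],
})

# One array of humidity values per crop type.
groups = [g["humidity"].to_numpy() for _, g in df.groupby("crop")]

# One-way ANOVA: do the group means differ more than chance would explain?
f_stat, p_value = f_oneway(*groups)

# The test says *that* groups differ; the means show *how* they differ.
group_means = df.groupby("crop")["humidity"].mean()

print(f"F = {f_stat:.1f}, p = {p_value:.2e}")
print(group_means.sort_values(ascending=False))
```

Reading the sorted group means next to the p-value is what turns the test result into a statement a farmer can act on.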

This project exemplifies the effectiveness of low-resource ML. The entire workflow was executed using computationally gentle tools like pandas for data manipulation, Seaborn for visualization, and SciPy for statistical tests. It ran seamlessly on a regular laptop, demonstrating that powerful insights do not necessitate expensive hardware or proprietary software. The most valuable aspect of this approach is its inherent interpretability. Farmers require clear, actionable guidance, not opaque predictions. Statements derived from the ANOVA results together with the per-crop averages, such as "rice performs better under high humidity" or "millet prefers drier conditions," translate statistical findings directly into practical decisions, empowering farmers to make more informed choices about crop rotation and planting schedules. This interpretability fosters trust and facilitates the adoption of data-driven recommendations in communities where technological literacy varies.

Broader Implications and the Future of Data Science in Resource-Constrained Settings

The principles outlined for building smart machine learning solutions in low-resource environments extend far beyond agriculture. They are applicable across sectors such as healthcare diagnostics in remote clinics, educational resource allocation in underserved schools, disaster response planning with limited real-time data, and microfinance risk assessment. The broader impact of these methodologies is profound. They democratize access to advanced analytical capabilities, enabling local communities and small organizations to address their unique challenges without reliance on external, often costly, high-tech solutions. This approach aligns with sustainable development goals, fostering self-reliance and innovation at the grassroots level.

For aspiring data scientists, mastering these skills is not just a pragmatic necessity; it’s a profound career advantage. The ability to extract value from messy data, build robust models on limited infrastructure, and communicate insights clearly is a rare and highly sought-after skill set. It cultivates an entrepreneurial mindset, emphasizing problem-solving over tool-wielding. It teaches data intuition (the ability to understand the underlying meaning and limitations of data) and the communication skills needed to bridge the gap between technical output and practical application. Furthermore, working in these contexts often brings ethical considerations to the forefront, demanding a focus on fairness, transparency, and the direct human impact of technological solutions. This is the kind of work that truly defines a great data scientist: one who can deliver tangible value where it is most needed, irrespective of resource constraints.

Conclusion

The challenge of building machine learning solutions in low-resource environments is not a limitation but an invitation for creativity, ingenuity, and passion. It necessitates a shift in perspective, moving away from the pursuit of maximal complexity towards the elegance of simplicity and efficiency. As explored in this article, lightweight models, intelligent feature engineering, a pragmatic approach to missing data, and strategic use of transfer learning are not merely workarounds but powerful, deliberate choices that lead to robust and interpretable solutions. The real triumph lies in finding the actionable signal within the noise and translating complex data into practical insights that genuinely improve lives and solve real-world problems for real people. The future of impactful machine learning will increasingly be forged not just in sophisticated labs, but in the diverse, often overlooked, corners of the world where innovation is driven by necessity.