The evolution of artificial intelligence has moved beyond static, query-response models to dynamic, agentic AI applications capable of executing multi-step workflows, engaging in extended interactions, and adapting their behavior over time. However, a critical, often overlooked component in these advanced systems is memory. Without a sophisticated memory architecture, even the most powerful large language models (LLMs) are fundamentally stateless, forced to restart from a blank slate with every interaction, leading to inefficiencies, repetitive actions, and a profound inability to personalize user experiences. This article delves into the systemic challenges and practical solutions for designing, implementing, and continuously evaluating memory systems that empower agentic AI to become more reliable, adaptive, and genuinely effective.

The Foundational Challenge: Bridging Statelessness with Persistent Intelligence

The core limitation of many contemporary AI agents stems from their inherent statelessness. Each query or interaction often begins without any recollection of prior sessions, user preferences, past attempts, or even recently failed operations. While this approach might suffice for simple, single-turn tasks, it imposes a severe ceiling on the capabilities of agents designed for complex, goal-oriented tasks or sustained user engagement. Industry analysts estimate that the lack of effective memory in agentic AI can lead to up to a 40% increase in computational costs due to redundant processing and a significant degradation in user satisfaction, particularly in personalized service scenarios.

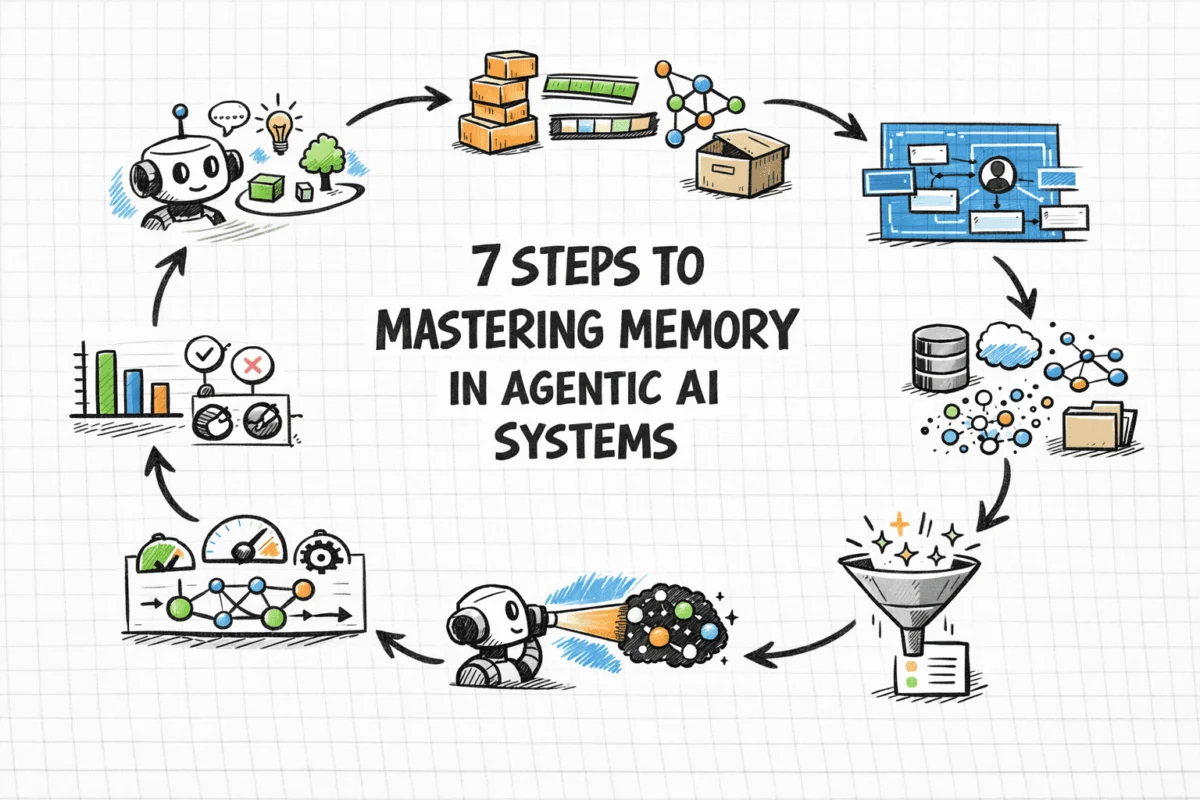

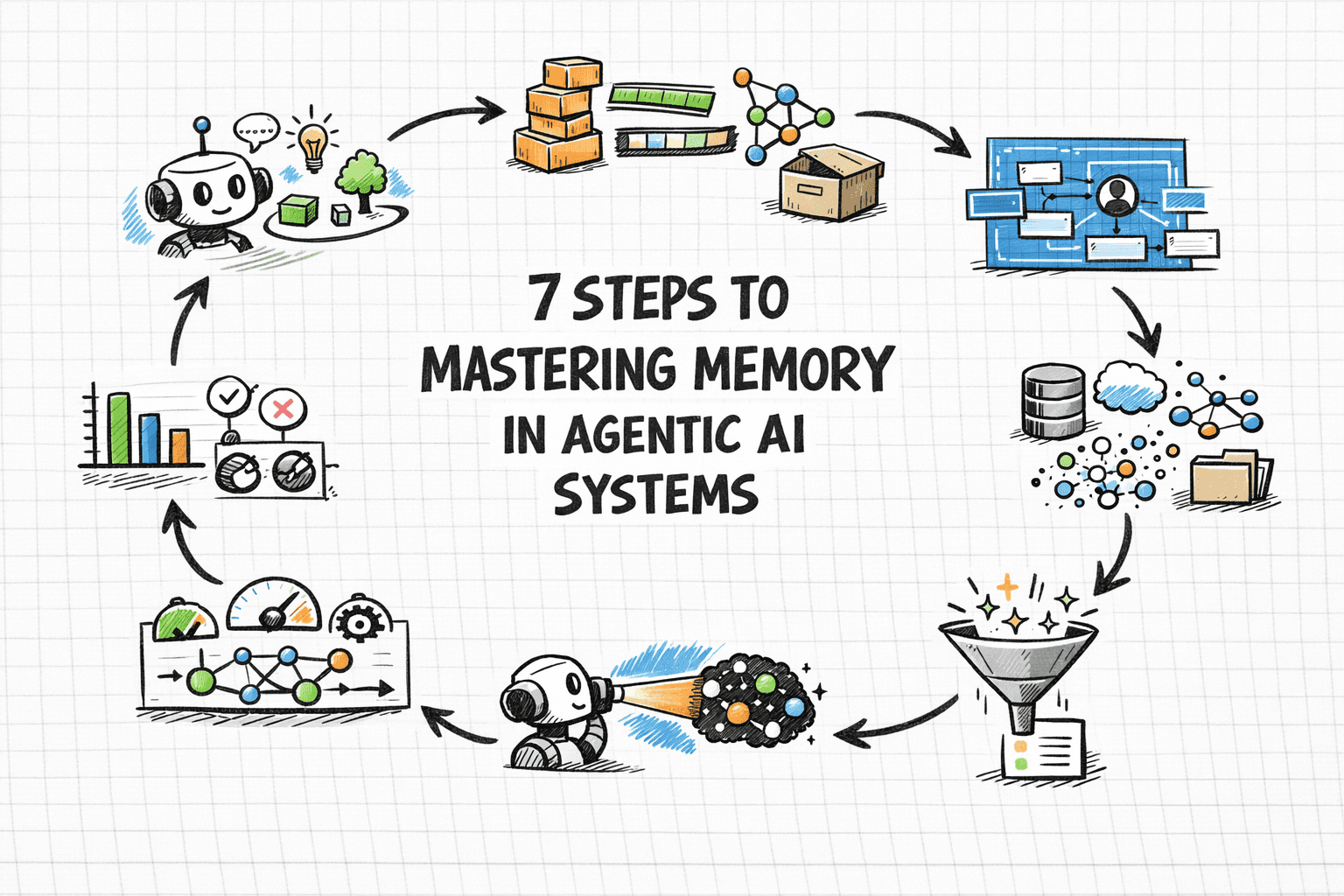

Memory is the bedrock upon which agents can accumulate context, personalize responses, avoid redundant work, and build upon previous outcomes. This capability is not monolithic; it encompasses various forms, from short-term conversational context to long-term learned preferences and sophisticated retrieval mechanisms. The challenge, therefore, lies not just in storing information but in intelligently managing what to store, where, when to retrieve it, and, crucially, what to forget. A structured, seven-step approach is essential for developing production-grade memory layers in agentic systems, focusing on architectural understanding, type taxonomy, design principles, context management, and continuous evaluation.

Memory as a Systemic Architectural Imperative

Many developers' first instinct is to assume that a larger context window will fix memory deficiencies. However, research and practical deployment experience consistently show that this approach is often counterproductive. Expanding context indiscriminately can lead to a phenomenon known as "context rot," where performance degrades under real-world workloads, retrieval becomes expensive, and operational costs escalate. The model’s attention budget is diluted by noise instead of being spent on pertinent information, which lowers reasoning quality.

Memory is fundamentally an architectural challenge, not merely a model parameter adjustment. It involves deliberate decisions about data storage, retrieval mechanisms, write paths, eviction policies, and consistency guarantees, much like any other production data system. As highlighted by IBM’s insights into AI agent memory, complex goal-oriented agents require memory as a core architectural component, integrated from the outset, rather than an afterthought. This necessitates a shift in perspective, moving beyond simple reflex agents to sophisticated systems that leverage persistent memory to achieve complex objectives. Developers must approach memory design with the same rigor applied to databases, considering indexing strategies, data lifecycle management, and performance optimization from the ground up.

A Taxonomy of AI Agent Memory Types

Drawing parallels from cognitive science, AI agent memory can be categorized into distinct types, each serving specific functions and mapping to particular architectural implementations:

- Short-term or Working Memory: This is analogous to a human’s immediate consciousness, representing everything the model can actively process in a single inference call. It includes the system prompt, current conversation history, tool outputs, and any documents retrieved for immediate use. Typically implemented as a rolling buffer or conversation history array, it provides fast, immediate access but is inherently ephemeral, clearing with the end of a session. It is sufficient for single-session tasks but cannot retain information across interactions.

- Episodic Memory: This type records specific past events, interactions, and their outcomes. For instance, an agent recalling a user’s deployment failure due to a missing environment variable last week is utilizing episodic memory. It is crucial for case-based reasoning, allowing agents to learn from past experiences and improve future decisions. Episodic memories are commonly stored as timestamped records in vector databases, retrieved via semantic or hybrid search, enabling agents to understand "what happened when."

- Semantic Memory: Semantic memory stores structured factual knowledge, including user preferences, domain-specific facts, entity relationships, and general world knowledge pertinent to the agent’s scope. A customer service agent remembering a user’s preference for concise answers or their industry (e.g., legal) relies on semantic memory. This is often implemented through incrementally updated entity profiles, combining relational storage for structured fields with vector storage for fuzzy, context-aware retrieval, enabling agents to understand "what is true."

- Procedural Memory: This encodes "how to do things": workflows, decision rules, and learned behavioral patterns. In AI agents, this manifests as system prompt instructions, few-shot examples, or agent-managed rule sets that evolve through experience. An example is a coding assistant consistently checking for dependency conflicts before suggesting library upgrades. Procedural memory often resides within the agent’s core logic or prompt engineering, guiding its actions based on learned procedures.

These memory types rarely operate in isolation; robust production agents typically integrate multiple layers to achieve comprehensive, adaptive intelligence.
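To make the layering concrete, here is a minimal sketch of the four memory types as plain Python data structures. The class and field names (`AgentMemory`, `Episode`, `working`, `facts`, `rules`) are illustrative assumptions, not taken from any framework, and a production system would back the persistent layers with real storage rather than in-process objects.

```python
from collections import deque
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Episode:
    """Episodic memory: one timestamped event and its outcome."""
    summary: str
    outcome: str
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


@dataclass
class AgentMemory:
    # Working memory: rolling buffer of recent turns, cleared at session end.
    working: deque = field(default_factory=lambda: deque(maxlen=4))
    # Episodic memory: "what happened when".
    episodes: list = field(default_factory=list)
    # Semantic memory: "what is true" about the user or domain.
    facts: dict = field(default_factory=dict)
    # Procedural memory: "how to do things", here as plain rule strings.
    rules: list = field(default_factory=list)

    def end_session(self) -> None:
        """Clear only the ephemeral layer; the other layers persist."""
        self.working.clear()


memory = AgentMemory()
for turn in ["hi", "deploy failed", "which env var?", "DATABASE_URL", "thanks"]:
    memory.working.append(turn)  # the oldest turn is evicted once maxlen is reached
memory.episodes.append(Episode("Deployment attempt", "failed: missing environment variable"))
memory.facts["answer_style"] = "concise"
memory.rules.append("Check dependency conflicts before suggesting library upgrades.")
memory.end_session()
```

After `end_session()`, the working buffer is empty while the episode, fact, and rule remain available for the next session.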

RAG vs. Agent Memory: Clarifying Distinct Roles

A persistent source of confusion in agentic system development is the conflation of Retrieval-Augmented Generation (RAG) with agent memory. While related, they address distinct problems, and their misapplication can lead to either over-engineered or information-blind agents.

RAG is fundamentally a read-only retrieval mechanism designed to ground a model in external, universal knowledge. It fetches relevant chunks from documentation, product catalogs, or policy documents at query time and injects them into the model’s context. RAG is stateless; each query is fresh, lacking any concept of the user’s identity or past interactions. It is ideal for "what does our refund policy state?" but unsuitable for "what did this specific customer tell us about their account last month?"

Agent Memory, conversely, is read-write and user-specific. It enables an agent to learn about individual users across sessions, recall prior attempts and failures, and adapt its behavior over time. The crucial distinction is that RAG treats relevance as a property of content, whereas memory treats relevance as a property of the user or session. Most production agents benefit immensely from both RAG and memory operating in parallel, each contributing distinct signals to enrich the final context window. For instance, a support agent might use RAG to retrieve general product troubleshooting guides and memory to recall the user’s specific past issues and preferences.
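The split between the two can be sketched in a few lines. This is a toy illustration under stated assumptions: the knowledge base contents, the keyword matching in `rag_retrieve`, and the helper names are all hypothetical, and a real system would use an embedding index and a persistent store.

```python
# Read-only shared knowledge (the RAG side). Contents are made up for illustration.
KNOWLEDGE_BASE = {
    "refund": "Refunds are available within 30 days of purchase.",
    "reset": "To reset the device, hold the power button for ten seconds.",
}

# Read-write, per-user store (the memory side).
user_memory: dict[str, list[str]] = {}


def rag_retrieve(query: str) -> list[str]:
    """Return documents whose key appears in the query; identical for every user."""
    return [doc for key, doc in KNOWLEDGE_BASE.items() if key in query.lower()]


def remember(user_id: str, note: str) -> None:
    """Write path: only agent memory has one."""
    user_memory.setdefault(user_id, []).append(note)


def recall(user_id: str) -> list[str]:
    """Relevance here depends on who is asking, not on the query text."""
    return list(user_memory.get(user_id, []))


def build_context(user_id: str, query: str) -> str:
    """Combine both signals into one prompt section."""
    lines = ["# Knowledge"] + rag_retrieve(query)
    lines += ["# User memory"] + recall(user_id)
    return "\n".join(lines)


remember("alice", "Prefers concise answers.")
remember("alice", "Reported a failed reset last month.")
context_alice = build_context("alice", "How do I reset my device?")
context_bob = build_context("bob", "How do I reset my device?")
```

Both users receive the same troubleshooting text from the knowledge base, but only Alice's context includes her stored preferences and history.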

Designing Memory Architecture: Four Core Decisions

Effective memory architecture requires upfront design, as decisions concerning storage, retrieval, write paths, and eviction profoundly impact every other system component. Before any code is written, four critical questions must be addressed for each memory type:

- What to Store? Storing raw transcripts, while tempting, often leads to noisy retrieval and inefficient resource use. Instead, the design should focus on distilling interactions into concise, structured memory objects: key facts, explicit user preferences, and outcomes of past actions. This extraction and summarization step, often involving an LLM, is where significant design effort is concentrated, ensuring only high-signal information is persisted.

- How to Store It? The choice of storage representation is crucial and depends on the memory type:

  - Vector Databases: Ideal for episodic and unstructured semantic memories, allowing for semantic similarity search. Examples include Pinecone, Weaviate, Chroma.

  - Relational Databases (e.g., PostgreSQL, MySQL): Best for structured semantic memory, user profiles, and procedural rules that require strict schema and querying capabilities.

  - Key-Value Stores (e.g., Redis, DynamoDB): Suitable for fast lookups of structured semantic facts or simple session states.

  - Graph Databases (e.g., Neo4j): Excellent for representing complex relationships between entities, events, and concepts, enhancing contextual retrieval.

- How to Retrieve It? Retrieval strategies must align with memory types. Semantic vector search excels for episodic and unstructured memories. Structured key lookups are more efficient for profiles and procedural rules. Hybrid retrieval, which combines embedding similarity with metadata filters (e.g., `semantic_query AND date_filter` for "what did this user say about billing in the last 30 days?"), handles the complex, real-world queries most agents encounter.

- When (and How) to Forget What You’ve Stored? Memory without forgetting is as detrimental as no memory at all, leading to information overload and degraded performance. Memory entries should include timestamps, source provenance, and explicit expiration conditions. Implementing decay strategies (e.g., weighting recent memories higher in retrieval scores) or utilizing native TTL (Time-To-Live) policies in storage layers ensures older, less relevant data does not pollute retrieval results as the memory store grows. This proactive approach to data hygiene is essential for maintaining retrieval quality and managing storage costs.
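The retrieval and forgetting decisions can be combined in one scoring function. The sketch below is a simplified illustration, not a reference implementation: `MemoryRecord` and its fields are hypothetical, word-count cosine similarity stands in for embedding similarity, and the 14-day half-life and 30-day window are arbitrary assumptions.

```python
import math
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class MemoryRecord:
    text: str
    user_id: str
    topic: str            # metadata used for filtering
    created_at: datetime  # timestamp
    source: str           # provenance, e.g. "chat" or "ticket"
    ttl_days: int         # explicit expiration condition


def similarity(a: str, b: str) -> float:
    """Cosine similarity over word counts; a stand-in for embedding similarity."""
    va, vb = Counter(a.lower().split()), Counter(b.lower().split())
    dot = sum(va[w] * vb[w] for w in va)
    norm = math.sqrt(sum(c * c for c in va.values())) * math.sqrt(sum(c * c for c in vb.values()))
    return dot / norm if norm else 0.0


def retrieve(records, query, user_id, topic, now, window_days=30, half_life_days=14.0, k=3):
    """Hybrid retrieval: metadata filters first, then similarity weighted by recency."""
    scored = []
    for r in records:
        age_days = (now - r.created_at).total_seconds() / 86400
        if r.user_id != user_id or r.topic != topic:
            continue  # metadata filter
        if age_days > window_days or age_days > r.ttl_days:
            continue  # outside the date window, or expired by TTL
        decay = 0.5 ** (age_days / half_life_days)  # exponential recency decay
        scored.append((similarity(query, r.text) * decay, r))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [r for _, r in scored[:k]]


now = datetime(2025, 6, 30)
records = [
    MemoryRecord("billing charge was duplicated", "u1", "billing", now - timedelta(days=2), "chat", 90),
    MemoryRecord("billing charge was duplicated", "u1", "billing", now - timedelta(days=20), "chat", 90),
    MemoryRecord("billing address changed", "u1", "billing", now - timedelta(days=45), "chat", 90),
    MemoryRecord("billing charge refund promised", "u1", "billing", now - timedelta(days=10), "chat", 7),
    MemoryRecord("billing charge dispute", "u2", "billing", now - timedelta(days=1), "chat", 90),
]
results = retrieve(records, "duplicated billing charge", "u1", "billing", now)
```

Here the 45-day-old record falls outside the window, the record with a 7-day TTL has expired, and the other user's record is filtered out. The two remaining records have identical text, so the recency decay alone ranks the newer one first.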

The Context Window: A Constrained and Precious Resource

Even with a robust external memory layer, all information flows through the context window, an inherently finite resource. Stuffing it indiscriminately with retrieved memories often degrades rather than enhances reasoning. Production experience highlights two prevalent failure modes:

- Context Poisoning: Incorrect, stale, or misleading information entering the context can compound silently across reasoning steps, leading to cascading errors in agent behavior.

- Context Distraction: Overloading the model with too much information can cause it to default to repeating historical patterns rather than freshly reasoning about the current problem, hindering its ability to adapt and innovate.

Managing this scarcity requires deliberate engineering. It’s about deciding not just what to retrieve, but what to exclude, compress, and prioritize. Key principles include:

- Prioritize Recency and Relevance: Give higher weight to recent interactions and memories directly relevant to the current turn.

- Summarize and Compress: Instead of injecting full transcripts, summarize past conversations or long memories into concise summaries before adding them to the context.

- Filter Noise Aggressively: Implement strict filtering mechanisms to remove irrelevant or redundant information from retrieved memory chunks.

- Adaptive Context Length: Dynamically adjust the amount of memory injected based on the complexity of the current task or available token budget.
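These principles can be sketched as a small packing routine that selects memories under a token budget. The function names, the `min_score` threshold, and the word-count token estimate are assumptions made for illustration; a real system would use the model's tokenizer and its own relevance scores.

```python
def estimate_tokens(text: str) -> int:
    """Rough token estimate by whitespace word count; a real tokenizer would differ."""
    return len(text.split())


def pack_context(candidates, budget, min_score=0.3):
    """Pick memories for the prompt.

    candidates: list of (score, text) pairs, where score already blends
    relevance and recency. Returns (chosen_texts, tokens_used).
    """
    chosen, used, seen = [], 0, set()
    for score, text in sorted(candidates, key=lambda c: c[0], reverse=True):
        if score < min_score or text in seen:
            continue  # filter noise and exact duplicates
        cost = estimate_tokens(text)
        if used + cost > budget:
            continue  # does not fit; a smaller, lower-ranked item may still fit
        chosen.append(text)
        seen.add(text)
        used += cost
    return chosen, used


candidates = [
    (0.9, "User prefers concise answers"),
    (0.8, "Last deployment failed because DATABASE_URL was missing"),
    (0.8, "Last deployment failed because DATABASE_URL was missing"),
    (0.5, "User works in the legal industry"),
    (0.1, "User said hello"),
]
selected, tokens_used = pack_context(candidates, budget=12)
```

With a budget of 12, the two highest-scoring memories are kept (11 estimated tokens), the duplicate and the low-score greeting are dropped, and the third memory is skipped because it would exceed the budget. Raising `budget` for harder tasks is one simple way to implement adaptive context length.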

The MemGPT research, now productized as Letta, offers an influential mental model: treating the context window as "RAM" and external storage as "disk." This gives the agent explicit mechanisms to page information in and out on demand, transforming memory management from a static pipeline decision into a dynamic, agent-controlled operation.
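The RAM-and-disk analogy can be illustrated with a toy class. This is only a sketch of the idea and does not reflect the MemGPT or Letta API; the `PagedMemory` class, its methods, and the keyword-based lookup are hypothetical.

```python
from collections import deque


class PagedMemory:
    """Bounded main context ("RAM") backed by an unbounded archive ("disk")."""

    def __init__(self, max_items: int):
        self.max_items = max_items
        self.main_context = deque()
        self.archive = []

    def add(self, item: str) -> None:
        self.main_context.append(item)
        while len(self.main_context) > self.max_items:
            # Page out the oldest item instead of discarding it.
            self.archive.append(self.main_context.popleft())

    def page_in(self, keyword: str) -> list:
        """Move archived items containing the keyword back into the main context."""
        hits = [item for item in self.archive if keyword.lower() in item.lower()]
        self.archive = [item for item in self.archive if item not in hits]
        for item in hits:
            self.add(item)  # may page out older items to make room
        return hits


mem = PagedMemory(max_items=2)
for note in ["API key rotated on Monday", "User asked about pricing", "User wants weekly reports"]:
    mem.add(note)
recalled = mem.page_in("api key")
```

After the three notes are added, the oldest one has been paged out to the archive. Calling `page_in("api key")` brings it back into the main context and pushes the pricing note out to the archive in its place.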

Memory-Aware Retrieval Within the Agent Loop

Automatic retrieval before every agent turn is expensive and often suboptimal. A more effective pattern is to give the agent retrieval as a tool: an explicit function it can invoke when it recognizes a need for past context. This mirrors human cognition: we don’t replay every memory before every action, but we recall specific information when prompted by a task. Agent-controlled retrieval yields more targeted queries and activates at the most appropriate moment in the reasoning chain. In ReAct-style frameworks (Thought → Action → Observation), memory lookup fits naturally as an available tool, allowing the agent to evaluate the relevance of retrieval results before incorporating them, which tends to improve output quality.
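A stripped-down version of this loop might look like the following. Everything here is an assumption for illustration: `fake_model` is a scripted stand-in for an LLM policy, and `recall_memory`, `TOOLS`, and the stored memories are hypothetical names and data.

```python
MEMORY_STORE = {
    "deploy": "Last week's deployment failed due to a missing environment variable.",
    "style": "User prefers concise answers.",
}


def recall_memory(topic: str) -> str:
    """Tool: look up a stored memory by topic key."""
    return MEMORY_STORE.get(topic, "No memory found.")


TOOLS = {"recall_memory": recall_memory}


def fake_model(question: str, observations: list) -> dict:
    """Stand-in for an LLM policy: requests memory only for deployment questions."""
    if "deploy" in question.lower() and not observations:
        return {"action": "recall_memory", "input": "deploy"}
    context = " ".join(observations)
    return {"action": "finish", "input": f"Answer based on: {context or 'no memory'}"}


def run_agent(question: str, max_steps: int = 3):
    """Minimal Thought -> Action -> Observation loop with memory as an optional tool."""
    observations, trace = [], []
    for _ in range(max_steps):
        step = fake_model(question, observations)  # Thought -> Action
        trace.append(step["action"])
        if step["action"] == "finish":
            return step["input"], trace
        observations.append(TOOLS[step["action"]](step["input"]))  # Observation
    return "Step limit reached.", trace


answer_with_memory, trace_with_memory = run_agent("Why did my deploy fail?")
answer_without, trace_without = run_agent("What is 2 + 2?")
```

The deployment question triggers one memory lookup before the agent answers, while the arithmetic question finishes immediately without touching the memory store.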

For multi-agent systems, shared memory introduces additional complexity regarding consistency and concurrency. Agents might read stale data written by a peer or inadvertently overwrite each other’s episodic records. Designing shared memory requires explicit ownership, versioning, and concurrency control mechanisms to prevent data integrity issues.
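One common way to combine ownership, versioning, and concurrency control is optimistic locking with compare-and-set writes. The sketch below is an in-process illustration under that assumption; the `SharedMemory` class and `VersionConflict` exception are hypothetical, and a distributed deployment would rely on the storage layer's own conditional-write support.

```python
import threading


class VersionConflict(Exception):
    """Raised when a writer's view of a key is stale."""


class SharedMemory:
    """Key-value store with per-key versions, owners, and compare-and-set writes."""

    def __init__(self):
        self._lock = threading.Lock()
        self._data = {}  # key -> (value, version, owner)

    def read(self, key):
        with self._lock:
            return self._data.get(key, (None, 0, None))

    def write(self, key, value, expected_version, agent_id):
        with self._lock:
            _, version, owner = self._data.get(key, (None, 0, None))
            if owner is not None and owner != agent_id:
                raise PermissionError(f"{key} is owned by {owner}")
            if version != expected_version:
                raise VersionConflict(f"{key} is at version {version}, not {expected_version}")
            self._data[key] = (value, version + 1, agent_id)
            return version + 1


store = SharedMemory()
store.write("plan", "draft", expected_version=0, agent_id="planner")
_, seen_version, _ = store.read("plan")
store.write("plan", "revised", expected_version=seen_version, agent_id="planner")

try:
    # Reusing the old version number simulates a writer holding stale data.
    store.write("plan", "stale update", expected_version=seen_version, agent_id="planner")
    stale_rejected = False
except VersionConflict:
    stale_rejected = True

try:
    store.write("plan", "takeover", expected_version=2, agent_id="executor")
    foreign_rejected = False
except PermissionError:
    foreign_rejected = True
```

The stale write is rejected rather than silently overwriting the revised plan, and a peer agent cannot modify a record it does not own.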

A practical starting point involves incremental complexity: begin with a conversation buffer and a basic vector store. Introduce working memory (explicit reasoning scratchpads) when multi-step planning becomes necessary. Only introduce graph-based long-term memory when relationships between memories become a bottleneck for retrieval quality. Premature complexity in memory architecture is a common pitfall that can significantly slow down development.

Evaluating and Continuously Improving the Memory Layer

Evaluating an agentic system’s memory component is challenging because failures are often subtle and invisible. An agent might produce a plausible-sounding answer that is, in reality, grounded in stale, irrelevant, or missing memory. Without deliberate evaluation, these failures remain hidden until a user experiences a degraded interaction.

To counter this, memory-specific metrics are crucial:

- Retrieval Accuracy (Precision): Of the memories the system retrieves for a given query, what fraction are actually relevant?

- Recall Rate: What percentage of truly relevant memories are successfully retrieved?

- Retrieval Latency: How quickly can memories be retrieved and injected into context?

- User Satisfaction Scores: Specifically tied to personalization and context retention over time.

AWS’s benchmarking efforts with AgentCore Memory, evaluated against datasets like LongMemEval and LoCoMo, set a high standard for measuring retention across multi-session conversations. Building retrieval unit tests—a curated set of queries paired with expected memories—is essential to isolate memory layer problems from reasoning errors. This allows teams to quickly diagnose whether degraded agent behavior stems from retrieval, context injection, or model reasoning.
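A retrieval unit test suite can start very small. The following sketch computes precision and recall at k for a curated set of queries; `toy_retriever`, the memory IDs, and the test cases are hypothetical placeholders for a real retriever and a hand-labeled dataset.

```python
def precision_recall_at_k(retrieved_ids, relevant_ids, k):
    """Precision and recall over the top-k retrieved memory IDs."""
    top_k = retrieved_ids[:k]
    hits = len(set(top_k) & set(relevant_ids))
    precision = hits / len(top_k) if top_k else 0.0
    recall = hits / len(relevant_ids) if relevant_ids else 0.0
    return precision, recall


def toy_retriever(query):
    """Hypothetical retriever under test: maps a query to ranked memory IDs."""
    index = {
        "billing complaint": ["m1", "m7", "m3"],
        "preferred language": ["m4", "m2"],
    }
    return index.get(query, [])


# Curated queries paired with the memories a correct retriever should return.
TEST_CASES = [
    {"query": "billing complaint", "expected": {"m1", "m3"}},
    {"query": "preferred language", "expected": {"m2"}},
]


def run_retrieval_tests(retriever, cases, k=2, min_recall=1.0):
    """Return (query, recall) for every case whose recall@k falls below the threshold."""
    failures = []
    for case in cases:
        _, recall = precision_recall_at_k(retriever(case["query"]), case["expected"], k)
        if recall < min_recall:
            failures.append((case["query"], recall))
    return failures


failures = run_retrieval_tests(toy_retriever, TEST_CASES)
```

In this example the billing query fails at k=2 because one expected memory is ranked third, which points to a ranking problem in the memory layer rather than a reasoning error in the model.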

Continuous monitoring of memory growth is also vital. Production memory systems accumulate data, and retrieval quality can degrade as stores grow due to increased noise. Monitoring retrieval latency, index size, and result diversity over time, coupled with periodic memory audits to prune outdated, duplicate, or low-quality entries, is critical.
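A periodic audit can be as simple as one pass over the store. The sketch below assumes each entry is a dict with `text`, `created_at`, and a precomputed `quality` score; those field names and the thresholds are illustrative assumptions, and duplicate detection here only catches texts that match after case and whitespace normalization.

```python
from datetime import datetime, timedelta


def audit(entries, now, max_age_days=180, min_quality=0.2):
    """Prune outdated, low-quality, and duplicate entries. Returns (kept, report)."""
    kept, seen = [], set()
    report = {"expired": 0, "duplicate": 0, "low_quality": 0}
    # Visit newest first so the most recent copy of a duplicate survives.
    for e in sorted(entries, key=lambda e: e["created_at"], reverse=True):
        key = " ".join(e["text"].lower().split())  # normalize case and whitespace
        if now - e["created_at"] > timedelta(days=max_age_days):
            report["expired"] += 1
        elif e["quality"] < min_quality:
            report["low_quality"] += 1
        elif key in seen:
            report["duplicate"] += 1
        else:
            seen.add(key)
            kept.append(e)
    return kept, report


now = datetime(2025, 1, 1)
entries = [
    {"text": "Prefers email contact", "created_at": now - timedelta(days=10), "quality": 0.9},
    {"text": "prefers  email contact", "created_at": now - timedelta(days=40), "quality": 0.9},
    {"text": "Old shipping address", "created_at": now - timedelta(days=400), "quality": 0.9},
    {"text": "uh ok", "created_at": now - timedelta(days=5), "quality": 0.1},
]
kept, report = audit(entries, now)
```

The report counts give a cheap signal to track over time: a rising share of duplicates or expired entries suggests the write path or TTL policy needs attention.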

Finally, leveraging production corrections as training signals provides invaluable feedback. When users correct an agent, it’s a direct label indicating a memory issue: the agent retrieved the wrong memory, lacked relevant memory, or failed to utilize the correct memory. Closing this feedback loop, treating user corrections as systematic input for retrieval quality improvement, is one of the most powerful mechanisms for enhancing agent performance.

The ecosystem of purpose-built frameworks simplifies this complex endeavor. Frameworks like LangChain, LlamaIndex, Mem0, SuperAGI, AgentOps, and MemGPT/Letta offer tools and abstractions for managing various memory types, retrieval strategies, and integration within agentic workflows. Starting with these frameworks can significantly accelerate development compared to building from scratch.

Conclusion: The Enduring Importance of Intentional Memory Design

Memory in agentic systems is not a one-time setup; it is an ongoing design, implementation, and maintenance challenge. While tooling has advanced significantly, offering sophisticated vector databases and hybrid retrieval pipelines, the core decisions remain paramount: what information to store, what to ignore, how to retrieve it efficiently, and how to utilize it without overwhelming the agent’s context window. Good memory design hinges on intentionality regarding what is written, what is removed, and how it is integrated into the agent’s reasoning loop. Agents that master memory will inherently perform better over time, offering unparalleled personalization, efficiency, and reliability, marking a significant step forward in the journey towards truly intelligent and adaptive AI applications.