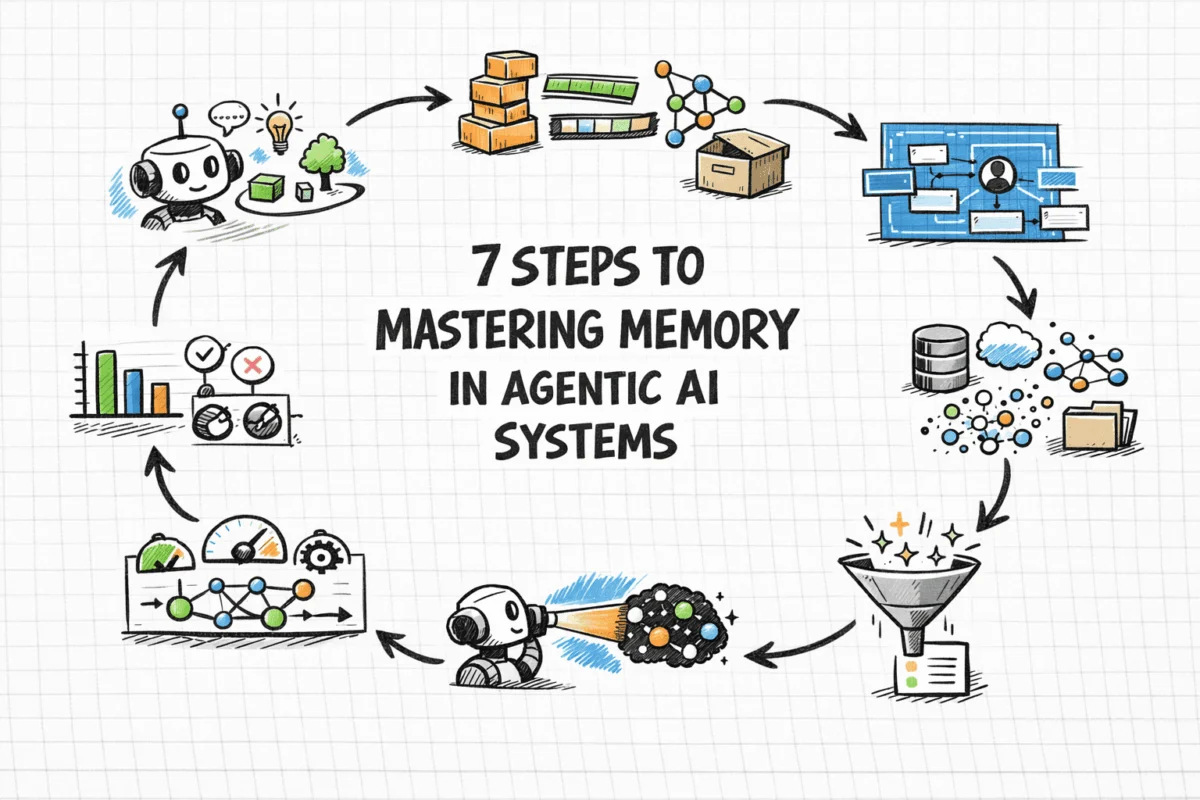

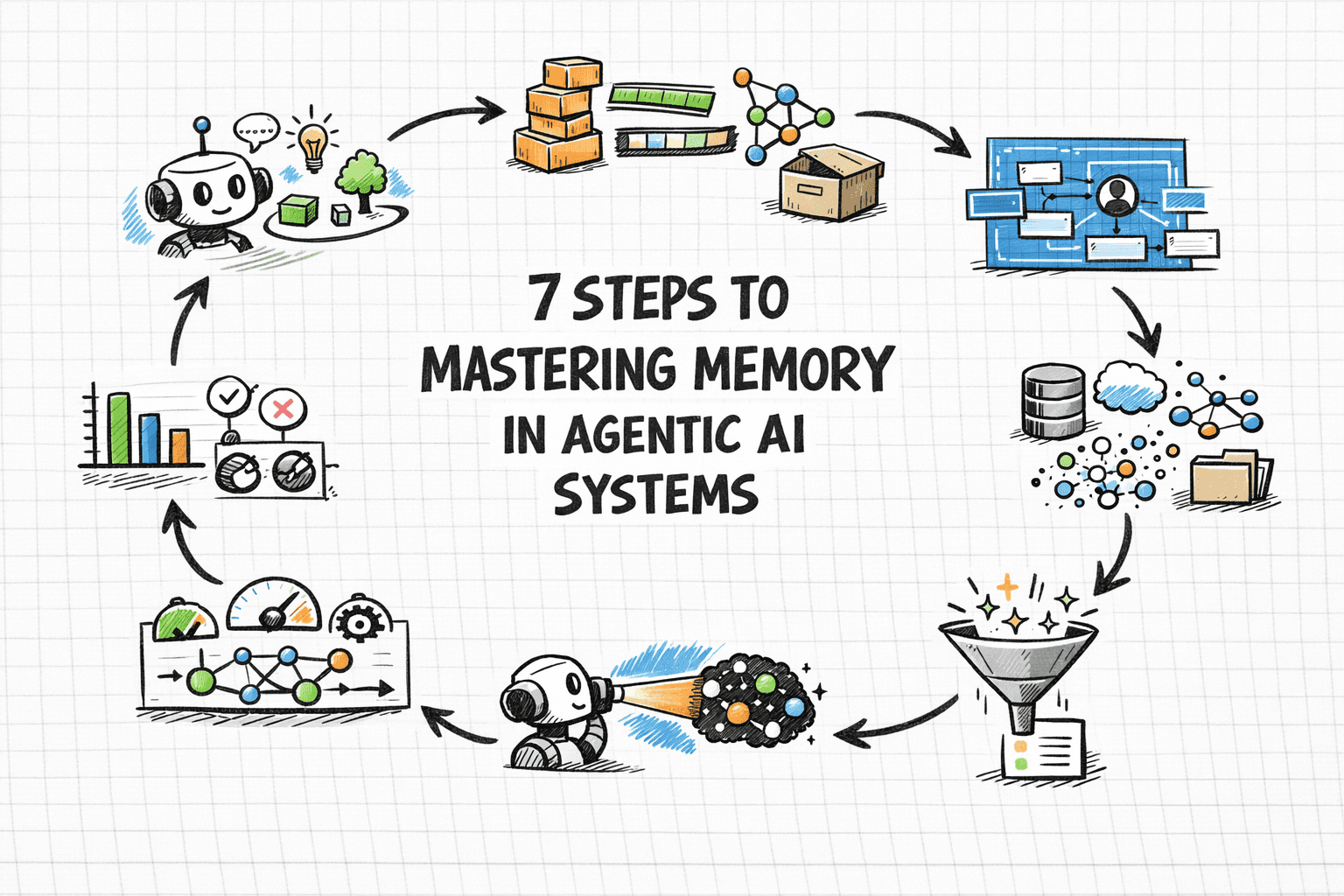

The rapid evolution of artificial intelligence, particularly the emergence of agentic AI applications, has brought to the forefront a critical challenge: the need for sophisticated memory systems. Without the capacity to recall past interactions, learn user preferences, or build upon previous outcomes, even the most advanced AI agents are severely constrained, starting each engagement as if for the first time. This inherent statelessness limits their utility, personalization capabilities, and overall effectiveness, particularly in complex, multi-turn, or long-running workflows. This article explores the comprehensive strategies required to design, implement, and continuously evaluate memory systems that empower agentic AI to become more reliable, adaptive, and genuinely intelligent over time.

The Imperative of Persistent Memory: Beyond Stateless AI

The initial wave of AI development often focused on single-turn interactions or tasks where immediate context was sufficient. However, as AI systems transition into more autonomous, goal-oriented "agents" capable of coordinating multi-step processes or serving users repeatedly, the absence of memory becomes a significant bottleneck. A system that cannot remember a user’s previous query, a failed attempt at a task an hour ago, or a long-standing preference is inherently inefficient and frustrating.

This challenge has led to a re-evaluation of how AI systems manage information. Early approaches often attempted to simply expand the "context window" of large language models (LLMs), feeding them more and more information in a single prompt. However, research and practical experience have consistently demonstrated the limitations of this method. The resulting effect, sometimes termed "context rot," is that indiscriminately enlarging the context degrades reasoning performance, increases computational costs, and leads models to spend their attention budget on noise rather than pertinent information. For instance, studies by organizations like Chroma Research have highlighted how excessive, undifferentiated context can reduce accuracy and raise inference costs, sometimes by as much as 30-50% for complex queries as context length scales.

Consequently, memory in agentic AI is not merely a feature but a fundamental architectural problem. It involves deliberate decisions about what information to store, where to store it, when to retrieve it, and, crucially, what to forget. Unlike simple reflex agents, which operate on immediate inputs, goal-oriented agents demand memory as a core component, deeply integrated into their design, not an afterthought. This necessitates approaching memory design with the same rigor applied to any production data system, considering aspects like write paths, read paths, indexing strategies, eviction policies, and consistency guarantees before writing a single line of agent code. Leading AI developers and researchers, including those at IBM and MongoDB, consistently emphasize this architectural shift, recognizing memory as essential for building truly capable and persistent AI agents.

Deconstructing AI Memory: A Taxonomy for Agentic Systems

To effectively design memory into AI agents, it’s crucial to understand the distinct roles memory plays. Drawing parallels from cognitive science, four primary types of memory can be identified, each mapping to specific architectural implementations:

- Short-term or Working Memory: This is analogous to a human’s immediate conscious thought. For AI agents, it encompasses the context window of an LLM – the system prompt, current conversation history, tool outputs, and any documents actively retrieved for the immediate turn. It’s fast, immediate, and crucial for coherent, real-time reasoning, much like RAM in a computer. Implementations typically involve a rolling buffer or a conversation history array, sufficient for single-session tasks but inherently transient. For example, a chatbot remembering the user’s last three sentences to maintain conversational flow uses working memory. Industry data suggests that a well-managed working memory can improve immediate task completion rates by up to 15% in complex dialogues compared to purely stateless interactions.

- Episodic Memory: This records specific past events, interactions, and their outcomes. It allows an agent to recall a particular instance, such as "the user’s cloud deployment failed last Tuesday due to a specific configuration error." Episodic memory is vital for case-based reasoning, enabling agents to learn from past successes and failures to inform future decisions. Architecturally, it is often stored as timestamped records within a vector database, allowing for retrieval based on semantic similarity or hybrid searches combining semantic and metadata filters. The ability to retrieve relevant episodes can reduce repeated errors by as much as 20% in diagnostic AI agents, according to internal benchmarks from enterprise AI solution providers.

- Semantic Memory: This type stores structured factual knowledge, including user preferences, domain-specific facts, entity relationships, and general world knowledge relevant to the agent’s operational scope. For instance, a customer service agent remembering that a specific user prefers concise answers or operates within the financial services industry draws upon semantic memory. This is typically implemented using entity profiles that are incrementally updated over time, often combining relational databases for structured fields with vector storage for fuzzy, semantic retrieval. Enhancing semantic memory through user profiles can lead to a 25% increase in user satisfaction and personalization, as reported by e-commerce AI platforms.

- Procedural Memory: This encodes "how-to" knowledge – workflows, decision rules, and learned behavioral patterns. In AI agents, this manifests as system prompt instructions, few-shot examples, or agent-managed rule sets that adapt through experience. A coding assistant that consistently checks for dependency conflicts before suggesting library upgrades demonstrates procedural memory. While often implicitly encoded in model weights, explicit procedural memory can be managed through dynamic rule engines or evolving prompt structures. The integration of robust procedural memory can decrease agent error rates in complex task execution by 10-18% by standardizing best practices.

These memory types rarely operate in isolation. Effective production agents integrate multiple layers, allowing them to draw on immediate context, specific past experiences, factual knowledge, and learned procedures to achieve their goals.
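
As a rough illustration of how these layers might sit side by side, the sketch below models working memory as a rolling buffer and the other three types as simple in-process containers. The class and field names (`AgentMemory`, `Episode`, `profile`, `rules`) are hypothetical, and a production system would back the long-term layers with real stores rather than Python lists and dicts.

```python
from collections import deque
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Episode:
    """One timestamped past event and its outcome (episodic memory)."""
    summary: str
    outcome: str
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


@dataclass
class AgentMemory:
    """Hypothetical container holding the four memory layers together."""
    max_turns: int = 3
    working: deque = field(init=False)                      # short-term: rolling buffer
    episodes: list[Episode] = field(default_factory=list)   # episodic
    profile: dict[str, str] = field(default_factory=dict)   # semantic
    rules: list[str] = field(default_factory=list)          # procedural

    def __post_init__(self) -> None:
        # deque(maxlen=...) drops the oldest turn once the buffer is full.
        self.working = deque(maxlen=self.max_turns)

    def add_turn(self, role: str, text: str) -> None:
        self.working.append((role, text))


memory = AgentMemory(max_turns=3)
for i in range(5):
    memory.add_turn("user", f"message {i}")

memory.episodes.append(Episode("cloud deployment", "failed: configuration error"))
memory.profile["answer_style"] = "concise"
memory.rules.append("Check for dependency conflicts before suggesting upgrades.")

print(list(memory.working))  # only the three most recent turns remain
```

The buffer is transient by construction, which is the point: anything that must survive the session has to be written deliberately to one of the other layers.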

Distinguishing RAG from True Agent Memory: A Critical Clarification

One of the most persistent sources of confusion for developers in the agentic AI space is the conflation of Retrieval-Augmented Generation (RAG) with true agent memory. While both involve retrieving information to inform an LLM, they address related but fundamentally distinct problems. Misapplying one for the other often leads to over-engineered systems or agents blind to crucial information.

RAG is primarily a read-only retrieval mechanism designed to ground a model in external, universal knowledge. This typically includes a company’s documentation, product catalogs, legal policies, or general knowledge bases. At query time, RAG finds relevant chunks of this information and injects them into the LLM’s context. Crucially, RAG is stateless; each query is treated independently, and the system has no inherent concept of the individual user, their past interactions, or their preferences. It is the appropriate tool for questions like "What is our company’s refund policy?" but entirely unsuitable for "What did this specific customer tell us about their account last month?" Industry benchmarks indicate that RAG can reduce LLM hallucinations by 40-60% when accessing factual, static knowledge.

Agent Memory, conversely, is read-write and user-specific. It empowers an agent to learn about individual users across sessions, recall what actions were attempted and their outcomes, and adapt its behavior over time based on accumulated experience. The core distinction lies in the concept of relevance: RAG treats relevance as an inherent property of the content itself, while agent memory treats relevance as a property tied to the specific user, their history, and the ongoing interaction. For instance, a personalized shopping agent would use RAG to access product descriptions (universal knowledge) but rely on agent memory to recall a user’s past purchases and size preferences (user-specific, read-write context).

Most sophisticated production agents benefit from both RAG and agent memory running in parallel, each contributing different, yet complementary, signals to the final context window. This hybrid approach ensures agents are both factually grounded in universal knowledge and deeply personalized to individual user needs and histories. According to a recent survey of AI practitioners, over 70% of successful agentic AI deployments leverage both RAG for general knowledge and dedicated memory systems for personalized context, underscoring the necessity of this distinction.
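
The distinction can be made concrete with a small sketch: a shared, read-only lookup stands in for RAG, and a per-user, read-write store stands in for agent memory, with both feeding one context. Everything here is illustrative: the knowledge-base entries are made-up sample data, keyword matching stands in for vector search, and `rag_lookup`, `UserMemory`, and `build_context` are hypothetical names.

```python
# Read-only, shared knowledge: identical for every user (the RAG side).
# These entries are invented sample data, not real policies.
KNOWLEDGE_BASE = {
    "refund policy": "Refunds are available within 30 days of purchase.",
    "shipping": "Standard shipping takes 3-5 business days.",
}


def rag_lookup(query: str) -> list[str]:
    """Stateless retrieval: a naive keyword match stands in for vector search."""
    q = query.lower()
    return [text for topic, text in KNOWLEDGE_BASE.items() if topic in q]


class UserMemory:
    """Read-write, per-user store (the agent-memory side)."""

    def __init__(self) -> None:
        self._facts: dict[str, list[str]] = {}

    def remember(self, user_id: str, fact: str) -> None:
        self._facts.setdefault(user_id, []).append(fact)

    def recall(self, user_id: str) -> list[str]:
        return list(self._facts.get(user_id, []))


def build_context(query: str, user_id: str, memory: UserMemory) -> str:
    """Merge both signals into one block of context for the model."""
    lines = ["# Knowledge", *rag_lookup(query)]
    lines += ["# About this user", *memory.recall(user_id)]
    return "\n".join(lines)


memory = UserMemory()
memory.remember("alice", "Prefers size M.")

alice_context = build_context("What is the refund policy?", "alice", memory)
bob_context = build_context("What is the refund policy?", "bob", memory)
print(alice_context)
```

Both users receive the same policy text, but only Alice's context carries her size preference, which is the sense in which relevance depends on the user rather than on the content alone.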

Architectural Blueprints: Key Decisions for Robust Memory Design

Effective memory architecture is not incidental; it must be designed upfront, as the choices made regarding storage, retrieval, write paths, and eviction policies profoundly impact the entire agentic system. Before commencing development, four fundamental questions must be thoroughly addressed for each type of memory:

- What to Store? The temptation to store raw transcripts of every interaction is strong but often leads to noisy and inefficient retrieval. Instead, the focus should be on distilling interactions into concise, structured memory objects. This involves extracting key facts, explicit user preferences, the outcomes of past actions, and salient observations. For example, instead of saving a full chat log, an agent might extract "User stated preference for vegan options" or "Deployment attempt failed due to API key expiration." This extraction process, often powered by smaller LLMs or rule-based parsers, represents a significant design effort but ensures high-signal memories are stored. Estimates suggest that intelligent distillation can reduce memory storage requirements by 60-80% while significantly improving retrieval precision.

- How to Store It? The choice of storage representation and backend is critical and depends on the memory type.

  - Text (raw or summarized): Simple, good for short-term conversation history, often stored in an in-memory buffer or a basic key-value store.

  - Embeddings (vector representations): Ideal for episodic and unstructured semantic memories, allowing for semantic similarity search. Vector databases (e.g., Pinecone, Milvus, Qdrant) are the go-to for this.

  - Structured Data (key-value, relational): Best for semantic memory components like user profiles, explicit preferences, or domain facts. Relational databases (e.g., PostgreSQL) or NoSQL document stores (e.g., MongoDB) are suitable.

  - Knowledge Graphs: Excellent for representing complex relationships between entities, facts, and events, particularly useful for sophisticated semantic and procedural memory where inferencing over relationships is required. Graph databases (e.g., Neo4j) excel here.

  Hybrid approaches, combining relational tables with vector indices, are increasingly common for balancing structured query capabilities with semantic search.

- How to Retrieve It? The retrieval strategy must align with the memory type and desired outcome.

  - Semantic Vector Search: Highly effective for episodic and unstructured semantic memories where the query’s meaning, rather than exact keywords, is paramount.

  - Structured Key Lookup: Best for retrieving specific facts from profiles or procedural rules, such as "get user’s preferred language."

  - Hybrid Retrieval: The most common approach for real-world agents, combining embedding similarity with metadata filters. For example, "what did this user say about billing in the last 30 days?" requires both semantic matching (billing) and a date filter (last 30 days). This combined retrieval can boost relevance scores by 10-25% over single-method approaches.

- When (and How) to Forget What You’ve Stored? Memory without forgetting can be as detrimental as no memory at all, leading to bloat, context pollution, and increased retrieval costs.

  - Timestamps and Provenance: Every memory entry should carry a timestamp and information about its source, allowing for temporal filtering and accountability.

  - Explicit Expiration Conditions (TTL): Implement time-to-live (TTL) policies for certain memory types, automatically expiring stale data.

  - Decay Strategies: Weight recent memories higher in retrieval scoring or use algorithms that gradually reduce the "strength" or relevance of older memories over time.

  - Auditing and Pruning: Regularly audit memory stores to identify and remove outdated, duplicate, or low-quality entries. This proactive management is critical for maintaining retrieval quality and controlling storage costs, which can otherwise escalate rapidly in production systems.
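
The forgetting mechanisms above can be sketched in a few lines. This is a minimal illustration under stated assumptions: `MemoryEntry`, `decayed_score`, and `prune` are hypothetical names, and the exponential half-life of 30 days is an arbitrary choice for the example, not a recommended value.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class MemoryEntry:
    text: str
    source: str                      # provenance: where the memory came from
    created_at: datetime             # timestamp used for filtering and decay
    ttl: Optional[timedelta] = None  # None means no explicit expiry

    def is_expired(self, now: datetime) -> bool:
        return self.ttl is not None and now - self.created_at > self.ttl


def decayed_score(relevance: float, entry: MemoryEntry, now: datetime,
                  half_life_days: float = 30.0) -> float:
    """Halve the relevance score for every `half_life_days` of age."""
    age_days = (now - entry.created_at).total_seconds() / 86400
    return relevance * 0.5 ** (age_days / half_life_days)


def prune(entries: list[MemoryEntry], now: datetime) -> list[MemoryEntry]:
    """Drop entries whose TTL has elapsed."""
    return [e for e in entries if not e.is_expired(now)]


now = datetime(2025, 1, 31, tzinfo=timezone.utc)
fresh = MemoryEntry("Prefers vegan options", "chat", now)
month_old = MemoryEntry("Asked about billing", "chat", now - timedelta(days=30))
session_note = MemoryEntry("Currently on step 2", "tool", now - timedelta(days=2),
                           ttl=timedelta(days=1))

kept = prune([fresh, month_old, session_note], now)
print([e.text for e in kept])
print(decayed_score(1.0, fresh, now), decayed_score(1.0, month_old, now))
```

TTL removes entries outright, while decay only lowers their ranking, so the two are complementary: short-lived session notes can expire, and durable facts can fade gradually unless they are reinforced.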

These decisions, made thoughtfully at the architectural level, lay the groundwork for a scalable, efficient, and intelligent memory system.
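
To make the hybrid retrieval decision concrete, the sketch below answers a query like "what did this user say about billing in the last 30 days?" by applying metadata filters first and ranking the survivors by cosine similarity. The two-dimensional vectors are hand-made stand-ins for real embeddings (first axis "billing", second axis "shipping"), and `Record` and `hybrid_search` are hypothetical names; a real system would delegate this to a vector database with metadata filtering.

```python
import math
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Record:
    user_id: str
    text: str
    created_at: datetime
    embedding: list[float]  # toy 2-d vector: [billing-ness, shipping-ness]


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def hybrid_search(records: list[Record], query_embedding: list[float],
                  user_id: str, since: datetime, top_k: int = 3) -> list[Record]:
    # Step 1: metadata filter (user and date window).
    candidates = [r for r in records
                  if r.user_id == user_id and r.created_at >= since]
    # Step 2: semantic ranking of whatever survived the filter.
    ranked = sorted(candidates,
                    key=lambda r: cosine(r.embedding, query_embedding),
                    reverse=True)
    return ranked[:top_k]


now = datetime(2025, 6, 30, tzinfo=timezone.utc)
records = [
    Record("u1", "Asked why the invoice was charged twice", now - timedelta(days=5), [0.9, 0.1]),
    Record("u1", "Asked about a delivery delay", now - timedelta(days=3), [0.1, 0.9]),
    Record("u1", "Disputed a billing charge", now - timedelta(days=90), [1.0, 0.0]),
    Record("u2", "Billing question from another user", now - timedelta(days=1), [1.0, 0.0]),
]

results = hybrid_search(records, query_embedding=[1.0, 0.0], user_id="u1",
                        since=now - timedelta(days=30), top_k=1)
print([r.text for r in results])
```

Note that the 90-day-old record and the other user's record are the closest semantic matches, yet neither is returned; similarity alone would have surfaced the wrong memories.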

Optimizing the Context Window: Managing a Scarce Resource

Even with a robust external memory layer, the LLM’s context window remains a finite and precious resource. Indiscriminately stuffing it with retrieved memories, regardless of relevance, can degrade reasoning performance rather than enhance it. Experience shows that managing this scarcity requires deliberate engineering to avoid common failure modes:

- Context Poisoning: Occurs when incorrect, stale, or misleading information enters the context window. Because agents build upon prior context across reasoning steps, these errors can compound silently, leading to incorrect actions or responses that are difficult to debug.

- Context Distraction: Happens when the model is overwhelmed with too much information, causing it to default to repeating historical behavior or getting lost in irrelevant details rather than focusing on the current problem. This can manifest as increased latency or a decrease in the quality of the agent’s output.

Managing this scarcity means actively deciding not just what to retrieve, but also what to exclude, compress, and prioritize. Key principles include:

- Summarization and Compression: Before injecting older conversation history or lengthy episodic memories, summarize them to their essential points. Techniques like LLM-based summarization or keyword extraction can reduce context length by 70-90% without losing critical information.

- Dynamic Prioritization: Implement mechanisms to prioritize memories based on recency, relevance score, or explicit user intent. For example, a memory from the current session about a user’s stated goal should take precedence over a less relevant memory from a month ago.

- Active Filtering and Pruning: Employ intelligent filters to remove redundant or low-signal memories before they reach the context window. This can involve heuristic rules, semantic similarity thresholds, or even a smaller "gatekeeper" LLM to decide what passes through.
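
One way to combine prioritization and filtering is a packing step that runs just before context construction. The sketch below is an assumption-laden illustration: `Candidate` and `pack_context` are hypothetical names, the whitespace token count is a crude stand-in for the model's tokenizer, and the 0.3 relevance threshold is arbitrary.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    text: str
    relevance: float                  # e.g. a similarity score in [0, 1]
    from_current_session: bool = False


def rough_token_count(text: str) -> int:
    """Crude whitespace estimate; a real system would use the model's tokenizer."""
    return len(text.split())


def pack_context(candidates: list[Candidate], budget_tokens: int,
                 min_relevance: float = 0.3) -> list[str]:
    seen: set[str] = set()
    chosen: list[str] = []
    used = 0
    # Current-session memories first, then descending relevance.
    ordered = sorted(candidates,
                     key=lambda c: (c.from_current_session, c.relevance),
                     reverse=True)
    for c in ordered:
        key = c.text.strip().lower()
        if c.relevance < min_relevance or key in seen:
            continue  # filter low-signal and duplicate memories
        cost = rough_token_count(c.text)
        if used + cost > budget_tokens:
            continue  # does not fit; a shorter memory later still might
        seen.add(key)
        chosen.append(c.text)
        used += cost
    return chosen


candidates = [
    Candidate("User prefers concise answers", 0.9),
    Candidate("user prefers concise answers", 0.8),
    Candidate("User once asked about office hours", 0.1),
    Candidate("Last month the user compared three hosting plans in detail", 0.5),
    Candidate("User wants to migrate the database today", 0.6, from_current_session=True),
]

packed = pack_context(candidates, budget_tokens=12)
print(packed)
```

With a 12-token budget, the current-session goal is admitted first, the duplicate and the low-relevance memory are filtered out, and the long month-old memory is excluded because it no longer fits.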

The research behind projects like MemGPT and its productization in platforms like Letta offers a compelling mental model: treat the context window as high-speed RAM and external memory stores as slower, larger disk storage. The agent is then given explicit mechanisms to "page" information in and out on demand, transforming memory management from a static pipeline decision into a dynamic, agent-controlled operation. This dynamic approach can lead to a 15-20% improvement in reasoning quality for long-running tasks, as the agent is not burdened with irrelevant data.
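
The RAM-and-disk analogy can be illustrated with a toy model. This sketch is not the MemGPT or Letta API; `PagedMemory`, `write`, and `page_in` are hypothetical names, and the least-recently-used eviction rule is an assumption chosen for simplicity.

```python
from collections import OrderedDict


class PagedMemory:
    """Toy 'RAM vs disk' model: a small in-context set backed by a larger archive."""

    def __init__(self, context_slots: int) -> None:
        self.context_slots = context_slots
        self.in_context = OrderedDict()  # "RAM": what the model currently sees
        self.archive = {}                # "disk": everything paged out

    def write(self, key: str, value: str) -> None:
        self.in_context[key] = value
        self.in_context.move_to_end(key)  # mark as most recently used
        self._evict_if_needed()

    def page_in(self, key: str) -> str:
        """Agent-invoked: bring an archived item back into the context."""
        if key in self.in_context:
            self.in_context.move_to_end(key)
            return self.in_context[key]
        value = self.archive.pop(key)  # raises KeyError for unknown keys
        self.write(key, value)
        return value

    def _evict_if_needed(self) -> None:
        # Page the least recently used items out to the archive.
        while len(self.in_context) > self.context_slots:
            old_key, old_value = self.in_context.popitem(last=False)
            self.archive[old_key] = old_value


mem = PagedMemory(context_slots=2)
mem.write("goal", "Migrate the database")
mem.write("step1", "Backed up tables")
mem.write("step2", "Created new schema")   # evicts "goal" to the archive

recalled = mem.page_in("goal")             # pages "goal" back in, evicts "step1"
print(list(mem.in_context), list(mem.archive))
```

The key design point is that `page_in` is something the agent calls when it decides it needs an item, rather than a fixed pipeline stage that runs on every turn.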

Intelligent Retrieval: Integrating Memory into the Agent Loop

Simply retrieving memories automatically before every agent turn is often suboptimal and resource-intensive. A more effective pattern is to empower the agent with retrieval as a tool – an explicit function it can invoke when it recognizes a need for past context. This mirrors human cognitive processes: we don’t replay every memory before every action, but we know when to pause and recall specific information.

Agent-controlled retrieval results in more targeted queries and ensures that memory lookup occurs at the most opportune moment within the reasoning chain. In popular ReAct-style frameworks (Thought → Action → Observation), memory lookup naturally fits as one of the available tools. After observing a retrieval result, the agent can then evaluate its relevance and integrate it into its subsequent thoughts and actions. This form of online filtering significantly improves the quality and efficiency of the agent’s output. Data from early adopters of this approach suggests a reduction in unnecessary retrieval calls by up to 30%, leading to cost savings and faster inference times.
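
A stripped-down version of this loop is sketched below. The LLM is replaced by a scripted `stub_policy` so the example runs on its own; that stub, the `recall_memory` tool, and the sample memories are all hypothetical, and a real agent would have the model choose the action and a real memory search behind the tool.

```python
from typing import Callable

# Sample long-term memories; keyword lookup stands in for a real memory search.
MEMORIES = {
    "deployment": "Last Tuesday's deployment failed: expired API key.",
    "language": "User prefers replies in English.",
}


def recall_memory(query: str) -> str:
    """Tool the agent may invoke when it decides it needs past context."""
    hits = [text for topic, text in MEMORIES.items() if topic in query.lower()]
    return " ".join(hits) or "No relevant memory found."


TOOLS: dict[str, Callable[[str], str]] = {"recall_memory": recall_memory}


def stub_policy(task: str, observations: list[str]) -> tuple[str, str]:
    """Stand-in for the LLM: returns the next (action, argument) pair."""
    if "again" in task.lower() and not observations:
        return "recall_memory", task  # the task hints at history, so look it up
    context = observations[-1] if observations else "no memory needed"
    return "finish", f"Answer based on: {context}"


def run_agent(task: str, max_steps: int = 3) -> tuple[str, int]:
    observations: list[str] = []
    tool_calls = 0
    for _ in range(max_steps):
        action, argument = stub_policy(task, observations)  # Thought -> Action
        if action == "finish":
            return argument, tool_calls
        observations.append(TOOLS[action](argument))        # Observation
        tool_calls += 1
    return "Stopped: step limit reached.", tool_calls


print(run_agent("Why did my deployment fail again?"))
print(run_agent("What is 2 + 2?"))
```

Only the first task triggers a memory lookup; the second finishes with zero tool calls, which is the saving that on-demand retrieval is meant to deliver.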

For multi-agent systems, shared memory introduces additional layers of complexity, particularly regarding data consistency and integrity. Agents might inadvertently read stale data written by a peer or overwrite each other’s episodic records. To mitigate this, shared memory systems must be designed with explicit ownership and versioning mechanisms:

- Clear Ownership: Define which agent (or component) is responsible for writing specific types of memories.

- Versioning and Immutability: Implement versioning for memory entries or ensure that once written, memories are immutable, with updates creating new versions. This prevents race conditions and allows for historical tracking.

- Concurrency Controls: Use locks or atomic operations when multiple agents might attempt to write to or modify the same memory segment simultaneously.
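
The three safeguards can be combined in one small store, sketched here under simplifying assumptions: it is in-process only, a single lock guards all keys, and `SharedMemory`, `Version`, and the `expected_version` check are hypothetical names for an optimistic-concurrency scheme. A distributed deployment would rely on the equivalent features of its database instead.

```python
import threading
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Version:
    """An immutable memory version; updates append new versions."""
    number: int
    value: str
    written_by: str


class SharedMemory:
    def __init__(self, owners: dict[str, str]) -> None:
        self._owners = owners                       # key -> agent allowed to write
        self._history: dict[str, list[Version]] = {}
        self._lock = threading.Lock()

    def write(self, agent: str, key: str, value: str, expected_version: int) -> bool:
        """Append a version if `agent` owns `key` and has seen the latest version."""
        with self._lock:
            if self._owners.get(key) != agent:
                raise PermissionError(f"{agent} does not own {key!r}")
            versions = self._history.setdefault(key, [])
            if len(versions) != expected_version:
                return False  # stale write: caller should re-read and retry
            versions.append(Version(len(versions) + 1, value, agent))
            return True

    def read(self, key: str) -> Optional[Version]:
        with self._lock:
            versions = self._history.get(key, [])
            return versions[-1] if versions else None


store = SharedMemory(owners={"plan": "planner"})
first = store.write("planner", "plan", "draft plan", expected_version=0)
stale = store.write("planner", "plan", "blind overwrite", expected_version=0)
second = store.write("planner", "plan", "revised plan", expected_version=1)
print(first, stale, second, store.read("plan"))
```

The stale write is rejected rather than silently applied, and earlier versions remain in the history, so a reader can always tell which agent wrote what and in which order.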

These are critical design considerations that must be addressed upfront to avoid complex debugging scenarios in production. A practical starting point for many teams involves beginning with a simple conversation buffer and a basic vector store for episodic memories. More complex elements, such as explicit reasoning scratchpads for working memory in multi-step planning or graph-based long-term memory for intricate relationship inferencing, should only be introduced when existing solutions demonstrably become a bottleneck for performance or retrieval quality. Premature complexity in memory architecture is a common pitfall that can significantly slow down development cycles.

Continuous Improvement: Evaluating and Refining Agent Memory Systems

Evaluating the effectiveness of an agent’s memory layer is uniquely challenging because failures are often subtle and invisible. An agent might produce a plausible-sounding answer that is, in fact, grounded in a stale memory, an irrelevant retrieved chunk, or a critical piece of missing episodic context. Without deliberate evaluation, these systemic failures can remain hidden until a user flags a significant error.

To address this, teams must define memory-specific metrics beyond general task completion rates:

- Retrieval Recall: The percentage of truly relevant memories that were successfully retrieved.

- Retrieval Precision: The percentage of retrieved memories that were actually relevant to the query.

- Memory Freshness: How current the retrieved information is, especially for dynamic facts or user preferences.

- Context Utilization: The proportion of the injected context (including memories) that the LLM actually used in its reasoning, indicating whether retrieved information was truly valuable or merely noise.

- Cost of Retrieval: The computational and latency overhead associated with memory lookups and context construction.
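
The first two metrics reduce to set arithmetic once each evaluation query has a labeled set of relevant memory IDs. The helper below is a minimal sketch; the function name and the sample IDs are hypothetical.

```python
def retrieval_precision_recall(retrieved: list[str],
                               relevant: set[str]) -> tuple[float, float]:
    """Return (precision, recall) for one query.

    precision = relevant retrieved / all retrieved
    recall    = relevant retrieved / all relevant
    """
    retrieved_set = set(retrieved)
    hits = len(retrieved_set & relevant)
    precision = hits / len(retrieved_set) if retrieved_set else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


# Four memories were retrieved; three were actually relevant to the query.
precision, recall = retrieval_precision_recall(
    retrieved=["m1", "m2", "m7", "m9"],
    relevant={"m1", "m2", "m3"},
)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Here two of four retrieved memories are relevant and two of three relevant memories were found, so the two numbers diverge; tracking both exposes whether the retriever is too noisy or too narrow.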

Benchmarking efforts, such as AWS’s work with AgentCore Memory evaluated against datasets like LongMemEval and LoCoMo, specifically measure memory retention across multi-session conversations. This level of rigor should be the standard for production agent systems.

Furthermore, building retrieval unit tests is essential. Before end-to-end evaluation, create a curated suite of queries paired with the specific memories they should retrieve. This isolates problems within the memory layer from issues in the LLM’s reasoning. When agent behavior degrades in production, these tests quickly pinpoint whether the root cause is faulty retrieval, improper context injection, or the model’s reasoning over what was provided.
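
Such a suite can be very small. In the sketch below, `retrieve` is a toy word-overlap retriever standing in for the real memory layer, and `RETRIEVAL_CASES` and `run_retrieval_suite` are hypothetical names; in practice the same cases would be run against the production retriever in a test framework.

```python
MEMORY_STORE = {
    "m1": "User prefers vegan options",
    "m2": "Deployment attempt failed due to API key expiration",
    "m3": "User works in financial services",
}


def retrieve(query: str, top_k: int = 2) -> list[str]:
    """Toy retriever under test: ranks memories by shared lowercase words."""
    query_words = set(query.lower().split())
    scored = [
        (len(query_words & set(text.lower().split())), memory_id)
        for memory_id, text in MEMORY_STORE.items()
    ]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))
    return [memory_id for _, memory_id in ranked[:top_k]]


# Curated cases: each query is paired with the memory IDs it must surface.
RETRIEVAL_CASES = [
    ("why did the deployment fail", {"m2"}),
    ("does the user want vegan food", {"m1"}),
]


def run_retrieval_suite() -> list[str]:
    """Return a description of every case whose expected memories are missing."""
    failures = []
    for query, expected_ids in RETRIEVAL_CASES:
        missing = expected_ids - set(retrieve(query))
        if missing:
            failures.append(f"{query!r}: missing {sorted(missing)}")
    return failures


failures = run_retrieval_suite()
print("all retrieval cases passed" if not failures else failures)
```

Because no model is involved, a failure here points at the memory layer itself, which is the isolation the suite exists to provide.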

Monitoring memory growth is also crucial. Production memory systems continuously accumulate data. As stores grow, retrieval quality can degrade due to increased noise in candidate sets. Teams must monitor retrieval latency, index size, and result diversity over time. Periodic memory audits, identifying and pruning outdated, duplicate, or low-quality entries, are vital for maintaining performance and cost efficiency.

Finally, leveraging production corrections as training signals offers an invaluable feedback loop. When users correct an agent, that correction is a powerful label: either the agent retrieved the wrong memory, lacked a relevant memory, or possessed the right memory but failed to utilize it effectively. Systematically feeding these user corrections back into the retrieval quality improvement process is one of the most impactful strategies for continuous refinement.
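
The three failure labels described above can be assigned mechanically when each correction is logged alongside what was retrieved. This is a sketch under the assumption that a reviewer or heuristic can identify the memory that should have been used; `Correction`, `label_correction`, and the label strings are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class Correction:
    query: str
    retrieved_ids: list[str]           # what the memory layer returned
    correct_memory_id: Optional[str]   # memory that should have been used, if any


def label_correction(c: Correction, stored_ids: set[str]) -> str:
    if c.correct_memory_id is None or c.correct_memory_id not in stored_ids:
        return "missing_memory"    # nothing relevant was ever stored
    if c.correct_memory_id not in c.retrieved_ids:
        return "wrong_retrieval"   # stored, but retrieval did not surface it
    return "unused_memory"         # surfaced, but the model did not act on it


stored_ids = {"m1", "m2"}
corrections = [
    Correction("what size should I order?", ["m2"], "m1"),
    Correction("any dietary needs?", ["m1"], None),
    Correction("did the deployment succeed?", ["m2"], "m2"),
]

labels = Counter(label_correction(c, stored_ids) for c in corrections)
print(labels)
```

Aggregating the labels over time shows where to invest: a rise in `missing_memory` points at the write path, `wrong_retrieval` at indexing or ranking, and `unused_memory` at context construction or prompting.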

The tooling ecosystem for AI agent memory has matured significantly, with purpose-built frameworks, vector databases, and hybrid retrieval pipelines simplifying implementation. Frameworks such as LangChain, LlamaIndex, and MemGPT, along with data stores such as Redis, provide robust starting points, offering pre-built components for memory management that can save significant development time and effort.

The Future of Agentic AI: Built on Intelligent Memory

Memory in agentic systems is not a one-time setup; it is an ongoing, dynamic process of design, implementation, and continuous refinement. While the underlying tooling has seen substantial advancements, making robust memory more accessible today than ever before, the fundamental decisions remain paramount: what information to store, what to strategically ignore, how to retrieve it efficiently, and how to utilize it without overwhelming the LLM’s context.

Good memory design fundamentally hinges on intentionality – being deliberate about what gets written to memory, what gets removed, and how that memory is accessed and integrated into the agent’s reasoning loop. Agents that master memory management exhibit superior performance over time, demonstrating enhanced reliability, deeper personalization, and greater overall effectiveness. These are the systems poised to unlock the full potential of agentic AI, transforming how we interact with intelligent applications and enabling them to truly learn and evolve.