In 2026, machine learning has transcended its traditional role as a prediction-focused analytical tool, evolving into deeply integrated, action-oriented systems that are fundamentally reshaping real-world workflows across industries. This paradigm shift marks a critical juncture in the maturation of artificial intelligence, moving beyond mere data analysis to active participation in operational processes, often without direct human intervention. The transition, which has accelerated significantly over the past two years, underscores a strategic pivot within the technology sector towards outcome-driven AI deployments that deliver tangible business value and operational efficiencies.

The Evolution of Machine Learning: A Decade of Transformation

For years leading up to 2023, the majority of machine learning systems operated discreetly in the background, serving primarily as sophisticated assistants. These models would ingest data, generate predictions, and present insights, typically via dashboards, leaving the crucial decision-making and subsequent actions to human operators. This "human-in-the-loop" model, while effective for certain applications, presented a bottleneck in the pursuit of true automation and efficiency. The boundary between analysis and action, once distinct, has progressively blurred, culminating in the current landscape where machine learning systems are increasingly designed to act autonomously.

The period between 2023 and 2024 was characterized by a fervent "capability race." The industry’s focus was largely on pushing the boundaries of what AI could achieve: developing larger, more complex models, achieving impressive benchmarks, and showcasing groundbreaking demos. Companies rushed to integrate AI features into their products, often to demonstrate technological prowess rather than to solve specific operational challenges. However, this initial wave of enthusiasm was met with a dose of reality. Many of these early implementations struggled in production environments. They proved to be prohibitively expensive to maintain, challenging to integrate seamlessly, and frequently disconnected from the intricate realities of existing business workflows. This led to a critical re-evaluation of AI strategy across enterprises.

By 2025, the industry began to undergo a profound shift. The focus moved decisively from merely generating impressive outputs to achieving measurable outcomes. Machine learning systems are now expected to complete entire tasks, not just provide assistance. For instance, a customer support model in 2026 doesn’t merely suggest a reply; it resolves the entire ticket, from understanding the query to executing the necessary steps. Similarly, an intelligent data pipeline no longer just flags anomalies; it autonomously triggers corrective actions, such as rerouting traffic or initiating maintenance protocols. This subtle yet significant difference in expectation is reshaping how every aspect of machine learning systems is conceived, built, and deployed.

This operational maturation is starkly reflected in the financial commitments pouring into the sector. Global AI spending is projected to reach a staggering $2.02 trillion by the end of 2026, according to Plunkett Research. Concurrently, the broader machine learning market is anticipated to expand to $1.88 trillion by 2035, as reported by Itransition. These figures are not indicative of speculative investment but rather reflect substantial capital flowing into systems that are already deeply embedded within core business operations, driving fundamental transformations. As Dr. Evelyn Reed, a lead analyst at Quantum Insights, recently stated, "The industry has matured beyond mere experimentation. We’re seeing a strategic pivot towards operationalizing AI, where the true value lies not in what a model can predict, but what it does." In 2026, the power of these models is defined not just by their computational prowess, but by the depth of their integration, making machine learning an indispensable component of modern enterprise architecture.

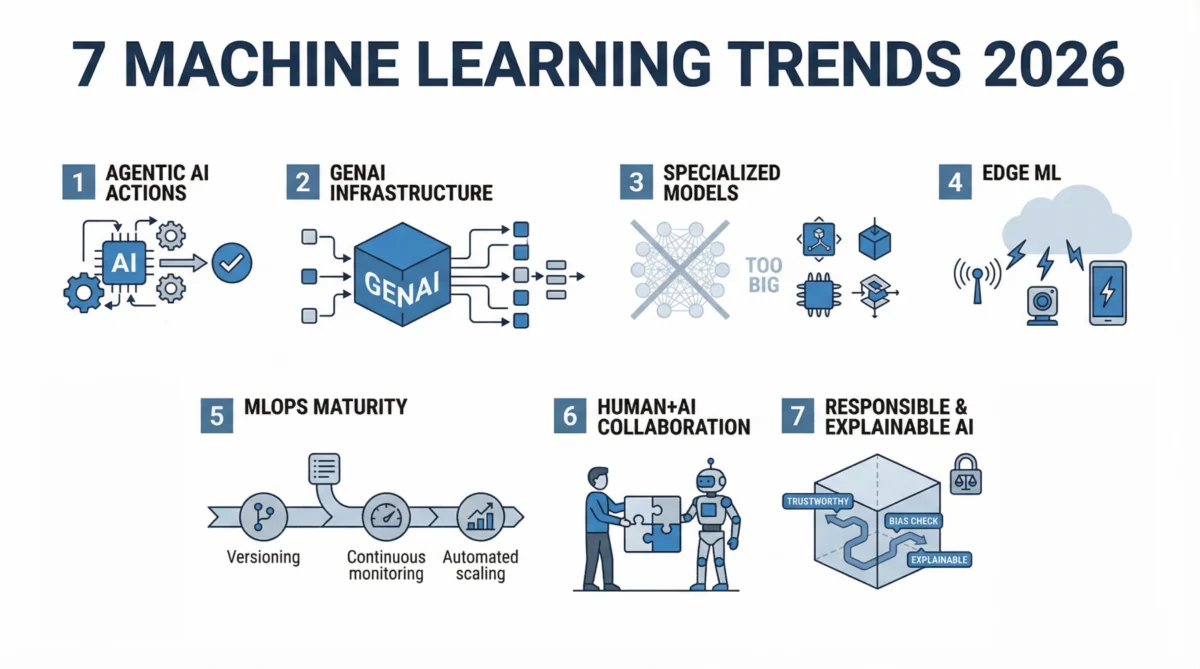

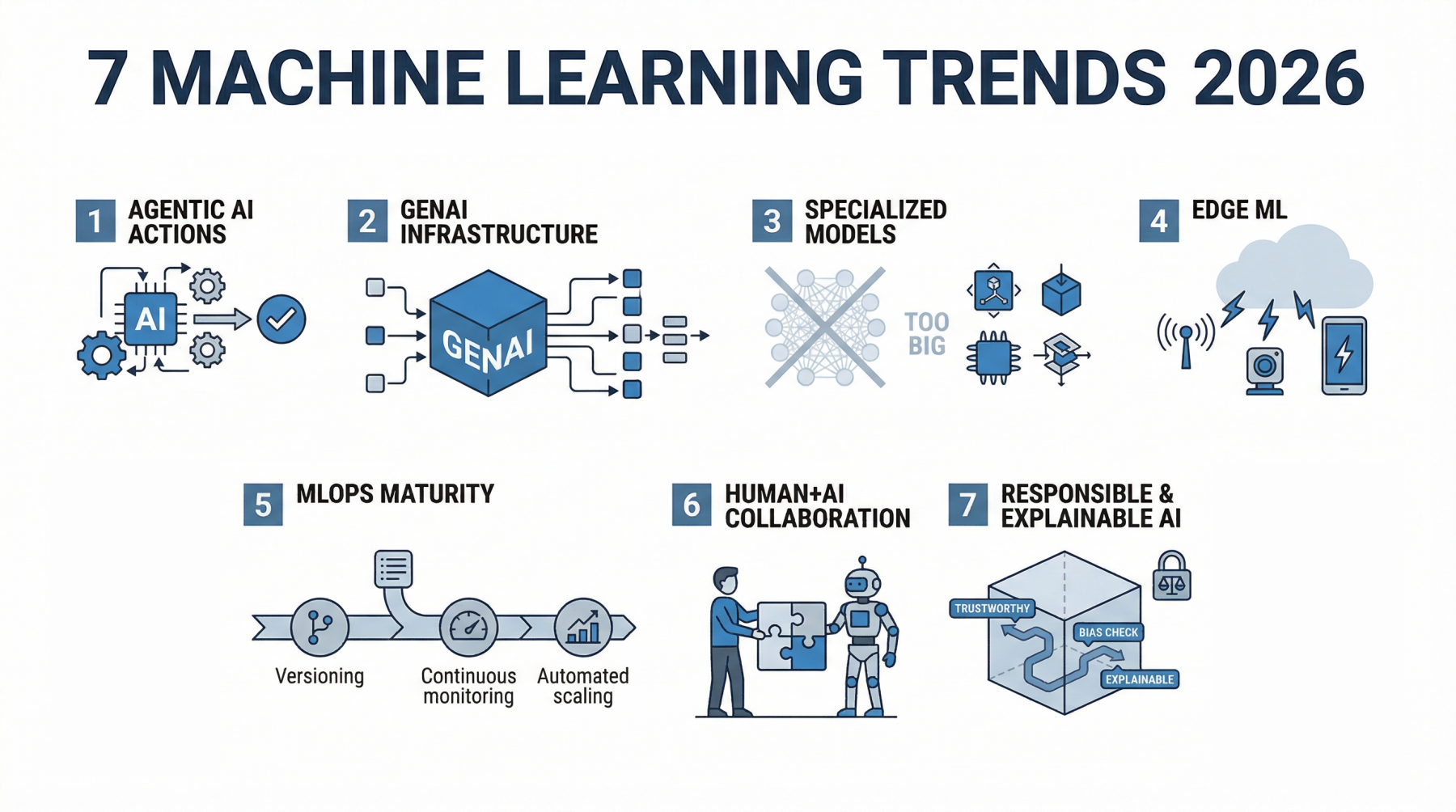

Here are the seven key trends fundamentally shaping how machine learning is being built and utilized in 2026:

1. Agentic AI: From Assistants to Autonomous Decision-Makers

For a considerable period, machine learning systems functioned as passive assistants, providing outputs that still required human or external system intervention for action. This model is rapidly being superseded by Agentic AI, which fundamentally redefines the role of intelligent systems. Agentic AI empowers systems to plan, make decisions, and execute multi-step tasks from initiation to completion, largely autonomously.

The distinction from traditional ML is profound. While a conventional model might predict customer churn or classify support tickets, an agentic system takes this further. It can identify a high-risk customer, determine the optimal retention strategy, craft a personalized communication, and then trigger the outreach, monitoring its effectiveness. The output is no longer a mere prediction; it’s a series of coordinated actions designed to achieve a specific objective. This capability is underpinned by the system’s ability to decompose complex goals into smaller, manageable tasks, execute them sequentially, and dynamically adjust its strategy based on real-time feedback and environmental changes. This multi-step workflow execution, drawing data from various sources and interacting with external APIs, mirrors human problem-solving more closely than previous ML paradigms.
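The decompose, act, observe, and re-plan loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a production agent: the function names (`score_risk`, `choose_strategy`, `run_agent`), the strategy labels, the 60-day inactivity scale, and the `send` callback are all hypothetical stand-ins for a real churn model and outreach API.

```python
from typing import Callable, Optional

def score_risk(days_inactive: int) -> float:
    # Stand-in for a churn model: risk grows with inactivity, capped at 1.0.
    return min(1.0, days_inactive / 60)

def choose_strategy(risk: float, tried: list[str]) -> Optional[str]:
    # Plan step: pick the next untried option for this risk level.
    options = ["discount", "support_call"] if risk >= 0.5 else ["newsletter"]
    remaining = [s for s in options if s not in tried]
    return remaining[0] if remaining else None

def run_agent(days_inactive: int, send: Callable[[str], bool]) -> dict:
    """Score, plan, act, observe feedback, and re-plan until done."""
    risk = score_risk(days_inactive)
    tried: list[str] = []
    while True:
        strategy = choose_strategy(risk, tried)
        if strategy is None:
            # Nothing left to try: hand the case to a person.
            return {"risk": risk, "tried": tried, "outcome": "escalate_to_human"}
        responded = send(strategy)  # act through an external channel
        tried.append(strategy)
        if responded:               # positive feedback ends the loop
            return {"risk": risk, "tried": tried, "outcome": "retained"}
```

The point of the sketch is the shape of the control flow: the output is a sequence of actions with a feedback check after each one, and the loop has an explicit exit to human escalation when the plan runs out.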

The impact of this shift is observable across multiple sectors. In customer support, advanced AI agents are resolving entire inquiries without escalation, significantly reducing resolution times and operational costs. In manufacturing and logistics, they manage inventory, combining demand forecasts with supply chain constraints to optimize stock levels and distribution. Healthcare is leveraging agentic systems to summarize extensive patient records, recommend diagnostic next steps, and streamline administrative tasks, thereby freeing clinicians to focus on direct patient care and critical decision-making.

Market indicators reflect the rapid acceleration of this trend. The AI agents market is projected to reach an impressive $93.2 billion by 2032, according to Softteco. Furthermore, industry reports suggest that up to 40% of enterprise applications may incorporate AI agents by the close of 2026. This level of anticipated adoption signals a foundational change in how software is designed and how business processes are automated. As a CTO from a leading logistics firm noted, "Companies are increasingly seeking solutions that don’t just inform but act. Agentic systems are streamlining complex workflows, freeing up human capital for strategic oversight." This capability for autonomous action is arguably the most significant development in machine learning today, influencing everything from model architecture to infrastructure design and user interface paradigms.

2. Generative AI as Foundational Infrastructure, Not a Feature

The initial excitement surrounding generative AI often positioned it as a novel, headline-grabbing feature—a chatbot here, a content generator there. While impressive, these early applications were frequently isolated, existing at the periphery of core product functionalities. In 2026, this perception has fundamentally changed; generative AI is no longer an add-on but an integral part of the underlying infrastructure powering daily operations.

This integration is evident in its ubiquitous application. In software development, generative AI is now embedded directly into integrated development environments (IDEs), assisting developers in writing code, conducting real-time code reviews, and even refactoring existing codebases, dramatically accelerating development cycles. Within business operations, it has become indispensable for generating comprehensive reports, summarizing lengthy meetings, and extracting critical insights from vast, unstructured datasets, eliminating the need for laborious manual analysis.

The transformation lies not just in enhanced capability but in its strategic placement. Generative models are now deeply woven into core workflows, moving beyond simple content creation to becoming a foundational layer for information processing and task execution. This shift has necessitated a move from experimental deployment to robust production-grade implementation. The last two years saw organizations exploring the potential of generative AI; the current focus is on reliability, cost-effectiveness, and consistency. Models are being rigorously fine-tuned, integrated with traditional machine learning systems, and connected to structured data sources. This hybrid approach leverages generative AI for unstructured tasks like natural language understanding and reasoning, while traditional models handle precise prediction and optimization.
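One way to picture the hybrid approach is a two-stage pipeline: a generative component turns free text into structured fields, and a conventional model scores those fields alongside existing structured data. The sketch below is illustrative only. `keyword_extractor` is a deliberately trivial placeholder for a generative model call, and the feature names, weights, and bias are made-up values, not figures from any real system.

```python
import math
from typing import Callable

def keyword_extractor(text: str) -> dict:
    # Placeholder for a generative model that maps free text to fields.
    lowered = text.lower()
    return {
        "mentions_cancel": int("cancel" in lowered),
        "mentions_refund": int("refund" in lowered),
    }

# Hypothetical weights for a small logistic model (the "traditional" half).
WEIGHTS = {"mentions_cancel": 2.0, "mentions_refund": 1.0, "open_tickets": 0.5}
BIAS = -2.0

def churn_probability(features: dict) -> float:
    # Plain logistic regression over the merged feature set.
    z = BIAS + sum(w * features.get(name, 0) for name, w in WEIGHTS.items())
    return 1 / (1 + math.exp(-z))

def score_ticket(text: str, structured: dict,
                 extract: Callable[[str], dict] = keyword_extractor) -> float:
    # Unstructured text goes through the extractor; structured data passes through.
    features = {**structured, **extract(text)}
    return churn_probability(features)
```

Keeping the extractor behind a plain callable means the generative component can be swapped or versioned without touching the scoring model.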

The tangible benefits are already being reported. Companies are experiencing up to a 30% reduction in workload after integrating generative AI into their operational workflows, according to Forbes. Such substantial improvements are not derived from isolated features but from deep, systemic integration. The industry conversation has evolved from "should we adopt generative AI?" to "where is generative AI still missing, and which parts of our workflow are operating without its benefits?" This signifies its status as a non-negotiable component of modern digital infrastructure.

3. The Ascendancy of Specialized and Efficient Models

For an extended period, the trajectory of machine learning progress was largely defined by scale: bigger models, more parameters, larger datasets, and consequently, superior performance. This philosophy propelled the industry towards the development of massive systems demanding immense computational resources, substantial financial investments, and complex infrastructure. However, 2026 marks a decisive shift. Smaller, more specialized models are gaining significant traction, not for their general impressiveness, but for their superior practicality and efficiency.

These specialized models are meticulously designed for specific tasks, trained on highly focused datasets, and optimized for real-world application rather than abstract benchmark supremacy. Small Language Models (SLMs) exemplify this trend. Rather than attempting to master every conceivable task, SLMs are engineered to perform exceptionally well within narrow domains, such as legal document analysis, specialized customer service dialogues, or internal corporate knowledge retrieval. In these niche applications, a smaller model with deep contextual understanding frequently surpasses the performance of a larger, more generalized counterpart.

The operational advantages of SLMs are compelling. They are significantly cheaper to run, exhibit faster response times, and are simpler to deploy. Their reduced computational footprint allows them to operate on local servers or even directly within edge devices and applications, minimizing reliance on external cloud infrastructure. This not only decreases latency but also provides organizations with greater control over performance, data privacy, and security—a critical concern in an era of increasing data regulation.

Success metrics are also evolving. The question is no longer "how powerful is this model in general?" but "how effectively does it perform in this specific context?" A specialized model that consistently delivers accurate and reliable results for a single, business-critical task is often deemed more valuable than a sprawling general-purpose model that performs acceptably across many tasks but lacks precision where it matters most. This emphasis on efficiency and return on investment (ROI) is paramount. The high costs associated with training and maintaining massive models are coming under increasing scrutiny, and not every use case justifies such an investment. Specialized models offer a better balance between performance and operational cost, particularly when deployed at scale across an enterprise. As Dr. Kenji Tanaka, a research lead at AI Innovations Lab, noted, "The push for specialized models reflects a pragmatic shift. Enterprises are realizing that a highly efficient, purpose-built model can deliver far greater business value than an unwieldy general-purpose behemoth for specific tasks." In 2026, model size is no longer a marker of prestige; practical utility and economic viability are the true indicators of success.

4. Machine Learning Moves to the Edge: IoT and Real-Time Intelligence

For many years, the cloud served as the primary residence for most machine learning systems. Data was collected from various sources, transmitted to centralized servers for processing, and then returned as predictions or insights. While functional, this cloud-centric model presented inherent trade-offs, including noticeable latency, escalating bandwidth costs, and mounting concerns regarding data privacy and sovereignty. In 2026, this architectural paradigm is undergoing a significant transformation, with an increasing number of models being deployed closer to the point of data generation—at the edge.

Edge machine learning involves running models directly on devices or proximate to them. This localized processing capability transforms applications such as security cameras, which can now detect unusual activity in real-time without sending sensitive video feeds to a remote server. Mobile applications can process voice commands or image data instantaneously on the device itself. Industrial machines equipped with edge AI can monitor their own performance and react autonomously to anomalies, eliminating critical delays associated with cloud round trips.

The core advantage of edge machine learning lies in speed and control. Cloud systems offer immense power and scalability, but every request pays for a network round trip. Edge systems, by contrast, remove that round trip: computation occurs locally, so response time is bounded by on-device inference rather than by connectivity. This fast, predictable response is crucial for use cases demanding immediate action, such as autonomous vehicles, critical healthcare monitoring systems, and smart infrastructure management, where even minimal delays can have severe consequences.

Privacy considerations are also a significant driver of this trend. Transmitting vast quantities of raw data, especially sensitive personal or proprietary information, to the cloud raises substantial privacy concerns and compliance challenges under regulations like GDPR and CCPA. Edge machine learning allows the bulk of data processing to happen locally, with only necessary, aggregated insights potentially being shared. This localized processing significantly reduces data exposure and simplifies regulatory compliance.
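The pattern of processing raw readings locally and sharing only aggregates can be sketched as follows. This is a simplified illustration, assuming a single numeric sensor and a basic z-score check; the `EdgeNode` class, its thresholds, and the shape of the summary are hypothetical choices rather than a description of any particular device SDK.

```python
from collections import deque
from dataclasses import dataclass, field
from statistics import mean, pstdev

@dataclass
class EdgeNode:
    z_threshold: float = 3.0   # how many standard deviations counts as unusual
    min_history: int = 5       # readings needed before checks start
    history: deque = field(default_factory=lambda: deque(maxlen=50))
    anomalies: int = 0

    def ingest(self, reading: float) -> bool:
        """Check one reading on the device; return True if it looks anomalous."""
        is_anomaly = False
        if len(self.history) >= self.min_history:
            mu, sigma = mean(self.history), pstdev(self.history)
            is_anomaly = sigma > 0 and abs(reading - mu) / sigma > self.z_threshold
        if is_anomaly:
            self.anomalies += 1            # react locally, keep it out of the baseline
        else:
            self.history.append(reading)
        return is_anomaly

    def summary(self) -> dict:
        """The only payload intended to leave the device: aggregates, no raw readings."""
        avg = round(mean(self.history), 2) if self.history else None
        return {"count": len(self.history), "mean": avg, "anomalies": self.anomalies}
```

The anomaly decision is made without any network call, and `summary()` exposes counts and an average rather than the underlying data stream.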

The proliferation of connected devices further amplifies the necessity of edge computing. The number of IoT devices is projected to reach an astounding 39 billion by 2030, according to Forbes. With such an immense volume of devices generating continuous streams of data, the traditional model of sending everything to the cloud becomes inefficient, impractical, and economically unfeasible. This shift does not signify a complete abandonment of cloud computing but rather a strategic redistribution of computational workloads. While certain complex tasks will continue to necessitate centralized cloud processing, an ever-increasing proportion of critical decisions are being made instantaneously at the edge, revolutionizing real-time intelligence.

5. MLOps and LLMOps Become Mandatory for Production Reliability

The ease of building a machine learning model has never been greater, thanks to the proliferation of open-source tools, pre-trained models, and readily available APIs, often allowing a functional prototype to be established within hours. However, the true challenge and differentiator lie in running these systems reliably and efficiently in production environments. This is where MLOps (Machine Learning Operations) has become indispensable. MLOps encompasses the entire lifecycle post-model development, focusing on versioning, continuous monitoring, robust deployment, scalable infrastructure, and iterative updates.

With the advent of generative AI and agentic systems, the scope of operational excellence has expanded into specialized domains like LLMOps (Large Language Model Operations) and even AgentOps. Each new layer of complexity introduces novel challenges: meticulous prompt management, rigorous response evaluation, seamless tool integration, and orchestration of multi-step execution paths—all requiring careful oversight and automation.
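Prompt management and response evaluation can start very small. The sketch below assumes prompts are plain format strings and that a reply "passes" if it contains an expected phrase, which is a crude stand-in for real evaluation. `PromptRegistry`, `pass_rate`, and the `generate` callable are hypothetical names introduced for illustration.

```python
import hashlib
from typing import Callable

class PromptRegistry:
    """Stores prompt templates under a content-derived version id."""

    def __init__(self) -> None:
        self._versions: dict[str, str] = {}

    def register(self, template: str) -> str:
        # Identical text always maps to the same id, so changes are traceable.
        version = hashlib.sha256(template.encode("utf-8")).hexdigest()[:8]
        self._versions[version] = template
        return version

    def render(self, version: str, **values: str) -> str:
        return self._versions[version].format(**values)

def pass_rate(generate: Callable[[str], str], registry: PromptRegistry,
              version: str, cases: list[tuple[dict, str]]) -> float:
    """Fraction of test cases whose reply contains the expected phrase."""
    passed = 0
    for values, must_contain in cases:
        reply = generate(registry.render(version, **values))
        passed += must_contain.lower() in reply.lower()
    return passed / len(cases)
```

Tying every evaluation score to a prompt version makes it possible to tell whether a regression came from a prompt edit or from the model behind `generate`.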

The transition from isolated experimentation to widespread production deployment has exposed significant gaps in traditional software development practices when applied to ML. A model that performs flawlessly in a controlled testing environment can exhibit unpredictable behavior in real-world conditions due to data drift, evolving user patterns, or subtle environmental shifts. Without robust monitoring and operational frameworks, these issues can escalate rapidly, impacting users and compromising system integrity.
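Data drift can be monitored by comparing the distribution of a feature in production against the distribution seen at training time. One common choice, used here as an example rather than something the trends above prescribe, is the Population Stability Index (PSI). The sketch assumes a single numeric feature and equal-width bins over the training range; the bin count and the epsilon used for empty bins are arbitrary.

```python
import math

def psi(expected: list[float], actual: list[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a reference and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0   # avoid zero width for constant data

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1   # clamp out-of-range values
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A PSI of zero means the binned distributions match. A widely quoted rule of thumb treats values above roughly 0.25 as a significant shift, though the right alert threshold depends on the feature and the cost of a false alarm.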

Enterprises are now treating machine learning systems with the same rigor and criticality applied to core software infrastructure. This entails comprehensive performance tracking over time, sophisticated version control for models and their underlying data, and the establishment of automated CI/CD pipelines that enable seamless updates without disrupting existing services. Crucially, it also involves building in safeguards: extensive logging of outputs, sophisticated anomaly detection mechanisms, and robust fallback systems to mitigate failures.
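The safeguards listed above (logging, output checks, and a fallback path) can be combined in a thin wrapper around the model call. This is a minimal sketch assuming the model returns a single score in a known range; `guarded_predict` and its parameters are hypothetical, and a real system would likely add metrics and alerting on top.

```python
import logging
from typing import Callable

logger = logging.getLogger("model_guard")

def guarded_predict(model: Callable[[dict], float],
                    fallback: Callable[[dict], float],
                    features: dict,
                    lo: float = 0.0, hi: float = 1.0) -> tuple[float, str]:
    """Call the model, validate its output, and fall back on any failure."""
    try:
        score = model(features)
        if not (lo <= score <= hi):   # also rejects NaN, which fails both comparisons
            raise ValueError(f"score {score} outside [{lo}, {hi}]")
        source = "model"
    except Exception as err:
        logger.warning("model failed, using fallback: %s", err)
        score, source = fallback(features), "fallback"
    logger.info("prediction=%s source=%s", score, source)   # audit trail
    return score, source
```

Returning the source alongside the score lets downstream monitoring track how often the fallback fires, which is itself a useful health signal.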

Scaling remains another critical pressure point. A model that functions adequately for a handful of users may buckle under heavy demand, leading to increased latency, spiraling costs, and inconsistent performance. MLOps practices are essential for managing this by optimizing model serving, ensuring efficient resource allocation, and implementing auto-scaling capabilities.
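Auto-scaling decisions often reduce to a proportional rule: scale the replica count by the ratio of observed load to target load, then clamp to configured limits. The sketch below shows that arithmetic, which has the same shape as the formula documented for Kubernetes' Horizontal Pod Autoscaler. The function name, parameter names, and default limits are assumptions for illustration.

```python
import math

def desired_replicas(current: int, observed_load: float, target_load: float,
                     min_replicas: int = 1, max_replicas: int = 20) -> int:
    """Proportional scaling: replicas grow with observed load per replica."""
    if target_load <= 0:
        raise ValueError("target_load must be positive")
    raw = math.ceil(current * observed_load / target_load)
    # Clamp so a traffic spike or an idle period cannot push past the limits.
    return max(min_replicas, min(max_replicas, raw))
```

For example, four replicas each handling 90 requests per second against a target of 60 yields a recommendation of six replicas.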

In 2026, it is unequivocally clear that machine learning is no longer an ancillary project; it is deeply embedded within core business systems. Its failure directly translates to product failure and operational disruption. Consequently, operational maturity in AI deployment has emerged as a significant competitive advantage. Organizations capable of consistently deploying, monitoring, and improving models will iterate faster and build more resilient systems. Conversely, those lacking robust MLOps practices will find themselves perpetually entangled in firefighting, diverting resources from value creation to problem resolution. At this stage, merely knowing how to build a model is insufficient; the true differentiator lies in mastering its reliable and scalable operation.

6. Human-AI Collaboration Becomes the Default

The early discourse surrounding artificial intelligence frequently revolved around themes of human replacement, job displacement, and the automation of entire functions. However, 2026 reveals a more pragmatic and productive reality: the predominant value generated by AI stems from synergistic collaboration, rather than outright substitution. AI is increasingly perceived not merely as a tool, but as a dynamic co-worker, fundamentally reshaping how work is accomplished.

This shift manifests in redefined workflows where individuals interact with systems that can suggest solutions, generate content, review outputs, and refine processes in real time. The human role evolves to setting strategic direction, providing essential context, and making final, nuanced decisions, while the AI manages the computationally intensive or repetitive tasks in between.

Consider its application across various professional domains. In healthcare, AI systems compile and summarize complex patient histories, highlight critical risks, and propose potential next steps, enabling clinicians to dedicate more time to empathetic patient interaction and expert judgment. Marketing teams leverage AI to rapidly generate innovative campaign ideas, conduct A/B testing on countless variations, and analyze performance metrics with unprecedented speed and depth, far surpassing manual capabilities. In engineering, developers collaborate with AI systems that assist in writing, reviewing, and debugging code, maintaining the rapid pace of modern software development.

The profound impact extends beyond mere speed; it’s transforming professional roles. Tasks that once consumed hours are now completed in minutes, reallocating human effort from execution to higher-order functions such as strategy formulation, critical validation, and creative problem-solving. Measurable gains in productivity are now commonplace across industries utilizing AI-assisted workflows, with many organizations reporting significant efficiency improvements as these collaborative systems become ingrained in daily operations. These gains are not achieved by removing humans from the loop but by optimizing their interaction within it.

This paradigm also cultivates a new set of essential skills. The ability to articulate precise questions, effectively guide AI outputs, and critically evaluate generated results becomes as vital as traditional technical expertise. Individuals proficient in collaborating with AI systems are demonstrating superior agility and delivering enhanced outcomes. The notion of humans "competing" with AI is steadily losing relevance. The contemporary competitive advantage lies in mastering how to work alongside AI, discerning where human judgment remains paramount, and leveraging AI’s capabilities for augmented intelligence.

7. Responsible and Explainable AI Takes Center Stage

As machine learning systems become increasingly integral to critical decision-making processes across society, a fundamental question gains paramount importance: can we truly trust what these systems are doing? For a considerable period, many sophisticated models operated as "black boxes," producing accurate results but offering little insight into the underlying reasoning behind their conclusions. While acceptable in low-stakes scenarios, this opacity becomes problematic when these same systems are deployed in high-impact domains such as finance, healthcare, human resources, or law enforcement.

This is where Explainable AI (XAI) emerges as a critical necessity, focusing on enhancing the transparency of model decisions. Rather than merely presenting an output, XAI-enabled systems can delineate which specific inputs most influenced a particular decision and quantify the strength of that influence. This transparency is invaluable for development teams to validate results, identify and rectify errors, and build robust confidence in the system’s behavior. For end-users and stakeholders, it provides crucial clarity and accountability.
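A simple way to see which inputs most influenced a decision is to swap each input, one at a time, for a neutral baseline value and measure how much the output moves. The sketch below does exactly that. It is a basic one-at-a-time attribution that ignores interactions between features (methods such as SHAP address that), and the example model, feature names, and baseline values are hypothetical.

```python
from typing import Callable

def attributions(model: Callable[[dict], float],
                 x: dict, baseline: dict) -> dict:
    """Per-feature effect: output with the real value minus output with the baseline."""
    full = model(x)
    effects = {}
    for name in x:
        perturbed = {**x, name: baseline[name]}   # replace one feature only
        effects[name] = full - model(perturbed)
    # Strongest influence first, regardless of direction.
    return dict(sorted(effects.items(), key=lambda kv: abs(kv[1]), reverse=True))

def credit_model(f: dict) -> float:
    # Hypothetical scoring model used only to exercise the function above.
    return 0.5 * f["income"] - 0.8 * f["debt_ratio"] + 0.1 * f["years"]

applicant = {"income": 0.4, "debt_ratio": 0.9, "years": 2}
typical = {"income": 0.5, "debt_ratio": 0.3, "years": 5}
explanation = attributions(credit_model, applicant, typical)
```

Here `explanation` ranks `debt_ratio` first with a negative effect, which is the kind of statement ("the debt ratio lowered the score the most") that an end-user explanation can be built from.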

Concurrently, the regulatory landscape is rapidly evolving to address the pervasive adoption of AI. Governments and regulatory bodies worldwide are introducing comprehensive frameworks that mandate greater accountability from companies regarding the development and deployment of their AI systems. These regulations encompass stringent requirements for data collection practices, model training methodologies, and the fairness and transparency of decision-making processes. Compliance is no longer a peripheral legal consideration; it is becoming an intrinsic feature of AI product design and development.

Bias and fairness are receiving intensified scrutiny. Machine learning models inherently learn from the data they are trained on, and if that data reflects existing societal biases, the model can inadvertently amplify them. Practically, this can lead to inequitable outcomes in critical areas like loan approvals, hiring decisions, or risk assessments. Addressing this complex issue demands more than purely technical fixes; it necessitates meticulous data selection, continuous monitoring for algorithmic bias, and clear lines of accountability for the system’s outcomes.
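Continuous monitoring for bias can begin with a basic outcome comparison across groups. The sketch below computes approval rates per group and the ratio of the lowest rate to the highest, often called the disparate impact ratio. It assumes decisions are logged as (group, approved) pairs; the function names are illustrative, and this single metric is one of several fairness measures rather than a complete audit.

```python
from collections import defaultdict

def approval_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of positive outcomes for each group."""
    totals: dict[str, int] = defaultdict(int)
    approved: dict[str, int] = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}

def disparate_impact(rates: dict[str, float]) -> float:
    """Lowest group rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0
```

A commonly cited rule of thumb, the "four-fifths" guideline, treats ratios below 0.8 as worth investigating. Whether a gap is actually unjustified still requires the human review and accountability described above.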

Companies are now taking these ethical considerations with utmost seriousness, driven not solely by regulatory pressures but also by escalating user expectations. Individuals increasingly demand to understand how decisions that directly affect their lives are made. If an AI system denies a credit application or flags a security risk, a clear, understandable explanation is expected and, in many jurisdictions, legally required.

This growing emphasis on responsible AI is universally evident across both industry best practices and policy initiatives. Ethical considerations are no longer relegated to side discussions or post-hoc audits; they are becoming foundational principles integrated into the very design and development lifecycle of AI systems from their inception. The rationale is straightforward: without trust, widespread adoption will inevitably falter. Regardless of a system’s power or accuracy, if people are hesitant to rely on it, its potential remains untapped. In 2026, building accurate models is only part of the equation; cultivating systems that engender understanding and trust is equally, if not more, critical.

Wrapping Up

In 2026, machine learning has solidified its position not as a collection of experimental tools or isolated features, but as an indispensable operational backbone. It has seamlessly integrated into the fabric of daily workflows, silently powering critical decisions, automating complex tasks, and engaging in sophisticated collaboration with human counterparts. The industry’s emphasis has decisively shifted from merely building larger or flashier models to architecting systems that demonstrate true autonomy, integrate fluidly with existing processes, and deliver measurable, real-world impact.

The trends explored—the rise of agentic AI, the infrastructural embedding of generative AI, the strategic preference for specialized models, the expansion of machine learning to the edge, the mandatory adoption of MLOps for operational excellence, the ubiquitous nature of human-AI collaboration, and the imperative of responsible and explainable AI—are not disparate developments. Rather, they are interconnected forces that collectively define a new standard for machine learning: intelligent systems that function reliably, ethically, and meaningfully at the very core of modern business and daily life.

Machine learning in 2026 is less about conceptualizing smarter models and profoundly more about constructing intelligent systems that actively and effectively perform the work, driving tangible progress and shaping the future of automation.