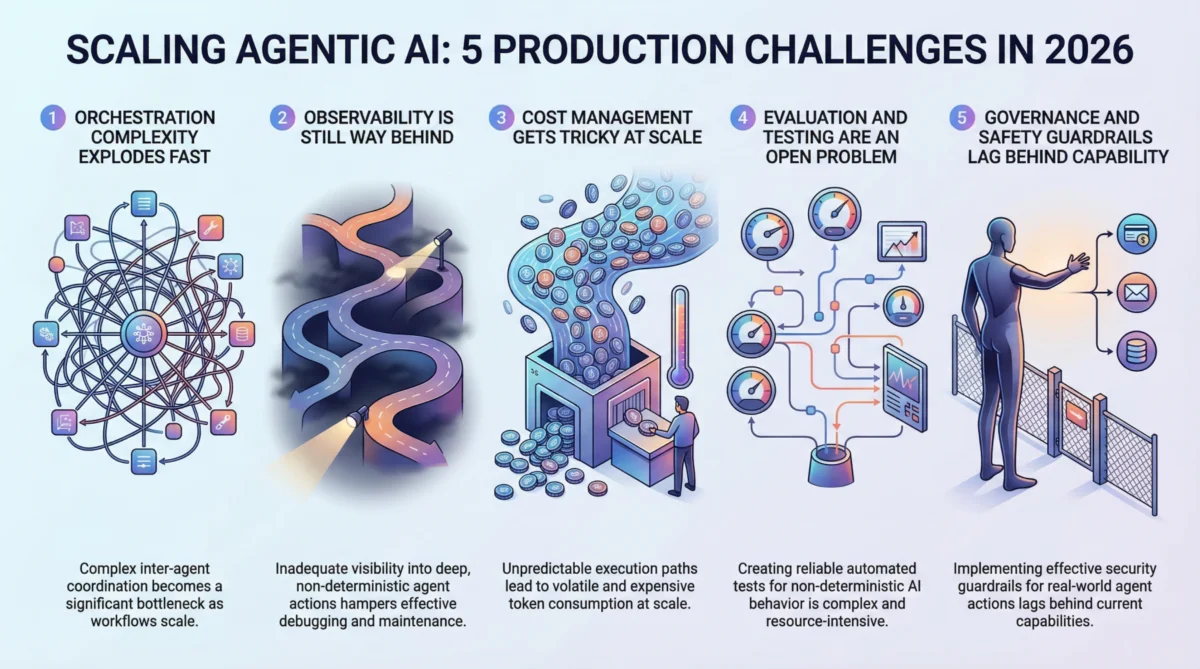

In 2026, agentic AI is moving rapidly from experimental prototypes to mission-critical production systems. Early demonstrations and proof-of-concept models have shown remarkable capabilities, but operationalizing these autonomous, decision-making systems at scale is testing the limits of current engineering, infrastructure, and governance frameworks. This article examines five major hurdles that teams face as they try to deploy agentic AI systems effectively and reliably in real-world environments.

The Ascent of Agentic AI: A New Frontier in Automation

The past few years have witnessed an unprecedented acceleration in AI development, with large language models (LLMs) forming the bedrock for a new generation of intelligent agents. Unlike traditional machine learning models that primarily perform pattern recognition or prediction based on fixed inputs, agentic AI systems are designed to perceive their environment, reason about goals, plan sequences of actions, execute those actions (often through external tools or APIs), and learn from feedback. This paradigm shift enables applications far beyond simple chatbots or data analysis, extending into complex workflows such as automated customer service, dynamic content generation, autonomous research, and sophisticated process automation.
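The perceive, plan, act, and learn-from-feedback cycle described above can be sketched as a simple loop. This is a minimal illustration under stated assumptions: `stub_policy` is a hypothetical stand-in for an LLM call, the two tools are toy functions, and "learning" here only means that each observation is fed back to the policy on the next step, not that any model weights change.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# A tool takes a string argument and returns an observation string.
Tool = Callable[[str], str]


@dataclass
class Step:
    tool: str
    arg: str
    observation: str


@dataclass
class AgentRun:
    goal: str
    steps: list[Step] = field(default_factory=list)


def run_agent(goal: str, policy, tools: dict[str, Tool], max_steps: int = 5) -> AgentRun:
    """Plan -> act -> observe loop with a hard cap on the number of steps."""
    run = AgentRun(goal)
    for _ in range(max_steps):
        # Plan: the policy sees the goal plus all prior steps and picks the next action (None = stop).
        action: Optional[tuple] = policy(goal, run.steps)
        if action is None:
            break
        tool_name, arg = action
        # Act: call the chosen tool. Perceive: store its output so the policy sees it next iteration.
        observation = tools[tool_name](arg)
        run.steps.append(Step(tool_name, arg, observation))
    return run


# Hypothetical stand-in for an LLM-backed policy: search once, summarize once, then stop.
def stub_policy(goal: str, steps: list) -> Optional[tuple]:
    if not steps:
        return ("search", goal)
    if len(steps) == 1:
        return ("summarize", steps[-1].observation)
    return None


# Toy tools, for illustration only.
tools = {
    "search": lambda query: f"results for {query}",
    "summarize": lambda text: text.upper(),
}

run = run_agent("agent costs", stub_policy, tools)
print([s.tool for s in run.steps])  # ['search', 'summarize']
print(run.steps[-1].observation)    # RESULTS FOR AGENT COSTS
```

The `max_steps` cap matters even in a toy: without it, a policy that never returns `None` would loop forever.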

The allure of agentic AI is clear: enhanced efficiency, reduced human intervention, and the potential to solve problems previously considered intractable for machines. Investment in agentic AI startups and research initiatives has surged, with market analysts projecting significant growth in sectors adopting these technologies, from finance and healthcare to manufacturing and logistics. However, the rapid pace of innovation has outstripped the maturity of the operational frameworks needed to manage these systems in production. The leap from a "magical demo" to a "reliable, scalable enterprise solution" is proving to be far more complex than anticipated, revealing deep-seated technical and organizational gaps that demand urgent attention.

1. The Labyrinth of Orchestration Complexity

One of the most immediate and profound challenges in scaling agentic AI is the steep growth in orchestration complexity. A single agent performing a narrow task is relatively straightforward to define and monitor. Real-world applications, however, often require multi-agent architectures in which agents delegate sub-tasks, coordinate actions, dynamically select tools, and handle failures across intricate, interconnected workflows.

"The moment you move beyond a single-turn interaction, the complexity explodes," states Dr. Anya Sharma, a lead AI architect at a major tech firm. "Traditional workflow engines, designed for deterministic processes, simply buckle under the dynamic, non-linear nature of agentic decision-making. We’re seeing race conditions, deadlocks, and cascading failures that are incredibly difficult to diagnose and even harder to prevent." Teams are finding that the overhead of coordinating agents, rather than the speed of individual model calls, becomes the primary bottleneck. Many respond by building bespoke orchestration layers, which then become critical points of failure and maintenance burdens in their own right. The problem worsens under load: patterns that work flawlessly at low request volumes break down at thousands or millions of interactions, underscoring the need for distributed-systems designs tailored to autonomous agents.
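One common way to keep a single misbehaving sub-agent from triggering a cascading failure is to wrap every delegated call in a timeout, a bounded retry budget, and a concurrency cap, and to turn failures into explicit results instead of unhandled exceptions. The following is a minimal asyncio sketch of that idea, assuming hypothetical agents (`researcher`, `flaky_writer`, `slow_checker`) and arbitrary timeout and retry values. It does not address every failure mode mentioned above; deadlocks between agents that wait on each other, for example, need their own design.

```python
import asyncio


async def call_with_retry(agent, task: str, timeout_s: float, retries: int) -> str:
    """Run one sub-agent with a timeout and a bounded number of retries."""
    last_error = None
    for attempt in range(retries + 1):
        try:
            return await asyncio.wait_for(agent(task), timeout=timeout_s)
        except Exception as exc:  # timeouts and agent errors are both treated as retryable
            last_error = exc
            if attempt < retries:
                await asyncio.sleep(0.01 * 2 ** attempt)  # exponential backoff between attempts
    # Surface the failure as an explicit result so sibling sub-tasks keep running.
    return f"FAILED({task}): {type(last_error).__name__}"


async def supervise(subtasks: dict, agents: dict, max_concurrency: int = 2,
                    timeout_s: float = 0.5, retries: int = 1) -> dict:
    """Fan sub-tasks out to named agents, capping how many run at once."""
    sem = asyncio.Semaphore(max_concurrency)

    async def run_one(name: str):
        async with sem:
            result = await call_with_retry(agents[name], subtasks[name], timeout_s, retries)
            return name, result

    return dict(await asyncio.gather(*(run_one(name) for name in subtasks)))


# Hypothetical sub-agents: one healthy, one that always errors, one that hangs.
async def researcher(task: str) -> str:
    await asyncio.sleep(0.01)
    return f"notes on {task}"


async def flaky_writer(task: str) -> str:
    raise RuntimeError("tool unavailable")


async def slow_checker(task: str) -> str:
    await asyncio.sleep(5)  # always exceeds the timeout used below
    return "never returned"


agents = {"research": researcher, "write": flaky_writer, "check": slow_checker}
subtasks = {"research": "pricing", "write": "draft", "check": "draft"}
results = asyncio.run(supervise(subtasks, agents, timeout_s=0.1))
print(results)
```

The healthy sub-task still returns its notes even though its two siblings fail, which is the point of isolating failures at the call boundary.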

2. The Observability Blind Spot in Autonomous Systems

Effective debugging and maintenance of any software system hinge on robust observability. For agentic AI, this requirement is amplified, yet the existing tooling lags significantly. Traditional machine learning monitoring focuses on metrics like latency, throughput, and model accuracy. While still relevant, these metrics offer only a superficial view of an agentic system’s internal workings.

When an agent executes a multi-step process, potentially involving dozens of decisions and tool calls, understanding why a particular outcome occurred—or failed to occur—becomes paramount. Why did the agent choose Tool A over Tool B? What was the rationale behind a specific retry loop? Why did the final output deviate from expectations, even if intermediate steps appeared correct? The inherent non-determinism of agentic behavior means that the same input can lead to vastly different execution paths, rendering simple log analysis insufficient. As Dr. Ben Carter, a researcher specializing in AI diagnostics, notes, "You can’t just snapshot a failure and reliably replay it; the context, the environment, and the model’s internal state all contribute to an unpredictable journey. We need deep, granular tracing infrastructure that can reconstruct the agent’s entire ‘thought process’ and action sequence, something far beyond what current LLM-specific tools offer." Without this visibility, teams are often left "flying blind," struggling to identify root causes of failures, ensure ethical behavior, or optimize performance, leading to prolonged debugging cycles and increased operational risks.
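The kind of tracing described above can be approximated by recording every decision and tool call as a nested span that carries its own attributes, including the stated rationale. The sketch below is a hand-rolled illustration, not any particular product's API; the attribute names (`chosen_tool`, `rationale`, `retry`) are hypothetical, and a production system would more likely build on an established tracing standard such as OpenTelemetry.

```python
import time
import uuid
from contextlib import contextmanager
from dataclasses import dataclass
from typing import Optional


@dataclass
class Span:
    span_id: str
    parent_id: Optional[str]
    name: str
    attributes: dict
    start: float
    end: Optional[float] = None


class Tracer:
    """Records nested spans so one agent run can be reconstructed step by step."""

    def __init__(self) -> None:
        self.trace_id = uuid.uuid4().hex  # shared by every span in this run
        self.spans = []                   # all Span objects, in start order
        self._stack = []                  # ids of currently open spans

    @contextmanager
    def span(self, name: str, **attributes):
        parent = self._stack[-1] if self._stack else None
        s = Span(uuid.uuid4().hex[:8], parent, name, dict(attributes), time.monotonic())
        self.spans.append(s)
        self._stack.append(s.span_id)
        try:
            yield s
        finally:
            # Close the span even if the step raised, so failures stay visible in the trace.
            s.end = time.monotonic()
            self._stack.pop()

    def tree(self, parent_id: Optional[str] = None, depth: int = 0) -> list:
        """Render recorded spans as an indented tree, in the order they started."""
        lines = []
        for s in self.spans:
            if s.parent_id == parent_id:
                lines.append("  " * depth + f"{s.name} {s.attributes}")
                lines.extend(self.tree(s.span_id, depth + 1))
        return lines


tracer = Tracer()
with tracer.span("agent_run", goal="refund request"):
    with tracer.span("decide", chosen_tool="lookup_order", rationale="order id present"):
        pass  # in a real agent, the model call that picks the tool would run here
    with tracer.span("tool_call", tool="lookup_order", retry=0):
        pass  # and the tool invocation would run here
print("\n".join(tracer.tree()))
```

Because each span records its parent, the indented tree shows which decision led to which tool call, something a flat log of model outputs cannot show on its own.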

3. Navigating the Financial Fog of AI Operations at Scale

The economic implications of scaling agentic AI systems are proving to be a rude awakening for many organizations. Each action an agent takes frequently translates into one or more LLM calls, which incur token-based costs. When agents engage in complex reasoning, extensive tool use, or multiple retries, these costs can accumulate at an astonishing rate, transforming a seemingly inexpensive prototype into a budget-devouring production system. A workflow costing a few cents per execution looks negligible until it processes millions of requests a day, at which point monthly spend can become prohibitive.
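The arithmetic behind that escalation is simple multiplication, which is exactly why it is easy to underestimate. The sketch below uses purely hypothetical figures (2,000 input and 500 output tokens per call, six calls per run, and prices of $2.50 and $10.00 per million input and output tokens); these are illustrative assumptions, not any provider's actual rates.

```python
def run_cost_usd(input_tokens: int, output_tokens: int, calls_per_run: int,
                 usd_per_m_input: float, usd_per_m_output: float) -> float:
    """Cost of one workflow execution, with prices quoted per million tokens."""
    per_call = (input_tokens * usd_per_m_input + output_tokens * usd_per_m_output) / 1_000_000
    return per_call * calls_per_run


def monthly_cost_usd(cost_per_run: float, runs_per_day: int, days: int = 30) -> float:
    """Scale a per-run cost to a month of traffic."""
    return cost_per_run * runs_per_day * days


# Hypothetical figures, not real provider pricing.
per_run = run_cost_usd(input_tokens=2_000, output_tokens=500, calls_per_run=6,
                       usd_per_m_input=2.50, usd_per_m_output=10.00)
print(f"${per_run:.2f} per run")                                  # $0.06 per run
print(f"${monthly_cost_usd(per_run, 1_000_000):,.0f} per month")  # $1,800,000 per month
```

With these assumed numbers, six cents per run becomes roughly $1.8 million over 30 days at one million runs a day, and every extra retry or tool call multiplies through the same formula.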

"Cost predictability is a major headache," laments Sarah Chen, CFO of a tech scale-up. "Unlike traditional APIs with fairly stable pricing models, agentic systems have highly variable execution paths. An unexpected edge case can trigger a cascade of actions and retries, costing orders of magnitude more than the average path. This makes accurate forecasting and budget allocation incredibly challenging." Teams are adopting various strategies to mitigate this, including routing simpler tasks to smaller, more cost-effective models, aggressive caching of intermediate results, and implementing "kill switches" to terminate runaway agent loops. However, these measures force a trade-off between cost efficiency and output quality that requires continuous optimization and experimentation. The lack of transparent, real-time cost attribution across complex multi-agent interactions further complicates financial oversight.
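Two of the mitigations mentioned above, routing simpler tasks to a cheaper model and a kill switch for runaway loops, can be combined in a few lines. This is a minimal sketch under explicit assumptions: the model tiers and flat per-call prices in `MODELS` are hypothetical, and the keyword-and-length heuristic in `route` is a placeholder for whatever classifier a real router would use.

```python
class BudgetExceeded(Exception):
    """Raised by the kill switch when a run would exceed its spend or step cap."""


class RunBudget:
    def __init__(self, max_usd: float, max_steps: int) -> None:
        self.max_usd, self.max_steps = max_usd, max_steps
        self.spent_usd, self.steps = 0.0, 0

    def charge(self, usd: float) -> None:
        # Check before spending, so the call that would break the cap never happens.
        if self.spent_usd + usd > self.max_usd or self.steps + 1 > self.max_steps:
            raise BudgetExceeded(f"stopped after {self.steps} steps, ${self.spent_usd:.3f} spent")
        self.spent_usd += usd
        self.steps += 1


# Hypothetical model tiers and flat per-call prices, for illustration only.
MODELS = {"small": 0.002, "large": 0.02}


def route(task: str) -> str:
    """Placeholder router: short prompts without 'analyze' go to the cheaper tier."""
    return "small" if len(task) < 200 and "analyze" not in task.lower() else "large"


def run_with_guard(tasks: list, budget: RunBudget) -> list:
    used = []
    for task in tasks:
        model = route(task)
        budget.charge(MODELS[model])  # raises BudgetExceeded instead of looping forever
        used.append(model)            # a real system would call the chosen model here
    return used


print(run_with_guard(["classify ticket", "Analyze churn drivers"], RunBudget(0.10, 10)))
# ['small', 'large']

budget = RunBudget(max_usd=0.05, max_steps=20)
try:
    run_with_guard(["retry tool call"] * 50, budget)  # simulated runaway retry loop
except BudgetExceeded as exc:
    print(exc)  # stopped after 20 steps, $0.040 spent
```

Where to set `max_usd` and `max_steps` is the cost-versus-quality trade-off in miniature: caps that are too tight will also cut off legitimate long runs.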

4. The Uncharted Waters of Evaluation and Testing

The cornerstone of reliable software deployment is robust testing. However, for agentic AI, traditional evaluation methodologies are proving inadequate. The non-deterministic nature of these systems, coupled with their ability to take diverse paths to achieve a goal, breaks the assumptions of fixed input-output mappings common in conventional software and even traditional machine learning. How does one comprehensively test a system that might never behave the same way twice?

The industry is grappling with this fundamental question. Approaches being explored include "LLM-as-a-judge" pipelines, where a separate AI model evaluates the performance and quality of the agent’s output against defined criteria. Scenario-based testing, which focuses on validating behavioral properties rather than exact outputs, is also gaining traction. Furthermore, some leading organizations are investing heavily in sophisticated simulation environments to stress-test agents against thousands of synthetic, diverse scenarios before deployment. Yet, as Dr. Evelyn Reed, an AI ethics researcher, points out, "None of these methods are fully mature, and there’s no industry consensus on what constitutes ‘good enough’ evaluation for complex agentic workflows. The tooling is fragmented, benchmarks are inconsistent, and many teams still rely heavily on slow, unscalable human review processes, introducing significant bottlenecks and potential for oversight." This lack of standardized, scalable evaluation frameworks represents a critical impediment to building trust and ensuring the consistent performance of agentic AI in production.
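Scenario-based testing of behavioral properties can be illustrated by sampling many runs of a non-deterministic agent and checking invariants on each trajectory instead of comparing outputs to a fixed expected answer. In the sketch below, `stub_agent` is a hypothetical stand-in that randomly picks one of several valid tool paths for a refund request, and the three properties (a cap on tool calls, no refund before an order lookup, a non-empty answer) are illustrative assumptions, not an established benchmark.

```python
import random


# Hypothetical non-deterministic agent stub: different draws yield different tool paths.
def stub_agent(request: str, rng: random.Random) -> dict:
    path = rng.choice([
        ["lookup_order", "issue_refund"],
        ["lookup_order", "check_policy", "issue_refund"],
        ["search_kb", "lookup_order", "check_policy", "issue_refund"],
    ])
    return {"tool_calls": path, "answer": f"Refund issued for: {request}"}


# Behavioral properties every acceptable trajectory must satisfy, whatever path it takes.
def check_properties(result: dict, max_calls: int = 5) -> list:
    calls = result["tool_calls"]
    violations = []
    if len(calls) > max_calls:
        violations.append("too many tool calls")
    if "issue_refund" in calls and "lookup_order" not in calls[:calls.index("issue_refund")]:
        violations.append("refund issued before order lookup")
    if not result["answer"].strip():
        violations.append("empty answer")
    return violations


def run_scenarios(n: int = 200, seed: int = 0) -> dict:
    """Sample n runs and count how often each property is violated."""
    rng = random.Random(seed)  # seeded so the test suite itself is reproducible
    failures = {}
    for _ in range(n):
        for violation in check_properties(stub_agent("order 1234, damaged item", rng)):
            failures[violation] = failures.get(violation, 0) + 1
    return failures


print(run_scenarios())  # {}
```

The same checks accept all three tool paths while still rejecting a trajectory that issues a refund first, which is the distinction between testing properties and testing exact outputs.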

5. Crafting Robust Governance and Safety Frameworks

Perhaps the most critical challenge, with the broadest societal implications, is the lagging development of governance and safety guardrails for agentic AI. These systems are not merely processing data; they are designed to take autonomous actions in the real world—sending emails, modifying databases, executing financial transactions, and interacting with external services. The inherent autonomy introduces significant safety, ethical, and legal risks.

The dilemma lies in implementing guardrails robust enough to prevent harmful, unintended, or unethical actions without overly restricting the agent’s utility. Striking this balance is difficult, and early deployments have often found it only through trial and error. Mechanisms such as granular permission systems, mandatory human approval workflows for high-impact actions, and strict scope limitations are being developed. However, these often introduce friction, potentially undermining the very efficiency and autonomy that make agentic AI appealing.
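The guardrail mechanisms listed above, an allow-list that limits scope plus mandatory approval for high-impact actions, can be sketched as a gate that every action passes through before it executes. In this minimal sketch the `approver` callback is a hypothetical stand-in for a human review step (in practice this would usually be an asynchronous review queue rather than a synchronous function), and all action names are illustrative. The gate also records each decision in an audit log.

```python
from dataclasses import dataclass
from enum import Enum


class Impact(Enum):
    LOW = 1
    HIGH = 2


@dataclass(frozen=True)
class Action:
    name: str
    target: str
    impact: Impact


class PermissionDenied(Exception):
    pass


class Guardrail:
    """Gate every agent action through a scope check and, if high-impact, an approval step."""

    def __init__(self, allowed: set, approver) -> None:
        self.allowed = allowed    # scope limitation: explicit allow-list of action names
        self.approver = approver  # hypothetical stand-in for a human review step
        self.audit_log = []       # (action, target, decision) tuples for later review

    def execute(self, action: Action, handler) -> str:
        if action.name not in self.allowed:
            self.audit_log.append((action.name, action.target, "denied: out of scope"))
            raise PermissionDenied(action.name)
        if action.impact is Impact.HIGH and not self.approver(action):
            self.audit_log.append((action.name, action.target, "denied: not approved"))
            raise PermissionDenied(action.name)
        self.audit_log.append((action.name, action.target, "executed"))
        return handler(action)


def perform(action: Action) -> str:
    # Placeholder for the real side effect (sending an email, issuing a refund, and so on).
    return f"{action.name} -> {action.target}"


# Hypothetical reviewer policy: approve any action whose target is not acct-999.
guard = Guardrail(allowed={"send_email", "refund"}, approver=lambda a: a.target != "acct-999")
print(guard.execute(Action("send_email", "alice@example.com", Impact.LOW), perform))
print(guard.execute(Action("refund", "acct-123", Impact.HIGH), perform))
for blocked in (Action("refund", "acct-999", Impact.HIGH), Action("drop_table", "users", Impact.HIGH)):
    try:
        guard.execute(blocked, perform)
    except PermissionDenied as exc:
        print("blocked:", exc)
```

Low-impact actions pass straight through, so the approval friction falls only on the actions flagged as high-impact; how that flag is assigned is itself a policy decision.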

Regulatory bodies globally are beginning to take notice. As agentic systems increasingly make decisions that directly affect individuals, businesses, and critical infrastructure, urgent questions about accountability, auditability, transparency, and compliance are emerging. "The pace of technological deployment is far outstripping the development of coherent regulatory frameworks," states a representative from a leading policy think tank. "Organizations deploying agentic AI without a proactive, robust governance strategy risk hitting significant legal and ethical barriers as regulations inevitably catch up, potentially leading to substantial fines, reputational damage, and erosion of public trust." This area demands concerted effort from technologists, policymakers, ethicists, and legal experts to ensure responsible innovation.

Industry Responses and the Path Forward

Despite these formidable challenges, the AI ecosystem is demonstrating resilience and innovation. Collaborative efforts are underway to develop standardized protocols for agent communication and orchestration. New observability tools are emerging, focusing on tracing agent execution paths and decision rationales. Research into more efficient LLM inference, model distillation, and adaptive routing is helping to address cost concerns. Furthermore, a growing emphasis on AI safety research and the development of ethical AI guidelines is laying the groundwork for more robust governance frameworks.

Leading organizations are recognizing that successful deployment of agentic AI requires a multidisciplinary approach, blending expertise from machine learning engineering, DevOps, distributed systems, ethics, and legal compliance. The pain points currently experienced by early adopters are generating invaluable lessons that are rapidly shaping best practices and informing the next generation of AI infrastructure.

Broader Implications for Innovation and Society

The successful navigation of these scaling challenges is not merely a technical endeavor; it holds profound implications for the future of innovation, economic productivity, and societal trust in AI. Overcoming these hurdles will unlock the full potential of agentic AI to transform industries, drive scientific discovery, and solve complex global problems. Conversely, failure to address them adequately could lead to widespread system failures, economic losses, erosion of public confidence, and potentially harmful societal impacts, stifling the very innovation the technology promises.

Conclusion

The journey of agentic AI from prototype to production at scale in 2026 is undoubtedly fraught with complexities. Orchestration nightmares, observability blind spots, unpredictable costs, inadequate testing, and lagging governance frameworks represent significant barriers. However, these challenges are also catalysts for innovation, driving the development of new tools, methodologies, and collaborative frameworks within the AI community. The organizations that proactively invest in understanding and solving these foundational problems will be best positioned to harness the transformative power of agentic AI, building systems that are not only intelligent and autonomous but also reliable, safe, and truly scalable in the real world.