Tag: inference

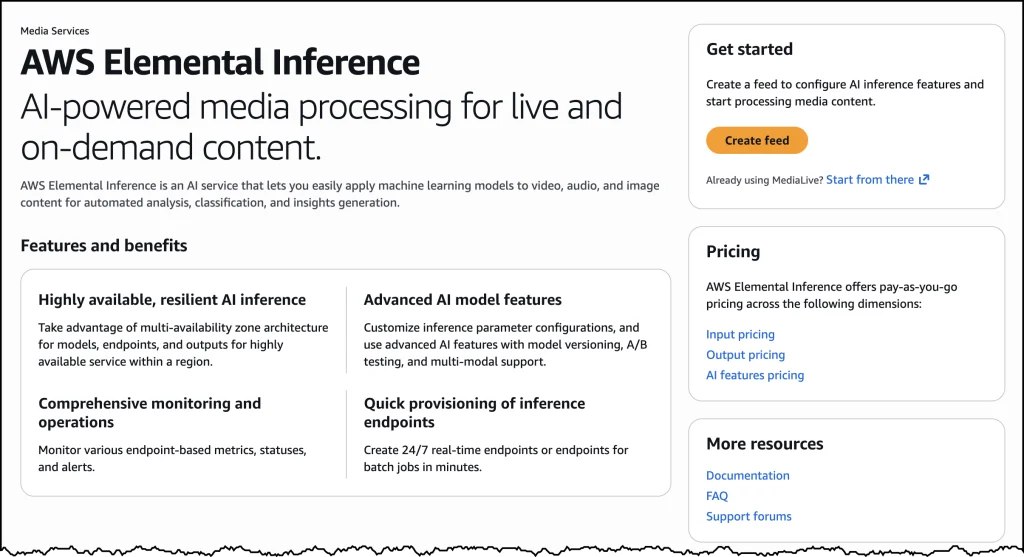

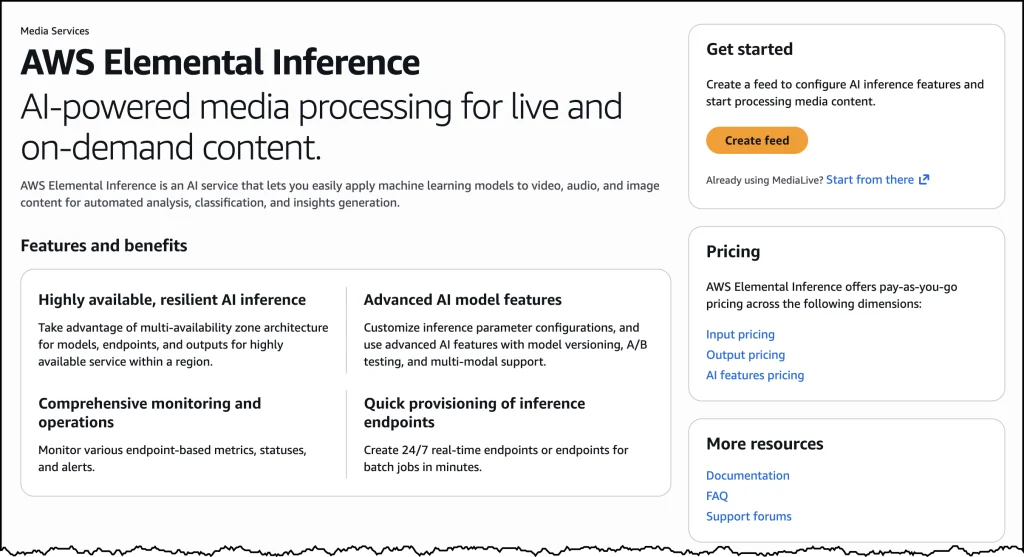

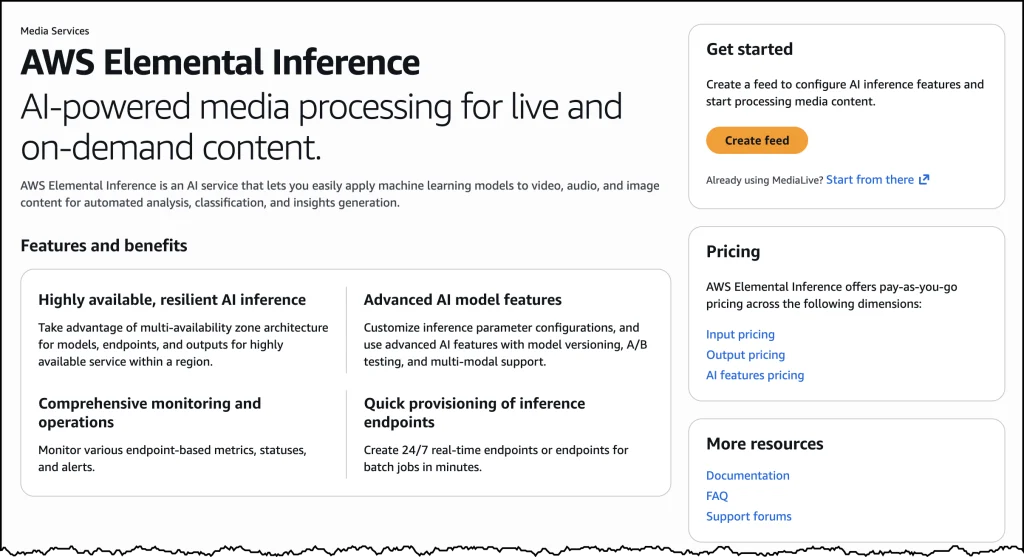

AWS Elemental Inference Revolutionizes Video Broadcasting with AI-Powered Real-Time Vertical Content Adaptation

AWS has officially unveiled AWS Elemental Inference, a groundbreaking fully managed artificial intelligence service designed…

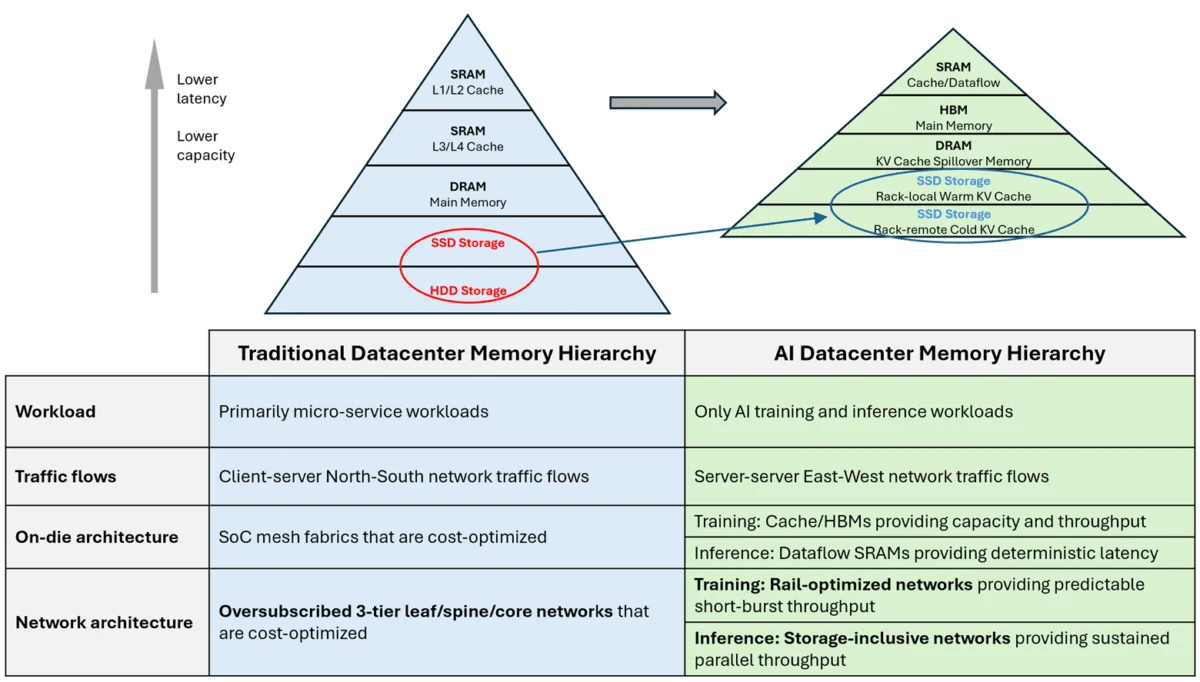

Deconstructing Large Language Model Inference: The Essential Roles of Prefill, Decode, and KV Caching for Scalable Text Generation

The intricate process by which large language models (LLMs) generate coherent and contextually relevant text,…

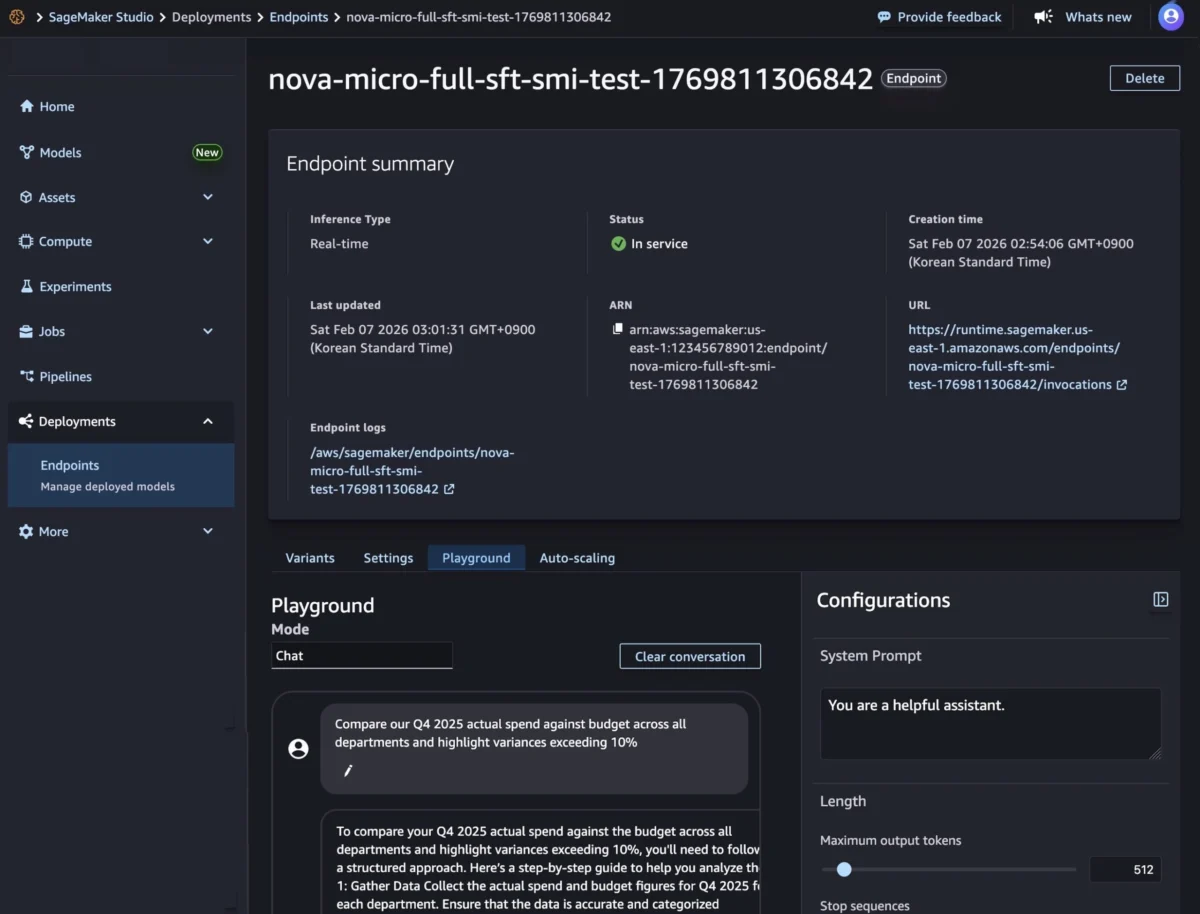

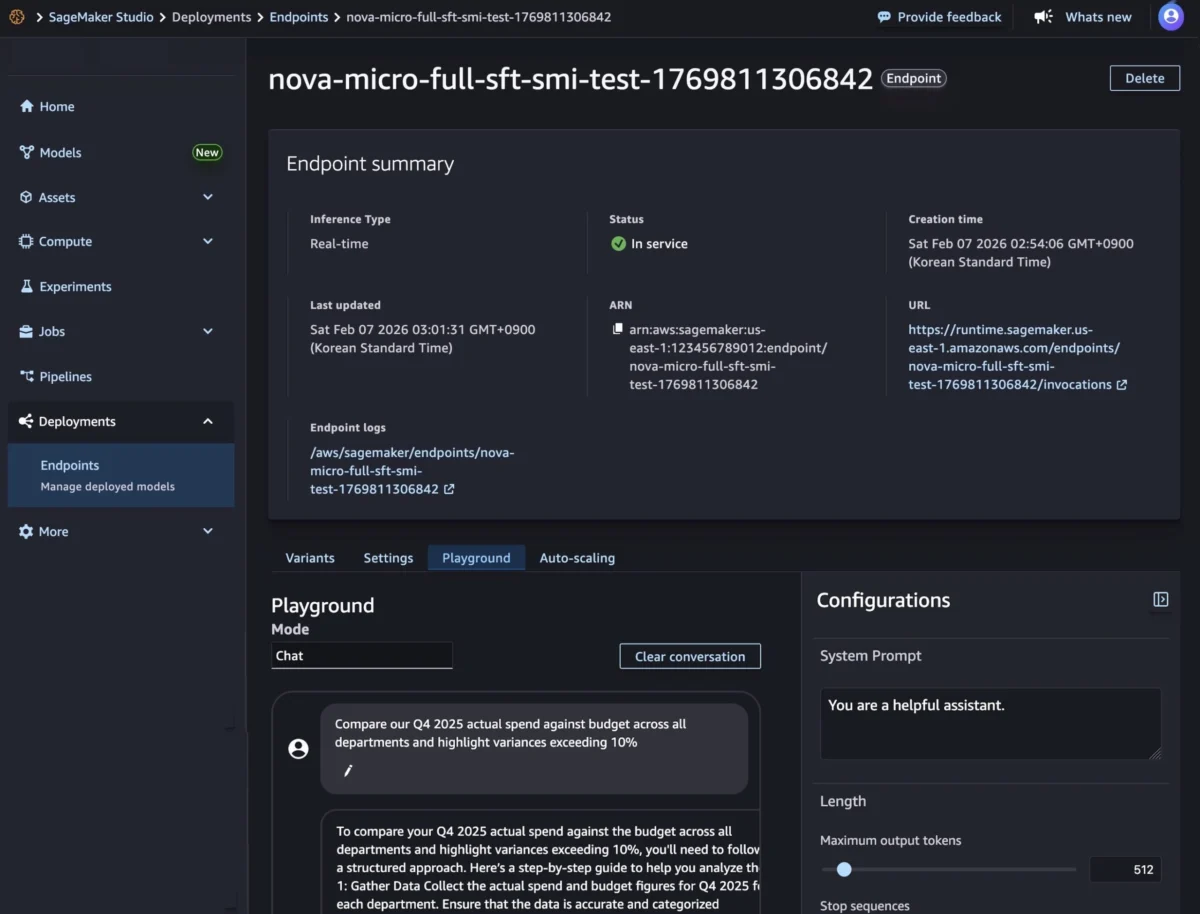

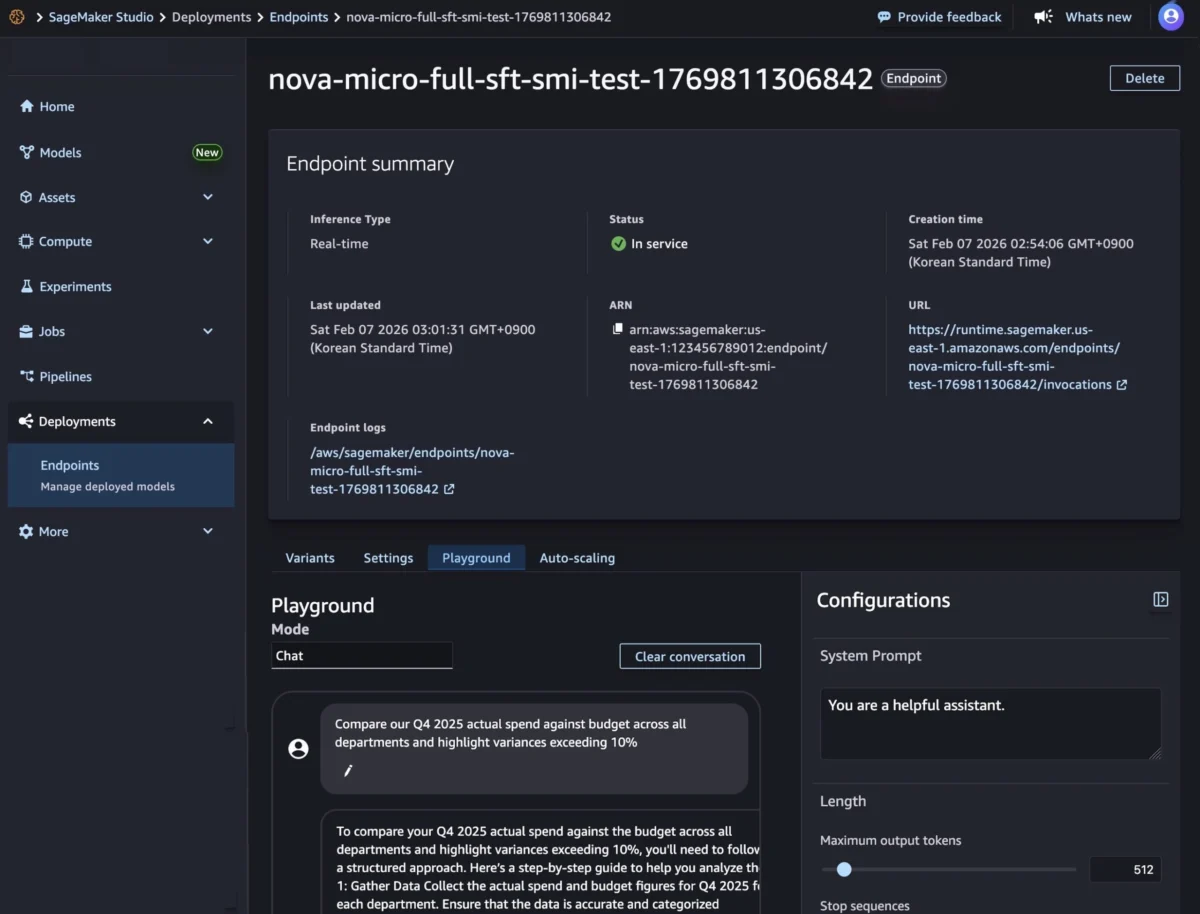

Amazon SageMaker Inference Now Generally Available for Custom Nova Models, Enhancing Enterprise AI Deployment and Efficiency

Amazon Web Services (AWS) today announced the general availability of custom Nova model support in…

Characterizing CPU-Induced Slowdowns in Multi-GPU LLM Inference

The rapid evolution of generative artificial intelligence has centered almost exclusively on the raw computational…

AWS Elemental Inference Launches, Empowering Broadcasters with AI-Powered Real-Time Video Transformation for Mobile and Social Platforms

Amazon Web Services (AWS) has announced the immediate availability of AWS Elemental Inference, a fully…

The Evolution of AI Data Centers: How Inference-Driven Memory Fabrics Are Redefining Modern Networking Architecture

The rapid acceleration of artificial intelligence deployment is precipitating a fundamental transformation in data center…

Amazon SageMaker Inference Now Generally Available for Custom Nova Models, Offering Enhanced Control and Cost Efficiency

Since the highly anticipated launch of Amazon Nova customization in Amazon SageMaker AI at the…

AWS Elemental Inference Unveiled: Revolutionizing Live and On-Demand Video for the Mobile-First Era

Amazon Web Services (AWS) today announced the launch of AWS Elemental Inference, a fully managed…