The Imperative for Persistent AI Memory

The journey of AI agents from stateless tools to capable assistants hinges on memory. Without the ability to retain information from previous interactions, an agent is effectively reset with each new query: it cannot learn user preferences, maintain conversational context, or accumulate knowledge. This limitation restricts its effectiveness in multi-turn dialogues and personalized applications. Implementing effective memory is a multi-faceted problem that spans efficient data storage, fast and relevant retrieval, summarization of past interactions, and dynamic context management. As AI engineers build agents that are adaptive and anticipatory rather than merely reactive, they need memory frameworks that go well beyond simple conversation history: frameworks that let an agent recall specific facts, learn from past experience, internalize user preferences, and retrieve pertinent context exactly when it is needed, making human-AI interaction more natural and productive.

Large language models (LLMs) have brought powerful generative capabilities to AI, yet their statelessness is a significant hurdle for building intelligent agents: a model retains nothing between calls beyond what is supplied in the prompt. The context window compounds the problem. Because an LLM can only process a finite number of tokens at once, older conversational turns and background knowledge fall out of scope unless they are explicitly re-introduced. This challenge has spurred intense innovation in memory management, producing dedicated layers and systems that augment LLMs with long-term memory. Overcoming these limitations is widely seen as a prerequisite for the next generation of AI applications, from highly personalized digital assistants to complex enterprise problem-solvers, and developers are prioritizing solutions that let agents evolve, learn, and maintain continuity instead of treating every interaction in isolation.
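To make the context-window problem concrete, here is a minimal sketch of the naive strategy many agents start with: keep only the most recent turns that fit a token budget. It assumes a crude one-token-per-word count in place of a real tokenizer, and `fit_to_window` is a hypothetical helper, not part of any library.

```python
from typing import List

def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())

def fit_to_window(turns: List[str], budget: int) -> List[str]:
    """Keep the most recent turns whose combined token count fits in `budget`."""
    kept: List[str] = []
    used = 0
    for turn in reversed(turns):      # walk from newest to oldest
        cost = count_tokens(turn)
        if used + cost > budget:
            break                     # this turn and everything older falls out of scope
        kept.append(turn)
        used += cost
    return list(reversed(kept))       # restore chronological order

history = [
    "user: my name is Ada",
    "assistant: nice to meet you Ada",
    "user: what is the capital of France",
    "assistant: Paris",
    "user: what is my name",
]
window = fit_to_window(history, budget=12)
print(window)  # ['assistant: Paris', 'user: what is my name']
```

With a 12-token budget, the turn in which the user introduced themselves is dropped, so the model can no longer answer "what is my name". Persistent memory layers exist to recover exactly this kind of lost information.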

Evolution of AI Agents and Memory Solutions

The concept of AI agents has evolved considerably, moving from rule-based systems to sophisticated entities leveraging deep learning. Early AI systems often relied on explicit programming for memory, where data was stored in structured databases and retrieved through predefined queries. With the advent of machine learning and, more recently, large language models, the ambition for AI agents shifted towards more autonomous, adaptive, and context-aware behaviors. However, the initial iterations of LLM-powered agents still grappled with short-term memory constraints, often exhibiting "amnesia" between interactions. This limitation spurred the development of specialized memory modules and external knowledge bases. The current phase of innovation, particularly in 2026, is characterized by the emergence of dedicated memory layers and frameworks designed to imbue AI agents with persistent, intelligent memory. These solutions represent a critical step towards agents that can build a cumulative understanding of their environment and users, mimicking human-like cognitive abilities to learn and recall. This chronology highlights a clear trend: from hard-coded memory to dynamic, self-managing, and context-sensitive memory systems that are integral to an agent’s intelligence.

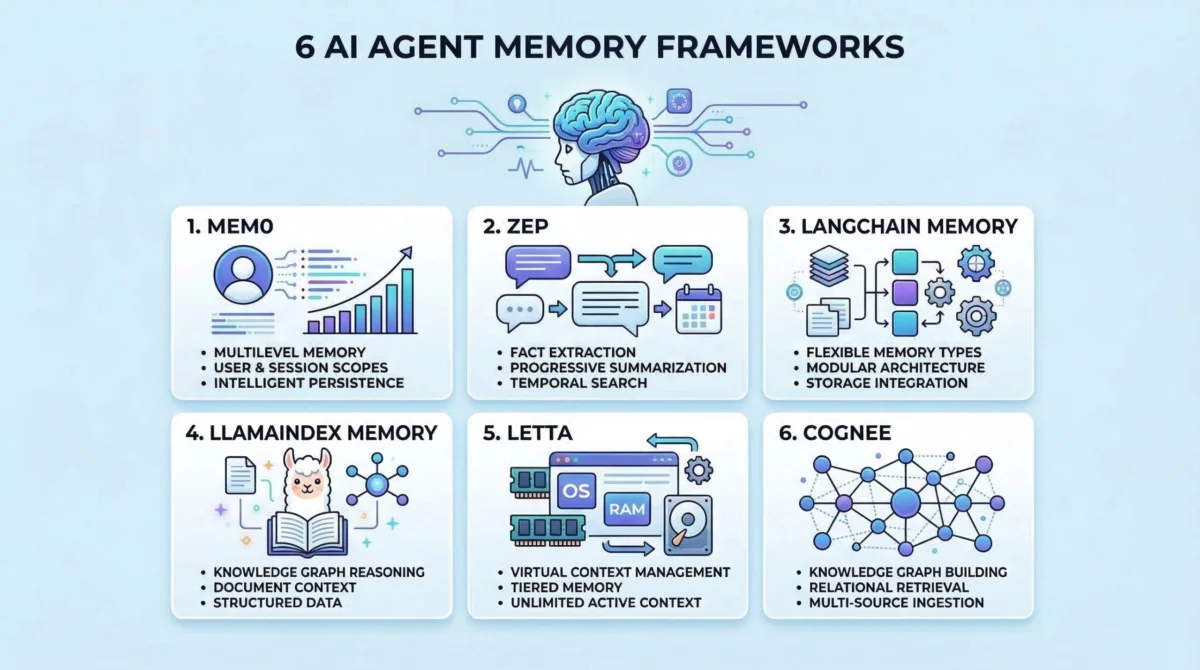

Deep Dive into Leading AI Agent Memory Frameworks

The market for AI agent memory solutions is dynamic, with several innovative frameworks emerging to address the diverse needs of developers. Each framework offers a unique approach to managing, storing, and retrieving information, catering to different architectural preferences and use cases.

1. Mem0: The Intelligent Memory Layer for AI

Mem0 positions itself as a dedicated memory layer specifically engineered for AI applications, providing intelligent and personalized memory capabilities that transcend typical conversational history. Its core strength lies in offering long-term memory that not only persists across multiple sessions but also evolves and adapts based on ongoing interactions. This capability is vital for agents that require a deep, cumulative understanding of users or complex domains.

Mem0’s architecture emphasizes flexibility. It provides several memory types, letting developers choose a suitable storage mechanism for each kind of information, from simple facts to complex relational data. Its retrieval often leverages vector databases and semantic search, so that recalled information is relevant to the current context rather than merely matching keywords. Mem0 also includes built-in summarization and inference: instead of storing only raw data, it can distill key insights and infer new knowledge from its memory stores. This proactive approach helps an agent personalize its responses and keep context consistent, which is particularly useful for customer service bots, personalized learning platforms, and virtual assistants that need to remember past interactions and user preferences. Because the memory evolves with use, agents can become more knowledgeable and effective over time instead of remaining static.
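The retrieval idea described for Mem0 (store memories per user, then return the ones most similar to the current query) can be sketched in a few lines. This is a minimal illustration under stated assumptions: a bag-of-words term-count vector stands in for a real embedding model, and `MemoryStore`, `add`, and `search` are hypothetical names, not Mem0’s actual API.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple

def embed(text: str) -> Counter:
    # Stand-in for a real embedding model: a bag-of-words term-count vector.
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse term-count vectors.
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

class MemoryStore:
    """Hypothetical per-user memory with similarity search (illustrative, not Mem0's API)."""

    def __init__(self) -> None:
        self._items: Dict[str, List[Tuple[str, Counter]]] = {}

    def add(self, user_id: str, fact: str) -> None:
        # Store each fact with its vector so searches never re-embed stored memories.
        self._items.setdefault(user_id, []).append((fact, embed(fact)))

    def search(self, user_id: str, query: str, k: int = 2) -> List[str]:
        # Score only this user's memories, then keep the top-k with non-zero similarity.
        q = embed(query)
        scored = [(cosine(q, vec), fact) for fact, vec in self._items.get(user_id, [])]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [fact for score, fact in scored[:k] if score > 0]

store = MemoryStore()
store.add("alice", "Alice is vegetarian and avoids meat")
store.add("alice", "Alice prefers window seats on flights")
store.add("bob", "Bob is allergic to peanuts")
print(store.search("alice", "suggest a vegetarian dinner", k=1))
# ['Alice is vegetarian and avoids meat']
```

Scoping memories by `user_id` is what keeps one user’s preferences from leaking into another user’s context. A real embedding model would also match paraphrases (for example "meat-free" against "vegetarian"), which this lexical stand-in cannot.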

2. Zep: Optimized Long-Term Memory for Conversational AI

Zep is a long-term memory store built specifically for the demands of conversational AI. Its design centers on extracting salient facts, summarizing long conversations, and supplying precise, relevant context to agents in real time. This focus makes Zep a strong fit for applications where dialogue coherence and contextual understanding are paramount.

Zep’s effectiveness rests on how it processes conversations. It automatically extracts entities, facts, and key themes from ongoing dialogues and structures this information for efficient storage and retrieval, so an agent can grasp the essence of a lengthy conversation without re-processing the entire transcript. Zep also performs dynamic summarization, generating concise summaries of past interactions that can be injected into an LLM’s context window to offset its limited size. Its context block construction aims to retrieve only the most relevant snippets of memory, which reduces token usage and improves the accuracy of agent responses. With a robust API and broad integration options, Zep is a strong contender for scalable conversational agents such as chatbots, customer support automation systems, and interactive digital tutors, where a deep and evolving understanding of the user’s ongoing dialogue is critical.
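The context-block idea can be illustrated with a small assembler that combines a conversation summary with the facts most relevant to the current query, stopping before a token budget is exceeded. This is a sketch under assumptions: the facts are taken as already extracted, relevance is approximated by shared query terms, tokens are counted as regex words, and `build_context_block` is a hypothetical function, not Zep’s API.

```python
import re
from typing import List

def tokens(text: str) -> List[str]:
    # Crude tokenizer used both for relevance scoring and for budget accounting.
    return re.findall(r"[a-z0-9]+", text.lower())

def build_context_block(summary: str, facts: List[str], query: str, budget: int) -> str:
    """Assemble a summary plus the most query-relevant facts within a token budget."""
    q = set(tokens(query))
    # Relevance = number of distinct query terms a fact shares (stand-in for real ranking).
    scored = [(len(q & set(tokens(fact))), fact) for fact in facts]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    lines = [f"SUMMARY: {summary}"]
    used = len(tokens(summary))
    for score, fact in scored:
        if score == 0:
            break                     # sorted descending, so the rest are irrelevant too
        cost = len(tokens(fact))
        if used + cost > budget:
            continue                  # skip a fact that would overflow; a shorter one may fit
        lines.append(f"FACT: {fact}")
        used += cost
    return "\n".join(lines)

summary = "Customer is troubleshooting a router"
facts = [
    "Router model is AX3000",
    "Customer lives in Berlin",
    "Firmware version is 1.2 on the router",
]
query = "how do I update the router firmware"
print(build_context_block(summary, facts, query, budget=14))
# SUMMARY: Customer is troubleshooting a router
# FACT: Firmware version is 1.2 on the router
```

Using `continue` rather than `break` on an over-budget fact lets a shorter, lower-ranked fact still fill the remaining space, which is one simple way to trade ranking order against token usage.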

3. LangChain Memory: The Versatile Ecosystem Component

LangChain, a prominent framework for developing LLM-powered applications, includes a comprehensive memory module that stands out for its versatility and seamless integration within its broader ecosystem. This module offers a diverse array of memory types and strategies, empowering developers to select the most appropriate approach for various use cases, from simple conversational buffers to complex knowledge graphs.

The strength of LangChain’s memory module lies in its modularity and flexibility. It supports multiple memory strategies, including simple buffer memory for short-term recall, summary memory for condensing long conversations, and vector store-backed memory for semantic retrieval of information from external knowledge bases. This adaptability allows developers to implement sophisticated memory hierarchies, combining different types to achieve optimal performance and contextual awareness. Its tight integration with other LangChain components, such as agents, chains, and retrievers, means that memory can be effortlessly incorporated into complex application workflows. For AI engineers building agents that need to interact with various data sources, perform multi-step reasoning, or maintain complex state, LangChain’s memory module provides a robust and extensible foundation. It is particularly well-suited for building general-purpose AI agents, multi-agent systems, and applications requiring dynamic memory management based on the specific interaction context.
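A common way to combine the buffer and summary strategies mentioned above is a two-tier memory: the last few turns are kept verbatim, and turns that fall out of the window are folded into a running summary. The sketch below assumes that structure; `BufferWithSummaryMemory` and `naive_summarize` are hypothetical names rather than LangChain classes, and the summarizer is a placeholder for what would normally be an LLM call.

```python
from collections import deque
from typing import Callable, Deque, List

class BufferWithSummaryMemory:
    """Hypothetical two-tier memory: verbatim recent turns plus a running summary of older ones."""

    def __init__(self, window: int, summarize: Callable[[str, str], str]) -> None:
        self.recent: Deque[str] = deque()
        self.window = window
        self.summary = ""
        self.summarize = summarize    # (old_summary, evicted_turn) -> new_summary

    def add_turn(self, turn: str) -> None:
        self.recent.append(turn)
        if len(self.recent) > self.window:
            evicted = self.recent.popleft()          # oldest verbatim turn leaves the buffer
            self.summary = self.summarize(self.summary, evicted)

    def load(self) -> List[str]:
        # What would be placed in the prompt: summary first, then the verbatim window.
        prefix = [f"Summary so far: {self.summary}"] if self.summary else []
        return prefix + list(self.recent)

def naive_summarize(summary: str, turn: str) -> str:
    # Placeholder for an LLM summarization call: keeps the first clause of each evicted turn.
    gist = turn.split(",")[0]
    return f"{summary}; {gist}" if summary else gist

mem = BufferWithSummaryMemory(window=2, summarize=naive_summarize)
for t in [
    "user: I'm planning a trip to Japan, probably in April",
    "assistant: Great, cherry blossom season",
    "user: I need a hotel in Kyoto",
    "assistant: Any budget?",
]:
    mem.add_turn(t)
print(mem.load())
```

The design keeps prompt size roughly constant: the verbatim window is bounded by `window`, and only the summary grows, at whatever rate the summarizer allows.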

4. LlamaIndex Memory: Powering Knowledge-Intensive Agents

LlamaIndex, known for its powerful data framework, extends its capabilities to include robust memory features specifically designed for agents that need to remember and reason over structured information and vast document collections. Its strength lies in its ability to transform unstructured data into queryable, indexable formats, making it ideal for knowledge-intensive AI applications.

LlamaIndex Memory excels in managing and querying large external knowledge bases. It leverages advanced indexing techniques, including vector indexing and graph indexing, to efficiently store and retrieve information from documents, databases, and APIs. This allows agents to access a broad spectrum of external knowledge and integrate it into their reasoning processes. The framework’s ability to handle diverse data types and integrate with various data sources makes it invaluable for agents that require deep factual recall or need to synthesize information from multiple documents. LlamaIndex facilitates both short-term conversational memory and long-term knowledge retention, enabling agents to answer complex questions, generate comprehensive reports, or perform in-depth analyses by drawing upon their structured memory. This makes it a preferred choice for building enterprise-grade agents, research assistants, and expert systems that operate on extensive and often evolving knowledge domains.
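The indexing step described above (turning documents into retrievable units that remember where they came from) can be sketched with fixed-size chunking and an inverted keyword index. This is an illustration under assumptions: word-window chunking and term-overlap scoring stand in for the richer vector and graph indexes the text mentions, and `Node`, `chunk`, and `KeywordIndex` are hypothetical names, not LlamaIndex’s API.

```python
import re
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Set

@dataclass
class Node:
    doc_id: str      # provenance: which document the chunk came from
    position: int    # chunk index within that document
    text: str

def terms(text: str) -> List[str]:
    return re.findall(r"[a-z0-9]+", text.lower())

def chunk(doc_id: str, text: str, size: int) -> List[Node]:
    """Split a document into fixed-size word windows, keeping a pointer to the source."""
    words = text.split()
    return [Node(doc_id, i // size, " ".join(words[i:i + size])) for i in range(0, len(words), size)]

class KeywordIndex:
    """Hypothetical inverted index over chunks (illustrative, not LlamaIndex's API)."""

    def __init__(self) -> None:
        self.nodes: List[Node] = []
        self.postings: Dict[str, Set[int]] = defaultdict(set)   # term -> ids of chunks containing it

    def insert(self, nodes: List[Node]) -> None:
        for node in nodes:
            node_id = len(self.nodes)
            self.nodes.append(node)
            for term in terms(node.text):
                self.postings[term].add(node_id)

    def query(self, question: str, k: int = 2) -> List[Node]:
        # Score chunks by how many distinct query terms they contain; ties go to earlier chunks.
        hits: Dict[int, int] = defaultdict(int)
        for term in set(terms(question)):
            for node_id in self.postings.get(term, set()):
                hits[node_id] += 1
        best = sorted(hits, key=lambda n: (-hits[n], n))[:k]
        return [self.nodes[n] for n in best]

index = KeywordIndex()
index.insert(chunk("policy.txt", "Refunds are issued within 30 days of purchase. Shipping is free for orders over 50 euros.", size=8))
index.insert(chunk("faq.txt", "Support is available on weekdays. Refunds require a receipt.", size=8))
for node in index.query("how long do refunds take"):
    print(node.doc_id, node.position, node.text)
```

Keeping `doc_id` and `position` on every chunk is what lets an agent cite the source of a retrieved passage when it synthesizes an answer from several documents.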

5. Letta: The Operating System Inspired Context Manager

Letta takes a distinctive approach to AI agent memory, drawing inspiration from operating systems. It implements a virtual context management system that orchestrates the movement of information between an agent’s immediate processing context and its long-term storage. This directly addresses the limited context windows of LLMs by actively managing which information is "loaded" into working memory at any given time.

What distinguishes Letta is its "virtual context" paradigm. Much as an operating system manages virtual memory, Letta pages relevant information into the LLM’s active context as needed and offloads less critical data to long-term storage. This keeps the most pertinent information at hand without exceeding token limits, leading to more coherent and contextually accurate responses over extended interactions. Letta’s design also emphasizes stateful agents that maintain complex internal state and pursue multi-step goals consistently. Its management of an agent’s "working memory" makes it well suited to complex task execution, multi-stage problem-solving, and scenarios where an agent must sustain a coherent narrative or plan across many interactions. The framework is particularly suited for long-duration conversational agents and autonomous systems requiring sophisticated state management, with potential applications in areas such as advanced robotic control.
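The virtual-memory analogy can be made concrete with a small pager: a bounded working context holds the items currently visible to the model, the least recently used items are paged out to archival storage when the budget is exceeded, and a recall of an archived item pages it back in. This is a sketch under assumptions: eviction here is an automatic LRU policy with word-count token estimates, and `VirtualContext` with its methods is a hypothetical class, not Letta’s API.

```python
from collections import OrderedDict
from typing import Dict, List

class VirtualContext:
    """Hypothetical OS-style pager: bounded working context backed by unbounded archival storage."""

    def __init__(self, capacity_tokens: int) -> None:
        self.capacity = capacity_tokens
        self.working: "OrderedDict[str, str]" = OrderedDict()   # least recently used first
        self.archive: Dict[str, str] = {}

    @staticmethod
    def _cost(text: str) -> int:
        return len(text.split())      # crude token estimate

    def _used(self) -> int:
        return sum(self._cost(t) for t in self.working.values())

    def _evict_until_fits(self) -> None:
        # Page out least recently used items, always keeping at least the newest one.
        while self._used() > self.capacity and len(self.working) > 1:
            key, text = self.working.popitem(last=False)
            self.archive[key] = text

    def write(self, key: str, text: str) -> None:
        self.archive.pop(key, None)   # drop any stale archived copy
        self.working[key] = text
        self.working.move_to_end(key)
        self._evict_until_fits()

    def recall(self, key: str) -> str:
        if key in self.working:
            self.working.move_to_end(key)                 # mark as recently used
        else:
            self.working[key] = self.archive.pop(key)     # "page fault": load from archive
        self._evict_until_fits()
        return self.working[key]

    def prompt_view(self) -> List[str]:
        return list(self.working.values())                # what the LLM would actually see

ctx = VirtualContext(capacity_tokens=10)
ctx.write("persona", "user is a vegetarian chef")          # 5 tokens
ctx.write("task", "plan a five course tasting menu")       # 6 tokens: persona is paged out
ctx.write("note", "guests arrive at eight")                # 4 tokens: exactly at capacity
print(ctx.prompt_view())  # ['plan a five course tasting menu', 'guests arrive at eight']
ctx.recall("persona")                                      # paging persona in evicts task
print(ctx.prompt_view())  # ['guests arrive at eight', 'user is a vegetarian chef']
```

Nothing is ever lost in this scheme; information only moves between the working context and the archive, which is the property that lets an agent run far longer than a single context window.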

6. Cognee: The Knowledge Graph for Dynamic AI Understanding

Cognee is an open-source memory and knowledge graph layer for AI applications, designed to go beyond mere text storage by structuring, connecting, and retrieving information with exceptional precision. It aims to provide agents with a dynamic, queryable understanding of data, transforming raw information into interconnected knowledge that fuels intelligent reasoning.

Cognee’s core innovation lies in its native support for knowledge graphs. It automatically extracts entities, relationships, and events from diverse data sources and organizes them into a structured graph format. This allows agents to not only recall facts but also understand the intricate connections between them, enabling more sophisticated reasoning and inference. The framework provides powerful querying capabilities, allowing agents to traverse the knowledge graph to discover hidden relationships or answer complex questions that require synthesizing information from multiple nodes. By providing agents with an interconnected web of knowledge, Cognee empowers them to exhibit a deeper understanding of the world, anticipate user needs, and generate more insightful responses. This makes it an ideal solution for building advanced recommendation engines, expert systems, and agents that require a profound, structured understanding of complex domains to perform tasks such as anomaly detection, causal analysis, or strategic planning.
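Graph traversal is what lets an agent answer questions whose answer spans several facts, such as "which plant is affected if Supplier A fails?". The sketch below stores (subject, relation, object) triples and uses breadth-first search to find the shortest chain of triples linking two entities. It assumes the triples have already been extracted, treats edges as directed, and `KnowledgeGraph` and `find_path` are hypothetical names, not Cognee’s API.

```python
from collections import defaultdict, deque
from typing import Dict, List, Optional, Tuple

Triple = Tuple[str, str, str]   # (subject, relation, object)

class KnowledgeGraph:
    """Hypothetical triple store with multi-hop path search (illustrative, not Cognee's API)."""

    def __init__(self) -> None:
        self.edges: Dict[str, List[Tuple[str, str]]] = defaultdict(list)

    def add(self, subject: str, relation: str, obj: str) -> None:
        self.edges[subject].append((relation, obj))

    def find_path(self, start: str, goal: str, max_hops: int = 3) -> Optional[List[Triple]]:
        """Breadth-first search for the shortest chain of triples linking start to goal."""
        queue = deque([(start, [])])
        seen = {start}
        while queue:
            node, path = queue.popleft()
            if node == goal:
                return path
            if len(path) == max_hops:
                continue                  # do not expand beyond the hop limit
            for relation, nxt in self.edges.get(node, []):
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append((nxt, path + [(node, relation, nxt)]))
        return None                       # no connection within max_hops

kg = KnowledgeGraph()
kg.add("Supplier A", "supplies", "Part X")
kg.add("Part X", "used_in", "Pump 7")
kg.add("Pump 7", "installed_at", "Plant North")
kg.add("Supplier A", "located_in", "Region East")
for triple in kg.find_path("Supplier A", "Plant North"):
    print(triple)
```

No single stored fact links Supplier A to Plant North; the answer only emerges from chaining three of them, which is the kind of reasoning a flat list of text snippets does not support directly.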

Broader Implications of Advanced AI Memory

The proliferation and refinement of AI agent memory frameworks carry profound implications for the future of artificial intelligence. Better memory translates directly into more capable, reliable, and user-centric AI systems. For enterprises, this means the potential for efficient automated customer service, personalized marketing campaigns, and decision-support systems that learn and adapt over time. In healthcare, memory-equipped AI agents could assist with patient history analysis, drug interaction monitoring, and personalized treatment plans, provided they operate under strict data-privacy controls. For individual users, the promise is personal assistants that understand their preferences, remember past conversations, and proactively offer relevant support, moving beyond today’s relatively stateless digital helpers.

However, the advancement of AI memory also presents new challenges. Issues such as data privacy, ethical memory management, and the potential for biases embedded in long-term data necessitate careful consideration. Ensuring that AI agents "forget" when appropriate, or that their memories are auditable and explainable, will be critical for public trust and regulatory compliance. The development of robust memory frameworks is not just a technical endeavor; it is a socio-technical one that requires continuous dialogue between engineers, ethicists, policymakers, and end-users to shape a responsible and beneficial future for AI.
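Forgetting and auditability can be designed into a memory store from the start. The sketch below assumes two mechanisms: a per-record retention period (TTL) enforced before every read, and explicit deletion on request, with every write, read, and deletion appended to an audit log. `AuditableMemory` is a hypothetical class, and the injectable `clock` exists only to make the example deterministic.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple

@dataclass
class Record:
    text: str
    created_at: float
    ttl: Optional[float]    # seconds to retain; None means keep until explicitly deleted

class AuditableMemory:
    """Hypothetical store with retention limits, explicit forgetting, and an audit trail."""

    def __init__(self, clock: Callable[[], float]) -> None:
        self.clock = clock
        self.records: Dict[str, Record] = {}
        self.audit_log: List[Tuple[float, str, str]] = []   # (timestamp, action, key)

    def _log(self, action: str, key: str) -> None:
        self.audit_log.append((self.clock(), action, key))

    def remember(self, key: str, text: str, ttl: Optional[float] = None) -> None:
        self.records[key] = Record(text, self.clock(), ttl)
        self._log("write", key)

    def forget(self, key: str, reason: str = "user_request") -> None:
        if self.records.pop(key, None) is not None:
            self._log(f"forget:{reason}", key)

    def purge_expired(self) -> None:
        now = self.clock()
        expired = [k for k, r in self.records.items()
                   if r.ttl is not None and now - r.created_at >= r.ttl]
        for key in expired:
            self.forget(key, reason="expired")

    def recall(self, key: str) -> Optional[str]:
        self.purge_expired()          # never serve data past its retention window
        record = self.records.get(key)
        if record is not None:
            self._log("read", key)
        return record.text if record else None

now = [0.0]
mem = AuditableMemory(clock=lambda: now[0])
mem.remember("diet", "user is vegetarian")
mem.remember("session_location", "user is in Lisbon", ttl=3600)
mem.forget("diet")                    # explicit user request
now[0] = 7200.0                       # two hours later
print(mem.recall("session_location"))  # None
for entry in mem.audit_log:
    print(entry)
```

The audit log records that something was forgotten and why, without retaining the forgotten content itself. A production system would also need to propagate deletions to derived artifacts such as summaries and embeddings.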

Challenges and Future Outlook

While the frameworks discussed represent significant strides, the journey to perfect AI agent memory is ongoing. Challenges persist in scaling memory solutions to truly massive knowledge bases, ensuring real-time retrieval performance, and developing more sophisticated mechanisms for memory compression and consolidation to manage costs and complexity. The integration of episodic memory (remembering specific events) and semantic memory (remembering facts and concepts) in a seamless, synergistic manner remains an active area of research.
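One simple policy for consolidation, and for bridging episodic and semantic memory, is to promote an observation to a lasting fact once it has recurred across enough distinct sessions, then drop the raw episodes it summarizes. The sketch below assumes that policy and assumes observations are already normalized strings; `Episode` and `consolidate` are hypothetical names, and real systems typically use an LLM or clustering to decide which episodes express the same fact.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple

@dataclass(frozen=True)
class Episode:
    session_id: str
    observation: str        # assumed already normalized, e.g. "prefers: dark mode"

def consolidate(episodes: List[Episode], min_sessions: int) -> Tuple[List[str], List[Episode]]:
    """Promote observations seen in >= min_sessions distinct sessions to semantic facts.

    Returns (semantic_facts, remaining_episodes). Consolidated episodes are dropped,
    which is where the storage saving comes from.
    """
    sessions_by_obs: Dict[str, Set[str]] = defaultdict(set)
    for ep in episodes:
        sessions_by_obs[ep.observation].add(ep.session_id)
    facts = sorted(obs for obs, sessions in sessions_by_obs.items() if len(sessions) >= min_sessions)
    promoted = set(facts)
    remaining = [ep for ep in episodes if ep.observation not in promoted]
    return facts, remaining

log = [
    Episode("s1", "prefers: dark mode"),
    Episode("s1", "asked: export to PDF"),
    Episode("s2", "prefers: dark mode"),
    Episode("s3", "prefers: dark mode"),
    Episode("s3", "asked: keyboard shortcuts"),
]
facts, remaining = consolidate(log, min_sessions=2)
print(facts)           # ['prefers: dark mode']
print(len(remaining))  # 2
```

Counting distinct sessions rather than raw occurrences keeps a single repetitive conversation from being mistaken for a stable preference.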

The future of AI agent memory is likely to see further convergence of these diverse approaches. Hybrid models that combine the strengths of knowledge graphs, vector databases, and intelligent context managers are expected to become more prevalent. Moreover, advancements in neuromorphic computing and new memory architectures could potentially offer hardware-level solutions to some of the current software-based challenges. As AI agents become more deeply embedded in our daily lives and professional workflows, the sophistication and reliability of their memory systems will be a defining factor in their success, paving the way for a new era of truly intelligent and adaptive AI.

Conclusion

The landscape of AI agent memory frameworks in 2026 is rich with innovation, offering developers powerful tools to overcome the inherent limitations of stateless AI. From dedicated memory layers like Mem0 to conversational memory specialists like Zep, versatile ecosystems like LangChain and LlamaIndex, operating system-inspired approaches like Letta, and knowledge graph pioneers like Cognee, each framework brings a unique set of capabilities to the table. These solutions are not merely technical enhancements; they are foundational elements enabling the next generation of AI agents to be more intelligent, personalized, and genuinely useful. For AI engineers and organizations, understanding and strategically adopting these frameworks will be critical to unlocking the full potential of AI, moving towards a future where intelligent agents can learn, adapt, and remember, truly transforming human-computer interaction.