Over the past two years, the economics of software development have shifted. AI coding agents have made producing code dramatically faster, while verifying that the code is correct and safe has become a significant and growing challenge. This inversion is most pronounced in complex enterprise environments, where it is forcing a re-evaluation of development infrastructure and processes that were never designed for the speed at which AI can now generate code.

For individual developers working on standalone applications, the impact of coding agents has been immediate and obvious. The traditional feedback loop of writing, running, observing, and adjusting has been compressed: agents suggest snippets, refactor existing logic, and generate entire functions within a tight, local iteration cycle. For simpler projects, this jump in output velocity is a clear win.

Enterprise software is different. There, applications are often composed of dozens or even hundreds of microservices owned by independent teams, and the gap between code generation and validation is widening into a critical bottleneck. An AI agent can refactor a single service in seconds, work that might previously have taken a person hours or days. Proving that the change actually works, integrates cleanly with other services, and introduces no regressions, however, still depends on infrastructure and validation processes built for a slower, more deliberate pace of development, and they are struggling to keep up with the output of AI.

The industry has long talked about "shifting left": moving testing and quality assurance earlier in the development lifecycle. Coding agents are poised not merely to encourage this shift but to make it mandatory. Forward-thinking platform engineering teams recognize this and are building the infrastructure it requires, infrastructure that gives developers and AI agents both access to realistic development environments and the tools to validate code safely and efficiently against the complex, interconnected reality of the entire application dependency graph.

The CI Feedback Loop: A Bottleneck Too Late

In most enterprise organizations, "safety" in software development has historically meant a Continuous Integration (CI) pipeline, which typically runs only after a pull request (PR) has been opened for review. That model worked when developers produced a handful of PRs per week. It breaks down when AI agents let each developer produce a handful of PRs per day, at which point the CI process itself becomes a significant impediment.

The arithmetic is stark. If each code change needs an average of 30 minutes of validation in a shared staging environment, and an agent-assisted developer opens six PRs a day, that is three hours of validation per developer per day, before counting any time spent queuing behind teammates for the same environment. Much of the developer’s day goes to waiting in a deployment queue rather than building software. The agent accelerates code output, but if the validation system around it stays slow, that velocity hits a hard wall. The real bottleneck in modern software development is no longer how fast code can be written; it is how fast that code can be reliably validated. By the time code reaches a CI pipeline, it is already too late. Validation has to happen inside the development loop, not in a stage that begins after the loop has ended.
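
The arithmetic can be made explicit with a small Python sketch. The 30-minute validation time and six PRs per day come from the scenario above; the 8-hour workday, the 5-person team, and the assumption that a shared staging environment validates one change at a time are hypothetical, added only to show how a shared queue compounds the per-developer cost.

```python
# Back-of-the-envelope model of time spent in validation on a shared staging
# environment. The 30-minute and six-PR figures come from the text; the
# 8-hour workday and 5-person team are hypothetical assumptions.

VALIDATION_MINUTES_PER_PR = 30
PRS_PER_DEVELOPER_PER_DAY = 6
WORKDAY_MINUTES = 8 * 60   # assumed 8-hour workday
TEAM_SIZE = 5              # hypothetical team sharing one staging environment


def daily_validation_minutes(prs_per_day: int, minutes_per_pr: int) -> int:
    """Minutes one developer's changes spend in validation per day."""
    return prs_per_day * minutes_per_pr


def shared_env_minutes(team_size: int, prs_per_day: int, minutes_per_pr: int) -> int:
    """Validation minutes a whole team needs from one shared environment per day,
    assuming that environment can validate only one change at a time."""
    return team_size * daily_validation_minutes(prs_per_day, minutes_per_pr)


per_dev = daily_validation_minutes(PRS_PER_DEVELOPER_PER_DAY, VALIDATION_MINUTES_PER_PR)
team = shared_env_minutes(TEAM_SIZE, PRS_PER_DEVELOPER_PER_DAY, VALIDATION_MINUTES_PER_PR)

print(f"per developer: {per_dev} min/day ({per_dev / WORKDAY_MINUTES:.0%} of a workday)")
print(f"team of {TEAM_SIZE}: {team} min/day of serialized validation "
      f"({team / WORKDAY_MINUTES:.2f} workdays)")
```

Under these assumptions each developer's changes need 180 minutes of validation a day, and the team needs 900 minutes a day from an environment that has 480 to give, so the queue grows faster than it drains.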

The Complexity Ceiling for AI Agents

The validation challenge grows with system complexity. For a monolith or a relatively simple API, an AI agent can often run local tests and gain reasonable confidence in its changes. That approach breaks down in cloud-native distributed systems made up of dozens of interdependent services.

When a seemingly minor change in one service ripples across multiple downstream dependencies, an AI agent without access to realistic infrastructure is effectively working blind. It can generate code that looks correct in isolation but fails once deployed, because the agent has no visibility into how the broader system actually behaves at runtime. It cannot trace a request as it flows through the system, observe how a schema change affects a downstream consumer, or verify that a new endpoint behaves correctly when called by the services that actually depend on it.

The result is a frustrating, inefficient cycle. The agent generates a PR; the developer reviews it manually, deploys it to a shared environment, and waits for validation to finish; a subtle side effect surfaces that only appears under real infrastructure conditions; and the whole process starts over. The agent did its job. The system around it failed to give the agent the context it needed to do that job well.

The Foundation: Kubernetes Sandboxes for Ephemeral Environments

Closing this gap requires a fundamental change in how development and validation environments are provisioned and used. A critical piece is giving AI agents access to realistic infrastructure without the prohibitive overhead of duplicating an entire production or staging cluster for every development iteration.

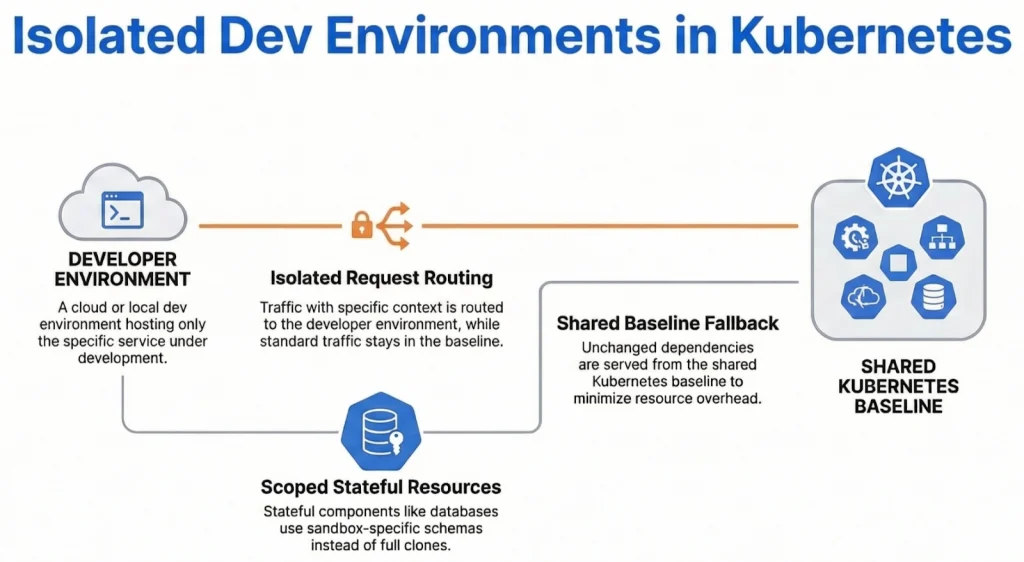

One promising approach uses a service mesh such as Istio or Linkerd to create "sandboxes": lightweight, ephemeral environments that use request routing to provide realistic runtime behavior without replicating the full environment. Instead of provisioning a complete copy of the staging cluster for each code change, a sandbox deploys only the modified service. Requests that carry a sandbox-specific marker, such as a routing key in a request header, are routed through the modified service, while all other traffic continues to flow through the established, shared services.
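
The routing decision can be modeled in a few lines of Python. This is a minimal sketch, not how Istio, Linkerd, or Signadot implement it: the `x-routing-key` header, the service name, and the URLs are hypothetical, and a real mesh expresses the same idea as routing rules rather than application code.

```python
# Minimal model of header-based sandbox routing. The "x-routing-key" header,
# service names, and URLs are hypothetical; in practice a service mesh such as
# Istio or Linkerd applies equivalent rules, and this only models the decision.

from dataclasses import dataclass, field

ROUTING_HEADER = "x-routing-key"  # hypothetical header name


@dataclass
class Service:
    name: str
    baseline_url: str
    # Sandbox routing key -> URL of the modified version running in that sandbox.
    sandboxes: dict[str, str] = field(default_factory=dict)

    def resolve(self, headers: dict[str, str]) -> str:
        """Pick the destination for one request to this service."""
        key = headers.get(ROUTING_HEADER)
        if key is not None and key in self.sandboxes:
            return self.sandboxes[key]  # tagged request -> modified service
        return self.baseline_url        # everything else -> shared baseline


def propagate(incoming: dict[str, str], outgoing: dict[str, str]) -> dict[str, str]:
    """Copy the routing header onto an outbound call so downstream hops keep
    routing the request to the same sandbox."""
    if ROUTING_HEADER in incoming:
        return {**outgoing, ROUTING_HEADER: incoming[ROUTING_HEADER]}
    return dict(outgoing)


payments = Service(
    "payments",
    "http://payments.staging.svc",
    sandboxes={"sbx-123": "http://payments-sbx-123.staging.svc"},
)
print(payments.resolve({}))                           # baseline URL
print(payments.resolve({ROUTING_HEADER: "sbx-123"}))  # sandbox URL
```

The `propagate` helper makes one assumption of this model explicit: header-based routing only works across several hops if every service forwards the routing header on its outbound calls.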

Because each sandbox runs only the services that changed, the cost per environment drops to a fraction of a full replica, and sandboxes can be spun up in seconds rather than minutes. That changes the economics. When development environments are cheap, fast, and disposable, they stop being a scarce resource that developers compete for and become tools that AI agents can create and call programmatically as a routine part of their workflow, testing changes against a live, representative version of the entire system without blocking other developers.
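
A rough cost model shows where the savings come from. It assumes every service costs the same to run and ignores the shared baseline cluster and mesh that both approaches pay for; the 50-service system and the unit cost are hypothetical.

```python
# Rough per-environment cost model: a full replica duplicates every service,
# while a sandbox deploys only the services that changed. The 50-service
# system and unit cost are hypothetical, and shared costs that both approaches
# pay (the baseline cluster, the mesh) are ignored.


def full_replica_cost(total_services: int, cost_per_service: float) -> float:
    """Cost of one environment that copies every service."""
    return total_services * cost_per_service


def sandbox_cost(modified_services: int, cost_per_service: float) -> float:
    """Cost of one sandbox that runs only the modified services."""
    return modified_services * cost_per_service


TOTAL_SERVICES = 50      # hypothetical system size
COST_PER_SERVICE = 1.0   # hypothetical cost units per service

replica = full_replica_cost(TOTAL_SERVICES, COST_PER_SERVICE)
sandbox = sandbox_cost(1, COST_PER_SERVICE)
print(f"full replica: {replica}, sandbox: {sandbox}, sandbox/replica: {sandbox / replica:.0%}")
```

Under this simplification a sandbox costs the number of modified services divided by the total number of services, relative to a full replica, so a one-service change in a 50-service system costs about 2% of a full copy. Real ratios depend on how much individual services differ in size.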

Access to realistic infrastructure is not enough on its own. An AI agent also needs structured, reliable, and predictable ways to interact with that infrastructure, and enterprise teams need confidence that agents can validate code consistently and safely across the entire organization. This is the next frontier for platform engineering. Just as platform teams today provide CI pipelines, deployment tooling, and observability as shared services, they will increasingly need to offer integrated validation capabilities that developers and AI agents can use directly during development.

The core insight is that validation in a distributed system is not a single, monolithic check but a composed sequence of orchestrated steps: provisioning infrastructure, exercising the interactions between services, and verifying the results.
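
Treating validation as composed steps can be sketched as a small runner that executes provisioning, interaction, and verification in order, stops at the first failure, and always tears the environment down. The step functions here are stubs standing in for real infrastructure calls, and the runner and all of its names are hypothetical.

```python
# Minimal sketch of validation as a composed sequence of steps: provision,
# exercise, verify, with teardown guaranteed. Step names, the shared context
# dict, and the stubbed step bodies are hypothetical stand-ins for real calls.

from typing import Any, Callable

Context = dict[str, Any]
Step = Callable[[Context], None]  # a step reads/writes the context and raises on failure


def run_validation(steps: list[tuple[str, Step]], teardown: Step) -> tuple[bool, list[str]]:
    """Run steps in order, stop at the first failure, and always tear down."""
    ctx: Context = {}
    log: list[str] = []
    try:
        for name, step in steps:
            try:
                step(ctx)
            except Exception as exc:
                log.append(f"FAIL {name}: {exc}")
                return False, log
            log.append(f"ok {name}")
        return True, log
    finally:
        teardown(ctx)  # release the environment whether validation passed or not


def provision(ctx: Context) -> None:
    ctx["sandbox"] = "sbx-demo"  # stand-in for creating an ephemeral sandbox


def exercise(ctx: Context) -> None:
    ctx["response"] = {"status": 200}  # stand-in for calling services in the sandbox


def verify(ctx: Context) -> None:
    status = ctx["response"]["status"]
    if status != 200:
        raise AssertionError(f"unexpected status {status}")


def release(ctx: Context) -> None:
    ctx.pop("sandbox", None)  # stand-in for deleting the sandbox


passed, log = run_validation(
    [("provision", provision), ("exercise", exercise), ("verify", verify)], release
)
print(passed, log)
```

Guaranteeing teardown in a `finally` block matters for ephemeral environments: a failed validation should not leave a sandbox running.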

Closing the Loop: From Generation to Verified Delivery

The end goal is straightforward. When a coding agent generates a code change, it should be able to verify that change against realistic infrastructure on its own before presenting it to a human developer. The developer then receives not just a pull request but a "proof of correctness": a record showing that the agent tested its work against live services, that critical integration points behave as expected, and that no regressions were introduced.
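
One way to picture such a record is as structured data attached to the change. The class and field names below are hypothetical, not a format the article defines; the sketch only shows a record that keeps per-check evidence and derives an overall verdict from it.

```python
# Hypothetical shape for the "proof of correctness" record attached to a
# change. Field names are illustrative assumptions; the point is that the
# record carries evidence for each check rather than a single pass/fail bit.

import json
from dataclasses import asdict, dataclass, field


@dataclass
class CheckResult:
    name: str       # what was checked, e.g. an integration point
    kind: str       # "integration" or "regression" in this sketch
    passed: bool
    evidence: str   # pointer to logs, responses, or traces captured in the sandbox


@dataclass
class ValidationProof:
    change_id: str
    sandbox: str
    checks: list[CheckResult] = field(default_factory=list)

    @property
    def verified(self) -> bool:
        # Verified only if at least one check ran and every check passed.
        return bool(self.checks) and all(c.passed for c in self.checks)

    def to_json(self) -> str:
        # asdict() serializes the fields; the derived flag is added explicitly.
        return json.dumps({**asdict(self), "verified": self.verified}, indent=2)


proof = ValidationProof(
    change_id="change-42",
    sandbox="sbx-demo",
    checks=[
        CheckResult("checkout -> payments call", "integration", True, "logs/checkout.txt"),
        CheckResult("order totals unchanged", "regression", True, "diffs/orders.json"),
    ],
)
print(proof.to_json())
```

Treating an empty record as unverified is a deliberate choice in this sketch: a change with no checks has no evidence behind it.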

This approach collapses the traditional multi-stage CI feedback loop into the development phase itself. The long cycle of "write, commit, open PR, wait for CI, discover failure, and fix" becomes "write, validate, present verified result," which shortens cycle times and speeds the delivery of high-quality, robust software.

Signadot’s Approach: The Skills Framework

At Signadot, we are building toward this vision with what we call the "Skills" framework. Skills run on our ephemeral sandbox infrastructure and draw on a curated library of platform-governed primitives we call "Actions." Actions include sending an HTTP request to a specific service within a sandbox, capturing logs, and asserting that a service’s response matches an expected schema.
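
Two of the primitives described above can be sketched in Python to show what a small, deterministic Action might look like. This is not Signadot's API: the function names, the pluggable transport, and the minimal field-to-type schema format are assumptions. The transport is injectable so the example runs without a cluster.

```python
# Hypothetical sketch of two Action-style primitives: send an HTTP request to
# a service and assert that the response matches an expected schema. This is
# not Signadot's API; the names, the pluggable transport, and the minimal
# "field -> type" schema format are assumptions for illustration.

import json
import urllib.request
from typing import Any, Callable

Transport = Callable[[str], tuple[int, bytes]]  # url -> (status code, body bytes)


def urllib_transport(url: str) -> tuple[int, bytes]:
    """Default transport. Note: urlopen raises HTTPError on 4xx/5xx responses."""
    with urllib.request.urlopen(url, timeout=10) as resp:
        return resp.status, resp.read()


def http_get_action(url: str, transport: Transport = urllib_transport) -> dict[str, Any]:
    """Action: call a service and return a structured, loggable result."""
    status, body = transport(url)
    return {"url": url, "status": status, "json": json.loads(body) if body else None}


def assert_schema_action(result: dict[str, Any], schema: dict[str, type]) -> list[str]:
    """Action: return a list of schema violations (empty means the check passed)."""
    payload = result.get("json")
    if not isinstance(payload, dict):
        return ["response body is not a JSON object"]
    return [
        f"{key}: expected {typ.__name__}"
        for key, typ in schema.items()
        if not isinstance(payload.get(key), typ)
    ]


def fake_transport(url: str) -> tuple[int, bytes]:
    """Offline stand-in for a service running in a sandbox."""
    return 200, b'{"order_id": "o-1", "total": 42.0}'


result = http_get_action("http://orders.sbx-demo.svc/orders/o-1", transport=fake_transport)
print(assert_schema_action(result, {"order_id": str, "total": float}))
```

Returning a list of violations instead of raising keeps each Action's result structured, so it can be recorded as evidence alongside logs.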

The platform team governs each Action individually, so critical security and compliance requirements are enforced at the most granular level instead of being bolted on as an afterthought. Because Actions are deterministic, a given Action behaves the same way no matter who composes it, which lets platform teams give developers and AI agents the flexibility to build their own validation workflows without sacrificing consistency in how code is validated across the organization.
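
Per-Action governance can be pictured as a policy the platform team publishes and a check that every user-composed workflow must pass before it runs. The `Policy` fields (allowed Actions, allowed host suffixes, a timeout ceiling) are hypothetical examples of boundaries a platform team might set. Because the check is a pure function of the workflow and the policy, the same workflow is accepted or rejected identically no matter who or what composed it.

```python
# Hypothetical sketch of platform governance over Actions: the platform team
# publishes a policy, and every step of a user-composed workflow is checked
# against it before anything runs. Policy fields are illustrative assumptions.

from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class Policy:
    allowed_actions: frozenset[str]
    allowed_host_suffixes: tuple[str, ...]  # e.g. only in-cluster service names
    max_timeout_s: int


@dataclass(frozen=True)
class StepSpec:
    action: str
    target: str
    timeout_s: int = 10


def policy_violations(steps: list[StepSpec], policy: Policy) -> list[str]:
    """Return every way a workflow breaks the policy; empty means it may run."""
    problems = []
    for i, s in enumerate(steps):
        if s.action not in policy.allowed_actions:
            problems.append(f"step {i}: action {s.action!r} is not allowed")
        host = urlparse(s.target).hostname or ""
        if not host.endswith(policy.allowed_host_suffixes):
            problems.append(f"step {i}: host {host!r} is outside the allowed scope")
        if s.timeout_s > policy.max_timeout_s:
            problems.append(f"step {i}: timeout {s.timeout_s}s exceeds {policy.max_timeout_s}s")
    return problems


policy = Policy(
    allowed_actions=frozenset({"http_get", "assert_schema", "capture_logs"}),
    allowed_host_suffixes=(".svc.cluster.local",),
    max_timeout_s=30,
)
workflow = [
    StepSpec("http_get", "http://orders.default.svc.cluster.local/orders/o-1"),
    StepSpec("shell_exec", "https://example.com/upload", timeout_s=60),
]
for problem in policy_violations(workflow, policy):
    print(problem)
```

Suffix matching on hostnames is a simplification here; a production policy would likely need stricter target validation.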

A developer or an AI agent can author a "plan": a sequence of Actions that validates a specific behavior of the code. The plan is then tagged, versioned, and exported as a native skill for the developer’s AI coding agent of choice. When the agent modifies the code, it automatically runs the relevant skill in a live sandbox and reports the results.
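
A plan and its export can be sketched as a small data structure. The manifest layout and the rule that selects plans by matching tags against changed services are assumptions made for illustration, not Signadot's actual skill format.

```python
# Hypothetical sketch of a validation "plan": a named, versioned, tagged
# sequence of Action steps that can be exported for a coding agent and
# selected when matching services change. The manifest layout and the
# tag-matching rule are assumptions, not Signadot's actual skill format.

import json
from dataclasses import asdict, dataclass, field


@dataclass
class Plan:
    name: str
    version: str                  # bumped whenever the steps change
    tags: tuple[str, ...]         # in this sketch: services the plan covers
    steps: tuple[dict, ...] = field(default_factory=tuple)

    def export_skill(self) -> str:
        """Serialize the plan as a manifest an agent could load (format assumed)."""
        return json.dumps({"skill": f"{self.name}@{self.version}", **asdict(self)}, indent=2)


def relevant_plans(plans: list[Plan], changed_services: set[str]) -> list[Plan]:
    """Select plans whose tags overlap the services the agent just modified."""
    return [p for p in plans if changed_services.intersection(p.tags)]


checkout = Plan(
    "checkout-happy-path",
    "1.2.0",
    ("checkout", "payments"),
    (
        {"action": "http_get", "target": "http://checkout.sbx-demo.svc/cart"},
        {"action": "assert_schema", "schema": {"cart_id": "str", "total": "float"}},
    ),
)
inventory = Plan("inventory-restock", "0.3.1", ("inventory",))

print([p.name for p in relevant_plans([checkout, inventory], {"payments"})])
print(checkout.export_skill())
```

Selecting by tag is one simple way an agent could decide which skills apply to a change; versioning the plan lets a recorded result be tied to the exact steps that produced it.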

Our goal is to give AI agents the autonomy to validate their own work rigorously while platform teams retain control over operational boundaries and security. We believe this balance between developer autonomy and platform governance is essential for enterprises to realize the benefits of agentic development at scale: empowering AI while maintaining robust control and the integrity of the entire ecosystem.