The landscape of global communications technology reached a turning point in March 2026, when the Optical Fiber Communication Conference (OFC) in Los Angeles and Nvidia’s GTC in San Jose took place simultaneously, signaling a convergence between artificial intelligence compute and optical networking. For decades, OFC was the premier venue for telecommunications giants focused on transcontinental and transoceanic long-haul fiber. The 2026 event, however, was dominated by data center AI, reflecting a reality in which the bottlenecks of machine learning are no longer found in the processors themselves but in the interconnects that feed them. As AI models transition from simple chatbots to complex "agentic" systems, the industry is entering a five-year window in which all high-bandwidth data interconnects are expected to become optical, moving fiber from the backbone of the internet to the very heart of the server rack.

The Exponential Demand of the Inference Era

The primary catalyst for this shift is the explosive growth in AI inference. While 2023 and 2024 were defined by the training of Large Language Models (LLMs), in 2026 inference (the actual use of these models by consumers and enterprises) has become the dominant driver of hardware demand. According to figures disclosed by Nvidia CEO Jensen Huang during GTC 2026, demand for AI compute has grown from a backlog of $500 billion in previous cycles to $1 trillion. This surge is mirrored by the financial performance of AI pioneers; both Anthropic and OpenAI are now operating at annual revenue run rates of approximately $25 billion, a meteoric rise from near-zero just a few years prior.

This growth is fundamentally changing the nature of data center traffic. Early AI applications like the original ChatGPT were "one-shot" interactions. Modern AI has evolved into deep reasoning and agentic workloads—autonomous systems that can perform multi-step tasks. These workloads require orders of magnitude more tokens per query, making the speed of response (Tokens Per Second, or TPS) a critical metric for human-centric applications. To satisfy this, hyperscalers—who represent 60% of Nvidia’s demand—are boosting capital expenditures to record levels, seeking hardware that can provide 2X to 35X more throughput within the same power (megawatt) envelope.
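The idea of more throughput "within the same power envelope" can be made concrete with simple arithmetic. The sketch below is illustrative only: the baseline of 1,000,000 tokens per second per megawatt and the 100 MW site are made-up placeholders, not figures from Nvidia or any hyperscaler. Only the 2X and 35X multipliers come from the text above.

```python
def site_tokens_per_second(baseline_tps_per_mw: float,
                           power_mw: float,
                           speedup: float) -> float:
    """Aggregate token throughput of a site with a fixed power budget.

    `speedup` is the throughput multiplier delivered by newer hardware
    at the same power draw.
    """
    if baseline_tps_per_mw < 0 or power_mw < 0 or speedup <= 0:
        raise ValueError("inputs must be non-negative and speedup positive")
    return baseline_tps_per_mw * power_mw * speedup


# Hypothetical placeholders, chosen only to make the arithmetic readable.
BASELINE_TPS_PER_MW = 1_000_000.0
SITE_POWER_MW = 100.0

for speedup in (1.0, 2.0, 35.0):
    tps = site_tokens_per_second(BASELINE_TPS_PER_MW, SITE_POWER_MW, speedup)
    print(f"{speedup:>4.0f}X -> {tps:,.0f} tokens/s at {SITE_POWER_MW:.0f} MW")
```

Because the power budget is held constant, every gain in tokens per second has to come from efficiency rather than from adding megawatts.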

The Hardware Roadmap: From Blackwell to Feynman

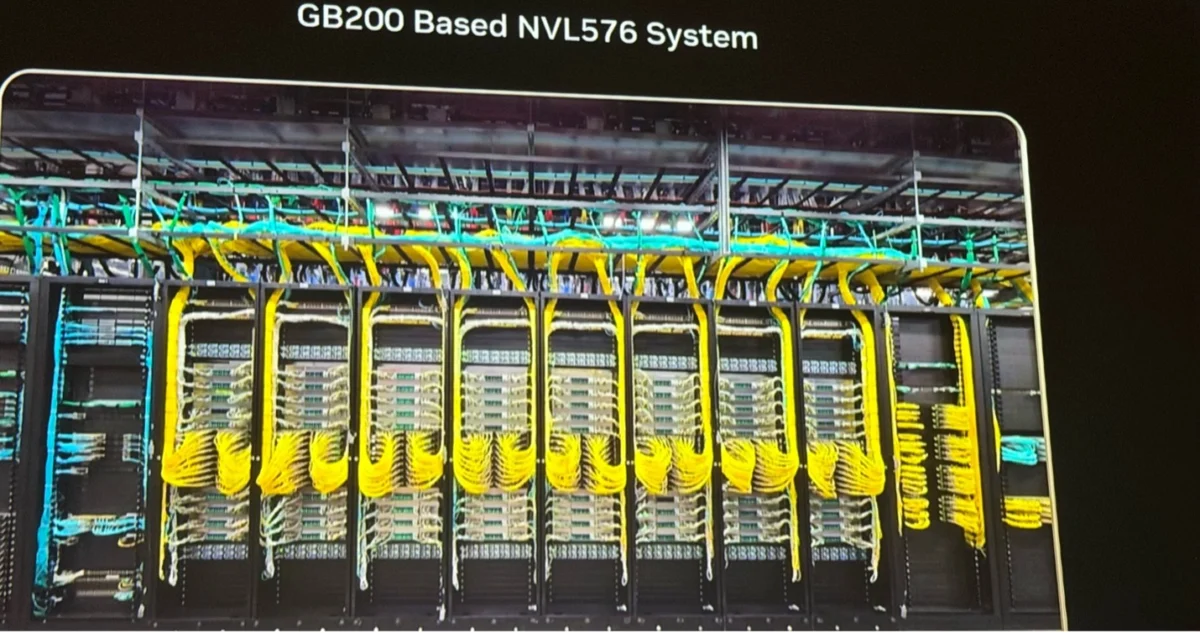

The transition to all-optical interconnects is best illustrated by Nvidia’s aggressive hardware roadmap. The industry is currently moving through the Blackwell architecture, but the focus has shifted toward the upcoming Rubin and Feynman generations. At OFC 2026, industry leaders observed a clear evolution in how these systems are physically linked. While current architectures like the GB200 NVL576 prototype still utilize copper for in-rack connections, they have shifted entirely to optical fiber (identifiable by the ubiquitous bright yellow cables) for inter-rack communication.

Nvidia’s upcoming Feynman architecture, slated for 2028, is expected to introduce NVLink 8 switches featuring Co-Packaged Optics (CPO). While copper may remain an option for the shortest distances within a rack, CPO is projected to take over all scale-up connections as bandwidth requirements exceed the physical limits of electrical signaling. This transition is not merely a choice but a necessity; as frequencies rise, copper cables suffer from significant signal attenuation and reliability issues, whereas optical fibers offer near-lossless transmission over the distances required for 1,000+ GPU pods.
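A rough sense of why copper runs out of reach as frequencies rise can be had from a first-order model. The sketch below assumes that cable loss per metre, measured in dB, grows with the square root of signaling frequency (the usual skin-effect approximation) and ignores dielectric loss, connectors, and equalization. The function name and the fixed loss budget are assumptions made for illustration and do not describe any specific Nvidia cable.

```python
import math


def relative_copper_reach(frequency_ratio: float) -> float:
    """Reach relative to a baseline cable when the signaling frequency is
    multiplied by `frequency_ratio` and the loss budget in dB is unchanged.

    Assumption: loss per metre (in dB) scales with sqrt(frequency).
    """
    if frequency_ratio <= 0:
        raise ValueError("frequency_ratio must be positive")
    return 1.0 / math.sqrt(frequency_ratio)


for ratio in (1, 2, 4):
    print(f"{ratio}x frequency -> {relative_copper_reach(ratio):.2f}x reach")
```

Under this simplified model, quadrupling the signaling frequency halves the usable cable length, which is consistent with copper being confined to the shortest in-rack hops.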

Technical Innovations in Co-Packaged Optics (CPO)

The move toward CPO represents a fundamental re-partitioning of how optics and silicon interact. Traditionally, optical transceivers were pluggable modules located at the front panel of a switch. CPO brings the optical engine directly onto the same package as the GPU or switch silicon. This proximity reduces power consumption and improves signal integrity by shortening the electrical path between the processor and the optical engine.

A centerpiece of this technological shift is TSMC’s Compact Universal Photonic Engine (COUPE). The COUPE architecture utilizes a three-dimensional stacking method:

- Photonic Integrated Circuit (PIC): Fabricated on a 65nm SOI (Silicon on Insulator) process, this chip handles the flow of optical signals.

- Electronic Integrated Circuit (EIC): Fabricated on a 7nm FinFET process, this chip is bonded to the PIC and provides the electro-optical interface, controlling heaters for ring resonators and reading photodetectors.

By bringing light in through the top of the stack via a grating coupler and using metallic backside reflectors to reduce signal loss, Nvidia has demonstrated transmission losses as low as 1 dB. Efficiency is only part of the picture: designers also want to limit the "blast radius" of a failure, so that a single failed component does not take the entire system down. To mitigate that risk, many new designs use external, pluggable laser sources. These lasers provide the light for the CPO but can be replaced easily if they fail, much as one replaces a lightbulb without replacing the entire fixture.
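To put the 1 dB figure in perspective, a loss in decibels converts to a surviving power fraction of 10^(-dB/10). The short sketch below applies that standard conversion. The three-stage link budget at the end uses hypothetical stage values chosen for illustration, not measurements from the COUPE stack.

```python
def db_to_power_fraction(loss_db: float) -> float:
    """Fraction of optical power that survives a loss of `loss_db` decibels."""
    return 10 ** (-loss_db / 10)


def total_loss_db(*stage_losses_db: float) -> float:
    """Losses expressed in dB add up along a chain of optical stages."""
    return sum(stage_losses_db)


print(f"1 dB loss -> {db_to_power_fraction(1.0):.1%} of the light gets through")
print(f"3 dB loss -> {db_to_power_fraction(3.0):.1%} of the light gets through")

# Hypothetical link budget: coupler, waveguide, and connector stages.
budget_db = total_loss_db(1.0, 0.5, 0.5)
print(f"{budget_db} dB total -> {db_to_power_fraction(budget_db):.1%}")
```

A 1 dB loss means roughly 79% of the light survives the coupling, while a 3 dB loss would discard about half of it.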

The Rise of Optical Circuit Switching (OCS)

While CPO handles the connections between individual chips, Optical Circuit Switching (OCS) is revolutionizing the data center network architecture. Google pioneered this field by developing its own OCS to replace traditional electrical switches at the top of its network hierarchy. By using mirrors to route light directly rather than converting it to electricity and back again, Google achieved a 40% reduction in power consumption and significantly lower latency.

The market for OCS is expanding rapidly. Leading optics suppliers Lumentum and Coherent have seen their OCS revenue potential jump from $100 million to over $400 million in just a few quarters, with Lumentum recently announcing a billion-dollar deal with a single hyperscale customer. Analysts at Cignal AI project the Total Available Market (TAM) for OCS to exceed $3 billion as other hyperscalers like AWS and Meta adopt the technology.

The 2026 conference also highlighted the emergence of silicon photonics startups challenging the established players:

- iPronics (Spain): Has already deployed 32×32 OCS switches to hyperscalers and is developing modular 4U systems capable of supporting 1,536 ports.

- nEye (USA): Uses a unique 2D crossbar architecture with MEMS (micro-electromechanical systems) that can switch circuits in approximately one microsecond.

- Salience Labs (UK): Is developing high-density switch boards that integrate semiconductor optical amplifiers (SOAs) to maintain signal strength across large-scale fabrics.

The goal for these companies is to meet a specific "wish list" shared by researchers: zero-loss switches with thousands of ports per rack that can be reconfigured in nanoseconds to map around network failures or optimize for specific AI workloads.
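The idea of reconfiguring a switch to "map around" failures can be sketched with a toy model. The class below treats an optical circuit switch as a one-to-one mapping from input ports to output ports, with no packet inspection and no electrical conversion. All names here (`OpticalCircuitSwitch`, `fail_output`, and so on) are hypothetical and do not correspond to any vendor's control API. The 32-port size simply echoes the 32×32 switches mentioned above.

```python
class OpticalCircuitSwitch:
    """Toy N x N circuit switch: each input lights at most one output."""

    def __init__(self, num_ports: int) -> None:
        self.num_ports = num_ports
        self.circuits = {}           # input port -> output port
        self.failed_outputs = set()  # outputs that must not be used

    def _check(self, port: int) -> None:
        if not 0 <= port < self.num_ports:
            raise ValueError(f"port {port} out of range")

    def connect(self, src: int, dst: int) -> None:
        """Set up (or move) the circuit for `src` so that it lands on `dst`."""
        self._check(src)
        self._check(dst)
        if dst in self.failed_outputs:
            raise ValueError(f"output {dst} is marked failed")
        if any(d == dst and s != src for s, d in self.circuits.items()):
            raise ValueError(f"output {dst} is already in use")
        self.circuits[src] = dst

    def fail_output(self, dst: int) -> list:
        """Mark an output as failed and return the inputs that lost a circuit."""
        self._check(dst)
        self.failed_outputs.add(dst)
        dropped = [s for s, d in self.circuits.items() if d == dst]
        for s in dropped:
            del self.circuits[s]
        return dropped

    def free_outputs(self) -> list:
        """Healthy outputs that currently carry no circuit."""
        used = set(self.circuits.values())
        return [p for p in range(self.num_ports)
                if p not in used and p not in self.failed_outputs]


ocs = OpticalCircuitSwitch(32)
ocs.connect(0, 5)
ocs.connect(1, 6)
for src in ocs.fail_output(5):            # output 5 fails, input 0 is dropped
    ocs.connect(src, ocs.free_outputs()[0])
print(ocs.circuits)
```

In a real device the time taken by the `connect` step is the switching time (about a microsecond for the MEMS approach described above), which is why reconfiguration speed features so prominently on the wish list.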

Reliability and the Future of Dense Wavelength Division Multiplexing (DWDM)

A significant barrier to the adoption of new optical technologies has historically been reliability. However, at OFC 2026, Meta presented extensive data comparing traditional pluggable transceivers with newer CPO solutions. Their findings suggest that CPO is actually more reliable than pluggable modules because it is a simpler, less mechanical semiconductor product. As this data builds confidence among data center operators, the transition from copper to fiber is expected to accelerate.

The next frontier for the industry is Dense Wavelength Division Multiplexing (DWDM). Currently, most optical links use a single wavelength of light. DWDM increases the capacity of a single fiber by sending data over multiple wavelengths (2, 4, 8, or 16) simultaneously. This is achieved using Micro-Ring Modulators (MRMs), which allow the photonics to be miniaturized enough to fit within the cramped confines of a GPU package. As DWDM matures, it will allow for even higher bandwidth at lower power, potentially extending the life of current fiber infrastructure for several more generations of AI hardware.
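The capacity gain from DWDM is multiplicative: a fiber's aggregate rate is the number of wavelengths times the rate carried on each one. The sketch below works through the wavelength counts mentioned above. The 100 Gb/s per-wavelength rate and the 12,800 Gb/s target are hypothetical values picked to keep the numbers round, not specifications of any product.

```python
import math


def fiber_capacity_gbps(num_wavelengths: int,
                        rate_per_wavelength_gbps: float) -> float:
    """Aggregate capacity of one fiber carrying several wavelengths."""
    if num_wavelengths < 1 or rate_per_wavelength_gbps <= 0:
        raise ValueError("need at least one wavelength and a positive rate")
    return num_wavelengths * rate_per_wavelength_gbps


def fibers_needed(target_gbps: float,
                  num_wavelengths: int,
                  rate_per_wavelength_gbps: float) -> int:
    """Number of fibers required to reach a target aggregate bandwidth."""
    per_fiber = fiber_capacity_gbps(num_wavelengths, rate_per_wavelength_gbps)
    return math.ceil(target_gbps / per_fiber)


RATE_GBPS = 100.0      # hypothetical per-wavelength rate
TARGET_GBPS = 12_800.0 # hypothetical bandwidth target for one package

for n in (1, 2, 4, 8, 16):
    cap = fiber_capacity_gbps(n, RATE_GBPS)
    count = fibers_needed(TARGET_GBPS, n, RATE_GBPS)
    print(f"{n:>2} wavelengths: {cap:>6.0f} Gb/s per fiber, {count:>3} fibers")
```

With these placeholder numbers, moving from one wavelength to sixteen cuts the fiber count for the same bandwidth from 128 to 8, which is the practical appeal when space around a GPU package is tight.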

Broader Industry Implications and Conclusion

The "opticalization" of the data center has profound implications for the semiconductor industry. For decades, CMOS (Complementary Metal-Oxide-Semiconductor) engineers and optical engineers worked in separate silos. Today, those worlds have merged. Executives at leading chip firms now recognize that their ability to compete in the AI market depends as much on their optical integration strategy as it does on their transistor density.

The shift toward optical interconnects and switching is also redrawing the map of data center efficiency. As power availability becomes the ultimate constraint on AI growth, the energy savings provided by photonics are no longer a luxury but a survival requirement for hyperscalers. The roadmap presented at OFC and GTC 2026 suggests that by 2030, the "yellow cable" will be the standard for every high-speed connection in the data center, from the transoceanic landing station down to the individual AI accelerator.

The era of the "all-optical" data center is no longer a theoretical projection; it is an industrial reality currently being built. As Nvidia, Google, and their partners deploy Rubin and Feynman-class systems, the synergy between light and silicon will define the next decade of human technological progress, providing the high-speed nervous system required for the increasingly complex brain of global artificial intelligence.