Containers have emerged as a transformative technology in software development and deployment, fundamentally altering how applications are packaged, delivered, and managed. At their core, containers are lightweight, standalone, executable packages of software that bundle everything a piece of software needs to run: its code, runtime environment, system tools, essential libraries, and configuration settings. They are the building block of containerization, an approach that isolates software and its dependencies from other processes on a host system. This article examines how containerization works, its fundamental components, how it differs from virtual machines, its main applications, benefits, and challenges, and the technologies shaping its future.

Understanding the Mechanics of Containers

Containerization enables developers to package and run applications within isolated environments. This gives a consistent and efficient deployment experience regardless of the underlying infrastructure, from a developer’s local machine to production servers. The key advantage is that it largely eliminates the "it works on my machine" dilemma, which usually stems from differences in operating system configuration and installed libraries between environments. Containers do not hide every difference, however: they still run on the host’s kernel and CPU architecture.

Unlike traditional deployment methods, containers encapsulate an application along with all its necessary dependencies into a compact container image. This image serves as a blueprint, containing the application’s code, runtime, libraries, and system tools. A crucial characteristic of containers is that they share the host system’s kernel. Each container has its own isolated filesystem and process space, and the kernel can cap how much CPU and memory it consumes; because no separate operating system has to boot, containers are significantly more resource-efficient and faster to launch than virtual machines (VMs).

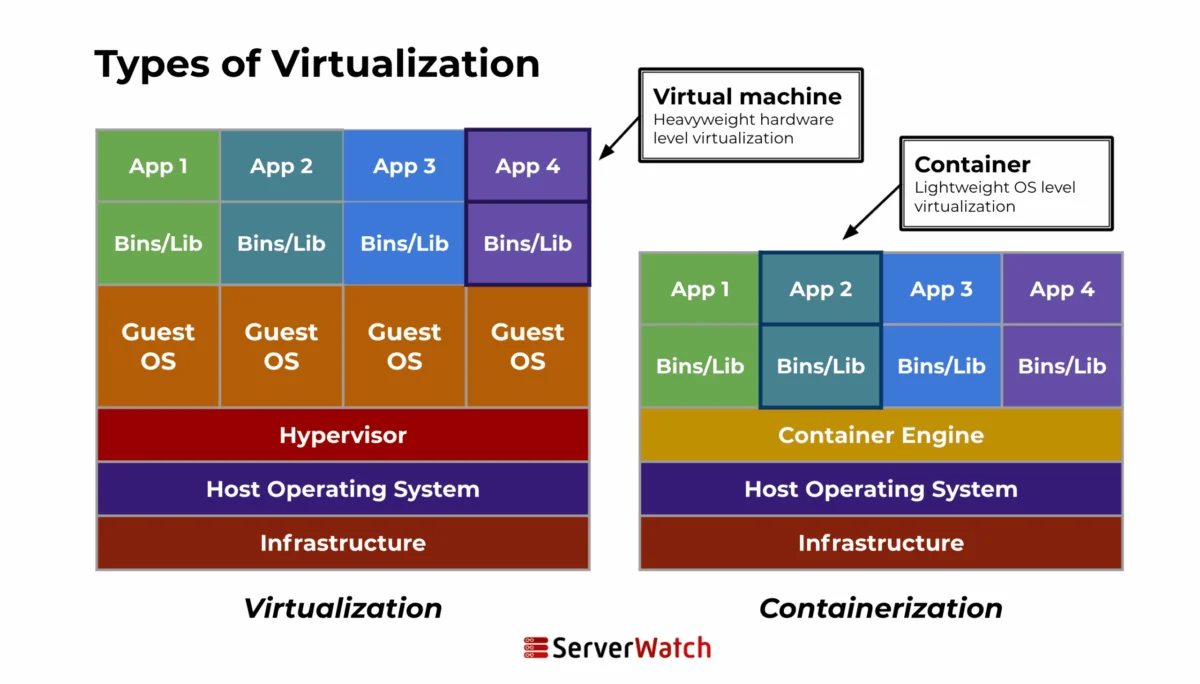

A visual representation of containerization versus virtualization highlights this fundamental difference. Virtualization employs a hypervisor to create entirely separate virtual hardware environments, each running a complete guest operating system. This approach offers strong isolation but incurs a higher resource overhead. Containerization, on the other hand, leverages a container engine that utilizes the host’s kernel, creating isolated user-space environments for each container. This efficiency gain is a primary driver for the widespread adoption of container technology.

Containers vs. Virtual Machines: A Critical Distinction

While both containers and VMs serve the purpose of providing isolated environments for applications, their underlying architectures and operational mechanisms differ significantly. Understanding these differences is crucial for selecting the appropriate technology for specific use cases.

| Feature | Containers | Virtual Machines |
|---|---|---|
| Architecture | Containers share the host system’s kernel while isolating application processes from one another and from the rest of the host. They do not require a full guest OS for each instance, leading to a lightweight footprint and rapid startup times. | Each VM includes not only the application and its dependencies but also an entire guest operating system. This OS runs on virtual hardware provided by a hypervisor, which runs either directly on the physical hardware or on top of a host operating system. VMs offer strong isolation from each other and from the host. |
| Resource Management | Containers are highly resource-efficient: because they share the host kernel, each one needs only the application and its runtime. This leads to better hardware utilization. | Running a full OS inside each VM results in higher resource consumption, which typically means less efficient use of the underlying hardware than in containerized environments. |

This divergence in architecture directly impacts performance and resource utilization. Containers, by sharing the host OS kernel, avoid the overhead of booting and managing multiple operating systems, making them ideal for scenarios demanding rapid deployment and high density. VMs, conversely, offer a more robust security boundary and greater flexibility in running different operating systems simultaneously, making them suitable for legacy applications or environments requiring strict separation.

The Inner Workings of Containerization

The process of containerization involves packaging an application into a container with its own dedicated operating environment. This typically entails several key steps:

- Image Creation: Developers define the contents of a container image using a set of instructions, often written in a Dockerfile. This file specifies the base image (frequently a minimal operating system distribution), the application code, dependencies, and configuration commands.

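- Image Building: A container engine such as Docker processes the Dockerfile and builds a layered image. Instructions that change the filesystem (RUN, COPY, and ADD) each add a new layer, while instructions such as ENV or CMD only record metadata. Layering allows efficient storage and sharing: images built from the same base reuse its layers, and unchanged layers can be taken from the build cache instead of being rebuilt.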

- Container Runtime: Once the image is built, it can be used to launch a container. The container runtime, managed by the container engine, creates an isolated environment based on the image, allocating resources and running the application.

- Isolation Mechanisms: The operating system kernel provides the underlying mechanisms for isolation, such as namespaces (for process, network, and filesystem isolation) and control groups (cgroups) for resource management (CPU, memory, I/O).
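The layering and caching described under Image Building can be illustrated with a toy model. This is a minimal sketch under stated assumptions, not how Docker or BuildKit actually compute cache keys: each layer's key is assumed to be a SHA-256 hash of its parent's key, the instruction text, and the contents of any files the step copies. The helpers `layer_key` and `build` and the sample steps are hypothetical.

```python
import hashlib


def layer_key(parent_key: str, instruction: str, inputs: bytes = b"") -> str:
    """Cache key covering the parent layer, the instruction text, and copied file contents."""
    h = hashlib.sha256()
    h.update(parent_key.encode())
    h.update(b"\0")
    h.update(instruction.encode())
    h.update(b"\0")
    h.update(hashlib.sha256(inputs).digest())
    return h.hexdigest()


def build(steps: list[tuple[str, bytes]], cache: set[str]) -> tuple[list[str], int]:
    """Process steps in order; return (layer keys, number of cache hits)."""
    keys: list[str] = []
    hits = 0
    parent = ""  # empty key stands in for "no parent layer"
    for instruction, inputs in steps:
        key = layer_key(parent, instruction, inputs)
        if key in cache:
            hits += 1  # same parent, instruction, and inputs: reuse the stored layer
        else:
            cache.add(key)  # pretend the step ran and its layer was stored
        keys.append(key)
        parent = key  # the next layer is built on top of this one
    return keys, hits


cache: set[str] = set()
steps_v1 = [
    ("FROM python:3.12-slim", b""),
    ("COPY requirements.txt .", b"example-lib==1.0\n"),
    ("RUN pip install -r requirements.txt", b""),
    ("COPY app.py .", b"print('v1')\n"),
]
_, hits_first = build(steps_v1, cache)  # cold cache

# Editing only app.py: the first three layers are reused.
steps_v2 = steps_v1[:3] + [("COPY app.py .", b"print('v2')\n")]
_, hits_second = build(steps_v2, cache)

# Editing requirements.txt invalidates that layer and every layer above it.
steps_v3 = [steps_v1[0], ("COPY requirements.txt .", b"example-lib==1.1\n")] + steps_v2[2:]
_, hits_third = build(steps_v3, cache)

print(hits_first, hits_second, hits_third)  # 0 3 1
```

Because every key depends on its parent, changing an early step forces all later steps to rebuild. This is why Dockerfiles commonly copy dependency manifests and install dependencies before copying application source code, which changes more often.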

Key Components of a Container

A container is a self-contained unit, comprising several critical components that ensure its functionality and isolation:

- Application Code: The actual software that the container is designed to run.

- Runtime Environment: The specific language runtime or framework required by the application (e.g., Java Virtual Machine, Python interpreter, Node.js).

- Libraries and Dependencies: All the external libraries and dependencies that the application needs to function correctly, ensuring it doesn’t rely on libraries installed on the host system.

- System Tools: Essential utilities and command-line tools that the application might require for its operation.

- Configuration Settings: Application-specific configuration files and environment variables that dictate its behavior.

- Filesystem: The image’s read-only layers, with a thin writable layer on top where the application’s changes are recorded at runtime. Changes in the writable layer are lost when the container is removed unless they are stored in a volume.

- Process Space: A dedicated process tree for the application, isolated from other processes on the host.

- Network Interface: A virtual network interface that allows the container to communicate with other containers or the outside world, often managed by the container runtime.
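The filesystem component above can be modeled as a small union filesystem. The sketch below is a simplified, in-memory illustration, assuming image layers are plain dictionaries mapping paths to contents: reads search the writable layer first and then the image layers from top to bottom, writes go only to the writable layer, and deletions of image files are recorded as "whiteouts" that hide the lower copy. Real storage drivers such as overlayfs work on actual files; the `ContainerFS` class is hypothetical.

```python
from types import MappingProxyType


class ContainerFS:
    """Toy union filesystem: read-only image layers plus one writable layer."""

    def __init__(self, image_layers: list[dict[str, str]]) -> None:
        # Copy and freeze the image layers so the container can never modify them.
        self._lower = [MappingProxyType(dict(layer)) for layer in image_layers]
        self._upper: dict[str, str] = {}   # writable container layer
        self._whiteouts: set[str] = set()  # image paths the container has deleted

    def read(self, path: str) -> str:
        if path in self._upper:
            return self._upper[path]
        if path in self._whiteouts:
            raise FileNotFoundError(path)
        for layer in reversed(self._lower):  # the topmost image layer wins
            if path in layer:
                return layer[path]
        raise FileNotFoundError(path)

    def write(self, path: str, data: str) -> None:
        # Copy-on-write: the change lands in the writable layer only.
        self._upper[path] = data
        self._whiteouts.discard(path)

    def delete(self, path: str) -> None:
        self.read(path)  # raises FileNotFoundError if the path is not visible
        self._upper.pop(path, None)
        if any(path in layer for layer in self._lower):
            self._whiteouts.add(path)  # hide the image's copy rather than erase it


base = {"/etc/os-release": "debian", "/app/config.ini": "debug=false"}
app = {"/app/main.py": "print('hi')"}

fs = ContainerFS([base, app])
fs.write("/app/config.ini", "debug=true")
fs.delete("/etc/os-release")
print(fs.read("/app/config.ini"))  # debug=true

# A second container started from the same image sees the original files.
fresh = ContainerFS([base, app])
print(fresh.read("/app/config.ini"))  # debug=false
```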

Diverse Use Cases for Containerization

The versatility and efficiency of containers have led to their widespread adoption across a multitude of application development and deployment scenarios.

Microservices and Cloud-Native Applications

Containers are intrinsically aligned with the principles of microservices architecture, where applications are decomposed into small, independently deployable services. Each microservice can be encapsulated within its own container, fostering isolation, simplifying updates, and enabling independent scaling. In the context of cloud-native development, containers are fundamental to building highly scalable, resilient, and agile applications. Orchestration platforms like Kubernetes further enhance this by enabling dynamic management, automated healing, and efficient load balancing in response to fluctuating demand. This architectural shift allows organizations to build complex applications from smaller, manageable components, accelerating innovation and improving fault tolerance.

Continuous Integration/Continuous Deployment (CI/CD) Pipelines

Containerization has revolutionized CI/CD pipelines by providing consistent and reproducible environments throughout the development lifecycle. From developer workstations to testing, staging, and production servers, containers help the application behave consistently across all stages. This consistency greatly reduces the "it works on my machine" problem and minimizes deployment failures caused by environmental discrepancies. Automated tests run in containerized environments that mimic production conditions, which leads to earlier bug detection and a faster, more reliable release cadence. For instance, a CI/CD pipeline can automatically build a container image on each commit, run automated tests inside that container, and, if all tests pass, deploy the container to a staging environment for further validation.
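The build, test, and deploy flow described in the pipeline example can be sketched as a sequence of gated stages. This is a minimal illustration, assuming each stage is a function that receives the commit SHA and returns whether it succeeded; the stage functions, the `myapp` image name, and the tagging scheme are hypothetical stand-ins for whatever a real CI system would run.

```python
from typing import Callable, Optional

Stage = tuple[str, Callable[[str], bool]]


def run_pipeline(commit_sha: str, stages: list[Stage]) -> tuple[list[str], Optional[str]]:
    """Run stages in order, stopping at the first failure.

    Returns (names of completed stages, name of the failed stage or None).
    """
    completed: list[str] = []
    for name, stage in stages:
        if not stage(commit_sha):
            return completed, name  # later stages, such as deployment, never run
        completed.append(name)
    return completed, None


# Hypothetical stages; a real pipeline would invoke an image build, a test
# runner inside the freshly built image, and a deployment tool.
log: list[str] = []


def build_image(sha: str) -> bool:
    log.append(f"built myapp:{sha[:7]}")  # tag the image with the commit it came from
    return True


def make_test_stage(passes: bool) -> Callable[[str], bool]:
    def run_tests(sha: str) -> bool:
        log.append(f"tests {'passed' if passes else 'failed'} in myapp:{sha[:7]}")
        return passes
    return run_tests


def deploy_staging(sha: str) -> bool:
    log.append(f"deployed myapp:{sha[:7]} to staging")
    return True


good = run_pipeline("3f9c2a1e", [("build", build_image), ("test", make_test_stage(True)),
                                 ("deploy-staging", deploy_staging)])
bad = run_pipeline("8b1d0c44", [("build", build_image), ("test", make_test_stage(False)),
                                ("deploy-staging", deploy_staging)])
print(good)  # (['build', 'test', 'deploy-staging'], None)
print(bad)   # (['build'], 'test')
```

Real CI systems usually express this gating declaratively in a pipeline configuration file, but the control flow is the same: a failing stage prevents everything after it, including deployment, from running.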

Application Packaging and Distribution

The ability of containers to bundle an application with all its dependencies makes them an ideal solution for application packaging and distribution. This portability lets the same image be deployed across diverse platforms and cloud environments without modification, provided each host offers a compatible kernel and CPU architecture. Container registries, such as Docker Hub or Google Artifact Registry (the successor to Google Container Registry), serve as central repositories for storing and managing container images. Registries store each image under human-readable tags and an immutable content digest, which makes it easy to roll back to a previous stable version in case of deployment issues and improves the reliability of software delivery. The ease of sharing and deploying these self-contained units simplifies the entire software supply chain.
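Tags and content digests are what make registry-based rollbacks possible. The following toy registry assumes, for illustration, that a manifest is just a small byte string and that its digest is the SHA-256 hash of those bytes written as `sha256:<hex>` (the general digest form used by OCI images); `ToyRegistry`, `rollback_target`, and the image names are hypothetical.

```python
import hashlib


class ToyRegistry:
    """Toy registry: immutable, content-addressed manifests plus movable tags."""

    def __init__(self) -> None:
        self.manifests: dict[str, bytes] = {}  # digest -> manifest bytes
        self.tags: dict[str, str] = {}         # "name:tag" -> digest

    def push(self, tag: str, manifest: bytes) -> str:
        # The digest is derived from the content, so identical content always
        # gets the same digest and any change produces a different one.
        digest = "sha256:" + hashlib.sha256(manifest).hexdigest()
        self.manifests[digest] = manifest
        self.tags[tag] = digest  # pushing again to the same tag simply moves it
        return digest


def rollback_target(registry: ToyRegistry, releases: list[str]) -> str:
    """Digest of the release before the current one (hypothetical helper)."""
    if len(releases) < 2:
        raise ValueError("no earlier release to roll back to")
    return registry.tags[releases[-2]]


registry = ToyRegistry()
d_old = registry.push("myapp:1.4.0", b'{"layers": ["base", "app-v1"]}')
d_new = registry.push("myapp:1.4.1", b'{"layers": ["base", "app-v2"]}')
registry.push("myapp:latest", b'{"layers": ["base", "app-v2"]}')  # same content as 1.4.1

print(registry.tags["myapp:latest"] == d_new)                          # True
print(rollback_target(registry, ["myapp:1.4.0", "myapp:1.4.1"]) == d_old)  # True
```

Tags such as `latest` can be moved, while a digest always names the same bytes, so deploying and rolling back by digest ensures that a rollback restores exactly the image that was previously running.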

The Multifaceted Benefits of Containerization

The widespread adoption of containerization is not merely a trend; it is driven by a compelling set of advantages that address critical challenges in modern software engineering.

- Enhanced Portability: Container images are designed to run consistently across different environments, from local development machines to on-premises servers and various cloud platforms. This removes most environment-specific setup work, saving significant time and effort.

- Improved Resource Utilization: By sharing the host OS kernel, containers consume fewer resources (CPU, memory, storage) compared to VMs. This leads to higher application density on a single server, reducing infrastructure costs.

- Faster Deployment Cycles: The lightweight nature of containers allows for rapid startup times, enabling applications to be deployed and scaled in seconds rather than minutes or hours. This agility is crucial for meeting dynamic business demands.

- Increased Consistency: Containers provide a consistent runtime environment, ensuring that applications behave predictably regardless of where they are deployed. This predictability reduces the likelihood of "works on my machine" issues and simplifies debugging.

- Simplified Development Workflow: Developers can package their applications and dependencies into containers, ensuring that the development environment closely mirrors the production environment. This reduces friction between development and operations teams.

- Scalability and Elasticity: Container orchestration platforms like Kubernetes enable applications to be scaled up or down automatically based on demand, ensuring high availability and optimal performance.

- Isolation and Security: While not as robust as VM isolation, containers provide process-level isolation that limits how much applications can interfere with each other or with the host system.

- Cost Efficiency: Improved resource utilization, faster deployment, and reduced infrastructure overhead contribute to significant cost savings for organizations.

- Microservices Enablement: Containers are a natural fit for microservices architectures, allowing each service to be developed, deployed, and scaled independently.

- Streamlined CI/CD: Containers integrate seamlessly into CI/CD pipelines, facilitating automated builds, testing, and deployments.

- Disaster Recovery: Containerized applications can be quickly redeployed on different infrastructure in the event of a failure, improving disaster recovery capabilities.

- Developer Productivity: By abstracting away infrastructure complexities, containers allow developers to focus more on writing code and delivering business value.

- Innovation Acceleration: The agility and efficiency provided by containers empower organizations to experiment with new ideas and bring innovative products to market faster.

Navigating the Challenges and Considerations

Despite their significant advantages, the implementation and management of containerized environments are not without their challenges. Organizations must be aware of these potential hurdles to ensure successful adoption.

Security Issues

While containers offer isolation, they are not immune to security vulnerabilities. Key concerns include:

- Image Vulnerabilities: Container images can inherit vulnerabilities from their base operating system or included libraries. Regular scanning and patching are essential.

- Container Escapes: Although rare, there’s a risk of a malicious process within a container breaking out of its isolation and accessing the host system or other containers.

- Insecure Registries: Storing container images in unsecured registries can expose them to tampering or unauthorized access.

- Runtime Security: Misconfigurations in container runtime environments or orchestration platforms can create security loopholes.

- Credential Management: Securely managing sensitive credentials and secrets within containerized applications is a complex but critical task.
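The image scanning mentioned under Image Vulnerabilities comes down to comparing the package versions inside an image with published advisories. The sketch below assumes simple dotted numeric versions and an in-memory advisory list; the package names and advisory IDs are invented for illustration. Real scanners such as Trivy or Grype read the image's package databases and apply distribution-specific version rules, which this toy does not attempt.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Advisory:
    package: str
    fixed_in: tuple[int, ...]  # first version that contains the fix
    advisory_id: str           # hypothetical identifier


def parse_version(text: str) -> tuple[int, ...]:
    """Parse a dotted numeric version such as '2.4.10' into (2, 4, 10)."""
    return tuple(int(part) for part in text.split("."))


def scan(installed: dict[str, str], advisories: list[Advisory]) -> list[str]:
    """Return the IDs of advisories that affect the installed package versions."""
    findings: list[str] = []
    for adv in advisories:
        version = installed.get(adv.package)
        # Compare numerically: as strings, "2.4.9" would sort after "2.4.10".
        if version is not None and parse_version(version) < adv.fixed_in:
            findings.append(adv.advisory_id)
    return findings


# Hypothetical package inventory of an image and hypothetical advisories.
installed = {"libfoo": "3.0.11", "libbar": "1.3.1", "libbaz": "2.4.9"}
advisories = [
    Advisory("libfoo", (3, 0, 13), "EXAMPLE-0001"),
    Advisory("libbar", (1, 3, 1), "EXAMPLE-0002"),  # already patched in this image
    Advisory("libbaz", (2, 4, 10), "EXAMPLE-0003"),
    Advisory("libqux", (1, 0, 0), "EXAMPLE-0004"),  # package not present
]
print(scan(installed, advisories))  # ['EXAMPLE-0001', 'EXAMPLE-0003']
```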

Complexity in Management

While containers streamline application deployment, managing a large-scale containerized infrastructure can introduce new complexities:

- Orchestration Complexity: Tools like Kubernetes, while powerful, have a steep learning curve and require significant expertise to configure and manage effectively.

- Networking Challenges: Managing container networking, especially in distributed environments with multiple hosts and complex inter-container communication, can be challenging.

- Stateful Applications: Managing the lifecycle and data persistence of stateful applications (like databases) within containers requires careful planning and specialized solutions.

- Monitoring and Logging: Aggregating and analyzing logs and metrics from a multitude of containers across a cluster can be a daunting task.

- Resource Allocation and Scheduling: Optimizing resource allocation and ensuring efficient scheduling of containers across the cluster requires sophisticated management strategies.
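To make the scheduling problem concrete, here is a simplified placement routine. It is a first-fit-decreasing bin-packing heuristic, not the algorithm used by the Kubernetes scheduler (which filters and then scores nodes through pluggable policies); it assumes each container declares a CPU request in millicores and a memory request in MiB, and all node and pod names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Node:
    name: str
    cpu_free_m: int    # unreserved CPU, in millicores (1000m = 1 core)
    mem_free_mib: int  # unreserved memory, in MiB
    pods: list[str] = field(default_factory=list)


@dataclass(frozen=True)
class PodRequest:
    name: str
    cpu_m: int
    mem_mib: int


def schedule(pods: list[PodRequest], nodes: list[Node]) -> list[str]:
    """First-fit decreasing placement; returns the names of pods that did not fit."""
    unplaced: list[str] = []
    # Place the largest requests first, since they are the hardest to fit later.
    for pod in sorted(pods, key=lambda p: (p.cpu_m, p.mem_mib), reverse=True):
        target: Optional[Node] = next(
            (n for n in nodes if n.cpu_free_m >= pod.cpu_m and n.mem_free_mib >= pod.mem_mib),
            None,
        )
        if target is None:
            unplaced.append(pod.name)  # would stay Pending until capacity frees up
            continue
        target.cpu_free_m -= pod.cpu_m
        target.mem_free_mib -= pod.mem_mib
        target.pods.append(pod.name)
    return unplaced


nodes = [Node("node-a", 2000, 4096), Node("node-b", 1000, 2048)]
pods = [
    PodRequest("web-1", 500, 512),
    PodRequest("db", 1500, 3072),
    PodRequest("web-2", 500, 512),
    PodRequest("batch", 1500, 1024),
]
print(schedule(pods, nodes))              # ['batch']
print([(n.name, n.pods) for n in nodes])  # [('node-a', ['db', 'web-1']), ('node-b', ['web-2'])]
```

Even this toy version shows why scheduling is hard: when `batch` is considered, the cluster still has 1500 millicores free in total (500m on node-a and 1000m on node-b), yet no single node can hold the request.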

Integration with Existing Systems

Integrating containerization into an established IT infrastructure often presents unique challenges:

- Legacy System Compatibility: Migrating legacy applications that were not designed for containerization can be a complex and time-consuming process.

- Data Migration: Moving data from traditional storage solutions to container-friendly storage or managing persistent data for stateful containers requires careful planning.

- DevOps Culture Shift: Successful container adoption often necessitates a cultural shift towards DevOps practices, requiring training and adaptation across teams.

- Toolchain Integration: Integrating container workflows with existing CI/CD tools, monitoring systems, and security platforms requires careful consideration and potential adjustments.

- Skill Gaps: Organizations may face a shortage of skilled personnel with expertise in container technologies, necessitating investment in training and recruitment.

Leading Container Technologies

The container ecosystem is rich with powerful tools and platforms, with some standing out due to their widespread adoption and comprehensive feature sets.

Docker

Docker has been instrumental in popularizing containerization, making it accessible and standardized for a broad audience. It provides a complete platform for developing, shipping, and running containerized applications.

Key Features of Docker:

- Containerization Engine: The core technology for building and running containers.

- Dockerfile: A text-based script for defining container images.

- Docker Hub: A cloud-based registry for sharing and discovering Docker images.

- Docker Compose: A tool for defining and running multi-container Docker applications.

- Docker Swarm: A native clustering and orchestration solution for Docker.

Benefits of Docker:

- Ease of Use: Docker’s intuitive interface and clear syntax lower the barrier to entry for containerization.

- Rich Ecosystem: A vast community and extensive library of pre-built images accelerate development.

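- Portability: Docker containers can run on any system with a compatible container runtime and CPU architecture; on macOS and Windows, Docker Desktop runs Linux containers inside a lightweight virtual machine.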

- Consistency: Ensures applications run the same way across development, testing, and production.

Kubernetes

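Kubernetes (K8s) has emerged as the de facto standard for container orchestration, automating the deployment, scaling, and management of containerized applications. It works with any container runtime that implements its Container Runtime Interface (CRI), such as containerd or CRI-O. Built-in support for Docker Engine (dockershim) was removed in Kubernetes 1.24, but images built with Docker run unchanged.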

Key Features of Kubernetes:

- Automated Rollouts and Rollbacks: Manages application updates and can revert to previous versions if issues arise.

- Service Discovery and Load Balancing: Automatically exposes containers to the network and distributes traffic.

- Storage Orchestration: Allows mounting of various storage systems, both local and cloud-based.

- Self-Healing: Restarts failed containers, replaces and reschedules containers when nodes die, and kills containers that don’t respond to health checks.

- Secret and Configuration Management: Allows for secure storage and management of sensitive information and application configurations.
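Self-healing and replica management in Kubernetes follow from controllers that repeatedly compare the desired state with the observed state and act to close the gap. The following is a toy model of one pass of such a loop, assuming a single health flag per replica; it is not Kubernetes code, and `reconcile` and the `web-N` naming are hypothetical.

```python
from itertools import count
from typing import Iterator


def reconcile(desired: int, pods: dict[str, bool], ids: Iterator[int]) -> list[str]:
    """One pass of a toy control loop.

    `pods` maps replica name -> whether it passes its health check. The function
    mutates `pods` toward `desired` healthy replicas and returns the actions taken.
    """
    actions: list[str] = []
    for name in [n for n, healthy in pods.items() if not healthy]:
        del pods[name]  # replace replicas that fail their health check
        actions.append(f"delete {name}")
    while len(pods) > desired:
        name = max(pods)  # arbitrary choice of which extra replica to remove
        del pods[name]
        actions.append(f"delete {name}")
    while len(pods) < desired:
        name = f"web-{next(ids)}"
        pods[name] = True  # assume a new replica starts healthy
        actions.append(f"create {name}")
    return actions


ids = count(4)
pods = {"web-1": True, "web-2": False, "web-3": True}
print(reconcile(3, pods, ids))  # ['delete web-2', 'create web-4']
print(reconcile(3, pods, ids))  # [] (already at the desired state)
print(reconcile(1, pods, ids))  # ['delete web-4', 'delete web-3']
```

Running the same function again after it has converged does nothing, which is the property that lets a controller call it continuously without harm.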

Benefits of Kubernetes:

- Scalability: Scales applications up or down based on demand, either manually or automatically.

- High Availability: Ensures applications remain accessible even during node failures or maintenance.

- Portability: Runs on-premises, in public clouds, and in hybrid environments.

- Extensibility: Its architecture allows for integration with a wide range of tools and services.

- Resilience: Manages the complex lifecycle of containerized applications with robust fault tolerance.
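Demand-based scaling in Kubernetes is commonly handled by the HorizontalPodAutoscaler, whose documented core rule is `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, with no scaling when the ratio is within a tolerance of 1.0 (0.1 by default). The sketch below applies only that rule; the function name and the min/max defaults are assumptions of this example, and it omits the real controller's stabilization windows and handling of unready pods.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     tolerance: float = 0.1, min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Replica count suggested by the proportional HPA-style rule."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to the target: leave it alone
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))  # respect the configured bounds


print(desired_replicas(3, current_metric=90, target_metric=60))   # 5 (ratio 1.5, ceil(4.5))
print(desired_replicas(4, current_metric=30, target_metric=60))   # 2 (ratio 0.5)
print(desired_replicas(3, current_metric=62, target_metric=60))   # 3 (within tolerance)
print(desired_replicas(8, current_metric=200, target_metric=50))  # 10 (capped at max_replicas)
```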

Other Notable Container Technologies

Beyond Docker and Kubernetes, several other technologies contribute to the containerization landscape:

- containerd: An industry-standard container runtime that emphasizes simplicity, robustness, and portability. It manages the complete container lifecycle.

- Podman: A daemonless container engine for developing, managing, and running OCI containers on Linux systems. It offers a Docker-compatible CLI.

- Buildah: A tool that facilitates building OCI container images. It allows users to create an empty image or start from an existing one and add layers incrementally.

- LXC (Linux Containers): A lightweight technology that provides OS-level virtualization for Linux. It is a foundational technology for many container solutions; early versions of Docker, for example, used LXC to run containers.

- rkt (Rocket): An open-source container runtime that focused on security and composability. The CNCF archived the project in 2019, and it is no longer actively developed.

Future Trends in Containerization

The evolution of containerization is closely intertwined with advancements in emerging technologies and the development of new industry standards. The future promises even deeper integration and broader adoption.

Integration with Emerging Technologies

Containerization is increasingly becoming a foundational technology for other cutting-edge fields:

- Artificial Intelligence (AI) and Machine Learning (ML): Containers provide isolated and reproducible environments for training and deploying complex AI/ML models, simplifying dependency management and keeping the software stack consistent across different machines. Platforms like Kubeflow leverage Kubernetes to orchestrate ML workflows.

- Edge Computing: As computing power moves closer to data sources, containers are essential for deploying and managing applications on resource-constrained edge devices. Their lightweight nature and rapid deployment capabilities are ideal for edge environments.

- Serverless Computing: While serverless abstracts away infrastructure, containers often form the underlying execution environment for serverless functions, providing isolation and portability.

- WebAssembly (Wasm): The growing interest in WebAssembly for running code securely and efficiently across various platforms suggests potential future integrations with containerization for more portable and secure workloads.

- Internet of Things (IoT): Containers can help manage and update software on a vast number of diverse IoT devices, enabling more scalable and resilient IoT deployments.

Evolution of Container Standards and Regulations

As containerization matures and becomes more integral to critical infrastructure, the development of robust standards and the adherence to regulations are becoming paramount:

- Open Container Initiative (OCI): The OCI is a Linux Foundation project that defines open industry standards around container formats and runtimes. Adherence to OCI standards ensures interoperability between different container tools and platforms.

- Supply Chain Security: With increasing concerns about software supply chain attacks, there is a growing focus on securing the entire lifecycle of container images, from development to deployment. This includes signing images, scanning for vulnerabilities, and ensuring the provenance of components.

- Compliance and Governance: As containerized applications become more prevalent in regulated industries, ensuring compliance with industry-specific regulations (e.g., GDPR, HIPAA) becomes critical. This involves implementing robust security controls, audit trails, and data governance policies.

- Interoperability: Efforts are underway to improve interoperability between different container orchestration platforms and cloud providers, reducing vendor lock-in and fostering a more open ecosystem.

- Sustainability: As containerization enables higher resource utilization, there is also a growing awareness of its potential environmental impact, leading to discussions around optimizing resource consumption and energy efficiency in containerized data centers.
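The image signing and provenance checks mentioned under Supply Chain Security build on content addressing: an OCI image references its layers and configuration by digest, so a client can detect tampering by re-hashing what it downloaded. The following minimal sketch assumes SHA-256 digests in the `sha256:<hex>` form and uses hypothetical blob contents; it checks integrity only, and confirming who published an image additionally requires a signature check with a tool such as Sigstore's cosign.

```python
import hashlib


def verify_blob(data: bytes, expected_digest: str) -> bool:
    """Check downloaded bytes against a pinned digest of the form 'sha256:<hex>'."""
    algorithm, sep, expected_hex = expected_digest.partition(":")
    if sep != ":" or algorithm != "sha256":
        # This sketch handles only SHA-256 digests.
        raise ValueError(f"unsupported digest: {expected_digest!r}")
    return hashlib.sha256(data).hexdigest() == expected_hex


# Hypothetical layer contents and the digest recorded when the image was published.
blob = b"example layer contents"
pinned = "sha256:" + hashlib.sha256(blob).hexdigest()

print(verify_blob(blob, pinned))                 # True
print(verify_blob(blob + b" tampered", pinned))  # False: any change alters the digest
```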

Bottom Line: The Role of Containers Will Continue to Grow

Containers have fundamentally reshaped the landscape of software development and deployment, offering unparalleled efficiency, scalability, and consistency. As these technologies continue to mature and integrate with emerging innovations, their role is poised to expand even further, driving advancements and efficiencies across a multitude of industries.

Looking ahead, the potential of containerization is immense. Its inherent ability to seamlessly integrate with future technological advancements and adapt to evolving regulatory landscapes positions it as a cornerstone of digital transformation strategies. Organizations that effectively leverage container technologies will find themselves at the forefront of innovation, better equipped to navigate the complexities and seize the opportunities of a rapidly evolving digital world. The ongoing development of container standards and the integration with new paradigms like AI and edge computing signal a future where containers are not just a deployment tool but a foundational element of modern computing infrastructure.

For those seeking to understand related technologies, exploring virtual machines offers valuable insights into alternative isolation methods. Additionally, examining the offerings of leading virtualization companies can help organizations make informed decisions about their infrastructure strategies.