The conversation among engineering leaders has shifted. The early debate over AI coding assistants, and whether they could reliably generate even boilerplate code, has receded. AI adoption is no longer a question of "if" but of "how": boards have mandated it, developers are actively using the tools, and the flow of AI-generated code is accelerating. The pressing, and far more daunting, question now facing technical leadership is this: what breaks when autonomous agents begin producing code at ten times the rate of human engineering teams?

For those at the helm of engineering organizations, this transition represents a dizzying shift. The promised revolution of a tenfold increase in shipping velocity has, for many, devolved into a growing, stagnant backlog of pull requests. Staging environments are experiencing constant failures, and senior engineers are facing burnout not from the act of writing code, but from the Herculean effort required to review, test, and untangle the sheer volume of machine-generated code. The stark reality is that the bottleneck in software delivery has not been eliminated; it has merely been relocated.

The rate-limiting step in software delivery has moved from code creation to code validation. AI agents have made producing code fast and cheap, but verifying that the code functions correctly under production-like conditions, before it merges into the main branch, is now the critical bottleneck.

The Cloud-Native Collision Course

For organizations operating with modern, cloud-native architectures, this validation bottleneck is not merely an inconvenience; it is a fundamental point of failure. In distributed microservices environments, services are inherently interconnected. A seemingly isolated modification to a single backend service, generated by an AI agent, can trigger a cascade of failures, impacting multiple downstream services and potentially corrupting shared database schemas.

When this reality is multiplied by dozens, or even hundreds, of AI agents working asynchronously and pushing code in parallel, existing continuous integration (CI) pipelines turn from streamlined workflows into traffic jams. Historically, the primary venue for validation has been a shared staging environment: developers would merge code, deploy it to staging, execute integration tests, and hope for the best. But a shared staging environment functions like a single-lane bridge. When human developers and AI agents, each carrying a stream of new changes, try to cross it at the same time, collisions are inevitable. Staging becomes perpetually unstable, and teams spend an inordinate amount of time working out which commit caused an outage.

Failure to address this structural flaw in the validation process carries severe consequences:

- The Deploy Gap: A significant divergence emerges between the code that is generated and the code that is actually deployed to users. Unmerged code, languishing in repositories, represents a liability rather than an asset, delivering no tangible business value.

- Post-Merge Failures: In a desperate attempt to clear the backlog, teams may lower their validation standards. The likely result is more production incidents and more rollbacks, which undermines system stability and user trust.

- Negative Return on Investment (ROI) from AI: Substantial investments in AI tooling deliver little if the output cannot be safely and efficiently integrated into the product. Tools intended to accelerate development can, if not properly managed, lead to stagnation and increased technical debt.

Companies that successfully navigate and resolve this validation bottleneck are poised to achieve shipping velocities significantly exceeding industry averages. Conversely, those that fail to adapt risk being overwhelmed by the sheer volume of their own AI-generated code.

Rethinking Validation for the Agentic Era

The solution to this challenge does not lie in simply augmenting existing Jenkins servers with more compute power or implementing more stringent pull request review policies. Human review alone cannot possibly keep pace with an avalanche of machine-generated code. What is required is a fundamental architectural re-evaluation of how software is validated. To enable AI agents to function as truly autonomous developers, they must be equipped with the infrastructure to test their work with the same rigor as a senior human engineer.

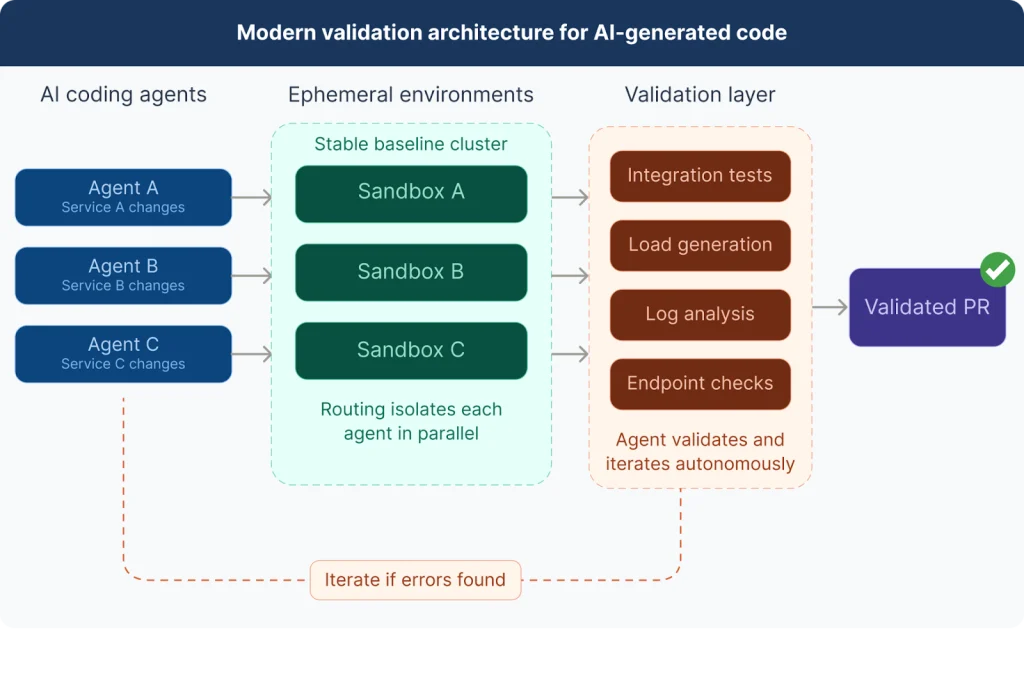

This necessitates the implementation of a modern validation architecture, built upon two foundational pillars:

1. A Scalable Approach to Ephemeral Environments

The era of the shared staging bottleneck must come to an end. Every AI agent, and every individual pull request, requires its own isolated environment for validating changes against the complete, complex system. However, provisioning an entire replica of a large-scale microservices cluster for each pull request is prohibitively expensive and painfully slow. The contemporary approach centers on lightweight, highly scalable ephemeral environments. Instead of duplicating the entire system, the architecture maintains a stable baseline of the existing infrastructure. Changes made by an agent are then isolated through intelligent request routing.

When an AI agent generates a new version of a specific service, such as "Service A," the infrastructure provisions only that updated service. Test traffic flows through the stable baseline cluster, and only requests tagged for the agent's sandbox are directed to the new version of Service A; every other hop uses the baseline. This routing allows hundreds of agents to test hundreds of distinct changes against the full system concurrently, without interfering with one another and without the cloud cost of full replicas. It removes the shared staging bottleneck and the waiting that comes with it.
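The routing idea above can be sketched in a few lines. This is a minimal illustration, not Signadot's implementation or API: the `x-sandbox-id` header name, the `Router` class, and the service addresses are all hypothetical, and a real system would typically do this in a service mesh or sidecar rather than in application code.

```python
from dataclasses import dataclass, field

# Hypothetical header that tags a request as belonging to one sandbox.
ROUTING_HEADER = "x-sandbox-id"


@dataclass
class Router:
    """Resolves a service name to an address, preferring sandbox overrides."""

    # Stable baseline: service name -> address of the shared deployment.
    baseline: dict[str, str]
    # Per-sandbox overrides: sandbox id -> {service name -> address}.
    sandboxes: dict[str, dict[str, str]] = field(default_factory=dict)

    def register(self, sandbox_id: str, service: str, address: str) -> None:
        """Record that a sandbox runs its own version of one service."""
        self.sandboxes.setdefault(sandbox_id, {})[service] = address

    def resolve(self, service: str, headers: dict[str, str]) -> str:
        """Pick the sandboxed version only for tagged requests to that service."""
        sandbox_id = headers.get(ROUTING_HEADER)
        overrides = self.sandboxes.get(sandbox_id, {})
        return overrides.get(service, self.baseline[service])


router = Router(
    baseline={
        "service-a": "service-a.baseline:8080",
        "service-b": "service-b.baseline:8080",
    }
)
# The agent's sandbox deploys only its modified Service A.
router.register("agent-42", "service-a", "service-a.agent-42:8080")
```

A request carrying `x-sandbox-id: agent-42` reaches the agent's build of Service A and the baseline build of everything else, while untagged traffic never sees the sandbox. For this to work across hops, each service has to forward the header on its outgoing calls.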

2. The Skills-Based Validation Layer

Providing an isolated environment is only one part of the solution. An environment is of little use if the agent cannot work with it. Human developers do not merely write code; they also know how to validate it. They call endpoints, query databases, watch Grafana dashboards, and read server logs to verify their logic. AI agents need the same proficiencies.

A skills-based validation layer equips coding agents with the programmatic tools necessary to interact with their ephemeral environments. Instead of generating code and immediately submitting a pull request for human review, the agent can now generate the code, deploy it to its dedicated sandbox, and then leverage its "skills" to execute integration tests, simulate load, and analyze the resultant logs.
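One way to picture such a layer is as a registry of named, callable skills that an agent can discover and invoke against its sandbox. The sketch below is an assumption about how this could be structured, not a description of any specific product: `SkillRegistry`, the skill names, and the stubbed return values are hypothetical, and real skills would call a test runner or a log backend.

```python
from typing import Callable

# A skill takes the sandbox's base URL and returns a textual report.
Skill = Callable[[str], str]


class SkillRegistry:
    """Maps skill names to callables the agent may invoke."""

    def __init__(self) -> None:
        self._skills: dict[str, Skill] = {}

    def skill(self, name: str) -> Callable[[Skill], Skill]:
        """Decorator that registers a function under a skill name."""
        def decorator(fn: Skill) -> Skill:
            self._skills[name] = fn
            return fn
        return decorator

    def names(self) -> list[str]:
        """List available skills so the agent can choose among them."""
        return sorted(self._skills)

    def run(self, name: str, sandbox_url: str) -> str:
        """Invoke a skill against a sandbox; unknown names raise KeyError."""
        return self._skills[name](sandbox_url)


registry = SkillRegistry()


@registry.skill("run_integration_tests")
def run_integration_tests(sandbox_url: str) -> str:
    # Stub: a real skill would execute the test suite against sandbox_url.
    return f"integration tests executed against {sandbox_url}"


@registry.skill("fetch_logs")
def fetch_logs(sandbox_url: str) -> str:
    # Stub: a real skill would query the log backend for this sandbox.
    return f"logs fetched for {sandbox_url}"
```

Keeping skills behind a small, uniform interface means new capabilities, such as load simulation, can be added without changing how the agent calls them.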

Should the agent detect an error in the logs, it enters an iterative loop: it corrects its own code, redeploys to the sandbox, and retests. This closed loop lets the agent reach an empirically validated state, with tests passing against the broader system, before it requests human intervention. Passing tests are evidence of correctness rather than proof of it, which is why human review remains the final step. The agent initiates that review only after it has independently verified its changes in the sandbox.
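The loop described above might look like the following sketch. The function and parameter names are hypothetical, the `deploy`, `validate`, and `revise` callables stand in for real infrastructure and model calls, and the cap on attempts is an added assumption so that an agent that cannot converge hands off to a human instead of retrying forever.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ValidationResult:
    passed: bool
    log_excerpt: str  # relevant log lines the agent can use to revise


def validate_until_green(
    code: str,
    deploy: Callable[[str], None],
    validate: Callable[[], ValidationResult],
    revise: Callable[[str, str], str],
    max_attempts: int = 5,
) -> tuple[bool, str, int]:
    """Deploy, validate, and revise until validation passes or attempts run out.

    Returns (passed, latest_code, attempts_used).
    """
    for attempt in range(1, max_attempts + 1):
        deploy(code)              # push the candidate to the agent's sandbox
        result = validate()       # run tests and inspect logs
        if result.passed:
            return True, code, attempt
        # Feed the failure evidence back into the next revision.
        code = revise(code, result.log_excerpt)
    return False, code, max_attempts
```

Only a `True` first element would trigger a pull request in this sketch; a `False` result would escalate to a human with the accumulated logs rather than submitting unverified code.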

Enabling True Autonomy and Continuous Delivery

The combination of scalable ephemeral environments and a skills-based validation layer changes the development model. AI agents evolve from rudimentary autocomplete engines into autonomous contributors. They no longer hand untested code to senior engineers to fix. Instead, they take responsibility for the entire lifecycle of their assigned tasks, from initial generation through system-wide validation.

This is the most credible path to continuous delivery in the age of AI. Continuous delivery has long been considered the ultimate aspiration of software engineering. AI agents have not altered that goal; rather, they have turned the infrastructure required to attain it from a desirable feature into a necessity.

Bridging the Gap

These structural challenges are the issues that platforms like Signadot are designed to address. Signadot provides every human developer and AI agent with their own lightweight, isolated environment, which enables changes to be validated against the full system in parallel and at scale, before any code is merged. Organizations can visit Signadot.com to explore how to unblock their CI pipelines and translate their investments in AI into tangible improvements in shipping velocity.

The current landscape of software development is defined by the rapid integration of AI into core engineering workflows. While the benefits of increased coding speed are undeniable, the critical challenge of ensuring the quality, stability, and reliability of this AI-generated output cannot be overstated. The traditional methods of code validation are proving insufficient, leading to a re-evaluation of architectural strategies. The future of efficient and safe software delivery hinges on the ability of engineering teams to build robust systems capable of handling the unprecedented scale and complexity introduced by autonomous coding agents. This evolution requires a proactive shift in how we approach testing, integration, and deployment, ensuring that the promise of AI-driven velocity does not become a precursor to systemic instability. The race is on for organizations to adapt their validation processes, lest they find themselves drowned in the very code their advanced tools are creating.