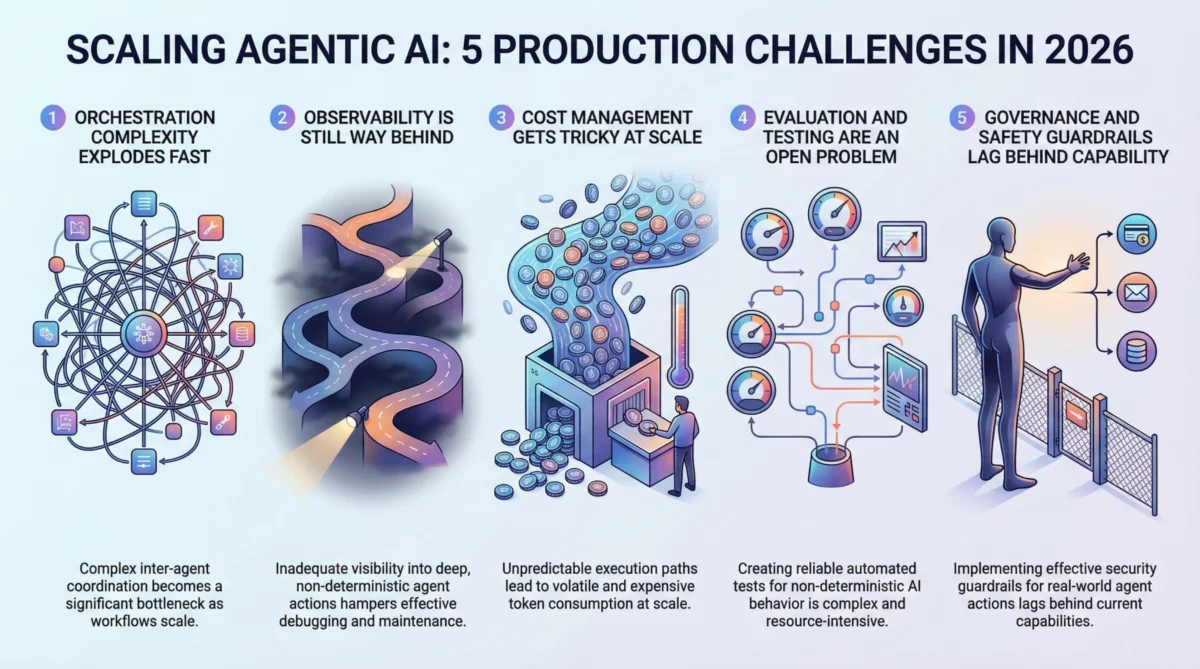

The year 2026 marks a pivotal moment for artificial intelligence, particularly for agentic AI systems. What began as captivating prototypes and seemingly magical demos now faces the realities of enterprise-scale deployment. Autonomous agents that make decisions, execute complex actions, and chain workflows on their own remain as appealing as ever, but moving them from the lab to robust, production-ready systems is proving harder than many teams anticipated. This article examines five challenges teams face as they try to scale agentic AI for real-world applications.

The Prototyping Boom and the Production Chasm

The rapid evolution of large language models (LLMs) has triggered an explosion in agentic AI development. Potential applications range from automating customer service interactions to streamlining complex scientific research. Venture capital funding for AI startups, particularly those focused on agentic capabilities, rose by an estimated 70% in 2024-2025, reflecting intense market enthusiasm. Inspired by demos of agents completing intricate tasks with minimal human intervention, developers have moved quickly from concept to working prototype. These early successes, however, often mask the engineering and operational complexity of deploying such systems at scale.

Machine learning has always had a gap between proof of concept and production; for agentic AI, that gap is wider than ever. A traditional supervised model maps a fixed input to a predicted output. An agentic system makes decisions at runtime, calls external tools, and corrects its own course based on intermediate results. That autonomy is powerful, but it adds layers of unpredictability and complexity that traditional MLOps (machine learning operations) frameworks were not designed to handle. As a result, organizations deploying agents in mission-critical environments are running into unforeseen obstacles that are reshaping their deployment strategies and timelines for 2026.
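The contrast between a single prediction and an agent can be made concrete with a minimal decide-act-observe loop. This is an illustrative sketch only: `TOOLS`, `stub_policy`, and `run_agent` are hypothetical names, and `stub_policy` stands in for an LLM call that a real agent would use to choose its next action.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

# Hypothetical tool registry; real tools would call search APIs, databases, etc.
TOOLS: Dict[str, Callable[[str], str]] = {
    "search": lambda query: f"results for {query!r}",
    "summarize": lambda text: text[:20],
}


@dataclass
class Step:
    tool: str
    arg: str
    observation: str


def stub_policy(goal: str, history: List[Step]) -> Tuple[str, str]:
    """Stand-in for an LLM call that picks the next action from the history so far."""
    if not history:
        return "search", goal
    if len(history) == 1:
        return "summarize", history[-1].observation
    return "finish", history[-1].observation


def run_agent(goal: str, max_steps: int = 5) -> Tuple[str, List[Step]]:
    """Decide, act, observe, repeat: each observation feeds the next decision."""
    history: List[Step] = []
    for _ in range(max_steps):
        tool, arg = stub_policy(goal, history)
        if tool == "finish":
            return arg, history
        observation = TOOLS[tool](arg)
        history.append(Step(tool, arg, observation))
    raise RuntimeError("step budget exhausted before the agent finished")


answer, steps = run_agent("agent costs")
print([s.tool for s in steps], answer)
```

Unlike a single prediction, the number of iterations and the tools invoked depend on what each step returns, which is where the added unpredictability comes from.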

1. Orchestration Complexity Explodes: The Multi-Agent Maze

One of the most immediate challenges in scaling agentic AI is how quickly orchestration complexity grows. A single agent performing a narrow, well-defined task is usually manageable, but real-world applications often require multi-agent architectures in which agents delegate tasks, collaborate, and select tools dynamically. Coordination overhead climbs fast: with n agents there are n(n-1)/2 possible pairwise interactions, and what looks like a linear workflow turns into a sprawling, non-linear network of dependencies.
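One way to keep a multi-agent plan tractable is to make its dependencies explicit and check them before anything runs. The sketch below uses Python's standard-library `graphlib` (Python 3.9+) to derive an execution order and to reject a plan whose dependencies form a cycle, which at runtime would show up as agents waiting on each other. The agent names are hypothetical.

```python
from graphlib import CycleError, TopologicalSorter
from typing import Dict, List, Set

# Hypothetical plan: each agent maps to the agents whose output it needs first.
plan: Dict[str, Set[str]] = {
    "researcher": set(),
    "analyst": {"researcher"},
    "writer": {"analyst", "researcher"},
    "reviewer": {"writer"},
}


def execution_order(graph: Dict[str, Set[str]]) -> List[str]:
    """Return one valid order, or raise CycleError if the dependencies loop."""
    return list(TopologicalSorter(graph).static_order())


print(execution_order(plan))

# If the researcher also waits on the reviewer, no agent can ever start.
cyclic = {**plan, "researcher": {"reviewer"}}
try:
    execution_order(cyclic)
except CycleError as err:
    print("deadlock risk, cycle:", err.args[1])
```

Dynamic agents can add tasks mid-run, so a static check like this only covers the planned portion of a workflow.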

Background and Data: Early 2025 saw a significant push toward modular AI systems, in which specialized agents handle distinct parts of a larger problem. Integrating those modules, however, has become a primary bottleneck. A recent report by the AI engineering consultancy Synthetica Labs indicated that 60% of enterprise AI projects involving multi-agent systems were delayed by more than three months in 2025 because of unforeseen orchestration issues. This complexity often shows up as race conditions in asynchronous pipelines, deadlocks, or cascading failures in which a minor error in one agent triggers a collapse across the entire system. Debugging these scenarios is exceptionally difficult because the interactions are dynamic and hard to reproduce consistently across environments. Traditional workflow engines, designed for deterministic, sequential processes, are proving inadequate for the fluid, adaptive nature of agentic AI. Consequently, many teams build bespoke orchestration layers, which quickly become the most brittle and maintenance-intensive components of their AI stack.
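Cascading failures of this kind are often contained by giving every agent a deadline and turning its errors into a status the orchestrator can act on, instead of letting one exception tear down the whole run. This is a minimal `asyncio` sketch under that assumption; the three agents are hypothetical stubs that succeed, hang, and fail.

```python
import asyncio
from typing import Awaitable, Callable, List, Tuple

Agent = Callable[[str], Awaitable[str]]


async def fast_agent(task: str) -> str:
    await asyncio.sleep(0.01)
    return f"done: {task}"


async def hung_agent(task: str) -> str:
    await asyncio.sleep(10)  # simulates an agent stuck waiting on a slow tool
    return f"done: {task}"


async def failing_agent(task: str) -> str:
    raise ValueError(f"bad tool output for {task}")


async def run_isolated(agent: Agent, task: str, timeout: float) -> Tuple[str, str]:
    """Run one agent with a deadline; report failures instead of propagating them."""
    try:
        result = await asyncio.wait_for(agent(task), timeout=timeout)
        return "ok", result
    except asyncio.TimeoutError:
        return "timeout", task
    except Exception as exc:  # contain the error so sibling agents keep running
        return "error", repr(exc)


async def orchestrate(assignments: List[Tuple[Agent, str]], timeout: float = 0.5):
    return await asyncio.gather(*(run_isolated(a, t, timeout) for a, t in assignments))


results = asyncio.run(
    orchestrate([(fast_agent, "a"), (hung_agent, "b"), (failing_agent, "c")])
)
print(results)
```

What the orchestrator does next (retry, fall back to another agent, or escalate) is a policy decision this sketch deliberately leaves out.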

Implications: The escalating complexity lengthens development cycles, increases operational risk, and consumes more engineering resources. Because agents cannot be coordinated reliably under varying loads, these systems are also hard to scale, and businesses struggle to predict performance and throughput as user demand grows. Dr. Lena Petrov, CTO of the AI-driven automation firm NexusFlow, put it this way on a recent industry panel: "The challenge isn’t building individual agents; it’s building a conductor that can lead an orchestra of thousands of them, each playing its own part dynamically. This requires a complete rethinking of our system design principles."

2. Observability Lag: Piercing the Agentic Black Box

The dictum "you can’t fix what you can’t see" holds particularly true for agentic AI. Traditional machine learning monitoring focuses on metrics like latency, throughput, and model accuracy, which offer only a superficial view of an agentic system’s behavior. When an autonomous agent takes a multi-step path to fulfill a user request, what matters is why it made specific decisions, which tools it invoked, and how intermediate results shaped its subsequent actions.
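A minimal sketch of what step-level tracing captures: nested spans that tie each tool call back to the decision that triggered it. The `Tracer` and `Span` classes are hypothetical and keep everything in memory; established tracing libraries such as OpenTelemetry use the same span-and-parent model and would be the more natural choice in production.

```python
import time
import uuid
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Any, Dict, Iterator, List, Optional


@dataclass
class Span:
    name: str
    parent_id: Optional[str]
    attributes: Dict[str, Any]
    span_id: str = field(default_factory=lambda: uuid.uuid4().hex[:8])
    duration_s: float = 0.0


class Tracer:
    """Records nested spans so every tool call can be traced to its decision."""

    def __init__(self) -> None:
        self.spans: List[Span] = []
        self._stack: List[str] = []

    @contextmanager
    def span(self, name: str, **attributes: Any) -> Iterator[Span]:
        parent = self._stack[-1] if self._stack else None
        current = Span(name, parent, dict(attributes))
        self.spans.append(current)
        self._stack.append(current.span_id)
        start = time.perf_counter()
        try:
            yield current
        finally:
            current.duration_s = time.perf_counter() - start
            self._stack.pop()


tracer = Tracer()
with tracer.span("handle_request", user_query="where is my refund?"):
    with tracer.span("decide", reasoning="need the order record") as decision:
        decision.attributes["chosen_tool"] = "lookup_order"
    with tracer.span("tool_call", tool="lookup_order", order_id="A1") as call:
        call.attributes["result"] = "refund issued"

for s in tracer.spans:
    print(s.name, s.parent_id, s.attributes)
```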

Background and Data: The inherent non-determinism of agentic behavior further complicates observability. The same input can lead to very different execution paths, making it nearly impossible to snapshot a failure and replay it reliably for debugging. Tracing infrastructure is evolving but remains largely immature for agentic workflows. A 2025 survey by the Global AI Development Forum found that 75% of AI engineering managers identified "lack of comprehensive observability" as a critical impediment to debugging and optimizing agentic systems. Teams often resort to a patchwork of custom logging, proprietary tracing tools such as LangSmith (which many teams have only begun to adopt), and extensive manual review of verbose logs, a process that is both time-consuming and prone to human error.
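Because the same input can take different paths, one practical workaround is record-and-replay: log every model response during a run, then feed the saved responses back to reproduce the failure exactly. The sketch assumes the agent routes all model calls through a single wrapper; `RecordReplay`, `flaky_model`, and `agent_run` are hypothetical names, and `flaky_model` only simulates sampling with `random.choice`.

```python
import json
import random
from typing import Callable, List, Optional


class RecordReplay:
    """Records responses in 'record' mode and serves them back in 'replay' mode."""

    def __init__(self, mode: str, cassette: Optional[List[dict]] = None) -> None:
        if mode not in ("record", "replay"):
            raise ValueError("mode must be 'record' or 'replay'")
        self.mode = mode
        self.cassette = cassette if cassette is not None else []
        self._cursor = 0

    def call(self, model: Callable[[str], str], prompt: str) -> str:
        if self.mode == "record":
            response = model(prompt)
            self.cassette.append({"prompt": prompt, "response": response})
            return response
        entry = self.cassette[self._cursor]
        self._cursor += 1
        if entry["prompt"] != prompt:  # the run has drifted from the recording
            raise RuntimeError(f"replay diverged at call {self._cursor}")
        return entry["response"]


def flaky_model(prompt: str) -> str:
    # Simulates a sampled LLM: the same prompt can produce different answers.
    return random.choice(["use tool A", "use tool B", "answer directly"])


def agent_run(client: RecordReplay) -> List[str]:
    first = client.call(flaky_model, "step 1")
    second = client.call(flaky_model, f"step 2 after: {first}")
    return [first, second]


recorder = RecordReplay("record")
original = agent_run(recorder)
saved = json.dumps(recorder.cassette)  # store alongside the failure report

replayer = RecordReplay("replay", json.loads(saved))
print(agent_run(replayer) == original)  # same path, and no model calls are made
```

Replay only covers what passes through the wrapper; tool calls with side effects or external state need the same treatment for a faithful reproduction.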

Implications: Inadequate observability hinders effective root cause analysis, prolongs incident response times, and erodes confidence in the system’s reliability. For industries with strict compliance requirements, the inability to fully audit an agent’s decision-making process presents significant regulatory hurdles. "Without clear, actionable insights into an agent’s internal monologue and tool interactions, we’re essentially flying blind," stated Marcus Chen, Head of AI Operations at a leading financial institution, emphasizing the critical need for a new generation of observability tools tailored for autonomous agents. The industry is currently exploring standardized frameworks for agentic telemetry, but widespread adoption and robust tooling are still several years away.

3. Unpredictable Cost Management: The Token Tsunami

The economics of scaling agentic AI are proving to be a rude awakening for many organizations. Each action an agent takes, particularly one involving complex reasoning or tool use, typically translates into one or more LLM calls. When agents chain dozens or even hundreds of these steps for a single user request, aggregate token costs can climb unexpectedly, turning a seemingly minor per-call cost into a significant operating expense.
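A rough cost model shows why chained steps add up faster than intuition suggests. Many agent loops re-send the full transcript (system prompt, earlier reasoning, tool results) on every call, so input tokens grow roughly quadratically with the number of steps. The sketch assumes that pattern with no prompt caching or truncation; the token counts and per-million-token prices are hypothetical placeholders, not any provider's rates.

```python
def chained_cost(
    steps: int,
    base_prompt_tokens: int,
    added_tokens_per_step: int,
    output_tokens_per_step: int,
    usd_per_m_input: float,
    usd_per_m_output: float,
) -> float:
    """Dollar cost of one request when every call re-sends the transcript so far."""
    # Call k (0-indexed) sees the base prompt plus k earlier steps of history.
    input_tokens = sum(base_prompt_tokens + k * added_tokens_per_step for k in range(steps))
    output_tokens = steps * output_tokens_per_step
    return (input_tokens * usd_per_m_input + output_tokens * usd_per_m_output) / 1_000_000


# Hypothetical workload: 2,000-token prompt, 1,000 tokens of new history per step,
# 300 output tokens per step, $3 per million input and $15 per million output tokens.
for n in (10, 20, 100):
    print(n, "steps:", round(chained_cost(n, 2_000, 1_000, 300, 3.0, 15.0), 2))
```

Under these assumptions, going from 10 to 20 steps raises the cost from $0.24 to $0.78, and a 100-step runaway loop costs about $15.90, more than 60 times the 10-step run.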

Background and Data: Unlike traditional APIs with predictable pricing, agentic systems follow variable execution paths, which makes cost forecasting notoriously difficult. A late-2025 analysis from the AI Cost Optimization Institute (AICOI) reported cases in which a single edge-case scenario triggered a runaway agent loop costing up to 50 times more than a typical execution path. Per-request costs for complex agentic tasks often range from $0.10 to $0.50; at one million requests per day, even the low end comes to about $3 million over a 30-day month, a bill that can far exceed initial budget allocations.
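The per-request figures translate into monthly spend with simple arithmetic. This sketch takes the quoted $0.10 to $0.50 range and the 50x worst case at face value; the one-million-requests-per-day volume, the 30-day month, and the 0.1% runaway rate are illustrative assumptions.

```python
def monthly_bill(
    usd_per_request: float,
    requests_per_day: int,
    days: int = 30,
    runaway_rate: float = 0.0,
    runaway_multiplier: float = 50.0,
) -> float:
    """Expected monthly spend when a fraction of requests hit a runaway loop."""
    expected_multiplier = (1 - runaway_rate) + runaway_rate * runaway_multiplier
    return usd_per_request * expected_multiplier * requests_per_day * days


for per_request in (0.10, 0.50):
    base = monthly_bill(per_request, 1_000_000)
    with_runaways = monthly_bill(per_request, 1_000_000, runaway_rate=0.001)
    print(f"${per_request:.2f}/request: ${base:,.0f} baseline, ${with_runaways:,.0f} with runaways")
```

Even a 0.1% runaway rate adds about 4.9% to the bill here, because each runaway request costs as much as 50 ordinary ones.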

Implications: This billing unpredictability puts heavy pressure on engineering leads and finance departments, often forcing a trade-off between cost efficiency and the quality or depth of agentic output. Companies are experimenting with routing simpler sub-tasks to smaller, cheaper models, aggressively caching intermediate results, and adding "kill switches" that terminate runaway agent processes. Finding the right balance, however, requires continuous experimentation and deliberately cost-aware design. The rise of "AI FinOps" (financial operations for AI) is a direct response, with specialized tools and practices emerging to monitor, allocate, and optimize LLM-related spending. Unexpected cost surges also act as a barrier to entry for smaller startups, limiting the democratization of advanced agentic AI capabilities.
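Two of these tactics, routing simple sub-tasks to a smaller model and a kill switch for runaway processes, fit in a few lines. The model tiers, per-call prices, the length-and-keyword routing rule, and the `BudgetGuard` class are all hypothetical; a production router would more likely use a classifier or a confidence signal than a keyword check.

```python
from dataclasses import dataclass
from typing import List


class BudgetExceeded(RuntimeError):
    """Raised when a single request exceeds its step or dollar cap."""


@dataclass
class BudgetGuard:
    max_steps: int
    max_usd: float
    steps: int = 0
    spent_usd: float = 0.0

    def charge(self, usd: float) -> None:
        self.steps += 1
        self.spent_usd += usd
        if self.steps > self.max_steps or self.spent_usd > self.max_usd:
            raise BudgetExceeded(f"stopped after {self.steps} steps, ${self.spent_usd:.2f}")


# Hypothetical per-call prices for two model tiers.
MODEL_COST = {"small": 0.002, "large": 0.03}


def route(subtask: str) -> str:
    """Naive rule: short sub-tasks without analysis go to the cheaper model."""
    return "small" if len(subtask) < 40 and "analyze" not in subtask else "large"


def run(subtasks: List[str], guard: BudgetGuard) -> List[str]:
    models_used = []
    for task in subtasks:
        model = route(task)
        guard.charge(MODEL_COST[model])  # the kill switch trips here
        models_used.append(model)
    return models_used


print(run(["extract the invoice date", "analyze contract risk clauses"], BudgetGuard(10, 1.00)))

try:
    run(["analyze the same clause again"] * 100, BudgetGuard(max_steps=50, max_usd=0.50))
except BudgetExceeded as err:
    print("kill switch:", err)
```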

4. Evaluation and Testing Deficiencies: The Non-Deterministic Dilemma

Perhaps the most fundamental obstacle to robust agentic AI deployment is the inadequacy of current evaluation and testing paradigms. Traditional software testing assumes deterministic behavior, where a given input always produces the same output, and traditional machine learning evaluation relies on fixed input-output mappings. Agentic AI, with its autonomous decision-making and non-deterministic execution paths, breaks both assumptions at once.

Background and Data: How do you rigorously test a system that can take a different path every time it runs, even from identical initial conditions? It is a question AI engineers everywhere are wrestling with. A 2025 report from the Institute for Autonomous Systems Research found that over 80% of organizations deploying agentic AI still rely heavily on manual human review for quality assurance, a method that does not scale and is prone to inconsistency. Tooling for comprehensive agentic evaluation remains fragmented and immature. Approaches under exploration include "LLM-as-a-judge" pipelines, in which a separate model scores an agent’s outputs against predefined criteria, and scenario-based test suites that check behavioral properties rather than exact outputs. Some advanced teams are investing in simulation environments that stress-test agents against thousands of diverse synthetic scenarios before production release.
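A scenario-based test in this spirit runs the same scenario many times, checks behavioral properties instead of an exact output, and gates release on each property's pass rate. Everything here is hypothetical: `toy_agent` simulates a stochastic agent that occasionally calls a tool it should not, and the 99% threshold is arbitrary. An LLM-as-a-judge check would slot in as one more property function that calls a separate model.

```python
import random
from typing import Callable, Dict, List


# Hypothetical stochastic agent: returns the tools it used, its answer, and step count.
def toy_agent(query: str, rng: random.Random) -> dict:
    tools = ["lookup_order"] + (["send_email"] if rng.random() < 0.1 else [])
    return {"tools": tools, "answer": f"Order status for {query}: shipped", "steps": rng.randint(2, 6)}


# Properties every run must satisfy, regardless of the exact path taken.
PROPERTIES: Dict[str, Callable[[dict], bool]] = {
    "only_allowed_tools": lambda r: set(r["tools"]) <= {"lookup_order"},
    "mentions_status": lambda r: "status" in r["answer"].lower(),
    "bounded_steps": lambda r: r["steps"] <= 8,
}


def evaluate(agent, query: str, trials: int = 200, seed: int = 0) -> Dict[str, float]:
    """Pass rate of each property over repeated runs of one scenario."""
    rng = random.Random(seed)
    runs = [agent(query, rng) for _ in range(trials)]
    return {name: sum(prop(r) for r in runs) / trials for name, prop in PROPERTIES.items()}


rates = evaluate(toy_agent, "order A1")
blocking: List[str] = [name for name, rate in rates.items() if rate < 0.99]
print(rates)
print("release blocked by:", blocking)
```

Fixing the seed makes the evaluation itself repeatable while still exercising many different execution paths.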

Implications: The lack of standardized benchmarks and mature evaluation tools means there’s no industry consensus on what constitutes "good" performance for a complex agentic workflow. This ambiguity leads to slower iteration cycles, increased risk of deploying agents with latent bugs or undesirable behaviors, and significant challenges in proving the reliability and safety of these systems to stakeholders and regulators. Professor Anya Gupta, a leading researcher in AI verification at Stanford University, recently commented, "Until we can rigorously and scalably evaluate the ‘how’ and ‘why’ of agentic decisions, their full potential will remain constrained by the need for constant human oversight."

5. Governance and Safety Guardrails: Lagging Behind Autonomy

The ability of agentic AI systems to take real-world actions, such as sending emails, modifying databases, executing financial transactions, or interacting with external services, raises profound safety and governance concerns. These capabilities are being developed and deployed far faster than robust regulatory frameworks and internal guardrails are being created and put in place.

Background and Data: The core challenge is balance: safety mechanisms must prevent harmful or unintended actions without being so restrictive that they negate the autonomy and utility that define agentic AI. Hallucinations and other unexpected agent behaviors, often minor at the prototype stage, can have significant real-world consequences in production. Regulators worldwide, including those implementing and building on the European Union’s AI Act, are increasingly scrutinizing autonomous systems. A recent white paper from the Thomson Reuters Institute for Responsible AI emphasized the mounting pressure on companies to demonstrate clear accountability, auditability, and compliance mechanisms for their agentic deployments.

Implications: Teams are currently learning through trial and error, adding friction points such as granular permission systems, human-in-the-loop approval workflows for critical actions, and strict scope limitations. These measures add complexity, however, and can erode the efficiency gains of automation. Without comprehensive governance frameworks, organizations are exposed to legal liability, ethical dilemmas, and a potential loss of public trust if agents act erroneously or maliciously. Red teaming, the practice of actively trying to break or misuse agentic systems to uncover vulnerabilities, is becoming an industry standard. Building proactive, robust safety guardrails is not merely a technical challenge but a societal one, requiring collaboration among developers, ethicists, policymakers, and legal experts to ensure responsible innovation in 2026 and beyond.
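The friction points listed above (scope limits, granular permissions, and human approval for critical actions) can be expressed as a policy check that runs before any side effect. This is a minimal sketch; the action names, the risk tier, the $1,000 cap, and the `approve` callback standing in for a human reviewer are all hypothetical.

```python
from enum import Enum
from typing import Any, Callable, Dict


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


# Hypothetical policy for one agent: its action scope and which actions are high-risk.
ALLOWED_ACTIONS = {"read_db", "send_email", "transfer_funds"}
HIGH_RISK_ACTIONS = {"transfer_funds"}
MAX_TRANSFER_USD = 1_000


def check(action: str, params: Dict[str, Any]) -> Decision:
    if action not in ALLOWED_ACTIONS:
        return Decision.DENY  # scope limitation: anything unlisted is refused
    if action == "transfer_funds" and params.get("amount_usd", 0) > MAX_TRANSFER_USD:
        return Decision.DENY  # granular permission: hard cap on transfer size
    if action in HIGH_RISK_ACTIONS:
        return Decision.NEEDS_APPROVAL  # human-in-the-loop for critical actions
    return Decision.ALLOW


def execute(action: str, params: Dict[str, Any], approve: Callable[[str, Dict[str, Any]], bool]) -> str:
    decision = check(action, params)
    if decision is Decision.DENY:
        return f"denied: {action}"
    if decision is Decision.NEEDS_APPROVAL and not approve(action, params):
        return f"rejected by reviewer: {action}"
    return f"executed: {action}"  # the real side effect would run here


def always_reject(action: str, params: Dict[str, Any]) -> bool:
    return False  # stand-in for a human who declines the request


print(execute("read_db", {}, always_reject))
print(execute("transfer_funds", {"amount_usd": 200}, always_reject))
print(execute("drop_table", {}, always_reject))
```

Denying anything outside the allowlist keeps the failure mode conservative; the cost is extra friction for legitimate actions the policy did not anticipate.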

Final Thoughts on the Road Ahead

Agentic AI represents a genuinely transformative paradigm shift, promising unprecedented levels of automation and intelligent assistance. However, the journey from impressive prototype to reliable, scalable production system is undeniably complex. The five challenges outlined here (explosive orchestration complexity, critical observability gaps, unpredictable cost management, insufficient evaluation and testing, and lagging governance and safety guardrails) are not merely technical hurdles but foundational problems that demand innovative solutions and a collaborative industry effort.

The good news is that the ecosystem is maturing rapidly. Early adopters are accumulating hard-won lessons, specialized tooling is emerging, and research into these issues is intensifying. The organizations that invest in solving these foundational problems, by fostering interdisciplinary teams, advocating for industry standards, and embracing responsible development practices, are the ones best positioned to harness agentic AI and build systems that are not only intelligent but also robust, predictable, and trustworthy in the demanding real-world environments of 2026 and beyond.