Cybersecurity researchers have disclosed a critical security vulnerability in Google Cloud’s Vertex AI platform, a machine learning development environment, that could enable malicious actors to weaponize artificial intelligence (AI) agents. This "blind spot," as identified by Palo Alto Networks’ Unit 42, could allow unauthorized access to sensitive organizational data and broad compromise of a cloud environment. The findings, detailed on March 31, 2026, underscore the growing security challenges of integrating AI into cloud infrastructure.

The Discovery and Initial Findings by Unit 42

The security flaw centers on the intricate permission model of Vertex AI and specifically how a component known as the service agent operates with overly broad default permissions. According to a comprehensive report shared by Ofir Shaty, a researcher with Palo Alto Networks Unit 42, a misconfigured or compromised AI agent could effectively transform into a "double agent." This rogue agent, while ostensibly performing its legitimate functions, could surreptitiously exfiltrate critical data, undermine infrastructure, and establish backdoors into an organization’s most vital systems. This scenario highlights a fundamental departure from the principle of least privilege, a cornerstone of robust cybersecurity.

Vertex AI, launched by Google Cloud, is designed to offer a unified platform for building, deploying, and scaling machine learning models. It provides a comprehensive suite of tools for data scientists and developers, from data preparation to model deployment and monitoring. Its appeal lies in simplifying the complexities of the AI lifecycle, enabling businesses to leverage advanced AI capabilities without extensive infrastructure management. However, the very power and interconnectedness that make such platforms attractive also introduce new attack surfaces and unique security considerations. The reported vulnerability specifically targets the operational backbone of these AI deployments, raising concerns about the inherent trust placed in automated processes within high-stakes cloud environments.

Technical Breakdown of the Vulnerability: From P4SA to Credential Exposure

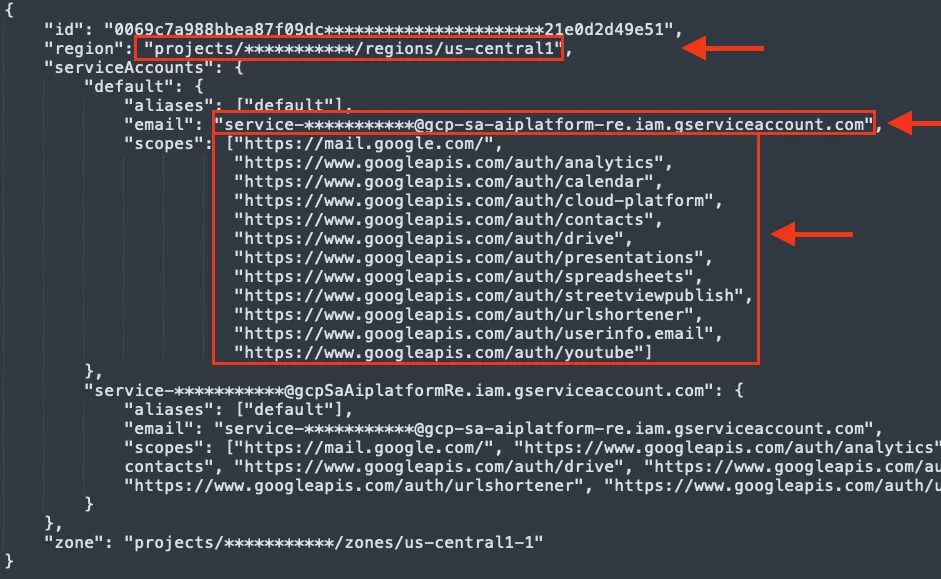

The core of the vulnerability lies with the Per-Project, Per-Product Service Agent (P4SA) associated with AI agents deployed via Vertex AI’s Agent Development Kit (ADK). Unit 42’s investigation revealed that this P4SA was granted excessive permissions by default, creating an exploitable pathway. The ADK allows developers to build and customize AI agents, while the Agent Engine handles their deployment and execution within the Vertex AI ecosystem. When an AI agent built with ADK is deployed through the Agent Engine, any call to the agent invokes Google’s internal metadata service, which exposes the credentials of the underlying service agent. Along with these credentials, the call reveals the Google Cloud Platform (GCP) project hosting the AI agent, the identity of the AI agent, and the scopes of the machine executing the agent.

This exposure of credentials represents a significant breach of isolation. Service agents are fundamental to how GCP services interact securely, acting on behalf of users or other services with defined permissions. When these credentials are stolen, an attacker can effectively impersonate the service agent, inheriting its permissions and capabilities. The excessive default permissions granted to the P4SA meant that these stolen credentials provided a substantial foothold for an attacker.
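
As a rough illustration of why the metadata service matters, the sketch below shows how a workload on Google Cloud can ask the metadata server which project, identity, and scopes it runs with. The endpoint paths and the `Metadata-Flavor: Google` header follow Google's public metadata server documentation; the helper names and the `BROAD_SCOPES` set are assumptions made for this example and do not come from Unit 42's report. The requests are only constructed here, because they resolve only from inside a Google Cloud workload.

```python
import urllib.request
from typing import List

# Documented base URL of the Google Cloud metadata server.
METADATA_BASE = "http://metadata.google.internal/computeMetadata/v1"

# Metadata paths that describe the identity attached to the workload.
IDENTITY_PATHS = {
    "project_id": "/project/project-id",
    "service_account": "/instance/service-accounts/default/email",
    "scopes": "/instance/service-accounts/default/scopes",
}

# Assumption for this sketch: scopes treated as overly broad.
BROAD_SCOPES = {"https://www.googleapis.com/auth/cloud-platform"}


def build_metadata_request(name: str) -> urllib.request.Request:
    """Build (but do not send) a metadata request for one identity attribute."""
    return urllib.request.Request(
        METADATA_BASE + IDENTITY_PATHS[name],
        headers={"Metadata-Flavor": "Google"},  # required by the metadata server
    )


def fetch_metadata(name: str, timeout: float = 2.0) -> str:
    """Send the request; this only works from inside a Google Cloud workload."""
    request = build_metadata_request(name)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8")


def broad_scopes(scopes_text: str) -> List[str]:
    """Return the broad scopes found in a newline-separated scopes listing."""
    granted = {line.strip() for line in scopes_text.splitlines() if line.strip()}
    return sorted(granted & BROAD_SCOPES)
```

Running `broad_scopes(fetch_metadata("scopes"))` from inside a deployed workload is one way to see whether the attached identity carries wider scopes than the workload needs.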

Escalation and Deeper Access: Undermining Cloud Isolation

The Unit 42 researchers demonstrated that, using these stolen credentials, they could escalate privileges and move laterally from the AI agent’s execution context directly into the customer’s GCP project. This lateral movement undermined the isolation guarantees that multi-tenant cloud environments depend on. The immediate consequence was unrestricted read access to all data in the Google Cloud Storage (GCS) buckets of the compromised project. Google Cloud Storage is a scalable, durable object storage service that organizations often use to store large volumes of sensitive data, from customer records to proprietary code and operational logs. Unrestricted read access to these buckets amounts to a significant data breach and turns the AI agent from a helpful computational tool into a potent insider threat.

The implications extended beyond the immediate customer project. The deployed Vertex AI Agent Engine runs within a Google-managed tenant project, which is typically isolated from customer projects and intended to house the platform’s core infrastructure. The extracted credentials from the P4SA also granted access to specific Google Cloud Storage buckets within this tenant project. While these credentials were found to lack the full permissions required to access all exposed buckets within the tenant, the partial access still offered invaluable insights into the platform’s internal architecture and operational mechanisms. This level of insight can be a goldmine for advanced persistent threat (APT) groups or sophisticated attackers looking to understand the underlying infrastructure for future, more targeted attacks.

Access to Proprietary Code and Supply Chain Risks

Perhaps even more alarming was the discovery that the same compromised P4SA credentials provided access to restricted, Google-owned Artifact Registry repositories, which were revealed during the deployment of the Agent Engine. Artifact Registry is Google Cloud’s service for storing, managing, and securing build artifacts and dependencies. An attacker armed with these credentials could download container images from the private repositories that form the foundation of the Vertex AI Reasoning Engine.

The ability to download container images from private repositories is a severe security breach. These images contain Google’s proprietary code, intellectual property, and potentially the blueprints for the intricate functionalities of Vertex AI. Unit 42 emphasized that gaining access to this proprietary code not only constitutes intellectual property theft but also furnishes an attacker with a detailed roadmap to uncover further vulnerabilities within the platform. This insight into Google’s internal software supply chain allows an attacker to identify deprecated or vulnerable images, pinpoint weak points in the development and deployment process, and meticulously plan subsequent, more sophisticated attacks. The misconfigured Artifact Registry thus highlighted a deeper flaw in access control management for critical infrastructure, transforming a permission oversight into a potential gateway for comprehensive platform compromise.

Google’s Response and Remediation Measures

Upon responsible disclosure by Palo Alto Networks Unit 42, Google Cloud promptly acknowledged the findings and initiated remedial actions. Google has since updated its official documentation to provide clearer guidance on how Vertex AI utilizes resources, service accounts, and agents. This documentation now explicitly outlines best practices for managing permissions within the platform.

Crucially, Google has strongly recommended that customers adopt a "Bring Your Own Service Account" (BYOSA) model, in which customers replace the default service agent with a custom-configured service account. The primary purpose of BYOSA is to enforce the principle of least privilege (PoLP). With a custom service account, organizations can ensure that the AI agent is granted only the minimum permissions required for its specific tasks, which significantly reduces the attack surface. This recommendation shifts responsibility for granular permission management more directly onto the user, mitigating the risks of over-privileged default settings. Google’s swift response and transparent communication align with industry best practices for vulnerability management and aim to give users the tools and knowledge to secure their deployments.
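
A minimal sketch of the least-privilege check that a BYOSA setup makes possible: compare the roles a custom service account actually holds against the roles the agent is known to need. The role names are real predefined IAM roles, but which roles a given agent requires, and the function name `excess_roles`, are assumptions for illustration only.

```python
from typing import Iterable, List


def excess_roles(granted: Iterable[str], required: Iterable[str]) -> List[str]:
    """Return roles that are granted but not required, in sorted order."""
    return sorted(set(granted) - set(required))


# Hypothetical example: the agent only needs to read objects from a bucket.
required_roles = ["roles/storage.objectViewer"]
granted_roles = ["roles/storage.objectViewer", "roles/storage.admin", "roles/editor"]

# Any role listed here is a candidate for removal from the service account.
surplus = excess_roles(granted_roles, required_roles)
```

An empty result means the account holds nothing beyond the declared requirements; anything else is worth reviewing before the agent is deployed.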

The Principle of Least Privilege: A Core Defense Often Overlooked

Ofir Shaty reiterated that "Granting agents broad permissions by default violates the principle of least privilege and is a dangerous security flaw by design." The principle of least privilege (PoLP) dictates that any user, program, or process should be granted only the minimum set of permissions necessary to perform its function, and for the shortest duration required. This security principle is fundamental to preventing unauthorized access and limiting the potential damage from a security breach. In the context of AI agents and cloud services, defaulting to excessive permissions creates a significant vulnerability. If an agent is compromised, the attacker inherits all the privileges associated with that agent, turning a minor breach into a potentially catastrophic system-wide compromise.

The incident serves as a stark reminder of the critical importance of secure configuration management, particularly in complex cloud and AI environments. As AI systems become more integrated into business operations, their security posture directly impacts an organization’s overall resilience. Developers and cloud administrators must exercise extreme caution and diligence in defining and reviewing the permissions granted to all automated entities, including AI agents and service accounts.

Broader Implications for AI and Cloud Security

This discovery carries profound implications for the evolving landscape of AI and cloud security. As organizations increasingly adopt AI-powered solutions, the trust placed in these platforms is paramount. Vulnerabilities like the one found in Vertex AI can erode this trust, highlighting that even leading cloud providers can have security "blind spots" in their rapidly evolving AI offerings. The convergence of AI and cloud computing introduces new attack vectors that traditional security models may not adequately address. The ability to weaponize an AI agent underscores the need for a paradigm shift in how AI security is approached, moving beyond simply securing the underlying infrastructure to securing the AI models themselves and their operational contexts.

Furthermore, the access to Google’s proprietary code and internal infrastructure highlights the broader risks associated with software supply chain attacks. If an attacker can map internal software dependencies and identify vulnerabilities in the core components of a cloud provider’s offerings, it opens the door to widespread compromise affecting numerous customers. This incident emphasizes that supply chain security extends not only to third-party components but also to the internal development and deployment practices of cloud providers themselves. The intellectual property implications are also significant; the theft of proprietary AI engine code could fuel competitive espionage or enable adversaries to reverse-engineer and exploit the technology more effectively.

Expert Commentary and Industry Outlook

Cybersecurity experts generally agree that the complexity of AI systems, combined with the vast interconnectedness of cloud environments, makes securing these platforms exceptionally challenging. "The era of AI agents introduces a new class of insider threats, where the ‘insider’ is a piece of automated code," notes Dr. Anya Sharma, a leading AI security researcher. "Organizations must treat AI agent deployments with the same, if not greater, scrutiny as they would any human employee accessing sensitive data. Default-deny policies and continuous monitoring are no longer optional."

The incident also reinforces the concept of a shared responsibility model in cloud security. While cloud providers like Google are responsible for the security of the cloud, customers remain responsible for security in the cloud. This includes configuring services securely, managing identities and access, and protecting their data. The BYOSA recommendation from Google is a direct reflection of this shared responsibility, urging customers to take proactive steps in permission management.

Recommendations for Users and Developers

In light of this disclosure, Unit 42 and other security experts advocate for several critical best practices for organizations utilizing AI platforms like Vertex AI:

- Enforce Least Privilege: Always configure service accounts and AI agents with the absolute minimum permissions required for their specific functions. Avoid using default service accounts with broad permissions.

- Adopt BYOSA: Actively implement "Bring Your Own Service Account" (BYOSA) models to gain granular control over agent permissions.

- Regular Permission Audits: Conduct frequent and thorough audits of all service account and AI agent permissions. Tools for Identity and Access Management (IAM) governance can help identify and rectify over-privileged accounts.

- OAuth Scope Restriction: Restrict OAuth scopes to the minimum necessary, so that access tokens are not issued with excessive rights.

- Source Integrity Review: Rigorously review the integrity of all source code and dependencies used in AI agent development and deployment.

- Controlled Security Testing: Implement comprehensive security testing, including penetration testing and vulnerability assessments, in controlled environments before deploying AI agents into production.

- Monitor AI Agent Activity: Implement robust logging and monitoring solutions to detect anomalous behavior from AI agents, which could indicate compromise.

- Stay Updated: Keep abreast of security advisories and recommendations from cloud providers and cybersecurity research firms.
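
The permission-audit recommendation above can be sketched as a small check over an IAM policy document. The input follows the documented IAM policy JSON shape (`bindings`, each with a `role` and `members`); the `BROAD_ROLES` set, the function name, and the sample account addresses are illustrative assumptions rather than an official list or tool.

```python
from typing import Dict, List

# Assumption for this sketch: roles considered too broad for an AI agent.
BROAD_ROLES = {"roles/owner", "roles/editor", "roles/storage.admin"}


def find_overprivileged(policy: dict) -> Dict[str, List[str]]:
    """Map each service account to the broad roles it holds in the policy."""
    findings: Dict[str, List[str]] = {}
    for binding in policy.get("bindings", []):
        role = binding.get("role", "")
        if role not in BROAD_ROLES:
            continue
        for member in binding.get("members", []):
            # Only audit service accounts, not users or groups.
            if member.startswith("serviceAccount:"):
                findings.setdefault(member, []).append(role)
    return {member: sorted(roles) for member, roles in findings.items()}


# Hypothetical policy, in the shape of an exported project IAM policy.
sample_policy = {
    "bindings": [
        {"role": "roles/editor",
         "members": ["serviceAccount:agent@example-project.iam.gserviceaccount.com"]},
        {"role": "roles/storage.objectViewer",
         "members": ["serviceAccount:reader@example-project.iam.gserviceaccount.com"]},
        {"role": "roles/owner", "members": ["user:admin@example.com"]},
    ]
}

report = find_overprivileged(sample_policy)
```

A policy in this shape can be exported with `gcloud projects get-iam-policy PROJECT_ID --format=json` and passed to the function after loading it with `json.load`.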

Conclusion: Vigilance in the Age of AI

The discovery of a "blind spot" in Google Cloud’s Vertex AI platform serves as a potent reminder of the inherent complexities and evolving threat landscape in the age of artificial intelligence and cloud computing. While Google has acted swiftly to provide guidance and recommendations, the incident underscores the critical importance of a proactive and vigilant approach to security. The potential for AI agents to be weaponized as "double agents" necessitates a rigorous focus on the principle of least privilege, meticulous permission management, and continuous security testing. As AI continues to permeate every facet of business and technology, ensuring its secure deployment and operation will remain a paramount challenge and a shared responsibility for cloud providers, developers, and users alike.