A container is a lightweight, standalone, executable software package that includes everything needed to run an application: code, runtime, system tools, system libraries, and settings. Containers are the unit of containerization, a method of packaging software so that it runs with its dependencies, isolated from other processes.

Containerization has reshaped software development and deployment by improving efficiency, portability, and scalability. Because a container packages an application with its code, runtime, libraries, and configuration files, the application runs reliably across different computing environments. This approach addresses long-standing problems with application compatibility and infrastructure dependencies, and it changes how software is built, delivered, and managed.

This article delves into the intricacies of containerization, exploring its fundamental principles, contrasting it with traditional virtualization methods, and examining its pivotal role in modern IT infrastructures. We will dissect the key components that define a container, illuminate its diverse use cases across various industries, and detail the significant benefits it confers upon development and operations teams. Furthermore, we will address the inherent challenges and considerations associated with adopting containerization, highlight prominent technologies driving its adoption, and forecast future trends that will continue to shape its evolution.

Understanding the Mechanics of Containers

At its heart, containerization lets developers package and run applications within isolated environments, which provides a consistent and efficient mechanism for deploying software. Applications can move from a developer’s local workstation to production servers without the usual worries about differences in operating system configuration or underlying infrastructure.

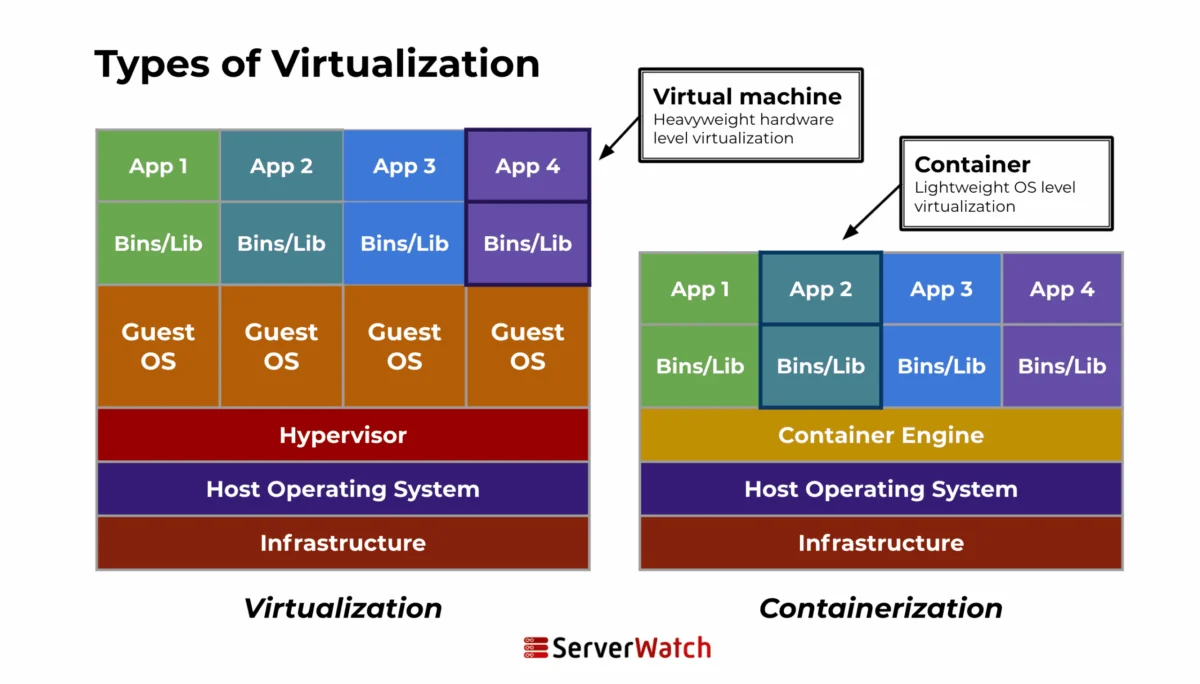

Unlike conventional deployment methodologies, containers encapsulate an application and its dependencies within a container image. This image serves as a blueprint, containing all the elements an application requires for execution: its code, the runtime environment, essential libraries, and necessary system tools. A crucial distinction lies in how containers leverage the host system’s kernel. While they maintain their own isolated filesystem, CPU, memory allocation, and process space, they share the host’s kernel. This architectural choice renders them significantly more lightweight and resource-efficient compared to virtual machines (VMs).

Visualizing the difference, imagine a single operating system kernel acting as the foundation. Containerization builds multiple, isolated "rooms" on top of this foundation, each containing a specific application and its necessities. Virtualization, conversely, involves building entirely separate "houses" (VMs), each with its own foundation (guest OS), on top of the existing landscape. This analogy underscores the inherent resource advantage of containers.

Containers vs. Virtual Machines: A Fundamental Divergence

While both containers and virtual machines (VMs) are instrumental in providing isolated environments for running applications, their underlying operational mechanisms differ significantly. The key distinctions lie in their architecture and resource management strategies.

| Feature | Containers | Virtual Machines |
|---|---|---|
| Architecture | Containers share the host system’s kernel, isolating application processes from the system. They do not necessitate a full operating system for each instance, making them lighter and faster to initiate than VMs. | A VM encapsulates not only the application and its dependencies but also an entire guest operating system. This OS operates on virtual hardware managed by a hypervisor, which resides on the host’s physical hardware. VMs are isolated from each other and the host. |
| Resource Management | Containers are exceptionally efficient, consuming fewer resources. This efficiency stems from sharing the host system’s kernel and requiring only the application and its runtime environment. | The requirement to run a complete OS within each VM leads to higher resource consumption. This can result in less optimal utilization of the underlying hardware resources. |

This architectural divergence has practical consequences. Because containers share the host OS kernel, they typically start in seconds or less, whereas VMs, which must boot an entire operating system, often take tens of seconds to minutes. This speed difference is critical in dynamic environments that demand rapid scaling and deployment.

The Mechanics of Containerization: A Step-by-Step Process

Containerization involves meticulously encapsulating an application within a container, complete with its dedicated operating environment. This comprehensive process typically unfolds through several key stages:

- Image Creation: Developers define the container’s contents and configuration in a Dockerfile (or a similar definition file). This file acts as a set of instructions for building the container image.

- Image Building: A container engine (like Docker) interprets the Dockerfile and constructs the container image. This image is a read-only template containing the application code, libraries, dependencies, and runtime.

- Image Registry Storage: The built image is often pushed to a container registry (e.g., Docker Hub, Google Artifact Registry), which serves as a central repository for storing and distributing container images.

- Container Instantiation: When an application needs to be run, a container instance is created from the image. This involves launching a running process from the image, creating a writable layer on top of the read-only image layers.

- Runtime Execution: The container engine manages the lifecycle of the container, orchestrating its execution, resource allocation, and interaction with the host system’s kernel.
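The build, push, and run stages above map onto three Docker CLI invocations. The following is a minimal sketch that only assembles those command lines as lists rather than executing them; the image reference `registry.example.com/demo/web:1.0` and the container name `web-1` are hypothetical placeholders, and the helper function names are illustrative, not part of any library.

```python
from typing import List


def build_command(image: str, context_dir: str = ".") -> List[str]:
    """Build an image from the Dockerfile found in context_dir and tag it."""
    return ["docker", "build", "-t", image, context_dir]


def push_command(image: str) -> List[str]:
    """Push the tagged image to the registry named in its reference."""
    return ["docker", "push", image]


def run_command(image: str, name: str) -> List[str]:
    """Start a container from the image; --rm removes it when it exits."""
    return ["docker", "run", "--rm", "--name", name, image]


def lifecycle(image: str, name: str) -> List[List[str]]:
    """Return the build, push, and run commands in the order described above."""
    return [build_command(image), push_command(image), run_command(image, name)]


# Hypothetical image reference and container name, for illustration only.
commands = lifecycle("registry.example.com/demo/web:1.0", "web-1")
for cmd in commands:
    print(" ".join(cmd))
```

Keeping the commands as argument lists means they could be handed to `subprocess.run` on a machine where Docker is installed, without any shell quoting concerns.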

Key Components of a Container

A functional container consists of several essential elements that work together to provide an isolated and executable environment:

- Container Image: This is the static, immutable blueprint containing the application code, runtime, libraries, environment variables, and configuration files. It is the foundation upon which containers are built.

- Container Runtime: This is the software responsible for running containers. It manages the container’s lifecycle, including starting, stopping, and monitoring processes. Examples include containerd and CRI-O, which in turn typically delegate to a low-level runtime such as runc.

- Container Engine: A higher-level component that provides the user interface and tools for building, running, and managing containers. Docker Engine is a prime example, encompassing the runtime, API, and command-line interface.

- Namespaces: A core Linux kernel feature that isolates what a process can see, including process IDs, network interfaces, mount points, hostnames, and user IDs. Each container operates within its own set of namespaces, so processes within one container cannot see or interact with processes in another, or with the host system’s processes.

- Control Groups (cgroups): Another Linux kernel feature that limits, accounts for, and isolates the resource usage (CPU, memory, disk I/O, network) of a collection of processes. Cgroups ensure that containers do not consume excessive resources, thereby preventing resource starvation for other containers or the host system.

- Union File Systems (UnionFS): Technologies like OverlayFS or AUFS enable containers to have a layered filesystem. This allows multiple containers to share common read-only image layers while maintaining their own writable layer for changes, contributing to efficiency and speed.
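The layered filesystem idea in the list above can be illustrated with a toy model. This sketch uses Python's `collections.ChainMap` to stand in for a union filesystem: lookups fall through from a per-container writable layer to shared read-only layers, and writes land only in the top layer. The file paths and contents are hypothetical, and real implementations such as OverlayFS work on directories and handle deletions with whiteout entries, which this model does not attempt.

```python
from collections import ChainMap

# Read-only image layers, modelled as path -> content mappings.
# Paths and contents are hypothetical.
base_layer = {"/bin/sh": "shell binary", "/etc/os-release": "base distro"}
app_layer = {"/app/server.py": "v1", "/etc/os-release": "base distro (patched)"}


def start_container(*image_layers: dict) -> ChainMap:
    """Stack a fresh writable layer on top of shared read-only layers.

    image_layers are given bottom-to-top. ChainMap searches its maps
    left-to-right, so the layers are reversed and the writable layer
    is placed first.
    """
    writable = {}
    return ChainMap(writable, *reversed(image_layers))


# Two containers share the same image layers but get separate writable layers.
c1 = start_container(base_layer, app_layer)
c2 = start_container(base_layer, app_layer)

# ChainMap sends writes to the first map only, so this change is
# visible in c1 and leaves the shared image layers untouched.
c1["/app/server.py"] = "v1 + local edit"

print(c1["/app/server.py"])   # read served from c1's writable layer
print(c2["/app/server.py"])   # read falls through to the shared app layer
print(c1["/etc/os-release"])  # upper image layer shadows the base layer
```

Because both containers reference the same underlying layer objects, starting a second container costs only one empty writable layer, which is the efficiency argument made for union filesystems.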

Pivotal Container Use Cases Driving Adoption

The versatility and efficiency of containerization have cemented its role across a broad spectrum of applications in the modern software landscape, catering to diverse needs in development, deployment, and ongoing management.

Microservices and Cloud-Native Architectures:

Containers are inherently aligned with the principles of microservices, a design paradigm where applications are deconstructed into small, independent services. Each microservice can be encapsulated in its own container, ensuring isolated environments, minimizing potential conflicts, and facilitating independent updates and scaling. In the realm of cloud-native development, containers enable applications to achieve remarkable scalability and resilience. They can be readily replicated, managed, and monitored, thereby optimizing load balancing and ensuring high availability. Orchestration tools like Kubernetes further enhance this by enabling dynamic management of containers, ensuring optimal resource utilization, automated self-healing capabilities, and streamlined scaling in response to fluctuating demand.
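The self-healing and scaling behavior attributed to orchestrators rests on a simple idea: repeatedly compare the desired number of replicas with what is actually running, and correct the difference. The sketch below is a toy, in-memory model of that reconciliation loop, not Kubernetes code; the `Cluster` class and the `web-N` replica names are hypothetical.

```python
import itertools
from dataclasses import dataclass, field
from typing import List


@dataclass
class Cluster:
    """Toy in-memory stand-in for a set of running container replicas."""
    desired: int
    running: List[str] = field(default_factory=list)
    _ids: itertools.count = field(default_factory=itertools.count, repr=False)

    def reconcile(self) -> None:
        """Start or stop replicas until the running count matches desired."""
        while len(self.running) < self.desired:
            self.running.append(f"web-{next(self._ids)}")
        while len(self.running) > self.desired:
            self.running.pop()


cluster = Cluster(desired=3)
cluster.reconcile()                # scale up from zero to three replicas

cluster.running.remove("web-1")    # simulate a crashed container
cluster.reconcile()                # self-healing: a replacement is started

cluster.desired = 5                # demand increases
cluster.reconcile()                # scale out to five replicas
print(cluster.running)
```

The caller only ever changes `desired`; the loop works out which actions are needed. That separation between declared state and corrective action is what lets an orchestrator recover from failures without operator intervention.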

Continuous Integration/Continuous Deployment (CI/CD) Pipelines:

Containerization seamlessly integrates into CI/CD pipelines, fostering consistent environments that span from the initial development phase through to production deployment. This uniformity is instrumental in identifying and rectifying issues early in the development lifecycle. Moreover, containers can automate testing environments, guaranteeing that every code commit is rigorously tested in a production-like setting. This meticulous approach leads to more dependable deployments and accelerates release cycles. Crucially, because containers bundle the application and its environment, they ensure predictable behavior across development, testing, staging, and production environments, significantly reducing deployment failures attributed to environmental inconsistencies.

Application Packaging and Distribution:

The ability of containers to encapsulate an application and all its dependencies simplifies the process of packaging and distributing software across heterogeneous environments. The inherent portability of containers means applications can be deployed on various platforms and cloud environments without requiring any modifications. Furthermore, container registries offer robust version control, enabling the storage of multiple container image versions, which facilitates straightforward rollbacks to previous stable versions if issues arise. This capability significantly bolsters the reliability and stability of application deployments.
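Versioned tags and rollbacks can be sketched with a small in-memory model. In the example below, each pushed version is stored under a tag that points at a SHA-256 digest of its content, and rolling back means redeploying the tag pushed immediately before the current one. The `Registry` class, the tag names, and the byte strings are all hypothetical; a real registry hashes image manifests and layers rather than a single byte string.

```python
import hashlib
from typing import Dict, List


class Registry:
    """Toy in-memory registry: tags point at content digests."""

    def __init__(self) -> None:
        self.tags: Dict[str, str] = {}   # tag -> digest
        self.history: List[str] = []     # tags in push order

    def push(self, tag: str, content: bytes) -> str:
        """Store a version under a tag and return its content digest."""
        digest = "sha256:" + hashlib.sha256(content).hexdigest()
        self.tags[tag] = digest
        self.history.append(tag)
        return digest

    def previous(self, tag: str) -> str:
        """Return the tag pushed immediately before `tag` (the rollback target)."""
        index = self.history.index(tag)
        if index == 0:
            raise LookupError(f"no version before {tag}")
        return self.history[index - 1]


registry = Registry()
registry.push("1.0", b"app build 1")
registry.push("1.1", b"app build 2")

deployed = "1.1"
deployed = registry.previous(deployed)   # roll back after a bad release
print(deployed, registry.tags[deployed])
```

Because the digest is derived from content, two pushes of identical bytes resolve to the same digest, which is why deployments that pin a digest rather than a tag are reproducible.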

The Multifaceted Benefits of Containerization

Containerization has evolved into a foundational element of contemporary software development and deployment strategies, owing to its extensive array of advantages. These benefits collectively contribute to increased agility, reduced costs, and enhanced operational efficiency.

- Portability: Containers run consistently across different environments – development laptops, on-premises servers, public clouds, and private clouds – eliminating the "it works on my machine" problem.

- Consistency: Applications and their dependencies are packaged together, ensuring identical behavior regardless of the underlying infrastructure.

- Isolation: Containers provide process, network, and filesystem isolation, preventing conflicts between applications and enhancing security.

- Resource Efficiency: Containers share the host OS kernel, consuming fewer resources (CPU, RAM, storage) compared to VMs, leading to higher density and lower infrastructure costs.

- Speed and Agility: Containers start up in seconds, enabling rapid deployment, scaling, and iterative development cycles.

- Scalability: Applications can be easily scaled horizontally by launching multiple instances of a container to handle increased load.

- Agility in Development: Developers can build and test applications in environments that closely mirror production, speeding up the development feedback loop.

- Simplified Management: Container orchestration platforms automate deployment, scaling, and management of containerized applications, reducing manual overhead.

- Improved Security: While requiring careful management, container isolation mechanisms can enhance security by limiting the blast radius of vulnerabilities.

- Cost Savings: Reduced infrastructure needs, efficient resource utilization, and faster deployment cycles contribute to significant cost reductions.

- Faster Time to Market: Streamlined development, testing, and deployment processes accelerate the delivery of new features and applications.

- Developer Productivity: Developers can focus on writing code rather than managing infrastructure, leading to higher productivity.

- Vendor Lock-in Reduction: Containerization promotes portability across cloud providers, mitigating vendor lock-in.

Challenges and Considerations in Containerization

Despite its undeniable advantages, the widespread adoption of containerization is not without its hurdles. Organizations must proactively address these challenges to harness the full potential of container technologies.

Security Concerns:

The shared kernel architecture, while efficient, can present unique security challenges. Vulnerabilities in the host kernel or the container runtime could potentially impact all containers running on that host. Furthermore, misconfigurations in container images or orchestration platforms can expose sensitive data or create unauthorized access points. Security best practices, including image scanning, least privilege principles, and robust network segmentation, are paramount.
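As one illustration of the least privilege principle, the sketch below inspects a `docker run` argument list for a few common hardening flags: `--privileged`, `--user`, `--read-only`, and `--cap-drop`. Those flags exist in the Docker CLI, but the `audit_run_args` function, its messages, and the image name `example/app:1.0` are hypothetical, and the checks are a small sample rather than a complete policy.

```python
from typing import List


def audit_run_args(args: List[str]) -> List[str]:
    """Return human-readable findings for a `docker run` argument list."""
    findings = []
    if "--privileged" in args:
        findings.append("--privileged grants broad access to the host")
    if not any(a in ("--user", "-u") or a.startswith("--user=") for a in args):
        findings.append("no --user set; the image default (often root) applies")
    if "--read-only" not in args:
        findings.append("root filesystem is writable; consider --read-only")
    if not any(a.startswith("--cap-drop") for a in args):
        findings.append("no capabilities dropped; consider --cap-drop=ALL")
    return findings


# Hypothetical invocations, for illustration only.
risky = ["docker", "run", "--privileged", "example/app:1.0"]
hardened = ["docker", "run", "--user", "1000:1000", "--read-only",
            "--cap-drop=ALL", "example/app:1.0"]

for finding in audit_run_args(risky):
    print("risky:", finding)
print("hardened findings:", audit_run_args(hardened))
```

A check like this is best run in a CI pipeline alongside image scanning, so that risky configurations are caught before they reach a shared host.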

Complexity in Management:

While individual containers are simple, managing a large-scale deployment of interconnected containers can become complex. Orchestration platforms like Kubernetes, while powerful, introduce a steep learning curve. Ensuring high availability, effective load balancing, seamless updates, and efficient resource allocation across a distributed containerized environment requires specialized expertise and robust tooling.

Integration with Existing Systems:

Migrating legacy applications to containerized environments or integrating containerized applications with existing on-premises infrastructure can be a significant undertaking. This often involves refactoring applications, adapting deployment pipelines, and addressing data persistence and networking challenges that differ from those of traditional monolithic applications. Organizations must carefully plan their integration strategy to ensure a smooth transition.

Popular Container Technologies Leading the Charge

The containerization ecosystem is robust and dynamic, featuring several prominent technologies that have been instrumental in its widespread adoption.

Docker:

Docker stands as a pioneer and arguably the most ubiquitous container platform. It democratized containerization by providing an accessible and standardized toolset for developing, shipping, and running containerized applications. Docker’s key innovations include its user-friendly command-line interface, its image building system, and its extensive registry ecosystem.

- Key Features: Dockerfiles for defining images, Docker Hub for image registry, Docker Compose for multi-container applications, Docker Swarm for native orchestration.

- Benefits: Ease of use, large community support, extensive documentation, comprehensive tooling for the entire container lifecycle.

Kubernetes:

Kubernetes (K8s) has emerged as the de facto standard for container orchestration. Originally developed by Google and now maintained under the Cloud Native Computing Foundation (CNCF), Kubernetes automates the deployment, scaling, and management of containerized applications at scale. It is designed to be platform-agnostic, allowing it to run on-premises, in public clouds, and in hybrid environments.

- Key Features: Automated rollouts and rollbacks, service discovery and load balancing, storage orchestration, self-healing capabilities, secret and configuration management.

- Benefits: High availability, resilience, scalability, efficient resource utilization, declarative configuration, extensibility.
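Declarative configuration means describing the state you want rather than the steps to reach it. The sketch below builds a minimal `apps/v1` Deployment manifest as a Python dictionary and serializes it to JSON, a format `kubectl apply -f` accepts alongside YAML. The `deployment` helper, the application name `web`, and the image reference are hypothetical, and a production manifest would normally also set resource requests, probes, and other fields omitted here.

```python
import json


def deployment(name: str, image: str, replicas: int) -> dict:
    """Build a minimal apps/v1 Deployment manifest as a plain dict."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,
            # The selector must match the pod template's labels.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [{"name": name, "image": image}]},
            },
        },
    }


# Hypothetical name and image reference, for illustration only.
manifest = deployment("web", "registry.example.com/demo/web:1.0", replicas=3)
manifest_json = json.dumps(manifest, indent=2)
print(manifest_json)
```

Scaling the application then amounts to changing `replicas` and reapplying the manifest; Kubernetes works out which pods to start or stop.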

Other Notable Container Technologies:

Beyond the dominant players, several other container technologies and related tools contribute to the ecosystem:

- containerd: A core container runtime that provides the necessary functionality for managing the container lifecycle, often used by higher-level orchestrators.

- CRI-O: A container runtime built specifically to implement the Kubernetes Container Runtime Interface (CRI), offering a lightweight alternative to containerd for Kubernetes clusters.

- Podman: A daemonless container engine for developing, managing, and running OCI containers on Linux systems, often positioned as a direct alternative to Docker.

- LXC/LXD: Linux Containers offer OS-level virtualization through system containers, which run a full Linux userspace, including an init system, rather than a single application.

- OpenShift: Red Hat’s enterprise Kubernetes platform, offering additional developer and operations tools on top of Kubernetes for a more comprehensive container application platform.

Future Trends Shaping Containerization

The trajectory of containerization is intrinsically linked to the evolution of emerging technologies and evolving industry standards. The future promises deeper integration and more sophisticated applications of container technologies.

Integration with Emerging Technologies:

Containerization is poised to become increasingly intertwined with cutting-edge fields. Its role in AI and Machine Learning involves packaging complex ML models and their dependencies for consistent training and deployment across diverse hardware. In the Internet of Things (IoT), containers can streamline the deployment and management of applications on resource-constrained edge devices. Serverless computing is also benefiting, with platforms increasingly adopting containerization for function execution, offering greater flexibility and portability.

Evolution of Container Standards and Regulations:

As containerization matures and becomes more integral to critical business operations, the development of robust standards and regulatory frameworks is gaining momentum. Initiatives like the Open Container Initiative (OCI) are crucial for ensuring interoperability between different container runtimes and image formats. The increasing focus on supply chain security, compliance, and auditing within containerized environments will drive the need for more sophisticated tools and processes to ensure the integrity and trustworthiness of containerized software.

Bottom Line: The Enduring and Expanding Role of Containers

Containers have irrevocably reshaped the landscape of software development and deployment, delivering unprecedented levels of efficiency, scalability, and consistency. As these technologies continue their rapid evolution, containers are set to become even more deeply embedded in the fabric of modern IT, acting as catalysts for innovation and efficiency across a multitude of sectors.

Looking ahead, the potential of containerization remains vast. Its inherent ability to seamlessly integrate with future technological advancements and adapt to evolving regulatory landscapes positions it as a cornerstone of digital transformation strategies. Organizations that adeptly leverage container technologies will undoubtedly find themselves at the vanguard of innovation, well-equipped to navigate and thrive in the challenges and opportunities of an increasingly dynamic digital world.
