The artificial intelligence landscape is often defined by the large proprietary models of companies like Anthropic, OpenAI, and Google, but a significant change is under way beneath the headlines. While coverage focuses on the ever-expanding capabilities of these proprietary systems, a parallel and increasingly dynamic market for open-source AI models is rapidly evolving. Developers worldwide are turning to these downloadable, customizable models for greater control over cost, deployment, and experimentation. This growing ecosystem, most visible on the AI platform Hugging Face, reveals a striking geopolitical shift: China is emerging as a leading force in both the creation and the use of open AI models, challenging the long-held dominance of US-based developers.

The Rise of Open Models and the Hugging Face Phenomenon

For years, the development of leading open-source AI models was spearheaded largely by Western companies, most notably Meta with its Llama family. Hugging Face, which serves as a central hub for AI developers much as GitHub does for software, offers a useful window into the trends shaping the field: it hosts a large collection of models, datasets, and tools and fosters collaboration among the people who build them. Recent data from the platform, highlighted in its "State of Open Source on Hugging Face: Spring 2026" report, points to a significant recalibration. The center of gravity for open AI model development and adoption appears to be tilting eastward, with China increasingly at the forefront.

The "DeepSeek Moment": China’s Ascendancy in Open AI

A pivotal moment in this shift appears to have been the release and rapid adoption of DeepSeek R1. Launched in January 2025, the model quickly drew attention for delivering performance competitive with leading proprietary systems at a significantly lower cost. Crucially, its weights were made available for developers to download, adapt, and deploy freely, igniting a wave of interest and development within China.

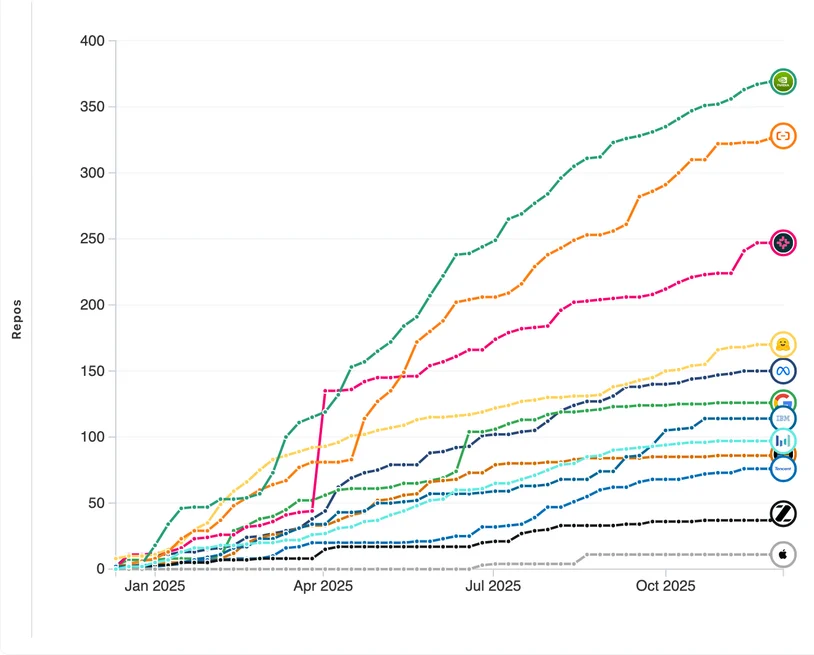

This event catalyzed broader engagement from Chinese technology companies. Following DeepSeek's success, established players like Baidu, which had minimal presence on Hugging Face in 2024, grew to more than 100 repositories in 2025. ByteDance (TikTok's parent company) and Tencent reportedly increased their model releases as much as ninefold, according to Hugging Face data. Even companies that previously focused on closed models, such as MiniMax, have begun publishing open releases. Most recently, Chinese smartphone maker Xiaomi unveiled MiMo-V2-Pro, a trillion-parameter model positioned to rival top US systems in performance at a substantially lower cost. The company has also indicated that it plans to release the model's weights once the model is stable, which would further fuel the open-source movement.

The impact of this surge shows up in deployment statistics. A report by RunPod, an AI infrastructure provider, indicates that Alibaba's Qwen models have overtaken Meta's Llama as the most widely deployed self-hosted large language models. The same trend appears on Hugging Face itself, where Alibaba's models, including Qwen, have inspired more than 100,000 derivative projects, a sign of how extensively developers adapt and reuse them.

Developer sentiment, often measured by "likes" on Hugging Face as a proxy for popularity and engagement, reflects the same shift. A year earlier, Meta's Llama models dominated the top of the rankings. Now DeepSeek-R1 holds the top spot, alongside a more diverse set of models from Chinese, German, and UK-based organizations, signaling that influential players increasingly come from beyond the traditional US stronghold.

The Unshakeable Foundation: Nvidia’s Hardware Dominance

Despite the dramatic rise of Chinese-developed open-source models, a critical dependency remains: the underlying hardware. Nvidia, the semiconductor company that grew from making gaming graphics cards into the indispensable engine of the AI boom, continues to exert unparalleled influence. Its market value has soared, recently surpassing $5 trillion, reflecting intense demand for its graphics processing units (GPUs), which are essential for both training and running complex AI models.

Nvidia's strategy, however, extends beyond hardware. The company is pushing into the software and model layers of the AI stack, developing its own tools and models to guide developers and deepen their integration with its ecosystem. This push is clearly visible on Hugging Face, where Nvidia had amassed more than 350 repositories by the end of 2025, more than any other organization. That output reflects a strategic effort to shape how AI systems are built and deployed, reinforcing Nvidia's position as a central pillar of AI infrastructure. Its Nemotron model family and NemoClaw platform for AI agents are examples of this broader engagement.

The reality is that the vast majority of AI models, including the fast-improving open-source models from China, are still designed to run on Nvidia GPUs. Support for alternative hardware, such as AMD systems and increasingly capable chips from Chinese companies, is improving, but the prevailing architecture remains firmly rooted in Nvidia's technology. This interdependence helps explain why Nvidia is investing beyond chips, including a reported $26 billion over the next five years to develop open AI models. The logic is straightforward: by ensuring that popular models are optimized for its hardware, Nvidia strengthens its hold on the entire AI value chain.

Navigating Geopolitical Tensions and Future Implications

This dynamic creates a striking split: China is advancing rapidly in AI model development and adoption, yet the hardware enabling that progress remains largely under US control, specifically Nvidia's. The pattern mirrors cloud computing, where a few US companies have dominated infrastructure even as competitors tried to build alternatives. Europe's ongoing efforts to reduce its reliance on those providers, so far with limited success, highlight how hard digital sovereignty is to achieve.

The implications for the future of AI are significant. The proliferation of open-source models offers unprecedented opportunities for innovation and accessibility. However, continued dependence on a single hardware supplier, even as domestic alternatives are developed, raises questions about supply chain resilience and geopolitical leverage. Chinese companies such as Alibaba are investing in domestic AI inference chips to reduce their reliance on US hardware, particularly in the face of export restrictions. These efforts are still nascent, but they signal a strategic push toward a more self-sufficient AI ecosystem.

The current landscape presents a clear divide. At the model level, Chinese developers are showing remarkable innovation and adoption, as Hugging Face data demonstrates. At the infrastructure level, Nvidia's dominance looks largely unassailable for now. As open-source AI models spread globally, the underlying hardware question will remain a critical factor in the technology's trajectory. Whichever nation leads in model development, the shared reliance on specific hardware platforms underscores the complex interplay of technological progress, economic competition, and geopolitical strategy in the AI race.