As the semiconductor industry approaches the physical and economic boundaries of Moore’s Law, the transition from monolithic System-on-Chip (SoC) architectures to multi-chiplet designs has become a strategic necessity for high-performance computing, artificial intelligence, and data center applications. The primary driver for this shift is the reticle limit, a physical constraint in lithography that restricts the maximum size of a single die to approximately 858 square millimeters. To exceed this capacity, engineering teams must now disaggregate large designs into smaller, modular components known as chiplets, which are then integrated into a single package using advanced 2.5D or 3D interconnect technologies.

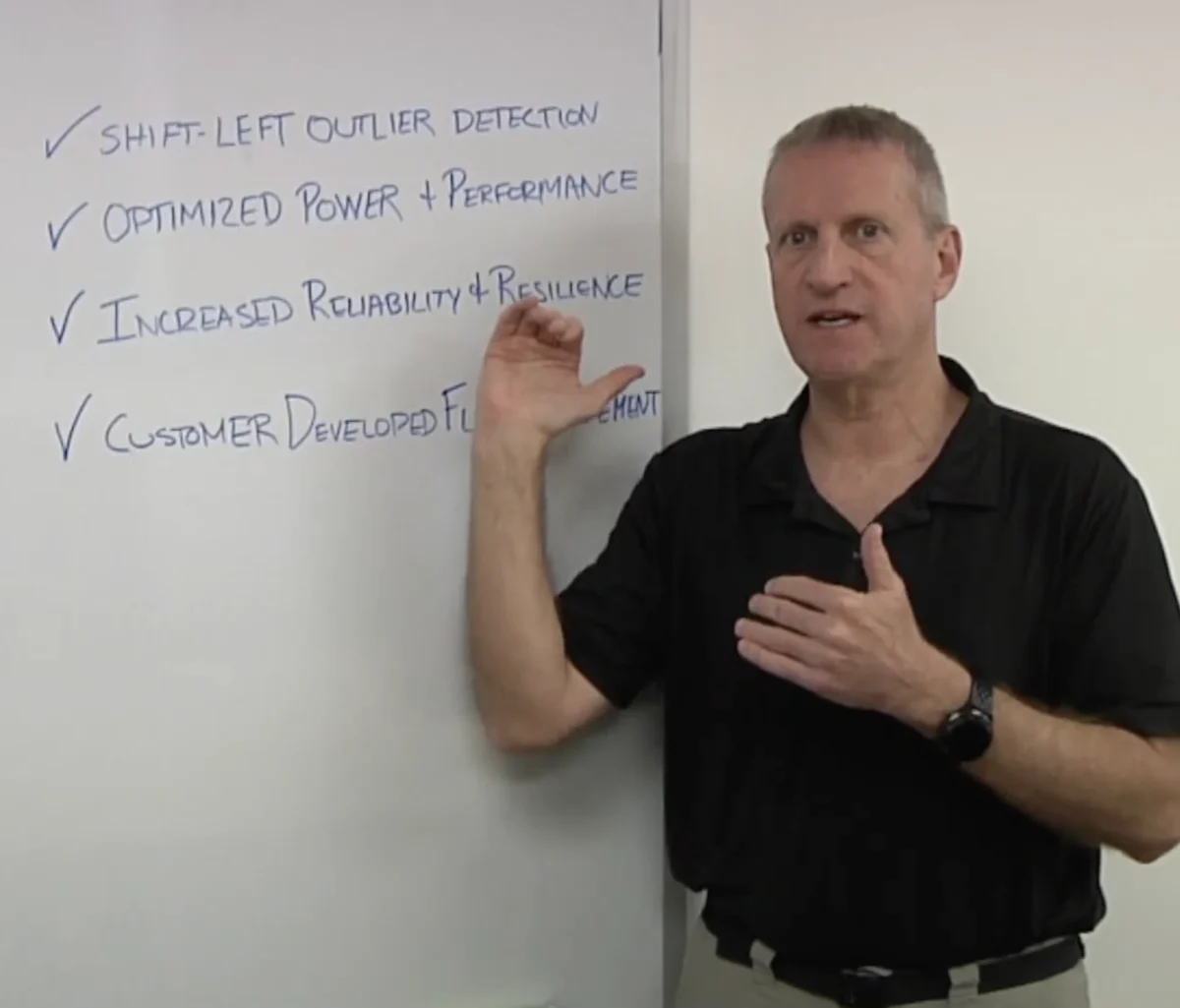

While the modular approach offers significant advantages in terms of yield and cost, it introduces a new layer of engineering complexity. Moving data between chiplets requires ultra-high-bandwidth interfaces that must operate with minimal latency and power consumption. Furthermore, the physical proximity of different chiplets—often manufactured at different process nodes—creates unique thermal and mechanical challenges that do not exist in traditional monolithic designs. Nir Sever, senior director of business development at proteanTecs, recently highlighted that the success of these systems depends on the ability of engineers to balance performance and power while ensuring long-term reliability through deep-data monitoring.

The Evolution of Chiplet Architectures: A Chronology of Disaggregation

The concept of breaking a chip into smaller pieces is not entirely new, but its adoption as a mainstream commercial strategy has accelerated rapidly over the last decade. Historically, the semiconductor industry relied on scaling transistors to pack more functionality into a single die. However, as process nodes moved below 7nm toward 3nm and 2nm, the cost of designing and manufacturing large monolithic dies skyrocketed.

In 2019, AMD marked a significant turning point in the industry with the release of its Zen 2 architecture, which used a chiplet-based approach for its Ryzen and EPYC processors. By separating the core compute functions (built on a leading-edge 7nm node) from the I/O functions (built on more mature and cost-effective 12nm and 14nm nodes, for the Ryzen and EPYC I/O dies respectively), AMD demonstrated that chiplets could provide a performance-per-dollar advantage that monolithic designs could no longer match.

In 2022, the industry took a major step toward standardization with the formation of the Universal Chiplet Interconnect Express (UCIe) consortium. This group, which includes major players such as Intel, TSMC, Samsung, and Arm, aims to create an open ecosystem where chiplets from different vendors can be mixed and matched within the same package. Today, the focus has shifted from simple "die-splitting" to complex heterogeneous integration, where AI accelerators, high-bandwidth memory (HBM), and specialized I/O chiplets are combined to create massive system-in-package (SiP) solutions.

The Engineering Challenge of Data Movement and Interconnects

One of the most critical engineering considerations in a multi-chiplet design is the Die-to-Die (D2D) interface. In a monolithic chip, data travels across the silicon via on-chip wires with very low resistance and capacitance. When that design is split into chiplets, data must cross a physical boundary, often through a silicon interposer, an organic substrate, or a bridge like Intel’s EMIB (Embedded Multi-die Interconnect Bridge).

Engineers must manage several competing factors in these interfaces:

- Bandwidth Density: AI workloads require massive amounts of data to move between compute units and memory. High-bandwidth interfaces must support terabits per second per millimeter of die edge.

- Power Efficiency: Because chiplets are often used in power-constrained environments like data centers, the energy cost of moving a single bit of data (measured in picojoules per bit) must be kept extremely low.

- Latency: To the software running on the processor, the multi-chiplet system should ideally appear as a single monolithic entity. Any significant latency introduced by the D2D interface can degrade system performance.
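The trade-off between bandwidth density and energy per bit can be made concrete with simple arithmetic. The sketch below is illustrative only: the function names are hypothetical, and the figures (1 Tb/s per millimeter, a 10 mm die edge, 0.5 to 2 pJ/bit) are assumed example values, not measurements of any specific interface.

```python
def edge_bandwidth_tbps(density_tbps_per_mm: float, edge_length_mm: float) -> float:
    """Aggregate die-to-die bandwidth available along one die edge, in Tb/s."""
    return density_tbps_per_mm * edge_length_mm


def link_power_watts(bandwidth_tbps: float, energy_pj_per_bit: float) -> float:
    """Interface power in watts.

    (Tb/s) * (pJ/bit) = 1e12 bit/s * 1e-12 J/bit, so the scale factors cancel
    and the product is already in watts.
    """
    return bandwidth_tbps * energy_pj_per_bit


if __name__ == "__main__":
    # Assumed example: 1 Tb/s/mm of edge density over a 10 mm die edge.
    bw = edge_bandwidth_tbps(density_tbps_per_mm=1.0, edge_length_mm=10.0)
    for pj in (0.5, 1.0, 2.0):
        print(f"{bw:.0f} Tb/s at {pj} pJ/bit -> {link_power_watts(bw, pj):.1f} W")
```

Under these assumptions, every additional picojoule per bit costs 10 W on a 10 Tb/s link, which is why energy per bit is treated as a first-order design parameter.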

According to industry data, the move to chiplets can increase the total number of pins and interconnects by an order of magnitude. Managing the signal integrity of thousands of micro-bumps—the tiny solder balls that connect chiplets to the package—requires rigorous simulation and testing. A single poorly formed connection can render an entire multi-thousand-dollar package useless.

Physical Effects: Thermal Management and Mechanical Stress

Placing multiple high-power chiplets in close proximity creates a "thermal cross-talk" effect. In a traditional SoC, heat is generally distributed across the die. In a chiplet design, a high-performance GPU chiplet might be placed directly next to a sensitive HBM stack. If the GPU runs hot, it can raise the temperature of the memory beyond its operational limits, leading to data errors or even permanent hardware failure.

Furthermore, the materials used in chiplet packaging have different Coefficients of Thermal Expansion (CTE). As the device heats up and cools down during operation, the different components expand and contract at different rates. This creates mechanical stress on the interconnects, which can lead to "warpage" or cracking over time.
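A first-order estimate shows why CTE mismatch matters. The sketch below assumes unconstrained linear expansion, so the relative displacement between two bonded materials is the CTE difference times the temperature swing times the distance from the center of the die. The function name and the material values (roughly 2.6 ppm/°C for silicon, 17 ppm/°C for an organic substrate) are illustrative assumptions; real packages need finite-element analysis.

```python
def cte_mismatch_displacement_um(
    cte_a_ppm_per_c: float,
    cte_b_ppm_per_c: float,
    delta_t_c: float,
    distance_mm: float,
) -> float:
    """Relative in-plane displacement (micrometers) between two materials.

    First-order model: strain = |alpha_a - alpha_b| * delta_T, and the
    displacement at a given distance from the neutral point is strain * distance.
    """
    delta_alpha = abs(cte_a_ppm_per_c - cte_b_ppm_per_c) * 1e-6  # ppm -> 1/degC
    strain = delta_alpha * delta_t_c
    return strain * distance_mm * 1000.0  # mm -> micrometers


if __name__ == "__main__":
    # Assumed values: silicon on an organic substrate, 80 degC swing,
    # a bump located 10 mm from the center of the die.
    shift = cte_mismatch_displacement_um(2.6, 17.0, 80.0, 10.0)
    print(f"Relative displacement: {shift:.2f} um")
```

With these assumed numbers the relative shift is about 11.5 micrometers, which is significant compared with micro-bump pitches measured in tens of micrometers.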

Engineering teams are now utilizing sophisticated thermal modeling and "digital twin" simulations to predict how heat will flow through the package. This is where the role of telemetry and on-chip monitoring becomes vital. Companies like proteanTecs advocate for the inclusion of "agents" or monitors embedded within the silicon to provide real-time data on temperature, voltage, and timing margins. By monitoring these parameters, system controllers can dynamically adjust workloads to prevent hotspots and extend the life of the package.
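As a rough illustration of how a system controller might act on such telemetry, the sketch below steers new work toward the coolest chiplet that still has thermal headroom. It is a hypothetical policy, not any vendor's API: the function name, the chiplet names, and the 95 °C limit are all assumptions chosen for the example.

```python
from typing import Dict, Optional


def pick_coolest_chiplet(temps_c: Dict[str, float], limit_c: float) -> Optional[str]:
    """Return the name of the coolest chiplet below the thermal limit.

    Returns None when every chiplet is at or above the limit, signalling
    that the caller should throttle rather than schedule more work.
    """
    candidates = {name: t for name, t in temps_c.items() if t < limit_c}
    if not candidates:
        return None
    return min(candidates, key=candidates.get)


if __name__ == "__main__":
    # Hypothetical readings from on-die temperature monitors, in degC.
    readings = {"compute0": 91.0, "compute1": 78.5, "compute2": 96.0}
    print(pick_coolest_chiplet(readings, limit_c=95.0))
```

A production controller would also weigh voltage and timing-margin data and apply hysteresis to avoid oscillation; this sketch covers only the temperature-based selection step.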

The Economic Reality: Yield and the Known Good Die (KGD) Problem

The primary economic argument for chiplets is improved yield. In semiconductor manufacturing, a single defect can ruin the die it lands on, and the probability that a die contains at least one defect rises with its area. If a large design is split into four equal chiplets, a single defect ruins only 25% of that silicon area rather than 100%.
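The yield argument can be quantified with the simple Poisson yield model, in which the probability that a die has no defects is exp(-A × D0) for die area A and defect density D0. The sketch below uses that textbook model with assumed example values (an 800 mm² monolithic die, four 200 mm² chiplets, and 0.1 defects per cm²); real foundry yield models and defect densities differ.

```python
import math


def poisson_die_yield(area_mm2: float, defect_density_per_cm2: float) -> float:
    """Fraction of defect-free dies under the Poisson model: Y = exp(-A * D0)."""
    area_cm2 = area_mm2 / 100.0  # 1 cm^2 = 100 mm^2
    return math.exp(-area_cm2 * defect_density_per_cm2)


if __name__ == "__main__":
    d0 = 0.1  # assumed defect density, defects per cm^2
    mono = poisson_die_yield(800.0, d0)     # one large monolithic die
    chiplet = poisson_die_yield(200.0, d0)  # one of four equal chiplets
    print(f"Monolithic 800 mm^2 die yield: {mono:.1%}")
    print(f"Single 200 mm^2 chiplet yield: {chiplet:.1%}")
```

Under these assumptions, roughly 45% of the monolithic dies are defect-free compared with about 82% of the chiplets, so far less wafer area is scrapped per good unit of silicon.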

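However, this creates the "Known Good Die" (KGD) problem. Before chiplets are assembled into an expensive package, manufacturers must be certain that every individual chiplet is fully functional. If a package contains ten chiplets and one is faulty, the entire assembly must typically be discarded, because rework after bonding is rarely practical. Because every chiplet must be good, the probability of a fully working package falls geometrically as the number of chiplets per package increases, so even a small rate of undetected faults compounds quickly.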
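The compounding effect is easy to see numerically. The sketch below assumes that chiplet faults are independent and ignores assembly losses, so the probability that a package is fully functional is the per-chiplet probability of being good raised to the number of chiplets. The function name and the 99% and 99.9% figures are illustrative assumptions.

```python
def package_yield(per_chiplet_good_prob: float, num_chiplets: int) -> float:
    """Probability that all chiplets in a package are good.

    Assumes independent faults and perfect assembly: Y_pkg = p ** n.
    """
    if not 0.0 <= per_chiplet_good_prob <= 1.0:
        raise ValueError("per_chiplet_good_prob must be between 0 and 1")
    if num_chiplets < 0:
        raise ValueError("num_chiplets must be non-negative")
    return per_chiplet_good_prob ** num_chiplets


if __name__ == "__main__":
    for p in (0.99, 0.999):
        for n in (4, 10, 20):
            print(f"p={p}, n={n}: package yield {package_yield(p, n):.1%}")
```

With a 99% chance that each chiplet passing wafer test is truly good, a ten-chiplet package works only about 90% of the time and a twenty-chiplet package about 82% of the time, which is why test coverage before assembly matters so much.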

To mitigate this, the industry is moving toward more exhaustive testing at the wafer level. Traditional testing methods are often insufficient for chiplets because many of the high-speed interfaces are designed to be tested only after they are bonded in the package. Engineers are now developing "built-in self-test" (BIST) structures and specialized probing techniques to ensure that each chiplet meets strict quality standards before integration.

Implications for the Future of Semiconductor Manufacturing

The shift toward multi-chiplet designs is fundamentally changing the relationship between fabless chip designers and foundries. We are moving away from a world where a single company designs everything on a chip, toward a "chiplet marketplace" where a system integrator might buy a compute chiplet from one vendor, an I/O chiplet from another, and a security chiplet from a third.

This transition has several long-term implications:

- Standardization: For a multi-vendor chiplet ecosystem to work, standards like UCIe must be universally adopted. This requires unprecedented cooperation among competitors.

- Security: Multi-chiplet designs introduce new security risks. If chiplets come from different sources, how can an integrator be sure there are no "hardware trojans" or backdoors in a third-party component? Secure "root of trust" chiplets will become a standard feature of these packages.

- Supply Chain Resilience: Chiplets allow companies to be more flexible. If a 3nm fab is overbooked, a company might choose to keep its non-critical functions on 7nm or 12nm chiplets, reducing its reliance on the most advanced (and often constrained) manufacturing nodes.

Conclusion: Balancing Innovation with Reliability

Multi-chiplet design represents the next great frontier in microelectronics. While the benefits of breaking the reticle limit are clear, the path to successful implementation is fraught with technical hurdles. The industry must solve the problems of interconnect density, thermal management, and "Known Good Die" verification to make this transition sustainable.

As Nir Sever and other experts emphasize, the focus is shifting from pure performance to "lifecycle management." In the era of chiplets, the job of an engineer does not end when the chip leaves the factory. Continuous monitoring of the physical health of the silicon is now required to ensure that these complex, multi-component systems can operate reliably for their intended lifespan. As AI continues to drive the demand for ever-larger and more complex processors, the multi-chiplet architecture will likely become the standard blueprint for the next generation of computing.