Containers have reshaped software development and deployment by offering a consistent, efficient way to package and run applications. A container is a lightweight, isolated process (or group of processes) started from a standalone, executable package, the container image, which bundles everything the software needs to run: its code, runtime, system tools, system libraries, and configuration. Containerization is the broader practice of isolating software and its dependencies from other processes so that it behaves predictably across environments. This article covers the fundamental components of containerization, how containers differ from virtual machines, their benefits and use cases, the leading technologies, and the challenges and future directions of the field.

Understanding the Power of Containers

Containerization empowers developers to package and execute applications within isolated environments. This process provides a reliable and efficient mechanism for deploying software, ensuring that an application behaves consistently whether it’s running on a developer’s local machine, a staging server, or a production environment, regardless of underlying operating system configurations or infrastructure variations.

Unlike traditional deployment methods that often grapple with dependency conflicts and environmental inconsistencies, containers encapsulate an application and all its necessary dependencies into a single, portable unit called a container image. This image contains the application’s code, runtime, libraries, and essential system tools. Containers share the host system’s kernel while each keeps its own isolated filesystem and process space, with CPU and memory usage bounded by kernel-enforced limits. Because no guest operating system is needed per instance, containers are significantly lighter and more resource-efficient than virtual machines (VMs).

Containers Versus Virtual Machines: A Fundamental Distinction

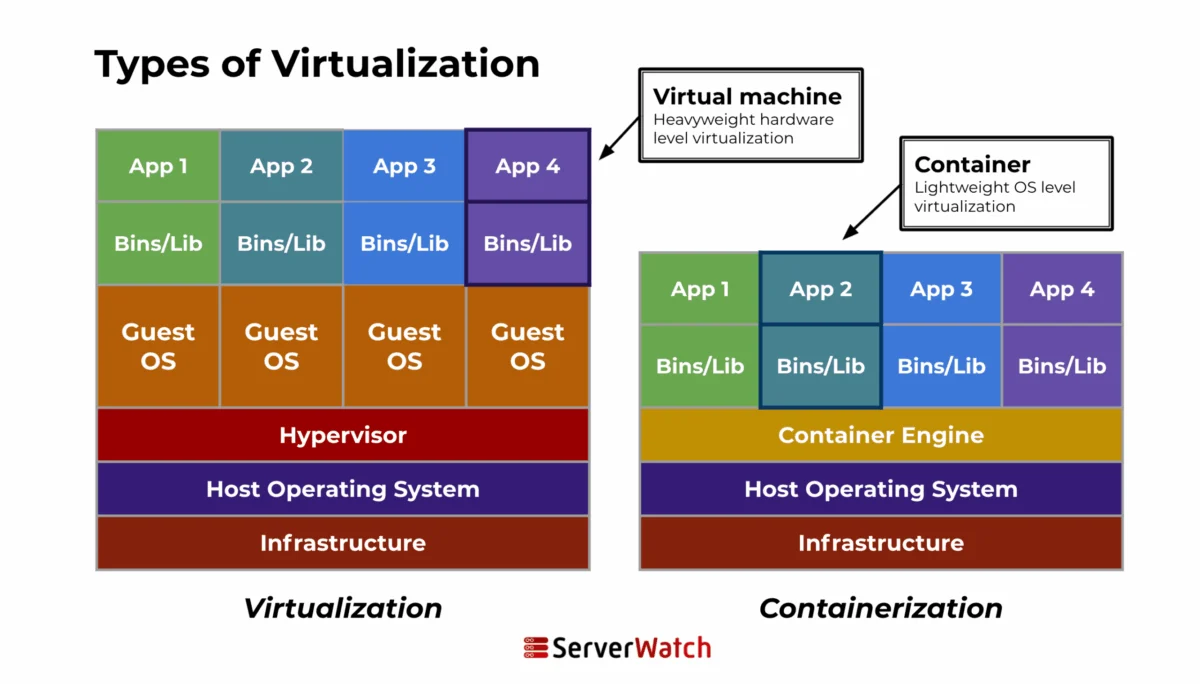

While both containers and virtual machines are utilized for isolating applications and their environments, they operate on fundamentally different principles. This distinction is crucial for understanding their respective strengths and optimal use cases.

| Feature | Containers | Virtual Machines (VMs) |
|---|---|---|
| Architecture | Containers share the host system’s kernel, isolating application processes from the host operating system. They do not require a full operating system for each instance, making them considerably more lightweight and faster to initiate than VMs. | Each VM includes not only the application and its dependencies but also a complete guest operating system. This guest OS runs on virtual hardware managed by a hypervisor, which itself resides on the host’s physical hardware. VMs are isolated from each other and the host system, offering a high degree of security and control. |
| Resource Management | Containers are more resource-efficient, consuming fewer system resources. This is due to their shared kernel architecture and the fact that they only need to package the application and its runtime environment. This leads to higher density and better hardware utilization. | The requirement to run a full OS within each VM results in higher resource consumption. This can lead to less efficient utilization of the underlying hardware, as each VM requires its own dedicated OS resources, including memory and CPU cycles. |

The efficiency of containers shows up in startup times and resource footprints. A container typically starts in well under a second to a few seconds because it only launches a process, whereas a VM must boot a full guest operating system, which commonly takes tens of seconds to minutes. In terms of memory, a container might add only tens of megabytes of overhead, while a VM often reserves gigabytes. This difference is a primary driver for the adoption of containers in microservices architectures and high-density cloud deployments.
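
To make the density argument concrete, here is a rough back-of-the-envelope sketch in Python. The host size, the reserved amount, and the per-instance figures are illustrative assumptions rather than measurements, and the function name `instances_per_host` is made up for this example.

```python
def instances_per_host(host_mem_mb: int, reserved_mb: int, per_instance_mb: int) -> int:
    """Return how many instances fit in host memory after reserving some for the host."""
    usable = host_mem_mb - reserved_mb
    if usable <= 0 or per_instance_mb <= 0:
        return 0
    return usable // per_instance_mb


# Hypothetical figures: a 64 GiB host with 4 GiB reserved for the host OS.
HOST_MB = 64 * 1024
RESERVED_MB = 4 * 1024

# Assumed footprints: about 50 MB per container, about 2 GiB per VM.
containers = instances_per_host(HOST_MB, RESERVED_MB, per_instance_mb=50)
vms = instances_per_host(HOST_MB, RESERVED_MB, per_instance_mb=2 * 1024)

print(f"containers: {containers}, VMs: {vms}")
```

Real workloads are also bounded by CPU, disk, and network, so memory alone gives only an upper bound on density.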

The Mechanics of Containerization: How It Works

Containerization involves encapsulating an application within a container, complete with its own operating environment. This process typically entails several key stages:

- Image Creation: Developers define the contents of a container image using a Dockerfile or similar definition. This file specifies the base image, dependencies, application code, and configuration settings.

- Image Building: A build tool, such as the one included with Docker, processes the definition file to build a layered image. Instructions that change the filesystem each add a new layer, and unchanged layers can be reused from cache, enabling efficient storage and transfer.

- Container Instantiation: When an application needs to run, a container is created from the image. The container runtime engine creates an isolated process environment based on the image layers and the host system’s kernel.

- Runtime Execution: The application within the container runs as an isolated process on the host operating system. It interacts with the host’s resources (CPU, memory, network) through the shared kernel but is prevented from accessing or interfering with other containers or the host system directly.

- Orchestration: For managing multiple containers, orchestration platforms like Kubernetes automate deployment, scaling, and management, ensuring the availability and reliability of containerized applications.
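
The layered build step described above can be illustrated with a small Python simulation. This is a simplified sketch, not how any particular build tool is implemented: it identifies a layer only by its parent and its instruction text, whereas real builders also take the contents of copied files into account. The function name `build_layers` and the instruction strings are assumptions for illustration.

```python
import hashlib


def build_layers(instructions: list[str], cache: dict[str, str]) -> tuple[list[str], int]:
    """Return (layer digests, number of cache hits) for a list of build instructions."""
    digests = []
    hits = 0
    parent = ""
    for instruction in instructions:
        # A layer's identity depends on its parent layer and the instruction text.
        key = f"{parent}\n{instruction}"
        if key in cache:
            hits += 1
        else:
            cache[key] = hashlib.sha256(key.encode()).hexdigest()
        parent = cache[key]
        digests.append(parent)
    return digests, hits


cache: dict[str, str] = {}
v1 = [
    "FROM python:3.12-slim",
    "COPY requirements.txt .",
    "RUN pip install -r requirements.txt",
    "COPY app.py .",
]
# Only the last instruction differs in the second build.
v2 = v1[:3] + ["COPY app_v2.py ."]

layers_v1, hits_v1 = build_layers(v1, cache)
layers_v2, hits_v2 = build_layers(v2, cache)
print(hits_v1, hits_v2)
```

Because the first three instructions are unchanged, the second build reuses three cached layers and only produces one new layer, which is why ordering instructions from least to most frequently changed tends to speed up rebuilds.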

Key Components of a Containerized Environment

A container is built upon several fundamental components that work in concert to provide isolation and portability:

- Container Image: A read-only template containing the application, its dependencies, libraries, and configuration. Images are built in layers, promoting efficiency.

- Container Runtime: Low-level software responsible for running containers, such as containerd or runc. It manages the container lifecycle, including creating, starting, and stopping containers, by interacting with the host operating system’s kernel to set up isolation.

- Container Engine: The user-facing software, such as Docker Engine or Podman, that accepts commands, pulls and builds images, and delegates the actual execution of containers to a container runtime.

- Namespaces: A Linux kernel feature that partitions kernel resources such that one set of processes sees one set of resources, while another set sees a different set. This is crucial for isolating containers from each other and the host.

- Control Groups (cgroups): Another Linux kernel feature that limits, accounts for, and isolates the resource usage (CPU, memory, disk I/O, network, etc.) of a collection of processes. This ensures that one container doesn’t consume all available resources.

- Union File Systems (UnionFS): A file system that allows multiple directory trees to be overlaid, forming a single coherent file system. This enables the layered nature of container images: each image layer is read-only, and a running container adds a thin writable layer on top.

- Container Orchestrator: Tools like Kubernetes or Docker Swarm that automate the deployment, scaling, and management of containerized applications across clusters of machines.
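
The overlay idea behind union file systems can be approximated in a few lines of Python using `collections.ChainMap`, which looks up keys in a stack of dictionaries and sends writes to the first one. This is only an analogy under simplifying assumptions: the layers are plain dictionaries with made-up paths and contents, and file deletion (which real overlay file systems handle with special marker entries) is not modeled.

```python
from collections import ChainMap

# Read-only image layers, lowest first (hypothetical file contents).
base_layer = {"/etc/os-release": "base", "/bin/sh": "shell"}
app_layer = {"/app/main.py": "print('v1')", "/etc/os-release": "patched"}


def new_container_view(*image_layers: dict) -> ChainMap:
    """Stack image layers under a fresh writable layer; upper layers shadow lower ones."""
    writable: dict = {}
    # ChainMap searches left to right, so the writable layer comes first,
    # followed by the image layers from newest to oldest.
    return ChainMap(writable, *reversed(image_layers))


c1 = new_container_view(base_layer, app_layer)
c2 = new_container_view(base_layer, app_layer)

# The write lands in c1's writable layer only; the shared image layers are untouched.
c1["/app/main.py"] = "print('hotfix')"

print(c1["/app/main.py"], c2["/app/main.py"], c1["/etc/os-release"])
```

Both containers share the same two image layers, which is the property that lets many containers start from one image without copying it.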

Diverse Container Use Cases in Modern Software Development

The versatility and efficiency of containers have made them indispensable across a wide spectrum of applications in the contemporary software ecosystem.

Microservices and Cloud-Native Architectures

Containers are well suited for microservices, an architectural style that structures an application as a collection of small, independently deployable services. Each microservice can be packaged in its own container, ensuring isolation, simplifying updates, and enabling independent scaling. In cloud-native environments, containers facilitate highly scalable and resilient applications that can be easily replicated, managed, and monitored for load balancing and high availability. Orchestration tools further enhance this by dynamically managing container resources, providing automated healing, and streamlining scaling in response to demand. For instance, a complex e-commerce platform might break down its functionality into dozens of microservices (user authentication, product catalog, order processing, payment gateway), each running in its own container and managed by Kubernetes.
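
The automated healing and scaling mentioned above usually rest on a reconciliation loop: the orchestrator compares the desired number of instances with what is actually running and acts on the difference. The following Python sketch shows the idea in miniature. The `Service` class and `reconcile` function are hypothetical names for this illustration and do not correspond to any orchestrator's API.

```python
from dataclasses import dataclass, field


@dataclass
class Service:
    name: str
    desired: int
    running: list[str] = field(default_factory=list)
    next_id: int = 0


def reconcile(service: Service) -> list[str]:
    """Bring the running set in line with the desired count; return the actions taken."""
    actions = []
    while len(service.running) < service.desired:
        instance = f"{service.name}-{service.next_id}"
        service.next_id += 1
        service.running.append(instance)
        actions.append(f"start {instance}")
    while len(service.running) > service.desired:
        instance = service.running.pop()
        actions.append(f"stop {instance}")
    return actions


catalog = Service("catalog", desired=3)
first = reconcile(catalog)            # starts three instances

catalog.running.remove("catalog-1")   # simulate a crashed container
healed = reconcile(catalog)           # starts one replacement

catalog.desired = 2                   # demand drops
scaled_down = reconcile(catalog)      # stops one instance

print(first, healed, scaled_down)
```

The same loop handles both crash recovery and scaling, because in each case the only input is the gap between the declared state and the observed state.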

Continuous Integration/Continuous Deployment (CI/CD) Pipelines

Containers are a natural fit for CI/CD pipelines, providing consistent environments from development through to production. This consistency is paramount for early issue detection and resolution. Containers can automate testing environments, ensuring that every code commit is rigorously tested in an environment that closely mirrors production. This reduces deployment failures stemming from environmental discrepancies and accelerates release cycles. A developer commits code, which triggers an automated build in a containerized environment. This container is then used for unit testing, integration testing, and finally, deployment to a staging environment, all within the same containerized framework.
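
The flow described above, where one image moves through successive stages, can be sketched as follows. The stage checks are stand-in lambdas rather than real test runners, and the image reference `shop/api:abc123` is a made-up example.

```python
from typing import Callable

# A stage is a name plus a check that receives the image reference under test.
Stage = tuple[str, Callable[[str], bool]]


def run_pipeline(image: str, stages: list[Stage]) -> list[str]:
    """Run each stage against the same image reference; stop at the first failure."""
    log = []
    for name, check in stages:
        ok = check(image)
        log.append(f"{name}: {'passed' if ok else 'failed'}")
        if not ok:
            break
    return log


stages: list[Stage] = [
    ("unit tests", lambda image: True),
    # Stand-in check: pretend integration tests only pass for this build.
    ("integration tests", lambda image: image.endswith(":abc123")),
    ("deploy to staging", lambda image: True),
]

good_run = run_pipeline("shop/api:abc123", stages)
bad_run = run_pipeline("shop/api:def456", stages)
print(good_run)
print(bad_run)
```

Passing the same image reference to every stage is the point of the sketch: what gets deployed to staging is exactly the artifact that was tested.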

Application Packaging and Distribution

By encapsulating an application and all its dependencies, containers simplify the packaging and distribution of software across diverse environments. The portability of containers means that applications can be deployed on various platforms and cloud environments without requiring modifications. Container registries, such as Docker Hub or Amazon ECR, store multiple versions of container images, enabling straightforward rollbacks to previous stable versions, thereby enhancing deployment reliability and stability. A software vendor can package their application as a container image, allowing customers to deploy it on-premises, on a private cloud, or a public cloud with minimal effort.
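
Rollback works because earlier image versions remain available in the registry. A minimal in-memory sketch of that idea is shown below. The `TagHistory` class and the version strings are assumptions for illustration and do not model the API of Docker Hub, Amazon ECR, or any other registry.

```python
from typing import Optional


class TagHistory:
    """Track which image version a deployment has pointed to over time."""

    def __init__(self) -> None:
        self._history: list[str] = []

    def deploy(self, version: str) -> None:
        """Record a newly deployed version."""
        self._history.append(version)

    def current(self) -> Optional[str]:
        """Return the version currently deployed, or None if nothing was deployed."""
        return self._history[-1] if self._history else None

    def rollback(self) -> Optional[str]:
        """Drop the latest version, if an earlier one exists, and return the current one."""
        if len(self._history) > 1:
            self._history.pop()
        return self.current()


releases = TagHistory()
releases.deploy("1.4.0")
releases.deploy("1.5.0")
restored = releases.rollback()
print(restored)
```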

The Compelling Benefits of Containerization

Containerization has emerged as a cornerstone of modern software development and deployment strategies, driven by a multitude of advantages.

- Portability: Applications packaged in containers run consistently across different environments, from developer laptops to production servers, eliminating the "it works on my machine" problem.

- Efficiency: Containers share the host OS kernel, resulting in lower resource overhead (CPU, memory) and faster startup times compared to VMs. This leads to higher server density and cost savings.

- Isolation: Containers provide process-level isolation, preventing applications from interfering with each other or the host system, enhancing security and stability.

- Scalability: Container orchestration platforms enable rapid scaling of applications by launching or terminating container instances based on demand.

- Agility: The ease of packaging, deploying, and managing containers accelerates development cycles and allows for quicker iteration and innovation.

- Consistency: Environments are identical across development, testing, staging, and production, reducing the risk of deployment errors.

- Resource Optimization: Efficient resource utilization through shared kernels and cgroups leads to better hardware performance and reduced infrastructure costs.

- Faster Deployment: Containers can be deployed in seconds or minutes, significantly reducing time-to-market for new features and applications.

- Simplified Dependency Management: All application dependencies are bundled within the container, eliminating conflicts with system-level libraries or other applications.

- Version Control: Container images can be versioned, allowing for easy rollbacks to previous stable states in case of issues.

- Microservices Enablement: Containers are the de facto standard for deploying and managing microservices architectures.

- Cost Savings: Reduced infrastructure needs, better resource utilization, and increased operational efficiency contribute to significant cost reductions.

- Enhanced Developer Productivity: Developers can focus on writing code without worrying about environment setup or deployment complexities.
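
As an illustration of the scalability point in the list above, a demand-based scaling decision can be written as a simple proportional rule: scale the replica count by the ratio of observed load to target load, then round up. The sketch below is similar in spirit to the rule documented for the Kubernetes Horizontal Pod Autoscaler, but the function name, default bounds, and sample numbers are assumptions for this example.

```python
import math


def desired_replicas(current: int, observed: float, target: float,
                     min_replicas: int = 1, max_replicas: int = 10) -> int:
    """Scale the replica count in proportion to observed load versus target load."""
    if target <= 0:
        raise ValueError("target must be positive")
    raw = math.ceil(current * observed / target)
    # Clamp the result to the configured bounds.
    return max(min_replicas, min(max_replicas, raw))


# Hypothetical readings: average CPU utilization in percent, with a 60% target.
scale_up = desired_replicas(current=4, observed=90, target=60)
scale_down = desired_replicas(current=4, observed=30, target=60)
capped = desired_replicas(current=4, observed=300, target=60)
print(scale_up, scale_down, capped)
```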

Navigating Challenges and Considerations in Containerization

Despite its significant advantages, the adoption of containerization is not without its challenges and requires careful consideration.

Security Concerns

While containers offer isolation, they are not inherently immune to security threats. Potential vulnerabilities include:

- Image Vulnerabilities: Container images may contain outdated software or malicious code if not properly scanned and managed. A significant challenge is ensuring the integrity of images pulled from public repositories.

- Kernel Exploits: Since containers share the host kernel, a vulnerability in the kernel could potentially affect all containers running on that host.

- Container Escapes: Malicious actors might attempt to exploit vulnerabilities to break out of a container’s isolation and gain access to the host system or other containers.

- Misconfigurations: Incorrectly configured container runtimes or orchestration platforms can expose sensitive data or create security gaps. For example, running containers with excessive privileges can negate the benefits of isolation.

- Secrets Management: Securely managing sensitive information like passwords and API keys within containers is a critical challenge.
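
Several of the risks listed above, such as excessive privileges, unpinned images, and secrets passed as plain environment variables, can be caught by a simple configuration check before deployment. The sketch below assumes a hypothetical configuration dictionary with the keys `image`, `privileged`, `user`, and `env`. It is not the schema of any particular tool, and the checks are deliberately coarse.

```python
def audit_container(config: dict) -> list[str]:
    """Return a list of findings for a container configuration dictionary."""
    findings = []
    if config.get("privileged", False):
        findings.append("runs in privileged mode")
    # Treat a missing user as root, since that is a common default.
    if config.get("user", "root") in ("root", "0"):
        findings.append("runs as root")
    image = config.get("image", "")
    if ":" not in image or image.endswith(":latest"):
        findings.append("image is not pinned to a specific version")
    for key in config.get("env", {}):
        if any(word in key.upper() for word in ("PASSWORD", "SECRET", "TOKEN", "API_KEY")):
            findings.append(f"possible secret in environment variable {key}")
    return findings


risky = {"image": "shop/api:latest", "privileged": True, "env": {"DB_PASSWORD": "x"}}
safer = {"image": "shop/api:1.5.0", "user": "1000", "env": {"LOG_LEVEL": "info"}}

risky_findings = audit_container(risky)
safer_findings = audit_container(safer)
print(risky_findings)
print(safer_findings)
```

A check like this complements image scanning and runtime monitoring but does not replace them.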

Complexity in Management

While containerization streamlines deployment, managing a large-scale containerized environment can introduce new complexities:

- Orchestration Complexity: Tools like Kubernetes, while powerful, have a steep learning curve and require specialized expertise for effective deployment, configuration, and maintenance.

- Networking: Managing network communication between numerous containers, potentially across multiple hosts, can be intricate.

- Storage: Persistent storage for stateful applications within containers requires careful planning and management.

- Monitoring and Logging: Aggregating logs and monitoring the performance of a distributed system of containers demands robust tooling and strategies.

- Skill Gap: Organizations may face a shortage of skilled personnel with expertise in container technologies and orchestration.
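
To give a sense of what log aggregation involves, the sketch below merges per-container log streams into a single timeline ordered by timestamp. It assumes each stream is already sorted and that entries are `(timestamp, container, message)` tuples. Both the format and the sample entries are invented for this example, and production systems would use dedicated logging tooling.

```python
import heapq

LogEntry = tuple[float, str, str]  # (timestamp, container name, message)


def merge_logs(*streams: list[LogEntry]) -> list[LogEntry]:
    """Merge per-container streams, each already sorted by timestamp, into one timeline."""
    return list(heapq.merge(*streams, key=lambda entry: entry[0]))


auth = [(1.0, "auth", "login ok"), (4.0, "auth", "token refreshed")]
orders = [(2.5, "orders", "order created"), (3.0, "orders", "payment requested")]

timeline = merge_logs(auth, orders)
for timestamp, container, message in timeline:
    print(f"{timestamp:>5} [{container}] {message}")
```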

Integration with Existing Systems

Incorporating containerization into established IT infrastructure presents its own set of hurdles:

- Legacy Systems: Integrating containerized applications with legacy systems that were not designed for containerization can be challenging and may require significant re-architecture.

- Cultural Shift: Adopting containers often necessitates a shift in organizational culture, moving towards DevOps practices and a more agile approach to software development and operations.

- Toolchain Integration: Existing CI/CD pipelines, monitoring tools, and security solutions need to be adapted or replaced to work effectively with containers.

- Data Migration: Migrating data from traditional databases or storage solutions to container-based storage can be a complex undertaking.

Leading Container Technologies Shaping the Industry

The containerization landscape is dominated by a few key technologies that have become industry standards.

Docker: The Pioneer of Modern Containerization

Docker has been instrumental in popularizing containerization, making it accessible and standardized. It provides a comprehensive suite of tools for building, shipping, and running containerized applications.

- Key Features: Docker Engine, Dockerfiles for image definition, Docker Hub for image registry, Docker Compose for defining multi-container applications.

- Benefits: Ease of use, large community support, extensive ecosystem of tools and integrations, efficient image building and layering.

Kubernetes: The Orchestration Powerhouse

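Kubernetes (K8s) is an open-source system for automating the deployment, scaling, and management of containerized applications. It works with container runtimes that implement its Container Runtime Interface (CRI), such as containerd and CRI-O. Images built with Docker still run on Kubernetes, although built-in support for Docker Engine as a runtime was removed in version 1.24.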

- Key Features: Automated rollouts and rollbacks, service discovery and load balancing, self-healing capabilities, secret and configuration management, automatic bin packing, batch execution.

- Benefits: Robust orchestration capabilities, high availability, scalability, declarative configuration, strong community and vendor support, industry standard for container management.
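
The "automatic bin packing" feature listed above refers to placing containers onto nodes according to their resource requests. The sketch below uses first-fit decreasing, a classic bin-packing heuristic, purely to illustrate the problem. It is not the algorithm the Kubernetes scheduler uses, and the service names, request sizes, and node capacity are hypothetical.

```python
def place_containers(requests_mb: dict[str, int], node_capacity_mb: int) -> list[list[str]]:
    """Assign containers to nodes using first-fit decreasing on memory requests."""
    nodes: list[list[str]] = []   # container names placed on each node
    free: list[int] = []          # remaining memory on each node
    # Place the largest requests first, which tends to waste less space.
    for name, size in sorted(requests_mb.items(), key=lambda item: item[1], reverse=True):
        if size > node_capacity_mb:
            raise ValueError(f"{name} does not fit on any node")
        for i, remaining in enumerate(free):
            if size <= remaining:
                nodes[i].append(name)
                free[i] -= size
                break
        else:
            # No existing node has room, so open a new one.
            nodes.append([name])
            free.append(node_capacity_mb - size)
    return nodes


requests = {"auth": 300, "catalog": 700, "orders": 500, "payments": 400, "search": 100}
placement = place_containers(requests, node_capacity_mb=1000)
print(placement)
```

With these sample numbers the five services fit on two nodes, whereas giving each service its own node would need five.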

Other Notable Container Technologies

Beyond Docker and Kubernetes, several other projects contribute to the container ecosystem:

- containerd: An industry-standard container runtime that emphasizes simplicity, robustness, and portability. It is often used as a component within larger systems like Docker and Kubernetes.

- Podman: A daemonless container engine for developing, managing, and running OCI (Open Container Initiative) containers on Linux systems. It offers a command-line interface compatible with Docker.

- LXC (Linux Containers): A foundational technology for OS-level virtualization on Linux, providing a way to run multiple isolated Linux systems on a single Linux host.

- rkt (Rocket): An open-source container runtime that aimed to provide a more secure and composable alternative to Docker. The project has been discontinued and is no longer maintained.

Future Trends in Containerization

The evolution of containerization is dynamic, with ongoing advancements integrating it more deeply with emerging technologies and adapting to new standards and regulations.

Integration with Emerging Technologies

Containerization is increasingly intersecting with cutting-edge fields, driving innovation:

- AI and Machine Learning: Containers provide reproducible and scalable environments for training and deploying AI/ML models. Frameworks like TensorFlow and PyTorch are often distributed and run within containers.

- Edge Computing: Lightweight containers are ideal for deploying applications on edge devices with limited resources, enabling distributed intelligence and processing closer to the data source.

- Serverless Computing: While seemingly contradictory, containers are playing a role in serverless platforms by providing the underlying execution environment for serverless functions, offering more control and flexibility.

- WebAssembly (Wasm): Wasm is emerging as a portable compilation target for languages like C++, Rust, and Go, and its integration with containerization promises even more secure and performant sandboxed applications.

Evolution of Container Standards and Regulations

As containerization matures, the development of robust standards and regulations is becoming critical:

- Security Standards: Increased focus on formalizing security best practices, including image signing, runtime security monitoring, and vulnerability management, is expected.

- Interoperability: Efforts continue to ensure interoperability between different container runtimes, orchestrators, and cloud platforms through standards such as those of the OCI (Open Container Initiative).

- Compliance and Governance: As containers become integral to enterprise infrastructure, regulatory compliance and robust governance frameworks for containerized environments will gain prominence.

- Sustainable Containerization: Research into optimizing container resource usage for reduced energy consumption and environmental impact is likely to grow.

The Bottom Line: The Indispensable Role of Containers

Containers have transformed software development and deployment by delivering efficiency, scalability, and consistency. As the technology evolves, containers are likely to become even more central, in part because they integrate readily with emerging technologies and adapt to changing standards and regulatory requirements. Organizations that adopt container technologies effectively, while addressing the security and management challenges involved, will be well placed to innovate.
