Amazon Web Services (AWS) has announced the immediate availability of Claude Opus 4.7, Anthropic’s most advanced large language model (LLM), on its fully managed service, Amazon Bedrock. The release gives enterprises access to a model designed to improve performance in agentic coding, long-running autonomous agents, and demanding professional knowledge work. Claude Opus 4.7 runs on Bedrock’s next-generation inference engine, which AWS describes as enterprise-grade infrastructure for production workloads, with an emphasis on availability, scalability, and data privacy.

Claude Opus 4.7: A New Benchmark in AI Intelligence

Anthropic’s Claude Opus 4.7 sits at the top of the company’s foundation model lineup and is engineered to handle the most challenging AI tasks with greater precision and stronger reasoning. Compared with its predecessor, Opus 4.6, the 4.7 model is better at navigating ambiguity, works through problems more thoroughly, and follows intricate instructions with greater fidelity. This makes it well suited to production workflows that demand high accuracy and cognitive depth.

The model’s advancements go beyond incremental gains and are meant to change how businesses use AI. In agentic coding, Opus 4.7 can generate more sophisticated and contextually appropriate code, understand complex architectural requirements, and debug more efficiently. In knowledge work, it can synthesize large amounts of information, extract nuanced insights, and generate comprehensive reports that previously required extensive human effort. Its enhanced visual understanding lets it interpret and act on visual data, which opens up new applications across industries. Its proficiency with long-running tasks means it can maintain context and consistency over extended interactions, a critical feature for persistent AI agents that manage complex projects or provide continuous support.

Anthropic, a leading AI safety and research company, has consistently focused on developing safe and steerable AI systems. Their "Constitutional AI" approach, which guides their models to align with human values and principles, is implicitly woven into Opus 4.7, aiming to provide not just powerful but also responsible AI capabilities to enterprises. This focus on safety and ethics resonates strongly with enterprises seeking to integrate AI responsibly into their core operations.

Amazon Bedrock’s Next-Generation Inference Engine: The Backbone of Performance

Claude Opus 4.7 on Amazon Bedrock runs on a newly developed inference engine built for demanding production workloads. Its key change is new scheduling and scaling logic that dynamically allocates capacity to incoming requests. According to AWS, this improves the availability of the service, particularly for steady-state workloads that need consistent performance, while still making room for services that are scaling up rapidly.

Beyond performance, the Bedrock inference engine prioritizes data security and privacy, a paramount concern for businesses deploying sensitive AI applications. It features "zero operator access," meaning that customer prompts and the resulting responses are never visible to either Anthropic or AWS operators. This design keeps sensitive proprietary data private, addressing a significant barrier to enterprise AI adoption. That level of data isolation matters most in industries that handle confidential information, regulatory compliance, and intellectual property.

A Strategic Partnership for AI Innovation

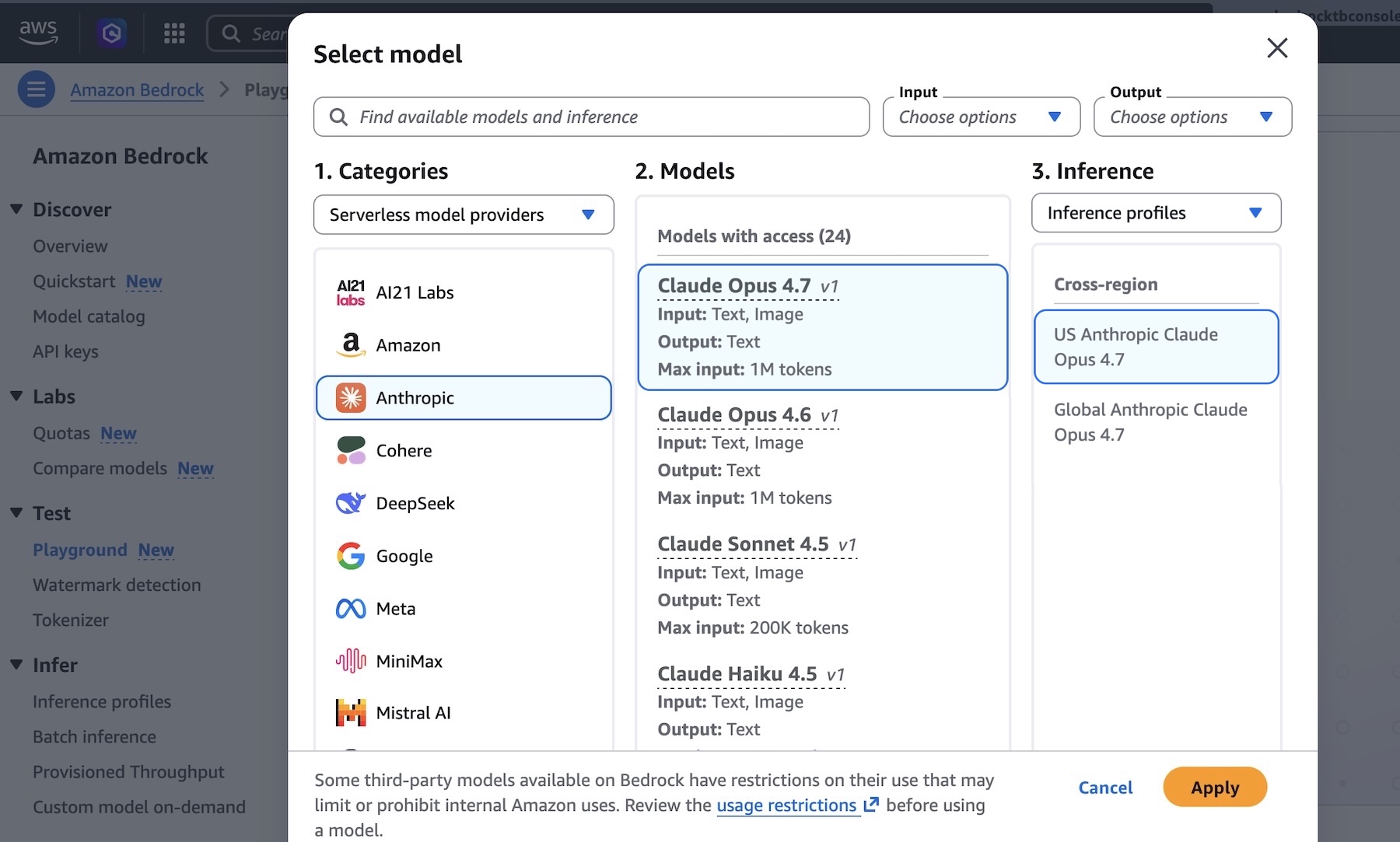

The collaboration between AWS and Anthropic is a testament to the growing trend of cloud providers partnering with leading AI model developers to democratize access to advanced AI. Amazon Bedrock, launched in 2023, was designed as a fully managed service that offers a choice of high-performing foundation models from leading AI companies, alongside Amazon’s own models, through a single API. This approach allows developers to easily experiment with various models, fine-tune them with their own data, and integrate them into their applications. The inclusion of Claude Opus 4.7 further solidifies Bedrock’s position as a premier platform for enterprise AI development.

This partnership is part of a broader chronology of innovation in the AI space. AWS has consistently invested in making cutting-edge technologies accessible, and its commitment to providing a diverse array of foundation models reflects the varied needs of its vast customer base. Anthropic, for its part, has emerged as a formidable player in the LLM landscape, competing with other major models by focusing on safety, steerability, and robust performance across a wide range of tasks. The continuous evolution of models like Claude, from earlier versions to Opus 4.7, highlights the rapid pace of advancement in AI and the imperative for platforms like Bedrock to keep pace.

Practical Implementation and Developer Accessibility

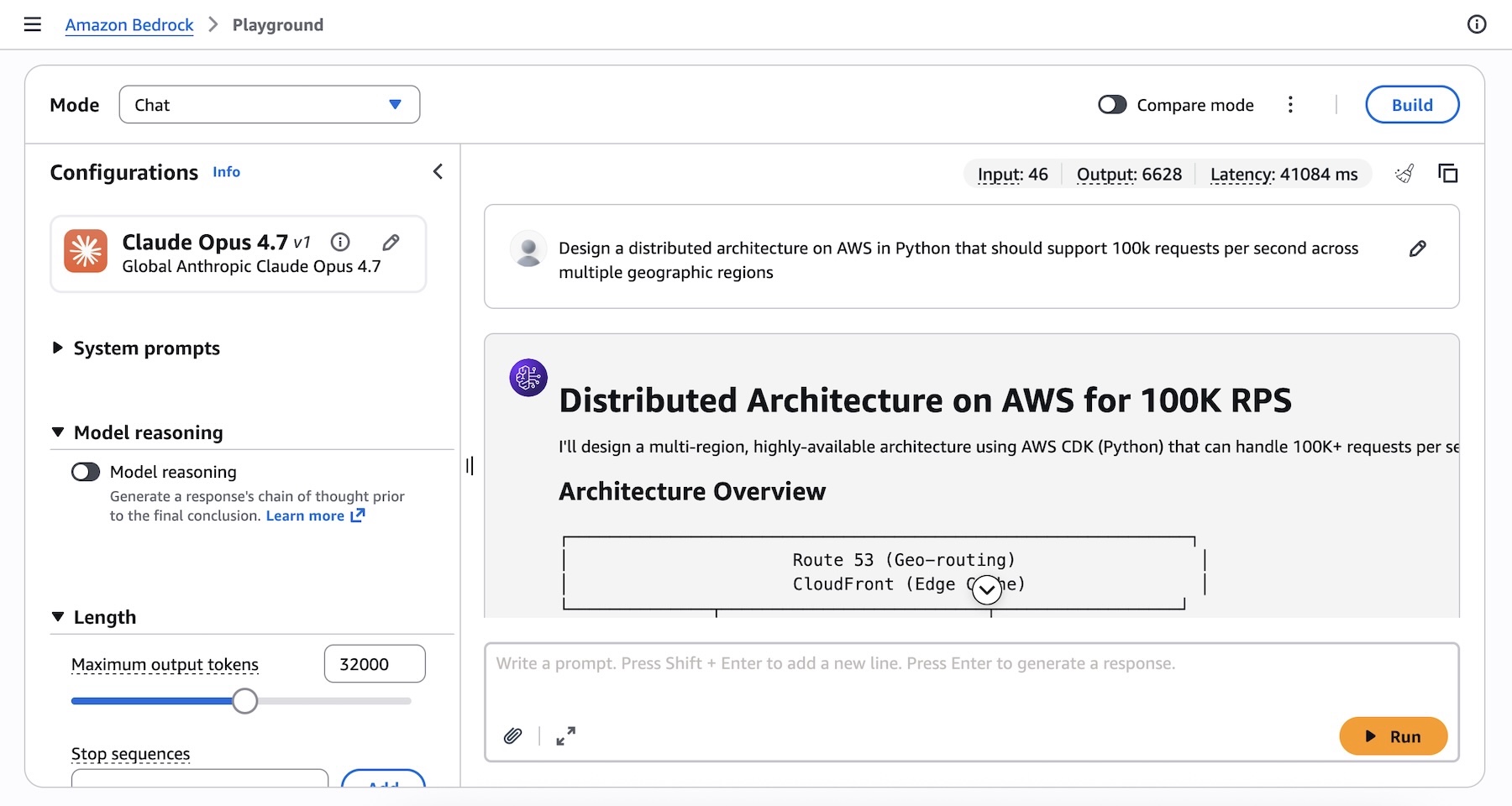

Developers and enterprises can readily access Claude Opus 4.7 through the Amazon Bedrock console. The "Playground" under the "Test" menu provides an intuitive interface for experimenting with the model, allowing users to test complex prompts and observe the model’s responses in real-time. For instance, a challenging prompt like "Design a distributed architecture on AWS in Python that should support 100k requests per second across multiple geographic regions" can be submitted, showcasing Opus 4.7’s advanced reasoning and code generation capabilities.

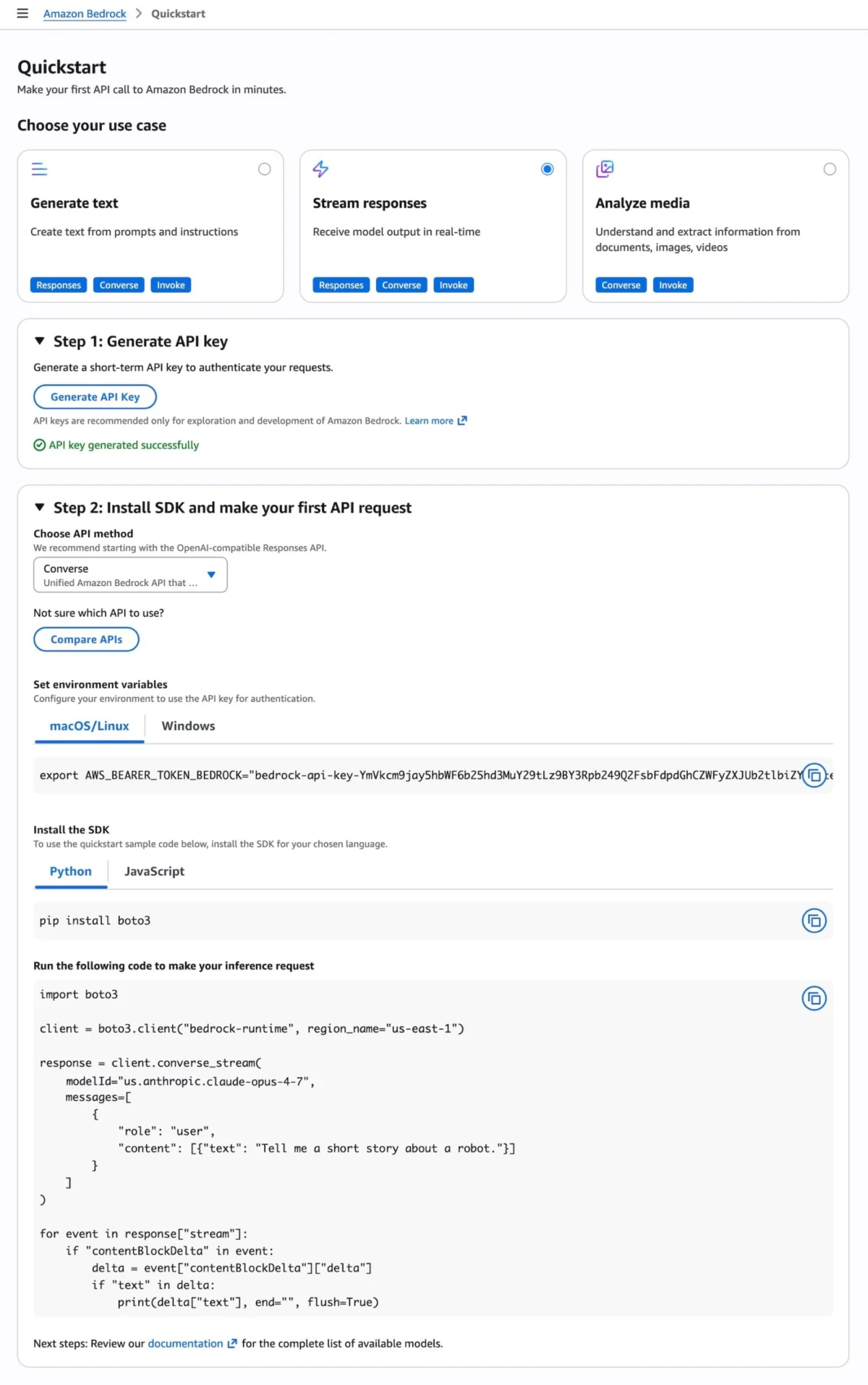

For programmatic access, developers have several options. The Anthropic Messages API can be invoked through the bedrock-runtime or bedrock-mantle endpoints using the anthropic[bedrock] SDK package, which handles SigV4 authentication automatically. Alternatively, the AWS Command Line Interface (AWS CLI) and the AWS SDKs (for Python, Java, Node.js, and other languages) can call the Invoke (InvokeModel) and Converse APIs directly on bedrock-runtime endpoints.
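As a rough illustration of the AWS SDK route, the sketch below builds a Converse request and sends it with boto3. The model ID is a placeholder and the helper names `build_converse_request` and `ask` are hypothetical; the real identifier for Claude Opus 4.7 should be taken from the Bedrock console. Sending the request assumes boto3 is installed and AWS credentials are configured.

```python
from typing import Any

# Placeholder only: look up the real Claude Opus 4.7 model ID in the Bedrock console.
MODEL_ID = "<claude-opus-4-7-model-id>"


def build_converse_request(prompt: str, model_id: str = MODEL_ID,
                           max_tokens: int = 1024) -> dict[str, Any]:
    """Build keyword arguments for the bedrock-runtime Converse operation."""
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def ask(prompt: str, region: str = "us-east-1") -> str:
    """Send one prompt and return the first text block of the reply."""
    import boto3  # imported lazily so the request builder works without boto3

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.converse(**build_converse_request(prompt))
    content = response["output"]["message"]["content"]
    # Skip any non-text blocks and return the first one that carries text.
    return next(block["text"] for block in content if "text" in block)
```

Keeping the request builder separate from the network call makes the payload easy to inspect or log before anything is sent.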

AWS has also simplified the onboarding process with a "Quickstart" option in the console, enabling users to generate short-term API keys for testing purposes and obtain sample code snippets tailored to their chosen API method, such as the OpenAI-compatible Responses API. This focus on developer experience underscores AWS’s commitment to lowering the barrier to entry for advanced AI development.

A notable feature introduced with Claude Opus 4.7 on Bedrock is "Adaptive Thinking." This capability lets Claude allocate its thinking token budget dynamically, based on the perceived complexity of each request. For simpler prompts the model spends fewer thinking tokens, while for highly complex problems it can dedicate more, producing more nuanced responses without the user having to tune token limits by hand. The approach aims to balance response quality against cost across diverse enterprise workloads.
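A request that opts into this behavior might look like the sketch below, which builds a Messages API body for InvokeModel. The `thinking` field and its `"adaptive"` value are an assumption based on Anthropic's Messages API conventions and are not confirmed by the announcement; the helper name `build_messages_body` is hypothetical. Check the current Bedrock and Anthropic documentation before relying on it.

```python
import json


def build_messages_body(prompt: str, max_tokens: int = 4096,
                        adaptive_thinking: bool = True) -> str:
    """Return a JSON request body in the Anthropic Messages format used on Bedrock."""
    body = {
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    if adaptive_thinking:
        # ASSUMPTION: field name and value are not taken from the announcement.
        # The idea is that no fixed thinking budget is passed, so the model
        # decides how much to think for each request.
        body["thinking"] = {"type": "adaptive"}
    return json.dumps(body)
```

The point of the sketch is what is absent: the caller sets only an overall output cap and leaves the thinking allocation to the model.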

Global Availability and Economic Considerations

Anthropic’s Claude Opus 4.7 model is immediately available in several key AWS regions: US East (N. Virginia), Asia Pacific (Tokyo), Europe (Ireland), and Europe (Stockholm). This initial rollout strategically covers major economic hubs, enabling a wide range of global enterprises to leverage the new capabilities. AWS has also indicated that additional regions will be supported in future updates, catering to its worldwide customer base.
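For developers wiring this into configuration, the launch regions map to the standard AWS region codes shown below. The helper `runtime_endpoint` is a hypothetical name, and the URL it returns follows the usual regional pattern for bedrock-runtime endpoints; in practice an AWS SDK resolves the endpoint from the region code alone.

```python
# Launch regions named in the announcement, keyed to their AWS region codes.
LAUNCH_REGIONS = {
    "US East (N. Virginia)": "us-east-1",
    "Asia Pacific (Tokyo)": "ap-northeast-1",
    "Europe (Ireland)": "eu-west-1",
    "Europe (Stockholm)": "eu-north-1",
}


def runtime_endpoint(region_name: str) -> str:
    """Return the bedrock-runtime endpoint URL for a launch region.

    Raises KeyError if the region is not in the launch list.
    """
    code = LAUNCH_REGIONS[region_name]
    return f"https://bedrock-runtime.{code}.amazonaws.com"
```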

Pricing for Claude Opus 4.7 on Amazon Bedrock follows the standard Bedrock model, which typically means usage-based charges for input and output tokens. This pay-as-you-go approach lets businesses scale their AI consumption with actual demand, without significant upfront investment. The cost of an advanced model like Opus 4.7 should be weighed against its ability to perform tasks that are either impossible or prohibitively expensive for less capable models, since that trade-off determines the return on investment.
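The token-based arithmetic can be sketched as follows. The function name `estimate_cost_usd` is hypothetical, and the per-million-token prices in the example are invented placeholders, not the actual rates for Opus 4.7.

```python
def estimate_cost_usd(input_tokens: int, output_tokens: int,
                      input_price_per_million: float,
                      output_price_per_million: float) -> float:
    """Estimate the cost of a workload billed separately per input and output token."""
    total = (input_tokens * input_price_per_million
             + output_tokens * output_price_per_million)
    return total / 1_000_000


# Placeholder prices for illustration only; substitute the published rates.
example = estimate_cost_usd(
    input_tokens=200_000,
    output_tokens=50_000,
    input_price_per_million=10.0,
    output_price_per_million=50.0,
)
print(f"Estimated cost: ${example:.2f}")
```

Because output tokens are usually priced higher than input tokens, workloads that generate long responses tend to be dominated by the output term.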

Broader Implications for the AI Landscape

The launch of Claude Opus 4.7 on Amazon Bedrock carries significant implications for the broader AI landscape. Firstly, it intensifies the competition among cloud providers in offering the most advanced and secure AI platforms. As AI capabilities become a crucial differentiator, the ability to host leading foundation models with robust infrastructure and privacy features becomes paramount. This move positions AWS as a strong contender in the race to become the preferred platform for enterprise AI.

Secondly, it further democratizes access to state-of-the-art AI. By making Opus 4.7 available through a managed service like Bedrock, AWS removes much of the operational complexity associated with deploying and scaling large language models. This allows a wider range of organizations, from startups to large enterprises, to harness the power of advanced AI without needing specialized infrastructure or deep ML operations expertise.

Finally, the emphasis on "zero operator access" and Anthropic’s commitment to "Constitutional AI" underscores the increasing importance of responsible AI development and deployment. As AI models become more powerful and integrated into critical business functions, trust, security, and ethical considerations are no longer optional but essential. This launch sends a clear signal that both AWS and Anthropic are prioritizing these aspects, setting a higher standard for the industry.

Statements and Reactions

The original announcement did not include executive statements, so the quotations below are hypothetical illustrations of the messaging each company typically uses, not actual remarks. An AWS executive might highlight the company’s commitment to giving customers innovative AI tools and emphasize Bedrock’s role in providing choice, security, and scalability for enterprise workloads. "Our goal with Amazon Bedrock is to accelerate AI innovation for every organization," an AWS spokesperson might state. "The integration of Anthropic’s Claude Opus 4.7, backed by our next-gen inference engine and its unmatched privacy features, delivers a transformative capability for our customers tackling their most complex challenges."

Similarly, a representative from Anthropic would likely express enthusiasm for making their flagship model accessible to AWS’s extensive customer base. "Claude Opus 4.7 represents a significant leap in AI intelligence, and we are thrilled to bring its advanced capabilities to enterprises via Amazon Bedrock," an Anthropic spokesperson could comment. "This collaboration underscores our shared vision of delivering powerful, safe, and steerable AI that drives real-world impact across coding, professional services, and agentic workflows."

The developer community and enterprise users are expected to react positively to the enhanced capabilities and robust infrastructure. Early adopters will likely experiment with the model for complex automation, advanced content generation, and sophisticated decision-support systems, providing valuable feedback that will further shape the future development of AI tools on Bedrock.

In conclusion, the availability of Anthropic’s Claude Opus 4.7 on Amazon Bedrock represents a pivotal moment in the enterprise AI landscape. By combining one of the most intelligent LLMs with a highly secure, scalable, and developer-friendly managed service, AWS is setting a new standard for what businesses can achieve with artificial intelligence. The focus on complex problem-solving, data privacy, and ease of integration positions this offering as a critical tool for organizations aiming to innovate and gain a competitive edge in an increasingly AI-driven world. The continuous evolution of such partnerships and technologies promises an exciting future for AI applications across every industry.