Amazon Web Services (AWS) has announced the immediate availability of Amazon Redshift RG instances, a groundbreaking new instance family designed to significantly enhance the performance and cost-efficiency of cloud data warehousing. Powered by AWS Graviton processors, these new instances promise to accelerate data warehouse workloads by up to 2.2 times compared to previous-generation RA3 instances, while simultaneously offering a 30% lower price per vCPU. This strategic introduction addresses the escalating demands of modern analytics, particularly the exponential growth in query volumes driven by business intelligence (BI) tools, extract, transform, load (ETL) pipelines, and the burgeoning field of autonomous AI agents.

The Evolution of Amazon Redshift: A Decade of Data Innovation

Since its inception in 2013, Amazon Redshift has been a pivotal force in democratizing data warehousing, offering the full capabilities of an enterprise-grade data warehouse in the cloud at a fraction of the cost associated with traditional on-premises solutions. Over the past decade, AWS has consistently iterated on Redshift’s architecture, pushing the boundaries of performance and efficiency. Early generations focused on dense compute, followed by the introduction of Amazon RA3 instances, which decoupled compute and storage, allowing independent scaling for optimized resource utilization. More recently, Amazon Redshift Serverless emerged, providing an on-demand, auto-scaling option that eliminates the need for cluster management, further simplifying operations for customers. Each architectural leap has aimed to make data queries faster, cheaper, and more efficient, adapting to the ever-increasing scale and complexity of data.

The data landscape has undergone a profound transformation, moving beyond a singular focus on structured data warehouses. Organizations increasingly manage vast, diverse datasets, often stored cost-effectively in data lakes like Amazon Simple Storage Service (Amazon S3). This dual-system approach necessitates seamless integration and efficient querying across both structured data in warehouses and semi-structured or unstructured data in data lakes. Furthermore, the advent of sophisticated AI agents has introduced a new paradigm of data consumption, where automated systems query data warehouses at a scale and frequency that far surpass typical human usage, creating unprecedented demands on operational costs and system performance.

In response to these evolving challenges, Amazon Redshift has been on a continuous improvement trajectory. As recently as March 2026, AWS rolled out significant performance enhancements that boosted new query speeds by up to seven times. These improvements were specifically targeted at reducing response times for low-latency SQL queries, critical for near-real-time analytics applications, dynamic BI dashboards, efficient ETL processes, and the responsive operation of goal-seeking AI agents. The introduction of RG instances builds directly on this foundation, representing the next major stride in Redshift’s commitment to handling any workload, whether initiated by human analysts or sophisticated AI.

Graviton Powering the Next Generation of Analytics

At the heart of the new Amazon Redshift RG instances lies AWS Graviton technology. Graviton processors are custom-designed, ARM-based central processing units (CPUs) developed by AWS, offering a compelling blend of superior performance and energy efficiency. AWS began its Graviton journey in 2018 with the introduction of Graviton1, followed by Graviton2 and the latest Graviton3, which power a growing array of AWS services, including Amazon EC2, AWS Lambda, and now, Amazon Redshift. This in-house chip development strategy provides AWS with greater control over the silicon, allowing for deep optimization across its cloud infrastructure and services.

The adoption of Graviton processors for Redshift RG instances is a significant strategic move. By leveraging these custom-built chips, AWS can deliver substantial performance gains for data warehousing workloads. Graviton processors are engineered to handle demanding computational tasks with greater efficiency, translating directly into faster query execution and improved throughput for Redshift clusters. This efficiency also contributes to the lower operational costs, as the processors deliver more performance per watt, ultimately reducing the price per vCPU for customers. This integration underscores AWS’s commitment to vertical integration and optimizing its hardware and software stack to provide unparalleled cloud services.

Unpacking the Performance and Cost Advantages

The Amazon Redshift RG instances stand out with their impressive performance and cost metrics. AWS states that these new instances can run data warehouse workloads up to 2.2 times faster than the preceding RA3 instances. This acceleration is crucial for applications requiring rapid data insights, such as real-time dashboards that need to update instantaneously or complex analytical queries that process massive datasets. Furthermore, the 30% lower price per vCPU directly translates into significant cost savings for organizations, especially those with large-scale or bursty analytical workloads.

To illustrate the upgrade path and benefits, AWS provides a clear comparison for customers:

| Current RA3 Instance | Recommended RG instance | vCPU | Memory (GB) | Primary Use Case |

|---|---|---|---|---|

ra3.xlplus |

rg.xlarge |

4 | 32 | Small cluster departmental analytics |

ra3.4xlarge |

rg.4xlarge |

12 – 16 (1.33:1 ratio) | 96 GB – 128 GB (1.33:1 ratio) | Standard production workloads, medium data volumes |

This table indicates a direct upgrade path, where rg.xlarge instances replace ra3.xlplus for smaller analytical needs, and rg.4xlarge instances are recommended for standard production workloads, offering an increase in both vCPU count and memory. The improved resource allocation, coupled with Graviton’s inherent efficiencies, ensures that customers receive more processing power for their investment. The performance uplift is not merely incremental; it represents a generational leap, enabling businesses to derive insights faster and at a reduced operational expense, optimizing their total cost of ownership (TCO) for analytics infrastructure.

Seamless Data Lake Integration and Cost Optimization

One of the most significant advancements introduced with Amazon Redshift RG instances is their integrated data lake query engine. Traditionally, querying data stored in data lakes (e.g., Apache Iceberg, Apache Parquet files on Amazon S3) from Redshift often involved using Amazon Redshift Spectrum, a serverless query service that allowed Redshift to execute SQL queries directly against data in S3 without loading it into the data warehouse. While effective, Spectrum incurred separate per-terabyte scanning charges and operated as an external service.

With RG instances, Redshift now executes data lake queries directly on the cluster nodes – the same compute resources that process traditional data warehouse workloads. This profound architectural shift brings several transformative benefits:

- Enhanced Performance: The integrated engine delivers substantially faster query execution for data lake formats. Specifically, it offers performance up to 2.4 times faster than RA3 for Apache Iceberg and up to 1.5 times faster for Apache Parquet. This speed is critical for modern data strategies that heavily rely on combining structured and unstructured data for comprehensive analysis.

- Elimination of Spectrum Fees: By processing data lake queries within the Redshift cluster, the previous Amazon Redshift Spectrum scanning fees ($5 per terabyte) are entirely removed. This represents a direct and substantial cost saving for customers who frequently query data in their S3 data lakes, simplifying billing and significantly reducing overall analytics costs.

- Simplified Architecture and Operations: The integrated approach means a single system and engine can now efficiently query both warehouse tables and Amazon S3 data lakes. This eliminates the operational overhead of managing separate query services and provides a unified, consistent experience for data professionals.

- Enhanced Security and Compliance: Data lake queries now stay entirely within the customer’s Virtual Private Cloud (VPC) boundary and leverage existing AWS Identity and Access Management (IAM) roles. This ensures that data access and processing adhere to established security policies and compliance requirements, without data having to traverse external services.

This convergence of data warehousing and data lake analytics within a single, high-performance engine marks a pivotal moment for data architecture. It empowers organizations to build more agile and comprehensive analytics solutions, breaking down traditional data silos and fostering a more unified approach to data governance and analysis.

Addressing the Demands of AI and Agentic Workloads

The rise of artificial intelligence, particularly generative AI and machine learning, is profoundly reshaping the demands placed on data infrastructure. AI agents, whether used for autonomous decision-making, predictive analytics, or natural language processing, require access to vast quantities of data, often with extremely low-latency requirements and at scales that far exceed human interaction. These agents query data warehouses continuously and voluminously, making efficiency and cost critical factors.

Amazon Redshift RG instances are specifically designed to meet these burgeoning demands. The combination of significantly faster query performance and a lower price per vCPU makes them ideally suited for the high query volumes characteristic of AI-driven workloads. By providing a more performant and cost-effective foundation for data access, Redshift RG instances enable organizations to deploy and scale AI applications without incurring spiraling operational costs. This capability is vital for businesses looking to leverage AI for competitive advantage, ensuring that their data infrastructure can keep pace with the computational intensity of advanced AI models. The efficiency gains offered by Graviton processors contribute to a more sustainable and scalable infrastructure for the future of AI.

Deployment and Migration Pathways

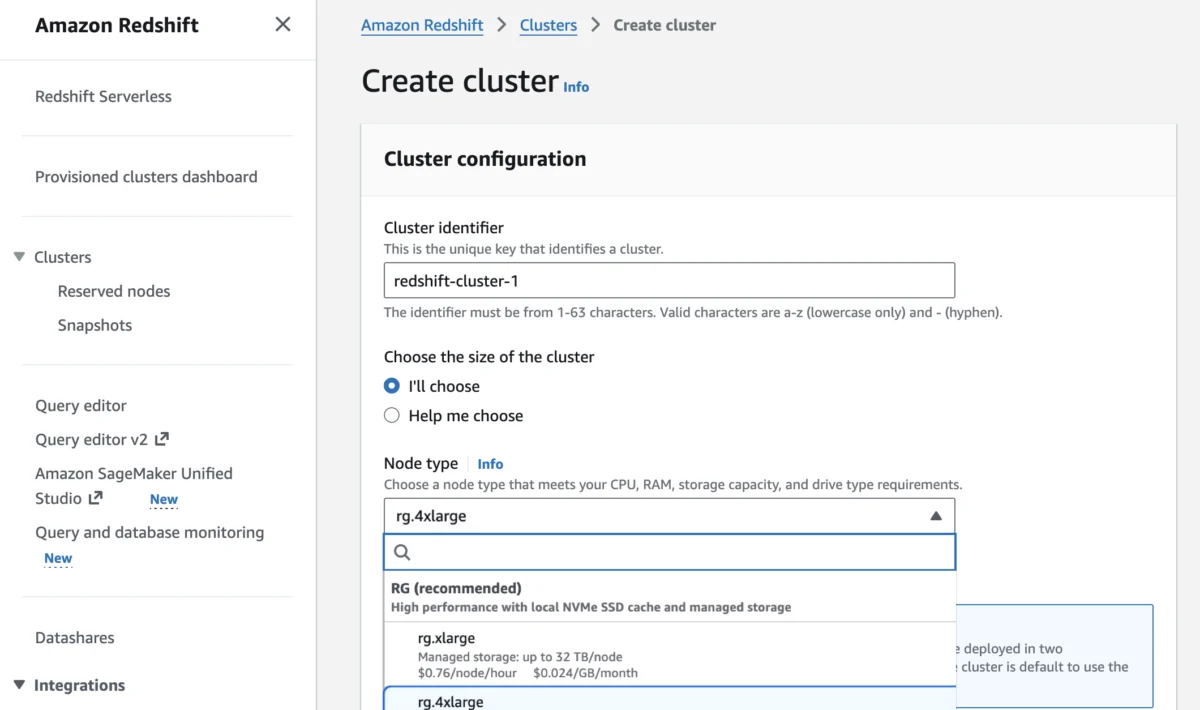

Customers interested in leveraging the power of Amazon Redshift RG instances can get started through multiple familiar AWS interfaces: the AWS Management Console, the AWS Command Line Interface (AWS CLI), or the AWS API. For new deployments, RG instances can be selected directly during the cluster creation process within the Amazon Redshift console, offering a straightforward setup experience.

For existing Redshift users, AWS has streamlined the migration process from previous-generation instances to RG instances. The migration paths are designed for optimality, allowing customers to estimate costs, validate compatibility, and automate the execution of the migration. Crucially, this transition is engineered to be seamless from an application perspective. External tables, schemas, and existing query syntax—including any Amazon Redshift Spectrum queries—remain unchanged. There is no requirement to recreate external tables or modify existing application code, minimizing disruption and accelerating the adoption of the new instance family. The Redshift Management Guide provides comprehensive documentation for managing cluster considerations during this transition.

To assist with financial planning, AWS encourages customers to utilize the AWS Pricing Calculator with their specific workload patterns to estimate potential savings with RG instances. This tool allows for tailored cost analysis, helping businesses understand the economic benefits of migrating or deploying new clusters with the new instance type.

Amazon Redshift RG instances are broadly available across a wide array of AWS Regions, including US East (N. Virginia, Ohio), US West (N. California, Oregon), Asia Pacific (Hong Kong, Hyderabad, Jakarta, Malaysia, Melbourne, Mumbai, Osaka, Seoul, Singapore, Sydney, Taiwan, Tokyo), Canada (Central), Europe (Frankfurt, Ireland, Milan, London, Paris, Spain, Stockholm), and South America (São Paulo). For specific regional availability and future expansion plans, customers can consult the AWS Capabilities by Region page. For Redshift Provisioned, customers have the flexibility to choose between On-Demand Instances with hourly billing and no commitments, or opt for Reserved Instances for additional cost savings, catering to diverse operational and financial strategies. Detailed pricing information is available on the Amazon Redshift Pricing page.

Industry Implications and Future Outlook

The introduction of Amazon Redshift RG instances, powered by AWS Graviton and featuring an integrated data lake query engine, carries significant implications for the broader data analytics market and enterprise data strategies. This move reinforces AWS’s leadership in the cloud data warehousing space and signals a clear direction for the future of analytics.

Firstly, it intensifies the competition among cloud data warehouse providers. By offering a compelling combination of performance, cost efficiency, and simplified data lake integration, AWS sets a new benchmark that competitors will need to match. The custom Graviton silicon provides AWS with a unique advantage in optimizing performance-per-cost for its services.

Secondly, it accelerates the trend towards the convergence of data warehousing and data lakes. The ability to seamlessly query both structured warehouse data and diverse data lake formats from a single, high-performance engine within the Redshift cluster simplifies complex architectures and reduces the need for multiple, specialized tools. This unified approach can lead to faster data-to-insight cycles and more robust, comprehensive analytics. Data architects can now design more streamlined solutions, reducing complexity and improving data governance across disparate data types.

Finally, the explicit focus on supporting AI and agentic workloads highlights the critical role of data infrastructure in the age of artificial intelligence. As AI models become more sophisticated and pervasive, the underlying data platforms must evolve to handle unprecedented scale and real-time demands. Redshift RG instances are positioned to be a foundational component for enterprises building AI-driven applications, ensuring that data access does not become a bottleneck for innovation. This strategic alignment with AI trends ensures Redshift remains relevant and crucial for the next wave of technological advancement.

AWS encourages customers to explore the capabilities of RG instances through the Redshift console and provide feedback via AWS re:Post for Amazon Redshift or their usual AWS Support contacts. This iterative approach to product development ensures that Redshift continues to evolve in direct response to customer needs and industry shifts, solidifying its position as a cornerstone of cloud-based data analytics. The continuous innovation demonstrated by AWS, culminating in the RG instances, underscores a commitment to empowering businesses with cutting-edge tools to unlock the full potential of their data in an increasingly data-intensive and AI-driven world.