Amazon Web Services (AWS) has announced the immediate availability of Anthropic’s Claude Opus 4.7 model within its Amazon Bedrock service, marking a significant enhancement in the platform’s offering of advanced foundation models for enterprise customers. This integration positions Claude Opus 4.7, Anthropic’s most intelligent model to date, to drive substantial improvements across critical enterprise workflows, including sophisticated coding tasks, long-running autonomous agents, and various forms of professional knowledge work. The announcement, made through the AWS News Blog, underscores AWS’s continued commitment to providing cutting-edge artificial intelligence capabilities with enterprise-grade infrastructure, security, and scalability.

Background and Context: The Bedrock-Anthropic Partnership

The integration of Claude Opus 4.7 into Amazon Bedrock is the latest development in a strategic partnership between AWS and Anthropic, a leading AI safety and research company. Amazon Bedrock, launched in 2023, is a fully managed service that makes foundation models (FMs) from Amazon and leading AI startups accessible via an API. It offers a serverless experience, allowing developers to experiment with, customize, and deploy FMs without managing underlying infrastructure. This approach has broadened access to powerful AI models, enabling enterprises of all sizes to integrate generative AI into their applications.

Anthropic, known for its "constitutional AI" approach that prioritizes safety and ethical alignment, has been a cornerstone partner for Bedrock. Its Claude family of models—including Claude Instant, Claude Sonnet, and the flagship Claude Opus—has gained traction for its strong reasoning abilities, extensive context windows, and commitment to responsible AI development. The introduction of Claude Opus 4.7 represents the pinnacle of Anthropic’s current model lineage, designed to tackle the most complex and nuanced AI challenges faced by businesses today. This collaboration highlights a broader trend in the tech industry where cloud providers are forging deep alliances with AI model developers to offer a comprehensive, secure, and performant AI ecosystem.

Unpacking Claude Opus 4.7: A Leap in AI Intelligence

Claude Opus 4.7 is presented as a generational leap, building upon its predecessors to deliver superior performance across a spectrum of demanding tasks. According to Anthropic, the model exhibits marked improvements in several key areas crucial for enterprise operations:

- Agentic Coding: For developers and engineering teams, Opus 4.7 offers enhanced capabilities in generating, debugging, and refining code. This goes beyond simple code snippets, enabling the model to assist in complex software architecture design, understanding intricate codebases, and automating significant portions of the development lifecycle. Its ability to handle ambiguity and follow precise instructions makes it a powerful co-pilot for high-stakes coding projects, potentially accelerating development cycles and reducing error rates.

- Knowledge Work and Problem Solving: In professional settings, Opus 4.7 excels at knowledge-intensive tasks. This includes synthesizing information from vast datasets, generating comprehensive reports, assisting with strategic decision-making, and performing intricate data analysis. Its improved thoroughness in problem-solving and capacity to work through ambiguous instructions mean it can handle more open-ended queries and deliver more robust, nuanced outputs compared to previous versions. This directly translates to higher productivity for analysts, researchers, and consultants.

- Visual Understanding: While the announcement primarily focuses on text-based applications, the mention of "visual understanding" suggests an expanded multimodal capability. This could imply a stronger ability to interpret and reason about information presented in diagrams, charts, and other visual formats, further enhancing its utility in data analysis, scientific research, and design-related fields.

- Long-Running Tasks: A common challenge with earlier AI models was their performance degradation over extended, multi-turn conversations or complex, sequential tasks. Opus 4.7 is specifically designed to manage long-running agents and maintain context more effectively over prolonged interactions. This is critical for applications like customer service chatbots that handle intricate queries, project management assistants that track multiple dependencies, or research agents that compile extensive information over time, ensuring consistency and coherence throughout the process.

Anthropic emphasizes that Opus 4.7 is more thorough in its problem-solving and follows instructions with greater precision. This enhanced fidelity to user prompts, coupled with a better ability to navigate ambiguity, is paramount for enterprise applications where accuracy and reliability are non-negotiable. Although Opus 4.7 is an upgrade from Opus 4.6, users are advised to review Anthropic’s prompting guide, as minor adjustments to prompting strategies and "harness tweaks" might be necessary to fully leverage the new model’s advanced capabilities.

The Power Behind the Model: Amazon Bedrock’s Next-Gen Inference Engine

A critical component enabling the advanced performance of Claude Opus 4.7 is Amazon Bedrock’s next-generation inference engine. This engine is not merely a pipeline for model execution; it represents a significant engineering achievement designed specifically for enterprise-grade production workloads. Its core innovations address common challenges in deploying and scaling large language models (LLMs):

- Dynamic Scheduling and Scaling Logic: The new inference engine features brand-new scheduling and scaling logic that dynamically allocates capacity to incoming requests. This intelligent resource management improves availability, particularly for steady-state workloads that require consistent performance, while simultaneously ensuring that the service can rapidly scale to accommodate sudden spikes in demand. This elasticity is crucial for businesses experiencing fluctuating usage patterns or those launching new AI-powered features.

- Enhanced Availability: By optimizing resource allocation and management, the engine significantly improves the overall availability of models. This translates to reduced latency, fewer service interruptions, and a more reliable experience for end-users and applications relying on Bedrock. For enterprises, high availability is non-negotiable, directly impacting operational efficiency and customer satisfaction.

- Zero Operator Access and Data Privacy: Perhaps one of the most compelling features for enterprise adoption is the "zero operator access" guarantee. This means that customer prompts and responses processed through Bedrock’s inference engine are never visible to Anthropic or AWS operators. This stringent privacy control is vital for organizations handling sensitive data, intellectual property, or regulatory compliance requirements. It addresses a primary concern many businesses have about cloud-based AI services, fostering greater trust and enabling the use of generative AI in mission-critical applications where data confidentiality is paramount. This commitment to privacy aligns with AWS’s broader security posture and compliance certifications, making Bedrock a highly attractive option for regulated industries.

Enterprise Adoption and Use Cases

The release of Claude Opus 4.7 on Amazon Bedrock has immediate and far-reaching implications for enterprise AI adoption. Businesses across various sectors can leverage its advanced capabilities to:

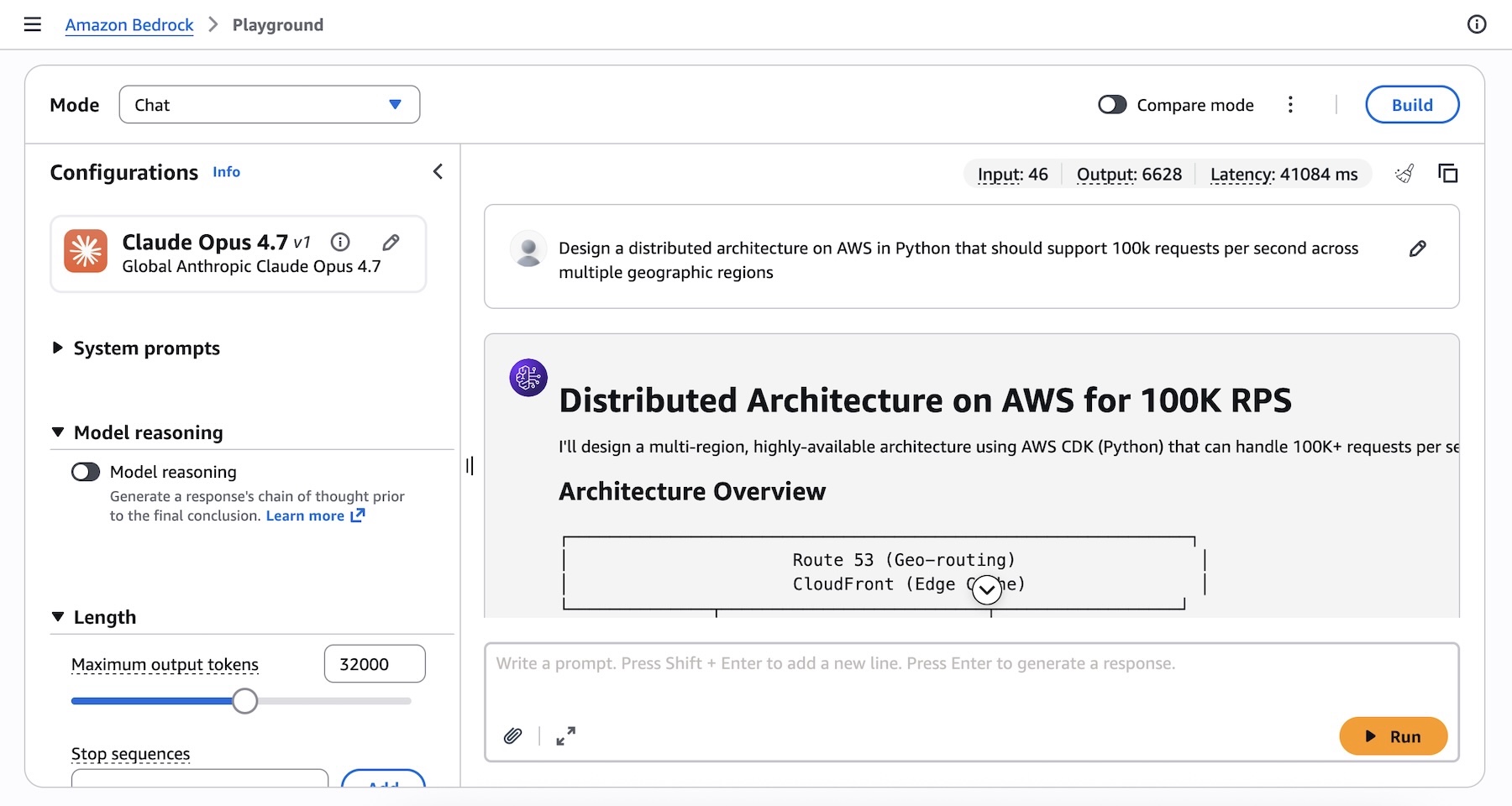

- Automate Complex Software Development: From generating infrastructure-as-code scripts for AWS environments to developing sophisticated application logic in Python, Opus 4.7 can serve as an invaluable assistant to software engineers. The example provided in the announcement, where Opus 4.7 designs a distributed AWS architecture for 100,000 requests per second across multiple regions, showcases its capacity for high-level architectural thinking and code generation. This capability can drastically reduce the time spent on boilerplate code, system design, and even code reviews.

- Enhance Data Analysis and Business Intelligence: Analysts can use Opus 4.7 to process and derive insights from unstructured data, generate natural language summaries of complex datasets, and even suggest optimal data visualization techniques. Its improved ability to work through ambiguity makes it adept at handling messy, real-world data.

- Power Next-Generation Customer Service and Support: Long-running agents powered by Opus 4.7 can provide more comprehensive and personalized customer support, maintaining context across extended interactions and resolving complex queries without human intervention. This can lead to improved customer satisfaction and reduced operational costs.

- Streamline Content Creation and Knowledge Management: Marketing teams can generate high-quality content, researchers can synthesize vast amounts of information, and legal professionals can draft documents with greater efficiency and accuracy. Opus 4.7’s thoroughness and precision make it suitable for tasks requiring high linguistic fidelity.

- Accelerate Scientific Research and Development: In fields like biotechnology or materials science, Opus 4.7 can assist in hypothesis generation, experimental design, and the interpretation of research findings, potentially speeding up discovery cycles.

Technical Accessibility and Developer Experience

AWS has ensured that Claude Opus 4.7 is readily accessible to developers and enterprises through multiple interfaces, catering to various technical preferences and integration needs.

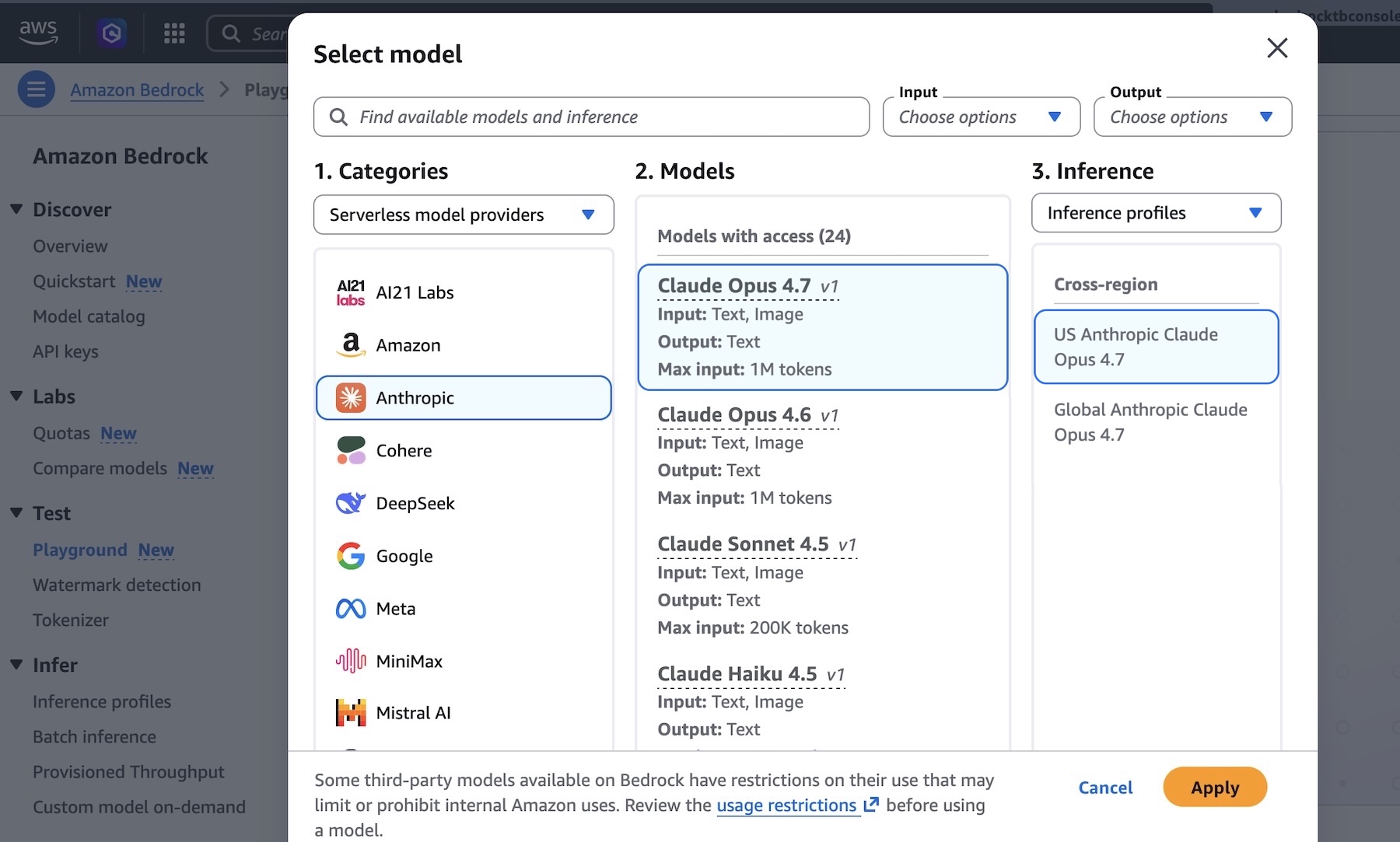

- Amazon Bedrock Console: The most straightforward way to begin experimenting with Opus 4.7 is through the Amazon Bedrock console. Users can navigate to the "Playground" under the "Test" menu, select "Claude Opus 4.7," and immediately begin testing complex prompts. This interactive environment allows for quick iteration and understanding of the model’s capabilities.

- Programmatic Access: For deeper integration into applications, developers can access the model programmatically. This includes using the Anthropic Messages API, which can be called via the

bedrock-runtimeendpoints directly or through theanthropic[bedrock]SDK package for a streamlined experience. The Messages API offers a robust interface for constructing conversational AI flows and managing model interactions. - AWS SDKs and CLI: For those already integrated into the AWS ecosystem, the model can also be invoked using the standard AWS Command Line Interface (AWS CLI) and AWS SDKs (e.g., Python, Java, Node.js). The

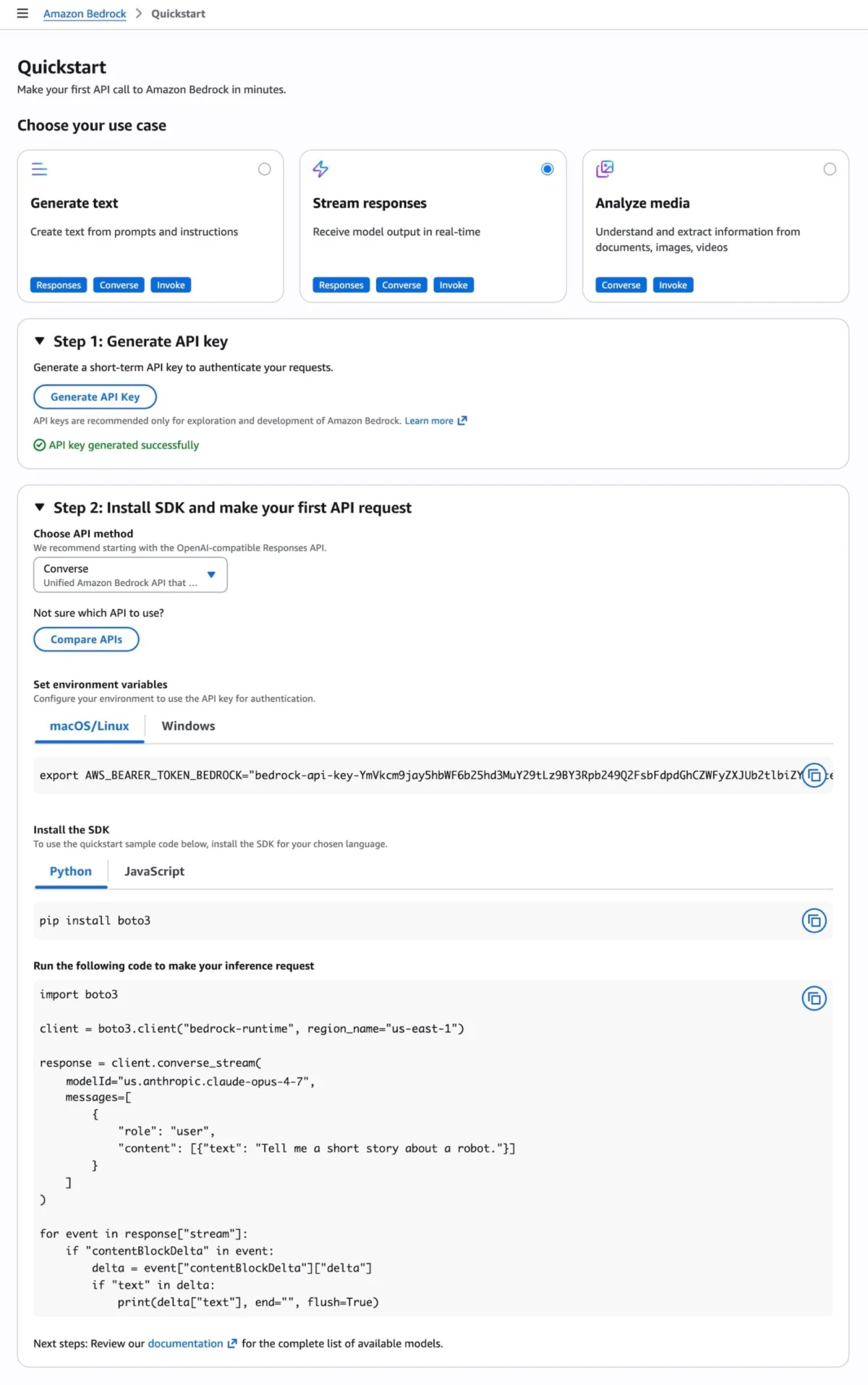

InvokeandConverse APIonbedrock-runtimeendpoints provide flexible options for making inference requests, allowing developers to choose the method that best fits their existing tooling and workflows. Sample code snippets for Python and AWS CLI are provided, demonstrating how to design a distributed AWS architecture with a simple prompt. - Quickstart Feature: To further lower the barrier to entry, the Bedrock console includes a "Quickstart" feature. This allows users to generate short-term API keys for testing purposes and obtain sample code tailored to specific use cases and API methods, such as the OpenAI-compatible Responses API.

- Adaptive Thinking: For more intelligent reasoning, Claude Opus 4.7 supports "Adaptive thinking," a feature that allows the model to dynamically allocate "thinking token budgets" based on the complexity of each request. This optimizes resource usage and ensures that the model can dedicate more processing power to genuinely challenging problems, leading to more thoughtful and accurate responses.

Comprehensive documentation, including the Anthropic Claude Messages API guide and extensive code examples across various programming languages, is available to assist developers in maximizing the utility of Opus 4.7.
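As a rough illustration of the Converse path described above, the following Python sketch builds a request, sends it through a `bedrock-runtime` client, and extracts the reply text. It is not the sample code from the announcement. The model identifier is a placeholder rather than a real ID (the actual value has to be looked up in the Bedrock console or documentation), and the helper names `build_converse_request`, `extract_text`, `ask`, and `make_client` are hypothetical. It assumes `boto3` is installed and AWS credentials are configured.

```python
from typing import Any, Optional

# Placeholder only: substitute the identifier shown for Claude Opus 4.7
# in the Amazon Bedrock console or model documentation.
MODEL_ID = "<claude-opus-4.7-model-id>"


def build_converse_request(
    prompt: str,
    model_id: str = MODEL_ID,
    max_tokens: int = 1024,
    extra_fields: Optional[dict] = None,
) -> dict:
    """Assemble keyword arguments for the bedrock-runtime Converse call."""
    request: dict = {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }
    if extra_fields:
        # Model-specific options (for example a thinking configuration, whose
        # exact shape is defined by the model documentation) pass through here.
        request["additionalModelRequestFields"] = extra_fields
    return request


def extract_text(response: dict) -> str:
    """Join the text blocks of a Converse response, skipping non-text blocks."""
    blocks = response["output"]["message"]["content"]
    return "".join(block["text"] for block in blocks if "text" in block)


def ask(client: Any, prompt: str, **kwargs: Any) -> str:
    """Send one user prompt through the given client and return the reply text."""
    response = client.converse(**build_converse_request(prompt, **kwargs))
    return extract_text(response)


def make_client(region: str = "us-east-1") -> Any:
    """Create a bedrock-runtime client (needs boto3 and AWS credentials)."""
    import boto3  # imported lazily so the helpers above work without it

    return boto3.client("bedrock-runtime", region_name=region)
```

With credentials in place and a real model ID substituted, a call would look like `ask(make_client(), "Design a distributed architecture on AWS ...")`. Passing the client in as a parameter is a design choice that lets the request-building logic be exercised with a stub, without touching the network.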

Availability and Future Outlook

Anthropic’s Claude Opus 4.7 model is currently available in several key AWS regions: US East (N. Virginia), Asia Pacific (Tokyo), Europe (Ireland), and Europe (Stockholm). AWS has indicated that additional regions will be added in future updates, reflecting the global demand for advanced AI capabilities. Pricing details are available on the Amazon Bedrock pricing page, typically structured based on input and output tokens, offering a flexible, consumption-based cost model.
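To make the consumption-based model concrete, here is a minimal Python sketch of how a per-request cost could be estimated from token counts. The function name `estimate_cost` is hypothetical, and the per-million-token prices in the example are invented for illustration only. Actual rates must be taken from the Amazon Bedrock pricing page.

```python
def estimate_cost(
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Estimate the cost of one request under token-based pricing.

    Prices are expressed per one million tokens, in whatever currency
    the pricing page uses.
    """
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    input_cost = input_tokens / 1_000_000 * input_price_per_million
    output_cost = output_tokens / 1_000_000 * output_price_per_million
    return input_cost + output_cost


# Illustrative numbers only (NOT real Bedrock prices): a request with
# 12,000 input tokens and 3,000 output tokens at hypothetical rates.
example = estimate_cost(12_000, 3_000, input_price_per_million=2.0,
                        output_price_per_million=10.0)
print(f"Estimated cost: {example:.4f}")
```

Because input and output tokens are usually priced differently, tracking the two counts separately gives a more useful estimate than a single combined total.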

This release is more than just an update; it represents a strategic move by AWS and Anthropic to solidify their position in the rapidly evolving generative AI landscape. By offering one of the most powerful and secure large language models, AWS aims to attract and empower enterprises seeking to build sophisticated, AI-driven applications. The focus on enterprise-grade infrastructure, coupled with stringent privacy controls and advanced model capabilities, is expected to accelerate the adoption of generative AI in sectors that have historically been cautious due to data security and performance concerns.

Industry analysts predict that such advancements will further intensify the competition among cloud providers and AI model developers. As models become more capable of handling complex, agentic tasks, the emphasis will shift from mere model availability to the holistic ecosystem—including ease of integration, security, scalability, and cost-effectiveness. The integration of Claude Opus 4.7 into Amazon Bedrock sets a new benchmark for what enterprises can expect from their cloud AI partners, paving the way for a new era of intelligent automation and innovation across industries. AWS encourages users to try Claude Opus 4.7 in the Amazon Bedrock console and provide feedback through AWS re:Post or their usual AWS Support contacts, indicating a continuous cycle of improvement and responsiveness to user needs.