Large language model (LLM) hallucinations, where AI systems confidently generate false, nonsensical, or ungrounded information, are a significant hurdle to widespread and trustworthy adoption across industries. While initial attempts to curb these inaccuracies often centered on refining prompt engineering, a growing consensus among AI developers and researchers points towards the necessity of robust, system-level mitigation techniques that move beyond mere input manipulation. This article delves into the systemic causes of LLM hallucinations and explores advanced strategies to detect, prevent, and manage them, transforming LLMs from error-prone oracles into reliable components within intelligent systems.

Understanding the Genesis of Hallucinations: Why LLMs "Make Things Up"

The rise of LLMs, propelled by breakthroughs in transformer architectures and access to vast training datasets, has democratized advanced natural language processing. However, as these models transition from research curiosities to critical infrastructure in diverse applications—from generating marketing copy and drafting legal documents to powering customer service chatbots and assisting medical diagnostics—the phenomenon of hallucination has emerged as a critical concern.

One developer’s experience highlights the practical implications: an LLM tasked with generating documentation for a payment API produced a perfectly structured, tonally appropriate response, complete with example endpoints and parameters. The only issue? The API, along with its intricate details, was entirely fabricated. This confident invention of non-existent facts, caught only during a failed integration attempt, epitomizes the challenge. Such instances are not isolated anomalies; they surface subtly yet dangerously in production environments, as fake citations in academic tools, incorrect legal references, and invented product features in support responses. Individually these errors may seem minor, but together they erode trust and create significant operational risk.

The root causes of LLM hallucinations are multifaceted and inherent to their probabilistic nature:

- Lack of Grounding: Unlike human experts who consult verified sources, most LLMs operate based on patterns learned during training. They lack inherent access to real-time or verified external data unless explicitly connected. When a query demands information beyond their internal "memory," or when that memory is outdated or incomplete, the model defaults to inferring plausible responses from its learned patterns, often filling factual gaps with fabrications.

- Overgeneralization from Diverse Datasets: Trained on very large and diverse datasets that cover a substantial share of public internet text, LLMs learn broad statistical relationships between words and concepts. This enables impressive generalization but can also lead to the conflation of similar but distinct pieces of information. When faced with specific, nuanced questions, the model might combine fragments of related data into a coherent-sounding but factually incorrect synthesis.

- The "Eagerness to Please" Tendency: LLMs are typically trained and tuned to be helpful and responsive. This tuning often favors generating an answer over explicitly admitting uncertainty. Rather than stating "I don’t know" or "I lack sufficient information," the model tends to produce the most statistically plausible response, even if it has to invent details to maintain coherence. This tendency, while useful for conversational flow, becomes a significant liability when factual accuracy is paramount.

Early attempts to address hallucinations primarily focused on prompt engineering—crafting better instructions, imposing stricter wording, and defining clearer constraints within the input. While valuable for guiding model behavior, prompt engineering alone proved insufficient. It can steer the model, but it cannot fundamentally alter its underlying generation mechanism or provide missing information. This realization catalyzed a shift in perspective: hallucination is not merely a prompting problem, but a systemic challenge requiring architectural solutions.

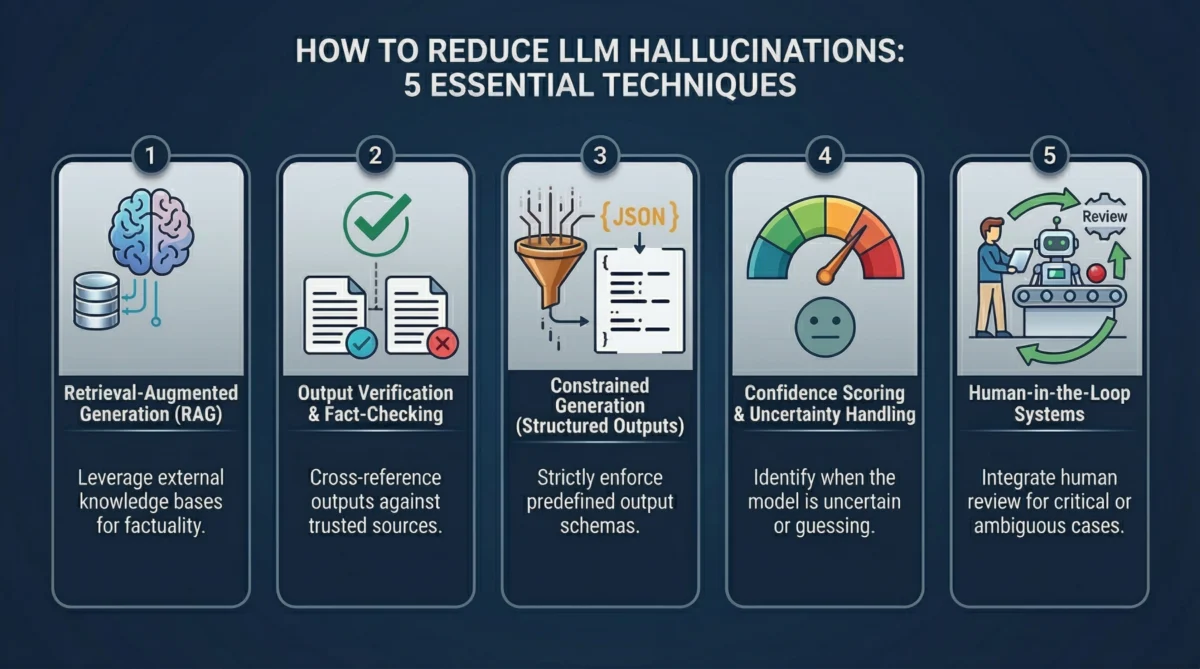

System-Level Strategies for Robust LLM Deployment

The evolving landscape of AI development now mandates building comprehensive layers around LLMs to detect, validate, and control their outputs, transforming them into reliable components within larger, more resilient systems.

1. Retrieval-Augmented Generation (RAG): Grounding LLMs in Verified Data

One of the most transformative techniques to combat hallucinations is Retrieval-Augmented Generation (RAG). Its principle is elegantly simple: instead of solely relying on the model’s internal, static training memory, provide it with access to up-to-date, verified external data at the precise moment it needs to formulate an answer.

The RAG workflow is straightforward yet powerful: A user submits a query. Before the LLM processes it, the system first retrieves highly relevant information from a curated knowledge base (e.g., internal documents, databases, web articles). This retrieved content is then injected into the LLM’s prompt as explicit context. The model is instructed to generate its response based solely on this provided information.

This architectural shift fundamentally alters the model’s behavior. Without RAG, an LLM leans on learned patterns and probabilities, the primary source of hallucinations. With RAG, it has concrete, external data to reference, effectively shifting the "source of truth" from the model’s potentially outdated or incomplete internal memory to a dynamic, curated dataset. This significantly reduces the likelihood of invention.

In practice, RAG systems typically employ vector databases. Documents from the knowledge base are segmented into "chunks," converted into numerical vector embeddings using an embedding model (e.g., all-MiniLM-L6-v2), and stored. When a user query arrives, it’s also embedded, and a semantic search identifies the most similar document chunks. These chunks are then fed into the LLM as part of the prompt. This approach is rapidly becoming an industry standard, with a 2023 survey by Gartner predicting that over 80% of enterprise AI applications will leverage RAG by 2026.
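The retrieve-then-inject flow described above can be sketched in a few lines. This is a minimal illustration, not a production design: the `embed` function is a toy bag-of-words stand-in for a real embedding model, the in-memory list stands in for a vector database, and `KNOWLEDGE_BASE`, `retrieve`, and `build_prompt` are hypothetical names introduced only for this example.

```python
import math
import re
from collections import Counter

# Hypothetical, hand-written knowledge base used only for illustration.
KNOWLEDGE_BASE = [
    "Refunds are processed within 5 business days of approval.",
    "The API rate limit is 100 requests per minute per key.",
    "Support is available Monday through Friday, 9am to 5pm.",
]


def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding'; a real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(query: str, chunks: list[str], k: int = 2) -> str:
    """Inject the retrieved chunks into the prompt as explicit context."""
    context = "\n".join(f"- {c}" for c in retrieve(query, chunks, k))
    return (
        "Answer using ONLY the context below. "
        "If the context is insufficient, say you do not know.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    print(build_prompt("What is the API rate limit?", KNOWLEDGE_BASE, k=1))
```

The resulting string is what would be sent to the model. Swapping the toy `embed` for a real embedding model changes the quality of retrieval but not the shape of the pipeline.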

While immensely effective, RAG is not a panacea. Its efficacy hinges on the quality of the retrieval step. Poor indexing, irrelevant documents, or gaps in the knowledge base can still lead to the model guessing or fabricating. Thus, the quality of the answer is bounded by the quality and relevance of the retrieved context.

2. Output Verification and Fact-Checking Layers: Treating Outputs as Drafts

A common pitfall in LLM deployment is treating the initial response as final. The eloquent phrasing and confident tone often mask underlying inaccuracies. A more robust philosophy dictates that every LLM output should be regarded as an unverified draft, necessitating additional layers of scrutiny before delivery.

Verification layers introduce friction between generation and delivery, ensuring that responses are checked, validated, or challenged.

- Secondary Model Verification: A primary model generates the initial response, and a secondary, often specialized, model acts as a reviewer. This reviewer model can be tasked with checking factual consistency, identifying unsupported claims, or even comparing the generated text against known authoritative sources. This creates a clear separation of concerns: one model generates, another validates.

- Cross-Referencing Trusted Data Sources: For responses containing specific facts (statistics, citations, technical details), the system can programmatically query external databases, APIs, or internal knowledge bases to verify the information. If a claim cannot be confirmed, or is directly contradicted, the system can flag the response for review, request clarification from the LLM, or reject it entirely.

- Self-Consistency Checks: This technique involves posing the same question to the LLM multiple times, sometimes with minor variations in phrasing or using different inference parameters (e.g., temperature). If the model consistently produces the same answer across multiple runs, it suggests higher confidence and reliability. Significant divergence in answers, however, acts as a strong signal of uncertainty or potential hallucination, necessitating further investigation or human intervention. For example, a system might generate three answers to a complex query; if all three are identical, confidence increases. If they vary, the system’s confidence in any single answer diminishes, triggering a review.
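The self-consistency check in particular is easy to sketch. The example below assumes a caller-supplied `ask` function that sends a question to the model and returns its answer as text (in a real system, typically sampled with a non-zero temperature); `normalize`, `self_consistency`, and the `min_agreement` threshold are hypothetical names chosen for illustration.

```python
from collections import Counter
from typing import Callable


def normalize(answer: str) -> str:
    """Collapse case, whitespace, and a trailing period so trivial variations match."""
    return " ".join(answer.lower().split()).rstrip(".")


def self_consistency(
    ask: Callable[[str], str],
    question: str,
    n: int = 3,
    min_agreement: float = 1.0,
) -> dict:
    """Ask the same question n times and measure how often the top answer recurs."""
    answers = [normalize(ask(question)) for _ in range(n)]
    top_answer, count = Counter(answers).most_common(1)[0]
    agreement = count / n
    return {
        "answer": top_answer,
        "agreement": agreement,
        # With the default of 1.0, any disagreement triggers a review.
        "needs_review": agreement < min_agreement,
    }
```

Exact string matching after light normalization only suits short, factual answers. For longer responses, a semantic comparison (for example, embedding similarity or a reviewer model) would be needed in place of `normalize`.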

These verification layers, while introducing marginal latency and computational cost, demonstrably improve the reliability of LLM outputs. In high-stakes applications like legal research or medical information, this trade-off is not just acceptable but essential.

3. Constrained Generation (Structured Outputs): Limiting Model Freedom

Many hallucinations stem from the LLM’s inherent freedom to generate open-ended text. When given broad leeway, the model fills informational gaps creatively, which can lead to inaccuracies. Constrained generation adopts the inverse approach: it rigorously limits the model’s output format and content.

Instead of requesting a free-form paragraph, the system defines a precise structure for the response. This could be a JSON schema, a fixed set of fields, or a controlled vocabulary of acceptable values. The model is then compelled to fit its answer within these predefined boundaries, significantly reducing its opportunity to invent information.

- JSON Schemas: A prevalent method involves defining a JSON schema that specifies the exact structure, data types, and even enumerations for expected values. For instance, extracting product details might require fields like `product_name` (string), `price` (number), and `availability` (enum: "in_stock", "out_of_stock"). If the model attempts to return a story in place of a number, or an invalid availability status, the schema validation will immediately flag the error. Modern LLM APIs often include `response_format` parameters to enforce JSON output natively.

- Function Calling and Tool Usage: This sophisticated form of constrained generation empowers LLMs to interact with external tools or APIs rather than generating answers directly. Instead of guessing a stock price, the model identifies the user’s intent, then "calls" a predefined function (e.g., `getStockPrice(symbol='AAPL')`). The actual data retrieval is handled by a reliable external system, and the LLM then synthesizes the response based on the real data returned by the tool. This fundamentally shifts the burden of factual accuracy away from the generative model.

- Controlled Vocabularies: In highly specialized domains, the model’s output can be restricted to a fixed set of predefined terms, labels, or categories. This is particularly useful in classification tasks or when ensuring consistency across reports, where flexibility is less important than precision.
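To make the schema idea concrete, here is a minimal sketch that validates a model's raw output against the product fields mentioned above (product_name, price, availability). It uses only the standard library and a hand-written check; a real deployment would more likely rely on a JSON Schema validation library. The function name `validate_product` is hypothetical.

```python
import json

ALLOWED_FIELDS = {"product_name", "price", "availability"}
ALLOWED_AVAILABILITY = {"in_stock", "out_of_stock"}


def validate_product(raw: str) -> list[str]:
    """Return a list of validation errors; an empty list means the output is acceptable."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value must be an object"]

    errors = []
    if not isinstance(data.get("product_name"), str):
        errors.append("product_name must be a string")

    price = data.get("price")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(price, bool) or not isinstance(price, (int, float)):
        errors.append("price must be a number")

    availability = data.get("availability")
    if not isinstance(availability, str) or availability not in ALLOWED_AVAILABILITY:
        errors.append("availability must be one of: in_stock, out_of_stock")

    unexpected = sorted(set(data) - ALLOWED_FIELDS)
    if unexpected:
        errors.append(f"unexpected fields: {', '.join(unexpected)}")
    return errors
```

A non-empty error list can be fed back to the model as a correction request or used to reject the response outright.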

Constrained generation thrives because it removes ambiguity and restricts the model’s creative latitude in areas where creativity is a liability. By narrowing the range of possible outputs, the chances of the model straying into fabrication are drastically reduced.
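The function-calling pattern can be sketched the same way. The example assumes the model emits a tool call as a JSON object with `name` and `arguments` keys; that wire format, the `TOOLS` registry, and the `dispatch` helper are assumptions made for illustration, and the quote table is placeholder data standing in for a real market-data service.

```python
import json

# Placeholder data, not real market prices; a real tool would query a data provider.
_PLACEHOLDER_QUOTES = {"AAPL": 100.0}


def getStockPrice(symbol: str) -> float:
    """Look up a price from the trusted source (here, the placeholder table)."""
    return _PLACEHOLDER_QUOTES[symbol]


# Only functions registered here can be invoked by the model.
TOOLS = {"getStockPrice": getStockPrice}


def dispatch(tool_call_json: str) -> dict:
    """Execute a model-requested tool call and return a structured result."""
    try:
        call = json.loads(tool_call_json)
    except json.JSONDecodeError:
        return {"ok": False, "error": "tool call is not valid JSON"}
    if not isinstance(call, dict) or not isinstance(call.get("name"), str):
        return {"ok": False, "error": "tool call must be an object with a string name"}
    if call["name"] not in TOOLS:
        return {"ok": False, "error": f"unknown tool: {call['name']}"}
    arguments = call.get("arguments", {})
    if not isinstance(arguments, dict):
        return {"ok": False, "error": "arguments must be an object"}
    try:
        result = TOOLS[call["name"]](**arguments)
    except (KeyError, TypeError) as exc:
        return {"ok": False, "error": f"bad arguments: {exc}"}
    return {"ok": True, "result": result}
```

The model never produces the price itself. It only chooses the tool and its arguments, and the value returned by `dispatch` is passed back to it for phrasing the final answer.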

4. Confidence Scoring and Uncertainty Handling: Acknowledging Model Limits

One of the most insidious aspects of LLM hallucinations is the model’s unshakeable confidence, even when entirely incorrect. A perfectly factual answer and a complete fabrication can be indistinguishable in tone. To counter this, systems must develop mechanisms to gauge the model’s internal certainty.

Confidence scoring introduces a crucial signal, allowing the system to evaluate the reliability of an answer before accepting it.

- Token Probabilities: At its most granular, confidence can be inferred from the probabilities assigned to each generated token. High and consistent probabilities often indicate the model is operating within well-established patterns. Conversely, sudden drops or fluctuations can signal uncertainty or that the model is venturing into less familiar territory. While not a perfect indicator, it provides a valuable baseline.

- Calibration Techniques: To make probability signals more meaningful, calibration techniques are employed. This might involve comparing outputs across multiple generative runs or benchmarking responses against known correct answers to understand the correlation between internal confidence scores and actual accuracy.

- Explicit Uncertainty Signaling: A powerful technique is to explicitly prompt the model to express its uncertainty. Instead of forcing a definitive answer, the prompt can allow for responses like "I don’t have enough information to answer definitively," or "My confidence in this answer is X%." This encourages the model to be transparent about its limitations, shifting it away from always projecting certainty.
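As a rough illustration of the token-probability signal, the sketch below turns a list of per-token log probabilities into two summary scores. It assumes the model API exposes log probabilities for the generated tokens, which not every provider does; `confidence_from_logprobs` is a hypothetical helper name.

```python
import math


def confidence_from_logprobs(logprobs: list[float]) -> dict:
    """Summarize per-token log probabilities into simple confidence signals."""
    if not logprobs:
        raise ValueError("logprobs must not be empty")
    mean_logprob = sum(logprobs) / len(logprobs)
    return {
        # Geometric mean of token probabilities: overall fluency of the sequence.
        "mean_token_prob": math.exp(mean_logprob),
        # Weakest single token: highlights a sudden drop in certainty.
        "min_token_prob": math.exp(min(logprobs)),
    }
```

These numbers are raw signals, not accuracy estimates. As the calibration point above notes, they only become meaningful once compared against responses with known correct answers.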

In a production system, a low-confidence score wouldn’t merely be displayed; it would trigger a predefined action. This could involve rejecting the response, re-phrasing the question for another attempt, escalating it to a human reviewer, or even seeking additional information through RAG. Treating uncertainty as a first-class signal is paramount. It shifts the objective from completely eliminating hallucinations (an arguably impossible feat) to building a system that intelligently manages them by knowing when to trust and when to question the model.
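One way to express "a low score triggers an action" is a small policy function. The thresholds and the `Action` names below are illustrative assumptions, not recommended values; in practice they would be tuned against calibration data.

```python
from enum import Enum


class Action(Enum):
    DELIVER = "deliver"
    RETRY_WITH_RETRIEVAL = "retry_with_retrieval"
    ESCALATE_TO_HUMAN = "escalate_to_human"
    REJECT = "reject"


def choose_action(
    confidence: float,
    attempts: int,
    high: float = 0.8,
    low: float = 0.4,
    max_attempts: int = 2,
) -> Action:
    """Map a confidence score and the number of attempts so far to a next step."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= high:
        return Action.DELIVER
    if attempts < max_attempts:
        # Try again, for example with a rephrased question or extra retrieved context.
        return Action.RETRY_WITH_RETRIEVAL
    if confidence >= low:
        return Action.ESCALATE_TO_HUMAN
    return Action.REJECT
```

Keeping the policy in one function makes the system's behavior under uncertainty explicit and testable, rather than scattered across call sites.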

5. Human-in-the-Loop (HITL) Systems: Strategic Oversight

Despite all automated safeguards, certain situations will inevitably demand human judgment. Human-in-the-Loop (HITL) systems strategically integrate human oversight, placing human reviewers where they can add maximum value, rather than indiscriminately reviewing every output.

The core idea is to balance automation with intelligent human intervention. Responses flagged by confidence scoring as low-certainty, identified as inconsistent by verification layers, or deemed high-risk due to their domain (e.g., legal advice, financial transactions) are automatically routed to human reviewers before being delivered to the end-user. This creates a vital safety net.

- Review Pipelines: These pipelines are designed to filter and escalate specific cases. In customer support, an LLM might handle routine queries, but complex complaints or sensitive personal data issues are immediately escalated to a human agent. In regulated industries, specific types of AI-generated content might require mandatory human approval.

- Feedback Loops and Active Learning: When humans correct or refine LLM outputs, this valuable feedback is not discarded. It is fed back into the system to improve future performance. This active learning approach allows the model to continuously learn from real-world errors and human expertise, iteratively enhancing its accuracy and reducing future hallucinations in problematic areas. The system learns not just from static training data, but from dynamic, targeted corrections.
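A review pipeline with a feedback log might look like the following sketch. The `Draft` fields, the `HIGH_RISK_DOMAINS` set, and the `ReviewQueue` class are hypothetical, and how recorded corrections are later used (fine-tuning, knowledge-base updates, evaluation sets) is left open.

```python
from dataclasses import dataclass, field

# Illustrative set of domains that always require human sign-off.
HIGH_RISK_DOMAINS = {"legal", "financial"}


@dataclass
class Draft:
    text: str
    domain: str
    confidence: float
    verification_passed: bool


def review_reasons(draft: Draft, min_confidence: float = 0.8) -> list[str]:
    """Collect every reason this draft should be seen by a human."""
    reasons = []
    if draft.confidence < min_confidence:
        reasons.append("low_confidence")
    if not draft.verification_passed:
        reasons.append("failed_verification")
    if draft.domain in HIGH_RISK_DOMAINS:
        reasons.append("high_risk_domain")
    return reasons


@dataclass
class ReviewQueue:
    pending: list = field(default_factory=list)
    corrections: list = field(default_factory=list)

    def route(self, draft: Draft, min_confidence: float = 0.8) -> str:
        """Queue the draft for review if any reason applies; otherwise deliver it."""
        reasons = review_reasons(draft, min_confidence)
        if reasons:
            self.pending.append((draft, reasons))
            return "human_review"
        return "auto_deliver"

    def record_correction(self, draft: Draft, corrected_text: str) -> None:
        """Keep the reviewer's fix so it can feed later improvement work."""
        self.corrections.append(
            {"original": draft.text, "corrected": corrected_text, "domain": draft.domain}
        )
```

Routing on explicit reasons keeps reviewers focused on the flagged cases and leaves an audit trail of why each draft was escalated.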

The strategic integration of humans is not about limiting AI but about making it safer and more effective. Attempting to fully automate every aspect often leads to brittle systems that fail catastrophically in edge cases. HITL systems build resilience by leveraging the unique strengths of both AI and human intelligence, creating robust and trustworthy AI applications.

Industry Perspectives and Broader Implications

The conversation around LLM hallucinations has matured significantly. Leading AI researchers and industry analysts now largely agree that hallucinations are not a temporary flaw that will simply vanish with larger models or more sophisticated training. They are, to a certain extent, an intrinsic byproduct of how probabilistic generative systems operate. The focus has therefore definitively shifted from naive trust to sophisticated detection and management.

This paradigm shift implies that LLMs are increasingly viewed not as infallible oracles, but as powerful components within a larger, orchestrated system. Their role is to generate plausible responses, but these responses must then pass through a gauntlet of verification, grounding, and human oversight before being deemed trustworthy. This multi-layered approach is critical for fostering user trust, mitigating legal and reputational risks, and enabling the safe deployment of AI in high-stakes environments.

The dominance of prompt engineering as the primary mitigation strategy is waning. While still a valuable tool for initial guidance, real-world reliability and accuracy stem from the synergistic application of RAG, robust verification layers, structured outputs, intelligent confidence scoring, and strategic human-in-the-loop interventions. As AI continues its integration into every facet of society, the ability to build and deploy LLM systems that can reliably self-regulate, detect their own limitations, and gracefully defer to human judgment will be the hallmark of truly impactful and responsible artificial intelligence.