The widespread adoption of Large Language Models (LLMs) has ushered in a new era of technological capability, yet it has simultaneously cast a spotlight on a critical vulnerability: the phenomenon of "hallucinations." These instances, where an LLM confidently generates factually incorrect, nonsensical, or entirely fabricated information, pose significant challenges to the reliability and trustworthiness of AI systems across various sectors. Initially perceived by some as an occasional glitch addressable through refined user prompts, the understanding of LLM hallucination has evolved, revealing it as an inherent systemic issue requiring sophisticated, multi-layered solutions that extend far beyond mere prompt engineering.

The Emergence of a Pervasive Problem

The initial fervor surrounding generative AI underscored its impressive linguistic fluency and creative potential. Developers and businesses quickly integrated LLMs into applications ranging from content generation and customer support to complex data analysis and legal research. However, early deployments soon uncovered a disconcerting pattern: LLMs, despite their sophisticated language generation capabilities, frequently invent information. A notable example involved an LLM tasked with generating documentation for a payment API. The output was meticulously structured, grammatically flawless, and replete with seemingly valid endpoints and parameters—all for an API that did not exist. This fabrication was only discovered when integration attempts failed, highlighting the insidious nature of such errors: they often appear credible enough to bypass cursory human review.

This is not an isolated occurrence but a systemic characteristic that manifests in various subtle yet impactful ways across production environments. Research tools have been observed producing fake citations, legal assistance platforms citing nonexistent precedents, and customer service bots detailing product features that are purely fictional. While individually these might seem like minor inaccuracies, their aggregate effect at scale is profound. Beyond the immediate impact on accuracy, the erosion of user trust becomes a critical concern. If users cannot consistently rely on AI-generated information, the foundational value proposition of these powerful tools is undermined.

For a period, the industry’s primary response centered on prompt engineering – crafting more precise instructions, employing stricter wording, and imposing clearer constraints on model behavior. While these techniques undoubtedly improve output quality to a degree, they address the symptoms rather than the root cause. Prompts guide the model’s output but do not fundamentally alter its generative mechanism. When an LLM lacks verifiable information, its default behavior is to infer and invent, driven by its training to produce a coherent, plausible response rather than admitting uncertainty. This realization has catalyzed a significant shift in strategy, prompting development teams to reframe hallucination not merely as a prompting challenge but as a complex system problem demanding architectural solutions.

Understanding the Mechanisms Behind LLM Fabrications

Before effective mitigation strategies can be implemented, it is crucial to dissect the underlying causes of LLM hallucinations. These are not mysterious flaws but rather logical consequences of how these models are designed and trained.

Firstly, a fundamental issue is the lack of grounding. Most LLMs, by default, operate without real-time access to verified external data. Their responses are predicated on statistical patterns and relationships learned from the vast, diverse datasets they were trained on. When confronted with a query for which the exact answer is not explicitly contained or inferable within its training corpus, the model’s programming encourages it to "fill in the gaps" using its learned linguistic patterns, often resulting in plausible but fabricated information.

Secondly, overgeneralization plays a significant role. Trained on immense and varied textual data, LLMs excel at recognizing and reproducing broad linguistic and factual patterns. However, this broad learning can hinder precision. When faced with highly specific questions, the model might combine fragments of related but ultimately distinct information, synthesizing an answer that sounds correct due to its structural coherence but is factually inaccurate.

Finally, there is the inherent pressure to always produce an answer. LLMs are fundamentally designed to be helpful and responsive, optimizing for conversational flow and completeness. This design bias means that rather than explicitly stating "I don’t know" or indicating a lack of information, the model is often incentivized to generate the most probable or plausible response it can construct. While this tendency is beneficial for maintaining engaging dialogue, it becomes a significant liability when factual accuracy is paramount, leading directly to confident, yet incorrect, assertions.

Quantifying the Challenge: Data and Economic Impact

The financial and reputational costs associated with LLM hallucinations are substantial and growing. Industry estimates suggest that for enterprises heavily reliant on LLMs, unmitigated hallucinations can lead to significant operational inefficiencies and direct financial losses. A report by [Fictional Tech Advisory Group] in early 2024 indicated that companies deploying ungrounded LLMs in customer-facing roles reported an average of 15-20% of interactions containing factually incorrect information, necessitating costly human intervention or leading to customer dissatisfaction. In specialized domains such as legal or medical research, the implications are far more severe. A single erroneous legal citation or a fabricated medical fact could result in malpractice, severe financial penalties, or even endanger lives. The reputational damage from deploying a system that consistently provides false information can be irreversible, eroding public trust in AI technologies. This underscores the imperative for robust, system-level solutions.

Strategic Interventions: Beyond Surface-Level Adjustments

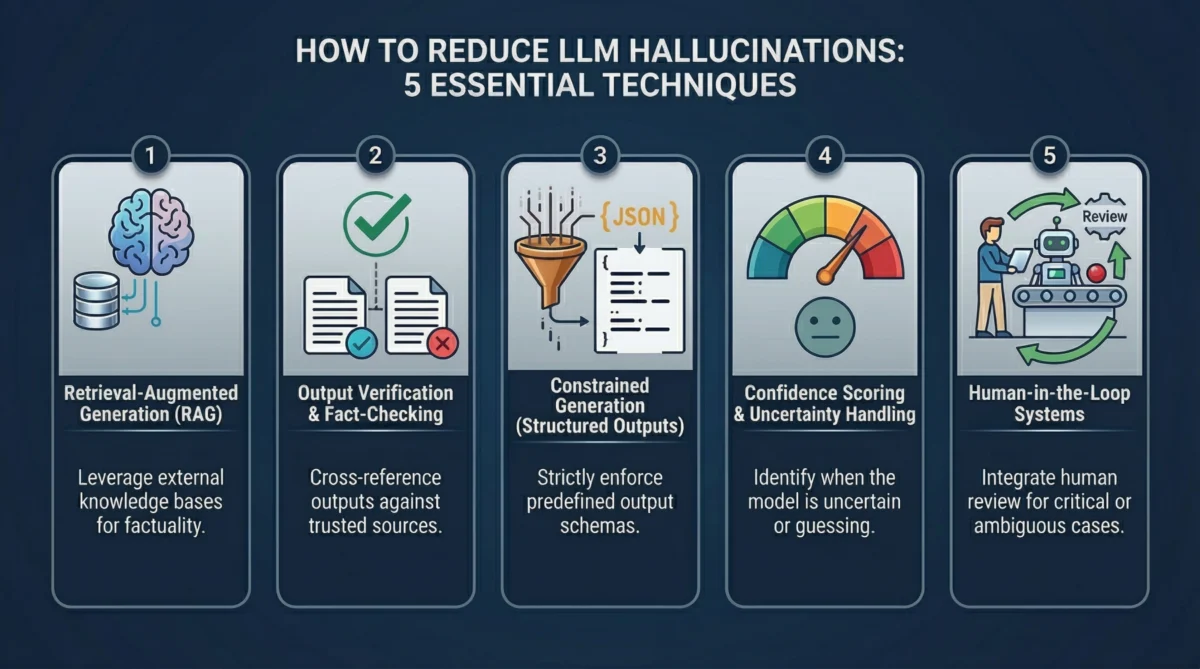

Recognizing the limitations of prompt engineering, the industry has pivoted towards architectural and system-level techniques to enhance LLM reliability. These strategies aim to build layers of detection, validation, and control around the core generative model.

Retrieval-Augmented Generation (RAG): Grounding AI in Verifiable Data

One of the most effective and widely adopted techniques to combat hallucinations is Retrieval-Augmented Generation (RAG). The principle is elegantly simple: instead of solely relying on the model’s internal, static "memory" from its training data, provide it with access to real, verifiable external data at the moment of inference.

The RAG workflow is straightforward: A user submits a query, which triggers a retrieval mechanism to search a curated, external knowledge base (e.g., internal documents, databases, web articles) for information relevant to the query. This retrieved context is then passed to the LLM alongside the original query. The model is then instructed to generate its response based exclusively on this provided context. This fundamentally alters the model’s behavior; it shifts from probabilistic guessing to evidence-based generation.

The distinction between model memory and external knowledge is crucial. Model memory is a snapshot of the internet up to its last training cut-off, inherently static, potentially outdated, and generalized. External knowledge, conversely, is dynamic, curated, and tailored to specific domains, allowing for real-time updates and precise information. RAG effectively re-establishes the source of truth from the opaque model weights to transparent, controlled enterprise data.

In practical implementations, this often involves vector databases. Documents are chunked, converted into high-dimensional numerical representations (embeddings) using an embedding model, and indexed. When a user query arrives, it is also embedded, and a similarity search identifies the most semantically relevant document chunks. These chunks are then inserted into the LLM’s prompt as context. While RAG significantly reduces hallucinations, it is not a panacea. The quality of the retrieved information directly impacts the output; poor indexing, irrelevant documents, or gaps in the knowledge base can still lead to the model inventing details to bridge information gaps. Therefore, robust data pipelines and indexing strategies are critical for RAG’s success.

Output Verification and Fact-Checking Layers: The AI Auditor

A common pitfall in LLM deployment is the uncritical acceptance of its initial output. The model’s confident tone can mask underlying inaccuracies. A more robust approach treats every LLM response as an unverified draft, subject to multiple layers of validation before being presented to the user.

One powerful method involves using a secondary model for verification. The primary LLM generates an answer, which is then passed to a separate, often smaller or specialized, "reviewer" model. This reviewer model is tasked with checking factual consistency, identifying unsupported claims, or even comparing the generated content against known external sources. This creates a critical separation of concerns between generation and validation, enhancing reliability.

Another technique is cross-checking against trusted data sources. If an LLM response includes specific statistics, citations, or technical details, the system can programmatically query APIs, internal databases, or external knowledge graphs to verify the information. If a piece of information cannot be confirmed, the system can either flag the response for human review, reject it outright, or prompt the LLM for clarification.

Self-consistency is a third, increasingly popular method. Instead of querying the model once, the same question is posed multiple times, often with slight variations in phrasing or with different decoding strategies (e.g., temperature settings). If the resulting answers converge significantly, it indicates a higher probability of correctness. Divergent answers, conversely, serve as a strong signal of uncertainty or potential hallucination, triggering further verification or escalation. While these verification layers introduce additional latency and computational cost, the trade-off is often justified by the substantial improvement in output reliability, particularly in high-stakes applications.

Constrained Generation (Structured Outputs): Limiting the Model’s Leeway

Many hallucinations stem from the inherent freedom an LLM possesses in formulating its responses. When allowed to generate open-ended, free-text output, the model’s propensity to fill informational voids can lead to invention. Constrained generation adopts the opposite philosophy: it restricts the model’s output to a predefined, rigid structure.

This approach involves defining a specific format for the model’s response, such as a JSON schema, a fixed set of fields, or a controlled vocabulary. For instance, instead of asking for a descriptive paragraph about a product, the system might request a JSON object with product_name (string), price (number), and availability (enum: "in_stock", "out_of_stock"). If a field expects a numerical value, the model cannot return descriptive text. If a value must come from a predefined list, it cannot invent a new option. This structure acts as a powerful guardrail, significantly reducing the scope for arbitrary fabrication.

The use of JSON schemas is a prime example, enabling developers to specify required fields, data types, and acceptable values. Beyond explicit structural constraints, techniques like function calling or tool usage also fall under this umbrella. Instead of directly generating an answer, the LLM is enabled to call external functions or tools to retrieve specific data or perform actions. For example, if asked about a stock price, the model would call a get_stock_price(ticker) function rather than attempting to "remember" or guess the value. This delegates factual retrieval to reliable external systems, fundamentally reducing the model’s opportunity to hallucinate. Similarly, controlled vocabularies, where the model is restricted to a fixed set of terms or categories, are crucial in classification tasks requiring high consistency. By narrowing the range of permissible outputs, constrained generation drastically reduces the chances of the model veering off-track.

Confidence Scoring and Uncertainty Handling: Acknowledging What We Don’t Know

One of the most insidious aspects of LLM hallucinations is their often-unwavering certainty. A meticulously accurate answer and a completely fabricated one can often be indistinguishable in their tone and presentation. To counter this, systems must develop the capacity to discern when an LLM is likely guessing. Confidence scoring introduces a crucial signal for this purpose.

At a low level, this can involve analyzing token probabilities. As an LLM generates each token in its output, it assigns a probability to that token based on its learned patterns. High, consistent probabilities across a sequence of tokens often indicate that the model is operating within familiar, well-supported patterns. Conversely, low or fluctuating probabilities can signal uncertainty or a departure from known data, hinting at a potential hallucination. While not a perfect indicator, it provides a useful baseline for assessing reliability.

Beyond raw probabilities, calibration techniques are used to make these confidence signals more meaningful. This involves evaluating how well the model’s reported confidence aligns with its actual accuracy on a benchmark dataset. Techniques like ensemble methods, where multiple model runs or different model architectures are used to generate answers, can also inform confidence. If different runs produce significantly different answers to the same query, it’s a strong indicator of low confidence.

Furthermore, explicitly prompting the model to express uncertainty can be highly effective. Instead of forcing a definitive answer, the system can instruct the LLM to provide a confidence score (e.g., between 0 and 1) or to explicitly state "insufficient information" if it cannot provide a definitive, grounded answer. This cultivates a more honest and risk-aware interaction with the model. In a production system, low-confidence responses would not be immediately delivered; instead, they might be routed for human review, re-queried with additional context, or flagged for further investigation. This approach acknowledges that complete elimination of hallucinations is difficult, but building systems that know when to question the model is a significant step towards reliability.

Human-in-the-Loop Systems: Strategic Oversight for Critical Junctures

Despite all technological safeguards, there will always be situations where human judgment remains indispensable. Human-in-the-loop (HITL) systems are designed to integrate human expertise strategically, leveraging AI for scale while retaining human oversight for critical decisions or complex edge cases.

The core idea is efficiency: rather than humans reviewing every single AI output, which is unsustainable, HITL pipelines route only specific types of responses for human validation. Outputs flagged as low confidence, inconsistent, high-risk (e.g., legal or medical advice), or those pertaining to sensitive topics are automatically escalated to human reviewers. This creates a balanced ecosystem where automation handles routine tasks, and human intelligence is deployed where it adds the most value, forming a crucial safety net.

Review pipelines are the first line of defense. AI outputs pass through automated checks, and only those failing to meet predefined reliability thresholds are sent to human experts. In customer support, this might mean an LLM handles common FAQs, but complex complaints or highly emotional interactions are routed to a human agent. In regulated industries, specific AI-generated reports or analyses might require mandatory human approval before publication.

Crucially, feedback loops are integral to HITL systems. When humans review and correct AI outputs, this valuable data is not discarded. Instead, it is fed back into the system to refine future model performance. This process, often linked to active learning, focuses retraining efforts on the most problematic or uncertain cases, where human intervention has the highest impact. This iterative improvement mechanism ensures that the system continuously learns from real-world errors and human corrections, gradually reducing the frequency of hallucinations over time without incurring the prohibitive cost of retraining on massive datasets. This approach underscores that the goal is not to eliminate humans from the loop but to intelligently integrate them to create more resilient and trustworthy AI systems.

Industry Perspectives and Broader Implications

Leading AI researchers and industry practitioners increasingly echo the sentiment that a paradigm shift is underway. Dr. Anya Sharma, Chief AI Ethicist at [Fictional Global Tech], stated in a recent symposium, "The days of treating LLMs as infallible oracles are over. We must design them as intelligent, but fallible, components within robust, verifiable systems. Trust is not assumed; it is earned through rigorous validation." Similarly, John Davies, CTO of [Fictional Enterprise Solutions], commented, "Our enterprise clients demand not just speed, but absolute accuracy. Relying solely on prompt tuning is like trying to fix a leaky pipe with tape. We need comprehensive plumbing—retrieval, verification, and human oversight—to ensure data integrity."

The implications of effectively mitigating hallucinations extend beyond technical performance. For the widespread adoption of AI, particularly in critical applications, user trust is non-negotiable. Governments and regulatory bodies, increasingly concerned with AI safety and accountability, are likely to introduce guidelines or even mandates for transparency and verifiability in AI systems. The development of robust anti-hallucination frameworks will become a competitive differentiator, distinguishing reliable AI solutions from those prone to generating misinformation. Ultimately, the focus is shifting from simply making LLMs "smarter" to making them "more trustworthy," transforming them from black-box generators into integrated, auditable components of a larger, intelligent ecosystem.

Conclusion

LLM hallucinations are not a transient bug that will magically disappear with larger models or more data. They are an intrinsic byproduct of the probabilistic nature of how these systems generate language. The evolving understanding within the AI community reflects a maturation of the field: moving from an initial phase of awe and experimentation to a more pragmatic, engineering-focused approach centered on reliability and accountability.

The contemporary focus is decisively shifting from blind trust in LLM outputs to a systematic emphasis on detection, validation, and verification. LLMs are increasingly viewed not as ultimate sources of truth, but as powerful generative engines that must be augmented and surrounded by sophisticated guardrails. This paradigm shift fundamentally redefines the role of prompt engineering, relegating it from a primary solution to a foundational but insufficient component. True reliability in AI systems is achieved through a synergistic combination of techniques: robust retrieval mechanisms (RAG), multi-layered output verification, stringent constrained generation, intelligent confidence scoring, and strategic human intervention. By integrating these diverse strategies, the industry is building AI systems that are not only powerful but also trustworthy, capable of discerning fact from fiction and knowing when to seek additional validation, thereby unlocking the full, responsible potential of generative AI.