Chronosphere reports a 74% reduction in storage costs after migrating petabytes of time-series data from the traditional ext4 filesystem to Btrfs, a copy-on-write filesystem for Linux. The migration, detailed in an expanded blog post based on a presentation at FOSDEM 2026, shows that advanced filesystem features can yield substantial operational savings without compromising production environments. The company has also made Btrfs experimentally available on Google Cloud Platform, enabling other organizations to explore similar benefits.

Understanding Btrfs: A Deeper Dive into its Capabilities

Linux, a dominant operating system in server environments, supports a variety of filesystems, each governing file organization and access. Btrfs stands out among these for its advanced features, which go beyond basic file management. Key among these are transparent checksumming for data integrity, transparent compression for space efficiency, and its core copy-on-write (COW) mechanism. These functionalities are instrumental in Btrfs’s ability to offer enhanced data protection and storage optimization, making it a compelling option for data-intensive applications.

Harnessing Compression for Substantial Savings

The initial gains for Chronosphere were primarily driven by Btrfs’s transparent compression capabilities. To illustrate this, consider a large English Wikipedia dump (enwik9) totaling 10^9 bytes, that is, one gigabyte or approximately 953 MiB. Stored uncompressed, it occupies 953 MiB of disk space. With Btrfs’s transparent compression enabled, the same dataset occupied only 340 MiB.

A detailed analysis using the compsize tool revealed a remarkable compression ratio:

    Type       Perc     Disk Usage   Uncompressed Referenced
    TOTAL       35%      340M         953M         953M
    zstd        35%      340M         953M         953M

This indicates that the compressed data occupied only 35% of its original size, effectively requiring a disk approximately three times smaller for the same volume of data. Crucially, Btrfs’s transparent compression allows data to be accessed and queried without explicit decompression, a significant advantage for applications that handle large datasets. For instance, searching for "FOSDEM" within the compressed Wikipedia dump was as straightforward as performing a grep command on the uncompressed equivalent:

    $ grep FOSDEM enwik9 | head -1
    Cox was the recipient of the [[Free Software Foundation]]'s [[2003]]
    [[FSF Award for the Advancement of Free Software|Award for the Advancement of
    Free Software]] at the [[FOSDEM]] conference in [[Brussels]].

Chronosphere’s core business involves storing vast quantities of time-series metrics. These metrics typically comprise two primary components, and the company observed that a subset of their internal cluster data, when subjected to a similar compsize analysis, indicated potential disk savings in the 65-70% range. Given that storage represented the single largest component of their cloud expenditure at the time, a thorough evaluation of Btrfs was deemed a strategic imperative. While application-level compression is an option, Btrfs’s transparent filesystem compression offered a distinct advantage: it required minimal to no modifications to their existing database infrastructure, simplifying the integration process and mitigating development overhead.
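The arithmetic behind these figures can be sketched in a few lines of Python. The function names are illustrative and not part of compsize or Btrfs; the inputs are the rounded values quoted above, so the results are approximate (by these rounded figures the Wikipedia dump lands at about 36% of its original size, which compsize reports as 35%).

```python
def compression_summary(disk_usage_mib: float, uncompressed_mib: float) -> dict:
    """Derive the headline figures from compsize-style totals."""
    ratio = disk_usage_mib / uncompressed_mib
    return {
        "percent_of_original": round(ratio * 100, 1),
        "savings_percent": round((1 - ratio) * 100, 1),
        "disk_shrink_factor": round(1 / ratio, 2),
    }


def shrink_factor_for_savings(savings_percent: float) -> float:
    """How many times smaller a disk can be for a given percentage saved."""
    return round(100 / (100 - savings_percent), 2)


# Wikipedia dump figures quoted above: 340M on disk, 953M uncompressed.
print(compression_summary(340, 953))
# The 65-70% savings range observed on the internal cluster sample.
print(shrink_factor_for_savings(65), shrink_factor_for_savings(70))
```

Read this way, savings of 65-70% correspond to a disk roughly 2.9 to 3.3 times smaller for the same data, in line with the "three times smaller" estimate for the Wikipedia dump.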

Addressing Btrfs’s Historical Reputation

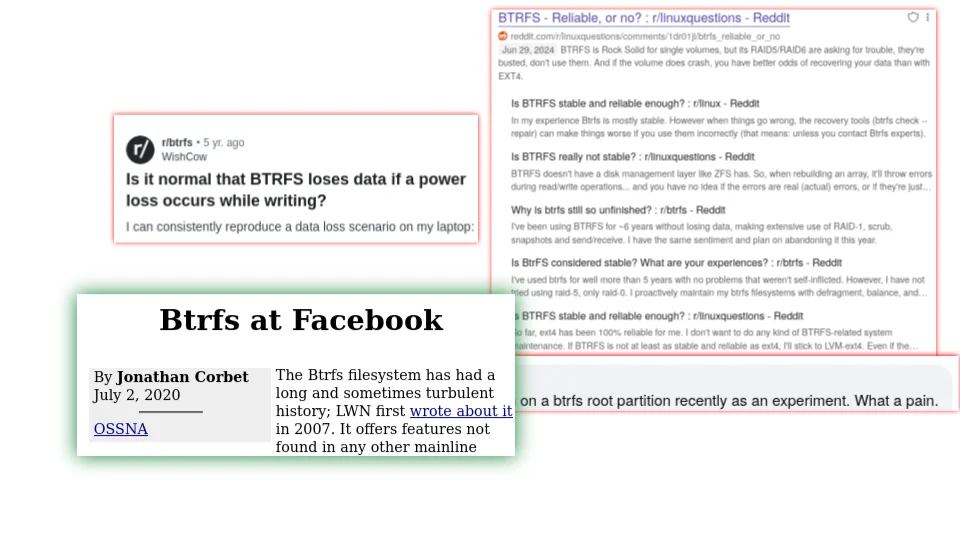

Despite the compelling potential for cost savings, Btrfs’s historical reputation presented a notable hurdle. Merged into the mainline Linux kernel in early 2009 (for the 2.6.29 release), the filesystem initially struggled with stability concerns that were well-documented and experienced firsthand by many in the industry. However, over the past decade, significant advancements and widespread adoption by major enterprises have reshaped its perception. A pivotal moment for Chronosphere’s internal assessment was Josef Bacik’s presentation on "Btrfs at Facebook" from the 2020 Open Source Summit. This talk provided crucial insights into Btrfs’s current state of reliability and scalability in demanding enterprise environments, helping to shift the organization’s perspective and pave the way for a serious consideration of its implementation.

Navigating Cloud Platform and Kubernetes Integration

Chronosphere operates on Google Cloud Platform (GCP) utilizing Google-managed Kubernetes. At the time of their evaluation, GCP’s supported Linux filesystems were limited to ext4 and XFS. To deploy Btrfs, Chronosphere needed to overcome this limitation by implementing a custom Container Storage Interface (CSI) driver. The CSI is a standard that allows storage vendors to develop and deploy storage solutions for container orchestration systems like Kubernetes, independent of the core Kubernetes code.

Chronosphere pursued two primary strategies to establish Btrfs volumes for their databases:

- Developing a custom CSI driver: This involved building and deploying a CSI driver that explicitly supported Btrfs, allowing Kubernetes to provision and manage Btrfs-backed persistent volumes.

- Leveraging existing CSI drivers with modifications: In some cases, they adapted existing CSI drivers designed for specific cloud providers to incorporate Btrfs support.

Once the databases had been migrated to compressed Btrfs filesystems, the critical test of stability was passed: "the database did not blow up." The observed disk savings remained consistent with their initial experiments, averaging 65-70%.
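For readers unfamiliar with how a filesystem is requested through CSI, the following Python sketch builds a Kubernetes StorageClass manifest as a plain dictionary. It is an illustration under assumptions, not Chronosphere's configuration: the class name, disk type, and zstd mount option are hypothetical choices, and whether a given build of the `pd.csi.storage.gke.io` driver accepts `btrfs` as a filesystem type depends on the driver and node image in use.

```python
import json


def btrfs_storage_class(name: str = "btrfs-zstd-example",
                        disk_type: str = "pd-balanced") -> dict:
    """Build a StorageClass manifest asking the CSI driver for Btrfs volumes.

    `name`, `disk_type`, and the mount option are illustrative assumptions.
    """
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},
        "provisioner": "pd.csi.storage.gke.io",
        "parameters": {
            "type": disk_type,
            # Standard CSI provisioner parameter selecting the filesystem.
            "csi.storage.k8s.io/fstype": "btrfs",
        },
        # Assumed: enable transparent zstd compression at mount time.
        "mountOptions": ["compress=zstd"],
        "volumeBindingMode": "WaitForFirstConsumer",
    }


# Kubernetes accepts JSON manifests, so this output could be applied as-is.
print(json.dumps(btrfs_storage_class(), indent=2))
```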

The File System Conversion Process

With the CSI driver in place, the next challenge was converting existing ext4 and XFS volumes to Btrfs. While the btrfs-convert tool exists for in-place filesystem conversion, its documentation includes a strong caution:

"always consider whether mkfs and a file copy would be a better option than the in-place conversion, given what was said above."

This warning stems from the potential for data corruption or instability during in-place conversions, especially on large or critical datasets. Therefore, Chronosphere opted for a more robust workflow that involved:

- Creating a new Btrfs volume: A fresh Btrfs filesystem was provisioned.

- Migrating data: Existing data was copied from the old filesystem to the new Btrfs volume using a reliable data transfer tool.

- Re-attaching the new volume: The application was reconfigured to use the new Btrfs-backed volume.

This approach, while more time-consuming, offered a significantly higher degree of safety and reliability. Notably, Chronosphere already possessed a similar workflow for shrinking database disks, as neither ext4 nor GCP’s block storage natively supports online disk shrinking. This existing expertise made it relatively straightforward to adapt the disk-shrinking process for filesystem conversion.
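The first two steps of such a copy-based workflow might look like the dry-run plan below. This is a sketch under assumptions: the source does not name the copy tool or mount options, so `rsync` and `compress=zstd` are illustrative choices, and the device and mount paths are hypothetical. The function only builds the command lines and does not execute anything; re-attaching the volume to the application is platform-specific and left out.

```python
import shlex


def conversion_plan(new_device: str, old_mount: str, new_mount: str,
                    compression: str = "zstd") -> list:
    """Return the commands for a mkfs-and-copy migration, without running them."""
    src = old_mount.rstrip("/") + "/"
    dst = new_mount.rstrip("/") + "/"
    return [
        # 1. Create a fresh Btrfs filesystem on the new, empty device.
        ["mkfs.btrfs", new_device],
        # 2. Mount it with transparent compression enabled.
        ["mount", "-t", "btrfs", "-o", f"compress={compression}",
         new_device, new_mount],
        # 3. Copy data, preserving hard links, ACLs, xattrs, and numeric IDs.
        ["rsync", "-aHAX", "--numeric-ids", src, dst],
        # 4. Unmount so the volume can be re-attached to the application.
        ["umount", new_mount],
    ]


# Hypothetical device and mount points, printed for review only.
for command in conversion_plan("/dev/example-new", "/mnt/old", "/mnt/new"):
    print(shlex.join(command))
```

Keeping the plan as data makes it easy to review or log before anything destructive runs, which suits a workflow whose main selling point is safety.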

Mitigating Risks and Addressing "Unknown Unknowns"

The transition to Btrfs, while promising significant cost savings, was not without its perceived risks. Before full deployment, several potential issues were identified:

- Data integrity: Concerns about Btrfs’s historical reliability and the potential for silent data corruption.

- Performance implications: The impact of new features like compression and copy-on-write on application performance.

- Operational complexity: The added burden of managing a non-standard filesystem on a cloud platform.

Even with meticulous planning, Chronosphere encountered unforeseen challenges, or "unknown unknowns," during their Btrfs adoption journey.

Surprise 1: Escalating Disk Snapshot Costs

One of the most immediate and impactful surprises was the dramatic increase in costs associated with block-level snapshots provided by GCP. While these incremental snapshots were cost-effective with ext4, their expense escalated by over sixfold after migrating to Btrfs, even as the underlying disk storage costs plummeted by more than 50%. In some cases, the cost of disk snapshots began to exceed the cost of the actual data storage, prompting an urgent review of their backup strategy.

To resolve this, Chronosphere accelerated a project to transition from block-level snapshots to file-based backups. This shift rendered the backup process filesystem-agnostic, effectively decoupling backup costs from the underlying storage technology and returning them to pre-Btrfs levels.

Surprise 2: IO Storms During Large Deletions

A more critical operational issue emerged on a production tenant involving large-scale data deletion. When a significant volume of files was removed, it triggered a massive Input/Output (IO) storm. This surge in disk activity led to an uncontrollable growth in the commit log queue, posing a severe threat to service stability and resulting in a production incident.

Investigation revealed that Btrfs’s background space reclamation process was the culprit. When many files are deleted, Btrfs initiates a data shuffling operation on disk, with the intensity roughly proportional to the number of bytes removed. The aggressive configuration of the bg_reclaim_threshold parameter, set to 90 in Chronosphere’s setup for precise free space tracking, amplified this effect. The team is eagerly awaiting the integration of the dynamic_reclaim tunable, merged into Linux kernel v6.11, which promises to offer more granular control over this process and mitigate IO storms during large deletions. In the interim, Chronosphere implemented application-level throttling for deletions.
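Because the reclaim work is described as roughly proportional to the bytes removed, one plausible form of application-level throttling is a byte budget per second. The sketch below is an assumption about how such throttling could work, not Chronosphere's implementation; `throttled_delete` and its parameters are hypothetical names.

```python
import os
import time


def throttled_delete(paths, max_bytes_per_sec, sleep=time.sleep,
                     clock=time.monotonic):
    """Delete files while keeping the average removal rate under a byte budget.

    `sleep` and `clock` are injectable so the pacing can be tested
    without waiting in real time.
    """
    start = clock()
    removed = 0
    for path in paths:
        size = os.path.getsize(path)
        os.remove(path)
        removed += size
        # Minimum elapsed time at which `removed` bytes fit within the budget.
        min_elapsed = removed / max_bytes_per_sec
        elapsed = clock() - start
        if elapsed < min_elapsed:
            sleep(min_elapsed - elapsed)
    return removed
```

A call such as `throttled_delete(expired_files, 50 * 1024 * 1024)` would cap deletions at about 50 MiB per second on average; the right budget depends on how much background reclaim the disk can absorb alongside normal traffic.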

Surprise 3: The Nuances of Read-Ahead

Performance degradation, characterized by P99 latency spikes directly correlating with saturated disk read throughput, became another point of investigation. The root cause was traced to an unexpected interaction with Btrfs’s read-ahead settings. Two distinct read-ahead mechanisms were found to be at play: one for the generic block device and another, more intelligent, for the Btrfs filesystem via its Backing Device Info (BDI) interface.

The Btrfs-specific read-ahead setting was found to be 32 times larger than the default for the block device. While this setting is designed to be more aware of logical file layouts, for Chronosphere’s workload, which involved numerous random-like reads, this aggressive read-ahead (up to 4MB) resulted in significant read amplification. This meant that large, often unused, chunks of data were being pulled into memory, leading to inefficient resource utilization and performance bottlenecks. Adjusting these settings was crucial for optimizing read performance.
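The numbers above can be checked with a short calculation. The sketch assumes the common Linux block-device default of 128 KiB for `read_ahead_kb`, which is consistent with the 32x and 4MB figures quoted; the worst-case amplification is an upper bound that applies only when each small random read triggers a full read-ahead window whose extra data is never used. The function names are illustrative.

```python
from pathlib import Path

BLOCK_DEVICE_READ_AHEAD_KIB = 128  # assumed block-device default
BTRFS_READ_AHEAD_KIB = BLOCK_DEVICE_READ_AHEAD_KIB * 32  # 4096 KiB = 4 MiB


def read_ahead_kib(sysfs_file: str) -> int:
    """Read a read_ahead_kb value, e.g. from /sys/block/<dev>/queue/read_ahead_kb
    or /sys/class/bdi/<id>/read_ahead_kb."""
    return int(Path(sysfs_file).read_text().strip())


def worst_case_amplification(window_kib: int, request_kib: int) -> float:
    """Upper bound on bytes read from disk per byte the application asked for."""
    return max(window_kib, request_kib) / request_kib


# A 4 KiB random read with each read-ahead window fully wasted.
print(worst_case_amplification(BLOCK_DEVICE_READ_AHEAD_KIB, 4))
print(worst_case_amplification(BTRFS_READ_AHEAD_KIB, 4))
```

Under these assumptions, a 4 KiB random read can pull in up to 32 times its size with the block-device setting and up to 1024 times with the 4 MiB window, which illustrates why a random-read workload saturated disk throughput.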

Project Timeline and Current Status

Chronosphere developed a comprehensive "Btrfs master plan" to guide their adoption, encompassing potential savings, a detailed timeline, development requirements, migration strategies, and risk assessments. This plan proved invaluable in communicating the project’s scope and risk mitigation strategies to stakeholders. The rough project timeline included:

- Month 1: Proof of Concept (POC) and initial performance testing.

- Months 2-3: Developing and testing the CSI driver and conversion workflows.

- Months 4-6: Phased migration of non-production databases.

- Months 7-12: Migrating production databases, starting with less critical workloads and progressing to core systems.

Today, all of Chronosphere’s time-series databases, representing petabytes of compressed data, run on Btrfs. This undertaking required substantial engineering investment and led to an infrastructure that diverges from standard GCP offerings: Chronosphere actively maintains its own builds of the GCP Compute Persistent Disk CSI driver to incorporate Btrfs support. The company has also been instrumental in upstreaming these changes to the broader Kubernetes and GCP communities. Btrfs support has been officially enabled in Container-Optimized OS 125, and the proposed CSI driver modifications have been merged into the upstream project. As of Google Kubernetes Engine (GKE) 1.35, Btrfs utilization is fully supported.

The migration has yielded a 74% reduction in disk costs solely from compression. Furthermore, with the full adoption of Btrfs, Chronosphere is now in a position to remove some application-level checksums, anticipating further cost savings in compute resources.

Key Takeaways from Chronosphere’s Btrfs Journey

Chronosphere’s experience offers several critical insights for organizations considering advanced filesystem solutions:

- Btrfs is a viable enterprise solution: The filesystem has matured into a trustworthy and capable option for large-scale, single-volume enterprise deployments.

- Significant cost savings are achievable: Transparent compression, a core feature of Btrfs, can deliver substantial reductions in storage expenditure, particularly for organizations dealing with large volumes of uncompressed data that are challenging to compress at the application level.

Errata: Clarification on Deduplication

During the FOSDEM 2026 presentation, a question was raised regarding the use of deduplication. The presenter initially misheard the question as "replication." The clarified answer remains "no," as Chronosphere employs an application-level mechanism for deduplicating shared data, which incurs minimal CPU and IO overhead without major application modifications.