The global semiconductor manufacturing landscape is undergoing a fundamental transformation as artificial intelligence (AI) begins to replace legacy methodologies in the back-end testing process. At the Semicon Korea 2026 conference in Seoul, Cohu, a prominent provider of semiconductor equipment and services, detailed a significant departure from traditional automated test equipment (ATE) management. Wai-Kong Chen, representing Cohu, presented a roadmap for integrating AI to move beyond reactive troubleshooting toward predictive maintenance and self-optimizing systems. This shift addresses the escalating complexity of modern integrated circuits (ICs), for which traditional rule-based optimization methods are increasingly viewed as insufficient to meet the industry's high-volume, high-precision demands.

The Technological Context of Semicon Korea 2026

Semicon Korea has long served as a critical nexus for the global microelectronics supply chain, particularly for the memory and logic sectors that dominate the East Asian market. The 2026 iteration of the event arrived at a time when the semiconductor industry faced unprecedented pressure to increase yield and reduce time-to-market for next-generation chips, including those used in 6G communications, advanced automotive systems, and high-performance computing (HPC).

As silicon geometries continue to shrink and heterogeneous integration becomes the norm, the volume of data generated during the testing phase has grown sharply. Traditional ATE systems, while robust, rely heavily on human intervention and static rule sets to identify defects and optimize performance. The Cohu presentation highlighted that these manual processes are becoming a bottleneck. By introducing AI at the tester level, manufacturers can process terabytes of telemetry data in real time, allowing the equipment to make autonomous adjustments that were previously impossible.

The Limitations of Legacy Rule-Based Frameworks

For decades, ATE optimization has relied on a "reactive" model. When a test failure occurs or yield drops below a specific threshold, engineers analyze the logs, identify the root cause, and adjust the test parameters. This process is inherently backward-looking. Rule-based systems function on "if-then" logic, which is effective for known variables but fails to account for the subtle, non-linear interactions between temperature fluctuations, power delivery inconsistencies, and mechanical wear on test sockets.

The complexity of modern System-on-Chip (SoC) designs means that the "search space" for optimization is too vast for human engineers to navigate efficiently. A single chip may require thousands of individual tests. When multiplied by millions of units, the potential for efficiency loss is staggering. Cohu’s analysis suggests that reactive troubleshooting can lead to significant equipment downtime and to "over-testing," where good dies are inadvertently rejected because of conservative guardbands (the margins added to test limits to absorb measurement uncertainty). Both effects directly erode profit margins.

Defining the Paradigm Shift: From Reactive to Predictive

The core of the paradigm shift described by Wai-Kong Chen involves the deployment of machine learning (ML) models that can predict failures before they occur. Predictive ATE systems utilize continuous monitoring of "tester health" metrics, such as signal integrity, power consumption, and mechanical alignment.

By applying deep learning algorithms to historical and real-time data, these systems can identify "pre-failure" signatures. For example, a slight drift in the contact resistance of a test probe might not trigger a hard failure immediately but could indicate an impending breakdown. An AI-enabled system can flag this trend, allowing for scheduled maintenance during natural breaks in production rather than forcing an emergency shutdown.
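The contact-resistance example can be made concrete with a much simpler stand-in for the deep learning models the presentation described. The sketch below fits a straight line to recent readings and estimates how many more touchdowns remain before the trend crosses a maintenance limit. The function names, the milliohm units, and the limit value are illustrative assumptions, not details from Cohu.

```python
def fit_line(ys):
    """Least-squares line through (0, ys[0]), (1, ys[1]), ...; needs >= 2 points."""
    n = len(ys)
    x_mean = (n - 1) / 2
    y_mean = sum(ys) / n
    sxx = sum((i - x_mean) ** 2 for i in range(n))
    sxy = sum((i - x_mean) * (y - y_mean) for i, y in enumerate(ys))
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean


def touchdowns_until_limit(resistance_mohm, limit_mohm):
    """Estimate touchdowns left before the fitted trend reaches limit_mohm.

    Returns None when the trend is flat or falling, and 0.0 when the
    fitted line has already crossed the limit.
    """
    slope, intercept = fit_line(resistance_mohm)
    if slope <= 0:
        return None
    crossing = (limit_mohm - intercept) / slope
    return max(0.0, crossing - (len(resistance_mohm) - 1))


# Hypothetical readings drifting upward by 0.5 milliohm per touchdown.
readings = [100 + 0.5 * i for i in range(10)]
print(touchdowns_until_limit(readings, limit_mohm=110))
```

A production system would need to handle noise, step changes after cleaning, and non-linear wear, which is where learned models earn their place. The straight-line fit only shows the basic idea of acting on a trend before a hard failure.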

Furthermore, the shift toward self-optimizing systems represents the next frontier. A self-optimizing ATE does not just wait for a human to fix a problem; it dynamically adjusts its own parameters. If the system detects a shift in the manufacturing process of the incoming wafers, it can realign its test "sweet spot" to maintain maximum yield without compromising test quality.
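Detecting a shift in incoming material is the first step in any such loop. As a rough sketch, the snippet below uses an exponentially weighted moving average (EWMA), a standard process-control technique swapped in here for illustration, to report when a monitored parametric value has drifted away from its baseline. The function name, the smoothing factor, and the tolerance are assumptions and do not come from the presentation.

```python
def ewma_shift_index(values, baseline, tolerance, alpha=0.2):
    """Return the index at which the EWMA of values first departs from
    baseline by more than tolerance, or None if it never does."""
    ewma = baseline
    for i, value in enumerate(values):
        # Blend the new reading into the running average.
        ewma = alpha * value + (1 - alpha) * ewma
        if abs(ewma - baseline) > tolerance:
            return i
    return None


# Hypothetical parametric readings: stable at 10.0, then a sustained step to 11.0.
stream = [10.0] * 5 + [11.0] * 10
print(ewma_shift_index(stream, baseline=10.0, tolerance=0.5))
```

Once a shift is flagged, a self-optimizing tester would go on to re-centre its test conditions. That second step depends on the device and the test program and is not sketched here.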

Technical Deep Dive: Sweet Spot Inference

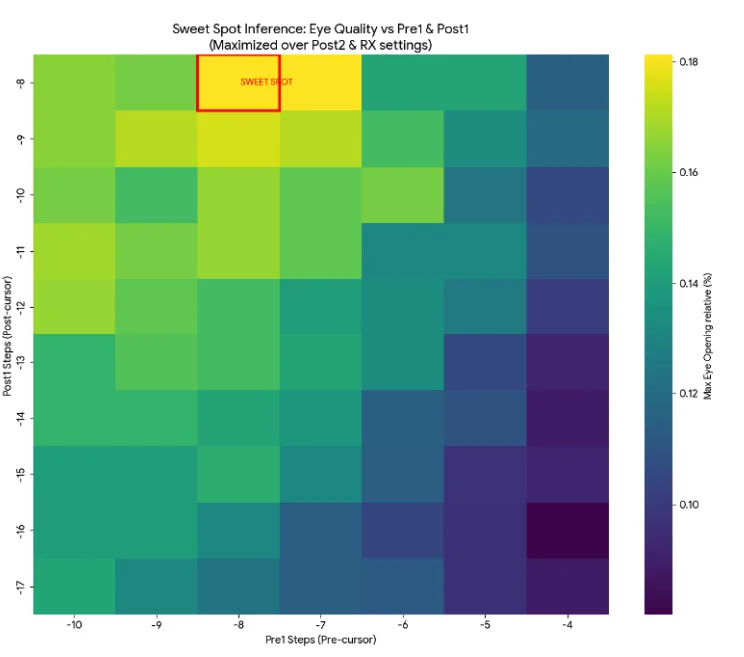

A pivotal element of Cohu’s presentation was the concept of "Sweet Spot Inference," as illustrated in the technical documentation released during the event. In semiconductor testing, the "sweet spot" refers to the optimal set of electrical and environmental conditions under which a chip should be tested to ensure reliability while maximizing yield.

In a traditional environment, finding this sweet spot is a trial-and-error process conducted during the characterization phase. AI changes this by using inference engines to calculate the sweet spot dynamically. By analyzing the relationship between various test parameters—such as voltage levels, timing margins, and temperature—the AI can infer the most efficient path to a "pass" or "fail" decision. This reduces the "test time per unit," which is one of the most critical metrics in high-volume manufacturing.
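Cohu's inference method was not described in enough detail to reproduce, but the underlying idea of a "sweet spot" can be illustrated with a classic shmoo-style grid. The sketch below assumes characterization results are available as a pass/fail grid over two parameters (for example, voltage steps by timing steps) and picks the passing point that sits farthest from any failing point. The grid encoding and the `sweet_spot` function are hypothetical.

```python
def sweet_spot(shmoo):
    """Return ((row, col), margin) for the passing cell farthest from any fail.

    shmoo is a list of equal-length strings, one per step of the first
    parameter, with one character per step of the second: "P" marks a
    pass and anything else a fail. Margin is counted in grid steps
    (Chebyshev distance). Returns (None, 0) if nothing passes.
    """
    fails = [(r, c) for r, row in enumerate(shmoo)
             for c, cell in enumerate(row) if cell != "P"]
    best, best_margin = None, 0
    for r, row in enumerate(shmoo):
        for c, cell in enumerate(row):
            if cell != "P":
                continue
            margin = min((max(abs(r - fr), abs(c - fc)) for fr, fc in fails),
                         default=float("inf"))
            if margin > best_margin:
                best, best_margin = (r, c), margin
    return best, best_margin


# Hypothetical 5x5 characterization grid with a 3x3 passing window.
grid = [
    ".....",
    ".PPP.",
    ".PPP.",
    ".PPP.",
    ".....",
]
print(sweet_spot(grid))
```

An exhaustive grid like this is exactly the trial-and-error characterization the paragraph describes. An inference-based approach would aim to reach a comparable operating point from far fewer measurements.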

A Chronological Evolution of Semiconductor Testing

To understand the magnitude of this shift, it is necessary to view the evolution of ATE through a chronological lens:

- Manual Testing Era (Pre-1980s): Testing was largely a manual process involving benchtop instruments and human recording of results.

- Automated Rule-Based Era (1980s–2010s): The rise of specialized ATE platforms allowed for high-speed automated testing based on fixed programs and rigid pass/fail criteria.

- Data-Rich/Big Data Era (2010s–2022): Testers began generating massive datasets, leading to the use of offline data analytics to improve yield, though the testers themselves remained "dumb" in real time.

- The AI Integration Era (2023–Present): Integration of edge AI directly into the tester hardware, enabling real-time inference, predictive maintenance, and autonomous self-optimization as highlighted in the 2026 Cohu roadmap.

Supporting Data and Industry Metrics

The move toward AI-driven ATE is supported by compelling economic data. Industry reports from 2024 and 2025 indicated that semiconductor manufacturers using AI-enhanced test platforms saw a 3% to 5% increase in overall equipment effectiveness (OEE). While a single-digit percentage may seem modest, in a multibillion-dollar fabrication facility it translates to tens of millions of dollars in annual savings.
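The arithmetic behind that claim is easy to check. OEE is conventionally defined as availability times performance times quality. The sketch below applies that definition and then estimates the extra output value from an OEE gain, assuming output scales linearly with OEE. The $1 billion annual output figure and the 60% baseline are hypothetical round numbers chosen for illustration, not figures from the cited reports.

```python
def oee(availability, performance, quality):
    """Overall equipment effectiveness as the product of its three factors."""
    return availability * performance * quality


def extra_output_value(annual_output_value, baseline_oee, improved_oee):
    """Extra annual output value, assuming output scales linearly with OEE."""
    return annual_output_value * (improved_oee / baseline_oee - 1)


annual_output = 1_000_000_000  # hypothetical: $1B of tested product per year
baseline = 0.60                # hypothetical baseline OEE

# Reading "3%" as a relative gain: 0.60 -> 0.618
print(round(extra_output_value(annual_output, baseline, baseline * 1.03)))
# Reading "3%" as three percentage points: 0.60 -> 0.63
print(round(extra_output_value(annual_output, baseline, baseline + 0.03)))
```

Under these assumptions both readings of the reported gain land in the tens of millions of dollars per year, although the percentage-point reading is noticeably larger.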

Furthermore, data presented by Cohu suggests that predictive maintenance can reduce unscheduled downtime by up to 20%. By narrowing guardbands through more precise AI-driven measurements, manufacturers can also achieve a "yield recovery" of approximately 0.5% to 1.5%. In the context of high-margin AI accelerators and server CPUs, these recovery rates are vital for maintaining competitive pricing and supply chain stability.
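The link between guardbands and yield can be shown with a toy example. A guardband tightens the test limit inside the specification limit to absorb measurement uncertainty, so units that measure between the two limits are rejected even though they meet the specification. The sketch below counts how many units pass an upper-limit test under a wide and a narrow guardband. The measurements, units, and limits are invented and deliberately exaggerated; real recovery would be closer to the 0.5% to 1.5% range quoted above.

```python
def yield_with_guardband(measurements, spec_max, guardband):
    """Fraction of units passing an upper-limit test at spec_max - guardband."""
    test_limit = spec_max - guardband
    passed = sum(1 for m in measurements if m <= test_limit)
    return passed / len(measurements)


# Hypothetical leakage readings in nanoamps against a 1000 nA specification.
leakage_na = [800, 850, 880, 910, 930, 950, 970, 990, 1020, 1100]

wide = yield_with_guardband(leakage_na, spec_max=1000, guardband=100)
narrow = yield_with_guardband(leakage_na, spec_max=1000, guardband=40)
print(wide, narrow)
```

Narrowing a guardband is only safe when the measurement itself has become more accurate, which is the role the article assigns to AI-driven measurement.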

Official Responses and Industry Sentiment

While Cohu has been a vocal proponent of this shift, the broader industry reaction at Semicon Korea was one of cautious optimism. Representatives from major Outsourced Semiconductor Assembly and Test (OSAT) providers noted that while the technology is promising, the primary challenge lies in data siloing. To be truly effective, AI requires access to data spanning the whole flow, from the front end (wafer fab) to the back end (final test).

"The transition to self-optimizing systems is inevitable," noted a senior director from a leading South Korean memory manufacturer during a post-presentation panel. "However, the industry must standardize data formats to ensure that AI models can communicate across different equipment platforms. Cohu’s approach to sweet spot inference is a significant step toward that interoperability."

Wai-Kong Chen emphasized during his session that the goal is not to replace the test engineer but to augment their capabilities. By automating the "grunt work" of troubleshooting, engineers are freed to focus on high-level architecture and new product introduction (NPI) cycles.

Broader Impact and Global Implications

The implications of AI-enabled ATE extend far beyond the walls of the test floor. On a global scale, the increased efficiency of semiconductor testing is a key component in stabilizing the chip supply chain. As the world becomes increasingly reliant on semiconductors for everything from energy grids to medical devices, the ability to produce these components more reliably and with less waste is a matter of economic security.

Moreover, the shift to predictive and self-optimizing systems aligns with global sustainability goals. By reducing the energy consumption associated with re-testing and minimizing the scrap rate of incorrectly rejected chips, AI-driven ATE contributes to a more "green" semiconductor manufacturing process. The reduction in physical waste and the optimization of resource utilization are becoming central themes for ESG (Environmental, Social, and Governance) reporting among major tech firms.

Future Outlook: The Autonomous Fab

Looking ahead, the paradigm shift described by Cohu is likely a precursor to the fully autonomous semiconductor factory. In this vision, the entire manufacturing line—from lithography to packaging and testing—functions as a single, self-correcting organism.

By 2030, industry analysts predict that AI will not only optimize individual testers but will also orchestrate the flow of material through the entire back-end facility. If a tester in one bay detects a systemic issue, it will automatically alert the upstream processes to adjust, effectively "healing" the production line in real time. Cohu's presentation at Semicon Korea 2026 serves as a marker on this journey, signaling that the era of reactive troubleshooting is drawing to a close and giving way to a data-driven, autonomous future for semiconductor testing.