Containers represent a fundamental shift in how software is packaged, deployed, and managed. At their core, containers are lightweight, standalone, executable software packages that bundle everything a piece of software needs to run, including its code, runtime, system tools, libraries, and configurations. This technology is a key component of the broader movement known as containerization, a methodology for isolating software and its dependencies, allowing them to run consistently across diverse computing environments without interference from other processes.

This article explores how containerization works, dissects its core components, and draws critical distinctions between containers and their long-standing counterparts, virtual machines (VMs). It then examines the benefits and use cases that have propelled containerization to the forefront of modern IT infrastructure, the challenges it introduces, and the leading container technologies, before closing with the likely future trajectories of this transformative technology.

Understanding the Mechanics of Containers

Containerization lets developers package and run applications in isolated environments. This provides a consistent, efficient way to deploy software regardless of differences in operating system configuration and underlying infrastructure. From a developer’s local workstation to production servers, containers promise predictable, reliable behavior.

Unlike traditional deployment approaches, containers encapsulate an application and all its necessary dependencies into a single unit, known as a container image. This image is a self-contained blueprint containing the application’s code, runtime environment, libraries, and system tools. A crucial aspect of container technology is that containers share the host system’s kernel, yet each one gets its own isolated filesystem, process space, and network stack, and the kernel can cap how much CPU and memory it consumes. Because no separate operating system has to be booted and maintained for each container, containers are significantly more lightweight and resource-efficient than virtual machines.

The roots of containerization lie in earlier forms of OS-level isolation, such as the Unix chroot system call (1979), FreeBSD jails (2000), Solaris Zones (2004), and Linux Containers (LXC, 2008). However, it was Docker, released in 2013, and the standardization that followed in the mid-2010s that truly propelled containerization into the mainstream IT consciousness. This period marked a turning point, where the practical benefits of portability and efficiency became accessible to a much broader audience of developers and operations teams.

Containers vs. Virtual Machines: A Comparative Analysis

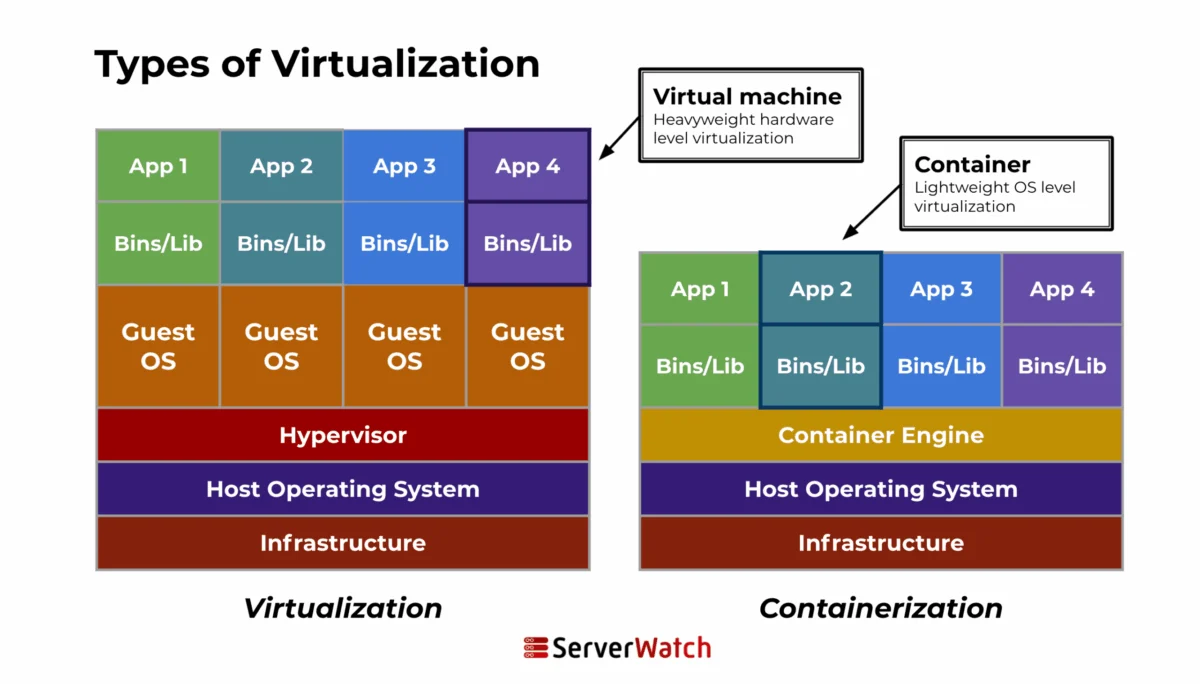

While both containers and virtual machines serve the purpose of providing isolated environments for running applications, their underlying architectures and operational principles differ significantly. This distinction is crucial for understanding their respective strengths and optimal use cases.

| Feature | Containers | Virtual Machines (VMs) |
|---|---|---|
| Architecture | Containers share the host system’s kernel but isolate application processes. They do not require a full operating system for each instance, making them lighter and faster to initialize. | Each VM includes not only the application and its dependencies but also an entire guest operating system. This OS runs on virtual hardware provided by a hypervisor, which runs either directly on the physical hardware or on top of a host OS. VMs offer strong isolation from each other and from the host, providing enhanced security and control. |
| Resource Management | Containers are highly efficient and consume fewer resources because they share the host’s kernel and only require the application and its runtime environment. This leads to better hardware utilization. | The necessity of running a full OS in each VM leads to higher resource consumption (CPU, RAM, storage). This can result in less efficient utilization of the underlying hardware, especially when running many VMs. |
| Performance | Near-native performance due to minimal overhead. Startup typically takes seconds or less, because no guest OS has to boot. | Performance is affected by the overhead of the guest OS and the hypervisor. Startup often takes from several seconds to a few minutes, because a full guest OS must boot. |
| Portability | Highly portable; a container image built on one system can run on any host with a compatible container runtime, kernel, and CPU architecture (multi-architecture images address the last point). | Less portable; VM images are often tied to specific hypervisor platforms and hardware architectures, requiring conversion or reconfiguration for migration. |
| Isolation Level | Process-level isolation. While robust, vulnerabilities in the shared kernel could potentially impact multiple containers. | Full OS and hardware-level isolation. Provides a higher degree of security and fault tolerance, as a compromise in one VM is unlikely to affect others or the host. |

The fundamental difference lies in the abstraction layer. VMs virtualize the hardware, allowing multiple operating systems to run on a single physical machine. Containers, on the other hand, virtualize the operating system, enabling multiple isolated application environments to run on a single OS instance. This distinction directly translates to the resource efficiency and speed advantages of containers.

The Inner Workings of Containerization

Containerizing an application means packaging it, together with its entire operating environment, into a self-contained unit. The workflow typically passes through several key stages:

- Image Definition: Developers describe the container’s environment and the application’s dependencies in a configuration file (e.g., a Dockerfile). This file serves as the set of instructions for building the container image.

- Image Building: A container engine (like Docker) reads the configuration file and creates a layered filesystem image, incorporating the specified code, libraries, and dependencies.

- Container Execution: When the application needs to run, the container engine uses the image to create a running instance of the container. This instance is an isolated process (or group of processes) that runs directly on the host OS kernel but has its own user space.

- Orchestration: For managing multiple containers, especially in complex applications, container orchestration platforms (e.g., Kubernetes) automate deployment, scaling, and management.
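The layered build in the stages above can be illustrated with a toy model of layer caching. This is a minimal Python sketch, not how any particular engine is implemented. `Layer` and `build_image` are hypothetical names, and the instruction strings are Dockerfile-style examples. Each cache key hashes only the instruction text and its parent's key, whereas real engines also hash the contents of files copied into the image.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    instruction: str  # build instruction that produced this layer
    cache_key: str    # hash of the parent layer's key plus this instruction


def build_image(instructions: list[str], cache: dict[str, Layer]) -> tuple[list[Layer], int]:
    """Build a toy layered image, reusing cached layers where possible.

    Returns the ordered list of layers and the number of layers that had to be rebuilt.
    """
    layers: list[Layer] = []
    parent_key = ""
    rebuilt = 0
    for instruction in instructions:
        # Each key depends on its parent's key, so changing one instruction
        # invalidates the cache for that layer and every layer above it.
        key = hashlib.sha256(f"{parent_key}\n{instruction}".encode()).hexdigest()
        layer = cache.get(key)
        if layer is None:
            layer = Layer(instruction, key)  # a real engine would execute the step here
            cache[key] = layer
            rebuilt += 1
        layers.append(layer)
        parent_key = key
    return layers, rebuilt


if __name__ == "__main__":
    cache: dict[str, Layer] = {}
    steps = [
        "FROM python:3.12-slim",
        "COPY requirements.txt .",
        "RUN pip install -r requirements.txt",
        "COPY app.py .",
    ]
    _, first = build_image(steps, cache)
    print(first)   # 4: nothing is cached yet
    _, second = build_image(steps[:3] + ["COPY app.py main.py"], cache)
    print(second)  # 1: only the changed final step is rebuilt
```

The parent chaining explains why Dockerfiles commonly place rarely changing steps, such as dependency installation, before frequently changing ones, such as copying application code.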

Key Components of a Container

A container is composed of several critical elements that enable its isolated and self-sufficient operation:

- Container Image: The immutable blueprint containing all the necessary code, runtime, libraries, environment variables, and configuration files for an application.

- Container Runtime: The software that executes containers, such as containerd, CRI-O, or the Docker Engine. It manages the container’s lifecycle, including starting, stopping, and monitoring.

- Operating System Kernel: Containers share the host operating system’s kernel. This is a key factor in their lightweight nature. However, each container has its own isolated user space and system libraries.

- Namespaces: These Linux kernel features provide process isolation, ensuring that each container has its own view of the system, including process IDs, network interfaces, and mount points.

- Control Groups (cgroups): Another Linux kernel feature, cgroups limit and account for the resource usage (CPU, memory, I/O, network) of processes, preventing one container from consuming all system resources and impacting others.

- Filesystem: Each container has its own writable layer on top of the read-only layers of its image, allowing for changes within the container without affecting the underlying image or other containers.

- Networking: Containers can be configured with their own network interfaces, IP addresses, and routing tables, allowing them to communicate with each other and the external network in a controlled manner.
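The filesystem entry above describes a private writable layer over shared read-only image layers, and this arrangement can be modeled in a few lines. The following is a hypothetical Python sketch. `ContainerFS` and the `WHITEOUT` marker are illustrative names, and layers are plain dictionaries mapping paths to file contents. Real union filesystems such as overlayfs record deletions with special whiteout entries on disk rather than with an in-memory marker.

```python
from typing import Optional

WHITEOUT = object()  # marks a path deleted in the writable layer


class ContainerFS:
    """Toy copy-on-write view: read-only image layers plus one writable layer."""

    def __init__(self, image_layers: list[dict[str, str]]) -> None:
        # image_layers[0] is the base layer; later layers override earlier ones.
        self._lower = image_layers
        # Private to this container; never shared with other containers.
        self._upper: dict[str, object] = {}

    def read(self, path: str) -> Optional[str]:
        # Look in the writable layer first, then image layers from top to bottom.
        for layer in [self._upper, *reversed(self._lower)]:
            if path in layer:
                value = layer[path]
                return None if value is WHITEOUT else value
        return None  # path does not exist in any layer

    def write(self, path: str, content: str) -> None:
        # Writes land only in the writable layer; image layers stay untouched.
        self._upper[path] = content

    def delete(self, path: str) -> None:
        # A file from an image layer cannot be removed there, so record a whiteout.
        self._upper[path] = WHITEOUT


if __name__ == "__main__":
    image = [{"/etc/hostname": "base", "/app/config": "v1"}, {"/app/main.py": "print('hi')"}]
    a, b = ContainerFS(image), ContainerFS(image)
    a.write("/app/config", "v2")
    a.delete("/etc/hostname")
    print(a.read("/app/config"), b.read("/app/config"))      # v2 v1
    print(a.read("/etc/hostname"), b.read("/etc/hostname"))  # None base
```

Two `ContainerFS` objects built from the same image layers can diverge freely, because each writes only to its own upper layer.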

Diverse Container Use Cases Driving Adoption

The versatility and efficiency of containers have made them indispensable across a wide spectrum of modern software development and operational paradigms.

Microservices and Cloud-Native Architectures

Containers are a natural fit for microservices, an architectural style that structures an application as a collection of small, independent services. Each microservice can be encapsulated in its own container, ensuring strict isolation, simplifying updates, and enabling independent scaling. This approach aligns perfectly with cloud-native principles, where applications are designed for dynamic, distributed environments. Orchestration tools like Kubernetes further enhance this by enabling automated deployment, scaling, and management of these microservices in the cloud, ensuring high availability and efficient resource utilization.

The adoption of microservices, often facilitated by containers, has seen a significant uptick in recent years. Industry reports from Gartner and Forrester consistently highlight the benefits of agility and resilience offered by this architectural pattern, with containerization being the enabling technology. For instance, a large e-commerce platform might break down its monolithic application into dozens of microservices, each running in its own container, allowing for faster feature rollouts and more robust handling of peak traffic.

Streamlining Continuous Integration and Continuous Deployment (CI/CD) Pipelines

Containers offer a significant advantage in CI/CD workflows by providing consistent and reproducible environments from development through to production. This consistency dramatically reduces the "it works on my machine" problem and helps identify and resolve issues earlier in the development lifecycle. Automated testing environments can be spun up rapidly using container images that precisely mirror production, ensuring that every code commit is validated in a realistic setting. This leads to more reliable deployments and accelerated release cycles. The ability to guarantee that software behaves identically across development, testing, staging, and production environments is a cornerstone of efficient CI/CD.

Data from DevOps surveys frequently indicates that organizations utilizing containers in their CI/CD pipelines report faster release cycles, fewer deployment failures, and improved developer productivity. For example, a software development team might use a containerized build environment to compile code, another for unit testing, and a third for integration testing, all based on identical images derived from the same source code.
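One way to enforce the "identical images" property described above is to reference images by digest rather than by tag. A tag such as `latest` can be moved to new content, but a digest always identifies the same content. The following Python sketch checks a hypothetical pipeline definition, represented as a plain mapping from stage name to image reference. The function name, stage names, and registry host are illustrative assumptions, and real CI systems each have their own configuration format.

```python
import re

# A digest-pinned reference ends with "@sha256:" and 64 lowercase hex characters.
DIGEST_RE = re.compile(r"@(sha256:[0-9a-f]{64})$")


def check_pipeline_images(stages: dict[str, str]) -> list[str]:
    """Report stages whose images are not pinned, or that use different images."""
    problems: list[str] = []
    digests: set[str] = set()
    for stage, ref in stages.items():
        match = DIGEST_RE.search(ref)
        if match is None:
            # Mutable tags can resolve to different content on different runs.
            problems.append(f"{stage}: {ref!r} is not pinned by digest")
        else:
            digests.add(match.group(1))
    if len(digests) > 1:
        problems.append(f"stages use {len(digests)} different image digests")
    return problems


if __name__ == "__main__":
    digest = "sha256:" + "0" * 64  # placeholder digest for illustration
    pipeline = {
        "build": f"registry.example.com/shop/app@{digest}",
        "unit-test": f"registry.example.com/shop/app@{digest}",
        "integration-test": "registry.example.com/shop/app:latest",
    }
    for problem in check_pipeline_images(pipeline):
        print(problem)
```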

Application Packaging and Distribution

The inherent nature of containers to bundle an application with all its dependencies makes them an ideal solution for packaging and distributing software. This portability ensures that applications can be deployed across diverse platforms and cloud environments without requiring significant modifications. Container registries act as centralized repositories for storing and managing container images, allowing for easy retrieval and deployment of specific versions. This capability is critical for maintaining application stability and enabling swift rollbacks to previous, stable versions if issues arise with new deployments.

This standardized packaging simplifies the software supply chain, making it easier for organizations to consume and deploy third-party applications. Imagine a scenario where a critical business application is delivered as a container image; deployment becomes a matter of pulling the image and running it, rather than navigating complex installation scripts and dependency conflicts.
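Pulling a specific version from a registry starts with an image reference of the form `[registry/]repository[:tag][@digest]`. The Python sketch below splits such a reference using simplified versions of the defaulting rules Docker applies:

- A first path component containing `.` or `:`, or equal to `localhost`, is treated as a registry host. Otherwise Docker Hub (`docker.io`) is assumed.
- Single-name repositories get the `library/` prefix.
- A missing tag defaults to `latest` unless a digest is given.

The sketch skips the character and length validation that real clients perform. `ImageRef` and `parse_image_ref` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional

DEFAULT_REGISTRY = "docker.io"


@dataclass(frozen=True)
class ImageRef:
    registry: str
    repository: str
    tag: Optional[str]
    digest: Optional[str]


def parse_image_ref(ref: str) -> ImageRef:
    """Split an image reference into registry, repository, tag, and digest."""
    digest: Optional[str] = None
    if "@" in ref:
        ref, digest = ref.split("@", 1)

    tag: Optional[str] = None
    # Only a ':' after the last '/' starts a tag; an earlier one is a registry port.
    colon = ref.rfind(":")
    if colon > ref.rfind("/"):
        ref, tag = ref[:colon], ref[colon + 1:]

    first, _, rest = ref.partition("/")
    if rest and ("." in first or ":" in first or first == "localhost"):
        registry, repository = first, rest
    else:
        registry, repository = DEFAULT_REGISTRY, ref
        if "/" not in repository:
            repository = "library/" + repository  # Docker Hub's official-image namespace

    if tag is None and digest is None:
        tag = "latest"
    return ImageRef(registry, repository, tag, digest)


if __name__ == "__main__":
    print(parse_image_ref("nginx:1.25"))
    print(parse_image_ref("registry.example.com:5000/team/app@sha256:" + "0" * 64))
```

Pinning by digest is what makes rollbacks exact. Redeploying an earlier digest retrieves the same image content, whereas a tag may have been re-pointed since.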

The Compelling Advantages of Containerization

The widespread adoption of containerization is a testament to its numerous benefits, which resonate across development, operations, and business objectives.

- Enhanced Portability: Containers run consistently across different environments, from laptops to data centers to public clouds, eliminating "it works on my machine" issues.

- Increased Efficiency: By sharing the host OS kernel, containers require fewer resources than VMs, leading to higher server utilization and lower infrastructure costs.

- Faster Deployment: Containers start significantly faster than VMs, enabling rapid application deployment and scaling.

- Improved Scalability: Containers can be easily replicated and orchestrated to handle fluctuating workloads, ensuring applications remain responsive.

- Simplified Development: Developers can build and test applications in isolated, consistent environments that mirror production.

- Greater Agility: The modular nature of containerized applications (e.g., microservices) allows for faster iteration and feature delivery.

- Cost Savings: Reduced infrastructure needs and improved resource utilization can lead to substantial cost reductions.

- Isolation and Security: Containers provide process-level isolation, enhancing security by preventing interference between applications.

- Consistency: Ensures that applications behave the same way regardless of the underlying infrastructure.

- Resource Optimization: Fine-grained control over resource allocation through cgroups.

- Simplified Management: Orchestration tools automate complex deployment and management tasks.

- Faster Rollbacks: Easy to revert to previous versions of an application image if issues arise.

- Ecosystem Support: A vast and growing ecosystem of tools and services supports containerization.
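The cgroups-based resource control listed above ultimately works by writing values into files under the container's cgroup directory. This Python sketch only computes those values, assuming the cgroup v2 unified hierarchy:

- `cpu.max` holds a quota and a period in microseconds, where `max` means unlimited.
- `memory.max` holds a byte limit.

The function name is hypothetical, and the 100 ms period matches the kernel's default. A real runtime writes these files itself, which requires appropriate privileges. This is roughly the translation behind Docker's `--cpus` and `--memory` flags.

```python
from typing import Optional

CPU_PERIOD_US = 100_000  # 100 ms scheduling period, the cgroup v2 default


def cgroup_v2_limits(cpus: Optional[float], memory_mib: Optional[int]) -> dict[str, str]:
    """Translate container-style limits into cgroup v2 interface file values.

    Returns a mapping from file name (inside the container's cgroup directory)
    to the string a runtime would write there. Nothing is written to disk.
    """
    limits: dict[str, str] = {}
    if cpus is None:
        limits["cpu.max"] = f"max {CPU_PERIOD_US}"
    else:
        quota = round(cpus * CPU_PERIOD_US)  # CPU time allowed per period
        limits["cpu.max"] = f"{quota} {CPU_PERIOD_US}"
    if memory_mib is None:
        limits["memory.max"] = "max"
    else:
        limits["memory.max"] = str(memory_mib * 1024 * 1024)  # MiB to bytes
    return limits


if __name__ == "__main__":
    print(cgroup_v2_limits(1.5, 512))  # {'cpu.max': '150000 100000', 'memory.max': '536870912'}
```

A limit of 1.5 CPUs therefore means the container's processes may use up to 150 ms of CPU time in every 100 ms period, spread across cores.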

Navigating the Challenges and Considerations of Containerization

Despite its significant advantages, the implementation and management of containerized environments present their own set of challenges.

Security Considerations

While containers offer isolation, they are not immune to security vulnerabilities. Key concerns include:

- Kernel Exploits: A vulnerability in the shared host kernel could potentially affect all containers running on that host.

- Image Vulnerabilities: Container images may contain outdated or vulnerable software packages if not regularly scanned and updated.

- Insecure Configurations: Misconfigurations in container settings, network policies, or access controls can create security loopholes.

- Privilege Escalation: If a container is compromised, an attacker might attempt to escalate privileges to gain access to the host system.

- Secrets Management: Securely managing sensitive information like passwords and API keys within containers is crucial.

Organizations must implement robust security practices, including regular vulnerability scanning of images, least privilege principles for container execution, network segmentation, and secure secrets management solutions.
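Several of these practices lend themselves to automated checks. The Python sketch below audits a simplified container spec for a few common misconfigurations. The field names `privileged`, `runAsNonRoot`, `runAsUser`, `allowPrivilegeEscalation`, and `readOnlyRootFilesystem` are borrowed from Kubernetes' `securityContext`. The overall spec shape, including `env` as a plain name-to-value mapping, is a simplification, and `audit_container` is a hypothetical helper. Dedicated policy engines and image scanners cover far more cases.

```python
# Environment variable names that suggest a secret is being passed in plain text.
SECRET_HINTS = ("PASSWORD", "SECRET", "TOKEN", "API_KEY")


def audit_container(spec: dict) -> list[str]:
    """Flag common insecure settings in a simplified container spec."""
    findings: list[str] = []
    ctx = spec.get("securityContext", {})
    if ctx.get("privileged", False):
        findings.append("privileged mode gives the container broad access to the host")
    if ctx.get("runAsUser") == 0 or not ctx.get("runAsNonRoot", False):
        findings.append("container may run as root; require a non-root user")
    # Treat a missing value as permissive, the conservative assumption.
    if ctx.get("allowPrivilegeEscalation", True):
        findings.append("privilege escalation is not explicitly disabled")
    if not ctx.get("readOnlyRootFilesystem", False):
        findings.append("root filesystem is writable")
    for name in spec.get("env", {}):
        if any(hint in name.upper() for hint in SECRET_HINTS):
            findings.append(f"env var {name} looks like a secret; use a secrets manager")
    return findings


if __name__ == "__main__":
    risky = {"securityContext": {"privileged": True}, "env": {"DB_PASSWORD": "hunter2"}}
    for finding in audit_container(risky):
        print("-", finding)
```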

Management Complexity

As container deployments scale, managing them can become increasingly complex:

- Orchestration Overheads: While tools like Kubernetes automate many tasks, they themselves require skilled personnel to set up, configure, and maintain.

- Monitoring and Logging: Aggregating logs and monitoring the health of numerous distributed containers can be challenging.

- Networking Complexity: Designing and managing complex container networking topologies requires specialized knowledge.

- Stateful Applications: Managing persistent data for stateful applications within a containerized environment requires careful planning.

Effective management often relies on robust orchestration platforms, comprehensive monitoring solutions, and well-defined operational procedures.
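Log aggregation, one of the monitoring challenges above, often comes down to merging many per-container streams into a single timeline. This Python sketch makes two assumptions:

- Each line begins with an ISO 8601 timestamp that includes a UTC offset.
- Each container's lines are already in time order.

`read_stream` and `aggregate` are hypothetical names. In production, a dedicated log collector usually handles this job rather than hand-written code.

```python
import heapq
from datetime import datetime
from typing import Iterable, Iterator

LogLine = tuple[datetime, str, str]  # (timestamp, container name, message)


def read_stream(container: str, lines: Iterable[str]) -> Iterator[LogLine]:
    """Parse 'timestamp message' lines from one container's output."""
    for line in lines:
        stamp, _, message = line.partition(" ")
        yield datetime.fromisoformat(stamp), container, message


def aggregate(streams: dict[str, list[str]]) -> list[str]:
    """Merge per-container logs (each already time-ordered) into one timeline."""
    parsed = [read_stream(name, lines) for name, lines in streams.items()]
    merged = heapq.merge(*parsed, key=lambda entry: entry[0])
    return [f"{ts.isoformat()} [{name}] {msg}" for ts, name, msg in merged]


if __name__ == "__main__":
    streams = {
        "web": ["2024-05-01T12:00:00+00:00 GET /", "2024-05-01T12:00:02+00:00 GET /cart"],
        "db": ["2024-05-01T12:00:01+00:00 query ok"],
    }
    for line in aggregate(streams):
        print(line)
```

`heapq.merge` takes advantage of each stream already being sorted. It interleaves the streams in a single pass instead of re-sorting every line.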

Integration with Existing Systems

Integrating modern container technologies with legacy IT infrastructure can be a significant undertaking:

- Hybrid Cloud Challenges: Deploying and managing containers across on-premises data centers and multiple public clouds requires careful architecture and tooling.

- Legacy Application Modernization: Migrating monolithic, legacy applications to containerized microservices can be a complex and time-consuming process.

- Skills Gap: Finding and retaining personnel with expertise in containerization technologies and cloud-native architectures can be difficult.

- Cultural Shifts: Adopting containerization often necessitates a shift in organizational culture towards DevOps practices and agile methodologies.

Organizations must approach integration strategically, often employing a phased approach and investing in training and upskilling their IT teams.

Leading Container Technologies Shaping the Landscape

The containerization ecosystem is rich with powerful tools and platforms that have driven its widespread adoption.

Docker

Docker has been a pivotal force in popularizing containerization, making it accessible and standardized. It provides a comprehensive platform for building, shipping, and running containerized applications.

- Key Features:

    - Container Images: Immutable, layered images built from Dockerfiles.

    - Docker Engine: The core runtime that builds and runs containers.

    - Docker Hub: A cloud-based registry for sharing container images.

    - Docker Compose: A tool for defining and running multi-container Docker applications.

- Benefits: Ease of use, strong community support, extensive documentation, and a rich ecosystem of third-party integrations.
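Docker Compose starts services in dependency order based on each service's `depends_on` entries. The Python sketch below reproduces only that ordering step for a Compose-style `services` mapping, as it might look after parsing the YAML file. It uses the standard library's `graphlib`. `startup_order` is a hypothetical helper, not part of Compose. Compose itself does more: by default it waits only for dependencies to start, not to be ready, unless a condition such as `service_healthy` is specified.

```python
from graphlib import CycleError, TopologicalSorter


def startup_order(services: dict[str, dict]) -> list[str]:
    """Order services so that each one starts after everything it depends on."""
    # depends_on may be a list (short syntax) or a mapping (long syntax);
    # iterating over either yields the dependency names.
    graph = {name: set(spec.get("depends_on", [])) for name, spec in services.items()}
    return list(TopologicalSorter(graph).static_order())


if __name__ == "__main__":
    services = {
        "web": {"image": "example/web", "depends_on": ["api"]},
        "api": {"image": "example/api", "depends_on": {"db": {"condition": "service_healthy"}}},
        "db": {"image": "postgres:16"},
    }
    print(startup_order(services))  # ['db', 'api', 'web']
    try:
        startup_order({"a": {"depends_on": ["b"]}, "b": {"depends_on": ["a"]}})
    except CycleError as err:
        print("dependency cycle:", err.args[1])
```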

Kubernetes

Kubernetes, often abbreviated as K8s, has emerged as the de facto standard for container orchestration. It automates the deployment, scaling, and management of containerized applications at scale.

- Key Features:

    - Automated Deployment and Rollouts: Manages the deployment lifecycle of applications.

    - Service Discovery and Load Balancing: Automatically exposes containers to the network and balances traffic.

    - Self-Healing: Restarts failed containers, replaces and reschedules containers when nodes die.

    - Storage Orchestration: Allows mounting of various storage systems.

    - Secret and Configuration Management: Manages sensitive information and application configurations.

- Benefits: Highly scalable, resilient, portable across different infrastructures, and supported by a vast community and vendor ecosystem.
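Self-healing and scaling in Kubernetes follow a reconciliation pattern. Controllers repeatedly compare the desired state declared for an object with the observed state of the cluster and act to close the gap. The Python sketch below shows one pass of such a loop for replica counts. It is a toy: `Deployment`, `Cluster`, and `reconcile` are hypothetical stand-ins. Real Kubernetes controllers work through the API server, watch for changes, and delegate pod management to ReplicaSets.

```python
from dataclasses import dataclass, field


@dataclass
class Deployment:
    name: str
    replicas: int  # desired number of running instances


@dataclass
class Cluster:
    # Observed state: deployment name -> names of its running pods.
    running: dict[str, list[str]] = field(default_factory=dict)
    counter: int = 0

    def start(self, deployment: str) -> str:
        self.counter += 1
        pod = f"{deployment}-{self.counter}"
        self.running.setdefault(deployment, []).append(pod)
        return pod

    def stop(self, deployment: str) -> str:
        return self.running[deployment].pop()  # remove the newest pod


def reconcile(desired: Deployment, cluster: Cluster) -> list[str]:
    """One pass of a control loop: move observed state toward desired state."""
    actions: list[str] = []
    observed = len(cluster.running.get(desired.name, []))
    for _ in range(desired.replicas - observed):  # too few: start pods
        actions.append(f"start {cluster.start(desired.name)}")
    for _ in range(observed - desired.replicas):  # too many: stop pods
        actions.append(f"stop {cluster.stop(desired.name)}")
    return actions


if __name__ == "__main__":
    cluster = Cluster()
    web = Deployment("web", replicas=3)
    print(reconcile(web, cluster))  # ['start web-1', 'start web-2', 'start web-3']
    cluster.running["web"].remove("web-2")  # simulate a crashed pod
    print(reconcile(web, cluster))  # ['start web-4']
```

Because each pass only compares counts, the same loop handles both a crashed pod and a deliberate change to `replicas`.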

Other Notable Container Technologies

Beyond Docker and Kubernetes, several other technologies contribute to the containerization landscape:

- containerd: An industry-standard container runtime that emphasizes simplicity, robustness, and portability. It is the runtime underlying Docker Engine and a common choice of runtime on Kubernetes nodes.

- CRI-O: A lightweight container runtime specifically designed for Kubernetes, focusing on implementing the Kubernetes Container Runtime Interface (CRI).

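- Podman: A daemonless container engine for developing, managing, and running OCI (Open Container Initiative) containers on Linux systems. Its command-line interface is largely compatible with Docker’s. Its daemonless design and support for running containers without root privileges are often cited as security advantages over Docker’s traditional daemon-based model.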

- LXC/LXD: Linux Containers (LXC) provide OS-level virtualization, while LXD builds on LXC to offer a more user-friendly experience with features like system containers and remote management.

Future Trajectories of Containerization

The evolution of containerization is far from over; it continues to be shaped by emerging technologies and evolving industry standards.

Integration with Emerging Technologies

Containerization is increasingly becoming a foundational element for integrating with cutting-edge fields:

- Artificial Intelligence (AI) and Machine Learning (ML): Containers provide isolated and reproducible environments for training and deploying AI/ML models, simplifying dependency management and enabling seamless scaling of compute resources. Platforms like Kubeflow leverage Kubernetes for ML workflows.

- Internet of Things (IoT): Lightweight containers can be deployed on edge devices, enabling consistent application deployment and management in distributed IoT environments.

- Serverless Computing: Containerization plays a role in serverless platforms, allowing developers to package functions as containers that can be dynamically scaled by the cloud provider.

- WebAssembly (Wasm): The growing interest in WebAssembly as a secure and performant runtime environment for the web and beyond suggests potential synergies with containerization for portable, sandboxed execution.

Evolution of Container Standards and Regulations

As containerization matures and its adoption deepens, the development of robust standards and regulatory frameworks is becoming paramount:

- Open Container Initiative (OCI): The OCI, an industry standards body operating under the Linux Foundation, defines specifications for container image formats, runtimes, and image distribution, ensuring interoperability between different container technologies.

- Security Standards: Increasing focus on standardized security practices for container images, registries, and runtime environments, including compliance frameworks and certification programs.

- Regulatory Compliance: As containers are used in regulated industries (e.g., finance, healthcare), there is a growing need for solutions that meet specific compliance requirements, such as data privacy and auditability.

- Supply Chain Security: Enhanced measures to secure the container supply chain, from image creation to deployment, to prevent malicious code injection.
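Content addressing is one of the mechanisms the OCI image specification provides for supply chain integrity. Manifests refer to layers and configuration by a digest of the form `algorithm:hex`, so a client can detect tampered or corrupted downloads by rehashing what it received. The Python sketch below checks a blob against such a digest. It supports only `sha256`, the most widely used algorithm, and `verify_blob` is a hypothetical name. Digest verification proves integrity relative to a trusted manifest, but it does not by itself establish who published the image.

```python
import hashlib


def verify_blob(blob: bytes, digest: str) -> bool:
    """Return True if blob's SHA-256 hash matches a digest like 'sha256:<hex>'."""
    algorithm, sep, expected = digest.partition(":")
    if not sep or algorithm != "sha256":
        raise ValueError(f"unsupported or malformed digest: {digest!r}")
    return hashlib.sha256(blob).hexdigest() == expected


if __name__ == "__main__":
    layer = b"example layer contents"
    digest = "sha256:" + hashlib.sha256(layer).hexdigest()  # as a manifest would record it
    print(verify_blob(layer, digest))         # True
    print(verify_blob(layer + b"!", digest))  # False: content was altered
```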

The Bottom Line: The Indispensable Role of Containers

Containers have fundamentally reshaped the software development and deployment landscape, offering unparalleled efficiency, scalability, and consistency. As these technologies continue to advance and integrate with new innovations, their role is set to become even more central, driving advancements and efficiencies across diverse sectors.

Looking ahead, the potential of containerization is vast. Its inherent ability to seamlessly integrate with future technological advancements and adapt to evolving regulatory landscapes positions it as a cornerstone of digital transformation strategies. Organizations that effectively harness the power of container technologies will undoubtedly be at the vanguard of innovation, better equipped to navigate the complexities and seize the opportunities of a rapidly transforming digital world.

For those seeking to deepen their understanding, exploring the nuances of virtual machines offers valuable comparative context. Additionally, familiarizing oneself with leading virtualization companies can aid in selecting the most suitable infrastructure solutions for diverse IT needs.