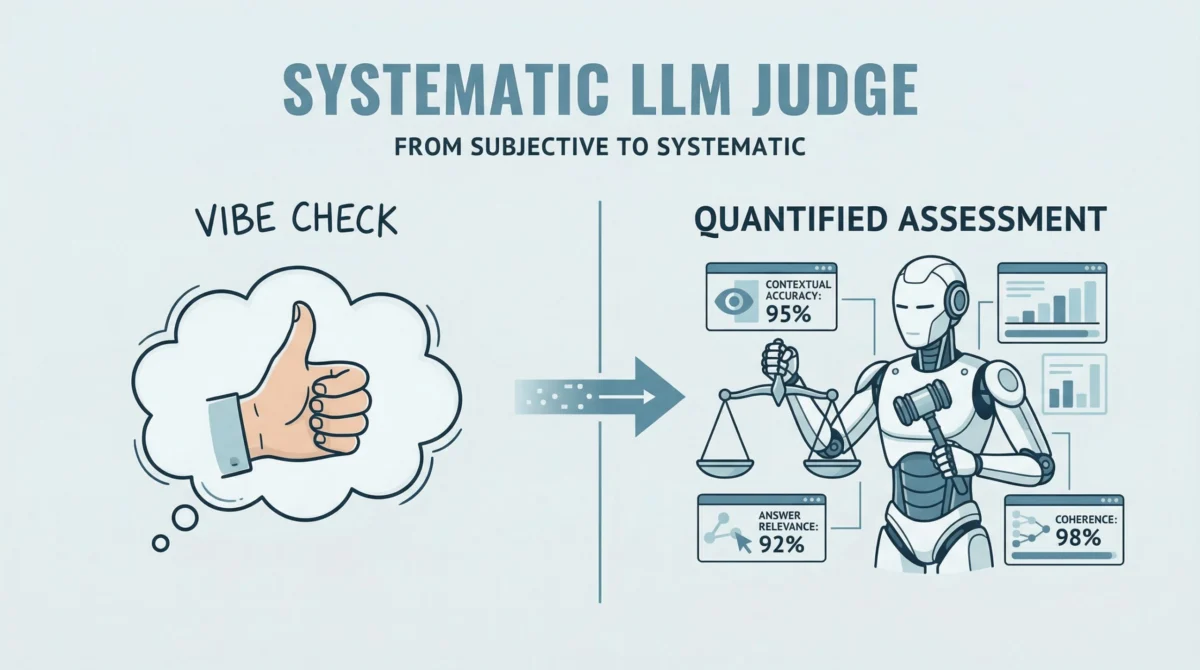

The rapid growth of large language models (LLMs) and agent-based applications has expanded what AI systems can do, and with it the need for robust, systematic evaluation. Subjective assessments, often dubbed "vibe checks," are inadequate for ensuring the reliability, accuracy, and safety of these systems in production. This article walks through a practical, hands-on workflow for evaluating LLM applications, particularly those built on Retrieval-Augmented Generation (RAG) architectures and agentic designs, using RAGAs (Retrieval-Augmented Generation Assessment) and G-Eval-based frameworks.

The Evolving Landscape of LLM Evaluation: From Anecdote to Algorithm

The rapid proliferation of LLMs across various industries, from customer service chatbots to complex analytical agents, underscores a fundamental challenge: how do we quantitatively and qualitatively assess their performance? Early interactions with LLMs often relied on human judgment, where developers or users would manually review outputs for correctness, coherence, and relevance. While intuitive, this approach is inherently subjective, non-scalable, and prone to human bias and fatigue. As LLMs moved beyond experimental prototypes into critical applications, the demand for objective, automated, and reproducible evaluation methods became paramount.

The development of RAG architectures further complicated evaluation. RAG models combine the generative power of LLMs with a retrieval component, allowing them to access external knowledge bases to ground their responses. This design mitigates common LLM issues like hallucination but introduces new evaluation dimensions: how well does the system retrieve relevant information, and how accurately does it synthesize that information into its answer? These questions cannot be answered by merely checking the final output; they require an assessment of the underlying processes.

RAGAs: Quantifying Retrieval-Augmented Generation Quality

RAGAs emerged as a pivotal open-source evaluation framework designed to address these specific challenges in RAG pipelines. It fundamentally shifts the paradigm from subjective human review to a systematic, LLM-driven "judge." This "judge" quantifies the quality of RAG outputs by assessing a triad of desirable properties: contextual accuracy, answer relevance, and faithfulness.

- Faithfulness: This metric assesses whether the generated answer is factually supported by the provided context. A high faithfulness score indicates that the LLM has not hallucinated information or introduced external facts not present in the retrieved documents. This is crucial for applications where factual accuracy is non-negotiable, such as legal or medical information systems.

- Answer Relevancy: This measures how well the generated answer directly addresses the user’s question, without including superfluous or irrelevant information. An answer might be factually correct but still score low on relevancy if it deviates from the core query.

- Contextual Accuracy (Precision/Recall of Context): Although the code examples in this article do not demonstrate them, RAGAs also offers metrics for the quality of the retrieved context itself. Context Precision measures how much of the retrieved context is relevant to the question, while Context Recall assesses whether all the information needed to answer the question was retrieved. These metrics are vital for tuning the retrieval component of a RAG system.
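To make the two context metrics concrete, here is a minimal sketch of the arithmetic behind them. It is a simplification, not RAGAs' own implementation: the library obtains relevance verdicts from an LLM judge and may weight them differently (for example by rank), whereas the verdict lists below are hypothetical, hand-written values.

```python
def context_precision(chunk_is_relevant: list[bool]) -> float:
    """Fraction of retrieved chunks that were judged relevant to the question."""
    if not chunk_is_relevant:
        return 0.0
    return sum(chunk_is_relevant) / len(chunk_is_relevant)


def context_recall(fact_was_retrieved: list[bool]) -> float:
    """Fraction of the facts needed for the answer that appear in the retrieved context."""
    if not fact_was_retrieved:
        return 0.0
    return sum(fact_was_retrieved) / len(fact_was_retrieved)


# Hypothetical verdicts for a single question (assumed, not produced by a real judge):
# three chunks were retrieved and two of them are relevant.
chunk_verdicts = [True, False, True]
# The reference answer needs four facts and three of them were retrieved.
fact_verdicts = [True, True, True, False]

precision = context_precision(chunk_verdicts)
recall = context_recall(fact_verdicts)
print(f"context precision = {precision:.2f}, context recall = {recall:.2f}")
```

Low precision with high recall suggests the retriever returns too much noise; the reverse suggests it is missing relevant documents.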

By providing quantitative scores for these dimensions, RAGAs replaces vague "vibe checks" with concrete, interpretable data points, enabling developers to systematically identify weaknesses in their RAG pipelines and iterate on improvements. The framework’s flexibility has also allowed it to evolve beyond pure RAG architectures, extending its utility to agent-based applications where the LLM might interact with multiple tools or perform multi-step reasoning.

G-Eval and DeepEval: Customizing Qualitative Assessment for Agentic Systems

While RAGAs excels at objective, fact-based evaluation, many aspects of LLM performance are inherently qualitative. Attributes like coherence, clarity, conciseness, tone, and professionalism often define the user experience and overall utility of an AI application. This is where frameworks based on G-Eval become indispensable.

G-Eval is an LLM-as-a-judge technique: it uses an LLM as the evaluator and lets developers define custom, interpretable evaluation criteria. Instead of relying on predefined metrics, G-Eval prompts the judge model to evaluate an output against specific instructions and return a score along with its reasoning. This "reasoning-and-scoring" approach offers a nuanced qualitative assessment that is difficult to achieve with purely quantitative methods.
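The judging step can be sketched in plain Python. The example below assumes a 1 to 5 rating scale and a judge that exposes a probability for each candidate score, which is then combined into a probability-weighted score and rescaled to the 0 to 1 range. The prompt wording, the function names, and the probabilities are all illustrative assumptions, not the API of any particular library.

```python
def build_judge_prompt(criteria: str, question: str, answer: str) -> str:
    """Assemble a judge prompt from custom criteria (hypothetical wording)."""
    return (
        "You are an impartial evaluator.\n"
        f"Criteria: {criteria}\n"
        f"Question: {question}\n"
        f"Answer: {answer}\n"
        "Rate the answer from 1 (poor) to 5 (excellent) and explain your reasoning."
    )


def weighted_score(score_probs: dict[int, float]) -> float:
    """Probability-weighted average of the candidate scores."""
    total = sum(score_probs.values())
    return sum(score * prob for score, prob in score_probs.items()) / total


def normalize(score: float, low: int = 1, high: int = 5) -> float:
    """Rescale a score from the [low, high] scale to the range 0 to 1."""
    return (score - low) / (high - low)


prompt = build_judge_prompt(
    criteria="Is the answer easy to follow and logically structured?",
    question="How do I reset my password?",
    answer="Go to settings and click 'forgot password'.",
)

# Hypothetical probabilities the judge assigned to each rating (assumed values).
probs = {1: 0.0, 2: 0.05, 3: 0.15, 4: 0.5, 5: 0.3}
raw = weighted_score(probs)
print(f"weighted score = {raw:.2f}, normalized = {normalize(raw):.2f}")
```

Weighting by probability gives a finer-grained score than taking the single most likely rating, which matters when many outputs would otherwise tie at the same integer.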

DeepEval, specifically, integrates multiple evaluation metrics, including G-Eval-based custom criteria, into a unified testing sandbox. This platform streamlines the process of combining both objective and qualitative evaluations, offering a holistic view of an LLM’s performance. For agent-based applications, where the LLM might engage in complex reasoning, planning, and tool execution, G-Eval’s flexibility in defining custom metrics is particularly valuable. For example, an agent might need to be evaluated not just on the factual correctness of its final answer but also on the logical flow of its reasoning steps or its ability to gracefully handle ambiguous queries.

Practical Implementation: A Step-by-Step Workflow for Comprehensive Evaluation

Implementing a robust evaluation pipeline involves several key stages, from setting up the environment to interpreting the results. The following sections outline a practical workflow with example code for each stage.

1. Setting Up the Environment and a Basic Agent

The first step in any evaluation workflow is to define the system under test. For simplicity, we begin with a basic LLM agent that takes a user query and generates a response using an LLM API, such as OpenAI’s gpt-3.5-turbo.

    import os  # For API key management

    import openai


    def simple_agent(query):
        """
        A simplified LLM agent that generates a response based on a user query.

        In a real-world scenario, this would include complex reasoning,
        tool integration, and prompt engineering.
        """
        # Ensure API key is set
        if "OPENAI_API_KEY" not in os.environ:
            raise ValueError("OPENAI_API_KEY environment variable not set.")

        # NOTE: this is a 'mock' agent loop.
        # In a real scenario, you would use a system prompt to define tool usage,
        # manage conversation history, and potentially retrieve external information.
        prompt = f"You are a helpful assistant. Answer the user query: {query}"

        # Example using OpenAI (this can be swapped for Gemini or another provider)
        try:
            response = openai.chat.completions.create(
                model="gpt-3.5-turbo",
                messages=[{"role": "user", "content": prompt}],
            )
            return response.choices[0].message.content
        except Exception as e:
            print(f"Error calling OpenAI API: {e}")
            return "An error occurred while generating the response."


    # Example usage (requires API key)
    # os.environ["OPENAI_API_KEY"] = "YOUR_API_KEY"
    # agent_response = simple_agent("What is the capital of France?")
    # print(agent_response)

While this simple_agent is intentionally basic, its structure allows us to focus on the evaluation aspects. In a production setting, this agent would incorporate sophisticated prompt engineering, external tool calls (e.g., search engines, databases), memory management for conversational context, and potentially multi-step reasoning capabilities. The evaluation frameworks discussed here are designed to scale to such complex agents.
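Before any metric can run, the agent's outputs have to be captured as records. The sketch below shows one way to do that, assuming any callable that maps a query string to an answer string. The helper `collect_records` and the `stub_agent` stand-in are hypothetical names introduced for illustration; the stub avoids API calls so the example runs offline.

```python
from typing import Callable, Optional


def collect_records(
    agent: Callable[[str], str],
    questions: list[str],
    contexts: Optional[dict[str, list[str]]] = None,
) -> list[dict]:
    """Run the agent on each question and store the result as an evaluation record."""
    contexts = contexts or {}
    records = []
    for question in questions:
        records.append(
            {
                "question": question,
                "answer": agent(question),
                # Retrieved context for this question, if the system has any.
                "contexts": contexts.get(question, []),
            }
        )
    return records


def stub_agent(query: str) -> str:
    """Offline stand-in for a real agent (assumption for this example)."""
    return f"Stub answer to: {query}"


records = collect_records(stub_agent, ["What is the capital of France?"])
print(records)
```

Passing the agent in as a callable keeps the collection step independent of the provider, so the same records can be produced from different models or prompt versions.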

2. Introducing RAGAs for Objective Metrics

With a basic agent defined, we can now introduce RAGAs to measure objective qualities like faithfulness and answer relevancy. RAGAs typically requires a dataset containing questions, generated answers, the context used (if RAG-based), and optionally, ground truth answers for more comprehensive evaluation.

Let’s start with a foundational example for a question-answering scenario:

    import os  # Only needed if you set the API key from code

    from datasets import Dataset  # RAGAs often integrates with Hugging Face Datasets
    from ragas import evaluate
    from ragas.metrics import faithfulness, answer_relevancy

    # IMPORTANT: Replace "YOUR_API_KEY" with your actual API key
    # os.environ["OPENAI_API_KEY"] = "YOUR_API_KEY"

    # Define a simple testing dataset for a question-answering scenario.
    # In a real application, this dataset would be much larger and more diverse.
    # Column names follow the schema used in this article; other RAGAs releases
    # may expect different names.
    data = {
        "question": ["What is the capital of Japan?", "Who painted the Mona Lisa?"],
        "answer": ["Tokyo is the capital.", "Leonardo da Vinci."],
        "contexts": [
            ["Japan is a country in Asia. Its capital is Tokyo."],
            ["Leonardo da Vinci was an Italian polymath known for his art."],
        ],
        "ground_truth": ["Tokyo", "Leonardo da Vinci"],  # Optional but highly recommended
    }

    # Convert dictionary to Hugging Face Dataset (required by RAGAs)
    dataset = Dataset.from_dict(data)

    # Running RAGAs evaluation with multiple metrics
    # Note: Sufficient API quota (e.g., OpenAI or Gemini) is typically required
    # for these LLM-as-a-judge evaluations.
    try:
        ragas_results = evaluate(dataset, metrics=[faithfulness, answer_relevancy])
        print("\nRAGAs Evaluation Results (Simple Example):")
        print(ragas_results)
        print(f"Faithfulness Score: {ragas_results['faithfulness']}")
        print(f"Answer Relevancy Score: {ragas_results['answer_relevancy']}")
    except Exception as e:
        print(f"Error during RAGAs evaluation: {e}. Please ensure your API key is valid and you have sufficient quota.")

The evaluate function in RAGAs uses an LLM internally to act as a judge, analyzing the provided question, answer, and context to determine the scores for each metric. The output provides a clear, quantitative assessment, which is invaluable for tracking improvements across different model versions or prompt engineering strategies.
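As a rough illustration of what the judge does for faithfulness, the score can be thought of as the share of claims in the answer that the context supports. The sketch below hard-codes the claim verdicts that an LLM judge would normally produce; the example answer and its verdicts are hypothetical, and the real metric involves additional prompting steps not shown here.

```python
def faithfulness_score(claim_supported: list[bool]) -> float:
    """Share of the answer's claims that are supported by the retrieved context."""
    if not claim_supported:
        return 0.0
    return sum(claim_supported) / len(claim_supported)


context = "Japan is a country in Asia. Its capital is Tokyo."

# Hypothetical answer split into claims, with assumed judge verdicts.
# The population figure does not appear in the context, so it is unsupported.
claims = {
    "Tokyo is the capital of Japan.": True,
    "Tokyo has 14 million residents.": False,
}

score = faithfulness_score(list(claims.values()))
print(f"faithfulness = {score:.2f}")
```

A claim can be true in the real world and still lower faithfulness, because the metric only checks support in the supplied context.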

For a more structured and reusable approach, especially for agent-based workflows, encapsulating the evaluation logic into a function is beneficial:

    # Reuses os, Dataset, evaluate, and the metrics imported in the previous example.

    # Test cases for the agent workflow:
    test_cases = [
        {
            "question": "How do I reset my password?",
            "answer": "Go to settings and click 'forgot password'. An email will be sent.",
            "contexts": ["Users can reset passwords via the Settings > Security menu."],
            "ground_truth": "Navigate to Settings, then Security, and select Forgot Password.",
        },
        {
            "question": "What are the benefits of cloud computing?",
            "answer": "Cloud computing offers scalability, cost-effectiveness, and flexibility. Users can access resources on demand.",
            "contexts": ["Cloud computing allows on-demand access to shared computing resources, providing scalability, cost savings, and flexibility."],
            "ground_truth": "Scalability, cost-effectiveness, and flexibility.",
        },
    ]


    def evaluate_ragas_agent(test_cases, openai_api_key):
        """
        Simulates a simple AI agent that performs RAGAs evaluation.

        This function takes a list of test cases and an API key,
        then runs RAGAs metrics and returns the results.
        """
        os.environ["OPENAI_API_KEY"] = openai_api_key

        # Convert test cases into a Dataset object, which RAGAs expects
        dataset = Dataset.from_list(test_cases)

        # Run evaluation with defined RAGAs metrics
        ragas_results = evaluate(dataset, metrics=[faithfulness, answer_relevancy])
        return ragas_results


    # Example of calling the evaluation function:
    my_openai_key = "YOUR_API_KEY"  # Replace with your actual API key

    if 'test_cases' in globals() and my_openai_key != "YOUR_API_KEY":  # Check if key is set
        evaluation_output = evaluate_ragas_agent(test_cases, openai_api_key=my_openai_key)
        print("\nRAGAs Evaluation Results (Encapsulated Agent Workflow):")
        print(evaluation_output)
        print(f"Average Faithfulness: {evaluation_output['faithfulness']:.2f}")
        print(f"Average Answer Relevancy: {evaluation_output['answer_relevancy']:.2f}")
    else:
        print("\nPlease define the 'test_cases' variable and set 'my_openai_key' with your actual API key to run this section.")

This encapsulated function evaluate_ragas_agent demonstrates how RAGAs can be integrated into an MLOps pipeline for continuous evaluation. Each iteration of an agent or RAG system can be tested against a consistent set of metrics, allowing for data-driven decisions on model improvements.
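One way to use such scores in a pipeline is a simple quality gate that fails the run when a metric falls below a minimum. The sketch below assumes the averaged scores have already been extracted into a plain dictionary; the helper name `check_thresholds`, the score values, and the minimums are hypothetical and would need tuning for a real project.

```python
def check_thresholds(scores: dict[str, float], minimums: dict[str, float]) -> list[str]:
    """Return one human-readable failure message per metric below its minimum."""
    failures = []
    for metric, minimum in minimums.items():
        value = scores.get(metric)
        if value is None:
            failures.append(f"{metric}: missing score")
        elif value < minimum:
            failures.append(f"{metric}: {value:.2f} is below the minimum {minimum:.2f}")
    return failures


# Assumed example values, not real evaluation output.
scores = {"faithfulness": 0.91, "answer_relevancy": 0.78}
minimums = {"faithfulness": 0.85, "answer_relevancy": 0.80}

failures = check_thresholds(scores, minimums)
if failures:
    print("Quality gate failed:")
    for message in failures:
        print(f"  {message}")
else:
    print("Quality gate passed.")
```

In a CI job, a non-empty `failures` list would typically translate into a non-zero exit code so the change is blocked until the score recovers.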

3. Integrating DeepEval for Qualitative Judgments

While RAGAs provides quantitative metrics, DeepEval with its G-Eval capabilities allows for the assessment of more subjective, qualitative attributes. This is particularly useful for aspects like the "coherence" or "professionalism" of an LLM’s output.

    import os  # Ensure API key is set

    from deepeval.metrics import GEval
    from deepeval.test_case import LLMTestCase, LLMTestCaseParams

    # IMPORTANT: Replace "YOUR_API_KEY" with your actual API key
    # os.environ["OPENAI_API_KEY"] = "YOUR_API_KEY"

    # test_cases and my_openai_key are defined in the previous example.
    if 'test_cases' in globals() and my_openai_key != "YOUR_API_KEY":
        # STEP 1: Define a custom evaluation metric using GEval
        # Here, we define a metric for 'Coherence'.
        coherence_metric = GEval(
            name="Coherence",
            criteria="Determine if the answer is easy to follow, logically structured, and free of grammatical errors.",
            evaluation_params=[LLMTestCaseParams.INPUT, LLMTestCaseParams.ACTUAL_OUTPUT],
            threshold=0.7,  # Define a pass/fail threshold for this metric (0 to 1)
        )

        # STEP 2: Create a test case for DeepEval
        # DeepEval's LLMTestCase can capture various parameters including input,
        # actual output, expected output, context, etc.
        case = LLMTestCase(
            input=test_cases[0]["question"],
            actual_output=test_cases[0]["answer"],
            # Optional: Add context or ground_truth if relevant for other DeepEval metrics
            # retrieval_context=test_cases[0]["contexts"],
            # expected_output=test_cases[0]["ground_truth"]
        )

        # STEP 3: Run evaluation using the custom GEval metric
        try:
            coherence_metric.measure(case)
            print(f"\nDeepEval G-Eval Score for Coherence: {coherence_metric.score}")
            print(f"Reasoning for Coherence Score: {coherence_metric.reason}")
            # is_successful() is a method; without the call it would always be truthy
            print(f"Did it pass the threshold? {'Yes' if coherence_metric.is_successful() else 'No'}")

            # We can define another custom metric, e.g., 'Professionalism'
            professionalism_metric = GEval(
                name="Professionalism",
                criteria="Assess if the answer maintains a formal, respectful, and professional tone suitable for a business context.",
                evaluation_params=[LLMTestCaseParams.INPUT, LLMTestCaseParams.ACTUAL_OUTPUT],
                threshold=0.8,
            )
            professionalism_metric.measure(case)
            print(f"\nDeepEval G-Eval Score for Professionalism: {professionalism_metric.score}")
            print(f"Reasoning for Professionalism Score: {professionalism_metric.reason}")
            print(f"Did it pass the threshold? {'Yes' if professionalism_metric.is_successful() else 'No'}")
        except Exception as e:
            print(f"Error during DeepEval G-Eval evaluation: {e}. Please ensure your API key is valid and you have sufficient quota.")
    else:
        print("\nPlease define the 'test_cases' variable and set 'my_openai_key' with your actual API key to run this section.")

DeepEval’s G-Eval allows for granular control over what aspects of an LLM’s output are assessed. By defining specific criteria, developers can tailor the evaluation to the precise requirements of their application. The reasoning provided by the LLM judge is particularly insightful, offering actionable feedback beyond a mere numerical score. This approach is invaluable for refining prompts, fine-tuning models, and ensuring the LLM’s outputs align with desired brand voice or operational standards.
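When the same records feed both RAGAs and DeepEval, a small adapter keeps the two in sync. The sketch below maps the dictionary keys used in this article (question, answer, contexts, ground_truth) to the keyword names that LLMTestCase accepts. The helper `to_llm_test_case_kwargs` is a hypothetical name, and it returns a plain dictionary so the example runs without DeepEval installed.

```python
def to_llm_test_case_kwargs(record: dict) -> dict:
    """Map a RAGAs-style record to keyword arguments for DeepEval's LLMTestCase."""
    kwargs = {
        "input": record["question"],
        "actual_output": record["answer"],
    }
    # Optional fields are only included when the record provides them.
    if record.get("contexts"):
        kwargs["retrieval_context"] = list(record["contexts"])
    if record.get("ground_truth"):
        kwargs["expected_output"] = record["ground_truth"]
    return kwargs


record = {
    "question": "How do I reset my password?",
    "answer": "Go to settings and click 'forgot password'. An email will be sent.",
    "contexts": ["Users can reset passwords via the Settings > Security menu."],
    "ground_truth": "Navigate to Settings, then Security, and select Forgot Password.",
}

kwargs = to_llm_test_case_kwargs(record)
print(sorted(kwargs))
```

With DeepEval available, the result could be expanded as `LLMTestCase(**kwargs)` and measured with the GEval metrics defined earlier.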

Implications for AI Development and Deployment: Building Trust and Reliability

The integration of frameworks like RAGAs and G-Eval into the LLM development lifecycle has profound implications.

- Enhanced Reliability and Trust: By systematically evaluating LLM outputs against objective and qualitative criteria, developers can significantly improve the reliability of their AI applications. This, in turn, fosters greater user trust, which is critical for the widespread adoption of AI technologies.

- Streamlined MLOps for LLMs: These frameworks facilitate robust MLOps practices for LLMs. Automated evaluation allows for continuous integration and continuous deployment (CI/CD) pipelines, where every code change or model update can be immediately assessed for regressions or improvements. This accelerates the development cycle and reduces the risk of deploying underperforming or flawed models.

- Targeted Improvements: The detailed metrics and reasoning provided by RAGAs and G-Eval enable developers to pinpoint specific areas for improvement. For instance, a low faithfulness score might indicate issues with context retrieval or how the LLM synthesizes information, while a low coherence score might point to a need for better prompt engineering or output formatting.

- Responsible AI Development: Rigorous evaluation is a cornerstone of responsible AI. Faithfulness and relevancy scores measure accuracy directly, and custom G-Eval criteria can be written for concerns such as safety or tone. Together, these frameworks help developers build AI systems that are not only performant but also accountable.

- Cost-Effectiveness: While LLM-as-a-judge evaluations consume API quota, they are often more cost-effective and scalable than extensive manual human evaluation, especially for large datasets and frequent iterations. The investment in API calls translates into faster development cycles and higher quality products.
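The regression checks mentioned above can be sketched as a comparison between a stored baseline and the scores of a candidate version. The helper `find_regressions`, the tolerance, and all score values below are hypothetical; the tolerance is there because LLM-judged scores tend to fluctuate slightly between runs.

```python
def find_regressions(
    baseline: dict[str, float],
    candidate: dict[str, float],
    tolerance: float = 0.02,
) -> dict[str, float]:
    """Return the score change for each metric that dropped by more than the tolerance."""
    regressions = {}
    for metric, old_score in baseline.items():
        new_score = candidate.get(metric)
        if new_score is not None and old_score - new_score > tolerance:
            regressions[metric] = round(new_score - old_score, 4)
    return regressions


# Assumed scores for illustration only.
baseline = {"faithfulness": 0.90, "answer_relevancy": 0.82, "coherence": 0.75}
candidate = {"faithfulness": 0.91, "answer_relevancy": 0.74, "coherence": 0.74}

regressions = find_regressions(baseline, candidate)
print(regressions)
```

Here only answer relevancy is flagged: the small coherence drop stays within the tolerance, and faithfulness improved.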

The Future of LLM Evaluation

The field of LLM evaluation is continuously evolving. Future advancements are likely to include:

- More Sophisticated Metrics: Development of new metrics to assess complex LLM behaviors, such as creativity, common sense reasoning, or adherence to complex ethical guidelines.

- Multimodal Evaluation: As LLMs become multimodal (handling text, images, audio), evaluation frameworks will need to adapt to assess cross-modal consistency and performance.

- Explainable Evaluation: Tools that not only provide scores but also explain why a particular score was given, offering deeper insights into the LLM’s decision-making process.

- Benchmarking Standards: Industry-wide adoption of standardized benchmarks and evaluation protocols to allow for more direct comparisons between different LLMs and applications.

In conclusion, the era of subjective "vibe checks" for large language models is rapidly fading. By adopting LLM-driven evaluation frameworks like RAGAs and G-Eval (integrated through platforms like DeepEval), developers can move towards a more scientific, data-driven approach to building and deploying AI applications. Combining objective metrics such as faithfulness and answer relevancy with customizable qualitative assessments such as coherence and professionalism is essential for ensuring the reliability and trustworthiness of modern AI systems.