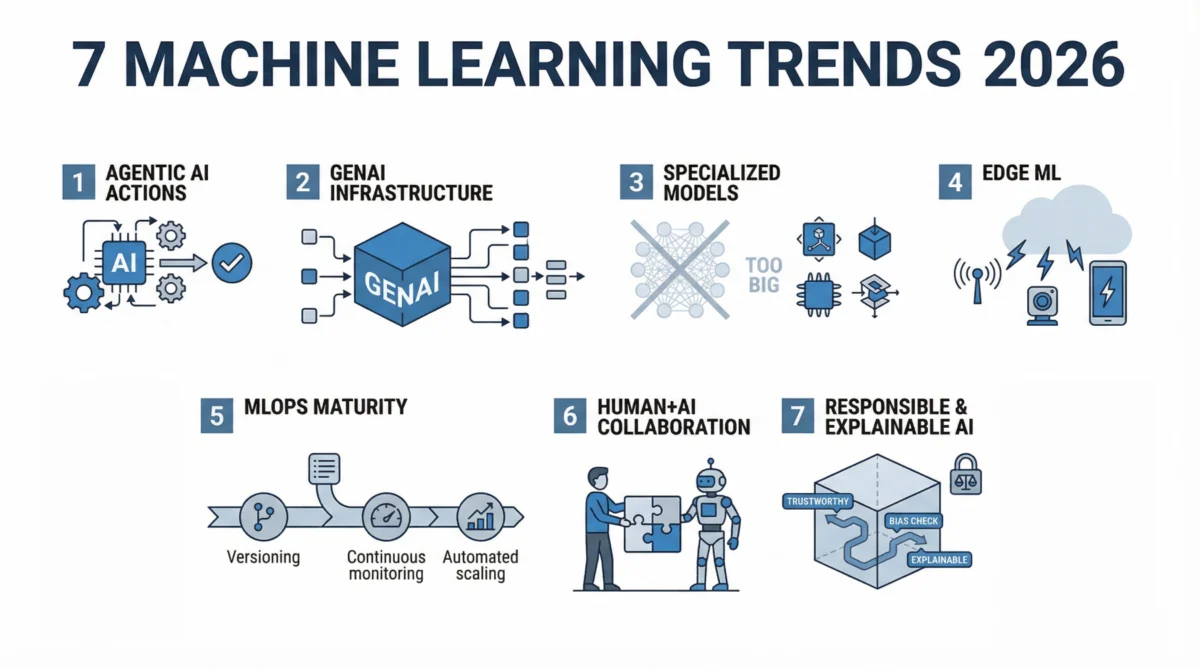

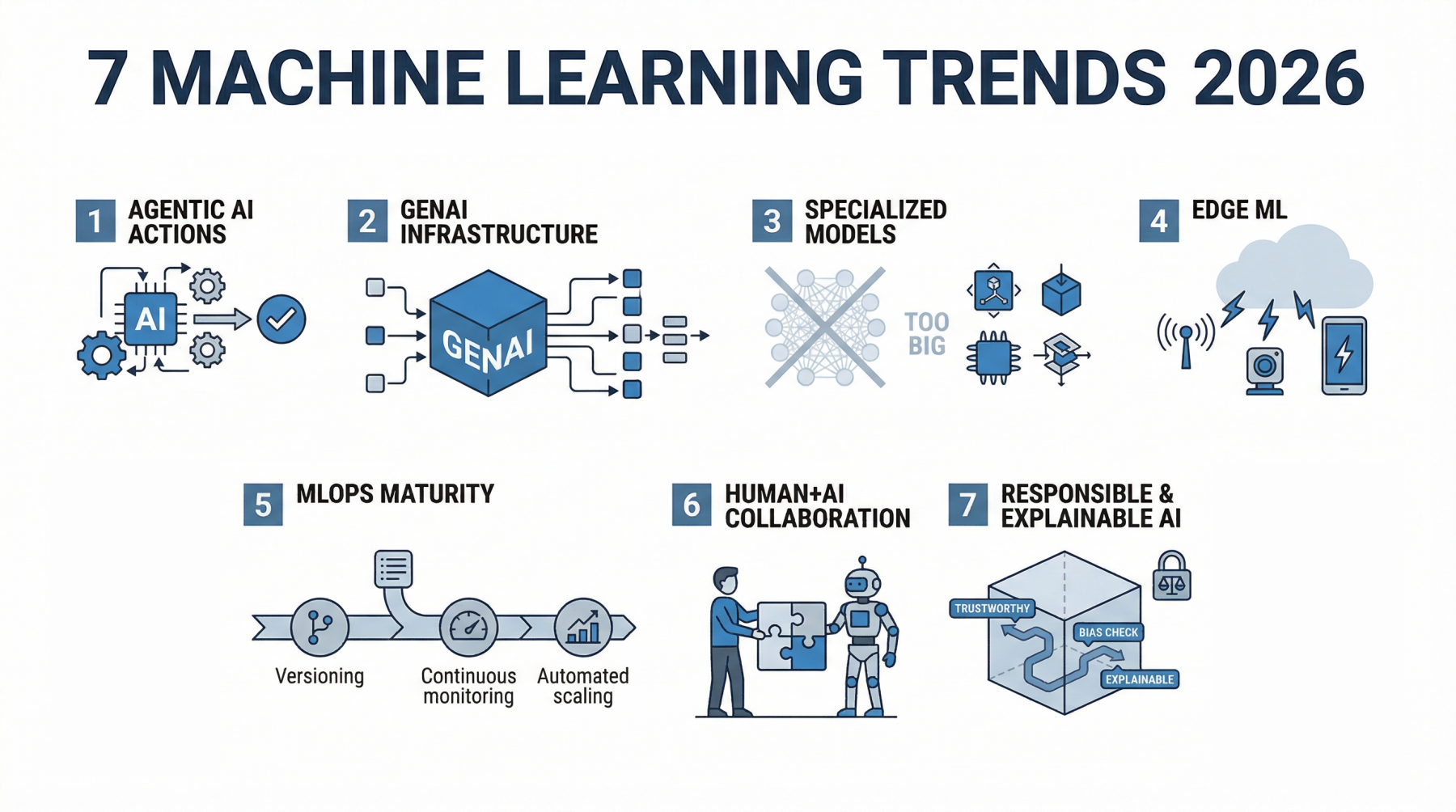

In 2026, machine learning is fundamentally redefining its role, transitioning from systems that merely predict outcomes to deeply integrated, autonomous agents that drive real-world workflows and operational decisions. This paradigm shift signifies a maturation of AI technologies, moving beyond experimental capabilities to become an indispensable component of enterprise infrastructure and daily operations.

The Evolution Towards Autonomous Intelligence

The trajectory of machine learning has seen significant evolution over the past few years. A couple of years prior, around 2023-2024, the primary focus was on demonstrating raw capability. The industry was captivated by larger models, impressive benchmark results, and groundbreaking demos. Companies rushed to integrate AI into products, often to prove technological prowess rather than to solve specific business problems efficiently. This initial phase, while exciting, often led to implementations that struggled in production environments: they were costly, challenging to maintain, and frequently disconnected from practical workflows.

However, a crucial pivot occurred in 2025 and solidified in 2026. The emphasis shifted from mere output to tangible outcomes. Machine learning systems are now designed with the expectation that they will complete tasks end-to-end, not just provide assistance. For instance, a customer support model no longer just suggests a reply; it resolves the entire ticket. A data pipeline doesn’t merely flag an anomaly; it triggers the necessary corrective actions. This subtle yet profound change dictates how entire systems are engineered and deployed. The global financial commitment to this transformation is staggering, with AI spending projected to reach $2.02 trillion by 2026. Concurrently, the machine learning market itself is projected to expand to $1.88 trillion by 2035, underscoring that these are not speculative investments but reflections of systems already deeply embedded within core business operations. Machine learning in 2026 is less about inherent power and more about seamless, functional integration into decision-making and automated processes.

Agentic AI: From Passive Assistants to Proactive Decision-Makers

One of the most impactful shifts shaping the machine learning landscape in 2026 is the rise of agentic AI. Historically, ML systems functioned as passive assistants: input was provided, an output was generated, and the responsibility for subsequent action remained with a human or an external system. This model is rapidly being superseded by agentic systems capable of planning, making autonomous decisions, and executing multi-step tasks from inception to completion.

The distinction is critical. A traditional model might predict customer churn or classify support tickets. While valuable, these are discrete outputs. An agentic system, however, extends this significantly: it identifies a high-risk customer, formulates an optimal retention strategy, drafts a personalized communication, and then initiates the outreach, all without direct human intervention at each step. The output is no longer a prediction but a completed action. Agentic systems achieve this by decomposing complex goals into smaller, manageable tasks, executing them sequentially, and adapting their approach based on real-time feedback. They can dynamically retrieve data from diverse sources, interact with APIs, generate contextually relevant responses, and refine their decisions.
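The retention scenario above can be sketched as a small step-by-step loop. This is a minimal illustration, not a production agent framework: the `Agent` and `Step` classes, the four step functions, and the inactivity rule standing in for a churn model are all hypothetical names and assumptions introduced here.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    name: str
    action: Callable[[dict], dict]  # reads the shared context, returns updates


@dataclass
class Agent:
    steps: list[Step]
    max_retries: int = 1
    log: list[str] = field(default_factory=list)

    def run(self, context: dict) -> dict:
        """Execute each step in order; retry on failure, then escalate."""
        for step in self.steps:
            for _ in range(self.max_retries + 1):
                try:
                    context = {**context, **step.action(context)}
                    self.log.append(f"{step.name}: ok")
                    break
                except Exception as exc:
                    self.log.append(f"{step.name}: failed ({exc})")
            else:
                # Every attempt failed: stop and hand over to a human.
                context["status"] = f"escalated at {step.name}"
                return context
        context["status"] = "completed"
        return context


# Hypothetical steps; each stands in for a model call or an API call.
def score_risk(ctx: dict) -> dict:
    return {"risk": 0.9 if ctx["days_inactive"] > 30 else 0.1}


def choose_strategy(ctx: dict) -> dict:
    return {"strategy": "discount" if ctx["risk"] > 0.5 else "none"}


def draft_message(ctx: dict) -> dict:
    if ctx["strategy"] == "none":
        return {"message": None}
    return {"message": f"Hi {ctx['name']}, here is a discount offer."}


def send_outreach(ctx: dict) -> dict:
    return {"sent": ctx["message"] is not None}


agent = Agent(steps=[
    Step("score_risk", score_risk),
    Step("choose_strategy", choose_strategy),
    Step("draft_message", draft_message),
    Step("send_outreach", send_outreach),
])
result = agent.run({"name": "Ada", "days_inactive": 45})
print(result["status"], result["strategy"], result["sent"])
```

Each step sees the results of the previous ones through the shared context, which is how a later decision (the strategy) depends on an earlier observation (the risk score). The escalation branch reflects the idea that an agent should hand off rather than continue when a step keeps failing.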

The adoption of agentic AI is evident across various sectors. In customer service, AI agents are increasingly resolving entire inquiries, minimizing the need for human escalation. Operational departments leverage them to manage inventory by integrating demand forecasts with supply chain constraints. In healthcare, these agents summarize patient records and recommend next steps, significantly reducing the administrative burden on clinicians. Market projections reflect this accelerated adoption, with the AI agents market anticipated to reach $93.2 billion by 2032. Furthermore, reports indicate that up to 40% of enterprise applications are expected to incorporate AI agents by 2026, signaling a fundamental re-architecture of how software is designed and interacts with users. This trend represents arguably the most significant development in machine learning today, as it redefines the core function of AI from analytical support to autonomous operational execution.

Generative AI’s Ubiquity: An Infrastructural Layer

The initial fanfare surrounding generative AI, characterized by standalone chatbots or content generators, has evolved dramatically. In 2026, generative AI is no longer perceived as a novel add-on but as an integral infrastructural layer powering everyday workflows. Its integration is now deep and pervasive, rather than superficial.

This shift is evident in its application within critical enterprise functions. In software development, generative models are embedded directly into integrated development environments (IDEs), assisting with code generation, review, and refactoring in real time. Within business operations, these systems autonomously generate comprehensive reports, synthesize insights from extensive datasets, and summarize meetings, thereby eliminating manual analysis. The key differentiator is not just enhanced capability but strategic placement within the core workflow.

The transition from exploratory experimentation to robust production deployment has been pivotal. While early adopters spent the preceding two years exploring generative AI’s potential, the current focus is squarely on reliability, cost-efficiency, and consistency. Models are undergoing rigorous fine-tuning, often combined with traditional machine learning techniques, and connected to structured data sources. This hybrid approach allows generative AI to excel in unstructured tasks like natural language understanding and reasoning, while traditional models handle predictive analytics and optimization. Companies are reporting substantial efficiency gains, with some experiencing up to a 30% reduction in workload after integrating generative AI into their workflows. Such improvements stem from deep integration rather than isolated features. The prevailing organizational question has shifted from "Should we adopt generative AI?" to "Where is generative AI still missing in our operations?"

Specialized Models Gain Ascendancy: The Era of Efficiency

For a considerable period, the progress of machine learning was often equated with sheer scale: more parameters, more data, and consequently, better performance. This philosophy propelled the industry towards the development of colossal systems demanding immense computational power, significant budgets, and intricate infrastructure. However, 2026 marks a turning point where smaller, highly specialized models are gaining substantial traction, driven not by their impressive scale but by their practical utility and efficiency.

These specialized models are meticulously engineered for specific tasks, trained on narrowly focused datasets, and optimized for real-world performance rather than generalized benchmarks. Small language models (SLMs) exemplify this trend. Unlike their larger counterparts that attempt to address a vast spectrum of tasks, SLMs are built to achieve exceptional performance within a constrained domain, such as legal document analysis, specialized customer support interactions, or internal knowledge retrieval. In these targeted applications, a smaller model with deep contextual understanding frequently surpasses a larger, more general model that lacks specific domain expertise.

The advantages are compelling: smaller models are considerably cheaper to operate, offer faster response times, and are simpler to deploy. They can run on localized servers or directly within applications, reducing reliance on external cloud infrastructure, which in turn minimizes latency and enhances data privacy and control for organizations. The metric for success has also evolved. Instead of evaluating a model’s general power, teams now prioritize its performance within a specific context. A model delivering consistent, accurate results for a single, business-critical task often proves more valuable than a large, general-purpose model that performs moderately well across numerous tasks but lacks precision where it matters most. This paradigm shift underscores a broader industry move away from raw scale as the primary objective towards practical usability and return on investment. Model size is no longer a badge of honor; efficiency and demonstrable business value are the new benchmarks.

Edge Machine Learning: Real-Time Intelligence at the Source

The traditional architecture for most machine learning systems was cloud-centric: data was collected, transmitted to centralized servers, and processed, and predictions were then returned. While functional, this approach presented inherent trade-offs, including latency, escalating bandwidth costs, and growing concerns regarding data privacy. In 2026, this dynamic is shifting decisively as more models are deployed closer to the point of data generation, known as the "edge."

Edge machine learning involves executing models directly on devices or proximate servers, obviating the need to send raw data to the cloud. Practical applications are proliferating: security cameras can detect anomalous activity in real time, mobile applications process voice or image data instantaneously, and industrial machinery monitors performance and reacts without the delays associated with cloud round trips. This localized computation removes the network round trip from the response path, sharply reducing latency, which is critical for use cases demanding immediate responses, such as autonomous vehicles, critical healthcare monitoring, and smart infrastructure.

Privacy considerations are another significant driver. Transmitting vast quantities of raw, potentially sensitive data to the cloud raises substantial concerns. Edge processing allows much of this data to be handled locally, with only aggregated insights or necessary alerts being shared, thereby enhancing compliance with stringent data regulations. The sheer scale of connected devices further amplifies this trend; with IoT devices projected to reach 39 billion by 2030, transmitting all generated data to the cloud becomes inefficient and economically unfeasible. This development does not signal a complete abandonment of cloud computing but rather a strategic redistribution of computational tasks, with an increasing number of decisions being made autonomously at the network’s periphery.
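The "aggregate locally, share only insights" pattern can be illustrated with a short sketch. It assumes a device that buffers numeric sensor readings; the function name `summarize_on_device`, the threshold, and the sample values are hypothetical, and the function expects a non-empty list.

```python
from statistics import mean


def summarize_on_device(readings: list[float], alert_threshold: float) -> dict:
    """Reduce raw readings to a small payload before anything leaves the device."""
    return {
        "count": len(readings),
        "mean": round(mean(readings), 2),
        "max": max(readings),
        # True if any single reading crossed the threshold.
        "alert": any(r > alert_threshold for r in readings),
    }


# Hypothetical temperature readings buffered on the device.
readings = [70.1, 70.4, 69.8, 91.2, 70.0]
payload = summarize_on_device(readings, alert_threshold=85.0)
print(payload)
```

Only the four-field summary would be transmitted; the raw readings stay on the device. The same shape applies whether the local step is a simple threshold, as here, or a full model running on the device.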

MLOps and LLMOps: Operationalizing AI at Scale

While the initial development of a machine learning model has become increasingly accessible through open-source tools and pre-trained models, the challenge of reliably operating these systems in production remains significant. This is where MLOps (Machine Learning Operations) becomes indispensable, encompassing everything from model versioning and monitoring to deployment, scaling, and continuous updates. With the proliferation of generative AI, this discipline has expanded into LLMOps (Large Language Model Operations) and even AgentOps, each introducing new complexities. Managing prompts, evaluating responses, integrating tools, and orchestrating multi-step executions all require meticulous operational frameworks.

The transition from experimental prototypes to production-grade systems has highlighted critical gaps. Models that perform impeccably in testing can exhibit unpredictable behavior in real-world scenarios due to evolving data distributions or shifts in user behavior. Without robust monitoring, minor errors can quickly escalate into systemic failures. Consequently, organizations are now treating machine learning systems with the same rigor applied to critical software infrastructure. This entails continuous performance tracking, meticulous version control, and automated deployment pipelines that enable seamless updates without disrupting existing services. Safeguards, including comprehensive output logging, anomaly detection, and fallback mechanisms, are becoming standard practice.
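Two of the safeguards mentioned above, detecting a shift in the data distribution and falling back when a model fails, can be sketched in a few lines. This is a deliberately simple illustration: the mean-shift score is one of many possible drift checks, and `drift_score`, `predict_with_fallback`, `flaky_model`, and the sample data are hypothetical.

```python
from statistics import mean, pstdev


def drift_score(reference: list[float], live: list[float]) -> float:
    """Shift of the live mean, measured in reference standard deviations."""
    spread = pstdev(reference)
    shift = abs(mean(live) - mean(reference))
    if spread == 0:
        return 0.0 if shift == 0 else float("inf")
    return shift / spread


def predict_with_fallback(model, features, fallback, log):
    """Call the model, log the outcome, and return a safe default on failure."""
    try:
        output = model(features)
    except Exception as exc:
        log.append({"features": features, "error": repr(exc), "used_fallback": True})
        return fallback
    log.append({"features": features, "output": output, "used_fallback": False})
    return output


# Hypothetical feature values seen in testing versus in production.
reference = [10.0, 11.0, 9.0, 10.0, 10.0]
live = [14.0, 15.0, 13.0, 14.0, 14.0]
drifted = drift_score(reference, live) > 3.0  # threshold is an assumption


def flaky_model(features: dict) -> float:
    return features["amount"] * 0.01  # raises KeyError if the field is missing


log: list[dict] = []
ok = predict_with_fallback(flaky_model, {"amount": 200}, fallback=0.0, log=log)
failed = predict_with_fallback(flaky_model, {}, fallback=0.0, log=log)
print(drifted, ok, failed, len(log))
```

Logging every call, including failures, is what makes later anomaly detection possible; the fallback keeps the service responding while the underlying problem is investigated.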

Scaling presents another formidable challenge. A model designed for a limited user base may falter under heavy demand, leading to increased latency, inflated costs, and inconsistent performance. MLOps practices address these issues by optimizing model serving and ensuring efficient resource utilization. In 2026, machine learning is unequivocally a core component of enterprise systems; its failure directly impacts product functionality and business continuity. This underscores why operational maturity in MLOps, LLMOps, and AgentOps is now a significant competitive advantage. Organizations capable of consistently deploying, monitoring, and enhancing their AI models will innovate faster and build more resilient systems, while those lacking such capabilities risk being bogged down by persistent operational issues. The ability to run models reliably at scale is now as critical as the ability to build them.

Human-AI Collaboration: The New Standard of Work

Early narratives surrounding artificial intelligence frequently centered on themes of human displacement and widespread job automation. However, the reality emerging in 2026 presents a more nuanced and productive picture: the most significant value creation is stemming from human-AI collaboration, rather than outright substitution. AI is increasingly functioning as a sophisticated co-worker, fundamentally altering how work is performed. Individuals are now collaborating with systems that can suggest, generate, review, and refine outputs in real time. The human provides strategic direction, contextualizes tasks, and makes final decisions, while the AI handles the complex, data-intensive heavy lifting.

This collaborative model is transformative across various sectors. In healthcare, AI systems summarize extensive patient histories, identify critical risks, and propose potential next steps, thereby freeing clinicians to focus on nuanced judgment and direct patient interaction. Marketing teams leverage AI to generate diverse campaign ideas, test multiple variations, and analyze performance with unprecedented speed, far exceeding manual capabilities. In engineering, developers are using AI systems to write, review, and debug code, accelerating development cycles.

This partnership not only enhances speed but also redefines professional roles. Tasks that once consumed hours are now completed in minutes, allowing human professionals to dedicate more time to strategic thinking, critical validation, and creative problem-solving. Measurable impacts are already evident, with AI-assisted workflows driving significant productivity gains across industries, as these systems become deeply integrated into daily operations. These improvements are not achieved by removing humans from the loop but by augmenting their capabilities within it. This paradigm shift also necessitates new skill sets: the ability to formulate precise questions, guide AI outputs effectively, and critically evaluate generated results is becoming as crucial as traditional technical expertise. The notion of competing with AI is diminishing; the true competitive advantage now lies in mastering the art of collaboration with intelligent systems and understanding where human judgment remains paramount.

Responsible and Explainable AI: Building Trust and Accountability

As machine learning systems become increasingly integrated into critical decision-making processes, the fundamental question of trust gains paramount importance. For years, many sophisticated models operated as "black boxes," delivering accurate results but obscuring the underlying reasoning. While acceptable in low-stakes scenarios, this opacity becomes problematic when these systems are deployed in high-consequence domains such as finance, healthcare, human resources, or law enforcement.

This is where Explainable AI (XAI) takes center stage, focusing on enhancing the transparency of model decisions. Instead of merely providing an output, XAI systems can illustrate which inputs exerted the strongest influence on a decision and the degree of that influence. This capability empowers human teams to validate results, identify potential errors, and build confidence in the system’s behavior. Concurrently, regulatory frameworks are evolving to keep pace with AI adoption. Governments and international bodies are introducing guidelines and legislation that mandate greater accountability from companies regarding the design, training, and deployment of their AI systems. Compliance is no longer solely a legal consideration but an inherent aspect of product development and operational integrity.
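For the simplest case, a linear scoring model, the influence of each input can be computed exactly as the weight times the input's distance from a baseline. The sketch below assumes such a model; the weights, baseline, and applicant values are invented for illustration, and non-linear models need approximation techniques (for example permutation importance or Shapley-value methods) rather than this direct calculation.

```python
def linear_contributions(weights: dict, baseline: dict, inputs: dict) -> dict:
    """Per-feature contribution to a linear score, relative to a baseline input.

    Returned in order of decreasing absolute influence.
    """
    contributions = {
        name: weights[name] * (inputs[name] - baseline[name]) for name in weights
    }
    return dict(sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True))


# Hypothetical credit-scoring weights and a "typical applicant" baseline.
weights = {"income": 0.5, "debt_ratio": -2.0, "late_payments": -1.5}
baseline = {"income": 4.0, "debt_ratio": 0.5, "late_payments": 1.0}
applicant = {"income": 5.0, "debt_ratio": 1.0, "late_payments": 3.0}

ranked = linear_contributions(weights, baseline, applicant)
for feature, value in ranked.items():
    print(f"{feature}: {value:+.1f}")
```

In this example the number of late payments lowers the score the most, followed by the debt ratio, while income raises it slightly. A ranked list of this kind is what lets a reviewer check whether a decision rests on sensible factors.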

Bias and fairness are receiving heightened attention. Machine learning models learn from the data they are trained on, and if this data reflects existing societal biases, the model can inadvertently amplify them, leading to inequitable outcomes in areas such as loan approvals, hiring decisions, or risk assessments. Addressing these issues requires a multifaceted approach encompassing meticulous data curation, continuous monitoring for algorithmic bias, and clear accountability for the system’s impact. Organizations are prioritizing these concerns not only due to regulatory pressure but also in response to heightened user expectations. Stakeholders demand transparency regarding decisions that affect them; if a system denies a request or flags a risk, a clear, understandable explanation is expected. This pervasive focus on responsible AI is evident across both industry best practices and policy development, elevating ethical considerations from peripheral discussions to foundational design principles. Without trust, widespread adoption is inherently limited. In 2026, building accurate models is only part of the challenge; constructing systems that are transparent, fair, and trustworthy is equally, if not more, critical for sustained impact.
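Continuous monitoring for bias can start with something as simple as comparing approval rates across groups. The sketch below computes that gap (often called the demographic parity difference); it is one fairness measure among several, and the group labels and decisions are made-up sample data.

```python
def approval_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Map each group to its share of approved decisions."""
    totals: dict[str, int] = {}
    approved: dict[str, int] = {}
    for group, ok in decisions:
        totals[group] = totals.get(group, 0) + 1
        approved[group] = approved.get(group, 0) + int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def parity_gap(decisions: list[tuple[str, bool]]) -> float:
    """Difference between the highest and lowest group approval rates."""
    rates = approval_rates(decisions)
    return max(rates.values()) - min(rates.values())


# Hypothetical loan decisions: (group, approved).
decisions = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", True), ("B", False), ("B", False), ("B", False),
]
rates = approval_rates(decisions)
gap = parity_gap(decisions)
print(rates, gap)
```

A large gap does not prove unfair treatment on its own, since groups may differ in relevant ways, but tracking it over time gives teams a concrete signal to investigate.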

The Integrated Future of Machine Learning

In 2026, machine learning has transcended its earlier definition as a collection of specialized tools or experimental features. It has seamlessly integrated into the operational background of virtually every workflow, quietly powering strategic decisions, automating complex tasks, and engaging in collaborative partnerships with human intelligence. The prevailing emphasis has shifted profoundly from merely building larger or more technically impressive models to engineering systems that operate autonomously, integrate deeply with existing processes, and consistently deliver tangible, real-world impact.

The pivotal trends defining this landscape are the emergence of agentic AI, the infrastructural integration of generative AI, the rise of smaller specialized models, the expansion of machine learning to the edge, the operational imperative of MLOps and LLMOps, the ubiquity of human-AI collaboration, and the paramount importance of responsible and explainable AI. These are not disparate developments. Rather, they converge to establish a new standard for intelligent systems: machine learning that functions reliably and meaningfully and is inextricably woven into the core fabric of business operations and daily life. The era of building merely "smarter" models has given way to the era of building systems that proactively and efficiently "do the work."