AWS has announced the general availability of cross-account safeguards in Amazon Bedrock Guardrails, a significant enhancement designed to empower organizations with centralized enforcement and management of safety controls across multiple AWS accounts within their enterprise. This new capability directly addresses the complex challenges associated with deploying generative AI at scale, ensuring consistent adherence to responsible AI principles and corporate governance policies. By enabling a unified approach to AI safety, AWS aims to significantly reduce the administrative burden on security and compliance teams while fostering innovation within a secure framework.

The Genesis of Generative AI Governance Challenges

The rapid ascent of generative artificial intelligence has ushered in an era of unprecedented innovation, transforming industries and redefining how businesses operate. Services like Amazon Bedrock, which provides a fully managed environment for foundation models (FMs) from leading AI companies, have democratized access to powerful AI capabilities. Enterprises are increasingly integrating generative AI into diverse applications, from customer service chatbots and content creation tools to sophisticated data analysis and software development assistants. However, this transformative potential comes with inherent risks, including the generation of harmful content, the spread of misinformation, exposure of sensitive data, or outputs that violate ethical guidelines or corporate brand standards.

Managing these risks effectively across an organization, particularly in large enterprises operating with multiple AWS accounts, has presented a formidable challenge. Historically, implementing and monitoring safety controls often required configuring guardrails individually for each account or application. This decentralized approach led to inconsistencies, increased operational overhead, and a higher risk of compliance gaps. Security and governance teams found themselves grappling with the complexities of ensuring uniform protection, verifying configurations, and maintaining compliance across a sprawling digital estate. The absence of a unified control plane made it difficult to enforce a consistent responsible AI posture, potentially hindering the broader adoption of generative AI due to unresolved safety concerns. This environment highlighted a critical need for robust, scalable, and centralized governance mechanisms that could keep pace with the rapid deployment of AI technologies.

Unveiling Cross-Account Safeguards: A Centralized Approach

The general availability of cross-account safeguards in Amazon Bedrock Guardrails directly tackles these governance challenges. This new feature allows organizations to define a guardrail in a new Amazon Bedrock policy within the management account of their AWS organization. Once configured, this policy automatically enforces the specified safeguards across all member entities—be it individual accounts, organizational units (OUs), or the entire organization—for every model invocation with Amazon Bedrock. This architecture ensures uniform protection across all generative AI applications, providing a single source of truth for AI safety controls.

The core benefit lies in its ability to consolidate control. Instead of disparate teams or individual account administrators managing their own guardrail configurations, a central security or governance team can now establish a baseline of safety that applies universally. This not only streamlines the operational workflow but also hardens the organization’s overall security posture against potential misuse or unintended outputs from generative AI models. Furthermore, this capability is designed with flexibility in mind. While organizational safeguards provide a foundational layer of protection, individual accounts and applications retain the ability to apply additional, more granular controls tailored to their specific use case requirements. This hierarchical approach allows for both broad compliance and targeted customization, striking a balance between corporate policy and application-specific needs.

Deep Dive into Implementation: How it Works

Implementing cross-account safeguards in Amazon Bedrock Guardrails involves distinct steps for both account-level and organization-wide enforcement, leveraging AWS Organizations for scalable management. The underlying principle is to ensure that once a guardrail is defined and enforced, its configuration remains immutable to prevent unauthorized modifications by member accounts, guaranteeing consistent policy application.

Account-Level Enforcement:

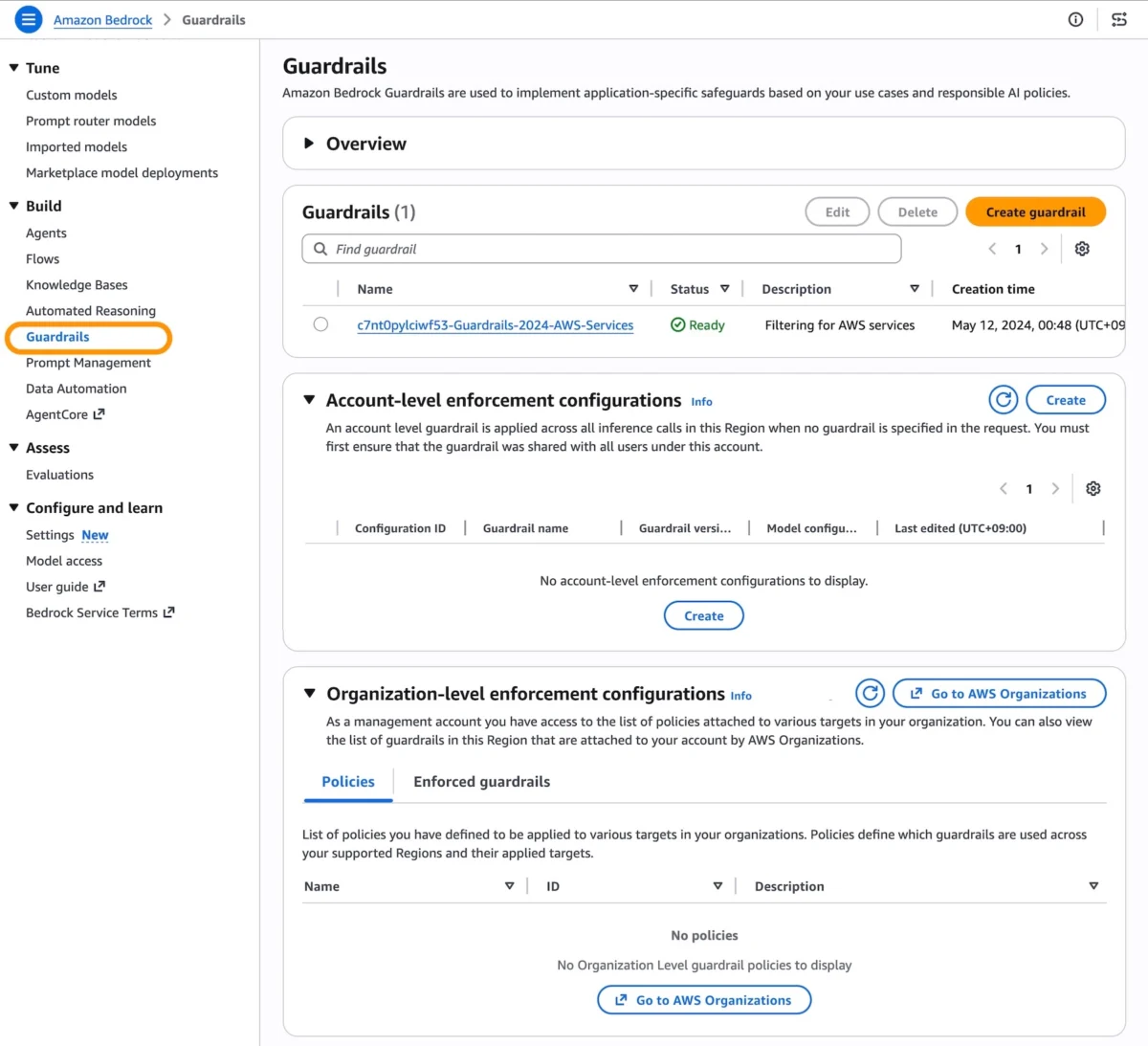

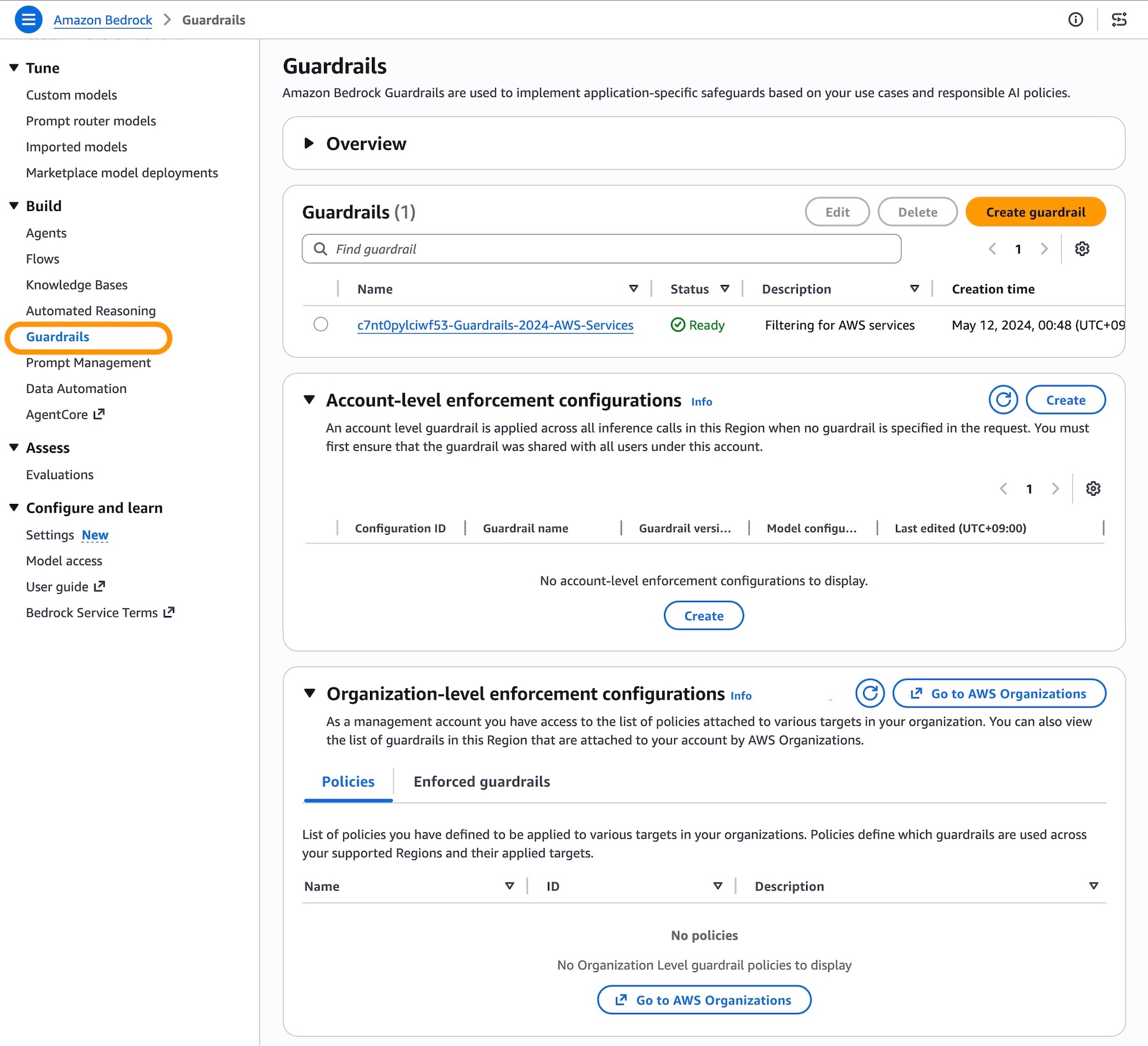

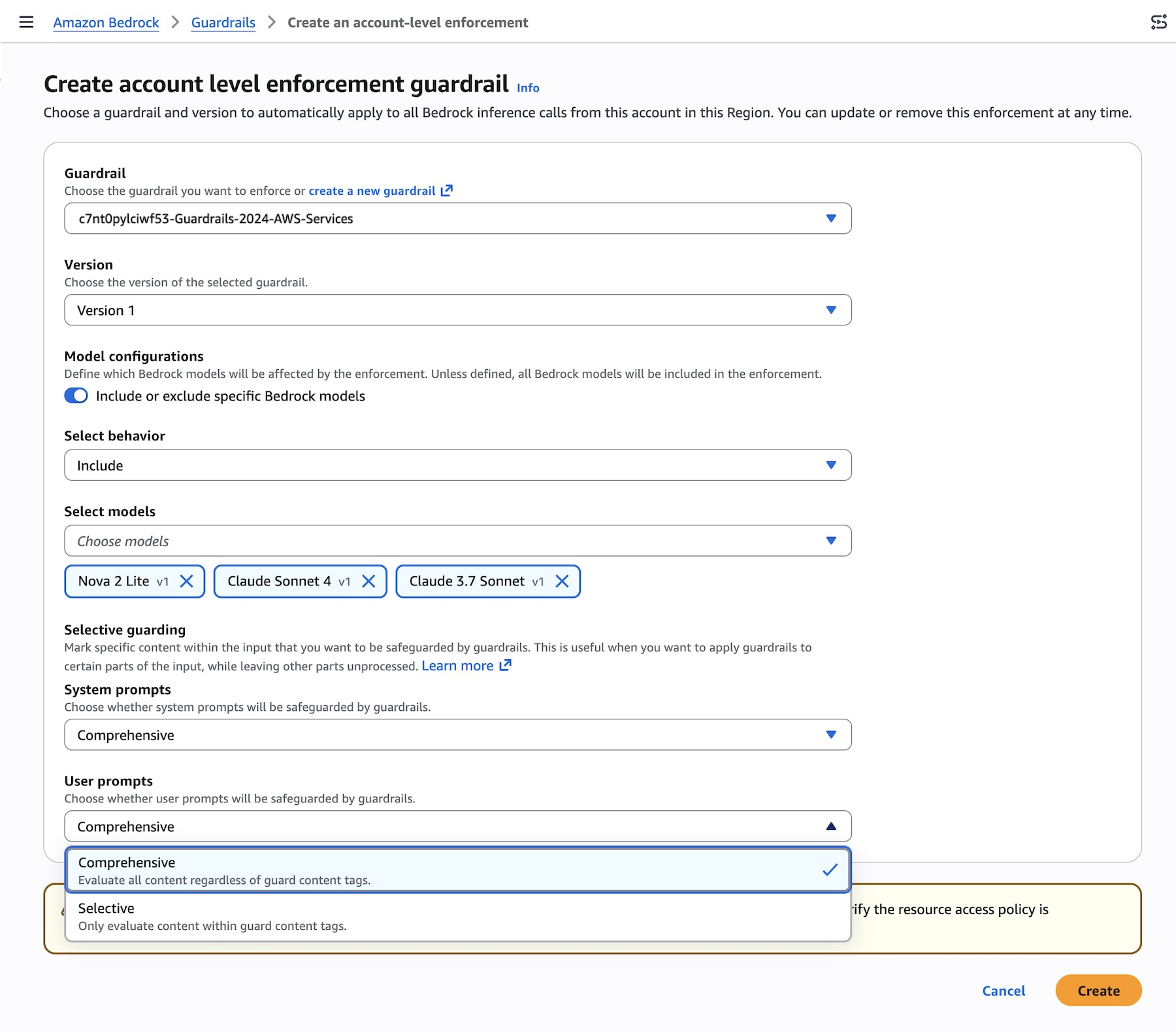

For individual AWS accounts, the process begins in the Amazon Bedrock Guardrails console. Before enforcement can be configured, users must create a guardrail with a specific version. This versioning is crucial as it ensures the guardrail’s configuration cannot be altered by member accounts once it’s set for enforcement. Additionally, certain prerequisites, such as setting up resource-based policies for guardrails, must be met to grant necessary permissions.

Within the Bedrock Guardrails console, under the "Account-level enforcement configurations" section, users can select "Create." Here, they choose the desired guardrail and its specific version to be automatically applied to all Amazon Bedrock inference calls originating from that account within the given AWS Region. A key new feature introduced with general availability is the ability to define precisely which models will be affected by the enforcement. This can be done using either an "Include" list, specifying only the models to which the guardrail applies, or an "Exclude" list, specifying models that should be exempt. This granular control allows organizations to tailor guardrail application to specific model usage patterns.
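
The Include/Exclude choice can be read as a simple membership rule. The sketch below is illustrative only: the `ModelScope` class, its field names, and the model IDs are hypothetical and are not the actual Bedrock configuration schema.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class ModelScope:
    """Hypothetical model of an enforcement scope; not the real Bedrock schema."""

    mode: str  # "include" or "exclude"
    model_ids: FrozenSet[str]

    def applies_to(self, model_id: str) -> bool:
        # Include: only the listed models are guarded.
        if self.mode == "include":
            return model_id in self.model_ids
        # Exclude: every model except the listed ones is guarded.
        if self.mode == "exclude":
            return model_id not in self.model_ids
        raise ValueError(f"unknown mode: {self.mode!r}")


# Placeholder model IDs, used only to exercise the rule.
include_scope = ModelScope("include", frozenset({"example.model-a"}))
exclude_scope = ModelScope("exclude", frozenset({"example.model-b"}))
```

Under this reading, an Include list is the safer default when only a few models are in use, while an Exclude list keeps newly adopted models guarded without further configuration.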

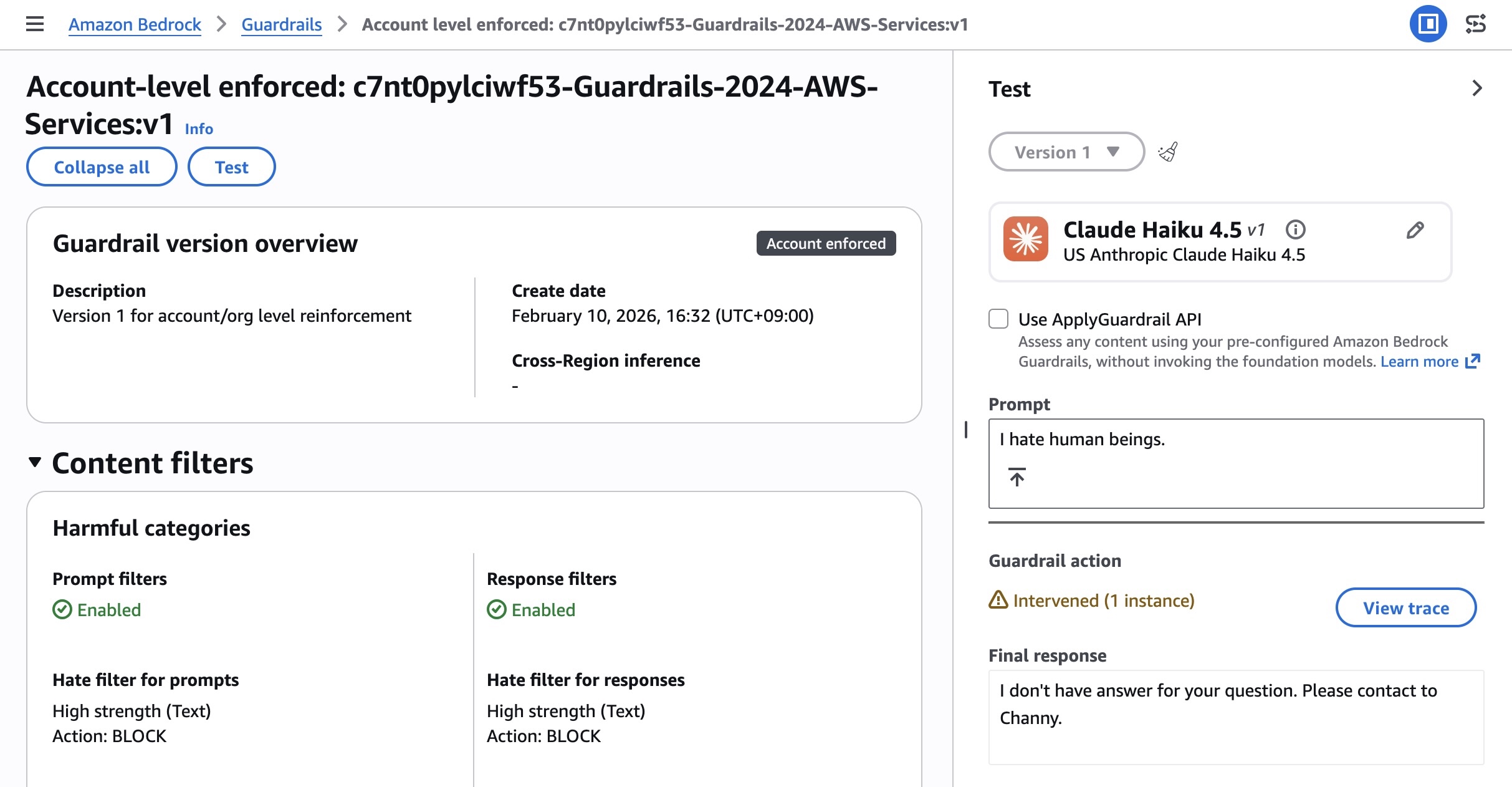

Further customization is available for content guarding controls, applicable to both system prompts and user prompts. Users can choose between "Comprehensive" guarding, which applies all defined safety controls, or "Selective" guarding, which allows for a more nuanced application of controls based on specific content types or risk profiles. After creating the enforcement, rigorous testing is recommended. This involves using a designated role in the account to make Bedrock inference calls via APIs such as InvokeModel, InvokeModelWithResponseStream, Converse, or ConverseStream. The response should include guardrail assessment information, confirming that the enforced guardrail has been automatically applied to both prompts and outputs.

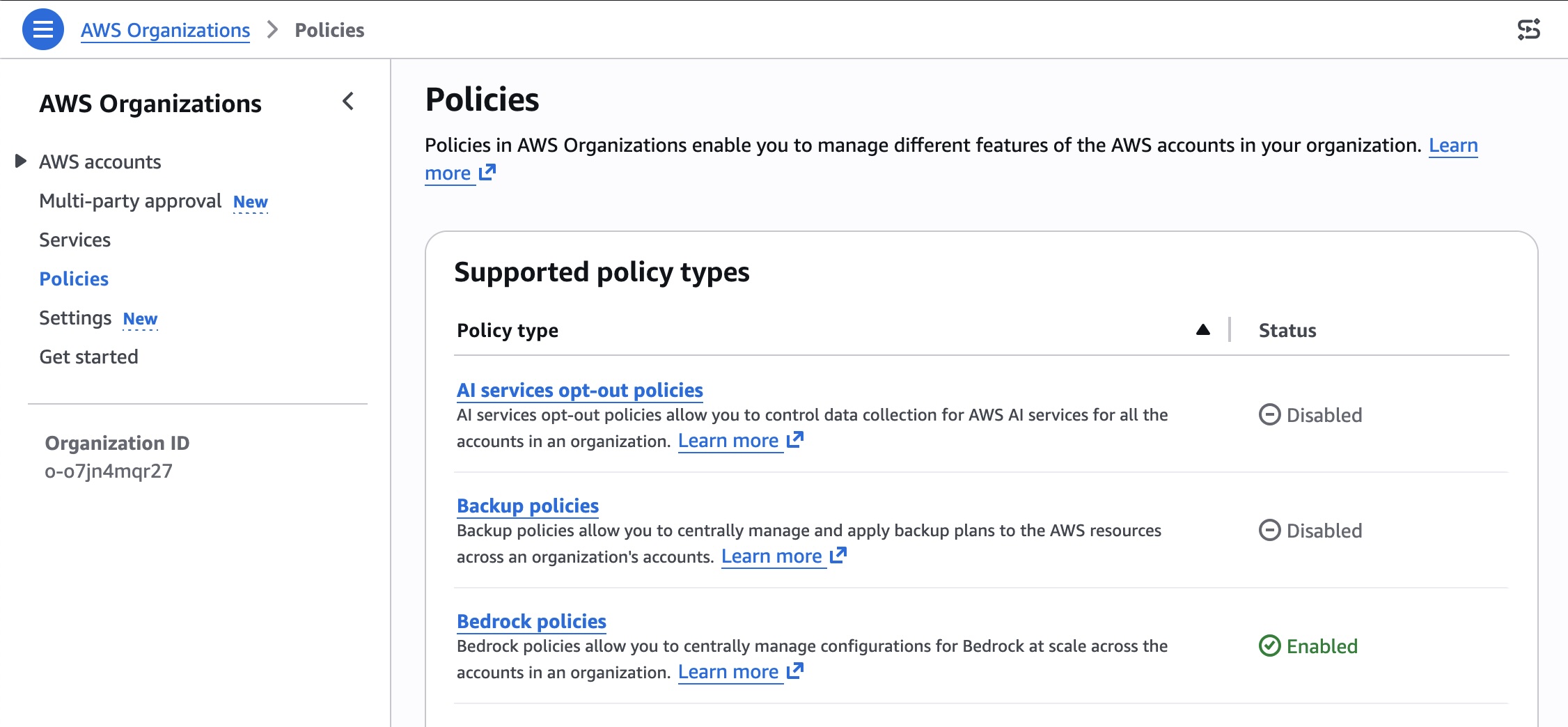

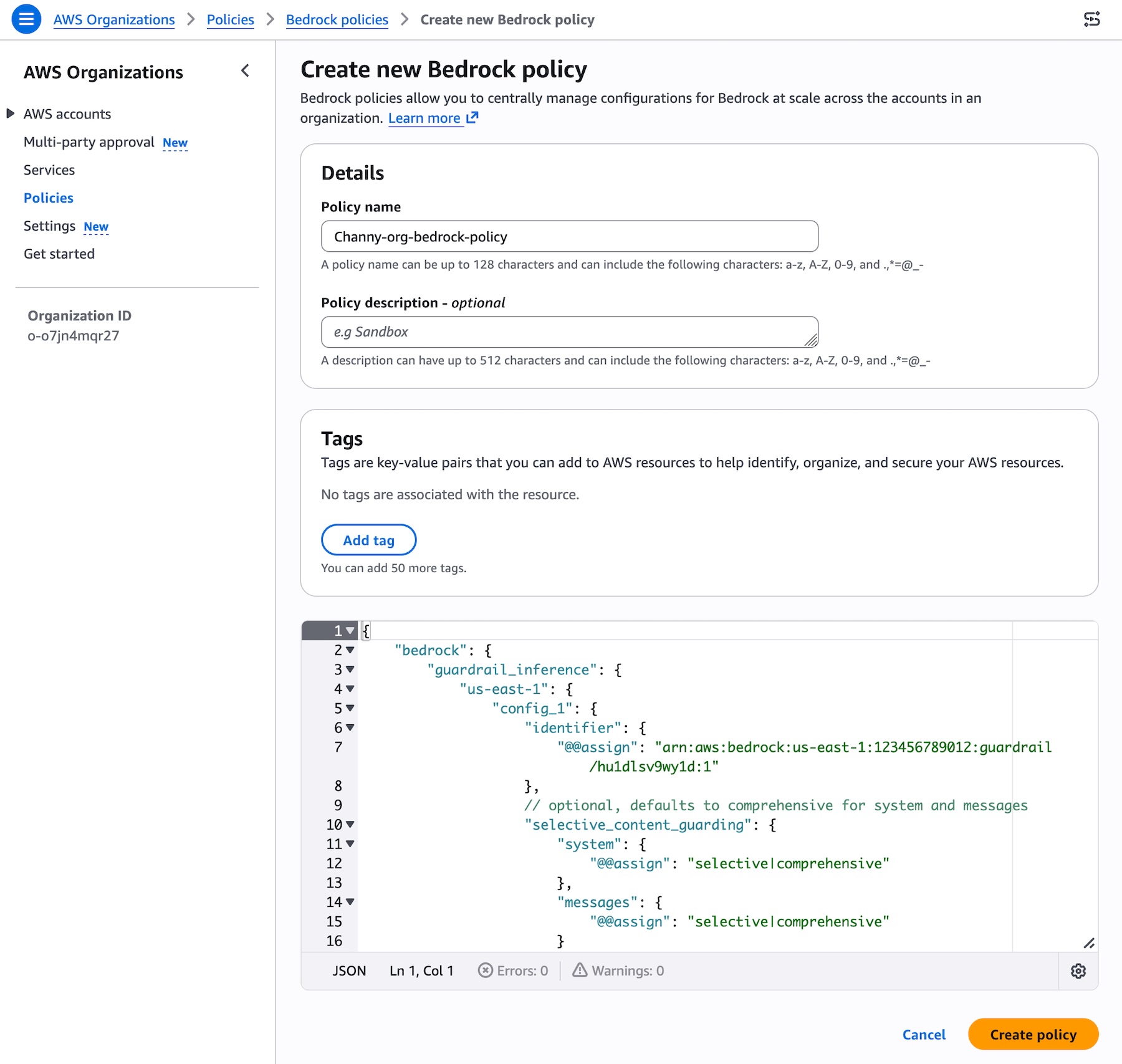

Organization-Wide Enforcement via AWS Organizations:

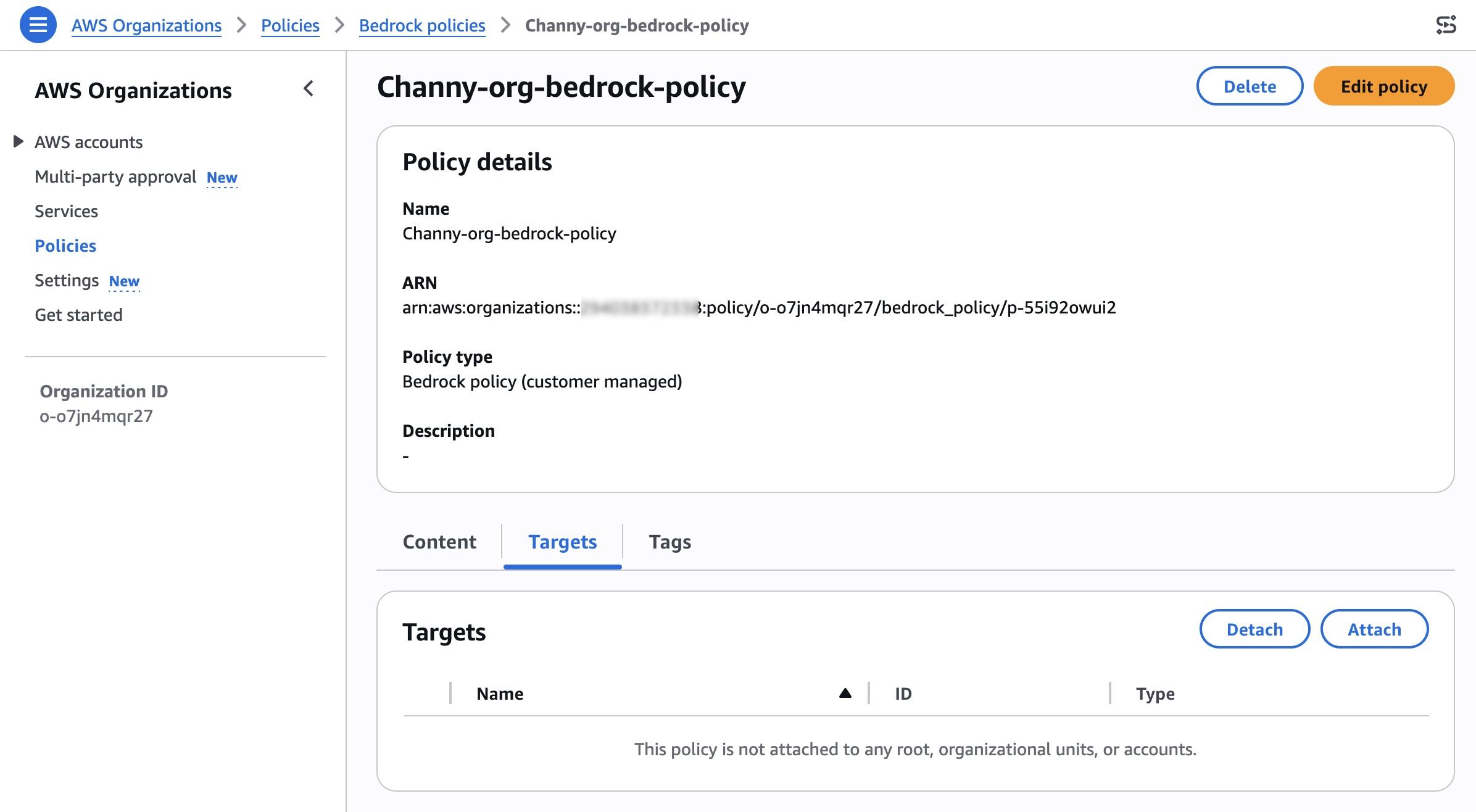

To enable organization-level enforcement, the process shifts to the AWS Organizations console. First, "Bedrock policies" must be enabled within the Policies menu. Once activated, a new Bedrock policy can be created. This policy is where the ARN (Amazon Resource Name) and version of the chosen guardrail are specified. Additionally, configuration for input tags relevant to AWS Organizations can be set. This policy serves as the central directive for AI safety across the entire organization or specific parts of it.

After defining the policy, the next critical step is to attach it to the desired organizational units (OUs), individual accounts, or the organization’s root. This attachment process dictates the scope of the guardrail’s enforcement. For instance, attaching a policy to the organization root ensures its application across all accounts, while attaching it to a specific OU applies it only to accounts within that unit. The AWS Organizations console provides a "Targets" tab where users can search and select their organizational root, OUs, or individual accounts to attach the newly created policy.
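
How attachment scope plays out can be sketched with a toy organization tree. This is a simplified model, not an AWS API call: the `PARENT` map, the entity IDs, the policy names, and `effective_policies` are all hypothetical. It assumes only what is described above, namely that a policy attached to a root or OU reaches every account beneath it.

```python
from typing import Dict, List, Optional

# Hypothetical organization tree: child -> parent (the root has no parent).
PARENT: Dict[str, Optional[str]] = {
    "root": None,
    "ou-research": "root",
    "ou-retail": "root",
    "acct-111": "ou-research",
    "acct-222": "ou-retail",
}

# Hypothetical attachments: target -> policy names attached directly to it.
ATTACHMENTS: Dict[str, List[str]] = {
    "root": ["baseline-guardrail-policy"],
    "ou-research": ["research-guardrail-policy"],
}


def effective_policies(entity: str) -> List[str]:
    """List policies attached to the entity or any ancestor, root first."""
    chain: List[str] = []
    node: Optional[str] = entity
    while node is not None:
        chain.append(node)
        node = PARENT[node]
    policies: List[str] = []
    for target in reversed(chain):
        policies.extend(ATTACHMENTS.get(target, []))
    return policies
```

In this toy tree, `acct-111` is reached by both the root and the research OU attachments, while `acct-222` is reached only by the root attachment. The sketch only lists which attachments reach an account; how AWS combines multiple Bedrock policies is not covered here.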

Verification for organization-level enforcement is equally important. From a member account that is subject to the attached policy, administrators should observe the organization-enforced guardrail listed under the "Organization-level enforcement configurations" section in the Amazon Bedrock Guardrails console. This visual confirmation, coupled with API testing similar to account-level verification, ensures that the underlying safeguards within the specified guardrail are automatically enforced for every model inference request across all designated member entities. This guarantees consistent safety controls across the entire organizational footprint, while still allowing for the attachment of different policies with associated guardrails to varying member entities to accommodate diverse team or application requirements.

The Strategic Advantages for Enterprises

The introduction of cross-account safeguards in Amazon Bedrock Guardrails offers several strategic advantages for enterprises, fundamentally changing how they approach generative AI governance and adoption.

Enhanced Compliance and Responsible AI: In an increasingly regulated landscape, with emerging AI legislation globally, maintaining compliance is paramount. This centralized enforcement mechanism provides a robust framework for consistent adherence to corporate responsible AI requirements, ethical guidelines, and industry-specific regulations. By ensuring uniform application of safety policies, organizations can mitigate legal and reputational risks associated with harmful or inappropriate AI outputs. It allows for a clear audit trail of policy enforcement, simplifying compliance reporting and demonstrating a proactive commitment to responsible AI practices. This capability is critical for sectors like finance, healthcare, and government, where data privacy, ethical AI use, and regulatory adherence are non-negotiable.

Streamlined Operations and Reduced Overhead: One of the most significant benefits is the drastic reduction in administrative burden. Security and compliance teams no longer need to individually oversee, configure, and verify guardrail settings for each AWS account or application. A single, unified approach from the management account streamlines the entire process, freeing up valuable resources. This efficiency translates into faster deployment cycles for generative AI applications, as the foundational safety layer is already in place, reducing the time spent on manual checks and individual approvals. The operational savings can be substantial, allowing teams to focus on higher-value tasks rather than repetitive configuration management.

Scalability and Risk Mitigation: For large enterprises with hundreds or even thousands of AWS accounts, manually managing AI safety policies is unsustainable. Cross-account safeguards provide the scalability necessary to extend generative AI capabilities across the entire organization without compromising safety. By enforcing policies at an organizational level, the risk of human error or oversight in individual accounts is significantly minimized. This proactive risk mitigation strategy helps prevent the inadvertent generation of undesirable content, sensitive data leakage, or the propagation of biased outputs, thereby safeguarding the organization’s brand, data, and user trust. It enables enterprises to confidently expand their generative AI footprint, knowing that a consistent safety net is universally applied.

Developer Empowerment and Innovation: While providing stringent controls, this feature paradoxically empowers developers. By establishing clear, organization-wide guardrails, developers gain a secure sandbox in which to innovate. They can focus on building novel generative AI applications, confident that the foundational safety and compliance requirements are automatically handled. This reduces friction in the development lifecycle, accelerates prototyping, and encourages broader experimentation with generative AI, knowing that corporate safety standards are consistently met without impeding creativity.

Navigating the Setup: A Step-by-Step Guide

To fully leverage the cross-account safeguards in Amazon Bedrock Guardrails, organizations must follow a structured approach for both setup and verification. This section details the practical steps involved, emphasizing the critical considerations at each stage.

Prerequisites and Guardrail Versioning:

Before initiating enforcement, it is essential to ensure that all necessary prerequisites are met. This includes configuring appropriate resource-based policies for guardrails, which grant Amazon Bedrock the permissions required to apply the specified safeguards. A crucial preliminary step is the creation of a guardrail with a specific, immutable version. This versioning mechanism is fundamental to the cross-account enforcement model. By locking a guardrail to a particular version, organizations ensure that once an organizational policy is attached, member accounts cannot modify the underlying safety controls, thereby maintaining policy integrity and consistent application across the enterprise.
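
A small pre-flight check can catch an unpinned guardrail reference before it goes into an enforcement configuration. The sketch below is a hedged illustration: `is_pinned_version` is a hypothetical helper, and it assumes enforcement should reference a numbered guardrail version rather than the mutable working draft, which Bedrock labels `DRAFT`.

```python
import re

# Numbered guardrail versions are digit strings such as "1" or "12";
# "DRAFT" is the mutable working draft and is treated here as unpinned.
_NUMBERED_VERSION = re.compile(r"[1-9][0-9]{0,7}")


def is_pinned_version(version: str) -> bool:
    """Return True only for a numbered (immutable) guardrail version."""
    return _NUMBERED_VERSION.fullmatch(version) is not None
```

A check like this could run in a deployment script before the guardrail ARN and version are entered into the console or a policy document.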

Configuring Account-Level Controls:

For account-specific enforcement, navigate to the Amazon Bedrock Guardrails console. Within the "Account-level enforcement configurations" section, select "Create." Here, you will specify the guardrail ARN and its chosen immutable version. A new feature allows for precise control over which models are affected by the guardrail, offering "Include" or "Exclude" options. This enables fine-tuning of policies to specific generative AI model usage within an account. Furthermore, content guarding controls for system and user prompts can be configured as either "Comprehensive" or "Selective," providing flexibility in the depth of content filtering.

Establishing Organization-Level Policies:

For organization-wide enforcement, the process begins in the AWS Organizations console. First, enable "Bedrock policies" under the "Policies" menu. Once enabled, proceed to "Create policy." In this step, you will define the Bedrock policy by specifying the ARN and version of your pre-configured guardrail. This policy acts as the central directive. After creation, the policy needs to be attached to its target. In the "Targets" tab, you can search and select your organization’s root, specific Organizational Units (OUs), or individual member accounts. Attaching the policy to the root applies it across the entire organization, while OUs allow for departmental or functional segmentation of policies.

Verification and Testing:

After configuring either account-level or organization-level enforcement, thorough testing is critical to confirm the guardrail’s active application. For account-level enforcement, use an appropriate role within the account to make inference calls to Amazon Bedrock using APIs such as InvokeModel, InvokeModelWithResponseStream, Converse, or ConverseStream. The API response should include detailed guardrail assessment information, indicating that the guardrail was enforced on both prompts and outputs. For organization-level enforcement, from a member account subject to the organizational policy, navigate to the Amazon Bedrock Guardrails console. The "Organization-level enforcement configurations" section should display the enforced guardrail, confirming its active status and consistent application across the member entity. This multi-faceted verification process ensures that the implemented safeguards are functioning as intended, providing the desired layer of protection for generative AI interactions.
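
The API-side check can be automated by inspecting the response for guardrail assessment data. The sketch below is a minimal illustration that works on a plain dictionary and makes no AWS call. It assumes the response follows the Converse API shape, with a `trace.guardrail` block and a `stopReason` field; whether an enforced guardrail surfaces in exactly these fields is an assumption to confirm against a real response. The helper name and the sample values are hypothetical.

```python
from typing import Any, Dict


def guardrail_was_assessed(response: Dict[str, Any]) -> bool:
    """Return True if a Converse-style response carries guardrail assessment data."""
    trace = response.get("trace", {}).get("guardrail", {})
    has_assessment = bool(trace.get("inputAssessment")) or bool(
        trace.get("outputAssessments")
    )
    # Converse reports this stop reason when a guardrail blocks or masks content.
    intervened = response.get("stopReason") == "guardrail_intervened"
    return has_assessment or intervened


# Hand-written sample shaped like a Converse response; values are illustrative.
sample_response = {
    "stopReason": "end_turn",
    "trace": {"guardrail": {"inputAssessment": {"example-guardrail-id": {}}}},
}
```

In a test harness, the dictionary returned by the inference call would be passed to `guardrail_was_assessed`, and a `False` result would flag an account where enforcement is not taking effect.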

Market Context and Broader Implications

The release of cross-account safeguards for Amazon Bedrock Guardrails comes at a pivotal moment in the enterprise adoption of generative AI. Industry reports consistently show a surge in interest and investment in AI technologies, with many organizations moving beyond experimentation to full-scale deployment. However, a significant barrier to this broader adoption has been the lack of robust, scalable governance tools. Surveys from organizations like Gartner and IDC frequently highlight data security, compliance, and ethical AI use as top concerns for IT leaders and C-suite executives considering generative AI integration.

This new capability from AWS directly addresses these concerns, positioning Amazon Bedrock as a more enterprise-ready solution for responsible AI deployment. By simplifying the management of safety controls across complex AWS environments, AWS is not only enhancing the security posture of its customers but also accelerating the safe and ethical integration of generative AI into mainstream business operations. This move aligns with a broader industry trend towards "AI governance platforms" and "responsible AI frameworks," which aim to provide tools and processes for managing the entire lifecycle of AI systems, from development to deployment and monitoring.

The implications extend beyond just technical implementation. For compliance officers, it provides a clearer pathway to demonstrate adherence to evolving regulations, such as the EU AI Act or upcoming U.S. state-level AI guidelines. For legal teams, it offers a verifiable mechanism to mitigate risks associated with intellectual property, privacy, and brand reputation. For business leaders, it instills greater confidence in leveraging generative AI for critical functions, knowing that a strong safety net is in place. This move by AWS helps to democratize access to advanced AI while ensuring that ethical considerations are built into the very fabric of enterprise AI deployments. It underscores AWS’s commitment to not just providing powerful AI services but also enabling their responsible and secure use in real-world scenarios.

Availability and Commercial Details

The cross-account safeguards in Amazon Bedrock Guardrails are now generally available in all AWS commercial and GovCloud Regions where Amazon Bedrock Guardrails is offered. This widespread availability ensures that a broad range of AWS customers, including those with stringent regulatory requirements, can immediately leverage this enhanced capability. For a comprehensive list of Regional availability and to stay informed about future roadmap developments, customers are encouraged to visit the AWS Capabilities by Region page.

Regarding the cost structure, charges apply to each enforced guardrail based on its configured safeguards. AWS emphasizes transparency in its pricing model, and detailed pricing information for individual safeguards and the overall Amazon Bedrock service can be found on the Amazon Bedrock Pricing page. This allows organizations to understand and plan for the costs associated with implementing their desired levels of AI safety and governance. AWS encourages customers to explore this new capability through the Amazon Bedrock console and to provide feedback via AWS re:Post for Amazon Bedrock Guardrails or through their usual AWS Support contacts, fostering continuous improvement and adaptation of the service to evolving customer needs.