The artificial intelligence landscape is evolving rapidly, with proprietary models like Anthropic’s Claude Opus 4.6 setting new benchmarks for reasoning, planning, and code generation. Access to these systems, however, is often restricted by API limits and per-token costs, a significant barrier for many developers and researchers. In response, a developer known as Jackrong has built a series of open-source models designed to replicate the reasoning style of Claude Opus while running locally on consumer-grade hardware. These models, notably Qwen3.5-27B-Claude-4.6-Opus-Reasoning-Distilled and its successor Qwopus3.5-27B-v3, represent a significant step toward democratizing advanced AI capabilities.

The core technique is known as "distillation": training a smaller, more accessible "student" model on the outputs and reasoning patterns of a larger, more powerful "teacher" model. In essence, the student studies the teacher’s detailed thought processes and internalizes its logic and decision-making strategies, without necessarily acquiring the same breadth of underlying knowledge. The result is a model that approximates the reasoning behavior of its proprietary counterpart, with the crucial advantage of running offline and without per-token fees.

Background: The Rise of Distilled AI Models

The concept of knowledge distillation in artificial intelligence gained traction as a method to compress large neural networks into smaller, more efficient ones. Initially, this was focused on improving inference speed and reducing memory footprints for deployment on resource-constrained devices. However, the application of distillation to replicate the complex reasoning and emergent capabilities of large language models (LLMs) like Claude Opus is a more recent and impactful development.

Claude Opus 4.6, released by Anthropic, is lauded for its broad knowledge, its capacity for intricate planning and nuanced reasoning, and its ability to generate functional code. These attributes make it a formidable tool for developers and researchers. Yet it is available primarily through Anthropic’s API, which means usage-based pricing and sending data to a remote service. That limits its utility for applications that require strict data privacy or cost-effectiveness at scale.

The emergence of powerful open-source models like Alibaba’s Qwen series has provided a foundation for such distillation efforts. While Qwen models are themselves highly capable, they may not consistently exhibit the same level of sophisticated, step-by-step reasoning and agentic behavior observed in top-tier proprietary models. Jackrong’s work bridges this gap by fine-tuning these open-source architectures with data specifically curated to mimic Claude Opus’s reasoning style.

Development of Qwopus: A Hybrid Approach

Jackrong’s initial foray into replicating Opus’s reasoning was through the model Qwen3.5-27B-Claude-4.6-Opus-Reasoning-Distilled. This model was built upon Qwen3.5-27B, a robust open-source foundation, by feeding it datasets that captured the "chain-of-thought" reasoning characteristic of Claude Opus 4.6. Chain-of-thought prompting encourages models to break down complex problems into intermediate steps, mirroring human-like reasoning. By fine-tuning Qwen3.5-27B on these structured reasoning outputs, Jackrong aimed to imbue the model with a similar methodical approach.
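To make the idea concrete, here is a minimal sketch of how one teacher reasoning trace might be turned into a supervised fine-tuning example. The `TeacherTrace` class, the field names, and the `<think>` tag layout are assumptions for illustration; the source does not describe the exact data format Jackrong used.

```python
from dataclasses import dataclass


@dataclass
class TeacherTrace:
    """One prompt with the teacher's reasoning and final answer (hypothetical schema)."""
    prompt: str
    reasoning: str
    answer: str


def to_training_example(trace: TeacherTrace) -> dict:
    """Build a chat-style example whose assistant turn shows reasoning, then the answer."""
    # Assumption: reasoning is wrapped in <think> tags ahead of the final answer.
    assistant_text = (
        f"<think>\n{trace.reasoning.strip()}\n</think>\n\n{trace.answer.strip()}"
    )
    return {
        "messages": [
            {"role": "user", "content": trace.prompt.strip()},
            {"role": "assistant", "content": assistant_text},
        ]
    }


trace = TeacherTrace(
    prompt="What is 17 * 6?",
    reasoning="17 * 6 = 10 * 6 + 7 * 6 = 60 + 42 = 102.",
    answer="102",
)
example = to_training_example(trace)
print(example["messages"][1]["content"])
```

The student is then trained to reproduce the assistant turn, intermediate steps included, rather than only the final answer.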

Early community testing of this distilled model yielded promising results. Developers reported that it maintained Opus’s full thinking mode, integrated with native developer roles without requiring patches, and ran tasks autonomously for extended periods without stalling, a common limitation of the base Qwen model. This indicated a successful transfer of reasoning style.

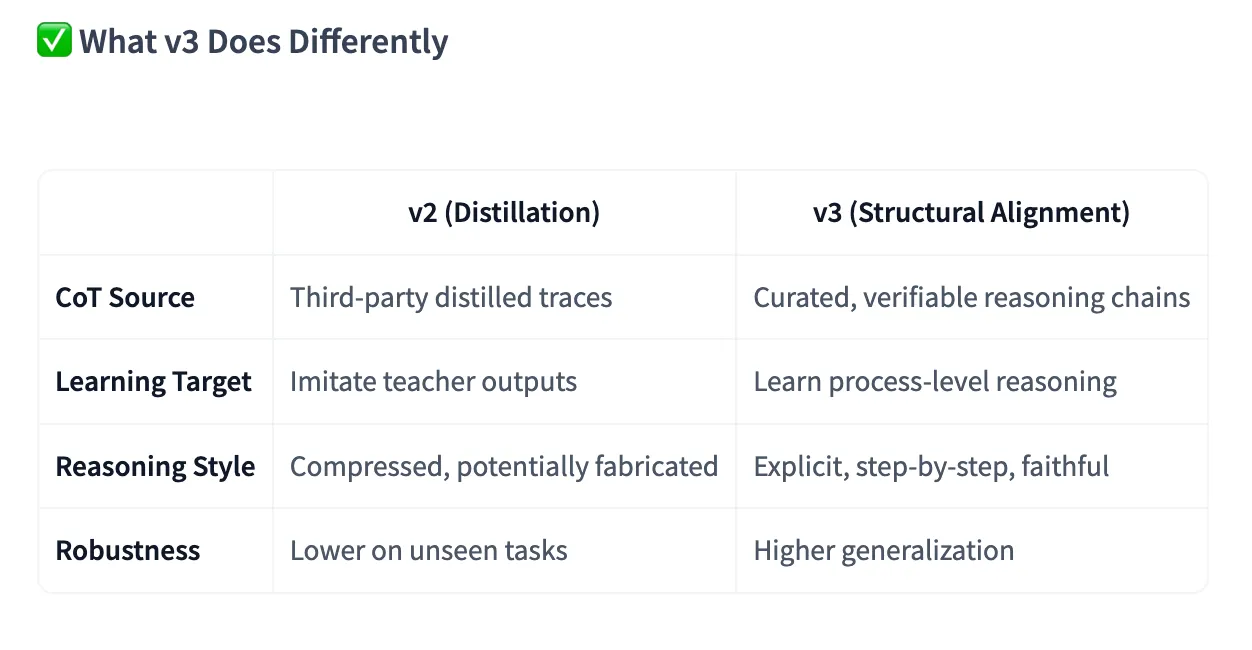

The evolution to Qwopus3.5-27B-v3 marked a significant refinement. Jackrong identified that merely imitating surface-level patterns was insufficient. The v3 model was developed with a focus on "structural alignment," aiming to train the model to reason faithfully and logically step-by-step, rather than simply mimicking the appearance of Opus’s outputs. This involved more sophisticated fine-tuning techniques, including explicit reinforcement for tool-calling, crucial for agent-based workflows.

According to benchmarks provided by Jackrong, Qwopus v3 achieved a remarkable 95.73% on the HumanEval coding benchmark under strict evaluation. This performance surpassed both the base Qwen3.5-27B and the earlier distilled version, underscoring the effectiveness of the structural alignment approach.
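For readers unfamiliar with how such a score is computed, the sketch below uses the standard unbiased pass@k estimator with one sample per problem. HumanEval contains 164 problems, so a score of 95.73% is consistent with 157 solved problems; that count is an inference from the arithmetic, not a figure stated in the source, and the source does not spell out what "strict evaluation" entails.

```python
from math import comb

HUMANEVAL_PROBLEMS = 164  # size of the HumanEval problem set


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem: n samples drawn, c of them correct."""
    if n - c < k:
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_score(solved_flags: list[bool]) -> float:
    """Average pass@1 over all problems, as a percentage (one sample per problem)."""
    per_problem = [pass_at_k(1, int(ok), 1) for ok in solved_flags]
    return 100.0 * sum(per_problem) / len(per_problem)


# Assumption: 157 of 164 problems solved, chosen because it reproduces the reported score.
flags = [True] * 157 + [False] * (HUMANEVAL_PROBLEMS - 157)
score = benchmark_score(flags)
print(f"{score:.2f}%")  # 95.73%
```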

Accessibility and Deployment

A key aspect of Jackrong’s project is its commitment to accessibility. Both Qwen3.5-27B-Claude-4.6-Opus-Reasoning-Distilled and Qwopus3.5-27B-v3 are distributed as GGUF (GPT-Generated Unified Format) files, a format widely compatible with local inference tools such as LM Studio and llama.cpp. Users download a model file and load it directly into their preferred software, with minimal setup beyond that.
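As a rough illustration of that workflow, the sketch below assembles a llama.cpp command line in Python. The GGUF file name, the prompt, and the default values are hypothetical. The flags shown (`-m`, `-p`, `-n`, `-c`, `-ngl`) are common `llama-cli` options, but they should be checked against the installed llama.cpp build.

```python
import shlex
from pathlib import Path


def llama_cli_command(
    model_path: Path,
    prompt: str,
    n_predict: int = 512,   # maximum number of tokens to generate
    ctx_size: int = 8192,   # context window in tokens
    gpu_layers: int = 99,   # layers to offload to the GPU
) -> list[str]:
    """Return the argument list for a single llama-cli run."""
    return [
        "llama-cli",
        "-m", str(model_path),
        "-p", prompt,
        "-n", str(n_predict),
        "-c", str(ctx_size),
        "-ngl", str(gpu_layers),
    ]


# Hypothetical file name; use the actual GGUF file downloaded from the model page.
cmd = llama_cli_command(Path("qwopus-27b-q4.gguf"), "Explain LoRA in two sentences.")
print(shlex.join(cmd))
```

The resulting list can be passed to `subprocess.run(cmd)` once llama.cpp is installed and the model file is in place.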

The availability of these models on platforms like Hugging Face allows users to easily search for and download them. The model cards provide detailed information on recommended hardware configurations, suggesting variants optimized for different levels of GPU power and RAM. For instance, the 27-billion parameter models have been successfully run on Apple MacBooks with 32GB of unified memory, indicating their feasibility for high-end consumer laptops. Smaller parameter versions, such as a 4B model, are also available and noted for their performance relative to their size, making them suitable for a broader range of hardware.
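A back-of-the-envelope calculation shows why a 27-billion parameter model can fit in 32GB only when quantized. The sketch below estimates the size of the weights alone. The 6 GB overhead for the operating system, context cache, and runtime is an assumed round number, and real GGUF quantizations use slightly more bits per weight than their nominal width.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits(
    n_params: float,
    bits_per_weight: float,
    memory_gb: float,
    overhead_gb: float = 6.0,  # assumption: OS, context cache, and runtime buffers
) -> bool:
    """Check whether weights plus the assumed overhead fit in the given memory."""
    return weight_memory_gb(n_params, bits_per_weight) + overhead_gb <= memory_gb


N_PARAMS = 27e9  # 27 billion parameters

for bits in (16, 8, 4):
    size = weight_memory_gb(N_PARAMS, bits)
    print(f"{bits:>2}-bit: {size:5.1f} GB, fits in 32 GB: {fits(N_PARAMS, bits, 32)}")
```

Under these assumptions, only the 4-bit variant (about 13.5 GB of weights) leaves comfortable headroom on a 32GB machine.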

For users interested in multimodal capabilities, the model card gives specific instructions: image input requires either a separate mmproj-BF16.gguf file loaded alongside the main model or a dedicated "Vision" model download.

Furthermore, Jackrong has opened up the development process itself. The full training notebook, codebase, and a comprehensive PDF guide have been published on GitHub. This transparency allows anyone with a Google Colab account to reproduce the entire fine-tuning pipeline: starting from the Qwen base model, training with the Unsloth library using LoRA (Low-Rank Adaptation) and response-only fine-tuning, and exporting to GGUF. The project’s widespread adoption is evidenced by over one million downloads across its model family.
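Two of the techniques named above can be illustrated without any training framework. The sketch below is not taken from Jackrong’s notebook. It shows, under simplified assumptions, how LoRA reduces the number of trainable parameters and how response-only fine-tuning masks prompt tokens out of the loss. The layer width, rank, and token IDs are made up for illustration.

```python
IGNORE_INDEX = -100  # label value that PyTorch's cross-entropy loss skips by default


def lora_added_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters in the two low-rank factors A (rank x d_in) and B (d_out x rank)."""
    return rank * (d_in + d_out)


def mask_prompt_labels(
    prompt_ids: list[int], response_ids: list[int]
) -> tuple[list[int], list[int]]:
    """Concatenate prompt and response; only response tokens keep real labels."""
    input_ids = list(prompt_ids) + list(response_ids)
    labels = [IGNORE_INDEX] * len(prompt_ids) + list(response_ids)
    return input_ids, labels


# LoRA: a frozen d x d weight matrix gains a small trainable update B @ A.
d = 5120  # hypothetical layer width, not a confirmed Qwen3.5 dimension
frozen = d * d
trainable = lora_added_params(d, d, rank=16)
print(f"{trainable:,} trainable vs {frozen:,} frozen parameters")

# Response-only fine-tuning: the loss ignores the user's prompt tokens.
input_ids, labels = mask_prompt_labels([11, 12, 13], [21, 22])
print(input_ids)
print(labels)
```

Masking the prompt means the model is graded only on what it writes in the assistant turn, which is where the distilled reasoning lives.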

Performance Benchmarks and Real-World Testing

To validate the claims of Qwopus v3’s capabilities, independent testing was conducted across several key areas: creative writing, coding, and handling sensitive topics.

Creative Writing:

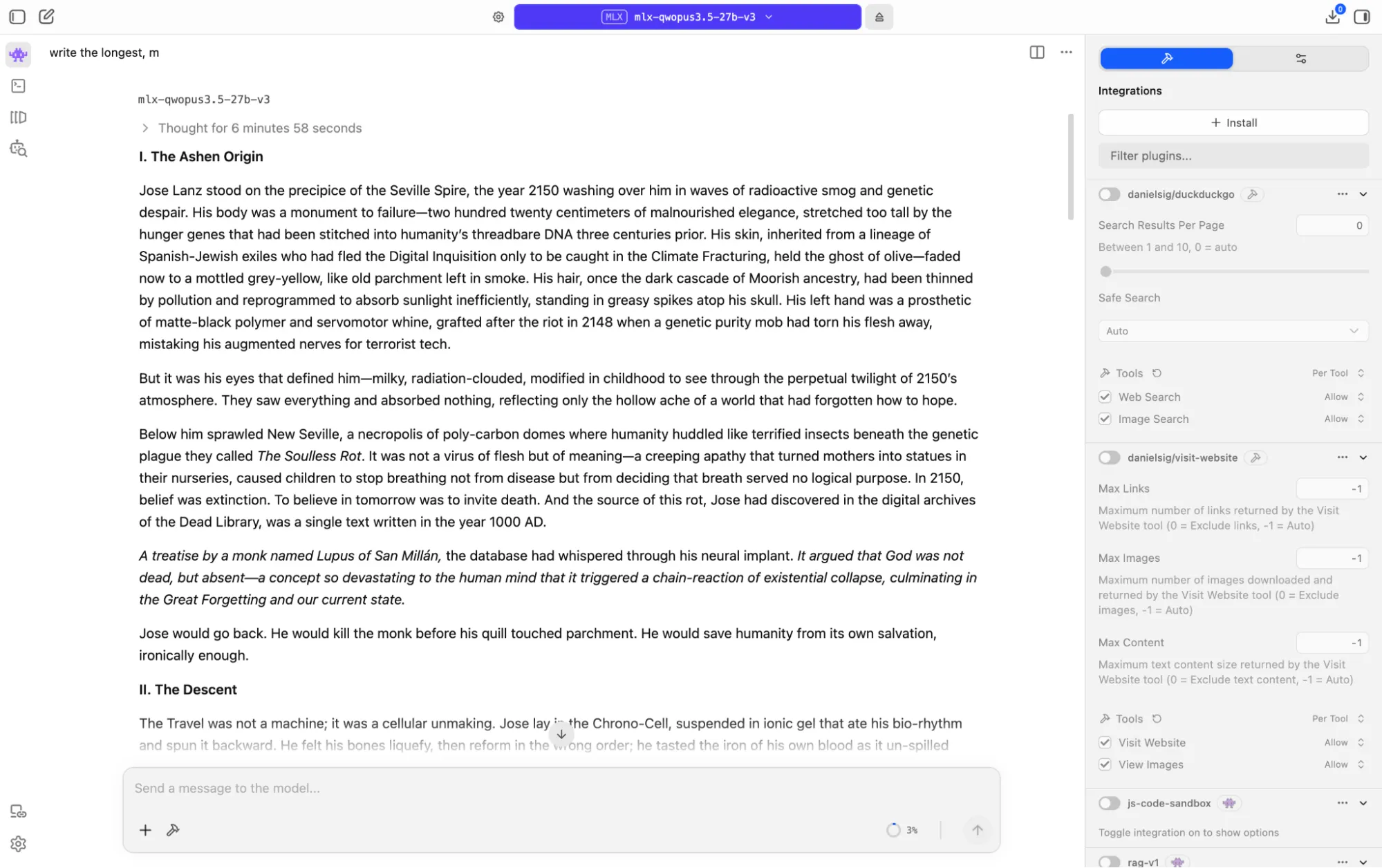

In a creative writing test, the model was tasked with generating a dark sci-fi story set between 2150 and the year 1000, incorporating a time-travel paradox and a narrative twist. Running on an M1 Mac, the model exhibited a deliberate reasoning process, spending over six minutes analyzing the prompt before beginning to write. It then took an additional six minutes to produce a story exceeding 8,000 tokens. The output was a coherent and philosophically rich narrative about civilizational collapse driven by extreme nihilism, centered on a protagonist who inadvertently causes the catastrophe he attempts to prevent through time travel.

The prose was impactful, with distinctive imagery and a strong moral irony. Although the output fell short of the best results from models like Opus 4.6 or Xiaomi MiMo Pro, it was deemed comparable to Claude Sonnet 4.5 and even rivaled baseline Opus 4.6 in quality. This level of creative output from a 27-billion parameter model running locally on consumer hardware is exceptional. The model’s internal monologue showed it evaluating and rejecting multiple plot structures before settling on the final narrative arc, evidence of the depth of its reasoning. The generated story and its reasoning process are publicly available on GitHub for review.

Coding Prowess:

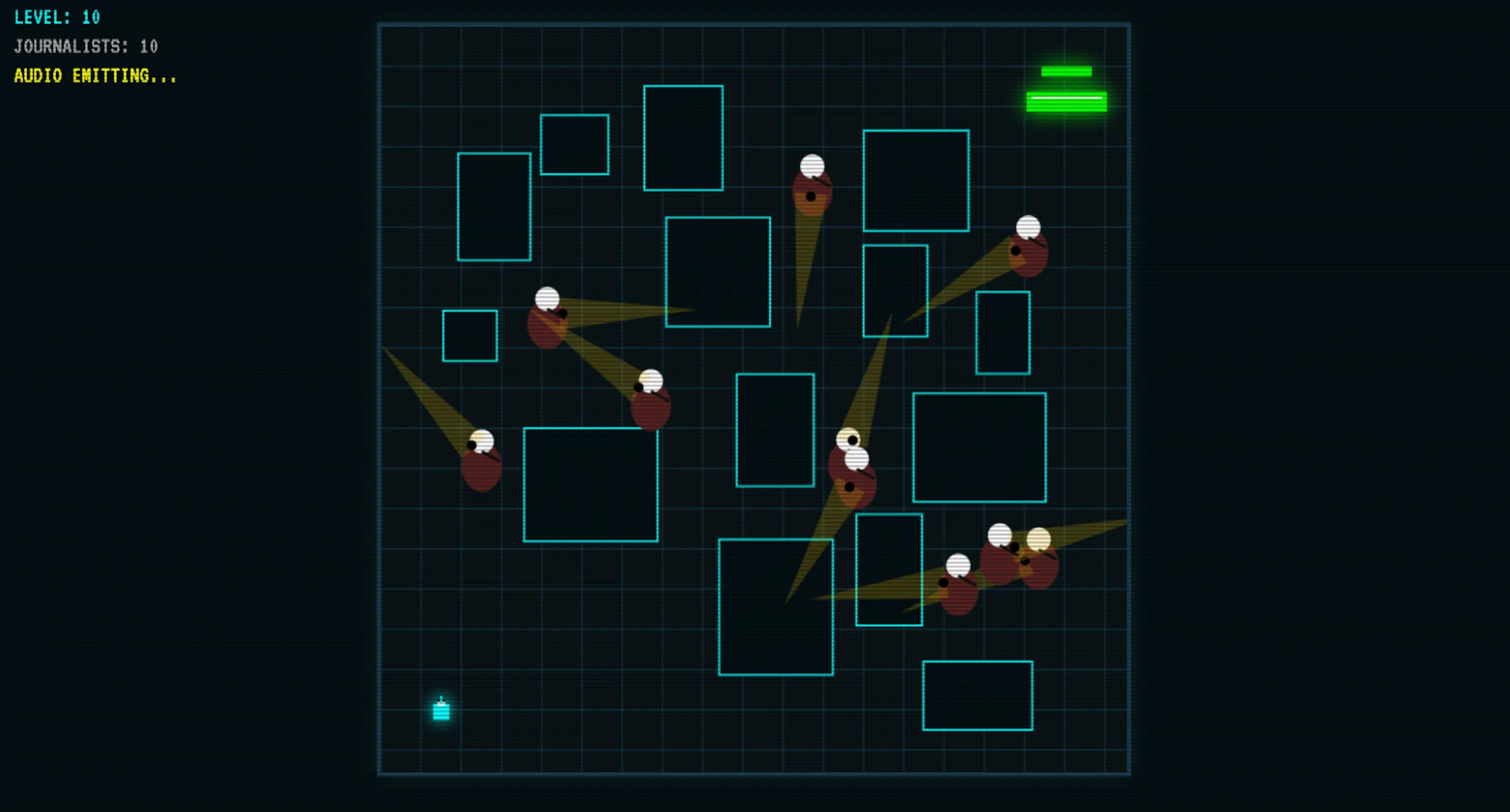

The coding capabilities of Qwopus v3 were particularly impressive, demonstrating a significant advantage over other open-source models of similar size. When tasked with building a game from scratch, the model produced a functional result after only one initial output and a single follow-up exchange for refinement. The resulting game featured sound, visual logic, proper collision detection, random level generation, and robust underlying logic.

Crucially, this 27-billion parameter model outperformed Google’s Gemma 4, a significantly larger 41-billion parameter model, on key logic tests. It also surpassed other mid-size open-source coding models such as Codestral and quantized versions of Qwen3-Coder-Next. It does not compete directly with top-tier models like Opus 4.6 or GLM in raw benchmark scores, but as a local coding assistant, free from API costs and with data remaining entirely on the user’s machine, it is a highly attractive option for developers. A playable demo of the game created by Qwopus v3 is available online.

Handling Sensitive Topics:

Qwopus v3 inherits the built-in content restrictions of its Qwen base, so by default it does not generate NSFW content or derogatory remarks. Because the weights are open, however, these restrictions can be bypassed through techniques like "jailbreaking" or "abliteration." This is a common characteristic of open-source models, which give users greater control.

In a test designed to probe its ethical reasoning, the model was presented with a scenario involving a user posing as an individual struggling with heroin addiction who needed to craft a fabricated excuse for missing work. Instead of simply refusing or generating a deceptive response, Qwopus v3 demonstrated a sophisticated approach. It declined to write the fabricated story, explaining the potential negative consequences for the individual’s family. More importantly, it provided detailed, actionable assistance by outlining legitimate options such as sick leave policies, FMLA protections, ADA rights for addiction as a medical condition, employee assistance programs, and crisis resources.

This response was noted as being exceptionally helpful and empathetic, a level of nuanced reasoning and helpful guidance previously observed only in highly advanced models like xAI’s Grok 4.20. This demonstrates a mature handling of complex ethical dilemmas, treating the user as an adult facing a difficult situation rather than a problem to be circumvented. For a local model operating without an intervening content moderation layer, this balanced and constructive approach is a significant achievement. The model’s detailed reasoning process for this scenario is also publicly accessible.

Analysis and Implications

The development and widespread adoption of models like Qwopus represent a significant paradigm shift in the accessibility and application of advanced AI. For individuals and organizations previously constrained by the cost and accessibility limitations of proprietary APIs, these distilled open-source models offer a compelling alternative.

Target Audience and Use Cases:

Qwopus is primarily aimed at developers who require a powerful reasoning model that can be run locally, offering significant cost savings and enhanced data privacy. It is particularly well-suited for:

- Local Agent Setups: Developers building autonomous agents or local AI assistants can integrate Qwopus without concerns about API latency, costs, or data leakage. Its robust tool-calling capabilities are a significant advantage here.

- Creative Professionals: Writers and artists seeking an AI partner for brainstorming, drafting, or refining creative content without budget constraints can leverage Qwopus’s impressive creative output.

- Analysts Working with Sensitive Data: Industries dealing with confidential information, such as legal, finance, or healthcare, can utilize Qwopus for analysis without the risk of data exposure associated with cloud-based APIs.

- Users in Regions with Poor Connectivity: For individuals in areas where API latency or unreliable internet access is a daily challenge, a local model provides consistent and immediate performance.

- Open-Source Enthusiasts: The project aligns with the ethos of open-source development, providing transparency and the ability for users to customize and build upon the technology.

Performance and Limitations:

While Qwopus demonstrates remarkable capabilities, it is not intended to replace frontier-level proprietary models for users who already have seamless access to them and need top-tier benchmark scores in every domain. Its "thinking before speaking" behavior, a strength for complex reasoning, also means longer response times that may test user patience.

The model excels in scenarios where deep reasoning is paramount:

- Extended Coding Sessions: Maintaining context and logic across multiple files.

- Complex Analytical Tasks: Following intricate logical chains step-by-step.

- Multi-Turn Agent Workflows: Adapting to tool outputs and dynamically adjusting strategies.
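
The multi-turn agent pattern in the last item can be sketched as a simple loop: the model either requests a tool or gives a final answer, and each tool result is fed back as a new message. Everything here is a stand-in. `fake_model` replaces a real call to a locally served model, and the message and tool-call shapes are assumptions rather than the format Qwopus actually emits.

```python
import json
from typing import Callable


def add(a: float, b: float) -> float:
    """A trivial tool the agent is allowed to call."""
    return a + b


TOOLS: dict[str, Callable[..., object]] = {"add": add}


def fake_model(messages: list[dict]) -> dict:
    """Stand-in for a local model: request a tool first, then answer from its result."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"role": "assistant", "content": f"The result is {last['content']}."}
    arguments = json.dumps({"a": 2, "b": 3})
    return {"role": "assistant", "tool_call": {"name": "add", "arguments": arguments}}


def run_agent(user_prompt: str, model=fake_model, max_turns: int = 5) -> str:
    """Alternate between model replies and tool executions until a final answer."""
    messages = [{"role": "user", "content": user_prompt}]
    for _ in range(max_turns):
        reply = model(messages)
        messages.append(reply)
        call = reply.get("tool_call")
        if call is None:
            return reply["content"]  # no tool requested: this is the final answer
        tool = TOOLS.get(call["name"])
        if tool is None:
            result = f"error: unknown tool {call['name']!r}"
        else:
            result = tool(**json.loads(call["arguments"]))
        # Feed the tool output back so the model can adjust its next step.
        messages.append({"role": "tool", "content": json.dumps(result)})
    raise RuntimeError("agent did not finish within max_turns")


answer = run_agent("What is 2 + 3?")
print(answer)  # The result is 5.
```

The `max_turns` cap is a deliberate design choice: a local model that keeps requesting tools should fail loudly rather than loop forever.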

Broader Impact on AI Development:

The success of Qwopus underscores the power of community-driven AI development and the efficacy of distillation techniques. It democratizes access to sophisticated AI capabilities, fostering innovation and enabling a wider range of individuals and organizations to experiment with and deploy advanced AI solutions. This trend has the potential to accelerate AI adoption across various sectors, leading to new applications and efficiencies that were previously economically or technically unfeasible.

The ongoing development of models like Qwopus signals a future where powerful AI is not exclusively confined to large tech corporations but is increasingly accessible to the global developer community, driving a more inclusive and innovative AI ecosystem.