In 2024, global digital infrastructure reached a critical juncture: data centers consumed approximately 415 terawatt-hours (TWh) of electricity. According to the International Energy Agency (IEA), this figure represents roughly 1.5% of total global electricity demand, highlighting the scale of the infrastructure required to power the modern internet, cloud computing, and the fast-growing field of generative artificial intelligence. As these facilities become the backbone of the global digital economy, the IEA projects that their share of world electricity consumption will climb to between 2% and 3% by 2030. To manage this escalating demand, industry leaders and engineers have historically relied on a single, pivotal metric: Power Usage Effectiveness, or PUE. However, as the industry enters the era of "AI factories," the limitations of this metric are becoming as significant as its benefits.

The Genesis and Definition of Power Usage Effectiveness

Power Usage Effectiveness (PUE) is a ratio used to determine how efficiently a data center uses its power. Developed in 2007 by The Green Grid, a non-profit industry consortium, the metric was designed to give operators a standardized way to measure the energy efficiency of their facilities. At its core, PUE measures the relationship between the total energy entering a data center and the energy actually delivered to the IT equipment, such as servers, storage arrays, and networking switches.

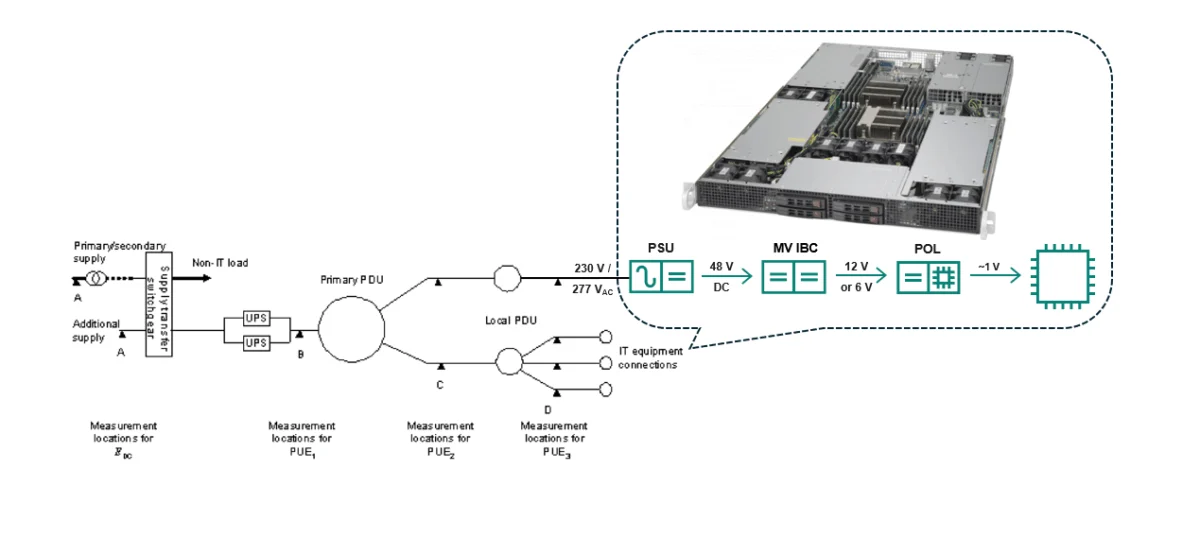

The formula for calculating PUE is mathematically straightforward: PUE equals the Total Facility Power divided by the IT Equipment Power. In this equation, "Total Facility Power" includes everything required to keep the building operational, including cooling systems (chillers, CRAC units, and fans), lighting, power distribution units (PDUs), and uninterruptible power supplies (UPS). "IT Equipment Power" refers to the energy consumed by the hardware that performs the actual computing tasks.
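The ratio is simple enough to compute directly from meter readings. The sketch below is a minimal illustration using hypothetical annual figures; the function names are ours, not part of any standard tool. The same ratio works with instantaneous power (kW) or energy totals (kWh), provided both terms cover the same interval. It also shows that `PUE - 1` is the overhead energy spent per unit of IT energy, which is why a PUE of 2.0 means one extra watt of overhead for every watt of computing.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness = total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be less than IT energy")
    return total_facility_kwh / it_equipment_kwh


def overhead_per_it_kwh(pue_value: float) -> float:
    """Non-IT energy (cooling, lighting, distribution losses) spent per kWh of IT energy."""
    return pue_value - 1.0


# Hypothetical meter readings for one year (all values are illustrative).
it_kwh = 8_000_000.0
cooling_kwh = 3_200_000.0
power_distribution_kwh = 600_000.0
lighting_and_misc_kwh = 200_000.0
total_kwh = it_kwh + cooling_kwh + power_distribution_kwh + lighting_and_misc_kwh

ratio = pue(total_kwh, it_kwh)
print(f"PUE = {ratio:.2f}")
print(f"Overhead per IT kWh = {overhead_per_it_kwh(ratio):.2f} kWh")
```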

A "perfect" PUE score is 1.0. This theoretical ideal signifies that every single watt of electricity entering the facility is utilized directly for computing, with zero energy lost to overhead. In practice, a score of 1.0 is impossible to achieve because electrical resistance, the laws of thermodynamics, and the necessity of cooling systems inevitably result in some energy loss.

A Chronology of Data Center Efficiency: From 2000 to 2024

The history of PUE over the last two decades follows what analysts describe as a "hockey stick" curve, characterized by a period of rapid, dramatic improvement followed by a long, stubborn plateau.

In the early 2000s, before PUE became a standardized benchmark, many data centers were notoriously inefficient. Facilities often operated with PUE ratings of 2.0 or higher, meaning that for every watt used by a server, another full watt was wasted on cooling and power conversion. These legacy sites were frequently cooled by massive, energy-hungry air conditioning units that lacked the precision of modern systems.

Between 2007 and 2013, the industry experienced a "golden age" of efficiency gains. Driven by the introduction of the PUE metric and rising energy costs, operators began implementing "low-hanging fruit" solutions. These included hot-aisle/cold-aisle containment, which prevents the mixing of cold intake air and hot exhaust air, and the adoption of "free cooling" techniques that utilize outside air to regulate temperatures. During this window, the global average PUE plummeted from above 2.0 to approximately 1.6.

However, from 2014 to the present day, the rate of improvement has slowed significantly. Data from the Uptime Institute and Google Sustainability indicates that the industry has hit a plateau. While hyperscale leaders like Google, Meta, and Amazon Web Services (AWS) have pushed their facilities to "excellent" PUE ratings of 1.1 to 1.2, the broader global industry average remains stuck between 1.6 and 1.8. This stagnation suggests that traditional mechanical and architectural optimizations have reached their limits, and further gains will require a more granular look at the hardware level.

Understanding the PUE Rating Scale

To evaluate a data center’s performance, the industry commonly uses an informal rating scale based on PUE ratios:

- 1.0 (Perfect): A theoretical ideal where no energy is wasted on overhead.

- 1.1 – 1.2 (Excellent): Achieved by hyperscale leaders. These facilities often utilize advanced liquid cooling, AI-driven thermal management, and custom-designed electrical architectures.

- 1.3 – 1.5 (Good): The benchmark for modern, well-designed enterprise facilities that employ contemporary best practices in cooling and power distribution.

- 1.6 – 1.8 (Average): The current global industry average, representing a mix of older facilities and newer sites that may not yet have fully optimized their cooling loads.

- 2.0+ (Inefficient): Typical for legacy facilities, where at least half of the incoming energy goes to cooling and infrastructure overhead rather than to computing.

While these numbers provide a quick snapshot of a facility’s health, they do not tell the whole story of a data center’s carbon footprint or its overall operational efficiency.
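The rating bands listed above can be expressed as a simple lookup. This is a sketch under stated assumptions, since the scale is a rule of thumb rather than a formal standard: each band's upper bound is treated as inclusive, values that fall between the listed ranges (such as 1.25) are placed in the next band up, and the 1.8 to 2.0 range, which the scale leaves unnamed, is reported as such rather than guessed.

```python
def pue_rating(pue: float) -> str:
    """Map a PUE value onto the informal rating bands.

    Assumptions (not part of any formal standard): upper bounds are
    inclusive, values between listed ranges go to the next band up, and
    the 1.8-2.0 gap is reported as an unnamed band.
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    if pue == 1.0:
        return "Perfect (theoretical)"
    if pue <= 1.2:
        return "Excellent"
    if pue <= 1.5:
        return "Good"
    if pue <= 1.8:
        return "Average"
    if pue < 2.0:
        return "Between Average and Inefficient (unnamed band)"
    return "Inefficient"


for value in (1.0, 1.1, 1.4, 1.58, 1.9, 2.3):
    print(f"{value:.2f} -> {pue_rating(value)}")
```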

The PUE Loophole: A Hidden Inefficiency in the Server Chassis

Despite its widespread adoption, PUE contains a significant blind spot, sometimes called the PUE Loophole. The loophole exists because the metric measures how efficiently a data center delivers power to the server, but it ignores how efficiently the server uses that power once it arrives.

In a traditional PUE calculation, any power consumed inside the server chassis is categorized as "IT Equipment Power" (the denominator). This creates a misleading scenario. If a server contains an inefficient Power Supply Unit (PSU) that wastes significant energy during the conversion from Alternating Current (AC) to Direct Current (DC), that waste is counted as "useful" IT power rather than "wasted" facility power.

Power typically arrives at the server rack as AC, often at 240V. However, the semiconductors (the CPUs and GPUs) require low-voltage DC power. The AC-to-DC conversion happens within the server's PSU, such as a Common Redundant Power Supply (CRPS) module. Conventional power supplies are often only about 95% efficient, which means that roughly 5% of the power entering the server is lost as heat before it ever reaches a processor.

Because this 5% loss occurs inside the server, it does not worsen the PUE score and can even improve it. The loss is added to both the numerator (Total Facility Power) and the denominator (IT Equipment Power), and as long as the non-IT overhead stays roughly constant, adding the same amount to both terms of a ratio greater than 1.0 pushes that ratio toward 1.0. In other words, an inefficient server can technically lower a facility’s PUE. This removes the incentive for data center operators to demand more efficient AC/DC converters, as their primary performance metric does not penalize them for internal server losses.
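A small numeric sketch makes the loophole concrete. It assumes a deliberately simplified facility: the only loss inside the server is the PSU, the processors consume a hypothetical 1,000 kW of DC power, and non-IT overhead is held fixed at a hypothetical 500 kW. Only the PSU efficiency changes between the two runs.

```python
def facility_metrics(chip_kw: float, psu_efficiency: float, overhead_kw: float):
    """Return (IT power, total facility power, PUE) for a simplified facility.

    Simplifying assumptions: the only loss inside the server is the PSU,
    and non-IT overhead (cooling, lighting, distribution) is held fixed.
    """
    it_kw = chip_kw / psu_efficiency  # power drawn at the PSU input (PUE denominator)
    total_kw = it_kw + overhead_kw    # the PUE numerator includes IT power too
    return it_kw, total_kw, total_kw / it_kw


CHIP_KW = 1_000.0    # hypothetical DC power actually consumed by processors
OVERHEAD_KW = 500.0  # hypothetical fixed facility overhead

for eff in (0.95, 0.97):
    it_kw, total_kw, pue = facility_metrics(CHIP_KW, eff, OVERHEAD_KW)
    print(f"PSU {eff:.0%}: IT {it_kw:,.1f} kW, total {total_kw:,.1f} kW, PUE {pue:.3f}")
```

Under these assumptions the 97% supply cuts total facility power by about 22 kW, yet PUE rises from 1.475 to 1.485: the metric moves in the wrong direction.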

The Impact of Artificial Intelligence and High-Density Computing

The urgency to address the PUE Loophole is growing alongside the rise of Artificial Intelligence. AI workloads require massive arrays of GPUs, such as NVIDIA’s H100 or B200 chips, which consume significantly more power than traditional CPUs. A single modern AI server rack can demand 50kW to 100kW of power, compared to the 5kW to 10kW typical of a decade ago.

As power density increases, the 5% waste within the PSU becomes a massive thermal and financial burden. Wasted energy translates directly into heat, which then requires even more energy for the facility’s cooling systems to remove. This "cascading inefficiency" means that the true cost of an inefficient power supply is felt twice: once in the lost electricity and again in the increased cooling load.
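The "felt twice" effect can be estimated with back-of-the-envelope arithmetic. The sketch below assumes a hypothetical 100 kW rack running at constant load all year, a 95% efficient PSU, and a facility PUE of 1.5, and it further assumes that overhead scales proportionally with IT load, so every watt lost in the PSU costs roughly PUE watts at the utility meter. Real cooling systems do not scale perfectly linearly, so treat the result as an order-of-magnitude estimate.

```python
HOURS_PER_YEAR = 8_760


def annual_psu_loss_kwh(rack_input_kw: float, psu_efficiency: float, pue: float) -> tuple[float, float]:
    """Return (direct PSU loss, loss including facility overhead) in kWh per year.

    Assumes constant load all year and overhead that scales with IT load
    at the given PUE, so each watt lost in the PSU costs ~`pue` watts at the meter.
    """
    loss_kw = rack_input_kw * (1.0 - psu_efficiency)  # heat dumped inside the chassis
    direct_kwh = loss_kw * HOURS_PER_YEAR
    with_overhead_kwh = direct_kwh * pue              # add cooling and distribution overhead
    return direct_kwh, with_overhead_kwh


direct, at_meter = annual_psu_loss_kwh(rack_input_kw=100.0, psu_efficiency=0.95, pue=1.5)
print(f"Direct PSU loss: {direct:,.0f} kWh/yr; at the meter: {at_meter:,.0f} kWh/yr")
```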

Technological Solutions: Moving Beyond Silicon

To close the PUE loophole and move toward a "grid-to-chip" view of efficiency, the industry is increasingly looking toward alternative semiconductor materials like Gallium Nitride (GaN). Power supplies built with GaN transistors, rather than traditional silicon transistors, can achieve efficiencies of 97% or better.

While a jump from 95% to 97% efficiency might seem incremental, the effect is substantial. For the same power delivered to the components, raising PSU efficiency from 95% to 97% cuts conversion losses by about 40%, and reaching 98% cuts them by about 60%. On a global scale, industry analysts suggest that transitioning to these more efficient power topologies could save more than 37 billion kilowatt-hours (kWh) annually. This is equivalent to the energy required to power approximately 40 hyperscale data centers, or enough electricity to sustain millions of households.
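The percentage-point arithmetic is easy to check. A PSU delivering output `P` at efficiency `e` draws `P / e`, so it loses `P / e - P`; comparing that loss at two efficiencies for the same output gives the fractional reduction. The sketch below is a minimal illustration of that calculation and is not tied to any particular product.

```python
def conversion_loss(output_kw: float, efficiency: float) -> float:
    """Power lost in conversion when a PSU must deliver `output_kw`."""
    return output_kw / efficiency - output_kw


def loss_reduction(old_eff: float, new_eff: float) -> float:
    """Fractional cut in conversion loss for the same delivered output."""
    return 1.0 - conversion_loss(1.0, new_eff) / conversion_loss(1.0, old_eff)


for new_eff in (0.97, 0.98):
    print(f"95% -> {new_eff:.0%}: losses fall by {loss_reduction(0.95, new_eff):.1%}")
```

Because losses are small relative to throughput, a few points of efficiency remove a large share of them: roughly 41% of losses for 95% to 97%, and roughly 61% for 95% to 98%.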

Broader Implications and the Future of Metrics

The limitations of PUE have sparked a movement within the industry to adopt more comprehensive metrics. Organizations are increasingly looking at two companion metrics from The Green Grid: Carbon Usage Effectiveness (CUE), which measures carbon emissions per kilowatt-hour of IT energy, and Water Usage Effectiveness (WUE), which measures liters of site water used per kilowatt-hour of IT energy.
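Both metrics share PUE's denominator, IT equipment energy, which makes them easy to compute alongside it. The sketch below uses hypothetical annual figures and an assumed grid carbon intensity of 0.4 kgCO2eq/kWh. When all of a facility's energy comes from a single supply, CUE works out to that carbon intensity multiplied by PUE, which the example reproduces (0.4 × 1.5 = 0.6).

```python
def cue(total_co2_kg: float, it_kwh: float) -> float:
    """Carbon Usage Effectiveness in kgCO2eq per kWh of IT energy."""
    return total_co2_kg / it_kwh


def wue(site_water_liters: float, it_kwh: float) -> float:
    """Water Usage Effectiveness in liters per kWh of IT energy."""
    return site_water_liters / it_kwh


# Hypothetical annual figures for one facility (illustrative only).
it_kwh = 8_000_000.0
total_facility_kwh = 12_000_000.0  # corresponds to a PUE of 1.5
grid_kg_co2_per_kwh = 0.4          # assumed carbon intensity of the supply
site_water_liters = 14_400_000.0

total_co2_kg = total_facility_kwh * grid_kg_co2_per_kwh
print(f"CUE = {cue(total_co2_kg, it_kwh):.2f} kgCO2eq/kWh")
print(f"WUE = {wue(site_water_liters, it_kwh):.2f} L/kWh")
```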

Furthermore, there is a push for wider use of Total-power Usage Effectiveness (TUE). TUE multiplies the traditional PUE (facility efficiency) by ITUE, or IT-power Usage Effectiveness (efficiency inside the IT equipment), providing a holistic view of how much energy actually reaches the transistors to perform a calculation.
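Because TUE is the product of PUE and ITUE, it equals total facility energy divided by the energy that reaches the compute components, so in-server losses can no longer hide in the denominator. The sketch below assumes, for simplicity, that PSU conversion is the only loss inside the server (a real ITUE would also count fans, voltage regulators, and similar losses) and uses hypothetical figures of 1,000 kWh reaching the processors and 500 kWh of fixed facility overhead.

```python
def itue(it_input_kwh: float, compute_kwh: float) -> float:
    """IT-power usage effectiveness: IT input energy / energy reaching compute components."""
    return it_input_kwh / compute_kwh


def tue(pue: float, itue_value: float) -> float:
    """Total-power usage effectiveness: facility and in-server overhead combined."""
    return pue * itue_value


COMPUTE_KWH = 1_000.0  # hypothetical energy reaching the processors
OVERHEAD_KWH = 500.0   # hypothetical fixed facility overhead

for psu_eff in (0.95, 0.97):
    it_kwh = COMPUTE_KWH / psu_eff  # only PSU losses modeled inside the server
    total_kwh = it_kwh + OVERHEAD_KWH
    pue = total_kwh / it_kwh
    t = tue(pue, itue(it_kwh, COMPUTE_KWH))
    print(f"PSU {psu_eff:.0%}: PUE {pue:.3f}, TUE {t:.3f}")
```

Under these assumptions PUE favors the less efficient supply, while TUE correctly ranks the 97% supply as better.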

Industry analysts suggest that the next phase of data center evolution will be defined by "AI factories": facilities designed from the ground up for maximum density and minimum waste. In these environments, PUE will remain a foundational tool for infrastructure benchmarking, but it will no longer be the sole "gold standard."

Conclusion

The data center industry stands at a crossroads. While PUE has been instrumental in driving down energy waste over the last 17 years, the plateau in efficiency gains and the specific demands of AI require a new approach. Recognizing the PUE loophole is the first step toward a more sustainable digital future. By incentivizing the adoption of advanced power conversion technologies like GaN and broadening the scope of efficiency metrics, the industry can ensure that the digital economy continues to grow without placing an unsustainable burden on the world’s energy grids. The transition from "facility efficiency" to "grid-to-chip efficiency" is no longer just a technical preference; it is an economic and environmental necessity.