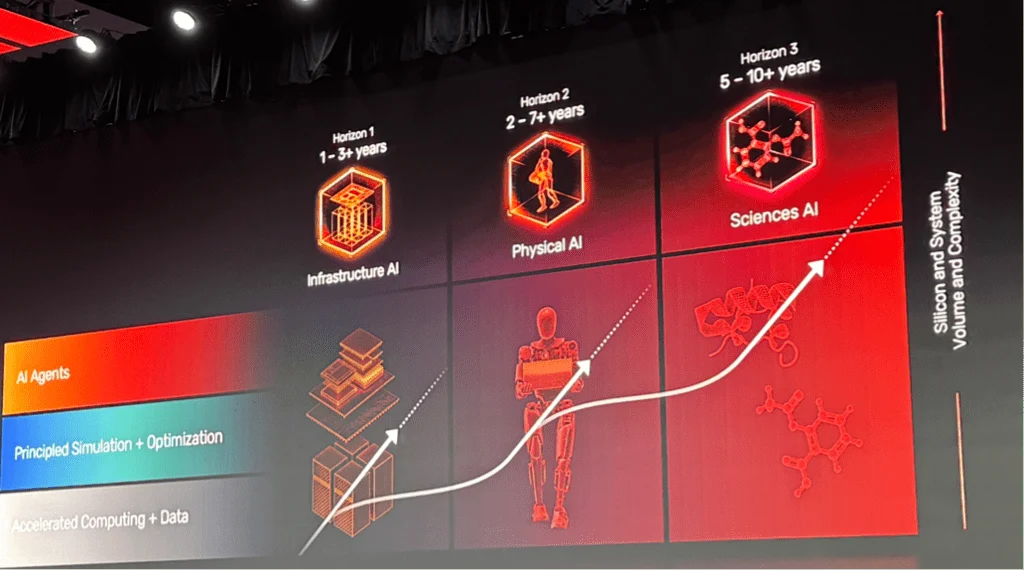

The global industrial landscape is on the cusp of a transformative shift as humanoid robotics, powered by the convergence of generative and agentic artificial intelligence, moves from experimental laboratories to the front lines of the global economy. This evolution, often termed "Physical AI," represents a departure from traditional robotics, which relied on rigid, pre-programmed routines, toward autonomous systems capable of reasoning, perceiving, and interacting with the physical world in a human-like manner. Industry leaders and economic analysts now project that this sector could eventually reach a scale equal to a sizable fraction of today's global GDP, with current estimates suggesting a potential market value of $25 trillion in the coming decades.

The rapid maturation of Large Language Models (LLMs) and transformer architectures has provided the missing "brain" for humanoid platforms. While sensors and actuators have existed for decades, the ability to interpret complex environmental data and translate it into dexterous physical movement has remained the primary bottleneck. Today, however, companies such as Cadence, Nvidia, Synopsys, and Texas Instruments are reporting a 100-fold increase in capability compared to just a few years ago. This surge is driving a staged sequence of adoption, beginning in automotive and electronics factories, expanding rapidly into logistics and professional cleaning, and eventually reaching domestic eldercare.

The Economic and Strategic Timeline of Humanoid Integration

The trajectory of humanoid robotics is defined by a clear chronological progression. In the early 2010s, robotics was dominated by articulated arms used for repetitive tasks in controlled environments. By 2020, the integration of computer vision allowed for more flexible automation. Analysts, however, are now calling the period between 2024 and 2026 the "Great Acceleration."

According to recent data from TrendForce, China is preparing for a massive industrial surge, with an expected 94% increase in humanoid robot output by 2026. Domestic firms like Unitree and AgiBot are positioned to capture nearly 80% of that market. This aggressive manufacturing push is mirrored by a strategic shift in the West, where the focus is on the "sim-to-real" gap: the challenge of training AI in virtual environments and ensuring those skills transfer accurately to physical hardware.

Cadence CEO Anirudh Devgan recently underscored the sheer scale of this movement, noting that while the current global GDP sits at approximately $110 trillion, the robotics category could realistically reach $25 trillion, a little under a quarter of that figure. This projection is based on the assumption that humanoids will transition from niche industrial tools to general-purpose assistants capable of filling labor shortages in aging societies and increasing productivity in virtually every sector of the economy.

The Sensory Frontier: Vision, Touch, and Hearing

For a humanoid robot to be truly effective, it must replicate the human sensory experience with extreme precision. Currently, development is uneven across the various senses, with vision and language processing leading the way, while haptics and olfaction follow.

Vision and Language: The Leading Edge

Vision systems are the most mature, benefiting from over a decade of research in autonomous vehicles. Humanoids utilize high-resolution cameras and LiDAR to map rooms, identify floor types, and distinguish between objects. However, vision remains a non-trivial problem. As Marc Swinnen, director of product marketing at Synopsys, points out, the challenge lies in interpretation—not just seeing an object, but understanding its function and the risks it poses in a dynamic environment.

Language technology has seen a similar leap. Because LLMs are developed for a broad range of applications, robotics companies can leverage existing scale. This allows robots to not only follow verbal commands but to engage in "agentic" reasoning—interpreting the intent behind a command rather than just the words.

The Challenge of Haptics and Dexterous Manipulation

Touch is perhaps the most difficult sense to master. Humanoid hands must manage force, shear, slip, and temperature to interact safely with objects. In surgical robotics, the accuracy requirement is near-absolute: systems are expected to deliver "five nines" (99.999%) precision.

Sam Toba, senior product marketing manager at Synaptics, explains that "shear"—the force felt when an object is slipping through a grip—is a critical metric. To solve this, engineers are embedding miniaturized chips and sensors directly into the "fingertips" and "palms" of robotic hands. This allows for closed-loop processing at the edge, where the hand can react to a slipping object in milliseconds without waiting for a signal to travel to the robot’s central "brain."
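As a rough illustration of the closed-loop idea described above, the sketch below shows a single control tick that could run on a fingertip-level chip. It is not taken from any vendor's firmware. The `TactileSample` fields, the friction coefficient, the safety margin, and the force limits are all hypothetical values chosen for illustration, and slip is approximated with a simple Coulomb friction check.

```python
from dataclasses import dataclass


@dataclass
class TactileSample:
    # Hypothetical sensor fields, both in newtons.
    normal_force: float  # force pressing into the fingertip
    shear_force: float   # tangential force along the fingertip surface


def next_grip_force(sample, current_grip, friction_coeff=0.5,
                    safety_margin=0.8, step=0.5, max_grip=20.0):
    """Return the grip force (N) to command on the next control tick.

    Slip is assumed to be imminent when shear exceeds a fraction
    (safety_margin) of the Coulomb limit friction_coeff * normal_force.
    All default values are illustrative assumptions.
    """
    slip_limit = safety_margin * friction_coeff * sample.normal_force
    if sample.shear_force > slip_limit:
        # Tighten locally, with no round trip to the central processor.
        return min(current_grip + step, max_grip)
    return current_grip
```

Because the decision uses only the latest local sample, the loop can run at the sensor's own rate, and the central processor only needs to hear about the outcome.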

Hearing and Contextual Awareness

The next frontier in human-robot interaction is contextual hearing. Current systems can recognize words, but they often struggle with "intent." For example, a domestic robot must distinguish between a user talking to a spouse about coffee and a direct command to brew an espresso. John Weil of Synaptics notes that the industry is moving toward "beamforming" microphones and localized models that can determine the direction and tone of audio, allowing robots to ignore background noise and focus on relevant interactions.
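Determining the direction of a sound typically starts from the small difference in arrival time between microphones. The sketch below is a minimal two-microphone version of that idea, estimating the time difference of arrival by cross-correlation, which is one building block beamforming systems rely on. It is not a description of any Synaptics product; the function names, the far-field assumption, and the fixed speed of sound are illustrative assumptions.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s in air at roughly 20 degrees C (assumed constant)


def best_lag(left, right, max_lag):
    """Lag in samples of `right` relative to `left` that maximizes cross-correlation."""
    def score(lag):
        return sum(left[i] * right[i + lag]
                   for i in range(len(left))
                   if 0 <= i + lag < len(right))
    return max(range(-max_lag, max_lag + 1), key=score)


def arrival_angle_deg(left, right, mic_spacing_m, sample_rate_hz):
    """Source angle from broadside in degrees; positive means nearer the left mic."""
    # Physically possible delays are bounded by the microphone spacing.
    max_lag = math.ceil(mic_spacing_m / SPEED_OF_SOUND * sample_rate_hz)
    delay_s = best_lag(left, right, max_lag) / sample_rate_hz
    # Clamp so rounding to whole samples cannot push asin out of its domain.
    ratio = max(-1.0, min(1.0, delay_s * SPEED_OF_SOUND / mic_spacing_m))
    return math.degrees(math.asin(ratio))
```

For example, a click that reaches the right microphone two samples after the left one, at 16 kHz with 10 cm spacing, works out to roughly 25 degrees toward the left microphone. A robot could use an estimate like this to weight audio from the speaker's direction and discount sound arriving from elsewhere.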

Technical Infrastructure: From Sim-to-Real to Edge Computing

The shift toward Physical AI requires a fundamental rethinking of hardware and software architecture. One of the most significant hurdles is the "sim-to-real" gap. Nvidia and Cadence are currently collaborating to bridge this by using digital twins—virtual replicas of the physical world where AI agents can undergo millions of hours of training in a fraction of the time. These simulations include complex physics models that account for gravity, friction, and material density.
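To make the role of the physics model concrete, the toy sketch below simulates a block sliding to a stop under gravity and friction, then repeats the run with the friction coefficient drawn at random. Varying simulation parameters this way is commonly called domain randomization; the article's sources do not say which techniques Nvidia or Cadence use, so this is an assumption offered purely for illustration, and every name and value here is hypothetical.

```python
import random

GRAVITY = 9.81  # m/s^2


def slide_distance(initial_speed, friction_coeff, dt=0.001):
    """Integrate a block decelerating under kinetic friction until it stops."""
    speed, distance = initial_speed, 0.0
    while speed > 0.0:
        distance += speed * dt
        speed -= friction_coeff * GRAVITY * dt
    return distance


def randomized_trials(n, initial_speed=1.0, friction_range=(0.2, 0.6), seed=0):
    """Run n simulated pushes, each with a randomly drawn friction coefficient."""
    rng = random.Random(seed)  # seeded so runs are repeatable
    results = []
    for _ in range(n):
        mu = rng.uniform(*friction_range)
        results.append((mu, slide_distance(initial_speed, mu)))
    return results
```

In this particular scenario the block's mass cancels out, so density does not appear; a fuller simulator would need it for collisions, lifting, and tipping. The point of the randomized runs is that a policy trained across many plausible friction values is less likely to depend on one value that the real floor does not match.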

Furthermore, the mechanical complexity of humanoids presents a dual-layer problem. Unlike the automotive industry, which has mastered mechanical systems and is now solving the AI layer, robotics must solve both simultaneously. Matthew Bubis of Imagination Technologies notes that getting the outputs of an AI model to control hundreds of moving mechanical parts in real time is a hurdle that requires massive computational power.

To manage this, the industry is adopting a distributed computing model:

- The Edge (Sensors): Microcontrollers (MCUs) in the limbs handle immediate data filtering and noise reduction.

- The Hand/Limb: Secondary processing layers manage localized feedback loops, such as grip strength.

- The Central Brain: High-performance processors handle reasoning, navigation, and long-term mission planning.

This hierarchy prevents the central CPU from being overloaded by the thousands of data points generated by tactile and visual sensors every second.
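The three tiers above can be sketched as a small pipeline in which each stage passes upward only what the next stage needs. This is a simplified model under stated assumptions, not a real robot stack: the class names, the moving-average filter, the proportional grip correction, and the thresholds are all hypothetical.

```python
from collections import deque
from statistics import mean


class EdgeFilter:
    """Sensor-level MCU stage: moving-average filter over raw readings."""
    def __init__(self, window=4):
        self.samples = deque(maxlen=window)

    def update(self, raw):
        self.samples.append(raw)
        return mean(self.samples)


class LimbController:
    """Limb-level stage: local grip loop that escalates only large errors."""
    def __init__(self, target_force=5.0, gain=0.5, report_threshold=2.0):
        self.target_force = target_force
        self.gain = gain
        self.report_threshold = report_threshold

    def step(self, filtered_force):
        error = self.target_force - filtered_force
        correction = self.gain * error  # handled locally on every tick
        event = {"force_error": error} if abs(error) > self.report_threshold else None
        return correction, event


class CentralPlanner:
    """Central stage: sees only the events the limb chose to escalate."""
    def __init__(self):
        self.events = []

    def notify(self, event):
        self.events.append(event)


def run(readings):
    edge, limb, brain = EdgeFilter(), LimbController(), CentralPlanner()
    for raw in readings:
        _, event = limb.step(edge.update(raw))
        if event is not None:
            brain.notify(event)
    return brain.events
```

With eight force readings in which the grip suddenly weakens halfway through, the central planner in this sketch receives three events rather than eight samples, which is the kind of load reduction the hierarchy is meant to provide.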

Regional Variations and Market Adoption

The adoption of humanoid technology is not uniform, with distinct regional philosophies emerging. In Asia, particularly China, there is a heavy emphasis on user experience and rapid deployment. This has led to the integration of voice interfaces and massive displays in consumer-facing robots and vehicles. The Chinese market is currently the leader in "professional cleaning" humanoids and AI-powered domestic appliances.

In contrast, European and North American markets are characterized by a more conservative approach, with a primary focus on functional safety and security. As robots begin to work alongside humans in factories and homes, the "functional safety" standards developed for the automotive industry (such as ISO 26262) are being adapted for robotics. Ensuring that a 150-pound humanoid does not cause accidental injury is a prerequisite for widespread Western adoption.

Broader Impact and Future Implications

The implications of a $25 trillion robotics market are profound. If these projections hold, the global economy will undergo a productivity surge not seen since the Industrial Revolution. Humanoids offer a solution to the "demographic time bomb" facing nations like Japan, Germany, and South Korea, where a shrinking workforce threatens to stall economic growth.

However, the transition to Physical AI also introduces significant risks. Security threats to the Internet of Things (IoT) and industrial control systems are converging. A humanoid robot is, essentially, a mobile edge device with significant physical strength. Ensuring these systems are resilient against hacking and "hallucinations" (errors in AI reasoning) is now a top priority for developers.

As the industry moves from generative AI (which creates content) to agentic AI (which takes action) and finally to Physical AI (which interacts with the world), the boundaries between human and machine labor will continue to blur. The successful integration of these systems will depend not just on the "brain" of the AI, but on the sophisticated sensors and mechanical actuators that allow it to navigate the complexities of human reality.

The coming decade will likely see the transition of humanoids from high-tech novelties in automotive plants to ubiquitous fixtures of modern life. With China’s production surge and the West’s focus on high-precision "sim-to-real" training, the race to dominate the $25 trillion humanoid economy is officially underway. Future developments in vision, touch, and distributed computing will determine which platforms ultimately become the "general-purpose" standard for the next generation of global industry.