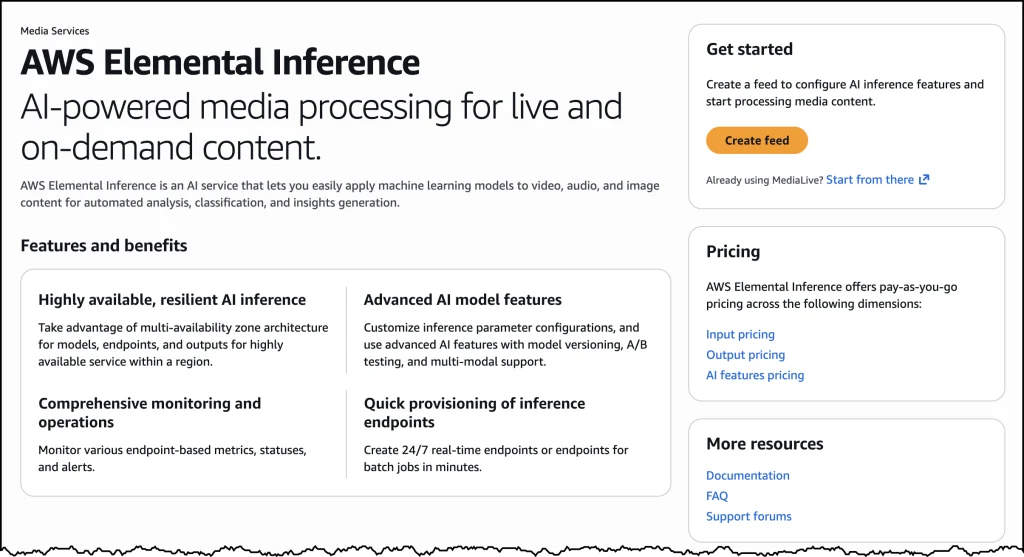

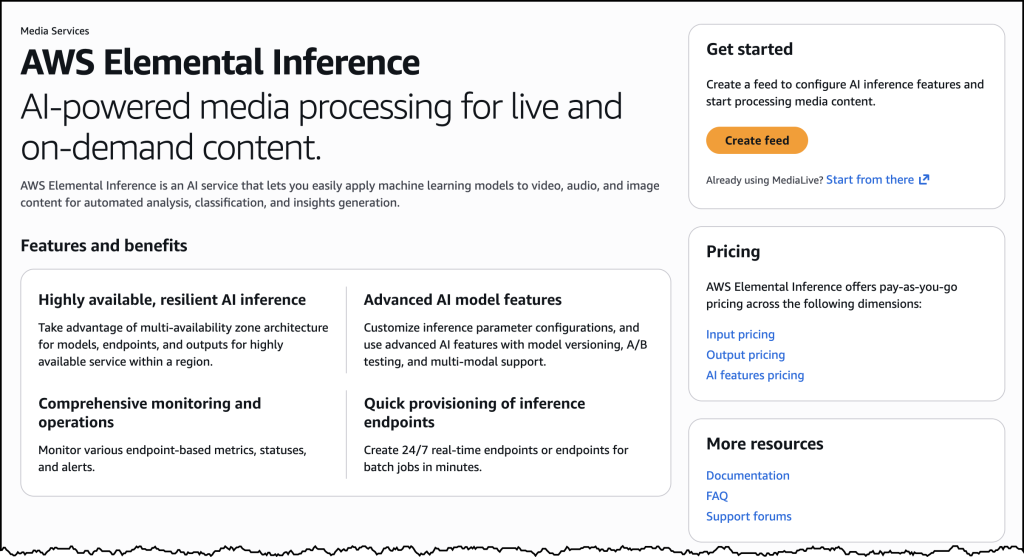

Amazon Web Services (AWS) has announced the immediate availability of AWS Elemental Inference, a fully managed artificial intelligence (AI) service that automatically transforms and optimizes live and on-demand video so that content creators can reach audiences across more platforms. The launch targets a common problem in the media and entertainment industry: adapting traditional landscape video for the vertical formats that dominate mobile and social media. At launch, AWS Elemental Inference converts video into vertical formats in real time, optimized for platforms such as TikTok, Instagram Reels, and YouTube Shorts, without extensive manual post-production work or specialized AI expertise.

Addressing the Mobile-First Revolution in Video Consumption

Video consumption has changed markedly in recent years, driven primarily by the ubiquity of smartphones and the rapid growth of short-form, vertical video. While traditional broadcasting and streaming still largely produce content in a 16:9 landscape aspect ratio, mobile viewers increasingly gravitate towards vertical (9:16) formats that are native to their devices and preferred social platforms. Industry reports point to this shift: by some estimates, mobile devices now account for over 70% of global video consumption, and engagement rates for vertical video on platforms like TikTok and Instagram Reels often surpass those of horizontal content. A 2023 study by Statista projected that the average daily time spent consuming digital video globally would continue to rise, with short-form content playing a disproportionately large role in driving this growth.

This gap between how content is produced and how it is consumed creates a significant operational hurdle for broadcasters, live streamers, and content creators. Manually converting landscape footage into engaging vertical formats is time-consuming work for specialized video editors, who must crop, reframe, and re-edit each shot to keep the subject in view and preserve visual quality. Because viral moments unfold quickly on social media, delays in adapting content mean missed opportunities and audience engagement lost to competitors or to platforms that are inherently "mobile-first." AWS Elemental Inference addresses this by automating the conversion, so content creators can stay relevant and capture audience attention without incurring substantial operational overhead.
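To make the reframing problem concrete, here is a minimal sketch of the arithmetic behind a full-height 9:16 crop of a landscape frame. It is not how AWS Elemental Inference chooses its crop; the `CropWindow` type, the `vertical_crop` function, and the `focus_x` parameter are hypothetical names used only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CropWindow:
    x: int       # left edge of the crop, in source pixels
    y: int       # top edge of the crop, in source pixels
    width: int
    height: int


def vertical_crop(src_width: int, src_height: int, focus_x: float = 0.5) -> CropWindow:
    """Return a full-height 9:16 crop window centered on focus_x (0.0 to 1.0)."""
    if not 0.0 <= focus_x <= 1.0:
        raise ValueError("focus_x must be between 0.0 and 1.0")
    # Keep the full source height and derive the width from the 9:16 ratio.
    width = src_height * 9 // 16
    width -= width % 2  # round down to an even width, which many encoders expect
    if width > src_width:
        raise ValueError("source is too narrow for a full-height 9:16 crop")
    # Center the window on the focus point, then clamp it inside the frame.
    left = round(focus_x * src_width - width / 2)
    left = max(0, min(left, src_width - width))
    return CropWindow(x=left, y=0, width=width, height=src_height)


window = vertical_crop(1920, 1080)
print(window)
```

For a 1920x1080 source, this sketch yields a 606-pixel-wide window starting at x = 657, so a vertical crop keeps roughly a third of the frame width. Choosing where that window sits for every shot is the part that makes manual conversion slow.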

The Technical Core: Agentic AI and Foundation Models in Action

At the core of AWS Elemental Inference is an agentic AI application: a system that operates autonomously, analyzing video streams in real time and deciding which optimizations to apply. This automation removes the need for human decision-making in tasks such as vertical video cropping and clip generation. The service uses fully managed foundation models (FMs), which are pre-trained on vast datasets and continually updated and optimized by AWS. As a result, users do not need to build, train, or manage machine learning models themselves, and they do not need dedicated AI teams or specialized data science expertise within their organizations.

Low latency is a key differentiator. Traditional post-processing methods can introduce latency measured in minutes, whereas AWS Elemental Inference processes video with a latency of just 6–10 seconds. This near real-time capability matters for live events, news broadcasts, and other time-sensitive content, where how quickly a clip reaches social platforms can determine its reach. The service also follows a "process once, optimize everywhere" methodology: a single video stream can undergo multiple AI-powered transformations simultaneously, such as vertical cropping and highlight clip generation, without being reprocessed for each capability. Running these transformations in parallel reduces compute and processing time and simplifies workflows.

The service integrates with existing AWS Elemental MediaLive workflows, so broadcasters can add AI features to their current video processing pipelines without overhauling their infrastructure. The goal is to enhance established media operations rather than disrupt them.
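The "process once, optimize everywhere" idea can be sketched as a single analysis pass whose result is shared by several features. This is a conceptual illustration only, not the service's internal design; `analyze`, `smart_crop`, `clip_marker`, `process_once`, the frame fields, and the 0.8 threshold are all hypothetical.

```python
from typing import Callable, Dict, Iterable, List

Frame = dict      # stand-in for a decoded video frame
Analysis = dict   # stand-in for per-frame model output


def analyze(frame: Frame) -> Analysis:
    """Hypothetical single analysis pass (placeholder for model inference)."""
    return {
        "index": frame["index"],
        "subject_x": frame["subject_x"],
        "score": frame["score"],
    }


def smart_crop(analysis: Analysis) -> dict:
    # Reuse the shared analysis to decide where the crop should center.
    return {"index": analysis["index"], "focus_x": analysis["subject_x"]}


def clip_marker(analysis: Analysis) -> dict:
    # Reuse the same analysis to flag frames that might belong in a highlight.
    # The 0.8 threshold is an arbitrary illustrative value.
    return {"index": analysis["index"], "highlight": analysis["score"] >= 0.8}


def process_once(
    frames: Iterable[Frame],
    features: Dict[str, Callable[[Analysis], dict]],
) -> Dict[str, List[dict]]:
    results: Dict[str, List[dict]] = {name: [] for name in features}
    for frame in frames:
        analysis = analyze(frame)  # the expensive step runs once per frame
        for name, feature in features.items():
            results[name].append(feature(analysis))  # cheap fan-out per feature
    return results


frames = [
    {"index": 0, "subject_x": 0.4, "score": 0.2},
    {"index": 1, "subject_x": 0.6, "score": 0.9},
]
outputs = process_once(frames, {"crop": smart_crop, "clips": clip_marker})
print(outputs)
```

Adding a third feature in this sketch means adding one more entry to the `features` mapping; the analysis step is not repeated.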

Seamless Integration and Flexible Deployment Options

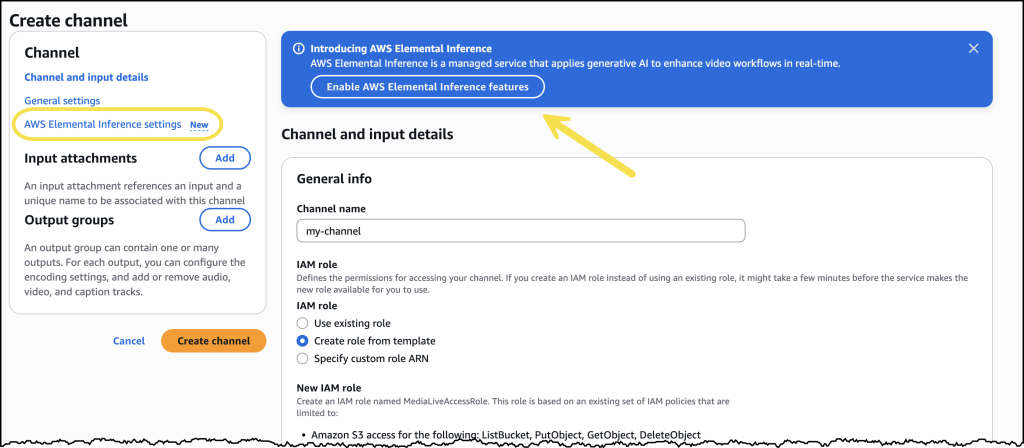

AWS Elemental Inference can be deployed in two ways, depending on user preference and existing technical architecture: through a standalone console experience or through direct integration within the AWS Elemental MediaLive console.

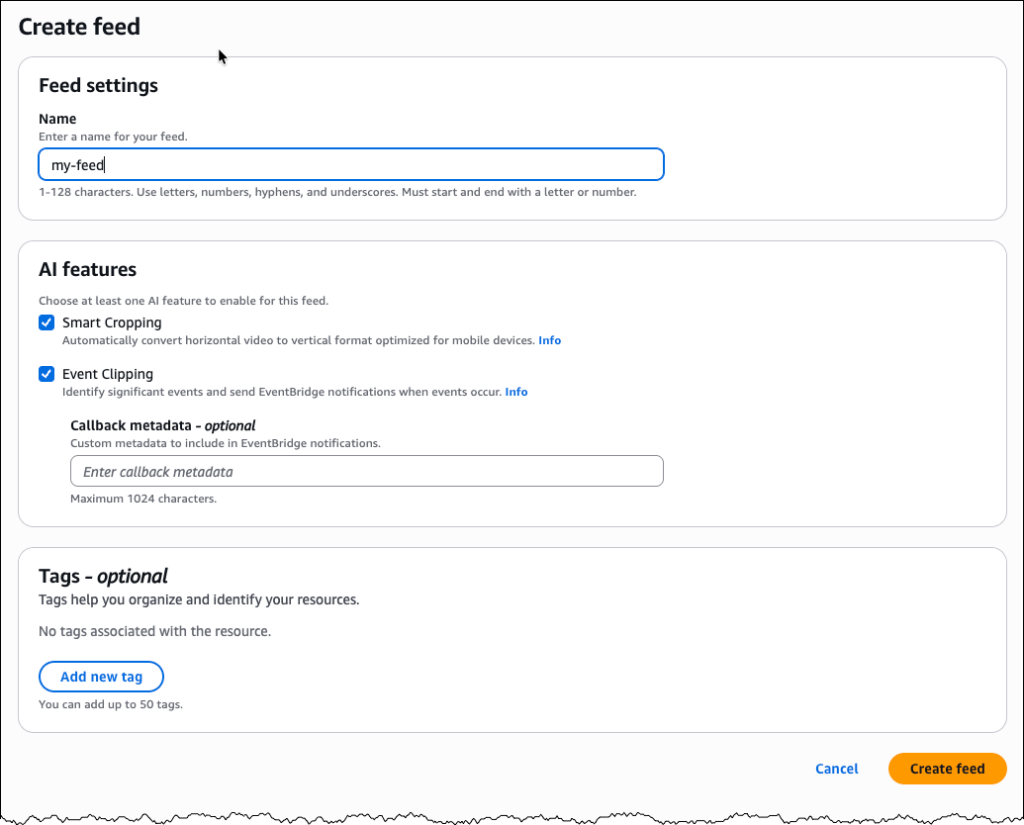

For those who want a dedicated interface, the standalone AWS Elemental Inference console provides a direct way to configure and manage AI-powered video transformations. From the AWS Management Console, users navigate to AWS Elemental Inference and create a "feed." The feed is the top-level resource for AI processing and holds the feature configurations. For vertical video cropping, an empty feed is sufficient, because the service manages cropping parameters based on the video’s specifications. For more advanced features like clip generation, users add an output, name it (e.g., "highlight-clips"), select "Clipping" as the output type, and set its status to enabled. The step-by-step flow is designed to minimize complexity so that users can start applying AI to video optimization quickly.
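The console steps above can be pictured as a small data model: a feed that holds zero or more named outputs. The sketch below is an illustrative model only, not the AWS Elemental Inference API or its resource schema; the `Feed` and `FeedOutput` classes, their fields, and the feed names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class FeedOutput:
    name: str
    output_type: str      # e.g. "Clipping", the type named in the console flow
    enabled: bool = True


@dataclass
class Feed:
    name: str
    outputs: List[FeedOutput] = field(default_factory=list)

    def add_output(self, name: str, output_type: str, enabled: bool = True) -> FeedOutput:
        # Output names act as identifiers here, so reject duplicates.
        if any(existing.name == name for existing in self.outputs):
            raise ValueError(f"output {name!r} already exists on feed {self.name!r}")
        output = FeedOutput(name=name, output_type=output_type, enabled=enabled)
        self.outputs.append(output)
        return output


# An empty feed, which the article says is enough for vertical cropping.
crop_feed = Feed(name="vertical-only")

# A feed with a clipping output, mirroring the "highlight-clips" example.
clip_feed = Feed(name="sports-live")
clip_feed.add_output("highlight-clips", "Clipping")
print(crop_feed, clip_feed)
```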

Alternatively, broadcasters and streamers who already use AWS Elemental MediaLive for live video encoding and delivery can enable AWS Elemental Inference directly within their existing MediaLive channel configurations. This approach adds AI capabilities without any architectural changes to current live video workflows. Users enable the desired AI features as part of their channel setup, and AWS Elemental Inference runs in parallel with their video encoding processes. A dedicated AWS Elemental Inference tab on the MediaLive channel details page provides a centralized view of all AI-powered video transformation configurations. It displays the Amazon Resource Name (ARN), data endpoints, and feed output details, including which features, such as "Smart Crop," are active and their operational status. Traditional and AI-enhanced video streams can therefore be managed from the same place.

Transforming Live and On-Demand Workflows

AWS Elemental Inference has immediate implications for a variety of content producers. For traditional broadcasters covering live news, sports, or entertainment events, the service enables near real-time adaptation of landscape productions for distribution across mobile-first social platforms. Key moments from a sporting event or breaking news can be cropped and shared vertically within seconds, reaching audiences on platforms where content consumption is increasingly real-time and ephemeral. That speed is essential for capitalizing on trending topics and maximizing the reach of live content.

Beyond live events, on-demand video libraries can also benefit immensely. Media archives, often vast and produced entirely in landscape, can now be automatically re-rendered into vertical segments for promotional use or to create new, short-form content series optimized for mobile viewership. This unlocks new life for existing content, expanding its discoverability and engagement potential without requiring extensive human re-editing. Streamers, including individual content creators and gaming personalities, can leverage the service to automatically generate highlight clips or vertical versions of their streams, effortlessly expanding their presence from platforms like Twitch and YouTube to TikTok and Instagram Reels, thereby reaching new demographics and fostering community growth. The ability to "process once, optimize everywhere" significantly streamlines multi-platform distribution strategies, allowing content creators to focus on generating compelling core content rather than repetitive format adaptations.

Strategic Implications for the Media and Entertainment Industry

The introduction of AWS Elemental Inference strengthens AWS’s position in media and entertainment technology. By offering a fully managed, AI-powered solution to a critical pain point for content creators, AWS reinforces its commitment to supporting the entire media workflow, from content creation and processing to delivery and monetization. The move also reflects a broader trend of cloud providers integrating advanced AI capabilities directly into their core services, making sophisticated technology accessible to a wider range of users.

Industry observers suggest that the service could be a significant disruptor, particularly for companies that rely on manual editing processes or specialized software for video adaptation. Those solutions still have their place for highly bespoke productions, but AWS Elemental Inference offers a scalable, automated alternative that can reduce operational costs and shorten time-to-market for mass content adaptation. It lowers the barrier to competing in the mobile-first video space for smaller creators and large media organizations alike. The launch also illustrates the growing convergence of AI and cloud computing, and how the two together can enable new approaches to content creation and distribution.

Expanding Reach and Monetization Potential

The immediate benefit of AWS Elemental Inference is enhanced audience engagement through optimized content delivery. By making it easier to publish content across diverse platforms in their native formats, creators can tap into new demographics and strengthen their presence where audiences are most active. However, the long-term vision extends beyond mere engagement. AWS has indicated that future updates will include tighter integration with other AWS Elemental services and the introduction of features designed to help customers monetize their video content more effectively.

This future roadmap hints at capabilities that could automatically identify monetizable segments, insert contextually relevant ads into vertical clips, or facilitate new subscription models based on AI-generated personalized content. As the service evolves, it could become a comprehensive platform not just for transformation, but for intelligent content packaging and distribution that directly impacts revenue streams. The ability to quickly adapt and distribute content across a wider array of channels also creates more inventory for advertising, potentially increasing the overall value of a content library. By abstracting away the complexity of AI and infrastructure management, AWS empowers content creators to innovate on their monetization strategies, focusing on creative execution rather than technical overhead.

Global Availability and Scalable Economics

AWS Elemental Inference is now available in four AWS Regions: US East (N. Virginia), US West (Oregon), Europe (Ireland), and Asia Pacific (Mumbai). This regional coverage lets a significant portion of AWS’s global customer base access the service with low latency and helps customers address regional data residency requirements. The service can be enabled through the AWS Elemental MediaLive console or integrated into existing workflows using the AWS Elemental MediaLive APIs, which offer programmatic control for advanced users and large-scale deployments.
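For teams that script their deployments, a simple guard can confirm that a target Region is one of the four named above before any channel is configured. This is a minimal sketch: the Region codes are the standard AWS codes for those Regions, while `LAUNCH_REGIONS` and `is_launch_region` are hypothetical names, and the list reflects only the launch availability described here, which may change.

```python
# Launch Regions as listed in the announcement, keyed by standard AWS Region code.
LAUNCH_REGIONS = {
    "us-east-1": "US East (N. Virginia)",
    "us-west-2": "US West (Oregon)",
    "eu-west-1": "Europe (Ireland)",
    "ap-south-1": "Asia Pacific (Mumbai)",
}


def is_launch_region(region_code: str) -> bool:
    """Return True if region_code is one of the four launch Regions."""
    return region_code.strip().lower() in LAUNCH_REGIONS


for code in ("eu-west-1", "eu-central-1"):
    status = "available at launch" if is_launch_region(code) else "not in the launch list"
    print(f"{code}: {status}")
```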

In line with AWS’s standard economic model, AWS Elemental Inference uses consumption-based pricing. Customers pay only for the features they use and the volume of video they process, with no upfront costs or long-term commitments. This "pay-as-you-go" model lets media organizations scale their AI-powered video processing dynamically: during peak events or periods of high content output, usage can expand as needed, and during quieter times, costs fall because customers pay only for actual usage. The model suits the often-variable demands of the media industry.
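The pay-per-use model can be illustrated with a small estimator that multiplies processed video time by the rates of the enabled features. The rates below are placeholders invented for this example, not AWS prices, and billing per minute of video is an assumption; `estimate_cost` and the feature names are hypothetical.

```python
from decimal import Decimal
from typing import Dict, Iterable


def estimate_cost(
    minutes_processed: Decimal,
    rates_per_minute: Dict[str, Decimal],
    enabled_features: Iterable[str],
) -> Decimal:
    """Estimate cost as minutes processed times the summed rates of enabled features."""
    if minutes_processed < 0:
        raise ValueError("minutes_processed must not be negative")
    # Only features that are actually enabled contribute to the bill.
    combined_rate = sum(
        (rates_per_minute[name] for name in enabled_features), Decimal("0")
    )
    return minutes_processed * combined_rate


# Placeholder rates for illustration only; consult AWS pricing for real figures.
PLACEHOLDER_RATES = {
    "smart_crop": Decimal("0.10"),
    "clipping": Decimal("0.05"),
}

quiet_week = estimate_cost(Decimal("90"), PLACEHOLDER_RATES, ["smart_crop"])
peak_event = estimate_cost(Decimal("90"), PLACEHOLDER_RATES, ["smart_crop", "clipping"])
print(quiet_week, peak_event)
```

With no features enabled or no video processed, the estimate is zero, which mirrors the absence of upfront costs described above.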

The launch of AWS Elemental Inference marks a pivotal moment for content creators striving to thrive in the mobile-first era. By offering an accessible, powerful, and scalable AI-driven solution for video transformation, AWS is not only simplifying complex workflows but also opening new avenues for audience engagement and future monetization. As the digital media landscape continues its rapid evolution, services like AWS Elemental Inference will be instrumental in enabling content creators to remain at the forefront of innovation.