Software architecture keeps changing, and the tools that provide observability must change with it. The shift to microservices made distributed tracing essential, and Jaeger became a foundational tool for engineers debugging those fragmented systems. Now that organizations are putting generative AI applications and autonomous agents into production, the demands on tracing are shifting again. A single agent request can involve prompt assembly, vector database retrievals, and several external tool calls, and traditional tracing tools struggle to map these execution paths clearly.

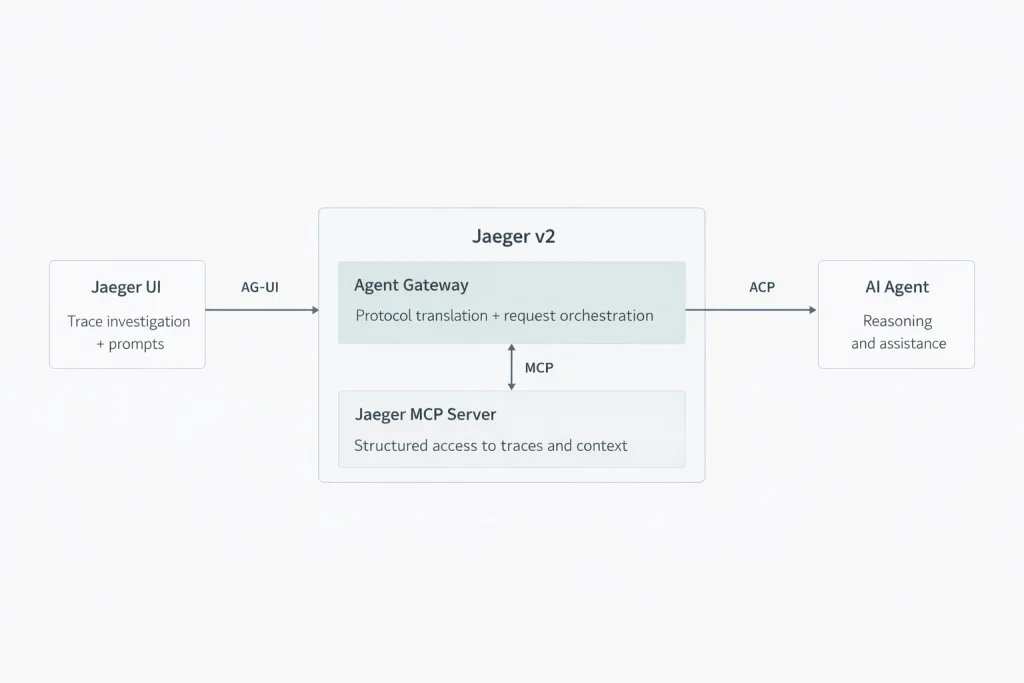

In response, Jaeger is evolving in two phases. The first, delivered with Jaeger v2, rebuilt the core architecture to integrate OpenTelemetry natively, giving the project a solid data foundation for richer tracing. The second phase, now underway, extends Jaeger beyond trace visualization. By adopting open protocols such as the Model Context Protocol (MCP), the Agent Client Protocol (ACP), and the Agent–User Interaction Protocol (AG-UI), Jaeger aims to let human engineers and AI agents collaborate on the same traces, and to give engineers clearer visibility into AI pipelines whose execution paths strain existing tracing tools.

Setting the Foundation: Jaeger v2 and OpenTelemetry Integration

The need to manage and understand increasingly complex AI workloads made a modernized data collection pipeline essential. That need drove the architectural overhaul described in the CNCF blog post "Jaeger v2 released: OpenTelemetry in the core!", a release that marks a pivotal moment in Jaeger’s development and a clear commitment to industry-standard observability practices.

Jaeger v2 departs from its previous architecture by replacing its legacy collection components with the OpenTelemetry Collector framework. Instead of separate collector, query, and ingester executables, Jaeger v2 ships a single binary whose role is selected through configuration. The underlying Collector framework handles metrics and logs as well as traces, so the same deployment model can span all three signals, even though Jaeger’s own storage and UI remain focused on traces. Native ingestion of the OpenTelemetry Protocol (OTLP) removes intermediate translation steps, which improves ingestion performance. Beyond streamlining data processing, this integration lays the data foundation on which more sophisticated AI-centric tracing features can be built, and it lets Jaeger benefit directly from the large and growing ecosystem of OpenTelemetry-compatible tools and instrumentation.
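
To make the OTLP path concrete, here is a minimal sketch that builds an OTLP/JSON trace export payload using only the Python standard library. It assumes a Jaeger v2 instance with its OTLP/HTTP receiver on the conventional port 4318 (path `/v1/traces`); the service name, span name, and attribute values are hypothetical, and in real applications an OpenTelemetry SDK produces this payload automatically.

```python
import json
import os
import time

def build_otlp_json_span(service_name: str, span_name: str, duration_ms: int) -> dict:
    """Build a minimal OTLP/JSON trace export request containing one span."""
    end_ns = time.time_ns()
    start_ns = end_ns - duration_ms * 1_000_000
    return {
        "resourceSpans": [{
            "resource": {
                "attributes": [
                    {"key": "service.name", "value": {"stringValue": service_name}},
                ]
            },
            "scopeSpans": [{
                "scope": {"name": "manual-example"},
                "spans": [{
                    # OTLP/JSON encodes trace and span IDs as hex strings, not base64.
                    "traceId": os.urandom(16).hex(),
                    "spanId": os.urandom(8).hex(),
                    "name": span_name,
                    "kind": 2,  # SPAN_KIND_SERVER
                    # 64-bit integers are encoded as decimal strings in OTLP/JSON.
                    "startTimeUnixNano": str(start_ns),
                    "endTimeUnixNano": str(end_ns),
                    "attributes": [
                        {"key": "http.response.status_code", "value": {"intValue": "500"}},
                    ],
                    "status": {"code": 2},  # STATUS_CODE_ERROR
                }],
            }],
        }]
    }

payload = build_otlp_json_span("payment", "POST /charge", duration_ms=2300)
body = json.dumps(payload).encode("utf-8")
# body could be POSTed with Content-Type: application/json to http://localhost:4318/v1/traces
```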

A New Era of Human and Agent Collaboration

Building on Jaeger v2, the project is exploring new ways to analyze distributed systems, with particular emphasis on letting human engineers and AI agents collaborate during debugging and incident response. Much of this work is driven by contributors from community programs such as the CNCF LFX Mentorship program and Google Summer of Code (GSoC), reflecting the community-driven nature of this evolution.

To support AI within observability workflows, Jaeger is adopting three open standards: the Model Context Protocol (MCP), the Agent Client Protocol (ACP), and the Agent–User Interaction Protocol (AG-UI). MCP standardizes how AI models and applications access external tools and data sources, so an assistant can reach trace data through a defined interface instead of ad hoc integrations. ACP provides a uniform way for user interfaces to communicate with AI agents and their associated sidecars. AG-UI defines an event-based protocol for streaming agent activity to user-facing applications. Used together, these protocols let Jaeger act as an interactive workspace in which human intuition and AI-driven analysis operate on the same data. This matters most for AI pipelines, whose cascades of model, retrieval, and tool operations are hard for traditional tracing tools to represent in full.
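
MCP messages are JSON-RPC 2.0, and a client invokes a server-side tool with the `tools/call` method. The sketch below encodes such a request and extracts the text content from the reply. The tool name `search_traces` and its arguments are hypothetical and do not describe a published Jaeger MCP server.

```python
import itertools
import json

_request_ids = itertools.count(1)

def mcp_tool_call(tool_name: str, arguments: dict) -> str:
    """Encode an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })

def mcp_result_text(response: str) -> str:
    """Return the text content of an MCP `tools/call` response, raising on errors."""
    message = json.loads(response)
    if "error" in message:  # JSON-RPC level error
        raise RuntimeError(message["error"]["message"])
    result = message["result"]
    if result.get("isError"):  # the tool ran but reported a failure
        raise RuntimeError("tool reported an error")
    return "\n".join(item["text"] for item in result["content"] if item["type"] == "text")

# Hypothetical tool and arguments, for illustration only.
request = mcp_tool_call("search_traces", {"service": "payment", "minDuration": "2s"})
```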

Building the Backend Protocol Layer for AI Integration

The technical work behind this vision is happening mainly in Jaeger’s backend. Its central piece is an Agent Client Protocol (ACP) layer that acts as a stateless translator between the Jaeger frontend and external AI sidecars. The design and initial proof of concept are documented in two GitHub issues in the Jaeger project: issue #8252, "Implement AG-UI to ACP Jaeger AI," and issue #8295, "Implement ACP-based AI handler."
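
A stateless translator keeps no per-conversation memory: every output is computed from the message in hand plus identifiers passed alongside it. The sketch below illustrates that shape in two directions, turning an AG-UI run request into an ACP `session/prompt` call and turning an ACP `session/update` text chunk into an AG-UI `TEXT_MESSAGE_CONTENT` event. It is not the handler from issue #8295; the message field names are assumptions based on the public AG-UI and ACP specifications and should be checked against their current versions.

```python
from typing import Optional

def agui_to_acp_prompt(run_input: dict, session_id: str, request_id: int) -> dict:
    """Turn the latest user message of an AG-UI run into an ACP `session/prompt` request.

    Stateless: everything needed, including the ACP session ID, is passed in.
    """
    user_messages = [m for m in run_input["messages"] if m.get("role") == "user"]
    if not user_messages:
        raise ValueError("AG-UI run input contains no user message")
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "session/prompt",
        "params": {
            "sessionId": session_id,
            "prompt": [{"type": "text", "text": user_messages[-1]["content"]}],
        },
    }

def acp_update_to_agui_event(notification: dict, message_id: str) -> Optional[dict]:
    """Map an ACP `session/update` text chunk to an AG-UI TEXT_MESSAGE_CONTENT event."""
    if notification.get("method") != "session/update":
        return None
    update = notification["params"]["update"]
    content = update.get("content", {})
    if update.get("sessionUpdate") == "agent_message_chunk" and content.get("type") == "text":
        return {"type": "TEXT_MESSAGE_CONTENT", "messageId": message_id, "delta": content["text"]}
    return None  # other update kinds (tool calls, plans, ...) are not mapped in this sketch
```

Because neither function stores anything, several gateway replicas could serve the same conversation, provided the session and message identifiers travel with each request.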

Incident responders traditionally build queries by hand, filtering services and tags to pinpoint a problem. ACP integration changes this workflow: the Jaeger backend can take a natural-language request, such as "find all 500-level errors in the payment service with latency above two seconds," and translate it into a precise, deterministic trace query.
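
A minimal sketch of the deterministic half of that translation, assuming the model has already reduced the request to a small structured intent. The `TraceIntent` type and helper names are hypothetical. The query parameter names mirror those of the HTTP search API used by the Jaeger UI (`service`, `minDuration`, `tags`, `limit`), but that API is internal, so treat them as assumptions. The sketch also assumes tag search matches exact values and that error spans are searchable via the `error=true` tag, so the 5xx range is applied as a post-filter.

```python
import json
from dataclasses import dataclass
from urllib.parse import urlencode

@dataclass(frozen=True)
class TraceIntent:
    """Structured form of "500-level errors in payment slower than two seconds"."""
    service: str
    min_duration_ms: int
    status_min: int = 500
    status_max: int = 599

def to_query_params(intent: TraceIntent, limit: int = 20) -> str:
    """Render an intent as search parameters; the same intent always yields the same string."""
    return urlencode({
        "service": intent.service,
        "minDuration": f"{intent.min_duration_ms}ms",
        # Exact-match tag search selects error spans; the status range is checked afterwards.
        "tags": json.dumps({"error": "true"}),
        "limit": limit,
    })

def in_status_range(span_tags: dict, intent: TraceIntent) -> bool:
    """Post-filter: keep spans whose HTTP status code falls inside the intent's range."""
    code = span_tags.get("http.response.status_code")
    return code is not None and intent.status_min <= int(code) <= intent.status_max

intent = TraceIntent(service="payment", min_duration_ms=2000)
print(to_query_params(intent))
```

Given the same intent, `to_query_params` always produces the same string, which keeps the resulting query reproducible and reviewable regardless of which model produced the intent.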

Organizations can configure this backend to use a cloud-hosted large language model (LLM) for more demanding reasoning, or a local small language model (SLM) when data privacy requirements rule out sending trace data to an external API. The depth of analysis then depends on the capabilities of the chosen model, and industry comparisons of hosted and local LLM infrastructure point to this flexibility as a significant advantage. Because the AI’s role is deliberately limited to protocol translation and query generation, the risk of hallucination is much lower than with an open-ended chatbot: the generated query runs against stored trace data, so the results come from real spans rather than from the model’s own text.
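
The following sketch shows one way to keep the model confined to query generation. It assumes the backend is any callable from prompt text to completion text, so a local SLM client and a cloud LLM client are interchangeable; the JSON shape, prompt wording, and function names are hypothetical. Nothing the model returns is used until deterministic code has parsed it and checked it against the set of services the trace store actually knows, for example the list the Jaeger UI loads for its service selector.

```python
import json
from typing import Callable, Set

# Any function from prompt text to completion text: a client for a local SLM
# or for a cloud-hosted LLM can be swapped in without changing anything else.
ModelBackend = Callable[[str], str]

ALLOWED_KEYS = {"service", "min_duration_ms"}

def parse_model_output(raw: str, known_services: Set[str]) -> dict:
    """Accept the model's answer only if it is a well-formed, grounded query spec."""
    try:
        spec = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not JSON: {exc}") from exc
    if not isinstance(spec, dict) or set(spec) != ALLOWED_KEYS:
        raise ValueError(f"expected exactly the keys {sorted(ALLOWED_KEYS)}")
    if not isinstance(spec["service"], str) or spec["service"] not in known_services:
        raise ValueError(f"model referenced unknown service {spec['service']!r}")
    duration = spec["min_duration_ms"]
    if isinstance(duration, bool) or not isinstance(duration, int) or duration < 0:
        raise ValueError("min_duration_ms must be a non-negative integer")
    return spec

def translate(request: str, backend: ModelBackend, known_services: Set[str]) -> dict:
    """Ask the model for a query spec, then validate it deterministically."""
    prompt = (
        "Reply with only a JSON object with keys service and min_duration_ms "
        f"for this trace search request: {request}"
    )
    return parse_model_output(backend(prompt), known_services)

# Stand-in for a real model client; a local SLM or cloud LLM would slot in here.
fake_slm: ModelBackend = lambda prompt: '{"service": "payment", "min_duration_ms": 2000}'
spec = translate("payment errors slower than 2s", fake_slm, {"payment", "checkout"})
```

A model that invents a service name, such as `payments-v9`, is rejected with a `ValueError` instead of producing a query against data that does not exist.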

The Collaborative UI Workspace: Enhancing User Interaction

Alongside the backend work, the Jaeger user interface is being updated to support the new AI-driven backend logic. These frontend changes are tracked in Jaeger UI issue #3313, which covers the ongoing migration from the legacy Redux state management library to a more modern stack built on Zustand and React Query.

The updated frontend will include an in-app assistant built with assistant-ui and the AG-UI protocol. The assistant uses streaming events to send the relevant trace context, including error logs and key-value tags, to the backend gateway. Engineers can then ask in natural language for a summary of the failure path within specific spans instead of reading raw log lines one by one, which should shorten incident reviews and reduce mean time to resolution (MTTR).
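
The sketch below illustrates the two message shapes involved, in Python for brevity even though the Jaeger UI is a React application. It packages a question and span context into an AG-UI-style `RunAgentInput`, using its `context` field for error logs and tags, and reassembles the assistant's streamed reply from Server-Sent Events. The span dictionary layout is hypothetical, the AG-UI field and event names are assumptions based on the public specification, and this is not assistant-ui code.

```python
import json
import uuid

def build_run_input(question: str, span: dict) -> dict:
    """Package a question and span context as an AG-UI-style RunAgentInput."""
    return {
        "threadId": str(uuid.uuid4()),
        "runId": str(uuid.uuid4()),
        "state": {},
        "messages": [{"id": str(uuid.uuid4()), "role": "user", "content": question}],
        "tools": [],
        # Trace context travels as description/value pairs in the `context` field.
        "context": [
            {"description": "span tags", "value": json.dumps(span["tags"], sort_keys=True)},
            {"description": "span error logs", "value": "\n".join(span["logs"])},
        ],
        "forwardedProps": {},
    }

def decode_sse_text(stream: str) -> str:
    """Reassemble assistant text from TEXT_MESSAGE_CONTENT events in an SSE stream.

    Assumes each event's JSON fits on a single `data:` line.
    """
    chunks = []
    for line in stream.splitlines():
        if not line.startswith("data:"):
            continue  # skip blank separators and non-data SSE fields
        event = json.loads(line[len("data:"):].strip())
        if event.get("type") == "TEXT_MESSAGE_CONTENT":
            chunks.append(event["delta"])
    return "".join(chunks)
```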

Visualizing Generative AI Execution Paths

Beyond using AI to analyze traditional distributed system traces, Jaeger is also adding direct observability into the execution paths of AI applications themselves. This work is outlined in Jaeger issue #8401, "GenAI integration," and centers on visualizing the OpenTelemetry Generative AI semantic conventions, which are still evolving rapidly. The OpenTelemetry community is drafting specifications to standardize telemetry for these dynamic workflows, including emerging drafts for Generative AI Agentic Systems, which track agent tasks, memory, and actions, and conventions for AI Sandboxes, which monitor ephemeral code execution environments.

The project’s goal is to map these standardized AI operations directly into the Jaeger UI, giving engineers clear visibility into AI execution paths without imposing vendor-specific data formats. Developers building Retrieval-Augmented Generation (RAG) pipelines and autonomous agents will be able to measure embedding model latency, track external tool calls, and monitor token usage, which is the information they need to find bottlenecks and keep complex AI systems running efficiently.
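
Once spans carry GenAI attributes, the measurements described above are simple aggregations. This sketch assumes a simplified span layout (`start_ns`, `end_ns`, and a flat `attributes` map) and uses attribute names from the OpenTelemetry GenAI semantic conventions (`gen_ai.operation.name`, `gen_ai.tool.name`, `gen_ai.usage.input_tokens`, `gen_ai.usage.output_tokens`). Those conventions are still in development, so the names may change.

```python
from collections import defaultdict
from typing import Any, Dict, List

def summarize_genai_spans(spans: List[Dict[str, Any]]) -> Dict[str, Any]:
    """Aggregate embedding latency, tool calls, and token usage from GenAI spans."""
    embedding_ms: List[float] = []
    tool_calls: Dict[str, int] = defaultdict(int)
    input_tokens = output_tokens = 0
    for span in spans:
        attrs = span["attributes"]
        operation = attrs.get("gen_ai.operation.name")
        duration_ms = (span["end_ns"] - span["start_ns"]) / 1e6
        if operation == "embeddings":
            embedding_ms.append(duration_ms)
        elif operation == "execute_tool":
            tool_calls[attrs.get("gen_ai.tool.name", "unknown")] += 1
        # Token counts may appear on chat, completion, or embedding spans.
        input_tokens += attrs.get("gen_ai.usage.input_tokens", 0)
        output_tokens += attrs.get("gen_ai.usage.output_tokens", 0)
    return {
        "embedding_ms": embedding_ms,
        "tool_calls": dict(tool_calls),
        "input_tokens": input_tokens,
        "output_tokens": output_tokens,
    }
```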

Unified Observability: From Local Testing to Production Deployment

Keeping observability consistent between local testing and production is a persistent practical challenge. Jaeger has long offered an "all-in-one" executable to simplify local setups, and Jaeger v2 goes further: because it is built on the OpenTelemetry Collector, developers run the same binary locally that they deploy in production.
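
The same consistency holds on the application side when instrumented services are configured through the standard OpenTelemetry exporter environment variables: the code path is identical on a laptop and in a cluster, and only the endpoint changes. The sketch below resolves the OTLP/HTTP traces URL the way those variables are specified (a signal-specific URL is used as-is; otherwise `/v1/traces` is appended to the base endpoint, which defaults to `http://localhost:4318`) and builds, without sending, the export request. The in-cluster hostname in the usage lines is hypothetical.

```python
import os
import urllib.request
from typing import Mapping

def traces_endpoint(env: Mapping[str, str] = os.environ) -> str:
    """Resolve the OTLP/HTTP traces URL from the OpenTelemetry exporter env vars."""
    if "OTEL_EXPORTER_OTLP_TRACES_ENDPOINT" in env:
        return env["OTEL_EXPORTER_OTLP_TRACES_ENDPOINT"]  # signal-specific URL is used as-is
    base = env.get("OTEL_EXPORTER_OTLP_ENDPOINT", "http://localhost:4318")
    return base.rstrip("/") + "/v1/traces"

def export_request(otlp_json: bytes, env: Mapping[str, str] = os.environ) -> urllib.request.Request:
    """Build (but do not send) a POST of an OTLP/JSON payload to the resolved endpoint."""
    return urllib.request.Request(
        traces_endpoint(env),
        data=otlp_json,
        headers={"Content-Type": "application/json"},
        method="POST",
    )

# Locally: no variables set, so traces go to a Jaeger v2 container on this machine.
local_url = traces_endpoint({})
# In production: the same code, pointed at a hypothetical in-cluster service.
prod_url = traces_endpoint({"OTEL_EXPORTER_OTLP_ENDPOINT": "http://jaeger.observability.svc:4318"})
```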

During development and testing, engineers can run the Jaeger v2 container alongside a local SLM. This creates a private sandbox in which they can test generative AI traces or debug ACP integrations without exposing sensitive data to external APIs, and iterate quickly on AI features before deploying them to production.
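
A sandbox only stays private if trace context is never sent to a remote model by mistake. One simple safeguard, sketched below with hypothetical names and no assumption about Jaeger’s own configuration, is to refuse any model endpoint whose host is not `localhost` or a loopback address while a sandbox flag is set. It checks the URL only; it does not inspect DNS, proxies, or what the local model itself does.

```python
import ipaddress
from urllib.parse import urlparse

def is_local_endpoint(url: str) -> bool:
    """Return True if the URL's host is localhost or a loopback IP address."""
    host = urlparse(url).hostname  # lowercased; brackets stripped from IPv6 literals
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        return False  # any other hostname could resolve to a remote machine

def check_sandbox(model_url: str, sandbox: bool) -> None:
    """Refuse to send trace context to a non-local model while in sandbox mode."""
    if sandbox and not is_local_endpoint(model_url):
        raise PermissionError(f"sandbox mode forbids remote model endpoint {model_url}")
```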

In production, platform teams deploy the same unified binary, often with tools such as the OpenTelemetry Operator for Kubernetes, so the observability setup stays consistent across the entire lifecycle. The main change is the model: organizations can replace the local SLM with a more capable cloud-hosted LLM for advanced incident analysis, while the tracing configuration remains the same from the first stages of development through full-scale deployment.

The Road Ahead: Adapting to the AI-Driven Future

The requirements for effective tracing are changing as AI applications grow more complex and more widespread. Jaeger’s path forward, built on the OpenTelemetry foundation of Jaeger v2 and on the integration of open protocols such as MCP, ACP, and AG-UI, is a direct response to these demands. The result is a practical workflow in which human engineers and AI agents collaborate to diagnose and resolve failures in increasingly complex distributed systems, positioning Jaeger not only as a tracing tool but as a core component of AI-assisted observability.