Law enforcement officials in San Francisco have confirmed that the residence of OpenAI Chief Executive Officer Sam Altman was the target of an attempted arson attack early one morning last week, marking a significant escalation in the physical security threats facing prominent figures in the artificial intelligence sector. According to police reports, an unidentified individual threw a Molotov cocktail at Altman’s home at approximately 3:45 a.m., while the executive, his husband, and their young son were inside. Although the incendiary device reportedly bounced off the structure without causing significant damage or injuries, the incident has prompted a broader conversation about the safety of technology leaders and the increasingly volatile public discourse surrounding the development of artificial general intelligence (AGI).

Chronology of the Attack and Subsequent Arrest

The sequence of events began in the pre-dawn hours at Altman’s private residence in San Francisco. Following the failed attempt to ignite the home, the suspect fled the scene. However, the threat shifted locations later that day. According to official statements, the same individual was apprehended several hours later after appearing at the headquarters of OpenAI, where he allegedly made explicit threats to burn down the facility. The suspect is currently in custody, and the San Francisco Police Department (SFPD) is conducting an ongoing investigation into the motive behind the targeted violence.

Altman addressed the incident publicly in a blog post on Saturday, departing from his usual preference for privacy by sharing a photograph of his family. In the post, he expressed the hope that showing his family might humanize him and dissuade future attackers. "Images have power, I hope," Altman wrote, suggesting that the suspect’s actions were a direct result of the "narratives" and "words" currently surrounding him and the work of OpenAI.

While Altman initially linked the attack to a recently published investigative piece in The New Yorker, he later walked back the specific phrasing, acknowledging that calling the article "incendiary" was a poor choice of words given the literal firebombing of his home. He attributed the lack of clarity in his initial response to the emotional distress of the day.

Investigative Scrutiny and the New Yorker Exposé

The physical attack on Altman’s home coincided with the release of a highly detailed investigative report by Pulitzer Prize-winning journalist Ronan Farrow. Published in The New Yorker, the article is the result of an 18-month investigation involving hundreds of interviews, internal memos, Slack communications, and private notes. The piece provides an excoriating look at Altman’s leadership style, alleging a history of manipulative behavior and internal conflicts that have characterized OpenAI’s rapid ascent.

Farrow’s reporting highlights several key areas of concern that have fueled public and industry debate:

- The Shift in Corporate Mission: OpenAI was founded in 2015 as a non-profit research laboratory dedicated to ensuring that AGI benefits all of humanity. Its transition to a "capped-profit" model and its subsequent pursuit of multi-billion-dollar investment from entities such as Microsoft have led to accusations that the company has prioritized commercial dominance over its original safety-first ethos.

- Internal Governance Failures: The article revisits the November 2023 board crisis, during which Altman was briefly fired. While he was reinstated following an employee revolt, the Farrow report suggests that the board’s initial concerns regarding Altman’s transparency and "conflict-averse" but ultimately disruptive management style were more substantive than previously acknowledged.

- Societal Impact and Ethics: The investigation raises questions about the ethical implications of OpenAI’s technology, specifically regarding copyright infringement and the potential for AI to displace millions of workers without a sufficient social safety net in place.

The Rising Cost of Executive Security in Silicon Valley

The attack on Altman is not an isolated incident in the technology sector, but rather part of a documented trend of rising threats against high-profile CEOs. As artificial intelligence becomes a central pillar of the global economy and a source of significant social anxiety, the security requirements for the leaders of these firms have reached unprecedented levels.

Data from SEC filings indicate that major technology firms are spending record sums on executive protection. For example, Meta Platforms (formerly Facebook) reportedly spent over $14 million on personal security for Mark Zuckerberg in 2023, while Google and Amazon have also significantly increased their security budgets. For leaders in the AI space, the threat profile is uniquely complex, as they are often blamed for a wide range of societal ills, from the spread of misinformation to the projected obsolescence of various professions.

Industry analysts suggest that the "celebrity" status of AI founders, combined with the "black box" nature of their technology, creates a vacuum that is often filled by extreme rhetoric. The SFPD’s investigation into the Altman attack is expected to examine whether the suspect was influenced by online radicalization or specific conspiracy theories related to AI dominance.

Legal and Political Pressures Surrounding OpenAI

Beyond physical threats and journalistic scrutiny, OpenAI is currently navigating a minefield of legal and political challenges. The company is embroiled in a high-stakes lawsuit filed by Elon Musk, a co-founder of OpenAI who left the organization in 2018. Musk alleges that Altman and OpenAI have breached their founding contract by becoming a "closed-source de facto subsidiary" of Microsoft. The trial, which is expected to begin in the coming weeks, will likely force the public disclosure of internal communications that could further impact the company’s reputation.

Simultaneously, the political landscape has become increasingly hostile. Recent comments from prominent political figures have characterized AI firms as ideologically biased. This political friction was exacerbated by reports that OpenAI has moved to secure contracts with the U.S. Department of Defense, a move that sets it apart from competitors such as Anthropic, which has maintained stricter ethical boundaries on the use of its models for military applications. The decision to pursue defense contracts has sparked internal debate within OpenAI and drawn criticism from advocates who fear the militarization of AI technology.

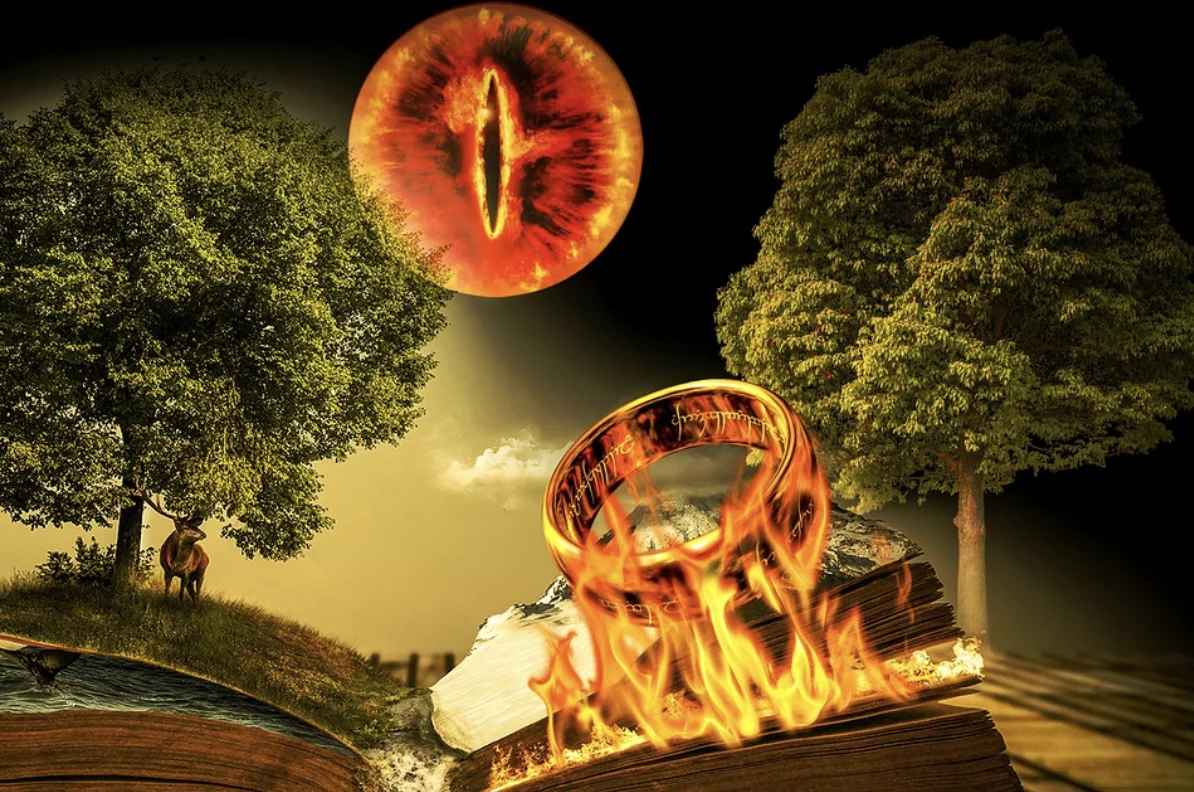

The AGI "Ring of Power" Metaphor and Industry Implications

In his post-attack reflections, Altman used a metaphor from J.R.R. Tolkien’s The Lord of the Rings to describe the current state of the AI industry. He characterized the pursuit of AGI as a "Ring of Power" dynamic, suggesting that the desire to control such a "totalizing" technology drives individuals and organizations to extreme behavior.

Altman’s stated solution is the "democratization" of the technology, arguing that "no one should have the ring." However, critics point out a fundamental contradiction in this stance. While Altman advocates for shared control, OpenAI remains a central, highly concentrated power in the AI field, with a valuation recently estimated at over $150 billion. The tension between the rhetoric of "AI for everyone" and the reality of a multi-billion-dollar corporate entity seeking market dominance remains a primary source of friction in the industry.

Broader Impact on Public Discourse and Safety

The firebombing of a private residence marks a dangerous turning point in the debate over AI’s future. Experts in public policy and technology ethics warn that if the discourse continues to escalate into violence, it may stifle the very transparency and regulation that the public is demanding.

There is a growing consensus that the rhetoric surrounding AI needs to be "de-escalated," as Altman suggested in his blog post. This involves:

- Constructive Disagreement: Moving away from hyperbolic "doomsday" or "utopian" narratives toward evidence-based discussions on regulation, safety, and labor impact.

- Accountability for Platforms: Addressing the role of social media platforms in amplifying misinformation and vitriol directed at public figures.

- Transparency from AI Labs: Reducing the secrecy surrounding model development to build public trust and mitigate the fear of the unknown.

As the industry moves toward increasingly capable AI systems, the incident in San Francisco serves as a stark reminder of the human stakes involved. The challenge for Altman and his peers will be to navigate the "Shakespearean drama" of the AI race while ensuring that the pursuit of technological progress does not come at the cost of civil stability or personal safety. The Eye of Sauron, to use Altman’s borrowed imagery, is indeed fixed on the development of AGI, and the coming months of legal battles and investigative scrutiny will determine how that power is ultimately governed.