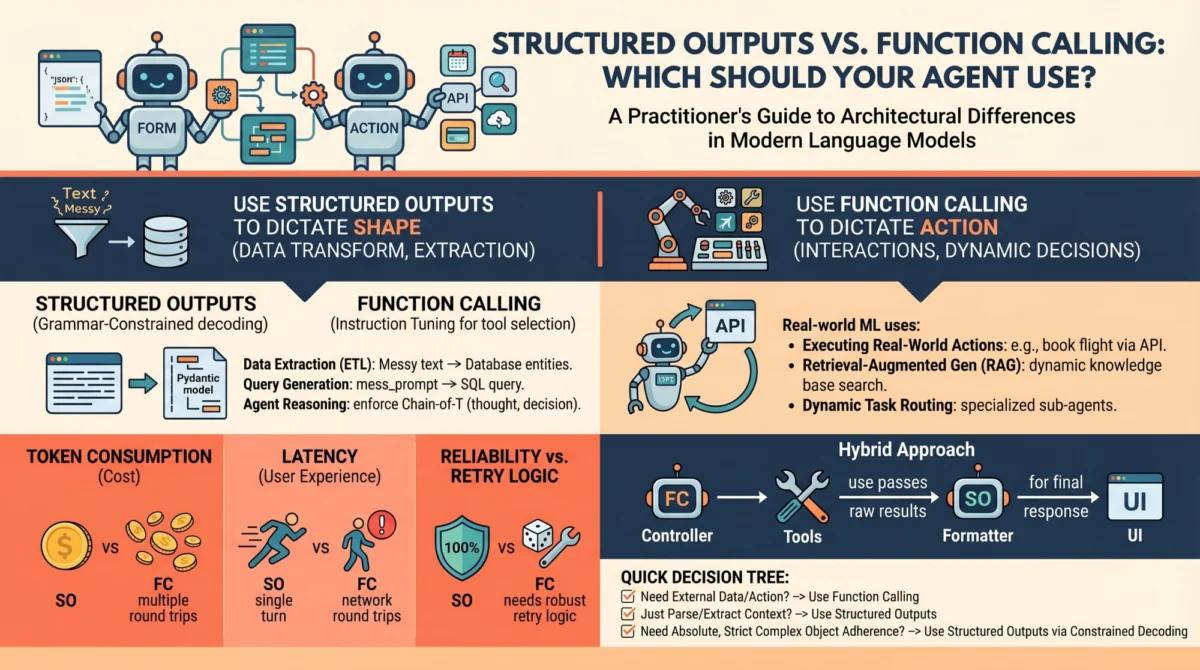

Language models (LMs) are, at their core, text-in, text-out systems. That paradigm suffices for human-to-AI conversational interfaces, but integrating these models into autonomous agents and reliable software pipelines presents a significant architectural challenge. Raw text output, often conversational prose, is hard to parse, route, and feed into deterministic systems. To address this, LM API providers, including OpenAI, Anthropic, and Google (Gemini), have introduced two primary mechanisms that bridge the gap between fluid natural language and machine-readable instructions: structured outputs and function calling. The two look similar at first glance, since both typically rely on JSON schemas under the hood and both yield structured key-value pairs, but they serve fundamentally distinct architectural purposes in agent design. Conflating them is a common pitfall that leads to brittle architectures, excessive latency, and inflated API costs. Understanding the distinction is essential for effective AI engineering.

The Rise of AI Agents and the Challenge of Unstructured Data

The past few years have witnessed an explosion in the development of AI agents – systems designed to understand user intent, break down complex tasks, interact with external tools, and achieve goals autonomously. From customer service bots managing complex queries to sophisticated data analysis agents and even robotic control systems, the demand for reliable and predictable AI interactions has never been higher. However, the native "text-in, text-out" format of large language models (LLMs) often proved insufficient for these applications. Developers frequently struggled with parsing free-form text, implementing robust error handling for unexpected outputs, and ensuring that the AI’s responses could seamlessly trigger subsequent actions in a programmatic workflow.

Early attempts to coerce LLMs into generating structured data typically involved extensive prompt engineering, often instructing the model with directives such as, "You are a helpful assistant that only speaks in JSON." While occasionally effective, this method was notoriously error-prone, requiring substantial retry logic, output validation, and often leading to unpredictable failures in production environments. A 2023 survey by a prominent developer platform indicated that over 40% of AI application developers cited unreliable LLM output parsing as a major impediment to agent deployment, highlighting a critical need for more robust solutions. This challenge underscored the necessity for dedicated, API-level mechanisms that could guarantee structured outputs and enable intelligent interaction with external systems.

Architectural Divergence: Unpacking the Core Mechanisms

To make informed decisions, it is crucial to understand the mechanical and API-level differences between structured outputs and function calling. These differences dictate their optimal application contexts.

Structured Outputs: Precision Through Constraint

Modern "structured outputs" fundamentally transform the reliability of data generation by employing grammar-constrained decoding. This technique moves beyond prompt engineering by restricting the token probabilities during the generation process. Libraries such as Outlines and Jsonformer, along with native features like OpenAI’s Structured Outputs, actively prune the LLM’s vocabulary at each step of generation. If a predefined schema, typically a JSON schema, dictates that the next token must be a quotation mark, a specific boolean value, or a digit, the probabilities of all non-compliant tokens are masked out (set to zero).
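To make the masking step concrete, here is a toy sketch in plain Python. It is not how any particular library implements constrained decoding; the vocabulary, the scores, and the `constrained_softmax` helper are all made up for illustration. Real systems derive the allowed set from the schema or grammar at each step.

```python
import math

def constrained_softmax(logits, allowed):
    """Softmax over `logits` in which tokens outside `allowed` get probability 0."""
    if not any(tok in allowed for tok in logits):
        raise ValueError("no allowed token is present in the vocabulary")
    # Disallowed tokens are pushed to -inf, so exp() maps them to exactly 0.0.
    masked = {tok: (score if tok in allowed else -math.inf)
              for tok, score in logits.items()}
    peak = max(masked.values())
    exps = {tok: math.exp(score - peak) for tok, score in masked.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}

# Toy vocabulary with raw next-token scores (made-up numbers).
logits = {'"': 1.0, "true": 0.5, "false": 0.2, "Sure": 3.0, "{": 0.1}

# Assume the schema says the next token must be a boolean literal.
allowed = {"true", "false"}

probs = constrained_softmax(logits, allowed)
next_token = max(probs, key=probs.get)
```

Although the unconstrained model would prefer the conversational token `Sure`, it receives zero probability after masking, so the decoder can only pick `true` or `false`.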

This process ensures near-100% schema compliance, dramatically reducing the need for post-hoc validation and retry mechanisms. It is a single-turn generation focused strictly on the form of the output: the model answers the prompt directly, but its expressive freedom is confined to the exact structure the developer defines. Compared with heuristic prompt engineering, this is a significant gain in reliability for tasks that require precise data formatting. The technique gained traction in late 2022 and early 2023, as open-source communities and major providers began integrating constrained generation capabilities.

Function Calling: Empowering Autonomous Action

Function calling, conversely, is built upon the foundation of instruction tuning. During its training phase, the model is meticulously fine-tuned to recognize scenarios where it lacks the necessary information to complete a user’s request, or when the prompt explicitly requires it to perform an action that extends beyond simple text generation. When developers provide an LLM with a list of available tools (functions), they are essentially instructing it: "If you encounter a situation where you need to retrieve external data, interact with a service, or perform a specific operation, you can pause your current text generation, select an appropriate tool from this predefined list, and generate the necessary arguments to execute it."

This mechanism initiates an inherently multi-turn, interactive workflow. The process typically unfolds as follows:

- User Query: The user provides a prompt, e.g., "What’s the weather like in London tomorrow?"

- Model Response (Function Call): The LLM, recognizing it needs external data, generates a structured call to a predefined `get_current_weather` function, complete with arguments like `location='London'` and `date='tomorrow'`. This output itself is a structured output, but its purpose is to trigger an action.

- System Execution: The application’s deterministic logic intercepts this function call, executes the `get_current_weather` function (e.g., by querying a weather API), and retrieves the result.

- Tool Output Injection: The result from the external tool (e.g., "The weather in London tomorrow will be partly cloudy with a high of 15°C") is then fed back into the LLM as part of the ongoing conversation history.

- Final LLM Response: The model, now equipped with the necessary information, generates a natural language response to the user’s original query, incorporating the tool’s output.
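The steps above can be sketched as a small loop. This is a provider-agnostic illustration, not any vendor's API: `fake_model` is a stand-in for a real LLM call, the message dictionaries use an invented shape, and the `get_current_weather` body returns canned data instead of querying a weather service.

```python
import json

def get_current_weather(location, date):
    """Hypothetical tool; a real version would query a weather API."""
    return {"location": location, "date": date,
            "forecast": "partly cloudy", "high_c": 15}

TOOLS = {"get_current_weather": get_current_weather}

def fake_model(messages):
    """Stand-in for an LLM: asks for a tool first, then answers from its result."""
    last = messages[-1]
    if last["role"] == "user":
        return {"role": "assistant", "tool_call": {
            "name": "get_current_weather",
            "arguments": json.dumps({"location": "London", "date": "tomorrow"}),
        }}
    result = json.loads(last["content"])
    return {"role": "assistant",
            "content": (f"{result['location']} {result['date']}: "
                        f"{result['forecast']}, high of {result['high_c']}°C.")}

def run_turn(user_text, model=fake_model, max_steps=5):
    messages = [{"role": "user", "content": user_text}]   # user query
    for _ in range(max_steps):
        reply = model(messages)                            # model response
        messages.append(reply)
        call = reply.get("tool_call")
        if call is None:                                   # final answer reached
            return reply["content"], messages
        tool = TOOLS[call["name"]]                         # system execution
        output = tool(**json.loads(call["arguments"]))
        messages.append({"role": "tool", "name": call["name"],
                         "content": json.dumps(output)})   # tool output injection
    raise RuntimeError("model did not produce a final answer")

answer, transcript = run_turn("What's the weather like in London tomorrow?")
```

The transcript ends up with four messages (user, tool call, tool result, final answer), which is why this pattern costs at least two model invocations per tool use.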

OpenAI’s introduction of dedicated function calling APIs in June 2023 marked a pivotal moment, making it significantly easier for developers to integrate external capabilities directly into their LLM applications. This represented a shift from merely processing information to actively orchestrating actions within a larger system.

A Chronology of Evolution: From Heuristics to APIs

The journey to these sophisticated mechanisms reflects the rapid maturation of AI engineering:

- Pre-2022 (Heuristic Era): Early LLMs relied solely on prompt engineering for structured data. Developers manually crafted prompts and implemented extensive regex parsing and retry loops, leading to fragile systems.

- 2022-2023 (Emergence of Tool Use): The concept of "tool use" or "plugin" architectures gained traction, demonstrating LLMs’ ability to interact with external systems. This was often achieved through clever prompting and custom orchestrators.

- Late 2022 – Early 2023 (Grammar-Constrained Decoding): Research and open-source projects like `Outlines` began to offer more deterministic ways to ensure structured JSON output, leveraging advancements in decoding algorithms.

- Mid-2023 (Native Function Calling APIs): Major providers like OpenAI introduced native function calling APIs, formalizing the process and making it a first-class feature within their platforms. This was followed by similar offerings from Anthropic and Google Gemini, standardizing the approach.

- Late 2023 – Present (Refinement and Hybridization): Continued refinement of these APIs, alongside the development of advanced agent frameworks, has led to sophisticated hybrid architectures that combine the strengths of both structured outputs and function calling.

Use Cases in Practice: A Strategic Decision Framework

Choosing between structured outputs and function calling is not arbitrary; it depends entirely on the task’s requirements.

When to Opt for Structured Outputs

Structured outputs should be the default choice whenever the primary objective is pure data transformation, extraction, or standardization, and all necessary information resides within the prompt or context window. The model’s role is simply to reshape existing data into a predictable format.

- Data Extraction and Parsing: Extracting specific entities (e.g., names, dates, product IDs, sentiment scores) from unstructured text, such as customer reviews, legal documents, or news articles, into a JSON object for database storage or further processing.

- Configuration Generation: Creating configuration files (e.g., for software, network devices, or simulation parameters) based on a natural language description, ensuring the output adheres to a strict schema.

- Content Summarization with Metadata: Summarizing a document and simultaneously extracting key metadata like keywords, author, publication date, and topic categories, all formatted according to a predefined JSON structure.

- Schema Mapping and Translation: Translating data from one unstructured format to another structured format, or mapping data between different structured schemas, such as converting a free-text job description into a standardized `JobPosting` schema.

- Form Field Population: Given a user’s free-form input, populating a structured form (e.g., for an order, a support ticket) with validated fields, ensuring data types and constraints are met.

The Verdict for Structured Outputs: Use structured outputs when the "action" is purely about formatting, transformation, or extraction of information already present. Their advantages include high reliability, significantly lower latency due to single-turn generation, and near-zero schema-parsing errors, making them ideal for robust data pipelines. Industry benchmarks suggest that grammar-constrained decoding methods achieve over 99.5% schema compliance, a stark contrast to the error rates of traditional prompt engineering.
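As an illustration of the extraction use case, the sketch below defines a small JSON schema for a product review and checks a model response against it. The schema, the field names, and the `check_against_schema` helper are hypothetical, and the helper covers only the tiny subset of JSON Schema used here. With provider-side constrained decoding the structural checks should rarely fail, but a downstream check like this is still a cheap safeguard.

```python
import json

# Hypothetical schema for a review-extraction task.
REVIEW_SCHEMA = {
    "type": "object",
    "properties": {
        "product_id": {"type": "string"},
        "sentiment": {"type": "string",
                      "enum": ["positive", "neutral", "negative"]},
        "rating": {"type": "integer"},
    },
    "required": ["product_id", "sentiment", "rating"],
    "additionalProperties": False,
}

PY_TYPES = {"string": str, "integer": int}

def check_against_schema(obj, schema):
    """Return a list of problems (empty means the object conforms)."""
    problems = []
    props = schema["properties"]
    for key in schema["required"]:
        if key not in obj:
            problems.append(f"missing field: {key}")
    for key, value in obj.items():
        if key not in props:
            problems.append(f"unexpected field: {key}")
            continue
        spec = props[key]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, PY_TYPES[spec["type"]]):
            problems.append(f"wrong type for {key}")
        elif "enum" in spec and value not in spec["enum"]:
            problems.append(f"invalid value for {key}: {value!r}")
    return problems

# Canned text standing in for a schema-constrained model response.
raw_output = '{"product_id": "SKU-1042", "sentiment": "positive", "rating": 5}'
record = json.loads(raw_output)
problems = check_against_schema(record, REVIEW_SCHEMA)
```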

When Function Calling Becomes Indispensable

Function calling is the engine of agentic autonomy, dictating the control flow of an application rather than just the shape of data. It is essential for external interactions, dynamic decision-making, and scenarios where the model needs to fetch information it doesn’t currently possess.

- Dynamic Information Retrieval: Answering questions that require up-to-date or external data, such as "What’s the current stock price of Google?" (requiring a `getStockPrice` tool) or "What are the latest headlines?" (requiring a `getNews` tool).

- Interacting with APIs and Databases: Allowing the model to interact with a CRM system to "create a new lead," query a product catalog to "find items under $50," or send an email using a `sendEmail` tool.

- Complex Workflow Orchestration: Building agents that can plan and execute multi-step tasks, such as a travel agent assistant that first checks flight availability, then hotel prices, and finally books a reservation, using a series of tool calls.

- Personalization and Contextual Actions: A smart home assistant that can "turn off the lights in the living room" (requiring a `controlSmartHomeDevice` tool) or "set a reminder for tomorrow morning" (requiring a `createReminder` tool).

- Autonomous Agent Planning: Enabling agents to dynamically choose which tools to use based on user intent and available information, forming a decision-making loop that drives complex behaviors.

The Verdict for Function Calling: Choose function calling when the model must interact with the outside world, fetch hidden data, or conditionally execute software logic mid-thought. While it introduces multi-turn latency and requires careful orchestration, it unlocks true agentic capabilities and dynamic problem-solving. This approach underpins the most advanced AI assistants and autonomous systems.
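For a sense of what "providing a list of tools" looks like in practice, the sketch below builds two tool declarations as plain dictionaries. The layout follows the function-tool shape used by OpenAI's Chat Completions `tools` parameter; other providers use similar but not identical layouts, so treat the exact keys as an assumption to check against your provider's documentation. The `make_tool` helper, the descriptions, and the parameter names are invented for illustration.

```python
import json

def make_tool(name, description, properties, required):
    """Build one tool declaration with JSON-schema parameters."""
    return {
        "type": "function",
        "function": {
            "name": name,
            "description": description,
            "parameters": {
                "type": "object",
                "properties": properties,
                "required": required,
            },
        },
    }

tools = [
    make_tool(
        "getStockPrice",
        "Look up the latest price for a ticker symbol.",
        {"ticker": {"type": "string", "description": "Ticker, e.g. GOOGL"}},
        ["ticker"],
    ),
    make_tool(
        "sendEmail",
        "Send an email on behalf of the user.",
        {
            "to": {"type": "string"},
            "subject": {"type": "string"},
            "body": {"type": "string"},
        },
        ["to", "subject"],
    ),
]

# The list is JSON-serializable and would be sent alongside the prompt.
payload = json.dumps(tools, indent=2)
tool_names = [t["function"]["name"] for t in tools]
```

The descriptions matter as much as the schemas: the model decides whether and when to call a tool largely from this text.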

Performance, Latency, and Cost: Operational Considerations

When deploying AI agents to production, the architectural choice between structured outputs and function calling directly impacts unit economics and user experience.

- Latency: Structured outputs generally incur lower latency because they involve a single, constrained generation turn. The model receives the prompt and immediately generates the structured output. Function calling, conversely, is inherently multi-turn. It requires the model to generate a tool call, the system to execute the tool, and then the tool’s output to be re-injected into the model for a subsequent generation turn. This sequence can introduce significant delays, especially if external API calls are slow. For instance, a function call interacting with a third-party API might add hundreds of milliseconds to several seconds to the overall response time.

- Cost: API costs are typically calculated per token. Structured outputs involve a single generation, consuming tokens for the input prompt and the structured output. Function calling involves multiple generation turns and additional context. The model generates tokens for the function call, and then the function’s output (which can be substantial) is sent back to the model as part of the context for the final response. This often leads to higher token consumption and thus higher API costs per interaction. For example, a simple function call might consume 2-3x more tokens than a direct structured output, depending on the complexity of the function’s return data.

- Reliability and Error Handling: Structured outputs offer superior reliability due to grammar-constrained decoding, virtually eliminating schema compliance errors from the LLM itself. Error handling primarily focuses on the validity of the data values within the schema. Function calling introduces more points of failure: the model might hallucinate a non-existent function, generate invalid arguments, or the external tool itself might fail. Robust error handling for function calling requires careful validation of function names and arguments, comprehensive error trapping for external API calls, and strategies for re-planning or gracefully degrading if tools fail.
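The latency and cost comparison can be worked through with back-of-the-envelope arithmetic. Every number below is an assumption chosen for illustration: the token counts, the per-call latencies, and the prices are placeholders, not any provider's real rates.

```python
from dataclasses import dataclass

@dataclass
class ModelCall:
    input_tokens: int
    output_tokens: int
    latency_s: float

# Placeholder prices in USD per 1,000 tokens (assumed, not real rates).
PRICE_IN = 0.001
PRICE_OUT = 0.002

def summarize(calls, tool_latency_s=0.0):
    """Total tokens, cost, and wall-clock latency for one interaction."""
    tokens = sum(c.input_tokens + c.output_tokens for c in calls)
    cost = sum(c.input_tokens / 1000 * PRICE_IN
               + c.output_tokens / 1000 * PRICE_OUT for c in calls)
    latency = sum(c.latency_s for c in calls) + tool_latency_s
    return {"tokens": tokens, "cost_usd": cost, "latency_s": latency}

# Structured output: one constrained generation.
structured = summarize([ModelCall(500, 100, 1.0)])

# Function calling: two generations plus the external tool's own latency.
function_calling = summarize(
    [
        ModelCall(650, 30, 0.6),   # prompt + tool definitions -> tool call
        ModelCall(880, 80, 1.0),   # history + 200-token tool result -> answer
    ],
    tool_latency_s=0.8,
)

token_ratio = function_calling["tokens"] / structured["tokens"]
```

With these made-up figures, the function-calling path consumes 1,640 tokens against 600 (about 2.7 times as many) and takes about 2.4 s against 1.0 s. The second call is the expensive one because it re-sends the whole history plus the tool result as input.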

Hybrid Approaches and Best Practices

In advanced agent architectures, the distinction between these two mechanisms often blurs, leading to powerful hybrid approaches. Function calling can itself rely on constrained decoding under the hood (for example, OpenAI’s strict mode for tool definitions) to ensure the generated arguments match your function signatures. Conversely, it’s possible to design an agent that uses only structured outputs to return a JSON object describing an action, which your deterministic system executes after generation is complete. This effectively "fakes" tool use without the multi-turn latency. The trade-off is that the model never sees the action’s result.
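A minimal sketch of the structured-output-only variant is shown below. The action envelope (`action` plus `arguments`), the handler functions, and the canned `model_output` string are all hypothetical; in a real system the JSON would come from a single schema-constrained generation.

```python
import json

def create_reminder(text, when):
    """Hypothetical handler; a real one would write to a reminders store."""
    return f"Reminder set for {when}: {text}"

def control_device(device, state):
    """Hypothetical handler; a real one would call a smart-home API."""
    return f"{device} turned {state}"

HANDLERS = {
    "createReminder": create_reminder,
    "controlSmartHomeDevice": control_device,
}

def execute_action(raw_json):
    """Dispatch a model-produced action object in deterministic code."""
    action = json.loads(raw_json)
    kind = action.get("action")
    if kind == "none":                      # model chose to just reply
        return action.get("reply", "")
    handler = HANDLERS.get(kind)
    if handler is None:
        raise ValueError(f"unknown action: {kind!r}")
    return handler(**action["arguments"])

# Stand-in for one schema-constrained model response.
model_output = (
    '{"action": "controlSmartHomeDevice", '
    '"arguments": {"device": "living room lights", "state": "off"}}'
)
result = execute_action(model_output)
```

There is only one model invocation here, so this fits fire-and-forget actions well. It does not fit cases where the model must read the result before answering.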

Architectural Advice:

- Prioritize Structured Outputs: Use structured outputs as the default for data extraction and transformation whenever possible. Their reliability and low latency make them ideal for core data processing tasks.

- Isolate Function Calling: Encapsulate function calling logic within dedicated "tool agents" or specific modules. This makes the system more modular, easier to debug, and allows for robust error handling around external interactions.

- Validate Relentlessly: Always validate the outputs of both mechanisms. For structured outputs, validate the content against business rules. For function calls, validate the function name and arguments before execution, and always handle potential errors from the external tools.

- Optimize for Latency and Cost: Profile your agent’s performance. If a specific interaction is causing high latency or cost, re-evaluate if function calling is truly necessary, or if a hybrid approach or even a simple structured output could achieve the same goal with better efficiency.
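One way to apply the validation advice to function calls is a guard that checks the tool name and arguments before anything runs. This is a sketch under stated assumptions: the registry, the `send_email` stub, and the `(ok, result_or_error)` return convention are invented for illustration. It uses the standard library's `inspect.signature(...).bind` to detect missing or unexpected arguments.

```python
import inspect
import json

def send_email(to: str, subject: str, body: str = "") -> str:
    """Hypothetical tool; a real one would hand off to a mail service."""
    return f"queued email to {to}: {subject}"

REGISTRY = {"sendEmail": send_email}

def safe_dispatch(name, raw_arguments):
    """Return (ok, result_or_error) so the caller can re-plan on failure."""
    func = REGISTRY.get(name)
    if func is None:                        # hallucinated function name
        return False, f"unknown tool: {name}"
    try:
        args = json.loads(raw_arguments)
    except json.JSONDecodeError as exc:
        return False, f"arguments are not valid JSON: {exc.msg}"
    if not isinstance(args, dict):
        return False, "arguments must be a JSON object"
    try:
        # bind() raises TypeError for missing or unexpected parameters.
        inspect.signature(func).bind(**args)
    except TypeError as exc:
        return False, f"bad arguments: {exc}"
    try:
        return True, func(**args)
    except Exception as exc:                # the tool itself failed
        return False, f"tool failed: {exc}"

ok, result = safe_dispatch(
    "sendEmail", '{"to": "a@example.com", "subject": "Hi"}'
)
```

Returning the error text instead of raising lets the orchestrator feed the message back to the model as a tool result, so it can correct the call or degrade gracefully.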

The Broader Impact: Towards Reliable and Scalable AI

The deliberate use of structured outputs and function calling is not merely a technical detail; it represents a fundamental shift in AI engineering. It moves the discipline from experimental prompting to robust, production-grade software development. This evolution is critical for scaling AI solutions across industries, from finance and healthcare to manufacturing and retail. By providing deterministic ways to interact with LLMs, developers can build agents that are:

- More Reliable: Predictable outputs reduce debugging time and system failures.

- More Efficient: Reduced token usage and optimized latency translate to lower operational costs and better user experiences.

- More Scalable: Modular architectures allow for easier expansion and maintenance of complex agent systems.

- More Secure: Controlled interactions with external systems through defined function schemas minimize risks associated with arbitrary code execution.

Industry analysts project the AI agent market to grow at a Compound Annual Growth Rate (CAGR) exceeding 30% over the next five years, reaching multi-billion dollar valuations. The underlying infrastructure for reliable interactions, driven by structured outputs and function calling, is a cornerstone of this growth. Developers are increasingly recognizing that the "glue code" for AI is just as important as the models themselves.

Wrapping Up: A Foundational Choice for the Future of AI

LM engineering is rapidly transitioning from crafting conversational chatbots to building reliable, programmatic, autonomous agents. Understanding how to constrain and direct your models is the key to that transition. The most effective AI engineers treat function calling as a powerful but unpredictable capability, one that should be used sparingly and surrounded by robust error handling. Conversely, structured outputs should be treated as the reliable, foundational glue that holds modern AI data pipelines together.

The Practitioner’s Decision Tree:

When building a new feature, run through this quick 3-step checklist:

- Does the model need to interact with external systems or fetch information it doesn’t already possess? If YES, consider Function Calling. If NO, proceed to step 2.

- Is the goal simply to transform, extract, or standardize information already available in the prompt/context into a predictable format? If YES, use Structured Outputs. If NO, you might be looking for basic text generation or a different LLM capability.

- Are latency and cost critical constraints for this interaction? If YES, lean towards Structured Outputs or explore hybrid approaches to minimize function calls.
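The checklist can also be written down as a small function. This is one possible reading of the three questions rather than a definitive rule. The function name, the parameter names, and the returned strings are invented, and the latency and cost question is treated here as a modifier on the function-calling branch.

```python
def choose_mechanism(needs_external_data,
                     reshapes_existing_info,
                     latency_or_cost_critical):
    """Map the three checklist answers to a suggested mechanism."""
    if needs_external_data:                       # step 1
        if latency_or_cost_critical:              # step 3
            return "function calling (minimize calls; consider a hybrid)"
        return "function calling"
    if reshapes_existing_info:                    # step 2
        return "structured outputs"
    return "plain text generation or another capability"

# Example: extracting fields from a document already in the prompt.
suggestion = choose_mechanism(
    needs_external_data=False,
    reshapes_existing_info=True,
    latency_or_cost_critical=True,
)
```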

By making these deliberate architectural choices, AI engineers are not just optimizing performance; they are laying the groundwork for the next generation of intelligent, reliable, and truly autonomous systems that will redefine human-computer interaction.