The Evolution of Artificial Intelligence: From Reactive to Agentic

To understand the current surge in compute demand, it is necessary to examine the chronology of AI development since the early 2010s. The industry first moved from basic machine learning algorithms to deep learning, which gained mainstream prominence around 2012. In 2017, the introduction of the Transformer architecture revolutionized natural language processing, eventually leading to the 2022 release of ChatGPT and the subsequent explosion of generative AI.

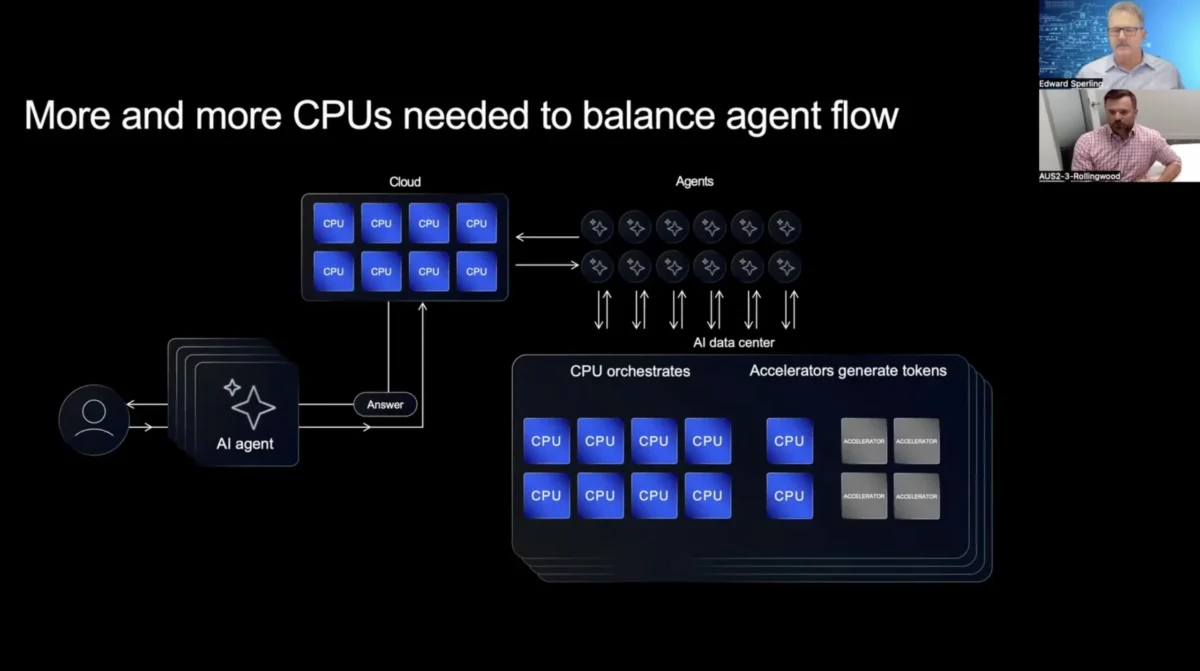

In the generative AI phase, the primary model of interaction is a "one-to-one" relationship: a human user provides a prompt, and a model generates a response. While this requires significant compute power for training and inference, demand is capped by human input speed and the intermittent nature of human activity.

The industry is now moving into the "Agentic AI" phase. In this model, a human provides a high-level goal, such as "organize a business trip including flights, meetings, and local transport," and a primary agent decomposes this goal into dozens or even hundreds of sub-tasks, which are then distributed to specialized sub-agents. These agents communicate with one another, verify information, negotiate with external APIs, and reconcile data points in real time. This machine-to-machine dialogue can run 24 hours a day, seven days a week, removing the "human bottleneck" and creating a persistent, high-utilization environment for data center hardware.
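The decomposition pattern described above can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the `Orchestrator` and `SubTask` names, the three hard-coded sub-agents, and the in-process `dispatch` call standing in for a real network request. It is not drawn from any particular agent framework.

```python
from dataclasses import dataclass, field


@dataclass
class SubTask:
    """One unit of work handed to a specialized sub-agent."""
    agent: str        # hypothetical sub-agent name
    description: str


@dataclass
class Orchestrator:
    """Primary agent: decomposes a goal and dispatches the sub-tasks."""
    machine_requests: int = 0               # machine-to-machine calls made
    log: list = field(default_factory=list)

    def decompose(self, goal: str) -> list:
        # Hard-coded plan for illustration; a real agent would plan dynamically.
        return [
            SubTask("flights", f"find flights for: {goal}"),
            SubTask("calendar", f"schedule meetings for: {goal}"),
            SubTask("transport", f"book local transport for: {goal}"),
        ]

    def dispatch(self, task: SubTask) -> str:
        # Stand-in for a network call to a sub-agent or an external API.
        self.machine_requests += 1
        result = f"[{task.agent}] done: {task.description}"
        self.log.append(result)
        return result

    def run(self, goal: str) -> list:
        # One human goal fans out into one dispatch per sub-task.
        return [self.dispatch(task) for task in self.decompose(goal)]


orchestrator = Orchestrator()
results = orchestrator.run("business trip")
print(orchestrator.machine_requests)  # 3 machine requests for 1 human goal
```

Even in this toy version, a single human goal produces several machine-to-machine calls, and none of them waits on further human input.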

The Architectural Challenge: Why General-Purpose Processing Matters

While GPUs and specialized AI accelerators (NPUs) have dominated the conversation regarding AI training, the shift toward agentic AI places a renewed focus on general-purpose processing, typically handled by the Central Processing Unit (CPU). In an agentic ecosystem, the CPU serves as the "conductor" or "orchestrator." While a GPU might handle the heavy lifting of a specific inference task, the CPU is responsible for managing the logic of the workflow, making decisions based on agent feedback, and handling the complex networking protocols required for agents to talk to one another.

According to industry analysts and engineering leaders at Arm, the orchestration of multiple agents creates a "multiplication effect" on compute requirements. For every single human request, there may be 10 to 50 internal machine-to-machine requests. Each of these requests involves data parsing, security checks, and logic branches—tasks that are inherently suited for the high-frequency, low-latency execution capabilities of modern general-purpose processors like the Arm Neoverse platforms. As these agents operate autonomously, the duty cycle of servers in the data center moves toward 100%, necessitating chips that can deliver high performance without exceeding strict power and thermal envelopes.
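As a rough illustration of this multiplication effect, the arithmetic can be written out directly. The fan-out range of 10 to 50 comes from the figures quoted above; the load of 1,000 human requests per second is a hypothetical value chosen only to make the numbers concrete.

```python
def machine_requests(human_requests: int, fan_out: int) -> int:
    """Internal machine-to-machine requests generated by human requests."""
    return human_requests * fan_out


LOW_FAN_OUT, HIGH_FAN_OUT = 10, 50   # range quoted in the text
HUMAN_REQUESTS_PER_SECOND = 1_000    # hypothetical load, for illustration

low = machine_requests(HUMAN_REQUESTS_PER_SECOND, LOW_FAN_OUT)
high = machine_requests(HUMAN_REQUESTS_PER_SECOND, HIGH_FAN_OUT)
print(f"{low:,} to {high:,} internal requests per second")
# prints "10,000 to 50,000 internal requests per second"
```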

Supporting Data: The Scale of the Compute Explosion

The scale of this transition is reflected in the projected growth of data center energy consumption and hardware investment. According to a 2024 report by Goldman Sachs, AI is expected to drive a 160% increase in data center power demand by 2030. Furthermore, the International Energy Agency (IEA) estimates that data centers’ total electricity consumption could reach over 1,000 terawatt-hours (TWh) by 2026, roughly equivalent to the electricity consumption of Japan.

The demand for bandwidth within the chip architecture itself is also rising sharply. In the agentic era, the bottleneck is often not the speed of the processor, but the speed at which data can move between the processor, the memory, and the network. High-end server architectures are moving toward PCIe Gen 6 and Gen 7, as well as adopting Compute Express Link (CXL) to allow for memory pooling and lower latency. Arm's Jeff Defilippi notes that improving bandwidth within the chip architecture is essential to prevent "stalls" in the agentic workflow, where a fast processor sits idle while waiting for a response from a sub-agent or a remote database.
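The cost of such stalls can be sketched with an idealized utilization model. This is a simplification introduced here, not a formula from Arm or Defilippi: it assumes each request needs a fixed amount of compute followed by a fixed wait, and that the processor can switch to other in-flight requests at no cost. The millisecond values are hypothetical.

```python
def utilization(compute_ms: float, wait_ms: float, in_flight: int = 1) -> float:
    """Fraction of time a core does useful work under an idealized model.

    Each request computes for `compute_ms`, then waits `wait_ms` on a
    sub-agent, memory, or the network. With `in_flight` requests, the
    core can fill one request's wait with another request's compute.
    """
    busy = in_flight * compute_ms
    cycle = compute_ms + wait_ms
    return min(1.0, busy / cycle)


print(utilization(2.0, 8.0))      # 0.2 -> the core is idle 80% of the time
print(utilization(2.0, 3.0))      # 0.4 -> shorter waits raise utilization
print(utilization(2.0, 8.0, 5))   # 1.0 -> enough overlapping work hides the wait
```

Under these assumptions, utilization improves either by shortening the wait (faster data movement) or by keeping more requests in flight, which in turn demands more bandwidth to feed them.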

| Metric | Generative AI Phase (2023) | Agentic AI Phase (2025-2030 Proj.) |
|---|---|---|
| Primary Interaction | Human-to-Machine | Machine-to-Machine |
| Duty Cycle | Intermittent (Human-driven) | Continuous (Autonomous) |
| Queries per Intent | 1:1 | 1:10+ |
| Data Center Power Growth | Moderate | High (Estimated 160% increase) |
| Hardware Focus | GPU-centric Training | CPU-GPU Balanced Orchestration |

Official Responses and Industry Reactions

The semiconductor industry is responding to these demands with a wave of architectural innovation. Leaders at Arm have emphasized that the future of data centers lies in "specialized general-purpose compute." This seemingly paradoxical term refers to CPUs that are highly customizable for specific data center workloads while retaining the flexibility to run a wide array of software.

"The shift from generative to agentic AI will significantly increase the amount of compute power needed in data centers," states Defilippi. He suggests that the industry must focus on three pillars: orchestration efficiency, 24/7 operational stability, and massive increases in inter-chip and intra-chip bandwidth.

Hyperscalers such as Amazon Web Services (AWS), Microsoft Azure, and Google Cloud have already begun deploying custom silicon designed to handle these specific orchestration tasks. AWS’s Graviton series and Microsoft’s Cobalt 100 are examples of Arm-based processors designed to maximize performance-per-watt, specifically targeting the high-density, high-throughput workloads that agentic AI demands. The consensus among these major players is that traditional, "one-size-fits-all" hardware is no longer sufficient to meet the efficiency requirements of an agent-driven digital economy.

Technical Implications: Bandwidth and Chiplet Architectures

To address the bandwidth constraints inherent in agentic AI, the industry is moving toward chiplet-based architectures. By breaking a large processor into smaller, specialized chips (chiplets) and connecting them using high-speed interconnects like Universal Chiplet Interconnect Express (UCIe), manufacturers can increase the total "surface area" for data movement. This allows for more memory channels and more network interfaces to be integrated into a single package.

Furthermore, the "memory wall" (the gap between how fast a processor can compute and how fast it can access memory) is becoming a primary target for optimization. Agentic AI requires agents to maintain "context windows" or "memory" of previous interactions to make informed decisions. As the number of agents grows, the amount of data that must be kept "near" the processor increases. This is driving the adoption of High Bandwidth Memory (HBM), once reserved largely for high-end GPUs and accelerators, and of 3D-stacked cache in general-purpose CPU designs to support the reasoning tasks of autonomous agents.
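A back-of-the-envelope calculation shows how the resident context grows with the number of agents. The per-agent context length and the bytes of state per token below are hypothetical placeholders; real values depend heavily on the model and on how context is stored.

```python
MIB = 1024 * 1024


def resident_context_mib(agents: int, tokens_per_agent: int,
                         bytes_per_token: int) -> float:
    """Memory needed to keep every agent's context resident, in MiB."""
    return agents * tokens_per_agent * bytes_per_token / MIB


TOKENS_PER_AGENT = 8_192   # hypothetical context length per agent
BYTES_PER_TOKEN = 1_024    # hypothetical state kept per token

for agents in (10, 100, 1_000):
    footprint = resident_context_mib(agents, TOKENS_PER_AGENT, BYTES_PER_TOKEN)
    print(f"{agents:>5} agents -> {footprint:,.0f} MiB")
```

With these placeholder values, the footprint grows linearly from 80 MiB for 10 agents to 8,000 MiB for 1,000 agents, which is why capacity and bandwidth close to the processor become the constraint as agent counts rise.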

Broader Impact and Future Implications

The transition to agentic AI will have profound implications for the global economy and the environment. On the economic front, the ability of machines to talk to machines and execute complex tasks without human oversight will likely lead to massive productivity gains in sectors such as finance, logistics, and legal services. However, these gains come at a cost. The sheer volume of compute power required means that the "cost per query" must drop significantly for agentic AI to be commercially viable at scale. This puts immense pressure on semiconductor designers to find more efficient ways to process data.

From an environmental perspective, the continuous operation of agentic AI poses a challenge to sustainability goals. As data centers shift to 24/7 high-utilization models, the traditional methods of managing power—such as "throttling" during off-peak hours—become less effective. The industry will need to rely more heavily on carbon-free energy sources and advanced cooling technologies, such as liquid cooling and immersion cooling, to handle the heat generated by these persistent workloads.

In conclusion, the rise of agentic AI marks the beginning of a new chapter in computing. The shift from human-initiated requests to autonomous, machine-to-machine orchestration is transforming the requirements for data center hardware. General-purpose processing, once overshadowed by the hype of AI accelerators, is returning to the forefront as the essential glue that holds these complex agentic ecosystems together. As Jeff Defilippi and other industry experts suggest, the success of this next wave of AI will depend not just on how fast we can calculate, but on how efficiently we can move data and orchestrate the complex dialogue between the machines of the future.