The proliferation of sophisticated large language models (LLMs) has ushered in a new era of artificial intelligence: agentic AI systems. These systems, designed to reason, plan, and act autonomously in complex environments, promise to revolutionize industries from customer service to scientific discovery. However, the path to building reliable and scalable agentic AI is fraught with challenges. Many initial implementations of agentic AI systems are developed incrementally, pattern by pattern, decision by decision, often lacking a cohesive architectural framework. This ad-hoc approach frequently leads to unpredictable agent behavior, difficulties in debugging, and significant hurdles in systematic improvement, a problem that intensifies in multi-step workflows where early missteps can cascade throughout an entire operation.

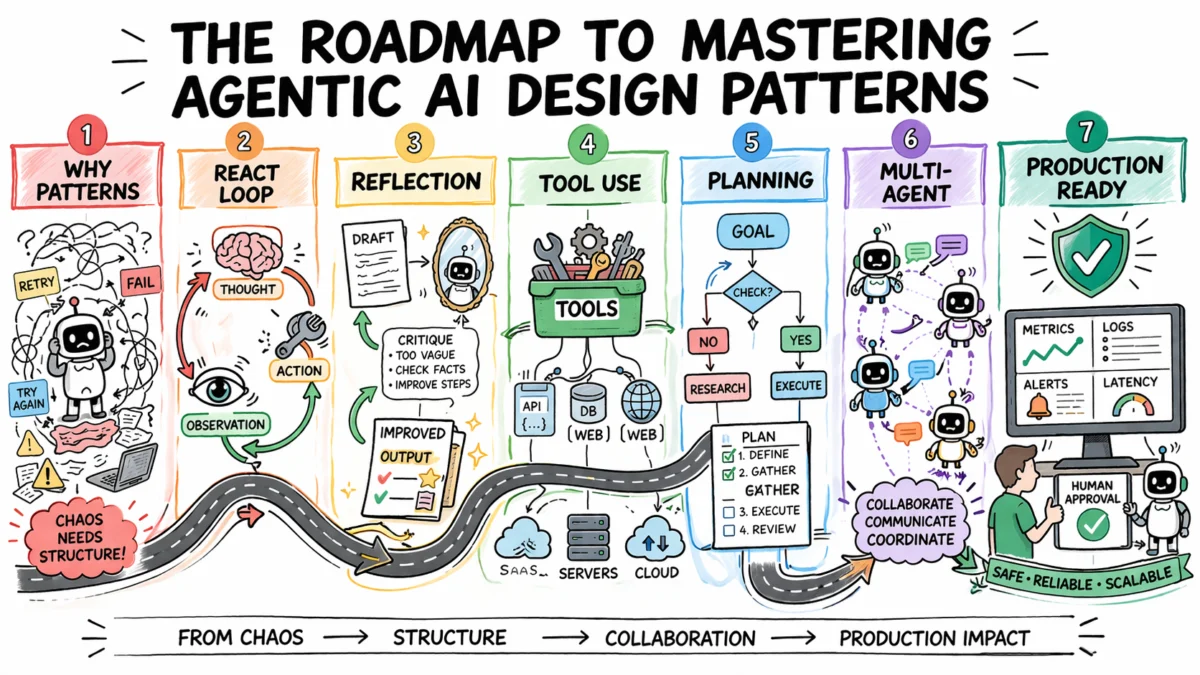

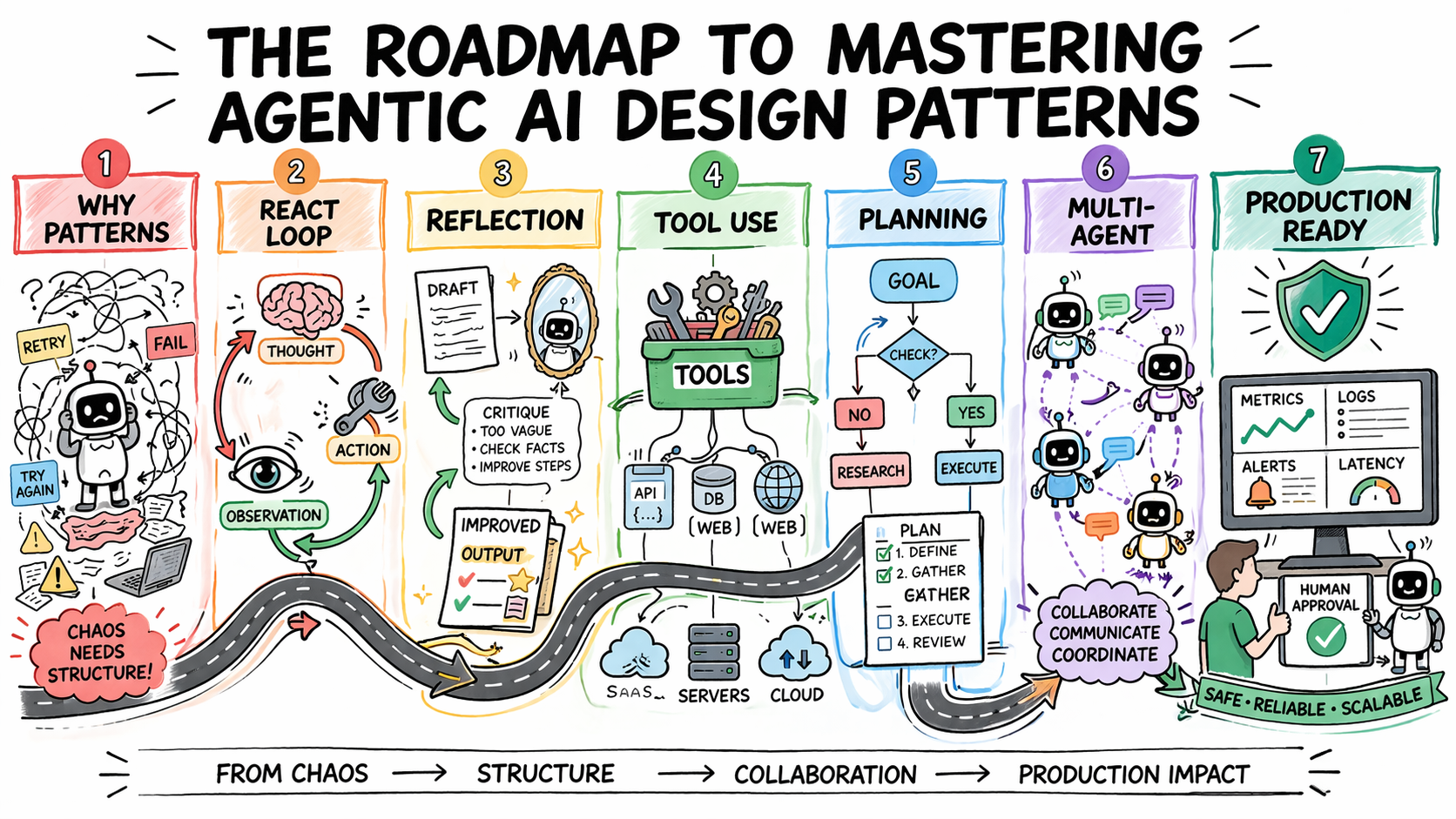

Agentic design patterns emerge as a critical solution to these challenges. These are reusable, proven approaches tailored to address recurring problems inherent in agentic system design. They provide a structured methodology for defining how an agent reasons before acting, evaluates its own outputs, selects and utilizes external tools, coordinates with other agents, and determines when human intervention is necessary. Selecting the appropriate pattern for a given task is paramount, transforming agent behavior from a black box into a predictable, debuggable, and composable system capable of evolving with increasing requirements. This article provides a comprehensive roadmap for understanding and applying these essential agentic AI design patterns, detailing their architectural significance, appropriate use cases, inherent trade-offs, and how they integrate within real-world deployments.

The Imperative for Structured Agent Development

Before delving into specific patterns, it is crucial to reframe the common perception of agent failures. Many developers instinctively attribute agent missteps to inadequate prompting, believing a refined system prompt will rectify the issue. While prompting plays a vital role, a deeper analysis often reveals architectural shortcomings. An agent caught in an endless loop, for instance, typically lacks a deliberately designed stopping condition. One that misuses tools often operates without a clear contractual understanding of when and how to invoke specific functionalities. Similarly, an agent generating inconsistent outputs from identical inputs usually suffers from the absence of a structured decision-making framework.

Design patterns are explicitly engineered to resolve these architectural deficiencies. They serve as repeatable templates that define the fundamental behavior of an agent’s operational loop: how it determines its next action, when to terminate a task, strategies for error recovery, and mechanisms for reliable interaction with external systems. Without these structural blueprints, debugging and scaling agent behavior become nearly insurmountable tasks.

A common pitfall for development teams is the "pattern-selection problem." The temptation to immediately deploy the most advanced and complex patterns—such as multi-agent orchestration or dynamic planning—is strong. However, industry experts caution against premature complexity in agentic systems, highlighting its steep costs. More model calls translate to higher latency and increased token expenses. A greater number of agents introduces additional failure surfaces, while intricate orchestration heightens the risk of coordination bugs. The costly mistake is often the leap to complex patterns before simpler ones have demonstrably reached their operational limits. Therefore, a pragmatic approach dictates starting with the simplest viable pattern and escalating complexity only when clear limitations are encountered.

ReAct: The Foundational Pattern for Adaptive Reasoning

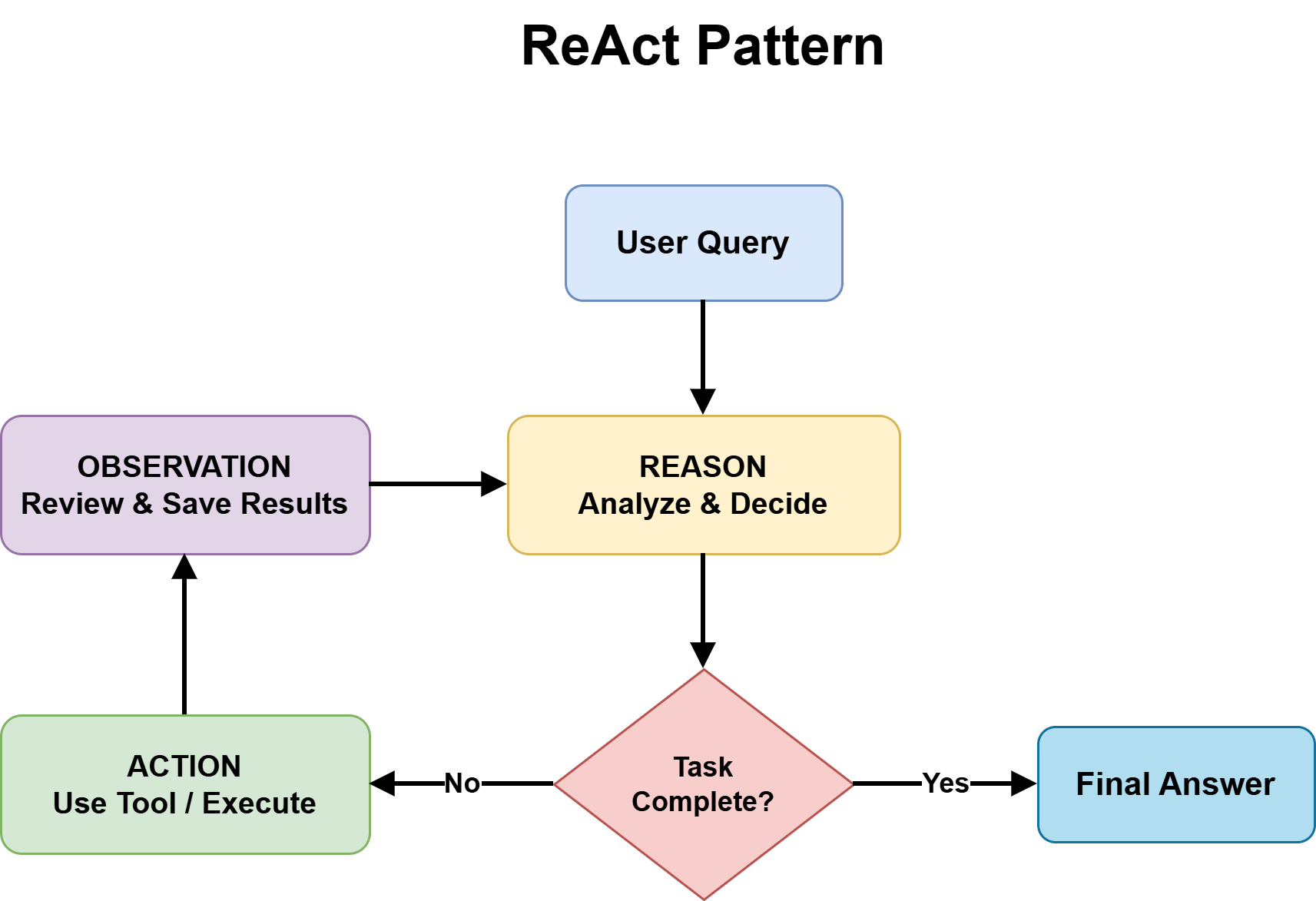

ReAct, an acronym for Reasoning and Acting, stands as the most foundational and often the default agentic design pattern for tackling complex, unpredictable tasks. It ingeniously integrates chain-of-thought reasoning with the utilization of external tools within a continuous feedback loop. This pattern cyclically alternates through three distinct phases: Observation, Thought, and Action. The agent first observes the current state and the results of its previous actions, then thinks or reasons about what to do next, and finally takes an action, often by invoking a tool or generating a response. This cycle persists until the task is definitively completed or a predefined stopping condition is met.

The effectiveness of ReAct stems from its externalization of the reasoning process. Every decision point is made visible, offering unparalleled transparency. When an agent deviates or fails, developers can precisely pinpoint where the logical breakdown occurred, eliminating the need to debug a opaque, black-box output. Furthermore, ReAct actively prevents premature conclusions by grounding each reasoning step in an observable result before progressing, significantly mitigating the risk of hallucinations where models generate unsupported answers without real-world validation.

However, ReAct is not without its trade-offs. Each iteration of the ReAct loop necessitates an additional model call, contributing to increased latency and operational costs. Errors in tool output can propagate through subsequent reasoning steps, compromising overall accuracy. The inherent non-deterministic nature of some language models means identical inputs might lead to divergent reasoning paths, posing consistency challenges, particularly in regulated environments. Without an explicit iteration cap, the ReAct loop risks running indefinitely, leading to rapidly compounding costs. Consequently, ReAct is best suited for scenarios where the solution path is not predetermined, such as adaptive problem-solving, multi-source research, and customer support workflows characterized by variable complexity. Conversely, it should be avoided when speed is paramount or when inputs are sufficiently well-defined to be handled more efficiently and economically by a fixed workflow.

Enhancing Reliability with Reflection

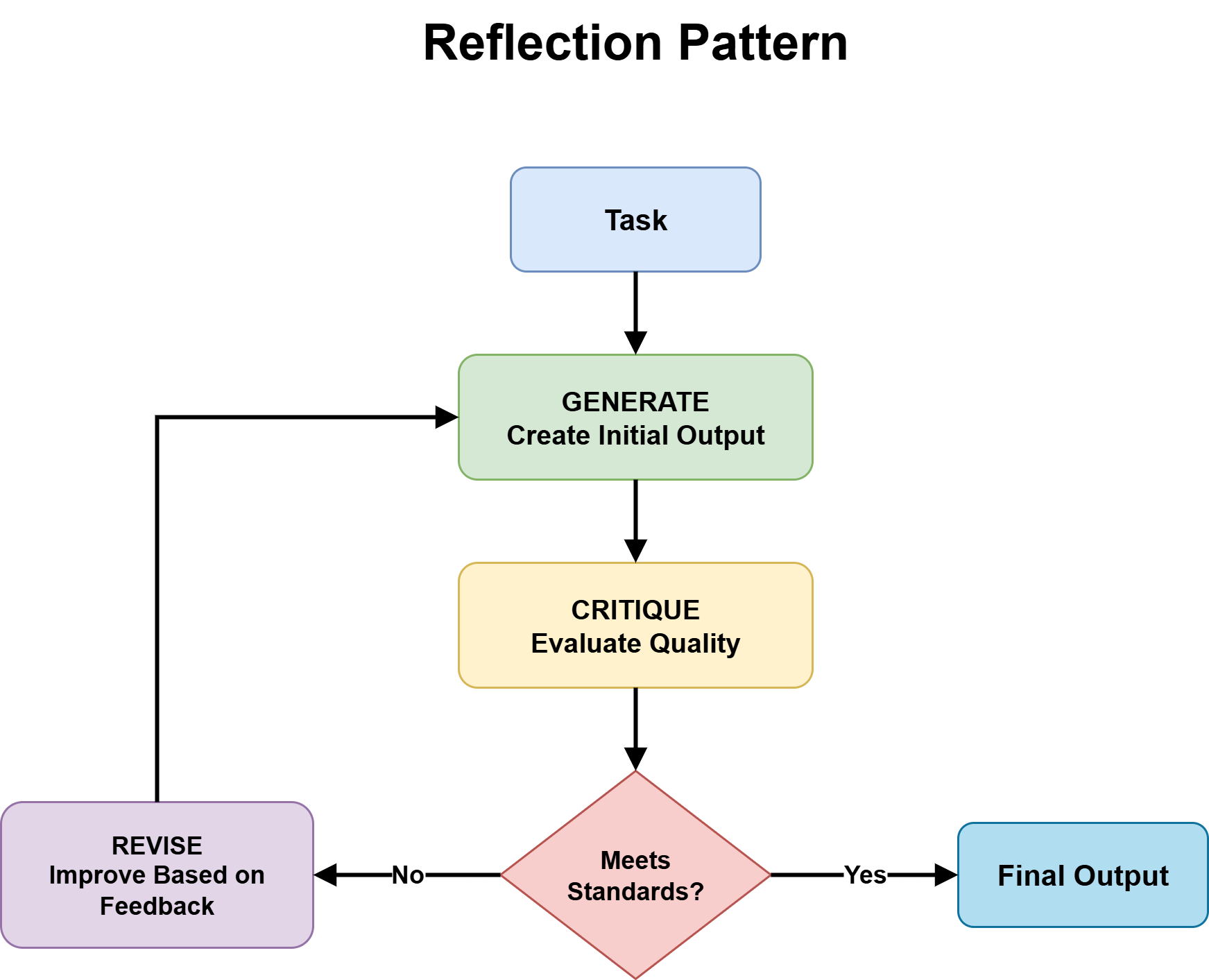

The Reflection pattern empowers an agent with the crucial ability to critically evaluate and revise its own outputs before they are presented to a user. This is achieved through a structured generation-critique-refinement cycle. Initially, the agent produces an output. Subsequently, it assesses this output against a set of predefined quality criteria. This assessment then forms the basis for a revision, initiating a refinement phase. This cycle can be configured to run for a predetermined number of iterations or until the output satisfies a specified quality threshold.

This pattern proves particularly effective when the critique mechanism is specialized. For instance, an agent tasked with code review can focus its critique on identifying bugs, edge cases, or potential security vulnerabilities. An agent reviewing a legal contract might meticulously check for missing clauses or logical inconsistencies. The efficacy of reflection is further amplified by integrating external verification tools such as linters, compilers, or schema validators. These tools provide deterministic feedback, allowing the agent to rely on objective criteria rather than solely on its internal judgment.

Critical design decisions underpin successful reflection. Crucially, the critic component should operate independently from the generator. At a minimum, this entails a separate system prompt with distinct instructions; for high-stakes applications, employing an entirely different model for critique can prevent the critic from inheriting the same blind spots as the generator, thereby fostering genuine evaluation rather than superficial self-agreement. Additionally, explicit iteration bounds are non-negotiable. Without a maximum loop count, an agent continually seeking marginal improvements may stall indefinitely, failing to converge on a satisfactory output. Reflection is the ideal pattern when output quality is prioritized over speed, and when tasks possess sufficiently clear correctness criteria to facilitate systematic evaluation. Its added cost and latency, however, make it less suitable for simple factual queries or applications demanding strict real-time performance.

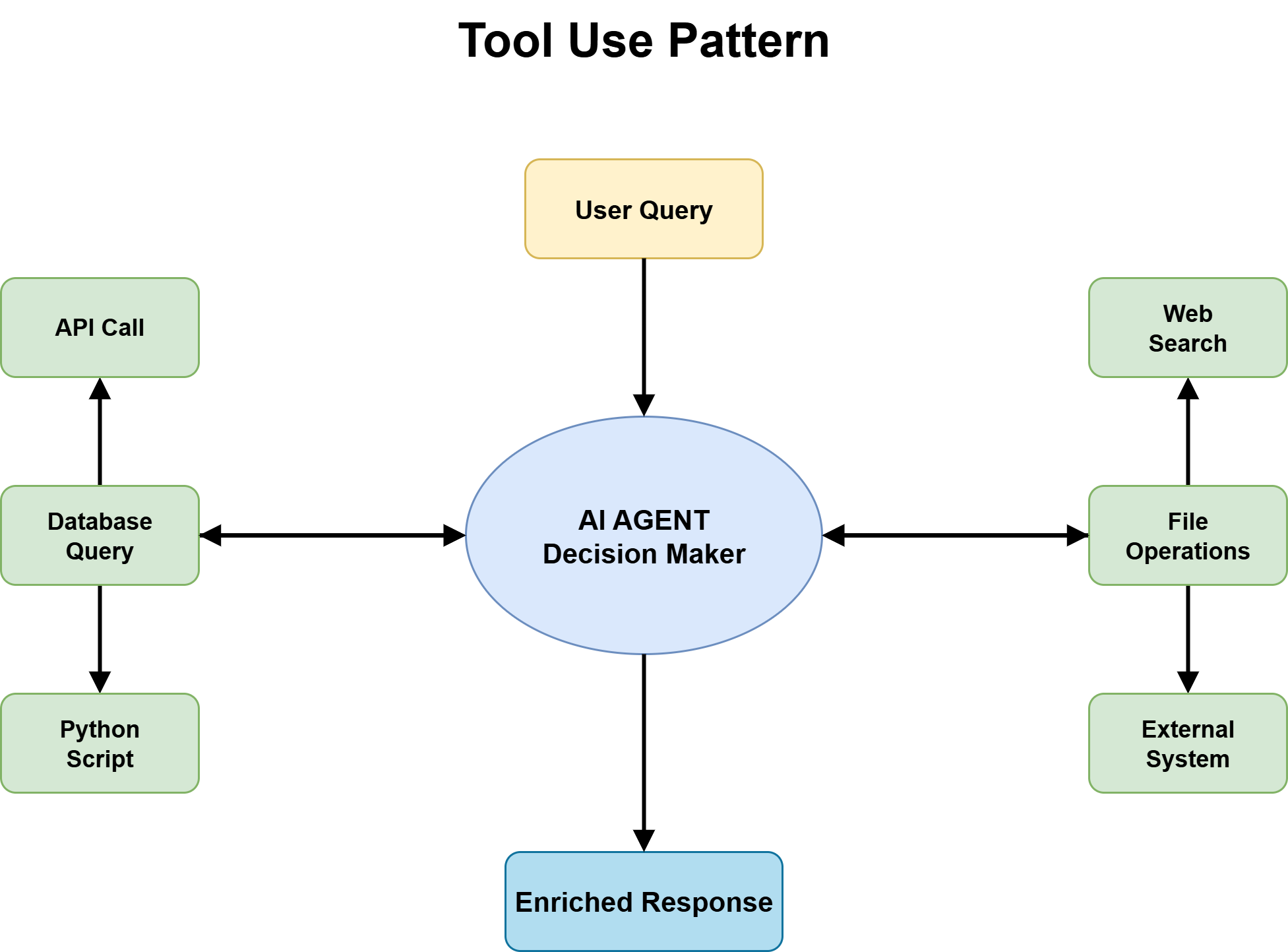

Tool Use: Bridging AI with the Real World

Tool use is the transformative design pattern that elevates an agent from a mere knowledge system into a dynamic action system. Without the ability to use tools, an agent remains confined to its training data, lacking access to current information, external systems, or the capacity to trigger real-world actions. With tool integration, an agent gains the power to call APIs, query databases, execute code, retrieve documents, and interact seamlessly with a multitude of software platforms. For virtually every production-grade agent designed to handle real-world tasks, robust tool use forms the indispensable foundation upon which all other functionalities are built.

The most paramount architectural decision in implementing tool use is the definition of a fixed tool catalog complete with strict input and output schemas. Without these clear schemas, the agent is left to guess how to invoke tools, a process prone to failure, especially under edge cases. Tool descriptions must be sufficiently precise to enable the agent to accurately reason about which tool is appropriate for a given situation. Vague descriptions lead to mismatched calls, while overly narrow ones cause the agent to overlook valid use cases.

Another critical decision revolves around handling tool failures. An agent that simply inherits the reliability problems of its external dependencies without any explicit failure-handling logic will be inherently fragile. APIs are susceptible to rate limits, timeouts, unexpected response formats, and behavioral changes following updates. A resilient agent’s tool layer must incorporate explicit error handling, sophisticated retry logic, and graceful degradation paths for scenarios where tools become temporarily unavailable.

Tool selection accuracy presents a subtler yet equally vital concern. As tool libraries expand, agents must navigate increasingly larger catalogs to identify the correct tool for each task. Performance in tool selection tends to degrade with the growth of the catalog size. A valuable design principle is to structure tool interfaces such that distinctions between tools are clear, unambiguous, and easily discernible by the agent.

Finally, tool use introduces a security surface that many agent developers frequently underestimate. Once an agent can interact with real systems—such as submitting forms, updating records, or initiating financial transactions—the potential blast radius of errors or malicious intent grows significantly. Consequently, sandboxed execution environments, stringent input validation, and human approval gates become absolutely essential for high-risk tool invocations. The OWASP Top 10 for Large Language Model Applications provides a useful reference for mitigating these security risks.

Strategic Planning for Complex Agentic Workflows

Planning represents the design pattern specifically engineered for tasks where the inherent complexity or coordination requirements are too high for the ad-hoc reasoning capabilities of a simple ReAct loop. Unlike ReAct, which improvises step-by-step, planning systematically decomposes a grand objective into an ordered sequence of subtasks, explicitly defining dependencies before any execution commences.

Broadly, two main implementations of planning exist: pre-execution planning and dynamic planning. Pre-execution planning involves the agent generating a comprehensive plan upfront, detailing all steps and their sequence before beginning execution. This is akin to drafting a project plan before starting work. Dynamic planning, on the other hand, allows the agent to adapt its plan mid-execution, re-evaluating and adjusting steps based on new observations or unexpected outcomes, offering greater flexibility in highly uncertain environments.

Planning proves invaluable for tasks demanding significant coordination: multi-system integrations that must proceed in a precise sequence, complex research tasks synthesizing information from numerous disparate sources, and intricate development workflows spanning design, implementation, and rigorous testing phases. The primary benefit of planning is its ability to surface hidden complexities and potential bottlenecks before execution even begins, thereby preventing costly mid-run failures and ensuring a more streamlined process.

The trade-offs associated with planning are straightforward. It necessitates an additional model call at the outset, an overhead that is unwarranted for simpler tasks. Furthermore, planning inherently assumes that the task structure is largely knowable in advance, an assumption that does not always hold true for highly dynamic or novel problems. Therefore, planning is the appropriate choice when the task structure can be clearly articulated upfront and when coordination between steps is sufficiently complex to benefit from explicit sequencing. In all other scenarios, defaulting to the ReAct pattern remains the more pragmatic choice.

Scaling Intelligence Through Multi-Agent Collaboration

As agentic AI systems tackle increasingly ambitious challenges, a single, monolithic agent often reaches its limitations. Multi-agent systems emerge as a powerful paradigm, distributing work across a collective of specialized agents, each endowed with focused expertise, a tailored tool set, and a clearly defined role. In this architecture, a central coordinator typically manages the routing of tasks and the synthesis of outputs, while specialist agents handle the specific functions for which they are optimized.

The benefits of multi-agent systems are compelling: they lead to superior output quality through specialized processing, allow for independent improvability of each agent without impacting the entire system, and facilitate a more scalable architecture capable of handling greater workloads. However, these advantages come with a significant increase in coordination complexity. Successfully implementing a multi-agent system requires meticulously addressing key design questions early in the development cycle.

Ownership—specifically, which agent possesses write authority over shared state or critical resources—must be explicitly defined to prevent conflicts and ensure data integrity. The routing logic that determines how tasks are directed to appropriate agents can range from deterministic rules to intelligent routing driven by an LLM, with most production systems employing a hybrid approach for optimal balance. The orchestration topology dictates how agents interact and collaborate:

- Sequential Topology: Agents process tasks in a linear fashion, passing outputs from one to the next (e.g., Agent A completes task, passes to Agent B).

- Hierarchical Topology: A manager agent delegates tasks to worker agents and aggregates their results (e.g., Manager assigns research to Agent X, writing to Agent Y).

- Dynamic/Peer-to-Peer Topology: Agents can communicate and collaborate flexibly as needed, forming ad-hoc teams to solve problems.

A prudent approach dictates starting with a single, capable agent leveraging ReAct and appropriate tools. The transition to a multi-agent architecture should only occur when a clear bottleneck or a demonstrable need for specialization emerges that cannot be efficiently addressed by a single agent. Modern agent orchestration frameworks like LangGraph, AutoGen, and CrewAI provide robust tools for managing the complexities inherent in multi-agent collaboration.

Ensuring Production Readiness and Safety

The selection of appropriate design patterns constitutes only half of the journey. Ensuring these patterns operate reliably and safely in a production environment demands deliberate evaluation, explicit safety engineering, and continuous monitoring.

It is crucial to define pattern-specific evaluation criteria. For ReAct patterns, evaluation might focus on loop efficiency, accuracy of tool calls, and convergence to a correct answer. For Reflection, key metrics include the rate of improvement per iteration and the system’s ability to converge on a high-quality output within defined bounds. Tool Use patterns require rigorous evaluation of tool call reliability, the accuracy of tool selection, and the robustness of error handling. Planning patterns are assessed based on the optimality of the generated plans and the success rate of plan execution. Multi-agent systems necessitate evaluation of coordination success, task throughput, and the seamless integration of specialist outputs.

Developers must build failure mode tests early in the development cycle. This involves proactively probing for common issues such as tool misuse, infinite loops, routing failures in multi-agent systems, and degraded performance under long context windows or high loads. Treating observability as a core requirement is non-negotiable; step-level traces and comprehensive logging are essential for effective debugging and understanding agent behavior in complex scenarios.

Designing guardrails based on risk assessment is paramount. This includes implementing robust input validation, strict rate limiting for external API calls, and human approval gates for high-impact or sensitive actions. The OWASP Top 10 for LLM Applications serves as an invaluable reference for identifying and mitigating security vulnerabilities specific to language model applications.

Finally, planning for human-in-the-loop (HITL) workflows should be considered a fundamental design pattern, not merely a fallback mechanism. Human oversight provides an essential layer of safety, expertise, and ethical governance, especially in high-stakes applications. Leveraging existing agent orchestration frameworks like LangGraph, AutoGen, CrewAI, and Guardrails AI can significantly streamline the implementation of these evaluation, safety, and monitoring practices, providing battle-tested abstractions for complex agentic workflows.

Conclusion

Agentic AI design patterns are not a static checklist to be completed once and then forgotten. They are dynamic architectural tools that must evolve in tandem with the increasing sophistication and changing requirements of your AI systems. The strategic adoption of these patterns is fundamental to moving beyond experimental prototypes towards robust, enterprise-grade agentic AI.

The overarching strategy remains consistent: initiate development with the simplest pattern that effectively addresses the problem at hand, introduce complexity only when clearly necessitated by evolving requirements or demonstrable limitations of simpler designs, and make substantial investments in comprehensive observability and rigorous evaluation throughout the development lifecycle. This disciplined approach is the cornerstone for building agentic AI systems that are not only highly functional but also inherently reliable, scalable, and safe for deployment in diverse real-world applications, charting a clear course for the future of autonomous intelligence.