Anthropic’s Claude Mythos Preview, unveiled on April 7, has swiftly become the focal point of global cybersecurity discussions, sparking both excitement and trepidation across the industry. This powerful, cybersecurity-focused AI system, initially described as capable of identifying vulnerabilities at unprecedented scale and speed, has forced organizations to confront profound questions about their ability to validate, prioritize, and remediate the influx of findings it generates. The debut of Mythos, as part of the broader "Project Glasswing" initiative, signals a potential paradigm shift, yet it also casts a stark light on a long-standing, often-overlooked operational deficiency: the chasm between vulnerability discovery and effective remediation.

The Dawn of AI-Powered Vulnerability Discovery

Anthropic, a leading AI safety and research company, has positioned Claude Mythos as a transformative tool for cybersecurity. Leveraging advanced large language model (LLM) technology, Mythos is designed to scrutinize codebases, identify logical flaws, and pinpoint exploitable vulnerabilities. By Anthropic's account, it does this with a depth and speed that exceed what human analysts or traditional scanning tools can achieve. Initial reports highlighted its capacity to accelerate the "red team" function by surfacing potential attack vectors and weaknesses across complex digital infrastructure. If that holds up in practice, it could substantially strengthen defensive postures through proactive, continuous assessment of an organization’s security landscape.

However, the very power of Mythos immediately raised critical questions within the cybersecurity community. Experts debated whether it represented a genuine "step-change" in security capabilities or merely an incremental advance. A major point of contention was Anthropic’s initial decision to restrict access to a select group of major technology companies and financial institutions, including Microsoft, Apple, Amazon Web Services (AWS), and JPMorgan. Critics argued that this approach might limit early risks by placing the tool in the hands of already well-defended entities. However, it could also concentrate defensive advantages and leave smaller organizations and critical infrastructure providers further behind. A further concern followed inevitably: what happens when state-sponsored actors, sophisticated criminal groups, or other malicious entities develop equivalent AI capabilities and turn them to offensive use?

Beyond Discovery: The Unseen Remediation Bottleneck

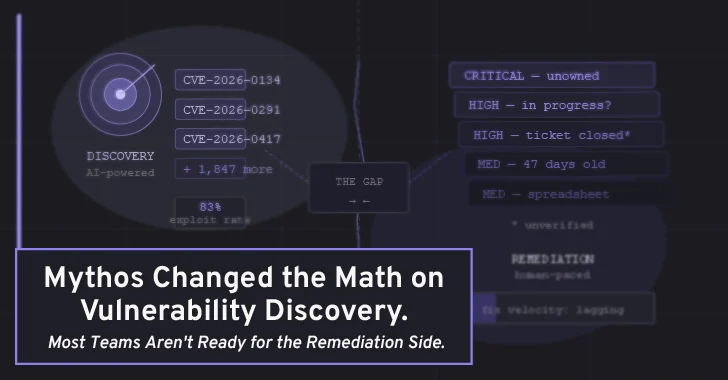

These strategic and ethical considerations are crucial. Yet a more immediate and pervasive operational challenge, often obscured in the broader AI security conversation, is gaining prominence: the "Discovery-to-Remediation Gap." AI models like Mythos may excel at finding vulnerabilities faster, but fixing them depends on an entirely different set of workflows and organizational capabilities. This gap is where many security programs quietly falter. It is likely to determine whether most organizations can keep pace with this new era of accelerated vulnerability discovery.

Traditional vulnerability management suffers from systemic inefficiencies. A penetration test, security audit, or vulnerability scan may uncover critical findings, but the results frequently end up in disparate, unstructured formats: a spreadsheet, a hastily created ticket, or a lengthy PDF report buried in an inbox. The security team may be aware of the issues. The engineering or development teams responsible for fixing them, however, often lack clear visibility, defined ownership, or standardized communication channels. The result is ambiguous remediation ownership, no consistent way to track whether patches are deployed or deprioritized, and frequently no scheduled re-testing to verify that a fix works. A backlog of unaddressed vulnerabilities quietly accumulates, turning identified risks into persistent liabilities.

AI models like Mythos are poised to dramatically accelerate the input side of this pipeline. They could discover vulnerabilities at a pace and depth well beyond human red teams or conventional scanners. However, an organization’s infrastructure for triaging, prioritizing, communicating, and verifying fixes may not have evolved in parallel. In that case, faster discovery simply produces a faster-growing, unmanageable backlog of critical issues. Consider a traditional penetration test that yields ten high-severity findings over three weeks, with remediation teams already struggling to keep up. What happens when an AI system continuously scans the same attack surface and generates findings at ten or even a hundred times that rate? Without a robust remediation framework, the sheer volume of new findings could overwhelm even well-intentioned security teams. Ironically, the organization could end up less secure despite its superior discovery capabilities.
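The arithmetic behind this scenario is easy to sketch. The Python snippet below models a backlog in which findings arrive at a steady weekly rate and the team can close only a fixed number per week. The ten-findings-per-three-weeks baseline comes from the scenario above. The remediation capacity of four fixes per week and the helper name `backlog_after` are hypothetical assumptions chosen for illustration.

```python
# Minimal backlog model: findings arrive at a steady weekly rate and the
# team closes at most a fixed number per week. All rates are assumptions.


def backlog_after(weeks: int, found_per_week: float, fixed_per_week: float) -> float:
    """Return the number of open findings after `weeks`, starting from zero."""
    backlog = 0.0
    for _ in range(weeks):
        backlog += found_per_week                 # new findings land
        backlog -= min(backlog, fixed_per_week)   # team fixes what it can
    return backlog


baseline = 10 / 3   # ~10 high-severity findings per three-week pentest
capacity = 4.0      # hypothetical: fixes the team can ship per week

for multiplier in (1, 10, 100):
    remaining = backlog_after(12, baseline * multiplier, capacity)
    print(f"{multiplier:>3}x discovery rate: {remaining:,.0f} open after 12 weeks")
```

Under these assumptions, the baseline rate never builds a backlog. A 10x discovery rate leaves roughly 350 findings open after twelve weeks, and a 100x rate leaves nearly 4,000. The exact numbers matter less than the shape: once arrivals exceed capacity, the backlog grows linearly and without bound.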

The False Positive Conundrum and Contextualizing Risk

Adding another layer of complexity to this operational problem is the issue of false positives, a point sharply articulated by cybersecurity expert Bruce Schneier. While Anthropic reported an impressive 89% severity agreement with human contractors on a showcased sample of Mythos findings, the true false positive rate on unfiltered output remains largely unknown. AI systems, particularly those designed to be highly sensitive to potential vulnerabilities, often generate plausible-sounding alerts, some of which may pertain to already patched code, non-exploitable conditions, or simply misinterpretations of context.

Operationally, a tool that generates high-confidence, yet spurious, critical findings at scale does not alleviate the burden on security teams; it exacerbates it. Each false positive that requires triage, investigation, and eventual dismissal consumes valuable time and resources that security engineers could otherwise dedicate to real, exploitable vulnerabilities. The true value of AI-assisted vulnerability discovery can only be realized if the findings it produces can be efficiently evaluated, accurately contextualized against actual business risk, and reliably routed to the appropriate personnel for action. This necessitates a sophisticated system capable of filtering noise, understanding the broader operational context, and distinguishing between theoretical weaknesses and immediate threats.
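Because the real false positive rate is unknown, it is more useful to treat it as a variable than to guess at it. The following sketch uses a hypothetical `triage_hours` helper and illustrative inputs (500 findings, 30 minutes of initial triage each). It shows how analyst time splits between real and spurious findings as that rate changes. It assumes every finding costs the same to triage, which real programs will not match exactly.

```python
from typing import Tuple

# Sketch: how much triage effort goes to false positives at a given rate.
# The false positive rate is a parameter because the real rate is unknown.


def triage_hours(findings: int, fp_rate: float, minutes_per_triage: float) -> Tuple[float, float]:
    """Return (hours on real findings, hours dismissing false positives).

    Every finding is assumed to need the same initial triage effort,
    since nobody knows which ones are spurious until someone looks.
    """
    if not 0.0 <= fp_rate <= 1.0:
        raise ValueError("fp_rate must be between 0 and 1")
    total = findings * minutes_per_triage / 60
    wasted = total * fp_rate
    return total - wasted, wasted


for rate in (0.1, 0.3, 0.5):
    useful, wasted = triage_hours(findings=500, fp_rate=rate, minutes_per_triage=30)
    print(f"FP rate {rate:.0%}: {useful:.0f} h on real issues, {wasted:.0f} h wasted")
```

At 500 findings and 30 minutes each, triage alone costs 250 analyst hours. At an assumed 30% false positive rate, 75 of those hours go to dismissing findings that were never real.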

Essential Infrastructure for an AI-Augmented Security Landscape

The organizations best positioned to absorb the velocity of Mythos-era discovery are those that have proactively invested in three foundational elements for their vulnerability management and remediation programs:

- Centralized Findings Management: This goes beyond a simple ticketing system or a Jira board haphazardly bolted onto a spreadsheet. It requires a purpose-built platform that aggregates, normalizes, and stores findings from diverse sources in a queryable format. Those sources include automated scanner outputs, manual penetration test reports, red team engagements, and now AI-generated findings. Such a system serves as a single source of truth, eliminating data silos and providing a comprehensive, real-time view of an organization’s risk posture. It enables consistent interpretation of data, supports trend analysis, and makes automated workflows possible.

- Risk-Contextualized Prioritization: Raw Common Vulnerability Scoring System (CVSS) scores are a starting point, not a decision. A high-CVSS finding on an air-gapped internal system carries a very different risk than the same finding in a customer-facing API handling sensitive data. Organizations that rely solely on severity scores will quickly be overwhelmed by the volume of AI-generated findings. Effective prioritization scores findings against a broader set of criteria:
  - asset criticality: the business value of the affected system
  - business impact: the potential financial, reputational, or operational consequences of exploitation
  - exposure context: whether the vulnerability is internet-facing, internal, or protected by other controls

  Dynamic, configurable scoring of this kind lets security teams triage intelligently. It focuses limited resources on the threats that pose the most significant and immediate danger to the business.

- Closed-Loop Remediation Tracking: This is the weakest link in many security programs. A vulnerability that is identified but never verified as fixed remains a liability, just one that now has a name. Effective closed-loop tracking follows a structured workflow: clear assignment of ownership, ongoing monitoring of remediation progress, and, crucially, re-testing to confirm that the patch or fix was implemented correctly and actually mitigates the vulnerability. Without these processes, a security program accumulates documented risk rather than reducing it. Platforms such as PlexTrac are designed to provide this operational layer. They turn raw findings into trackable remediation projects, assign accountability, and verify mitigation. Their role resembles that of a general contractor who manages repairs after a detailed home inspection identifies structural problems.
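The three elements can be illustrated together in a small Python sketch. It works as follows:

- It assumes hypothetical export formats for a scanner, a penetration test, and an AI tool (the `FIELD_MAP` keys are invented) and normalizes them into one `Finding` record.
- It ranks findings with an illustrative risk formula: CVSS scaled by a 1-to-5 asset criticality rating and an exposure weight. These are all assumed values, not any standard.
- It enforces a status workflow in which a finding can reach `VERIFIED` only through a re-test step after the fix is deployed.

This is a sketch under those assumptions, not how PlexTrac or any other product models findings.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Status(Enum):
    OPEN = "open"
    ASSIGNED = "assigned"
    FIX_DEPLOYED = "fix_deployed"  # engineering reports the fix is shipped
    VERIFIED = "verified"          # a re-test confirmed the fix works
    REOPENED = "reopened"          # the re-test showed it is still exploitable


# Closed loop: the only way out of FIX_DEPLOYED is a re-test result.
TRANSITIONS = {
    Status.OPEN: {Status.ASSIGNED},
    Status.ASSIGNED: {Status.FIX_DEPLOYED},
    Status.FIX_DEPLOYED: {Status.VERIFIED, Status.REOPENED},
    Status.REOPENED: {Status.ASSIGNED},
    Status.VERIFIED: set(),
}

# Hypothetical exposure multipliers; tune to your own environment.
EXPOSURE_WEIGHT = {"internet": 1.0, "internal": 0.6, "air_gapped": 0.2}

# Hypothetical per-source field names; real exports differ by tool.
FIELD_MAP = {
    "scanner": {"title": "plugin_name", "cvss": "cvss_base"},
    "pentest": {"title": "finding", "cvss": "score"},
    "ai": {"title": "summary", "cvss": "severity"},
}


@dataclass
class Finding:
    title: str
    source: str
    cvss: float              # CVSS base score, 0.0 to 10.0
    asset_criticality: int   # assumed scale: 1 (low) to 5 (crown jewel)
    exposure: str            # key into EXPOSURE_WEIGHT
    owner: Optional[str] = None
    status: Status = Status.OPEN
    history: list = field(default_factory=list)

    def risk_score(self) -> float:
        """CVSS scaled by asset criticality and exposure (illustrative formula)."""
        weight = (self.asset_criticality / 5) * EXPOSURE_WEIGHT[self.exposure]
        return round(self.cvss * weight, 2)

    def move_to(self, new: Status) -> None:
        """Apply a status change, rejecting skipped steps and ownerless work."""
        if new not in TRANSITIONS[self.status]:
            raise ValueError(f"{self.status.value} -> {new.value} is not allowed")
        if new is Status.ASSIGNED and not self.owner:
            raise ValueError("set an owner before assigning")
        self.history.append((self.status, new))
        self.status = new


def normalize(raw: dict, source: str, asset_criticality: int, exposure: str) -> Finding:
    """Map one source-specific record onto the shared Finding schema."""
    keys = FIELD_MAP[source]
    return Finding(
        title=raw[keys["title"]],
        source=source,
        cvss=float(raw[keys["cvss"]]),
        asset_criticality=asset_criticality,
        exposure=exposure,
    )


findings = [
    normalize({"plugin_name": "Outdated TLS", "cvss_base": "9.1"}, "scanner", 2, "air_gapped"),
    normalize({"finding": "IDOR in /api/orders", "score": 7.5}, "pentest", 5, "internet"),
]
ranked = sorted(findings, key=Finding.risk_score, reverse=True)
for f in ranked:
    print(f.risk_score(), f.source, f.title)

top = ranked[0]
top.owner = "orders-team"
top.move_to(Status.ASSIGNED)
top.move_to(Status.FIX_DEPLOYED)
top.move_to(Status.VERIFIED)  # only once a re-test has confirmed the fix
```

In this example, the 7.5-CVSS IDOR on an internet-facing, business-critical API outranks the 9.1-CVSS TLS finding on an air-gapped system (7.5 versus 0.73). That reordering is the point of contextual prioritization. The transition table also makes "closed" a verified outcome rather than an engineering assertion. A finding cannot jump from `ASSIGNED` to `VERIFIED`, and a failed re-test sends it back through `REOPENED`.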

The Access Problem: A Workflow Challenge for SMEs

The discussion around "Project Glasswing" and its initially limited access points to a deeper structural issue. Experts such as Bruce Schneier have raised it, and former national cyber directors have echoed it. Fortune 500 enterprises may have the resources and sophisticated security teams needed to absorb and remediate AI-generated findings. Small and medium-sized enterprises (SMEs), regional infrastructure operators, and operators of specialized industrial systems face a significant disadvantage. These organizations are often more exposed because of limited budgets, smaller security teams, and a lack of specialized expertise.

This disparity is not solely an "access problem" that policy can solve by democratizing the tools; it is fundamentally a "workflow problem." Even if AI-powered discovery tools were universally available, many smaller organizations would lack the operational foundation needed to turn AI-generated findings into completed remediations. That foundation consists of centralized management, contextualized prioritization, and closed-loop tracking. For these organizations, tooling that substantially reduces the overhead of vulnerability management is arguably even more critical than for large enterprises that can "throw headcount at the problem." Such tooling delivers faster reporting, clearer communication of findings, and lower-friction remediation handoffs. The gap between discovery and remediation widens as an organization’s resources shrink.

The Urgent Call to Action for Cybersecurity Leaders

The "Mythos moment" serves as a powerful and timely forcing function for the entire cybersecurity industry. It’s not a harbinger of imminent compromise for every system, but rather a stark illumination of a widening chasm that has been growing quietly for years: while security teams have made significant strides in their ability to find problems, the organizational machinery for fixing them has lagged considerably.

The appropriate response to this development is neither panic nor passive waiting for broader access to advanced AI tools. Instead, it is a proactive internal audit of an organization’s existing remediation pipeline. Cybersecurity leaders and IT professionals must ask uncomfortable, yet essential, questions:

- What is the average time it takes for a critical finding to move from initial discovery to verified fix within our organization?

- How many open high-severity findings are currently in an ambiguous state, merely "being worked on" without clear progress or accountability?

- Do we possess the capability to actually re-test systems after remediation, or do we simply trust that an engineering ticket being marked "closed" equates to a resolved vulnerability?

- Are our vulnerability findings centralized, normalized, and easily queryable, or are they scattered across various documents and platforms?

- Can we effectively prioritize vulnerabilities based on real business risk and asset criticality, or do we rely solely on generic severity scores?
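Several of these questions can be answered with a few lines of code against whatever findings export an organization already has. The sketch below assumes a hypothetical record layout (`found`, `verified`, `last_update`, and `ticket_closed` fields) and uses made-up sample data. It computes three things:

- the average days from discovery to verified fix for critical findings
- high-severity findings with no recorded progress in the last 14 days
- tickets marked closed that were never re-tested

```python
from datetime import date
from statistics import mean

# Hypothetical findings export with made-up sample data. "verified" is the
# date a re-test confirmed the fix (None if never re-tested).
records = [
    {"id": "F-1", "severity": "critical", "found": date(2025, 1, 6),
     "verified": date(2025, 2, 3), "last_update": date(2025, 2, 3), "ticket_closed": True},
    {"id": "F-2", "severity": "critical", "found": date(2025, 1, 20),
     "verified": date(2025, 3, 3), "last_update": date(2025, 3, 3), "ticket_closed": True},
    {"id": "F-3", "severity": "high", "found": date(2025, 2, 10),
     "verified": None, "last_update": date(2025, 2, 14), "ticket_closed": False},
    {"id": "F-4", "severity": "high", "found": date(2025, 3, 1),
     "verified": None, "last_update": date(2025, 3, 20), "ticket_closed": True},
]


def mean_days_to_verified_fix(rows, severity="critical"):
    """Average days from discovery to a confirmed fix, or None if none exist."""
    days = [(r["verified"] - r["found"]).days for r in rows
            if r["severity"] == severity and r["verified"] is not None]
    return mean(days) if days else None


def stale_findings(rows, today, max_idle_days=14, severities=("critical", "high")):
    """Unverified high-severity findings with no recorded progress recently."""
    return [r["id"] for r in rows
            if r["verified"] is None and r["severity"] in severities
            and (today - r["last_update"]).days > max_idle_days]


def closed_but_unverified(rows):
    """Tickets marked closed that no re-test has ever confirmed."""
    return [r["id"] for r in rows if r["ticket_closed"] and r["verified"] is None]


today = date(2025, 3, 24)
print("Mean days to verified fix (critical):", mean_days_to_verified_fix(records))
print("Stale high-severity findings:", stale_findings(records, today))
print("Closed without re-test:", closed_but_unverified(records))
```

For the sample data, critical findings take 35 days on average to reach a verified fix. F-3 has sat idle for over a month. F-4 is closed in the ticketing system without any re-test. The 14-day idle threshold is an arbitrary assumption; pick one that matches your own remediation targets.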

The answers to these questions do not require access to Anthropic’s Claude Mythos. They demand an honest assessment of current processes, technologies, and organizational structures. For most teams, the results of such an audit will likely be more uncomfortable, and ultimately more useful, than anything in Anthropic’s technical documentation. As AI increasingly augments cybersecurity, the outcome will hinge not only on sophisticated discovery but on robust, efficient, and accountable remediation. To ignore this fundamental operational problem is to invite a growing torrent of identified but unaddressed risk.