Amazon Web Services (AWS) has announced the immediate availability of Anthropic’s Claude Opus 4.7, the latest and most advanced iteration of its flagship large language model (LLM), within the Amazon Bedrock managed service. This integration marks a significant advancement in enterprise artificial intelligence, offering businesses unprecedented performance across critical domains such as sophisticated coding, the orchestration of long-running autonomous agents, and complex professional workflows. The strategic inclusion of Opus 4.7 on Bedrock is poised to accelerate the adoption of generative AI solutions by providing enterprise-grade infrastructure coupled with cutting-edge model intelligence, promising to transform how organizations approach data processing, decision-making, and application development.

Key Capabilities and Performance Enhancements of Claude Opus 4.7

Anthropic positions Claude Opus 4.7 as its most intelligent model to date, engineered to deliver substantial improvements across a spectrum of production workflows essential for modern enterprises. The model is specifically designed to excel in tasks demanding advanced reasoning, meticulous problem-solving, and precise instruction following, even when confronted with ambiguity. This enhanced capability is crucial for scenarios where previous models might have struggled with nuanced context or multi-step reasoning.

One of the standout features highlighted is its prowess in agentic coding. This goes beyond simple code generation; Opus 4.7 is equipped to understand complex software requirements, plan multi-faceted coding tasks, generate robust code, and even suggest improvements or debug issues, behaving more like an autonomous coding assistant or a junior developer. For organizations grappling with accelerated development cycles and talent shortages, this capability can significantly boost developer productivity, reduce time-to-market for new applications, and streamline maintenance of existing systems. From generating complex algorithms to crafting entire microservices architectures in various programming languages, Opus 4.7 aims to act as a highly capable co-pilot for software engineers.

Beyond coding, Opus 4.7 demonstrates superior performance in knowledge work. This encompasses a wide array of activities, including synthesizing large amounts of information from diverse sources, performing intricate data analysis, generating comprehensive reports, and extracting critical insights from unstructured text. For industries like finance, legal, and research, where processing and understanding large volumes of information are paramount, Opus 4.7 can serve as an invaluable tool for accelerating research, due diligence, and strategic planning. Because it handles ambiguity better, it can process real-world data, which often contains inconsistencies or incomplete information, with greater accuracy and reliability.

The model also boasts enhanced visual understanding capabilities, meaning it can interpret and reason about information presented in images or diagrams. While the immediate announcement focuses on text-based interactions, this underlying capability suggests future potential for multimodal applications that can analyze charts, graphs, technical drawings, and other visual data alongside textual prompts, offering a more holistic understanding of complex inputs. This feature is particularly relevant for sectors like manufacturing (interpreting CAD designs), healthcare (analyzing medical images), and retail (understanding product layouts).
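On Bedrock, pairing an image with a text question is a matter of putting both blocks into a single user turn. The sketch below assumes the Converse API message shape (an `image` block with `format` and `source.bytes`, followed by a `text` block); the function name and the sample question are illustrative only, not part of any official SDK.

```python
# Image formats accepted in Converse image blocks (assumption: per the Bedrock Converse API reference).
SUPPORTED_IMAGE_FORMATS = {"png", "jpeg", "gif", "webp"}


def build_image_message(image_bytes: bytes, image_format: str, question: str) -> dict:
    """Hypothetical helper: return one user turn that pairs an image with a text prompt."""
    if image_format not in SUPPORTED_IMAGE_FORMATS:
        raise ValueError(f"unsupported image format: {image_format!r}")
    return {
        "role": "user",
        "content": [
            # Raw bytes go here; the AWS SDK base64-encodes them on the wire.
            {"image": {"format": image_format, "source": {"bytes": image_bytes}}},
            {"text": question},
        ],
    }
```

With boto3, the result would be passed as `messages=[build_image_message(chart_bytes, "png", "Which quarter shows the largest drop?")]` to `bedrock-runtime`'s `converse` call, together with the Opus 4.7 model ID shown in the Bedrock console.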

Furthermore, Opus 4.7 is optimized for long-running tasks. Many real-world business processes involve sequences of operations that unfold over extended periods, requiring persistent context and coherent decision-making. Whether it’s managing complex customer service interactions, orchestrating supply chain logistics, or running multi-stage data processing pipelines, the model’s ability to maintain context and execute instructions over prolonged interactions represents a significant leap forward. This reduces the need for frequent re-prompts or manual intervention, leading to more autonomous and efficient workflows.
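Because Bedrock's model APIs are stateless, "maintaining context" in practice means the application keeps the running message history and re-sends it on each call. The following is a minimal sketch under that assumption: the `Conversation` class and its `max_turns` cap are hypothetical, and the message dictionaries follow the Converse API shape.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    """Hypothetical helper that holds the history re-sent on every Converse call."""

    max_turns: int = 50  # assumption: cap history so prompts stay within the context window
    messages: list = field(default_factory=list)

    def _append(self, role: str, text: str) -> None:
        # Claude-style message lists alternate user/assistant turns, so
        # consecutive turns from the same role are merged into one message.
        if self.messages and self.messages[-1]["role"] == role:
            self.messages[-1]["content"].append({"text": text})
        else:
            self.messages.append({"role": role, "content": [{"text": text}]})
        # Drop the oldest turns once the cap is exceeded...
        while len(self.messages) > self.max_turns:
            self.messages.pop(0)
        # ...and make sure the history still starts with a user turn.
        while self.messages and self.messages[0]["role"] != "user":
            self.messages.pop(0)

    def add_user(self, text: str) -> None:
        self._append("user", text)

    def add_assistant(self, text: str) -> None:
        self._append("assistant", text)
```

Simple truncation is only one choice; a long-running agent might instead summarize older turns before dropping them, trading extra model calls for better recall.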

Anthropic emphasizes that Opus 4.7 is "more thorough in its problem solving" and "follows instructions more precisely." These attributes are foundational for building reliable AI applications, especially in regulated industries or mission-critical systems where errors can have severe consequences. The model’s refined ability to parse and adhere to intricate instructions, combined with its deeper analytical capabilities, mitigates the risk of misinterpretations and ensures outputs are aligned with user intent, even for highly complex, multi-faceted requests.

Underlying Infrastructure: Amazon Bedrock’s Next-Generation Inference Engine

Claude Opus 4.7 on Bedrock runs on Amazon Bedrock’s next-generation inference engine. This engine is not merely a conduit for the model; it is a critical component that elevates the model’s utility to enterprise standards, delivering robust infrastructure specifically designed for production-grade generative AI workloads. The enhancements to Bedrock’s inference capabilities are as significant as the model improvements themselves.

At its core, the new inference engine features brand-new scheduling and scaling logic. This sophisticated system dynamically allocates computational capacity to incoming requests, ensuring optimal resource utilization and, crucially, improving availability. For businesses, this translates into a more reliable service, particularly for steady-state workloads that require consistent performance. Concurrently, the engine is designed to accommodate rapidly scaling services, allowing enterprises to seamlessly handle sudden spikes in demand without experiencing performance degradation or service interruptions. This elasticity is vital for applications with variable usage patterns, such as e-commerce platforms during peak sales or real-time analytics dashboards.

A cornerstone of Bedrock’s appeal, and a defining feature of this update, is its commitment to zero operator access. This means that customer prompts and the model’s responses are strictly confidential and are never visible to Anthropic or AWS operators. In an era where data privacy and security are paramount, particularly for sensitive enterprise information, this approach provides a strong level of data isolation. Organizations handling proprietary business strategies, confidential financial data, protected health information (PHI), or personally identifiable information (PII) can deploy generative AI solutions with greater confidence, knowing their sensitive data remains private and secure. This feature can significantly lower compliance hurdles and accelerate the adoption of AI in highly regulated sectors.

Amazon Bedrock itself is a fully managed service that offers a choice of high-performing foundation models (FMs) from leading AI companies, along with a broad set of capabilities to build, scale, and secure generative AI applications. Launched with the vision of making generative AI accessible and practical for enterprises, Bedrock simplifies the deployment and management of FMs by providing a single API endpoint, eliminating the need for complex infrastructure provisioning or specialized expertise. Its comprehensive toolset includes features for fine-tuning models with proprietary data, creating agents that execute multi-step tasks, and establishing guardrails to ensure responsible AI usage. The continuous evolution of Bedrock, exemplified by this Opus 4.7 integration, underscores AWS’s commitment to staying at the forefront of the generative AI landscape.
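The "single API" point is easiest to see in the request itself: with the Converse API, switching models means changing only `modelId`, and a guardrail is attached through `guardrailConfig`. The sketch below assumes boto3's `bedrock-runtime` `converse` parameters; the model ID and guardrail ID in the test are placeholders, not real identifiers.

```python
from typing import Optional


def converse_request(
    model_id: str,
    prompt: str,
    system: Optional[str] = None,
    guardrail_id: Optional[str] = None,
    guardrail_version: str = "DRAFT",
    max_tokens: int = 1024,
) -> dict:
    """Assemble keyword arguments for bedrock-runtime's `converse` call (a sketch, not an SDK helper)."""
    request = {
        "modelId": model_id,  # the only field that changes between foundation models
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }
    if system:
        request["system"] = [{"text": system}]
    if guardrail_id:
        # Attach a Bedrock guardrail that screens both input and output.
        request["guardrailConfig"] = {
            "guardrailIdentifier": guardrail_id,
            "guardrailVersion": guardrail_version,
        }
    return request
```

A call would then look like `boto3.client("bedrock-runtime").converse(**converse_request(model_id, "Summarize this contract"))`, where `model_id` is copied from the Bedrock console.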

Strategic Partnership: AWS and Anthropic

The collaboration between AWS and Anthropic has been a pivotal force in the generative AI ecosystem. Anthropic, founded by former OpenAI researchers, has distinguished itself with a strong commitment to AI safety and responsible development, pioneering concepts like "Constitutional AI" to guide model behavior towards helpfulness, harmlessness, and honesty. This alignment with responsible AI principles makes Anthropic a natural partner for AWS, which similarly emphasizes secure and ethical AI deployment for its vast enterprise customer base.

The partnership saw Anthropic’s Claude models becoming available on Amazon Bedrock early in its lifecycle, providing enterprises with access to powerful, safety-oriented LLMs. This latest integration of Opus 4.7 is a testament to the deepening collaboration and a shared vision of empowering businesses with state-of-the-art AI. The rapid pace of model development and deployment within Bedrock highlights the dynamic nature of the generative AI market and the imperative for cloud providers to offer the latest innovations to their customers swiftly. This continuous cycle of innovation ensures that enterprises leveraging Bedrock can access the cutting edge of AI capabilities without the overhead of direct model integration and infrastructure management.

Impact on Enterprise and Developer Workflows

The introduction of Claude Opus 4.7 on Amazon Bedrock is expected to have a transformative impact across various enterprise and developer workflows:

- Accelerated Software Development: Developers can leverage Opus 4.7 for generating complex code, refactoring existing codebases, performing automated testing, and even translating code between languages. This could drastically cut development cycles and free up human developers to focus on higher-level architectural design and innovative problem-solving.

- Enhanced Business Intelligence: The model’s superior knowledge work capabilities can empower business analysts to derive deeper insights from market data, customer feedback, and internal reports. Automated summarization, trend identification, and predictive analytics will become more sophisticated and accessible.

- Intelligent Automation and Agents: With improved handling of long-running tasks and precise instruction following, businesses can deploy more capable AI agents for tasks like automated customer support, personalized marketing campaigns, and complex operational management. These agents can manage multi-turn conversations, process transactions, and interact with various internal systems autonomously.

- Content Creation and Curation: Marketing, media, and publishing sectors can benefit from Opus 4.7’s ability to generate high-quality, nuanced content, perform extensive research for articles, and adapt content for different audiences and platforms.

- Data Security and Compliance: For industries like healthcare, finance, and government, the "zero operator access" feature is a game-changer. It provides the necessary security assurances to adopt advanced AI models for processing sensitive data, enabling innovation without compromising regulatory compliance or patient/customer privacy.

- Research and Development: Scientific and engineering organizations can use Opus 4.7 for accelerating literature reviews, generating hypotheses, simulating experiments, and analyzing complex datasets, thereby shortening research cycles and fostering breakthrough discoveries.
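Agents that "interact with various internal systems" typically do so through tool use: the application declares tools, the model replies with a `toolUse` request, and the application runs the tool and returns a `toolResult`. The sketch below assumes the Converse API's `toolConfig` and `toolUse`/`toolResult` block shapes; the `lookup_order` tool and its handler are hypothetical stand-ins for an internal system.

```python
from typing import Callable, Dict, Optional


def tool_spec(name: str, description: str, properties: dict, required: list) -> dict:
    """Describe one tool in the Converse `toolConfig` format (JSON Schema input)."""
    return {
        "toolSpec": {
            "name": name,
            "description": description,
            "inputSchema": {
                "json": {"type": "object", "properties": properties, "required": required}
            },
        }
    }


# Hypothetical internal tool an order-support agent might call.
TOOL_CONFIG = {
    "tools": [
        tool_spec(
            "lookup_order",
            "Fetch the status of a customer order by its ID.",
            {"order_id": {"type": "string", "description": "Order identifier"}},
            ["order_id"],
        )
    ]
}


def handle_tool_use(response: dict, handlers: Dict[str, Callable[..., dict]]) -> Optional[dict]:
    """Run every toolUse block in a Converse response and build the user turn carrying the results."""
    results = []
    for block in response["output"]["message"]["content"]:
        if "toolUse" in block:
            use = block["toolUse"]
            output = handlers[use["name"]](**use["input"])
            results.append(
                {"toolResult": {"toolUseId": use["toolUseId"], "content": [{"json": output}]}}
            )
    # None means the model answered directly and no tool round-trip is needed.
    return {"role": "user", "content": results} if results else None
```

An agent loop would append the model's message and this result to the history, then call `converse` again with `toolConfig=TOOL_CONFIG` until the response's `stopReason` is no longer `tool_use`.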

Deployment and Accessibility

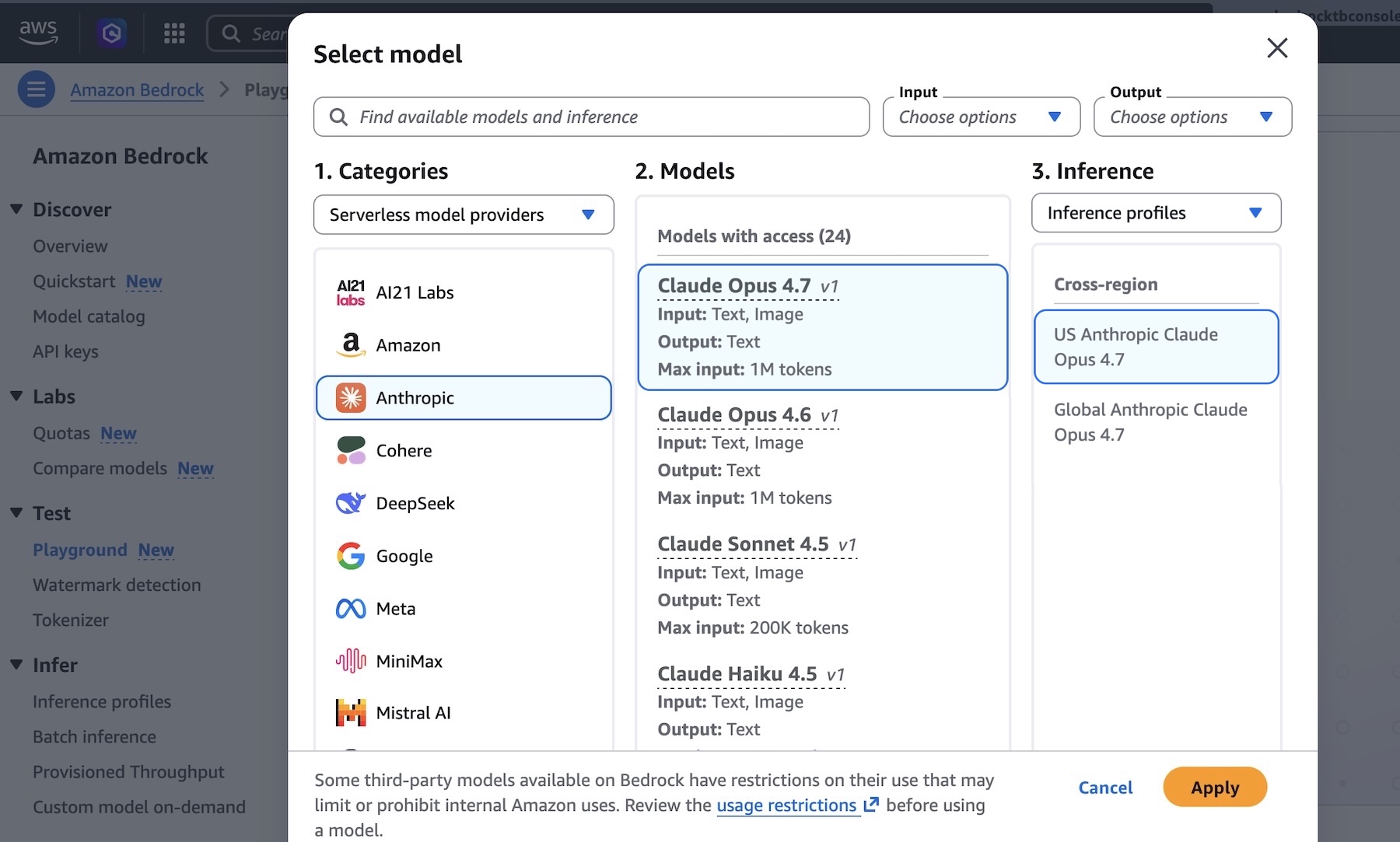

Anthropic’s Claude Opus 4.7 model is now generally available in key AWS regions, including US East (N. Virginia), Asia Pacific (Tokyo), Europe (Ireland), and Europe (Stockholm). This multi-region availability ensures that global enterprises can deploy and leverage the model with low latency and in compliance with regional data residency requirements. AWS maintains a comprehensive list of supported regions, with future updates anticipated to expand accessibility further.
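For data-residency planning, the four launch Regions map to these Region codes. The helper below is a hypothetical sketch that picks the first acceptable Region from a preference list. It hard-codes only the Regions named in this announcement, so AWS's supported-Regions list remains the source of truth as availability expands.

```python
# Regions named in the launch announcement (Region code -> console name).
LAUNCH_REGIONS = {
    "us-east-1": "US East (N. Virginia)",
    "ap-northeast-1": "Asia Pacific (Tokyo)",
    "eu-west-1": "Europe (Ireland)",
    "eu-north-1": "Europe (Stockholm)",
}


def pick_region(preferred: list) -> str:
    """Return the first preferred Region that appears in the launch list."""
    for region in preferred:
        if region in LAUNCH_REGIONS:
            return region
    raise LookupError(f"none of {preferred} is in the launch Region list")
```

An EU-resident workload might call `pick_region(["eu-central-1", "eu-north-1", "eu-west-1"])` and pass the result as `region_name` when creating the `bedrock-runtime` client.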

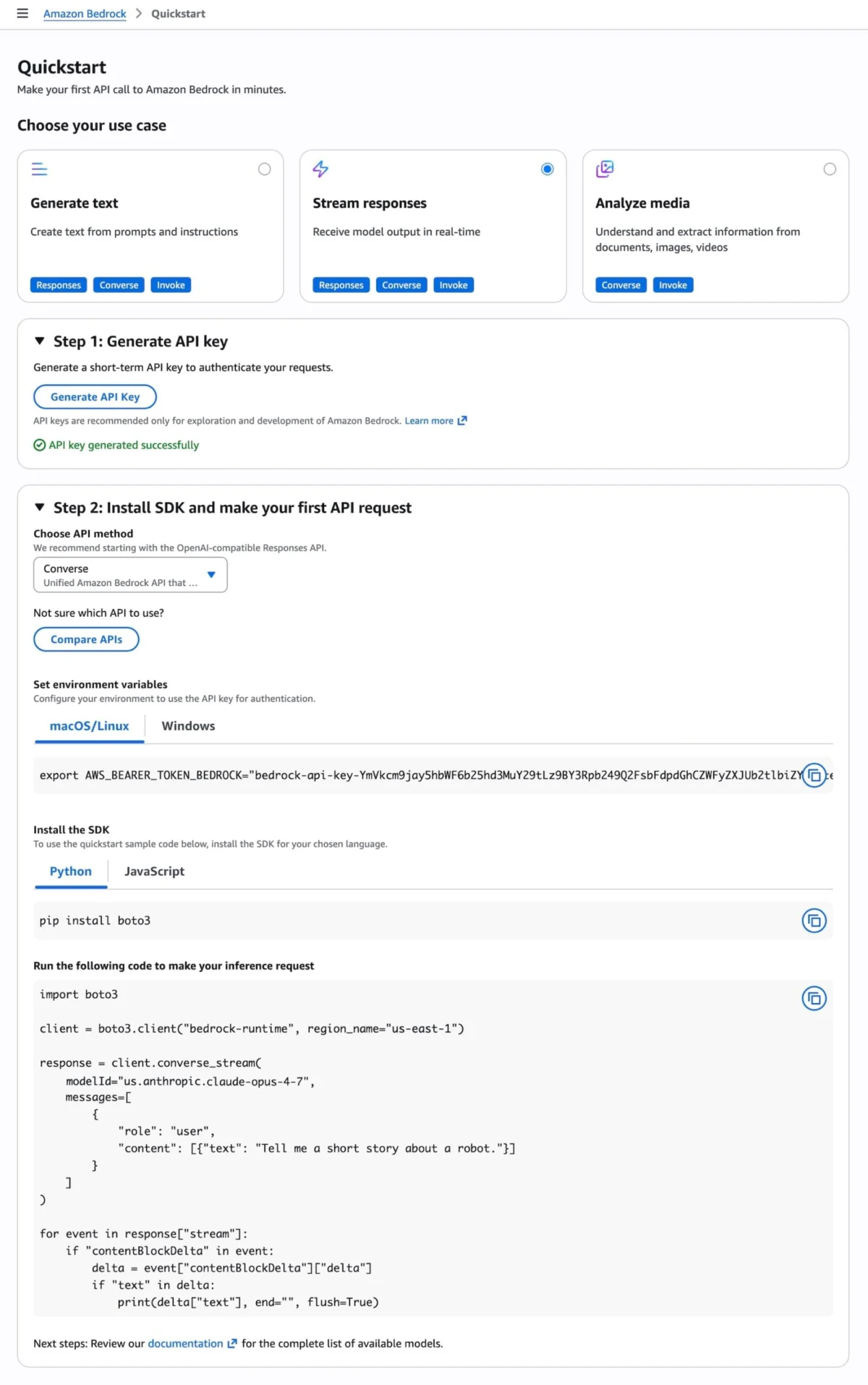

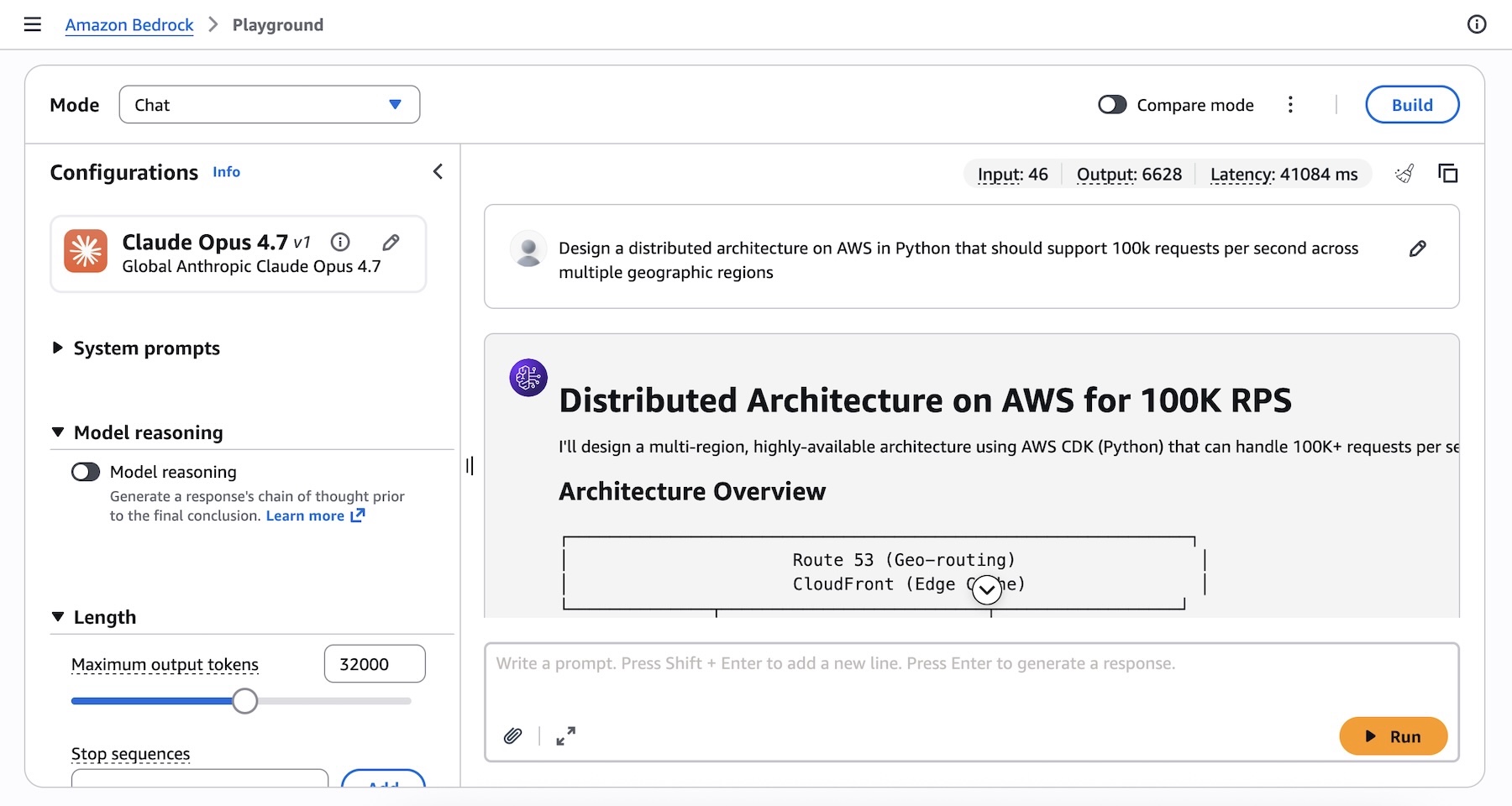

Developers and enterprises can begin experimenting with Claude Opus 4.7 immediately through the Amazon Bedrock console. The intuitive Playground interface allows users to test complex prompts and observe the model’s advanced reasoning capabilities in real-time. For programmatic access, AWS offers multiple avenues:

- Anthropic Messages API: This dedicated API, accessible via the `anthropic[bedrock]` SDK package, provides a streamlined experience for interacting with Claude Opus 4.7. Developers can initialize the `AnthropicBedrockMantle` client and construct messages using a simple Python interface, enabling quick integration into existing applications.

- AWS CLI and SDKs: For those preferring standard AWS tools, the `bedrock-runtime invoke-model` command in the AWS Command Line Interface (CLI), or the equivalent call in the AWS SDKs, allows direct invocation of the model. This method offers flexibility and integrates seamlessly into AWS-centric development pipelines.

- Invoke and Converse APIs: Bedrock’s general `Invoke` and `Converse` APIs also support Claude Opus 4.7, providing standardized interfaces for interaction across the various foundation models available on the platform.
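When calling the model through `invoke-model`, the request body uses Anthropic's Messages format with Bedrock's `anthropic_version` field, and the response body returns a list of content blocks. The following sketch builds that body and extracts the text reply. It assumes the documented `bedrock-2023-05-31` version string; the helper names are illustrative, and the Opus 4.7 model ID should be copied from the Bedrock console rather than guessed.

```python
import json
from typing import Optional


def invoke_model_body(prompt: str, max_tokens: int = 1024, system: Optional[str] = None) -> str:
    """Serialize an Anthropic Messages-format request body for Bedrock InvokeModel."""
    body = {
        "anthropic_version": "bedrock-2023-05-31",  # version string Bedrock expects for Claude
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": [{"type": "text", "text": prompt}]}],
    }
    if system:
        body["system"] = system
    return json.dumps(body)


def extract_text(response_body: bytes) -> str:
    """Concatenate the text blocks from an InvokeModel response body."""
    payload = json.loads(response_body)
    return "".join(b["text"] for b in payload["content"] if b.get("type") == "text")
```

With boto3 this would be used as `resp = client.invoke_model(modelId=model_id, body=invoke_model_body("Explain this stack trace"))`, followed by `extract_text(resp["body"].read())`. The same JSON body can be passed to `aws bedrock-runtime invoke-model` on the command line.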

AWS also provides a "Quickstart" option in the Bedrock console, generating temporary API keys and sample code snippets in various programming languages. This facilitates rapid prototyping and enables developers to make their first API calls within minutes, significantly lowering the barrier to entry for exploring the model’s capabilities. Furthermore, developers can explore "Adaptive thinking" with Claude Opus 4.7, a feature that allows the model to dynamically allocate thinking token budgets based on prompt complexity, leading to more efficient and targeted responses.

Broader Market Implications and Future Outlook

The release of Claude Opus 4.7 on Amazon Bedrock solidifies AWS’s position as a leading enabler of enterprise AI and underscores the intensifying competition among cloud providers to offer the most sophisticated and secure generative AI services. This move directly addresses the growing demand from businesses for models that can handle increasingly complex, high-stakes tasks with greater accuracy and autonomy.

The emphasis on "enterprise-grade infrastructure" and "zero operator access" sets a new benchmark for trust and security in the generative AI space. As AI adoption moves from experimental projects to core business operations, these features will become non-negotiable requirements for many organizations, particularly those in highly regulated sectors or those handling sensitive intellectual property.

This development also highlights the rapid evolution of LLMs themselves. The continuous improvement in areas like ambiguity handling, instruction following, and agentic capabilities signifies a broader trend towards more robust, reliable, and "intelligent" AI systems. These advancements are paving the way for a new generation of AI-powered applications that can autonomously perform tasks previously thought to be exclusive to human cognitive abilities.

Looking ahead, the synergy between advanced models like Claude Opus 4.7 and scalable, secure cloud platforms like Amazon Bedrock will drive unprecedented innovation across industries. Businesses will be able to build more sophisticated AI assistants, automate complex decision-making processes, and unlock new forms of creativity and efficiency. The ongoing feedback loop from developers and enterprises testing these models will further refine their capabilities, ensuring that the generative AI revolution continues to deliver tangible, impactful results for the global economy.

AWS encourages users to try Anthropic’s Claude Opus 4.7 in the Amazon Bedrock console today and provide feedback via AWS re:Post for Amazon Bedrock or through their standard AWS Support channels, fostering a collaborative development environment that will shape the future of enterprise AI.