The rapid proliferation of generative artificial intelligence has fundamentally shifted the requirements for consumer-grade silicon, leading to the rise of the dedicated Neural Processing Unit (NPU). As hardware manufacturers race to meet the performance thresholds required for local AI execution—such as Microsoft’s 40 TOPS (Tera Operations Per Second) requirement for Copilot+ PCs—the internal architecture of these processors has come under intense scrutiny. At the heart of this technical debate is the tension between heterogeneous and unified NPU designs. While heterogeneous architectures utilize specialized engines to maximize the efficiency of specific mathematical operations, they introduce a structural "data movement tax" that can impact latency, power consumption, and overall system throughput.

During the Intel Tech Tour 2024, a detailed technical presentation regarding the Intel Gen 4 NPU architecture provided a rare window into the practical execution of modern AI workloads. By examining the execution flow of a transformer-based model, Intel’s engineers highlighted a recurring challenge in modern chip design: the necessity of moving intermediate data across architectural boundaries. In a heterogeneous system, where different types of compute engines handle different stages of an AI graph, the shared memory hierarchy becomes a critical, and sometimes congested, thoroughfare.

The Evolution of the Neural Processing Unit

To understand the implications of Intel’s Gen 4 design, one must first look at the trajectory of AI acceleration in the PC ecosystem. Intel’s NPU journey began with the acquisition of Movidius, leading to the integration of dedicated AI hardware in the "Meteor Lake" (Core Ultra Series 1) processors. The Gen 3 NPU found in those chips established a baseline for low-power AI inference, but the subsequent "Lunar Lake" (Core Ultra Series 2) architecture, featuring the Gen 4 NPU, represents a significant leap in both scale and complexity.

The Gen 4 NPU is built to handle the heavy computational demands of large language models (LLMs) and diffusion models. However, the architectural philosophy remains heterogeneous. The design is partitioned into distinct compute domains, each optimized for a specific class of mathematical operations. This specialization is intended to provide the highest possible energy efficiency for the most common tasks, such as dense matrix multiplication. Yet as models become more complex, the transitions between these specialized domains become more frequent, revealing the inherent trade-offs of the design.

Anatomy of the Gen 4 NPU: A Dual-Engine Strategy

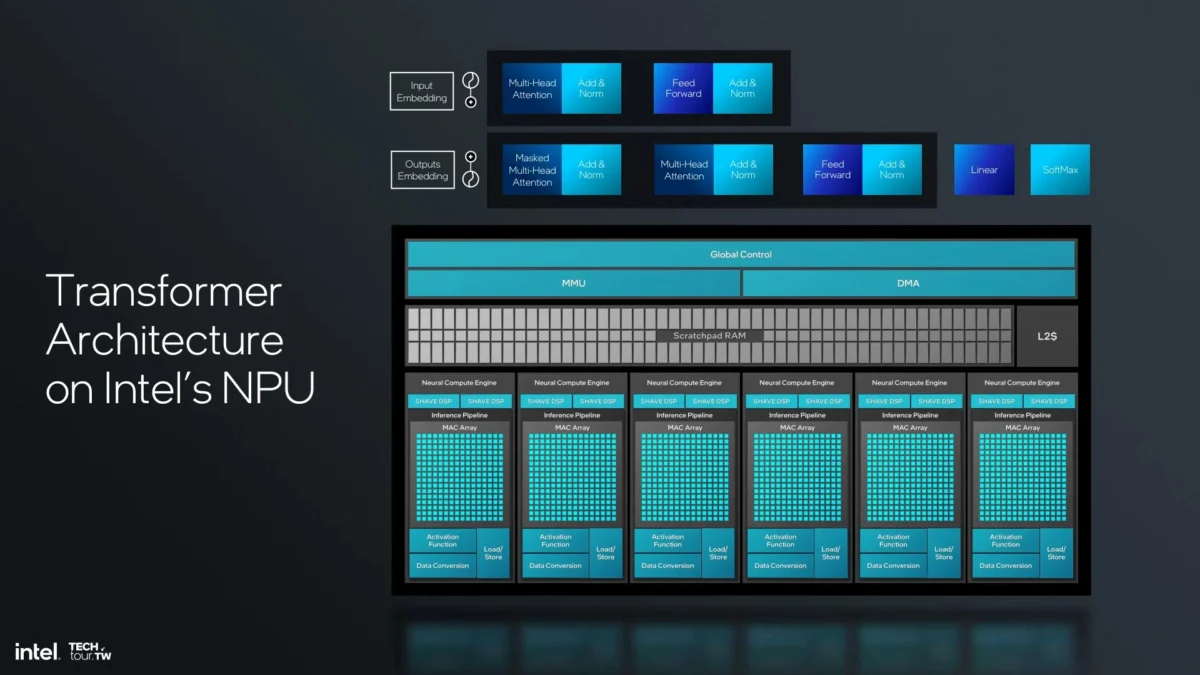

The Intel Gen 4 NPU is organized into six Neural Compute Engines (NCEs). According to technical documentation released at the 2024 Tech Tour, each NCE is a self-contained unit that houses two primary types of compute hardware: MAC (Multiply-Accumulate) Arrays and SHAVE DSPs (Digital Signal Processors).

The MAC Arrays are the "heavy lifters" of the system. They are fixed-function logic units designed specifically for dense linear algebra. Because the vast majority of a transformer model’s workload consists of matrix multiplications, these arrays are tuned for maximum throughput and minimal power per operation. However, their fixed-function nature means they are inflexible; they cannot easily process the non-linear functions or complex vector math that often follow a matrix operation.
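Dense matrix multiplication breaks down into multiply-accumulate steps, so a MAC array's headline rating follows from simple arithmetic. The sketch below assumes the common industry convention of counting each MAC as two operations (one multiply, one add). Under that convention, peak TOPS is MAC units × 2 × clock frequency. The MAC count and clock in the example are hypothetical round numbers, not Intel's published specifications.

```python
def mac(acc: int, a: int, b: int) -> int:
    """One multiply-accumulate step: the primitive a MAC array replicates in hardware."""
    return acc + a * b


def dot(a: list[int], b: list[int]) -> int:
    """A dot product is a chain of MACs; a dense MatMul runs many such chains in parallel."""
    acc = 0
    for x, y in zip(a, b):
        acc = mac(acc, x, y)
    return acc


def peak_tops(mac_units: int, clock_ghz: float, ops_per_mac: int = 2) -> float:
    """Peak throughput in TOPS, counting each MAC as two operations (assumed convention)."""
    ops_per_second = mac_units * ops_per_mac * clock_ghz * 1e9
    return ops_per_second / 1e12


# Hypothetical figures, for illustration only.
print(dot([1, 2, 3], [4, 5, 6]))          # 32
print(round(peak_tops(10_000, 2.0), 1))   # 40.0
```

This is a peak figure. Sustained throughput falls below it whenever the arrays sit waiting for data, which is the crux of the data movement discussion later in this article.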

To provide this necessary flexibility, Intel pairs the MAC Arrays with SHAVE (Streaming Hybrid Architecture Vector Engine) DSPs. These are programmable vector processors capable of handling a wide variety of activation functions, normalization layers, and other specialized operators. The two domains are connected by a shared infrastructure consisting of a high-bandwidth scratchpad RAM, a dedicated Memory Management Unit (MMU), and a Direct Memory Access (DMA) controller. This infrastructure ensures that, although the compute engines are specialized, they can still function as part of a single execution pipeline.

The Chronology of a Transformer Graph Execution

The challenges of this heterogeneous approach are best illustrated by tracing the execution of Multi-Head Attention (MHA). MHA is the core component of the Transformer architecture that underpins LLMs such as GPT-4 and Llama 3. (Llama 3 uses grouped-query attention, a variant of MHA in which several query heads share each key and value head.) MHA is not a single mathematical event but a chain of operations that must be executed in a specific order.

In the Intel Gen 4 NPU, this sequence unfolds as follows:

- Linear Projections and Initial Similarity: The process begins with the MAC Arrays performing linear projections to generate the Query (Q), Key (K), and Value (V) matrices. A matrix multiplication (MatMul) of Q with the transpose of K then produces similarity scores between every pair of tokens. These operations are well suited to the MAC Arrays, which hold the resulting intermediate activations in their local registers and buffers.

- The First Migration: Once the similarity scores are computed, the next logical step in the transformer graph is the SoftMax normalization. Because SoftMax involves exponential functions and divisions—tasks the fixed-function MAC Arrays cannot perform—the data must be moved. The DMA controller fetches the intermediate activations from the MAC subsystem and writes them into the shared scratchpad RAM or cache, where the SHAVE DSPs can access them.

- Vector Processing: The SHAVE DSPs take over and perform the SoftMax operation, normalizing the scores so that each token's attention weights (each row of the score matrix) sum to one. The DSPs are efficient at vector math, but this stage still interrupts the high-throughput matrix pipeline: unless the scheduler can give them independent work, the MAC Arrays sit idle while the SoftMax runs.

- The Second Migration: After the DSP completes the SoftMax operation, the data must return to the MAC Arrays for the final stage of the attention mechanism. Again, the DMA moves the data across the internal fabric, transferring the normalized weights back into the MAC domain.

- Final Weighting and Projection: The MAC Arrays perform a second MatMul, applying the attention weights to the Value (V) vectors. The outputs of all heads are then concatenated and passed through a final linear projection to produce the output of the attention block.
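The steps above can be traced in a short, pure-Python sketch of a single attention head. Each step is tagged with the engine the article assigns it to. Two choices here are assumptions made for simplicity: the 1/sqrt(d_k) score scaling is folded into the DSP-side SoftMax, and all function names are hypothetical. Counting the engine switches in the trace recovers the two migrations described above.

```python
import math

Matrix = list[list[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Naive dense matrix multiply: the class of work assigned to the MAC Arrays."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(m: Matrix) -> Matrix:
    return [list(row) for row in zip(*m)]


def scaled_softmax_rows(m: Matrix, scale: float) -> Matrix:
    """Row-wise SoftMax with scaling: exponentials and division, i.e. DSP-side work."""
    out = []
    for row in m:
        peak = max(v * scale for v in row)  # subtract the row max for numerical stability
        exps = [math.exp(v * scale - peak) for v in row]
        total = sum(exps)
        out.append([e / total for e in exps])
    return out


def attention_head(x: Matrix, wq: Matrix, wk: Matrix, wv: Matrix, trace: list) -> Matrix:
    """One attention head, logging which (assumed) engine executes each step."""
    q = matmul(x, wq)
    trace.append(("MAC", "Q projection"))
    k = matmul(x, wk)
    trace.append(("MAC", "K projection"))
    v = matmul(x, wv)
    trace.append(("MAC", "V projection"))
    scores = matmul(q, transpose(k))
    trace.append(("MAC", "Q x K^T"))
    d_k = len(wq[0])
    weights = scaled_softmax_rows(scores, 1 / math.sqrt(d_k))
    trace.append(("DSP", "SoftMax"))  # first migration happens before this step
    out = matmul(weights, v)
    trace.append(("MAC", "weights x V"))  # second migration happens before this step
    return out


def count_migrations(trace: list) -> int:
    """Count engine switches between consecutive steps; each implies a DMA transfer."""
    return sum(1 for prev, cur in zip(trace, trace[1:]) if prev[0] != cur[0])


x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # 3 tokens, model width 2
eye = [[1.0, 0.0], [0.0, 1.0]]            # identity weights keep the example small
trace = []
attention_head(x, eye, eye, eye, trace)
print([engine for engine, _ in trace])  # ['MAC', 'MAC', 'MAC', 'MAC', 'DSP', 'MAC']
print(count_migrations(trace))          # 2
```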

Unless the compiler batches several heads into a single transfer, this "ping-pong" effect (moving data from MAC to DSP and back to MAC) recurs for every attention head in every layer of the model. In a modern LLM with dozens of layers and dozens of heads per layer, these migrations can number in the thousands for every generated token, and far more per second.
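The scale of these migrations is easy to sanity-check. The sketch below assumes a worst case in which every head in every layer makes its own round trip (two migrations), with one forward pass per generated token. The 32-layer, 32-head model shape and the 20 tokens/s decode rate are hypothetical values chosen for illustration.

```python
def migrations_per_forward_pass(layers: int, heads: int, per_head: int = 2) -> int:
    """MAC->DSP plus DSP->MAC transfers if every head in every layer migrates separately."""
    return layers * heads * per_head


def migrations_per_second(layers: int, heads: int, tokens_per_second: float) -> float:
    """Decode-time rate, assuming one forward pass per generated token."""
    return migrations_per_forward_pass(layers, heads) * tokens_per_second


# Hypothetical model shape and decode speed.
print(migrations_per_forward_pass(32, 32))  # 2048
print(migrations_per_second(32, 32, 20))    # 40960
```

If the compiler batches all heads of a layer into one transfer, both figures shrink by a factor equal to the head count. That is one reason scheduling matters as much as the hardware.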

Quantifying the Data Movement Tax

These migrations carry physical costs, not merely logical ones. In semiconductor design, moving a bit of data often consumes more energy than processing it. By requiring intermediate activations to traverse the shared memory hierarchy, heterogeneous NPUs incur several hidden costs.

First is the impact on memory bandwidth. Every time data is written to or read from the scratchpad RAM to facilitate a transfer between the MAC and DSP, it consumes bandwidth that could otherwise be used to load model weights or new input data. For high-speed inference, where memory bandwidth is often the primary bottleneck, this internal "chatter" can limit the maximum achievable tokens-per-second.
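The volume of this internal chatter depends on the shape of the intermediate tensor. The sketch below estimates scratchpad traffic for one attention layer under three stated assumptions:

- activations are FP16 (2 bytes per element);
- each head makes two crossings, with scores going out to the DSP and weights coming back to the MAC Arrays;
- each crossing writes the full score tile once and reads it once.

The head count and sequence lengths are hypothetical.

```python
def attention_traffic_bytes(query_len: int, key_len: int, heads: int,
                            bytes_per_elem: int = 2) -> int:
    """Scratchpad bytes moved per attention layer by the MAC->DSP->MAC round trip.

    Assumes two crossings per head and one write plus one read of the
    full query_len x key_len score tile per crossing.
    """
    tile_bytes = query_len * key_len * bytes_per_elem
    crossings_per_head = 2
    accesses_per_crossing = 2  # one write into the scratchpad, one read out of it
    return heads * crossings_per_head * accesses_per_crossing * tile_bytes


MiB = 1024 ** 2
# Hypothetical: 32 heads, FP16 activations, 2048-token context.
prefill = attention_traffic_bytes(query_len=2048, key_len=2048, heads=32)
decode = attention_traffic_bytes(query_len=1, key_len=2048, heads=32)
print(prefill / MiB)  # 1024.0 (MiB per layer while processing the prompt)
print(decode / 1024)  # 512.0 (KiB per layer per generated token)
```

A real compiler would tile the prefill case, since a gigabyte of traffic far exceeds any on-chip scratchpad. The figure therefore counts bytes moved, not bytes resident at any one time. The gap between the two phases shows how strongly this traffic depends on whether the NPU is processing a prompt or generating tokens.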

Second is the latency penalty. Each transfer requires coordination. The DMA must be programmed, the MMU must resolve addresses, and the receiving engine must wait for the data to arrive before it can begin computation. While Intel’s Gen 4 architecture uses sophisticated pipelining to hide much of this latency—overlapping the computation of one block with the data movement of another—the overhead remains a factor in the system’s total execution time.
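Both the benefit and the limit of pipelining can be captured with a textbook two-stage pipeline model. With double buffering, block i+1 is transferred while block i is computed. The sketch below assumes fixed per-block transfer and compute times in arbitrary units. It is a simplified model, not a description of Intel's actual scheduler.

```python
def serial_time(blocks: int, compute: float, transfer: float) -> float:
    """No overlap: each block waits for its transfer, then computes."""
    return blocks * (compute + transfer)


def pipelined_time(blocks: int, compute: float, transfer: float) -> float:
    """Two-stage pipeline: the next block's transfer overlaps the current block's compute.

    The first transfer and the last compute cannot be hidden; in the steady
    state the pipeline runs at the pace of the slower stage.
    """
    if blocks == 0:
        return 0.0
    return transfer + compute + (blocks - 1) * max(compute, transfer)


print(serial_time(8, compute=3.0, transfer=1.0))     # 32.0
print(pipelined_time(8, compute=3.0, transfer=1.0))  # 25.0
```

In this example, eight blocks take 32 units serially and 25 units pipelined, against 24 units of pure compute. The leftover unit is the first transfer, which can never be hidden. If transfers ever become slower than compute, the max() term flips and the DMA path, not the MAC Arrays, sets the pace.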

Third is the silicon area. A significant portion of the NPU’s die area is dedicated not to computation, but to the "plumbing" required to move data. DMA engines, complex bus interconnects, and large scratchpad buffers are essential for a heterogeneous design to function, but they do not directly contribute to the TFLOPS or TOPS metrics often touted in marketing materials.

Comparative Analysis: Unified vs. Heterogeneous

The alternative to the heterogeneous approach is a unified, programmable compute fabric. In a unified architecture, a single type of highly flexible processor is designed to handle both matrix multiplications and vector operations within the same execution unit.

The primary advantage of a unified fabric is that it avoids the data movement described in the Intel Tech Tour presentation. Because the same hardware that performs the MatMul can also perform the SoftMax, intermediate activations can, in principle, stay in the local register file or nearby buffers instead of crossing to another engine. This "compute-in-place" philosophy minimizes the strain on the memory subsystem and reduces the need for complex DMA coordination, although very large intermediate tensors may still have to be tiled and spilled.
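One way software approximates this "compute-in-place" behavior is the online-softmax technique used in fused attention kernels such as FlashAttention. SoftMax normalization and the weighted sum over V are computed in a single pass. Only a running maximum, a running sum and an output accumulator are kept, never a full row of probabilities. The sketch below applies the technique to one query row. It illustrates the software idea only and is not a model of any particular NPU.

```python
import math


def two_pass_attention_row(scores: list[float], values: list[list[float]]) -> list[float]:
    """Reference: materialize the full SoftMax row, then weight the value vectors."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    dim = len(values[0])
    return [sum(e / total * v[j] for e, v in zip(exps, values)) for j in range(dim)]


def streaming_attention_row(scores: list[float], values: list[list[float]]) -> list[float]:
    """Online SoftMax: one pass with a running max, running sum and accumulator."""
    running_max = -math.inf
    running_sum = 0.0
    acc = [0.0] * len(values[0])
    for s, v in zip(scores, values):
        new_max = max(running_max, s)
        rescale = math.exp(running_max - new_max)  # shrink earlier terms to the new max
        weight = math.exp(s - new_max)
        running_sum = running_sum * rescale + weight
        acc = [a * rescale + weight * x for a, x in zip(acc, v)]
        running_max = new_max
    return [a / running_sum for a in acc]


scores = [0.5, 2.0, -1.0]
values = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
print(streaming_attention_row(scores, values))
print(two_pass_attention_row(scores, values))  # matches up to floating-point rounding
```

The two functions agree up to rounding. The streaming version never materializes the normalized weights, and that property is what lets a fused kernel keep its intermediates in local storage.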

However, the unified approach has trade-offs of its own. General-purpose units are rarely as area-efficient or power-efficient at dense matrix math as a dedicated MAC array, so designers of unified NPUs must work significantly harder to achieve the same peak TOPS-per-watt as a specialized heterogeneous design. The industry remains divided on which path is superior. Intel, Qualcomm and, as far as public disclosures indicate, Apple largely lean toward heterogeneous designs with specialized blocks. Meanwhile, some emerging AI chip startups and high-end data center architectures are exploring more unified, programmable fabrics.

Industry Implications and Market Context

Intel’s transparency regarding the Gen 4 NPU’s data flow comes at a time when the "AI PC" market is becoming hyper-competitive. Qualcomm’s Snapdragon X Elite, featuring the Hexagon NPU, and AMD’s Ryzen AI 300 series, featuring the XDNA 2 architecture, are both vying for dominance in the same Windows ecosystem.

Each of these competitors handles data movement differently. AMD’s XDNA architecture, for instance, uses a spatial data flow grid (based on Xilinx IP) that allows data to flow directly from one compute tile to another without necessarily returning to a central memory pool. Qualcomm, meanwhile, emphasizes a tightly integrated DSP-centric approach.
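The difference between staging through a shared buffer and spatial, tile-to-tile dataflow can be illustrated with Python generators. The sketch below uses two hypothetical "tiles": a matrix stage and a SoftMax stage. In the staged version the tiles run one after the other, with the full intermediate parked in a list. In the streamed version they are chained, so each row flows straight from producer to consumer. This is a conceptual analogy, not a model of AMD's AI Engine programming interface; the event logs show the resulting execution order.

```python
import math


def mac_tile(rows, w, log):
    """Hypothetical matrix tile: multiplies one input row by w at a time."""
    cols = list(zip(*w))
    for i, row in enumerate(rows):
        log.append(f"MAC row {i}")
        yield [sum(x * y for x, y in zip(row, col)) for col in cols]


def vector_tile(rows, log):
    """Hypothetical vector tile: applies SoftMax to each row as it arrives."""
    for i, row in enumerate(rows):
        peak = max(row)
        exps = [math.exp(v - peak) for v in row]
        total = sum(exps)
        log.append(f"VEC row {i}")
        yield [e / total for e in exps]


def staged(x, w, log):
    """Shared-buffer style: the whole intermediate is materialized before SoftMax runs."""
    buffer = list(mac_tile(x, w, log))
    return list(vector_tile(buffer, log))


def streamed(x, w, log):
    """Dataflow style: stages are chained, so each row moves directly to the consumer."""
    return list(vector_tile(mac_tile(x, w, log), log))


x = [[1.0, 2.0], [3.0, 4.0]]
w = [[1.0, 0.0], [0.0, 1.0]]
staged_log, streamed_log = [], []
print(staged(x, w, staged_log) == streamed(x, w, streamed_log))  # True
print(staged_log)    # ['MAC row 0', 'MAC row 1', 'VEC row 0', 'VEC row 1']
print(streamed_log)  # ['MAC row 0', 'VEC row 0', 'MAC row 1', 'VEC row 1']
```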

For software developers, these architectural nuances are increasingly important. Optimizing a model for an Intel NPU requires a compiler that can intelligently schedule operations to minimize MAC-to-DSP transfers. Intel’s OpenVINO toolkit is designed to handle this complexity, but the efficiency of the resulting code is ultimately capped by the physical limitations of the hardware’s data paths.
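To make the scheduling problem concrete, the sketch below implements a hypothetical greedy heuristic; it is not OpenVINO's actual algorithm. Among the operations whose dependencies are satisfied, it prefers one that runs on the engine currently in use. It assumes the operation graph is acyclic. Applied to two independent attention heads, it groups both MatMuls ahead of both SoftMax operations, which halves the number of MAC/DSP switches.

```python
def schedule(ops: dict[str, str], deps: dict[str, list[str]]) -> list[str]:
    """Greedy topological order that prefers staying on the current engine.

    ops maps an op name to its engine ("MAC" or "DSP"); deps maps an op name
    to the ops it depends on. The graph is assumed to be acyclic.
    """
    done: set[str] = set()
    order: list[str] = []
    current = None
    while len(order) < len(ops):
        ready = [op for op in ops
                 if op not in done and all(d in done for d in deps.get(op, []))]
        if not ready:
            raise ValueError("dependency cycle in operation graph")
        same_engine = [op for op in ready if ops[op] == current]
        pick = (same_engine or ready)[0]  # avoid a migration whenever possible
        order.append(pick)
        done.add(pick)
        current = ops[pick]
    return order


def migrations(order: list[str], ops: dict[str, str]) -> int:
    """Number of engine switches between consecutive ops in an execution order."""
    return sum(1 for a, b in zip(order, order[1:]) if ops[a] != ops[b])


# Two independent heads: QK^T MatMul -> SoftMax -> weights x V MatMul.
ops = {"qk1": "MAC", "sm1": "DSP", "av1": "MAC",
       "qk2": "MAC", "sm2": "DSP", "av2": "MAC"}
deps = {"sm1": ["qk1"], "av1": ["sm1"], "sm2": ["qk2"], "av2": ["sm2"]}
naive = ["qk1", "sm1", "av1", "qk2", "sm2", "av2"]
print(migrations(naive, ops))   # 4
greedy = schedule(ops, deps)
print(greedy)                   # ['qk1', 'qk2', 'sm1', 'sm2', 'av1', 'av2']
print(migrations(greedy, ops))  # 2
```

The trade-off is that grouping keeps more intermediate tensors alive at once, which raises scratchpad pressure. A production compiler has to balance fewer migrations against that memory cost.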

Conclusion: The Future of NPU Scaling

As AI models continue to evolve, the "operator landscape" is shifting. Transformers are the current standard, but newer architectures such as Mamba (a selective state space model, or SSM) and increasingly complex mixture-of-experts (MoE) designs introduce new types of mathematical operators. A heterogeneous NPU finely tuned for today's SoftMax and MatMul could find itself burdened by even more frequent data migrations as these operators become popular.
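A drastically simplified, scalar version of the recurrence at the heart of state space models shows why such operators sit awkwardly on a MAC array: h_t = a_t·h_{t-1} + b_t·x_t and y_t = c_t·h_t. Each step is elementwise and depends on the previous one, so it does not reduce to a dense matrix multiplication. This toy sketch omits what makes Mamba "selective", namely input-dependent, discretized parameters over vector-valued states. It also omits Mamba's parallel scan implementation.

```python
def linear_scan(a: list[float], b: list[float], c: list[float], x: list[float]) -> list[float]:
    """Toy scalar state space recurrence (not Mamba's actual kernel)."""
    h = 0.0
    ys = []
    for a_t, b_t, c_t, x_t in zip(a, b, c, x):
        h = a_t * h + b_t * x_t  # sequential dependency on the previous state
        ys.append(c_t * h)       # elementwise readout, no matrix multiply involved
    return ys


print(linear_scan([0.5, 0.5, 0.5], [1.0, 1.0, 1.0], [1.0, 1.0, 1.0], [1.0, 0.0, 0.0]))
# [1.0, 0.5, 0.25]
```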

Intel’s 2024 Tech Tour presentation serves as a reminder that in the era of AI, performance is no longer just about how many trillions of operations a chip can perform per second. It increasingly depends on the efficiency of the "dark matter" of computing: the movement of data between those operations. For SoC designers, the challenge of the next decade will be to bridge the gap between specialized efficiency and unified flexibility, ensuring that the data movement tax does not become an insurmountable barrier to the next generation of on-device intelligence.

The Gen 4 NPU represents a sophisticated solution to a monumental task, but its reliance on distinct compute domains highlights a fundamental architectural hurdle. As the industry moves toward Core Ultra Series 3 and beyond, the focus will likely shift from adding more raw compute units to refining the internal fabric, seeking ways to keep data closer to the logic and further reducing the energy spent on the simple act of moving bits from one side of the chip to the other.