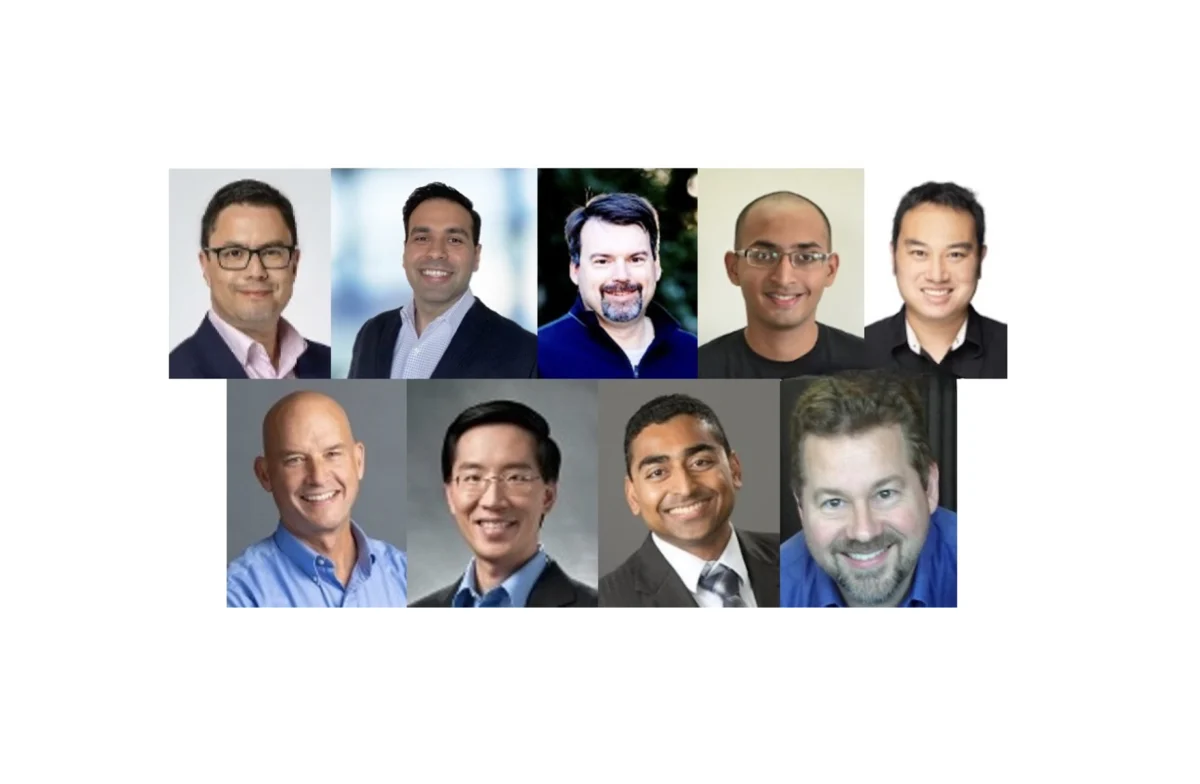

The global semiconductor industry is currently navigating a fundamental transition from passive Generative AI models to autonomous "agentic" systems, a shift that is forcing a total reconstruction of edge computing architectures. While early AI deployment focused on inference and simple classification, the next generation of silicon must support agents capable of reasoning, planning, and executing complex tasks with minimal human intervention. This transformation was the focal point of a recent high-level industry summit featuring leading architects from Arm, Cadence, Expedera, Mixel, Quadric, Rambus, Siemens EDA, and Synopsys. The consensus among these experts is that the "speed" of a processor is no longer the primary metric for success; rather, the efficiency of data movement and the flexibility of the memory hierarchy have become the new benchmarks for the edge.

Defining the Agentic Era: From Passive Response to Autonomous Action

The distinction between Generative AI and Agentic AI is a matter of autonomy and tool integration. As Sharad Chole, chief scientist and co-founder at Expedera, noted, Generative AI operates on a simple prompt-and-response loop. In contrast, Agentic AI is characterized by its ability to orchestrate high-level tasks through a series of "tool calls." These agents do not merely generate text or images; they interact with system APIs, access private memory, and iterate on their own plans based on real-time feedback.

For instance, a coding agent on an edge device can autonomously compile code, run tests, and debug errors without waiting for a human to prompt the next step. Similarly, a personal admin agent might access a user’s calendar and email to schedule appointments or navigate multiple mobile applications to complete a travel booking. This shift from "thinking" to "doing" introduces significant complexity into the processing pipeline. Because agentic workflows are multi-turn and iterative, they require a persistent state and access to a sophisticated memory architecture, moving the burden from the central processing unit (CPU) to specialized Neural Processing Units (NPUs).
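The multi-turn loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not any vendor's agent runtime: the tool names (`check_calendar`, `book_slot`), the `AgentState` class, and the rule-based `plan_next_step` planner are all hypothetical stand-ins. In a real system the planner would be a model call and the tools would be system APIs.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical tools: plain functions standing in for system APIs.
def check_calendar(day: str) -> str:
    availability = {"monday": "busy", "tuesday": "free"}
    return availability.get(day, "unknown")

def book_slot(day: str) -> str:
    return f"booked {day}"

TOOLS: Dict[str, Callable[[str], str]] = {
    "check_calendar": check_calendar,
    "book_slot": book_slot,
}

@dataclass
class AgentState:
    """Persistent state carried across turns (the agent's working memory)."""
    goal: str
    history: List[Tuple[str, str, str]] = field(default_factory=list)

def plan_next_step(state: AgentState) -> Optional[Tuple[str, str]]:
    """Toy planner (assumption: replaces a model call).

    Chooses the next tool call from the feedback recorded so far,
    or returns None when there is nothing left to do.
    """
    if any(tool == "book_slot" for tool, _, _ in state.history):
        return None  # goal reached
    checked = {arg: result for tool, arg, result in state.history
               if tool == "check_calendar"}
    for day in ("monday", "tuesday"):
        if day not in checked:
            return ("check_calendar", day)
        if checked[day] == "free":
            return ("book_slot", day)
    return None  # no free day found

def run_agent(goal: str, max_turns: int = 8) -> AgentState:
    """Plan, call a tool, record the result, and repeat without human prompts."""
    state = AgentState(goal=goal)
    for _ in range(max_turns):
        step = plan_next_step(state)
        if step is None:
            break
        tool, arg = step
        result = TOOLS[tool](arg)
        state.history.append((tool, arg, result))
    return state

if __name__ == "__main__":
    for entry in run_agent("schedule a meeting").history:
        print(entry)
```

The point of the sketch is the shape of the loop: each turn reads the accumulated history, so the state has to persist between tool calls rather than being discarded after a single prompt and response.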

The Architect’s Dilemma: Designing for an Uncertain Future

One of the most significant challenges facing chip designers today is the unprecedented rate of model evolution. In the traditional semiconductor cycle, a chip might take two to three years from conception to production, yet AI models change on a weekly basis. Architects are now forced to build "future-proof" silicon that can handle floating-point representations and model structures that may not even exist yet.

Dr. Steven Woo, a fellow and distinguished inventor at Rambus, emphasized that performance and power efficiency are now dominated by memory system design rather than raw compute cycles. "Architects need to be ruthless about what earns silicon area," Woo stated, noting that every extra feature adds a tax on Power, Performance, and Area (PPA). To survive the rapid evolution of models, designers are prioritizing "data movement first" architectures. This approach recognizes that the energy cost of moving data from memory to the processor often exceeds the energy cost of the computation itself by a factor of 100 or more.
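A back-of-the-envelope model shows why that ratio pushes architects toward data reuse. The sketch below takes the roughly 100x figure quoted above as its only grounded input. The function name, the operation counts, and the idea of measuring energy in units of one compute operation are illustrative assumptions, not measurements of any chip.

```python
def energy_breakdown(num_ops: int, num_fetches: int, fetch_cost_ratio: float = 100.0):
    """Return (compute, movement, movement_share) in units of one compute op.

    fetch_cost_ratio is the assumed energy of one memory fetch relative to
    one arithmetic operation (100x, following the figure in the text).
    """
    compute = float(num_ops)
    movement = num_fetches * fetch_cost_ratio
    return compute, movement, movement / (compute + movement)

# Case 1 (assumed): no reuse, every operation fetches one operand from memory.
_, _, share_no_reuse = energy_breakdown(num_ops=1_000_000, num_fetches=1_000_000)

# Case 2 (assumed): each fetched operand is reused for 100 operations.
_, _, share_with_reuse = energy_breakdown(num_ops=1_000_000, num_fetches=10_000)

if __name__ == "__main__":
    print(f"movement share, no reuse:   {share_no_reuse:.1%}")
    print(f"movement share, 100x reuse: {share_with_reuse:.1%}")
```

Under these assumptions, data movement accounts for about 99% of the energy when nothing is reused and falls to 50% once each operand is reused 100 times, which is the arithmetic behind treating on-chip buffering and reuse as first-class design goals.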

Supporting this need for flexibility, Jason Lawley, director of product marketing for AI IP at Cadence, highlighted the rise of multimodal AI. Modern agents must process audio for noise suppression, vision for object recognition, and language for intent—all simultaneously. This requires NPUs to be highly adaptable, supporting a variety of data types including INT8, FP8, and newer representations like ANT.
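As a rough illustration of what a reduced-precision type such as INT8 involves, the sketch below applies symmetric per-tensor quantization to a short list of values. This is one common scheme chosen for illustration; it is not a description of how any particular NPU handles INT8, and the function names are hypothetical.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale

def dequantize_int8(quantized, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]

if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.0, 0.9]  # illustrative values
    q, scale = quantize_int8(weights)
    restored = dequantize_int8(q, scale)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(q, scale, worst)
```

Each value is stored in one byte instead of two or four, at the cost of a rounding error bounded by half the scale. Supporting several such formats in hardware is what lets a single NPU trade accuracy against bandwidth per workload.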

Chronology of Edge AI: From Sensing to World Models

The trajectory of edge intelligence can be traced through four distinct phases:

- Phase 1: Simple Sensing (2010–2018): Early edge AI was limited to basic pattern recognition, such as "wake word" detection in smart speakers or simple motion sensing in security cameras.

- Phase 2: Generative Inference (2019–2023): The rise of Large Language Models (LLMs) and Small Language Models (SLMs) allowed edge devices to generate human-like text and images, though these systems remained largely reactive.

- Phase 3: Agentic Autonomy (2024–Present): Current systems are moving toward autonomy, where the AI uses tools, maintains long-term memory, and performs multi-step reasoning.

- Phase 4: World Models (2025 and beyond): The industry is now looking toward "world models," where autonomous platforms (like robots and drones) run massive simulations to predict physical outcomes in real time, requiring PetaOPS-level performance at the edge.

This rapid progression is unlike anything seen in the history of the semiconductor industry. While the x86 "processor wars" of the 1980s and 90s were transformative, they were largely confined to the PC market. Today’s AI revolution is "broad-based," affecting everything from industrial microwaves to autonomous vehicles and wearable medical devices.

Technical Bottlenecks: Memory Bandwidth and KV Caching

As agents become more powerful, they require longer context windows to remember previous interactions. Longer contexts enlarge the key-value (KV) cache, which stores the attention keys and values for every token already processed, and moving that cache becomes a bandwidth bottleneck. In agentic workloads, loading and storing these large caches can consume massive amounts of memory bandwidth, draining the battery of mobile and edge devices.
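The size of the cache follows from simple arithmetic: one key tensor and one value tensor per layer, each holding one vector per attention head per token. The sketch below computes that footprint. The model dimensions (32 layers, 8 KV heads, head dimension 128) are illustrative assumptions and do not describe any specific product or model.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_element: int = 2,
                   batch_size: int = 1) -> int:
    """Bytes needed to hold the KV cache for seq_len tokens.

    The leading factor of 2 accounts for storing both keys and values.
    bytes_per_element is the storage precision (2 for FP16, 1 for an 8-bit type).
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * bytes_per_element * batch_size)

if __name__ == "__main__":
    # Hypothetical model configuration, for illustration only.
    config = dict(num_layers=32, num_kv_heads=8, head_dim=128)
    for context in (2048, 8192, 32768):
        fp16 = kv_cache_bytes(seq_len=context, **config)
        eight_bit = kv_cache_bytes(seq_len=context, bytes_per_element=1, **config)
        print(f"{context:>6} tokens: {fp16 / 2**20:7.0f} MiB at 16-bit, "
              f"{eight_bit / 2**20:7.0f} MiB at 8-bit")
```

With these assumed dimensions, an 8,192-token context needs 1 GiB of cache at 16-bit precision. During autoregressive decoding the cached keys and values are read again for each generated token, so the footprint translates directly into sustained memory traffic.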

To combat this, Sharad Chole pointed out that server-class capabilities are being miniaturized for the edge. Techniques such as Mixture of Experts (MoE), which activates only a fraction of the neural network for any given input, are becoming essential for edge deployment because they allow high-performance inference without the power draw of a massive, monolithic model. Furthermore, prefix caching and KV cache quantization are being used to cut bandwidth demand by a factor of two to three, allowing agents to run in the background without crippling the device’s performance.
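The way a Mixture of Experts model activates only part of the network can be sketched with top-k routing, a common gating scheme. This is a toy illustration under stated assumptions: the experts are scalar functions rather than neural sub-networks, the router logits are supplied by hand rather than learned, and the function names are hypothetical.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_logits, k=2):
    """Pick the k highest-scoring experts; renormalize their weights to sum to 1."""
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, router_logits, k=2):
    """Evaluate only the selected experts and mix their outputs."""
    return sum(weight * experts[i](x)
               for i, weight in route_top_k(router_logits, k))

if __name__ == "__main__":
    # Four toy experts; only two of them run for this input.
    experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: x * x, lambda x: -x]
    logits = [0.1, 2.0, 2.0, -1.0]  # hand-picked router scores
    print(route_top_k(logits))
    print(moe_forward(3.0, experts, logits))
```

With four experts and `k=2`, half of the expert computation is skipped for each input, although every expert's weights still have to be stored on the device. That is why the technique reduces compute and power more readily than it reduces memory capacity.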

Real-World Impact: The Rise of Physical AI and Smart Infrastructure

The implications of agentic AI extend far beyond smartphones. In the automotive sector, Steve Roddy, chief marketing officer at Quadric, envisions "vehicle health agents" that do more than just flash an engine light. An agentic car could monitor a driver’s schedule, notice that the tires are balding, check the weather forecast for an upcoming ski trip, and autonomously book a service appointment at a highly-rated dealership near the driver’s destination.

In the industrial sector, Sathishkumar Balasubramanian of Siemens EDA highlighted the partnership between Siemens and Meta to equip factory floors with Ray-Ban Meta glasses. These glasses allow technicians to walk through a facility while an AI agent overlays real-time dashboards on machinery, identifying maintenance needs and providing step-by-step repair instructions via augmented reality. This "perceptive AI" reduces the need for 600-page manuals and significantly lowers operational costs.

Other emerging applications include:

- Personal Health Assistants: Wearables that don’t just sense a high heart rate but proactively contact a physician or adjust medication reminders based on the user’s activity levels.

- Humanoid Robotics: While still in the early stages, companies like Nvidia are pushing for "physical AI" where robots can perform household chores like folding clothes by utilizing vision-language-action (VLA) models.

- Smart Appliances: Voice-activated microwaves and ovens that eliminate the need for physical touch panels, reducing manufacturing costs and increasing mechanical reliability.

Broader Implications and Industry Risks

Despite the optimism, the transition to edge-based agentic AI is fraught with challenges. The primary concern remains power consumption. As Sathishkumar Balasubramanian noted, "As you’re doing a lot more, your power efficiency is much more important." For a device like a smart ring or glasses, the thermal envelope is extremely tight; any inefficiency in the chip architecture results in a device that is too hot to wear or has a battery life measured in minutes rather than days.

Furthermore, there is the issue of data privacy versus performance. While running agents on the edge is superior for privacy—keeping personal media, calendars, and health data off the cloud—the processing requirements may still favor data center inference for the most complex tasks. Ronan Naughton of Arm warned that while AI provides significant productivity and lifestyle boosters, the industry must take "measured steps" to mitigate the risks associated with autonomous agents making decisions on behalf of humans.

The consensus among the experts is clear: the semiconductor industry is in the midst of a fundamental shift. The era of the general-purpose processor is giving way to a new age of heterogeneous compute, where the "agent" is the entire system working in concert. As world models and autonomous platforms continue to push the boundaries of vision and token processing, the chip architects of today are not just building hardware—they are designing the cognitive infrastructure of the future. The pace of change is exponential, and as the industry moves toward PetaOPS-level performance at the edge, the only certainty is that the architectures of last year are already becoming relics of a previous era.