For years now, smartphone photography has outgrown its original utilitarian role, becoming a cornerstone of mobile device identity and a fiercely contested battleground among manufacturers. Gone are the days when phone cameras were mere novelties, capable only of grainy, low-resolution images for basic documentation or casual sharing. Today, the photographic capabilities of leading smartphones frequently rival, and in some situations surpass, those of entry-level dedicated cameras, fundamentally changing how we create and engage with visual media. This transformation has been driven by relentless innovation in hardware and, increasingly, by sophisticated software algorithms.

Amid this rapid progress, however, technical specifications have been widely oversimplified, often leading consumers astray. Manufacturers' marketing has conditioned users to judge a mobile camera by easily digestible metrics such as the number of megapixels or the number of lenses. This emphasis, while understandable as a sales tactic, obscures a critically important component: the image sensor. This fundamental element, the true gatekeeper of light and detail, rarely features in public discussion, despite its major influence on final image quality. Understanding the sensor's role is key to judging a smartphone camera's real potential, beyond the superficial allure of high megapixel counts.

Debunking the Megapixel Myth: The Real Unit of Photographic Quality

A common consumer reflex is to equate a camera's quality with the number of megapixels in the files it produces. This ingrained habit relegates the sensor, the very foundation on which those pixels are built, to a secondary position. Yet sensors are far from uniform: they come in a variety of physical sizes, and those dimensions directly affect how much light and detail they can capture, and therefore the quality of the resulting photographs. As this analysis will show, not all pixels are created equal, nor are they all the same size.

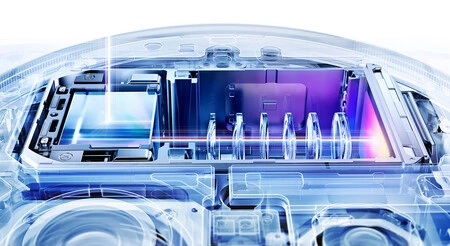

From the user's perspective, a smartphone camera consists mainly of visible lenses and a flash. Concealed within the device's chassis, however, lies a complex array of components, among which the image sensor plays an indispensable role. The photographic journey begins as light passes through the lens assembly and strikes the sensor, which converts the optical information into electrical signals. These signals are read out and digitized, and the resulting raw data is passed to the smartphone's image signal processor (ISP) for further processing, sometimes after some preliminary processing by circuitry on the sensor itself.
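To make the light-to-signal conversion more concrete, here is a deliberately simplified Python model of a single photosite. It assumes a perfectly linear response and ignores noise; the quantum efficiency, full-well capacity, and 10-bit converter depth are illustrative values chosen for this sketch, not the specifications of any real sensor.

```python
# Toy model of one photosite's signal chain: photons -> electrons -> digital number.
# All default parameter values are illustrative assumptions, not real sensor specs.


def photosite_to_dn(photons: float,
                    quantum_efficiency: float = 0.8,  # assumed fraction of photons freeing an electron
                    full_well_e: float = 6000.0,      # assumed max electrons the photodiode can hold
                    bit_depth: int = 10) -> int:      # assumed ADC resolution
    """Convert an incident photon count into an ADC output value (digital number)."""
    # 1. Photoelectric conversion: only a fraction of photons generate electrons.
    electrons = photons * quantum_efficiency
    # 2. The photodiode saturates once its well is full (highlight clipping).
    electrons = min(electrons, full_well_e)
    # 3. Analog-to-digital conversion: map 0..full_well onto 0..(2**bit_depth - 1).
    max_dn = 2 ** bit_depth - 1
    return round(electrons / full_well_e * max_dn)


for photons in (0, 1000, 5000, 7500, 20000):
    print(photons, "photons ->", photosite_to_dn(photons))
```

The last two inputs both map to the maximum value of 1023: once the photodiode's well is full, additional light is simply lost, which is how highlights clip.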

At its core, the image sensor is a semiconductor chip containing millions of silicon photosites. Each photosite contains a photodiode and corresponds to one pixel in the raw image. A photodiode on its own measures only the intensity of light, not its color, so most smartphone sensors place a color filter array over the photosites, most commonly a Bayer pattern in which each photosite sits under a single red, green, or blue filter, with twice as many green filters as red or blue. The two missing color values at each pixel are then interpolated from neighboring photosites in a step called demosaicing. Because every pixel corresponds to one photosite, simple geometry dictates that if two cameras have the same megapixel count but one has a physically larger sensor, its individual photosites will also be larger. This distinction is critical because the physical size of these light-gathering elements directly affects their performance.
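Most smartphone sensors capture color through a color filter array, most commonly the RGGB Bayer pattern, in which each photosite records only one of red, green, or blue and the missing values are interpolated afterwards (demosaicing). The Python sketch below illustrates that idea under the assumption of an RGGB layout; the helper names are hypothetical, and the green estimate is the simplest bilinear form of demosaicing, far cruder than what a phone's ISP actually does.

```python
from collections import Counter

# RGGB Bayer layout: the filter over a photosite depends only on the parity
# of its row and column. (Assumed layout; other orderings such as BGGR exist.)
BAYER_RGGB = (("R", "G"),
              ("G", "B"))


def filter_color(row: int, col: int) -> str:
    """Color of the filter covering the photosite at (row, col)."""
    return BAYER_RGGB[row % 2][col % 2]


def filter_counts(height: int, width: int) -> Counter:
    """Count how many photosites of a height x width sensor sit under each filter."""
    return Counter(filter_color(r, c) for r in range(height) for c in range(width))


def green_at(raw: list[list[float]], row: int, col: int) -> float:
    """Estimate green at (row, col). At a red or blue site, average the in-bounds
    up/down/left/right neighbors, which all sit under green filters in RGGB."""
    if filter_color(row, col) == "G":
        return raw[row][col]  # green was measured directly here
    neighbors = [
        raw[r][c]
        for r, c in ((row - 1, col), (row + 1, col), (row, col - 1), (row, col + 1))
        if 0 <= r < len(raw) and 0 <= c < len(raw[0])
    ]
    return sum(neighbors) / len(neighbors)


print(filter_counts(4, 6))  # Counter({'G': 12, 'R': 6, 'B': 6})

# Raw readings from a 3x3 patch; the center photosite (1, 1) is under a blue filter.
raw = [[10, 40, 12],
       [44, 20, 48],
       [11, 52, 13]]
print(green_at(raw, 1, 1))  # (40 + 52 + 44 + 48) / 4 = 46.0
```

Half of all photosites sample green, which loosely mirrors the human eye's greater sensitivity to green light; every red and blue site must borrow its green value from neighbors.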

Image sensor technology took a decisive turn with the Complementary Metal-Oxide-Semiconductor (CMOS) active-pixel sensor, developed in the 1990s with important contributions from NASA's Jet Propulsion Laboratory for space applications. It marked a pivotal shift away from the earlier Charge-Coupled Device (CCD) technology. A CCD shifts the accumulated charge across the chip to a single output node, and amplification and analog-to-digital conversion are typically handled by separate chips. A CMOS sensor, by contrast, places an amplifier in each pixel and integrates readout and analog-to-digital conversion on the same chip, using standard semiconductor manufacturing processes. This integration yielded multiple benefits: smaller camera modules, faster readout, lower power consumption, and cheaper manufacturing. These advancements were instrumental in making high-quality digital photography viable on mobile devices, paving the way for the sophisticated camera systems we see today.

The Indisputable Advantage of Larger Sensors: Capturing More Information

The physical dimensions of an image sensor strongly influence its light-gathering capability. The principle is straightforward: a larger photodiode has a greater surface area, so under the same lighting and exposure it collects proportionally more photons (doubling the pixel pitch quadruples the pixel's area). This improves light sensitivity and lets the sensor record a wider range of light levels from the scene. Consequently, while the number of pixels (megapixels) still matters, pixel size is at least as important: on a sensor of a given size, every increase in resolution shrinks each pixel. Larger pixels can hold more charge before saturating and deliver a cleaner signal, which translates into smoother tonal gradients, wider dynamic range, less noise in low light, and ultimately a more detailed and accurate photograph.
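The link between pixel area and noise can be made concrete with a shot-noise calculation. Photon arrivals are random (Poisson-distributed), so a pixel that collects N photons carries noise of about √N and has a signal-to-noise ratio (SNR) of √N. The Python sketch below assumes an idealized, purely shot-noise-limited pixel and a hypothetical density of 50 photons per square micrometer; the pixel pitches (0.6 µm, 1.22 µm, and 2 µm) are ones that appear in this article. Real sensors also suffer read noise and dark current, which the sketch ignores.

```python
import math


def shot_noise_snr(pixel_pitch_um: float, photons_per_um2: float) -> float:
    """SNR of an idealized, shot-noise-limited square pixel.

    The pixel collects N photons in proportion to its area; photon arrivals are
    Poisson-distributed, so the noise is sqrt(N) and SNR = N / sqrt(N) = sqrt(N).
    """
    photons = photons_per_um2 * pixel_pitch_um ** 2
    return math.sqrt(photons)


PHOTONS_PER_UM2 = 50.0  # hypothetical dim-light exposure; real scenes vary enormously

for pitch_um in (0.6, 1.22, 2.0):
    snr = shot_noise_snr(pitch_um, PHOTONS_PER_UM2)
    print(f"{pitch_um:.2f} um pixel: ~{PHOTONS_PER_UM2 * pitch_um ** 2:.0f} photons, "
          f"SNR ~ {snr:.1f} ({20 * math.log10(snr):.1f} dB)")
```

Because SNR grows with the square root of the photon count, doubling the pixel pitch quadruples the light collected but only doubles the SNR.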

A notable historical example of this philosophy came from HTC and its "UltraPixel" technology. In 2013, with the launch of the HTC One (M7), the company made a bold departure from the prevailing megapixel race. While other manufacturers pushed toward ever-higher resolutions, the HTC One's main camera offered a modest 4 megapixels. Its sensor was a conventional 1/3-inch type, but its individual pixels were unusually large for a phone at 2 µm (micrometers). This deliberate trade of resolution for pixel size aimed to capture more light per pixel, improving low-light performance and dynamic range. The UltraPixel camera did show clear advantages in some scenarios, particularly in challenging light, but its low resolution was a handicap for users who liked to crop or print their images, and the approach never caught on widely. Nevertheless, it was a pioneering attempt to draw attention to pixel size, foreshadowing current industry trends.

The Current State of Mobile Camera Sensor Technology: A High-End Comparison

To grasp the current landscape of sensor sizes in premium mobile photography, a comparative analysis of leading flagship smartphones provides valuable insight. The following table showcases key specifications for the main camera sensors of some of the most expensive and performance-oriented smartphones, which are actively vying for photographic supremacy in the market.

| Feature | iPhone 17 Pro Max | Samsung Galaxy S26 Ultra | Xiaomi 17 Ultra | Vivo X300 Pro | Oppo Find X9 Pro | Huawei Pura 80 Ultra |
|---|---|---|---|---|---|---|
| Sensor size (optical format) | 1/1.28 inch | 1/1.3 inch | 1 inch | 1/1.28 inch | 1/1.28 inch | 1 inch |
| Resolution | 48 MP | 200 MP | 50 MP | 50 MP | 50 MP | 50 MP |
| Pixel size | 1.22 µm | 0.6 µm | 3.2 µm | 1.22 µm | 1.22 µm | Not disclosed |

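Note: Smartphone sensor sizes are quoted as an "optical format" expressed as a fraction of an inch (e.g., 1 inch, 1/1.28 inch). This convention dates back to video camera tubes, and the figure is not the sensor's actual diagonal: a "1-inch type" sensor measures roughly 16 mm diagonally, not 25.4 mm. A larger denominator indicates a smaller sensor (e.g., 1/1.3 inch is smaller than 1/1.28 inch), and the 1-inch type sensors are the largest in this table. Pixel size (in µm) is the pixel pitch, the width of an individual pixel; larger values generally mean more light captured per pixel.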
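The table's pixel sizes can be cross-checked with simple geometry. The Python sketch below estimates native pixel pitch from a sensor's optical format and megapixel count, assuming a 4:3 aspect ratio, square pixels covering the whole active area, and the common rule of thumb that a "1-inch type" sensor has a diagonal of about 16 mm (so a "1/x-inch" sensor is about 16/x mm). It is an approximation: real active areas deviate slightly from this rule.

```python
import math

# Rule of thumb (an approximation): a "1-inch type" sensor has a diagonal of
# about 16 mm, and a "1/x-inch type" sensor about 16/x mm.
MM_DIAGONAL_PER_INCH_TYPE = 16.0


def native_pixel_pitch_um(format_denominator: float, megapixels: float,
                          aspect: tuple[float, float] = (4.0, 3.0)) -> float:
    """Estimate native pixel pitch in micrometers from a '1/x-inch type' format.

    format_denominator is x in '1/x inch'; use 1.0 for a '1-inch type' sensor.
    Assumes square pixels covering the whole active area at the given aspect ratio.
    """
    diagonal_mm = MM_DIAGONAL_PER_INCH_TYPE / format_denominator
    w, h = aspect
    scale = diagonal_mm / math.hypot(w, h)   # mm per aspect-ratio unit
    area_mm2 = (w * scale) * (h * scale)     # active sensor area in mm^2
    pitch_mm = math.sqrt(area_mm2 / (megapixels * 1e6))
    return pitch_mm * 1000.0                 # mm -> micrometers


# Optical-format denominators and megapixel counts from the comparison table.
for name, denom, mp in [("iPhone 17 Pro Max", 1.28, 48),
                        ("Samsung Galaxy S26 Ultra", 1.3, 200),
                        ("Xiaomi 17 Ultra", 1.0, 50),
                        ("Vivo X300 Pro", 1.28, 50)]:
    print(f"{name}: ~{native_pixel_pitch_um(denom, mp):.2f} um")
```

The estimates land close to the listed 1.22 µm and 0.6 µm values. For a 50 MP, 1-inch type sensor, the same arithmetic gives roughly 1.6 µm, half the 3.2 µm listed for the Xiaomi 17 Ultra, so that figure is unlikely to describe a single native photosite.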

From this comparative data, a clear trend emerges: manufacturers like Xiaomi and Huawei are at the forefront of adopting larger sensors, fitting 1-inch type sensors into their flagship devices. The Xiaomi 17 Ultra also lists the largest pixel size among these competitors, 3.2 µm, against 0.6 µm for the Samsung Galaxy S26 Ultra's 200 MP sensor. That comparison needs a caveat, however: a 50 MP sensor of 1-inch type works out to a native pixel pitch of roughly 1.6 µm, so the 3.2 µm figure is almost certainly an effective size after combining pixels in 2×2 groups (pixel binning), while the other columns appear to list native pitch. Even on a like-for-like basis, the Xiaomi's native pixels are more than twice as wide as Samsung's, reflecting a deliberate emphasis on light capture per pixel over raw resolution.
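Published pixel sizes are sometimes effective values after pixel binning, in which a sensor combines the readings of neighboring photosites into one larger virtual pixel, trading resolution for light per output pixel. The Python sketch below sums non-overlapping blocks of a frame and computes the resulting resolution and effective pitch. It ignores the color filter array (real binning combines same-color photosites), the function names are hypothetical, and the 200 MP example is an arithmetic illustration rather than a claim about any specific phone's processing.

```python
def bin_pixels(frame: list[list[float]], factor: int = 2) -> list[list[float]]:
    """Sum each non-overlapping factor x factor block of a 2-D frame into one value.

    Rows or columns left over at the edges (when the size isn't a multiple of
    factor) are discarded. Color filters are ignored in this sketch.
    """
    out_rows = len(frame) // factor
    out_cols = len(frame[0]) // factor if frame else 0
    return [
        [
            sum(frame[r * factor + i][c * factor + j]
                for i in range(factor)
                for j in range(factor))
            for c in range(out_cols)
        ]
        for r in range(out_rows)
    ]


def binned_spec(megapixels: float, pitch_um: float, factor: int) -> tuple[float, float]:
    """Output resolution (MP) and effective pixel pitch (um) after factor x factor binning."""
    return megapixels / factor ** 2, pitch_um * factor


# Each output pixel gathers the signal of factor**2 photosites.
print(bin_pixels([[1, 2, 3, 4],
                  [5, 6, 7, 8],
                  [9, 10, 11, 12],
                  [13, 14, 15, 16]]))  # [[14, 22], [46, 54]]

print(binned_spec(50, 1.6, 2))   # 50 MP at 1.6 um, binned 2x2 -> 12.5 MP at 3.2 um
print(binned_spec(200, 0.6, 4))  # 200 MP at 0.6 um, binned 4x4 -> 12.5 MP at 2.4 um
```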

Beyond the Sensor: The Interplay of Lenses and Computational Photography

It is crucial to understand that while a larger sensor and larger pixels fundamentally improve light gathering and signal quality, they do not exclusively determine a photograph’s ultimate aesthetic and technical excellence. The photographic process is a complex interplay of multiple factors, and other elements wield significant influence over the final output.

The quality of the lenses themselves is paramount. A high-quality lens system focuses light precisely onto the sensor while keeping distortion, chromatic aberration, and flare to a minimum. The lens's aperture (f-number), the number and design of its optical elements, and the quality of its anti-reflective coatings all affect the clarity, sharpness, and light transmission of the image. A superior sensor paired with inferior optics will still yield suboptimal results.
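The aperture's effect on light gathering follows a simple relation: the illuminance reaching the sensor scales with 1/N², where N is the f-number, so every factor of √2 in N (one "stop") halves the light. The Python sketch below compares two apertures; the f/1.4 and f/2.0 values are hypothetical, and the calculation assumes equal exposure times and ideal optics, ignoring light lost to absorption and reflection in the lens elements.

```python
import math


def relative_light(f_number_a: float, f_number_b: float) -> float:
    """How many times more light per unit sensor area aperture A delivers than aperture B.

    Image-plane illuminance is proportional to 1 / N**2 (N = f-number),
    assuming equal exposure time and ideal, lossless optics.
    """
    return (f_number_b / f_number_a) ** 2


def stops_between(f_number_a: float, f_number_b: float) -> float:
    """Exposure difference in stops (factors of two in light) between two f-numbers."""
    return math.log2(relative_light(f_number_a, f_number_b))


# Hypothetical comparison: an f/1.4 main camera versus an f/2.0 one.
print(f"{relative_light(1.4, 2.0):.2f}x the light")     # 2.04x the light
print(f"{stops_between(1.4, 2.0):.2f} stops brighter")  # 1.03 stops brighter
```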

Perhaps even more transformative in modern mobile photography is the ascendancy of computational photography. This paradigm shift involves sophisticated software algorithms and powerful Image Signal Processors (ISPs) that work in tandem with the hardware to enhance, combine, and reconstruct images. Computational photography is the "secret sauce" behind many of the impressive features we now take for granted:

- High Dynamic Range (HDR): Merging multiple exposures to capture detail in both bright highlights and deep shadows.

- Portrait Mode: Artificially creating a shallow depth of field (bokeh effect) to isolate subjects, often by mapping depth using multiple lenses or advanced AI.

- Night Mode: Combining numerous short exposures and applying noise reduction algorithms to produce bright, detailed, and low-noise images in extreme low light.

- Super-Resolution Zoom: Utilizing AI and multiple frames to enhance digital zoom quality.

- Semantic Segmentation: Identifying and processing different elements within a scene (sky, skin, foliage) to apply context-aware enhancements.
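The noise-reduction idea behind multi-frame modes such as Night Mode can be illustrated with a small simulation: averaging N independent frames of a static scene reduces random noise by a factor of about √N. The Python sketch below assumes the frames are already perfectly aligned and that the noise is Gaussian and independent from frame to frame; real pipelines must also align frames, cope with moving subjects, and use more sophisticated merging than a plain average.

```python
import random
import statistics


def capture_frames(true_value: float, noise_sigma: float, n_frames: int,
                   n_pixels: int, rng: random.Random) -> list[list[float]]:
    """Simulate n_frames noisy captures of a flat, static, already-aligned scene."""
    return [[true_value + rng.gauss(0.0, noise_sigma) for _ in range(n_pixels)]
            for _ in range(n_frames)]


def average_frames(frames: list[list[float]]) -> list[float]:
    """Merge aligned frames by averaging each pixel position across all frames."""
    return [sum(values) / len(frames) for values in zip(*frames)]


rng = random.Random(42)  # fixed seed so the run is repeatable
for n in (1, 4, 16):
    merged = average_frames(capture_frames(100.0, 10.0, n, 20_000, rng))
    # The measured noise should come out close to 10 / sqrt(n): about 10, 5, and 2.5.
    print(f"{n:2d} frames: noise = {statistics.pstdev(merged):.2f}")
```

This square-root law is why stacking many short exposures can let a small sensor approach the noise level of a larger one in static scenes, at the cost of processing time and the risk of motion artifacts.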

This reliance on software processing means that a camera with a smaller sensor can, to some extent, compensate for its hardware limitations through intelligent algorithms. Conversely, a large sensor’s rich data can be further optimized and refined by advanced computational techniques, unlocking even greater potential. Brands like Google, with their Pixel series, famously pioneered this approach, demonstrating that exceptional image quality could be achieved even with seemingly modest hardware specifications, primarily through groundbreaking software. The industry as a whole has embraced this direction, leading to significant investments in artificial intelligence and machine learning research dedicated to photographic enhancement.

Implications and the Future of Mobile Imaging

The intricate relationship between sensor size, pixel dimensions, lens quality, and computational photography underscores the complexity of evaluating a smartphone camera. Consumers armed with this deeper understanding can move beyond simplistic metrics and make more informed purchasing decisions. It highlights that the "best" camera is not merely the one with the highest megapixel count, but rather the one that achieves the most harmonious balance of these interconnected components, optimized through thoughtful engineering and sophisticated software.

The ongoing "battle" for camera supremacy in the mobile industry is thus multifaceted. We can anticipate continued innovation across all these fronts:

- Larger Sensors: The trend towards 1-inch type sensors, and potentially even larger formats, will likely continue in flagship models, pushing the boundaries of light capture.

- Advanced Optics: Manufacturers will continue to refine lens designs, introduce specialized elements like periscope lenses for extended optical zoom, and integrate more robust stabilization systems.

- Computational Photography Evolution: AI and machine learning will become even more integral, enabling real-time scene understanding, hyper-realistic image reconstruction, and personalized photographic styles. We may see more adaptive processing that adjusts based on user preferences or environmental conditions.

- Synergy: The future will likely see an even tighter integration between hardware and software, where sensors are designed specifically to feed data to advanced computational engines, and algorithms are optimized for the unique characteristics of each sensor.

In conclusion, photography is far from being a simple matter of megapixels or the number of cameras visible on a device. While lens quality plays a significant role, the image sensor’s size and the individual pixel dimensions within it are arguably even more critical foundational elements. The seemingly complex tapestry of modern mobile photography becomes clearer when one understands the function and interdependencies of its various components. Remember, the size of the sensor truly matters, and critically, not all pixels are created equal. This holistic perspective is essential for appreciating the true art and science behind the stunning images captured by today’s sophisticated smartphones.