The rapid proliferation of large language models (LLMs) and agent-based AI applications across industries has underscored an urgent need for robust and systematic evaluation methodologies, moving beyond subjective assessments to quantifiable metrics. This imperative is particularly acute for Retrieval-Augmented Generation (RAG) systems and sophisticated AI agents, where performance directly impacts user trust, operational efficiency, and the prevention of critical errors such as factual inaccuracies or "hallucinations." This article delves into the practical application of two pivotal frameworks, RAGAs (Retrieval-Augmented Generation Assessment) and G-Eval, demonstrating how their integration, often facilitated by platforms like DeepEval, forms a powerful toolkit for assessing the quality and reliability of modern AI systems in a practical, hands-on workflow.

The Evolution of LLM Evaluation: From Heuristics to LLM-as-a-Judge

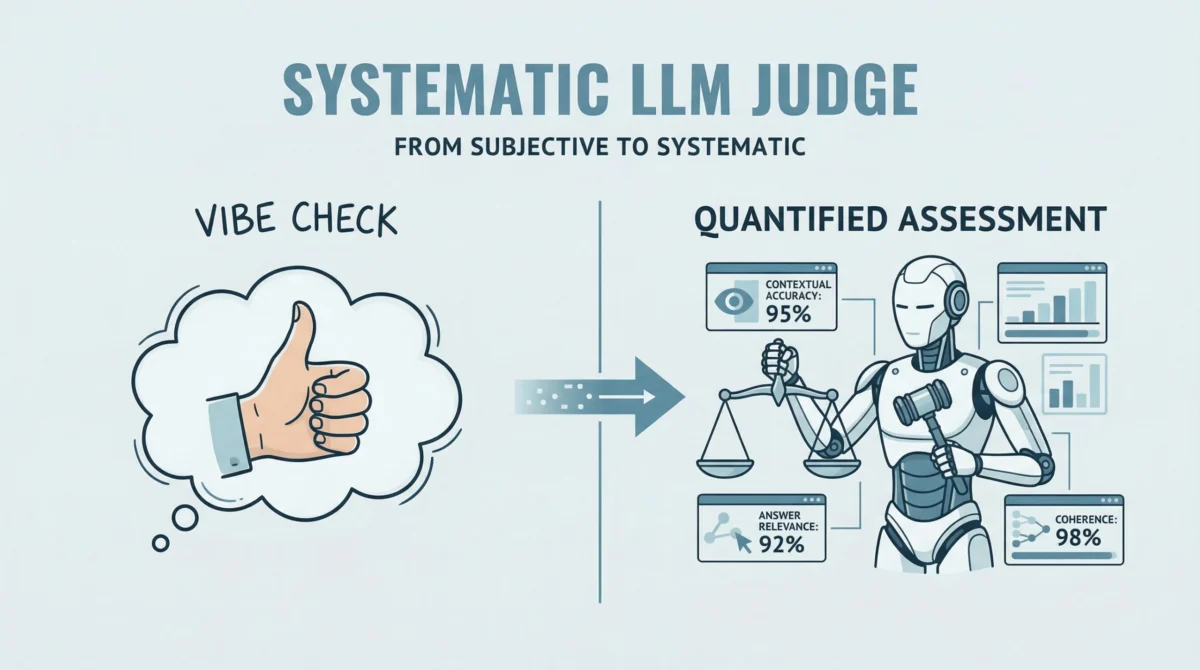

Approaches to evaluating AI models have changed considerably. Early machine learning models relied heavily on traditional metrics like precision, recall, F1-score, and accuracy, which are well-suited to classification tasks with clearly defined ground truths. However, the advent of generative AI, particularly LLMs, introduced a new set of challenges. Evaluating the quality of generated text, which is inherently creative and open-ended, proved difficult with conventional metrics. Subjective human "vibe checks" became a common, albeit unreliable, initial approach. While these provided quick feedback, they lacked consistency, scalability, and objectivity, making them unsuitable for production-grade AI systems.

The breakthrough arrived with the concept of "LLM-as-a-Judge," where an LLM itself is tasked with evaluating the output of another LLM or AI agent. This approach leverages the advanced reasoning and language understanding capabilities of LLMs to provide nuanced, context-aware assessments. RAGAs stands as a prime example of this paradigm, offering an open-source framework designed to systematically quantify the quality of RAG pipelines by replacing human subjectivity with an LLM-driven "judge."

RAGAs: Quantifying RAG Pipeline Quality

RAGAs specifically targets the unique challenges of RAG systems, which combine the generative power of LLMs with external knowledge retrieval. The core idea behind RAG is to improve factual accuracy and reduce hallucinations by grounding LLM responses in retrieved, relevant information. Evaluating such systems requires metrics that assess not only the final answer but also the quality of the retrieval process and its influence on the generation.

RAGAs quantifies a critical triad of desirable RAG properties:

- Faithfulness: This metric assesses the factual consistency of the generated answer with the provided context. A high faithfulness score indicates that all claims made in the answer can be directly supported by the retrieved documents, effectively minimizing hallucinations. For instance, if a RAG system retrieves information about the capital of Japan being Tokyo, and the generated answer states "The capital of Japan is Kyoto," the faithfulness score would be low. This is paramount for applications requiring high factual accuracy, such as legal, medical, or financial information systems.

- Answer Relevancy: This metric measures how relevant the generated answer is to the original user query. An answer might be factually correct based on the context but still fail to address the user’s specific question. High answer relevancy ensures that the AI system is not only accurate but also helpful and focused on the user’s intent. For example, if a user asks "What is the capital of Japan?" and the system provides a lengthy historical overview of Japan without directly stating Tokyo, its relevancy score would suffer.

- Context Precision and Recall: Beyond the two generation-side metrics above, RAGAs also scores the quality of the retrieved context itself. Context precision measures whether the retrieved context is relevant to the question (in RAGAs, whether the relevant chunks are ranked ahead of irrelevant ones), while context recall measures whether all the information needed to answer the question was retrieved. These are crucial for ensuring the RAG pipeline effectively identifies and utilizes the correct information.
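The arithmetic behind a faithfulness-style score can be sketched in a few lines. This is a simplified illustration, not RAGAs' implementation: in the real framework an LLM extracts the claims from the answer and judges each one against the context, whereas here the verdicts are supplied by hand. The `ClaimVerdict` class and `faithfulness_score` function are hypothetical names introduced for this example.

```python
from dataclasses import dataclass


@dataclass
class ClaimVerdict:
    claim: str
    supported: bool  # in practice this verdict would come from the LLM judge


def faithfulness_score(verdicts):
    """Return the fraction of claims that the retrieved context supports."""
    if not verdicts:
        raise ValueError("at least one claim is required")
    return sum(v.supported for v in verdicts) / len(verdicts)


# Hand-labelled verdicts for an answer checked against a context
# that says Tokyo is the capital of Japan.
verdicts = [
    ClaimVerdict("The capital of Japan is Kyoto.", supported=False),
    ClaimVerdict("Japan has a capital city.", supported=True),
]

print(faithfulness_score(verdicts))  # 0.5
```

One unsupported claim out of two halves the score, which is why a single hallucinated statement in a short answer is so visible in this metric.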

The RAGAs framework is particularly valuable because it moves beyond evaluating just the final output to scrutinizing the intermediate steps of the RAG process. By providing objective scores for these dimensions, RAGAs enables developers to pinpoint weaknesses in their RAG pipelines, whether it’s an issue with the retrieval mechanism failing to fetch relevant documents, or the generation model failing to synthesize information faithfully. The framework’s evolution to support agent-based applications further highlights its versatility, recognizing that agents often incorporate RAG-like components or require similar contextual grounding.

G-Eval and DeepEval: Adding Qualitative Depth with LLM-Driven Reasoning

While RAGAs excels at quantifying objective aspects like faithfulness and relevancy, the overall quality of an LLM’s output often extends to more qualitative attributes such as coherence, clarity, style, and professionalism. This is where G-Eval, often integrated through platforms like DeepEval, provides a powerful complement. G-Eval is a flexible framework that allows developers to define custom, interpretable evaluation criteria based on natural language instructions, leveraging an LLM to perform the assessment.

DeepEval acts as a unified testing sandbox, integrating various evaluation metrics, including those based on G-Eval. Its "reasoning-and-scoring" approach is particularly insightful: instead of merely providing a numerical score, DeepEval can also generate a natural language explanation (reasoning) for that score. This transparency is invaluable for debugging and refining LLM applications, as it provides specific feedback on why an output was deemed good or bad against a given criterion.

Consider the example of evaluating "coherence." With G-Eval, a custom metric can be defined with a criterion like "Determine if the answer is easy to follow and logically structured." The LLM-as-a-judge then processes the input and actual output, evaluates it against this criterion, assigns a score (e.g., on a scale of 0 to 1), and provides a textual reasoning for its decision. This capability is critical for:

- Understanding nuances: Human evaluators might intuitively grasp "coherence," but formalizing it for automated evaluation requires careful definition. G-Eval’s natural language criteria make this possible.

- Debugging: If an agent’s response consistently scores low on coherence, the provided reasoning can highlight specific structural issues or logical gaps, guiding developers towards improvements.

- Customization: Different applications may prioritize different qualitative aspects. A customer service bot might value "empathy," while a technical documentation generator might value "conciseness." G-Eval allows for the creation of metrics tailored to these specific needs.
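The reasoning-and-scoring idea described above can be illustrated with a minimal, framework-free sketch. It is an assumption-laden simplification rather than how G-Eval or DeepEval work internally: the prompt wording, the 0 to 10 integer scale, and the function names `build_judge_prompt` and `parse_judgement` are all invented for this example, and the judge's reply is canned so the code runs without an API call.

```python
import json


def build_judge_prompt(criteria, user_input, actual_output):
    """Assemble a natural-language instruction for an LLM judge (hypothetical wording)."""
    return (
        "You are an impartial evaluator.\n"
        f"Criterion: {criteria}\n"
        f"User input: {user_input}\n"
        f"Response to evaluate: {actual_output}\n"
        'Reply with JSON only: {"score": <integer 0-10>, "reason": "<one sentence>"}'
    )


def parse_judgement(raw_reply):
    """Turn the judge's JSON reply into a score in [0, 1] plus its reasoning."""
    data = json.loads(raw_reply)
    clamped = max(0, min(10, int(data["score"])))
    return clamped / 10, str(data["reason"])


prompt = build_judge_prompt(
    "Determine if the answer is easy to follow and logically structured.",
    "What is the capital of Japan?",
    "Japan has many cities. Tokyo. Also, Kyoto was once the capital.",
)

# A canned reply standing in for the judge model's output.
canned_reply = '{"score": 4, "reason": "The answer jumps between topics without clear transitions."}'
score, reason = parse_judgement(canned_reply)
print(score, reason)
```

Keeping the reason next to the score is the design choice that matters here: the number supports thresholds and dashboards, while the sentence tells a developer what to fix.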

A Hands-On Workflow: Integrating RAGAs and G-Eval with DeepEval

Implementing these evaluation frameworks typically follows a structured, iterative process. The workflow begins with defining a test dataset, moves to simulating the AI agent, and then proceeds with running both quantitative (RAGAs) and qualitative (G-Eval) evaluations.

1. Setting Up the Environment and a Simplified Agent:

The first step involves preparing the development environment, ensuring necessary libraries (ragas, deepeval, datasets, openai) are installed. For practical demonstration, a simplified LLM agent is often used. This agent, though basic (e.g., a direct query-response interface with an LLM API), represents the core interaction mechanism that needs evaluation. In a production scenario, such an agent would encompass complex functionalities like reasoning, planning, tool execution, and integration with external APIs, but for evaluation purposes, isolating the LLM’s generative and contextual understanding capabilities is key. This simplification allows developers to focus on the evaluation logic rather than the intricate agent architecture. It’s crucial to configure API keys (e.g., OpenAI or Gemini) as these frameworks rely on LLM-as-a-judge capabilities, which incur usage costs.
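A simplified agent of the kind described above might look like the following sketch. It assumes the `openai` Python package (installed alongside the others with something like `pip install ragas deepeval datasets openai`) and an `OPENAI_API_KEY` environment variable. The model name `gpt-4o-mini`, the system prompt, and the helper names are assumptions made for illustration. A small stub client is included so the example runs offline without incurring API costs.

```python
import os
from types import SimpleNamespace


def make_openai_client():
    """Create a real client; imported lazily so the stub demo needs no install."""
    from openai import OpenAI

    if not os.environ.get("OPENAI_API_KEY"):
        raise RuntimeError("Set OPENAI_API_KEY before running the evaluation")
    return OpenAI()


def simple_agent(client, question, contexts, model="gpt-4o-mini"):
    """Answer a question from the supplied contexts via a chat completion."""
    context_block = "\n".join(f"- {c}" for c in contexts)
    response = client.chat.completions.create(
        model=model,  # assumed model name; substitute whatever is available
        messages=[
            {"role": "system", "content": "Answer using only the provided context."},
            {"role": "user", "content": f"Context:\n{context_block}\n\nQuestion: {question}"},
        ],
    )
    return response.choices[0].message.content


class StubCompletions:
    """Offline stand-in that mimics the shape of a chat completion response."""

    def __init__(self, canned_answer):
        self.canned_answer = canned_answer
        self.last_messages = None

    def create(self, model, messages):
        self.last_messages = messages
        message = SimpleNamespace(content=self.canned_answer)
        return SimpleNamespace(choices=[SimpleNamespace(message=message)])


stub_completions = StubCompletions("Tokyo")
stub_client = SimpleNamespace(chat=SimpleNamespace(completions=stub_completions))

answer = simple_agent(
    stub_client,
    "What is the capital of Japan?",
    ["Tokyo is the capital of Japan."],
)
print(answer)  # Tokyo
```

Swapping `stub_client` for `make_openai_client()` sends the same request to the real API. Passing the client in as an argument keeps the agent easy to test in isolation.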

2. Defining Test Cases and Data Structure:

Effective evaluation hinges on a well-designed test dataset. For RAGAs, test cases typically include:

- question: The user’s query.

- answer: The response generated by the LLM/agent.

- contexts: A list of retrieved documents or information snippets that the LLM used to formulate its answer. This is vital for faithfulness and contextual metrics.

- ground_truth: The ideal, human-verified answer to the question. It is not required by every RAGAs metric (faithfulness is assessed against contexts, and answer relevancy against the question), but it is needed for reference-based metrics such as context recall and is highly beneficial for judging overall accuracy.

These test cases are often structured as a list of dictionaries and then converted into a Hugging Face Dataset object. This standardized format is favored by ragas for efficient processing and compatibility within the broader AI ecosystem.
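The list-of-dictionaries layout and its conversion can be sketched as follows. The column names match the fields listed above, which correspond to the classic ragas schema; newer ragas releases use different column names, so treat them as an assumption to check against the installed version. The sample rows and the helper names `to_columns` and `to_hf_dataset` are illustrative.

```python
REQUIRED_FIELDS = ("question", "answer", "contexts", "ground_truth")

# Illustrative rows; in practice "answer" and "contexts" come from the agent run.
test_cases = [
    {
        "question": "What is the capital of Japan?",
        "answer": "The capital of Japan is Tokyo.",
        "contexts": ["Tokyo is the capital of Japan."],
        "ground_truth": "Tokyo",
    },
    {
        "question": "What is the capital of France?",
        "answer": "The capital of France is Paris.",
        "contexts": ["Paris is the capital and largest city of France."],
        "ground_truth": "Paris",
    },
]


def to_columns(rows):
    """Validate each row and pivot the list of dicts into a dict of lists."""
    for index, row in enumerate(rows):
        missing = [field for field in REQUIRED_FIELDS if field not in row]
        if missing:
            raise ValueError(f"test case {index} is missing fields: {missing}")
        if not isinstance(row["contexts"], list):
            raise TypeError(f"test case {index}: 'contexts' must be a list of strings")
    return {field: [row[field] for row in rows] for field in REQUIRED_FIELDS}


def to_hf_dataset(rows):
    """Build a Hugging Face Dataset; imported lazily so validation works without it."""
    from datasets import Dataset

    return Dataset.from_dict(to_columns(rows))


columns = to_columns(test_cases)
print(columns["question"])
```

Validating before conversion surfaces a missing field with a readable message instead of a schema error from deep inside the evaluation run.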

3. Running RAGAs Evaluation:

With the test data prepared, RAGAs evaluation is executed by specifying the dataset and the desired metrics (e.g., faithfulness, answer_relevancy). The framework then uses an LLM (typically via the configured API key) to act as a judge, comparing the generated answers against the provided contexts and questions to compute scores for each metric. The output provides aggregate scores, offering a quantitative snapshot of the RAG pipeline’s performance across key dimensions.

For instance, a faithfulness score of 0.9 suggests that 90% of the claims made in the answers are supported by the provided contexts, while an answer_relevancy score of 0.8 indicates a high degree of alignment between the answers and the original questions. These scores are crucial for benchmarking performance, tracking improvements over time, and setting quality thresholds for deployment.
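A run of this kind, followed by a simple quality gate, might be sketched as below. The `evaluate` call and the `faithfulness` and `answer_relevancy` metric objects follow the classic ragas interface and may differ in newer releases, so treat the exact imports as an assumption. The `failing_metrics` helper and the threshold values are hypothetical and not part of ragas.

```python
def run_ragas(dataset):
    """Score a dataset with ragas; needs ragas installed and a judge API key set."""
    from ragas import evaluate
    from ragas.metrics import answer_relevancy, faithfulness

    # The result exposes aggregate scores; result.to_pandas() gives per-row detail.
    return evaluate(dataset, metrics=[faithfulness, answer_relevancy])


def failing_metrics(scores, thresholds):
    """Return the metrics whose aggregate score is missing or below its minimum."""
    failures = {}
    for name, minimum in thresholds.items():
        value = scores.get(name)
        if value is None or value < minimum:
            failures[name] = value
    return failures


# Aggregate scores as discussed above, checked against illustrative minimums.
scores = {"faithfulness": 0.9, "answer_relevancy": 0.8}
thresholds = {"faithfulness": 0.85, "answer_relevancy": 0.85}

print(failing_metrics(scores, thresholds))  # {'answer_relevancy': 0.8}
```

With these numbers the pipeline would pass on faithfulness but be held back on answer relevancy, which points at the generation prompt rather than the retriever.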

4. Integrating DeepEval for G-Eval-Based Qualitative Assessment:

Complementing RAGAs’ quantitative analysis, DeepEval facilitates qualitative evaluation using G-Eval. This involves:

- Defining Custom Metrics: For each qualitative aspect (e.g., coherence, clarity, conciseness, safety, tone), a GEval metric is instantiated. This requires a name, a detailed criteria (a natural language instruction for the LLM-as-a-judge), and a specification of which LLMTestCaseParams (e.g., INPUT, ACTUAL_OUTPUT, EXPECTED_OUTPUT, CONTEXT) are relevant for the evaluation. A threshold can also be set for pass/fail determination.

- Creating Test Cases: LLMTestCase objects are created, encapsulating the input (user query) and actual_output (agent’s response) for evaluation. Other parameters like retrieval_context or expected_output can also be included if relevant to the custom metric.

- Running Evaluation: Each custom metric’s measure method is invoked with the LLMTestCase. DeepEval then uses an LLM to evaluate the test case against the defined criteria, producing a numerical score and, crucially, a textual reason for that score. This reasoning provides actionable insights, explaining why a particular response might lack coherence or clarity.

For example, if a response is evaluated for coherence, and the G-Eval score is low, the accompanying reasoning might state: "The answer jumps between topics without clear transitions, making it difficult to follow the logical flow." This feedback is far more useful than a mere numerical score, directly informing developers about specific areas for improvement in the LLM’s generation or the agent’s response structuring.
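The three steps above can be sketched with DeepEval roughly as follows. The `GEval`, `LLMTestCase`, and `LLMTestCaseParams` names come from DeepEval's public interface, but signatures can shift between releases, so verify them against the installed version. The conciseness criterion, the 0.7 threshold, and the helper functions are assumptions made for illustration. Running the real metrics requires a configured judge API key.

```python
# Natural-language criteria for each qualitative aspect (illustrative wording).
METRIC_CRITERIA = {
    "Coherence": "Determine if the answer is easy to follow and logically structured.",
    "Conciseness": "Determine if the answer addresses the question without unnecessary detail.",
}


def build_geval_metrics(threshold=0.7):
    """Instantiate one GEval metric per criterion (deepeval imported lazily)."""
    from deepeval.metrics import GEval
    from deepeval.test_case import LLMTestCaseParams

    return [
        GEval(
            name=name,
            criteria=criteria,
            evaluation_params=[LLMTestCaseParams.INPUT, LLMTestCaseParams.ACTUAL_OUTPUT],
            threshold=threshold,
        )
        for name, criteria in METRIC_CRITERIA.items()
    ]


def make_test_case(user_input, actual_output):
    """Wrap a query and the agent's response in an LLMTestCase."""
    from deepeval.test_case import LLMTestCase

    return LLMTestCase(input=user_input, actual_output=actual_output)


def run_metrics(test_case, metrics):
    """Call measure() on each metric and collect its score, reason, and pass/fail."""
    report = {}
    for metric in metrics:
        metric.measure(test_case)  # triggers the LLM judge for real GEval metrics
        report[metric.name] = {
            "score": metric.score,
            "reason": metric.reason,
            "passed": metric.score >= metric.threshold,
        }
    return report


# Typical usage (requires deepeval and a judge API key):
#   case = make_test_case("What is the capital of Japan?", "The capital of Japan is Tokyo.")
#   print(run_metrics(case, build_geval_metrics()))
```

Because `run_metrics` relies only on `measure`, `score`, `reason`, `name`, and `threshold`, the same loop can be exercised with stand-in metric objects during testing.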

Implications and Broader Impact

The adoption of robust evaluation frameworks like RAGAs and G-Eval carries significant implications for the development and deployment of AI systems:

- Enhanced Reliability and Trustworthiness: By systematically identifying and mitigating issues like hallucinations and irrelevant responses, these frameworks contribute directly to building more reliable LLM applications. This, in turn, fosters greater user trust and accelerates the adoption of AI solutions in sensitive domains.

- Accelerated Development Cycles: Automated, objective evaluation reduces the reliance on manual human review, which is slow and expensive. Developers can iterate faster, test changes more thoroughly, and deploy updates with higher confidence. This agility is critical in the rapidly evolving landscape of generative AI.

- Responsible AI Deployment: These tools are fundamental to responsible AI practices. They provide mechanisms to monitor model performance against ethical guidelines, identify biases (if custom metrics are defined), and ensure fairness and safety. As regulatory bodies increasingly focus on AI governance, robust evaluation becomes a non-negotiable requirement.

- Improved MLOps and AI Engineering Practices: Integrating RAGAs and G-Eval into MLOps pipelines enables continuous evaluation throughout the development lifecycle. This means models can be tested not just during initial development but also during deployment for drift detection and ongoing performance monitoring, ensuring sustained quality in production.

- Democratization of Advanced Evaluation: The open-source nature of RAGAs and the accessibility of DeepEval make advanced evaluation techniques available to a wider range of developers, from individual practitioners to large enterprises, without requiring extensive in-house expertise in designing complex evaluation systems from scratch.

Industry reports consistently highlight the increasing investment in AI safety and reliability tools. With the global AI market projected to reach trillions of dollars in the coming years, the importance of robust evaluation frameworks cannot be overstated. Companies are rapidly shifting towards automated, objective evaluation to ensure their AI products meet stringent performance and safety standards, driving the demand for solutions that offer both quantitative and qualitative assessment capabilities. The ability to define custom metrics, as offered by G-Eval, allows organizations to align their AI performance directly with specific business objectives and brand values, moving beyond generic benchmarks to truly relevant quality indicators.

Conclusion

The landscape of large language model and agent-based application development is complex, demanding sophisticated tools to ensure quality and reliability. RAGAs and G-Eval, especially when integrated through platforms like DeepEval, offer a powerful and practical solution. By combining structured, quantitative metrics such as faithfulness and answer relevancy (from RAGAs) with flexible, qualitative evaluation capabilities like coherence and clarity (via G-Eval’s LLM-as-a-judge approach), developers can construct a comprehensive and reliable evaluation pipeline. This approach moves beyond subjective "vibe checks," providing actionable insights that drive continuous improvement, foster trust, and accelerate the responsible deployment of modern AI systems across all sectors. As AI continues its transformative journey, the mastery of these evaluation frameworks will be paramount for any organization committed to building high-performing, safe, and trustworthy intelligent applications.